Implementing username/password authentication for Prometheus Server and exporters

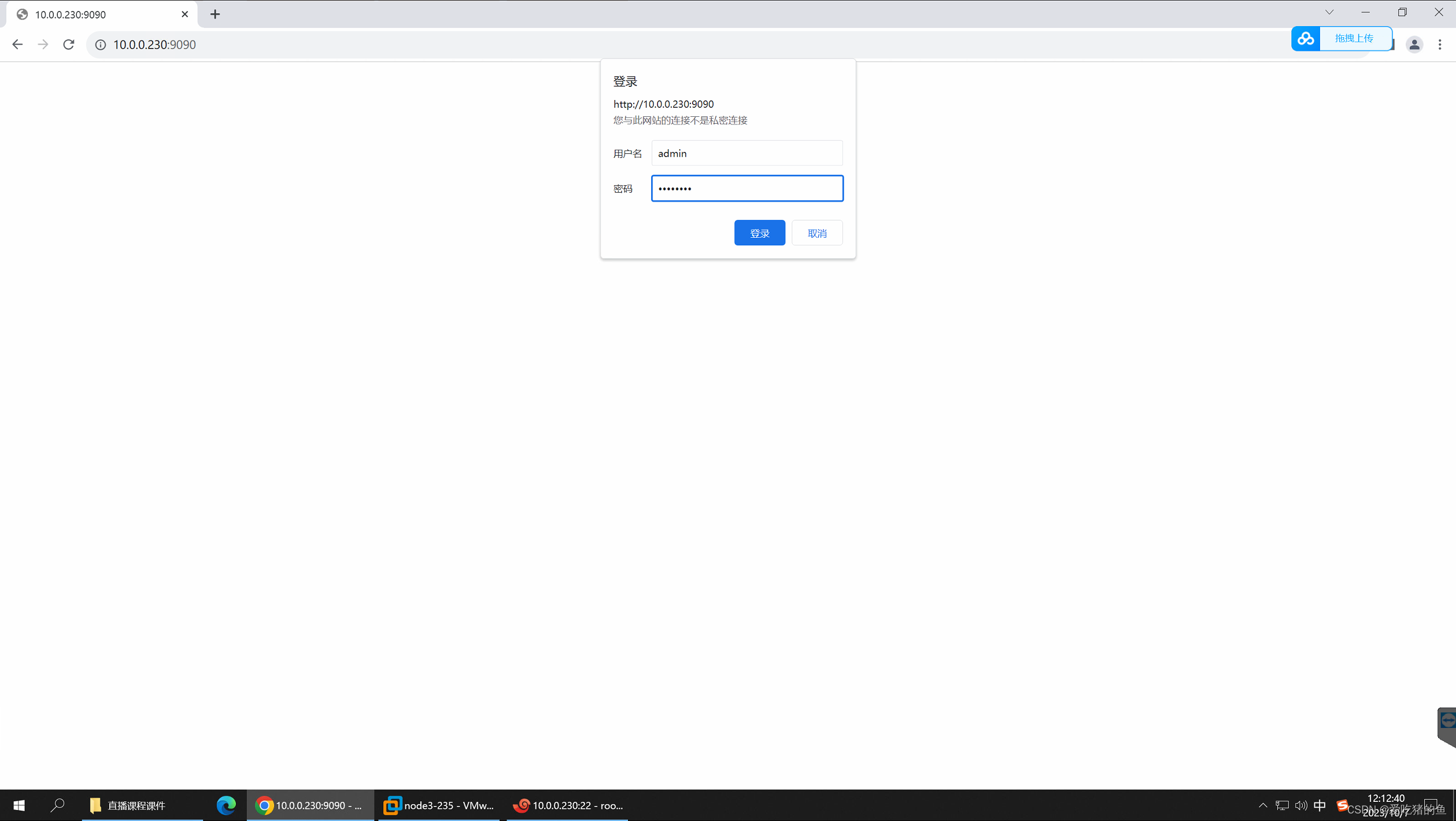

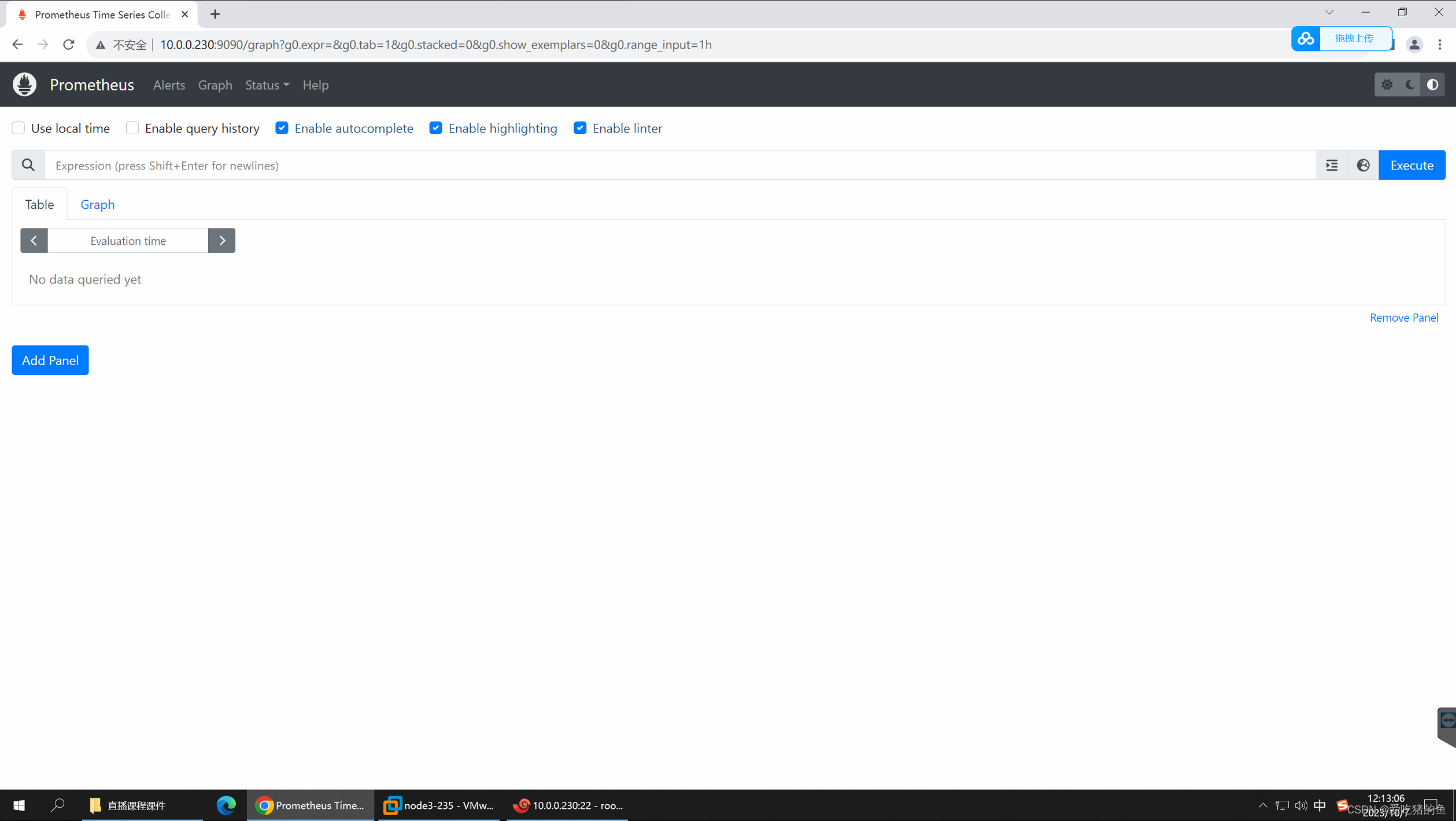

Prometheus Server web login authentication

# On CentOS, install the httpd-tools package instead; on Ubuntu/Debian:

apt update && apt install apache2-utils

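# Generate a bcrypt password hash for user admin with password admin123. -n prints the result to stdout instead of updating a password file, -b takes the password from the command line, -B uses bcrypt;

# -C sets the bcrypt computing time (cost): default 5, valid 4 to 17; higher values are more secure but slower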

htpasswd -nbB -C 10 admin admin123

admin:$2y$10$zwwpKzZOFKPzt5Z.MOhLsujhlwsRwUbVmqb1q6iltddqoYNC64fya

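# Create the web authentication file; several users can be listed. Paste the hash printed by htpasswd: bcrypt uses a random salt, so every run produces a different hash for the same password.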

vim /apps/prometheus/web-auth.yaml

basic_auth_users:
  admin: $2y$10$ccEsAGpJSl9jhgsL/UJbzOGEXi6agE5ceBHcZFhC.0ix05hAOCPFC
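Because the file accepts several users, a multi-user version might look like the sketch below. The user names `admin` and `viewer` are illustrative, and each `<bcrypt-hash-for-...>` is a placeholder for the hash that `htpasswd -nbB` prints for that user, not a real hash.

```yaml
# /apps/prometheus/web-auth.yaml (sketch): one "user: bcrypt-hash" entry per account
basic_auth_users:
  admin: <bcrypt-hash-for-admin>    # placeholder: paste the part after "admin:" from htpasswd
  viewer: <bcrypt-hash-for-viewer>  # hypothetical second user
```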

# Edit prometheus.service; --web.config.file=/apps/prometheus/web-auth.yaml enables web authentication

vim /etc/systemd/system/prometheus.service

[Unit]

Description=Prometheus Server

Documentation=https://prometheus.io/docs/introduction/overview/

After=network.target

[Service]

Restart=on-failure

WorkingDirectory=/apps/prometheus/

ExecStart=/apps/prometheus/prometheus --config.file=/apps/prometheus/prometheus.yml --web.enable-lifecycle --web.config.file=/apps/prometheus/web-auth.yaml

[Install]

WantedBy=multi-user.target

# Edit the Prometheus configuration and add credentials so that Prometheus can still scrape its own, now protected, metrics endpoint.

vim /apps/prometheus/prometheus.yml

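- job_name: "prometheus"
  basic_auth:
    username: admin
    password: admin123
    # password_file: <file containing only the plaintext password>  # use either password or password_file; do not point it at web-auth.yaml, which holds bcrypt hashes
  static_configs:
    - targets: ["localhost:9090"]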
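To keep the plaintext password out of prometheus.yml, `password_file` can point to a separate file that contains only the plaintext password (not the bcrypt hash from web-auth.yaml). A minimal sketch, assuming a hypothetical file `/apps/prometheus/scrape-password` that holds the single line `admin123` and is readable by the Prometheus user:

```yaml
- job_name: "prometheus"
  basic_auth:
    username: admin
    # hypothetical file containing only the plaintext password, e.g. admin123
    password_file: /apps/prometheus/scrape-password
  static_configs:
    - targets: ["localhost:9090"]
```

`password` and `password_file` are mutually exclusive; Prometheus rejects a `basic_auth` block that sets both.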

# Restart Prometheus

systemctl daemon-reload && systemctl restart prometheus.service

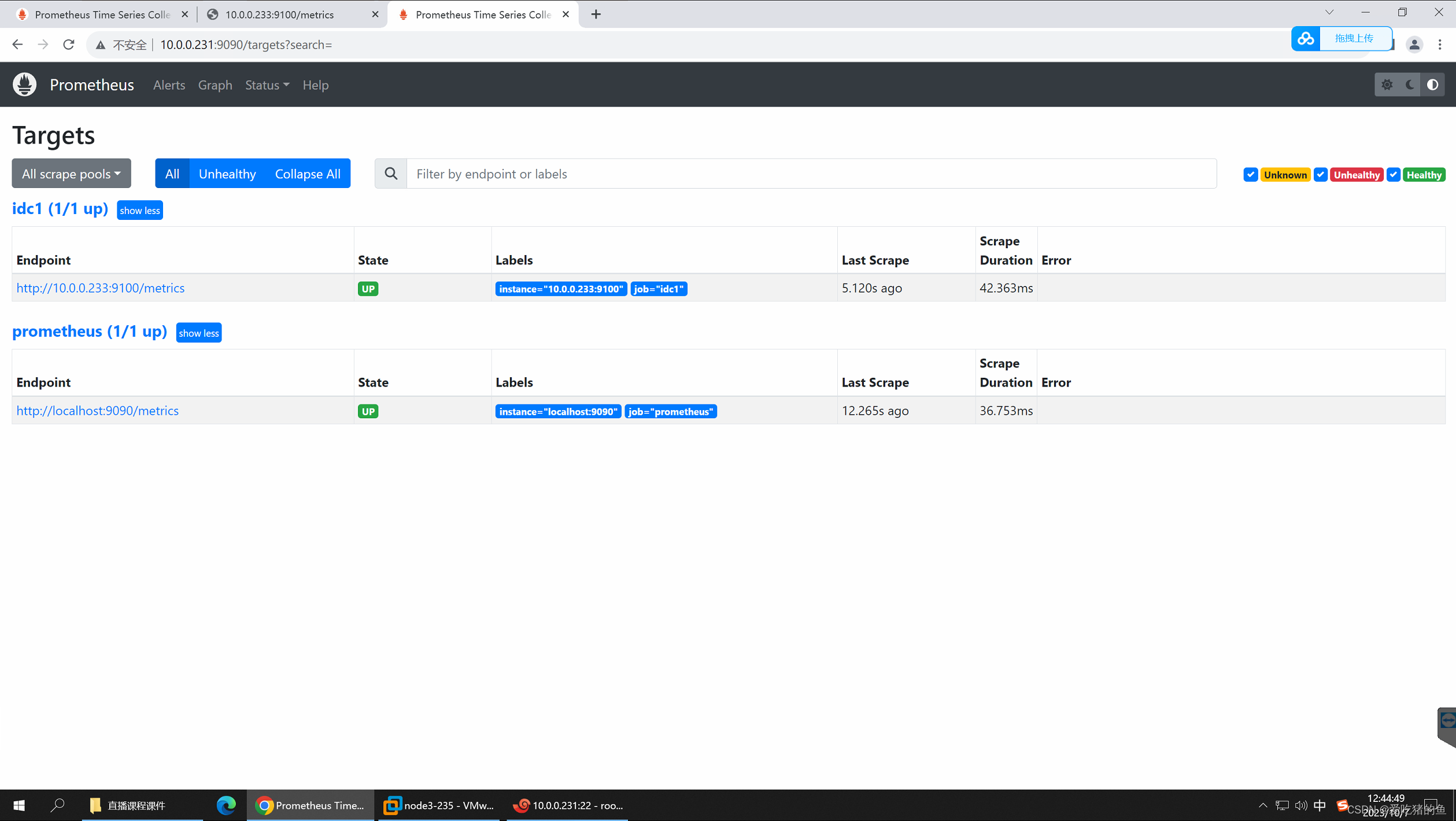

Verify the web login

Authentication between Prometheus Server and Node Exporter

Configure authentication on Node Exporter

apt update && apt install apache2-utils -y

# Generate the password hash

htpasswd -nbB -C 10 user1 user123

user1:$2y$10$PgpypT7/aA8yjTIxvmZ3RuGqXD5TFXr62P7DmWRMWEig7KFEjGLCm

# Edit the Node Exporter authentication file

vim /apps/node_exporter/api-auth.yaml

basic_auth_users:
  user1: $2y$10$PgpypT7/aA8yjTIxvmZ3RuGqXD5TFXr62P7DmWRMWEig7KFEjGLCm

# Configure the Node Exporter service file; the authentication option is --web.config.file=/apps/node_exporter/api-auth.yaml

vim /etc/systemd/system/node-exporter.service

[Unit]

Description=Prometheus Node Exporter

After=network.target

[Service]

ExecStart=/apps/node_exporter/node_exporter --web.config.file=/apps/node_exporter/api-auth.yaml

[Install]

WantedBy=multi-user.target

# Restart Node Exporter

systemctl daemon-reload && systemctl restart node-exporter.service

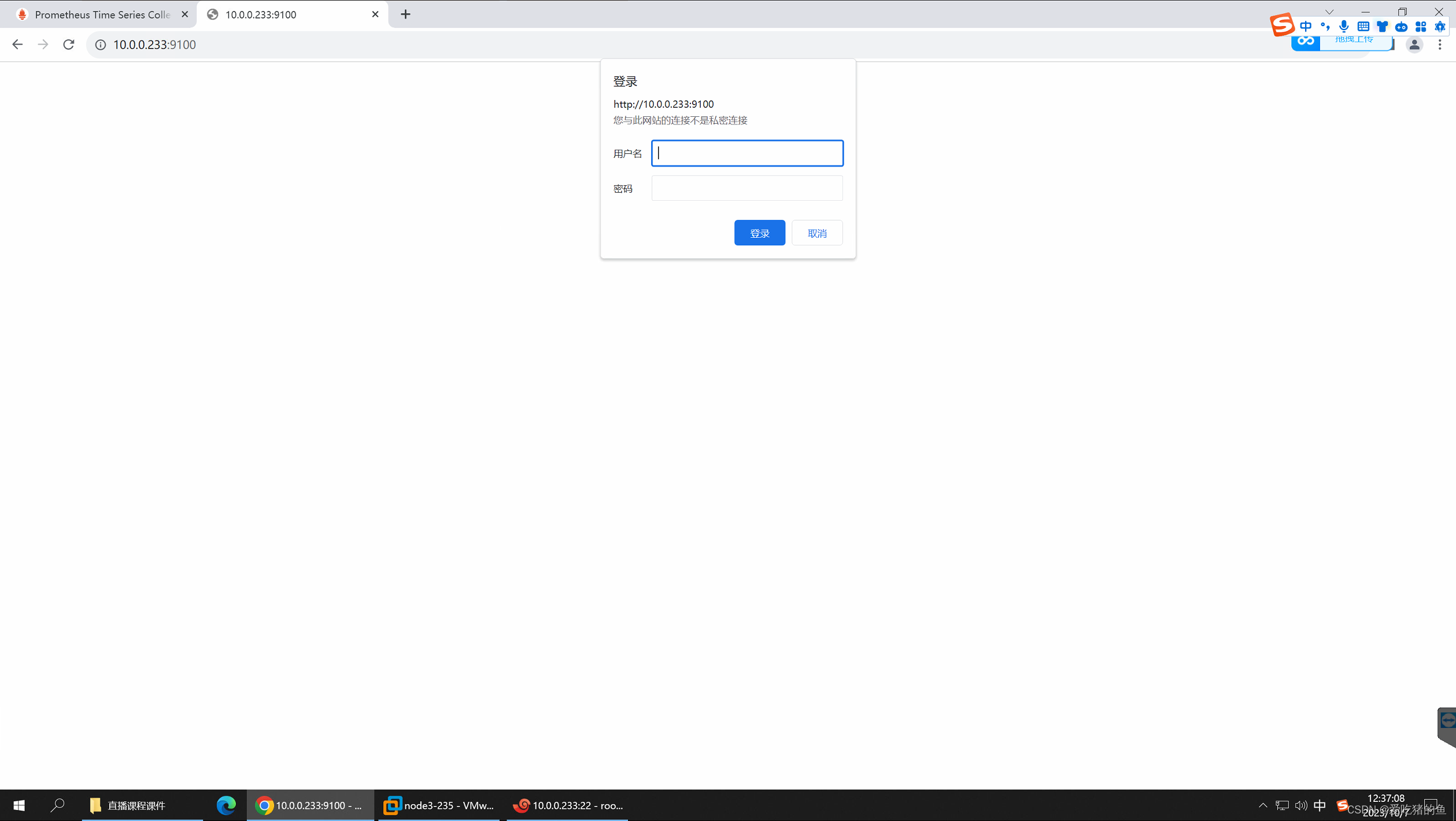

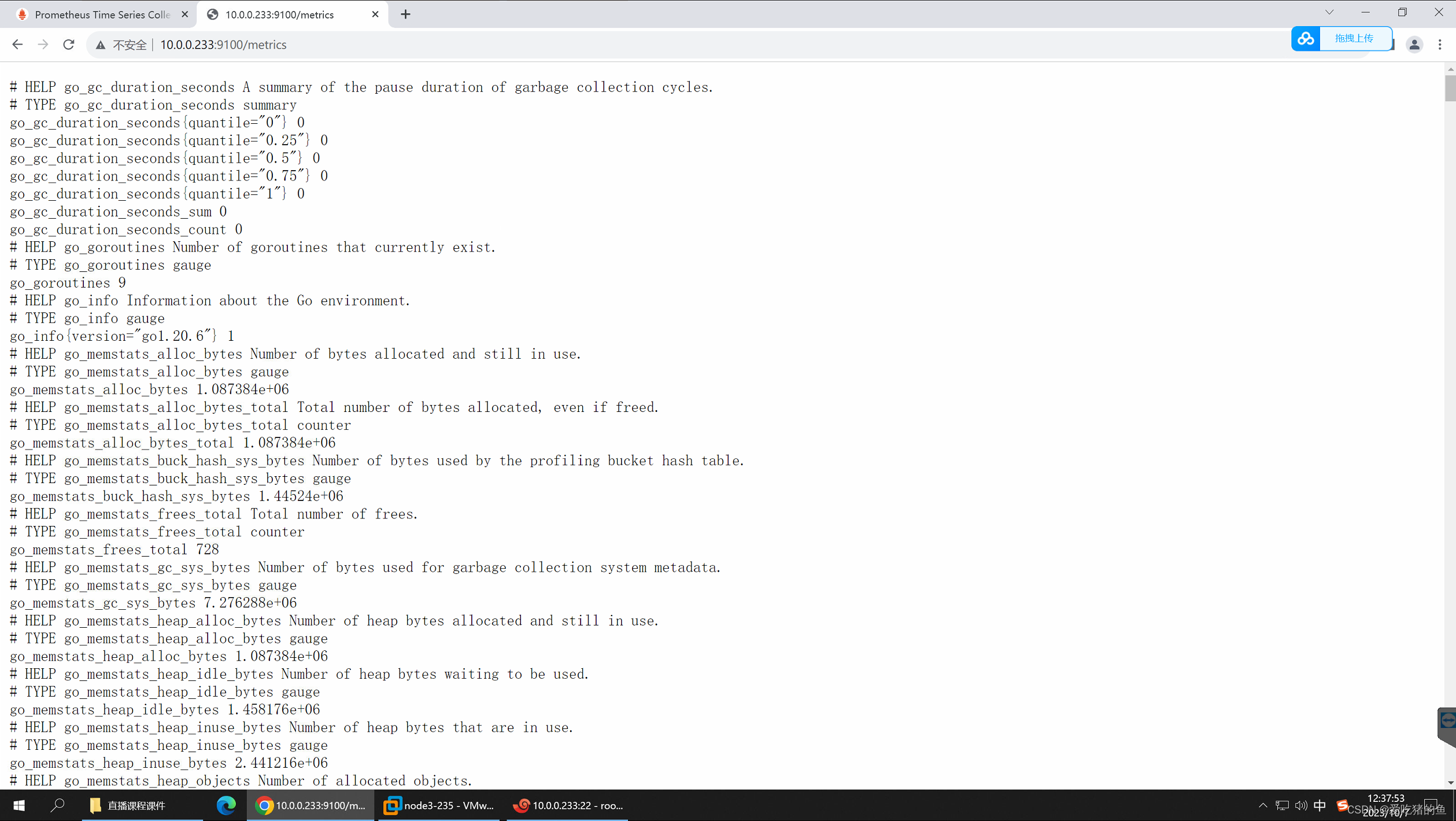

Verify in the web UI

Configure Prometheus Server to authenticate against Node Exporter

vim /apps/prometheus/prometheus.yml

- job_name: 'idc1'
  basic_auth:
    username: user1
    password: user123
    #password_file: <file containing only the plaintext password>  # not api-auth.yaml, which holds bcrypt hashes
  static_configs:
    - targets: ["10.0.0.233:9100"]

systemctl restart prometheus

Test deployment with kube-prometheus

When deploying Prometheus with kube-prometheus, the main thing to watch out for is image pulls

Project page: https://github.com/prometheus-operator/kube-prometheus

Note: check that the kube-prometheus release is compatible with your Kubernetes version

# Clone the release-0.13 branch

apt install -y git

# -b selects the branch; without it, the default main branch is cloned

git clone -b release-0.13 https://github.com/prometheus-operator/kube-prometheus.git

# Enter the manifests directory

cd kube-prometheus/manifests/

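# List the images in use. Some of them cannot be pulled directly, so pull them in advance, push them to your own registry, and update the image addresses in the YAML files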

root@k8s-master1:/opt/kube-prometheus/manifests# grep 'image:' ./* -R

./alertmanager-alertmanager.yaml: image: quay.io/prometheus/alertmanager:v0.26.0

./blackboxExporter-deployment.yaml: image: quay.io/prometheus/blackbox-exporter:v0.24.0

./blackboxExporter-deployment.yaml: image: jimmidyson/configmap-reload:v0.5.0

./blackboxExporter-deployment.yaml: image: quay.io/brancz/kube-rbac-proxy:v0.14.2

./grafana-deployment.yaml: image: grafana/grafana:9.5.3

./kubeStateMetrics-deployment.yaml: image: registry.k8s.io/kube-state-metrics/kube-state-metrics:v2.9.2

./kubeStateMetrics-deployment.yaml: image: quay.io/brancz/kube-rbac-proxy:v0.14.2

./kubeStateMetrics-deployment.yaml: image: quay.io/brancz/kube-rbac-proxy:v0.14.2

./nodeExporter-daemonset.yaml: image: quay.io/prometheus/node-exporter:v1.6.1

./nodeExporter-daemonset.yaml: image: quay.io/brancz/kube-rbac-proxy:v0.14.2

./prometheus-prometheus.yaml: image: quay.io/prometheus/prometheus:v2.46.0

./prometheusAdapter-deployment.yaml: image: registry.k8s.io/prometheus-adapter/prometheus-adapter:v0.11.1

./prometheusOperator-deployment.yaml: image: quay.io/prometheus-operator/prometheus-operator:v0.67.1

./prometheusOperator-deployment.yaml: image: quay.io/brancz/kube-rbac-proxy:v0.14.2

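# List the NetworkPolicy files and move them to another directory. NetworkPolicy has not been covered yet; its rules are strict and would block access to the components after deployment.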

root@k8s-master1:/opt/kube-prometheus/manifests# ll *networkPolicy*.yaml

-rw-r--r-- 1 root root 977 Oct 7 13:38 alertmanager-networkPolicy.yaml

-rw-r--r-- 1 root root 722 Oct 7 13:38 blackboxExporter-networkPolicy.yaml

-rw-r--r-- 1 root root 651 Oct 7 13:38 grafana-networkPolicy.yaml

-rw-r--r-- 1 root root 723 Oct 7 13:38 kubeStateMetrics-networkPolicy.yaml

-rw-r--r-- 1 root root 671 Oct 7 13:38 nodeExporter-networkPolicy.yaml

-rw-r--r-- 1 root root 1073 Oct 7 13:38 prometheus-networkPolicy.yaml

-rw-r--r-- 1 root root 565 Oct 7 13:38 prometheusAdapter-networkPolicy.yaml

-rw-r--r-- 1 root root 694 Oct 7 13:38 prometheusOperator-networkPolicy.yaml

# Create a networkPolicy directory under the current directory

mkdir networkPolicy

# Move the NetworkPolicy files into the networkPolicy directory

mv *networkPolicy*.yaml networkPolicy/

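# Some resources in setup/ (the CRDs) are very large. They used to be created with kubectl create, because client-side kubectl apply fails on them (the last-applied-configuration annotation would exceed the 262144-byte annotation size limit). They can now be applied with --server-side, which uses server-side apply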

#kubectl create -f setup/

kubectl apply --server-side -f setup/

customresourcedefinition.apiextensions.k8s.io/alertmanagerconfigs.monitoring.coreos.com serverside-applied

customresourcedefinition.apiextensions.k8s.io/alertmanagers.monitoring.coreos.com serverside-applied

customresourcedefinition.apiextensions.k8s.io/podmonitors.monitoring.coreos.com serverside-applied

customresourcedefinition.apiextensions.k8s.io/probes.monitoring.coreos.com serverside-applied

customresourcedefinition.apiextensions.k8s.io/prometheuses.monitoring.coreos.com serverside-applied

customresourcedefinition.apiextensions.k8s.io/prometheusagents.monitoring.coreos.com serverside-applied

customresourcedefinition.apiextensions.k8s.io/prometheusrules.monitoring.coreos.com serverside-applied

customresourcedefinition.apiextensions.k8s.io/scrapeconfigs.monitoring.coreos.com serverside-applied

customresourcedefinition.apiextensions.k8s.io/servicemonitors.monitoring.coreos.com serverside-applied

customresourcedefinition.apiextensions.k8s.io/thanosrulers.monitoring.coreos.com serverside-applied

namespace/monitoring serverside-applied

# Once the above has completed, apply the remaining manifests

root@k8s-master1:/opt/kube-prometheus/manifests# kubectl apply -f ./

# Check the pods

root@k8s-master1:/opt/kube-prometheus/manifests# kubectl get pod -n monitoring

NAME READY STATUS RESTARTS AGE

alertmanager-main-0 2/2 Running 0 2m38s

alertmanager-main-1 2/2 Running 0 2m38s

alertmanager-main-2 2/2 Running 0 2m38s

blackbox-exporter-7889b585f6-htgsz 3/3 Running 0 3m6s

grafana-6dd9984f6d-wxm85 1/1 Running 0 3m4s

kube-state-metrics-6649c5757b-wjcxq 3/3 Running 0 3m4s

node-exporter-264v2 2/2 Running 0 3m3s

node-exporter-44rf9 2/2 Running 0 3m3s

node-exporter-9dtff 2/2 Running 0 3m3s

node-exporter-b2k7t 2/2 Running 0 3m3s

node-exporter-fxggh 2/2 Running 0 3m3s

node-exporter-v24ls 2/2 Running 0 3m3s

prometheus-adapter-68c4db7bb5-dzzh2 1/1 Running 0 3m2s

prometheus-adapter-68c4db7bb5-m5dgd 1/1 Running 0 3m2s

prometheus-k8s-0 2/2 Running 0 2m36s

prometheus-k8s-1 2/2 Running 0 2m36s

prometheus-operator-58499f797-svnqm 2/2 Running 0 3m2s

# Check the Services

root@k8s-master1:/opt/kube-prometheus/manifests# kubectl get svc -n monitoring

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

alertmanager-main ClusterIP 10.10.19.186 <none> 9093/TCP,8080/TCP 4m33s

alertmanager-operated ClusterIP None <none> 9093/TCP,9094/TCP,9094/UDP 4m4s

blackbox-exporter ClusterIP 10.10.46.14 <none> 9115/TCP,19115/TCP 4m32s

grafana NodePort 10.10.193.189 <none> 3000:30300/TCP 2m1s

kube-state-metrics ClusterIP None <none> 8443/TCP,9443/TCP 4m30s

node-exporter ClusterIP None <none> 9100/TCP 4m29s

prometheus-adapter ClusterIP 10.10.174.49 <none> 443/TCP 4m28s

prometheus-k8s NodePort 10.10.242.68 <none> 9090:30090/TCP,8080:32567/TCP 2m

prometheus-operated ClusterIP None <none> 9090/TCP 4m2s

prometheus-operator ClusterIP None <none> 8443/TCP 4m28s

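Web access test (as the output above shows, grafana and prometheus-k8s are exposed as NodePort Services on ports 30300 and 30090)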
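One way to get the NodePort exposure shown in the output is to edit the `spec` of `manifests/prometheus-service.yaml` before applying it. The fragment below is a sketch that assumes the release-0.13 Service keeps its `web` and `reloader-web` ports; only `type` and `nodePort` change, and 30090 must be free and inside the cluster's NodePort range. `grafana-service.yaml` could be changed the same way (nodePort 30300 in the output above).

```yaml
# manifests/prometheus-service.yaml, spec excerpt (sketch)
spec:
  type: NodePort            # the manifest default is ClusterIP
  ports:
    - name: web
      port: 9090
      targetPort: web
      nodePort: 30090       # fixed external port; omit it to let Kubernetes choose one
    - name: reloader-web
      port: 8080
      targetPort: reloader-web
```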

Deploy Prometheus with custom YAML, deploy cAdvisor as a DaemonSet to monitor Pods, and deploy node-exporter as a DaemonSet

Deploy cAdvisor

cat daemonset-deploy-cadvisor.yaml

apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: cadvisor
  namespace: monitoring
spec:
  selector:
    matchLabels:
      app: cAdvisor
  template:
    metadata:
      labels:
        app: cAdvisor
    spec:
      tolerations:  # tolerate the NoSchedule taint so a cAdvisor pod also runs on master nodes
        - effect: NoSchedule
          key: node-role.kubernetes.io/master
        - effect: NoSchedule
          key: node-role.kubernetes.io/control-plane  # taint key used by newer kubeadm clusters (1.24+)
      hostNetwork: true  # use the host's network namespace directly
      restartPolicy: Always  # restart policy
      containers:
        - name: cadvisor
          image: registry.cn-hangzhou.aliyuncs.com/zhangshijie/cadvisor-amd64:v0.45.0
          imagePullPolicy: IfNotPresent  # image pull policy
          ports:
            - containerPort: 8080
          volumeMounts:
            - name: root
              mountPath: /rootfs
            - name: run
              mountPath: /var/run
            - name: sys
              mountPath: /sys
            - name: docker
              #mountPath: /var/lib/docker  # use this path with the Docker runtime
              mountPath: /var/lib/containerd
      volumes:
        - name: root
          hostPath:
            path: /
        - name: run
          hostPath:
            path: /var/run
        - name: sys
          hostPath:
            path: /sys
        - name: docker
          hostPath:
            #path: /var/lib/docker
            path: /var/lib/containerd

# Deploy

kubectl create ns monitoring

kubectl apply -f daemonset-deploy-cadvisor.yaml

# Check the pods

root@k8s-master1:/opt/1.prometheus-case-files# kubectl get pod -n monitoring

NAME READY STATUS RESTARTS AGE

cadvisor-27rlx 1/1 Running 0 42s

cadvisor-4dd5p 1/1 Running 0 42s

cadvisor-nj795 1/1 Running 0 42s

cadvisor-ntzlw 1/1 Running 0 42s

cadvisor-pdrz5 1/1 Running 0 42s

cadvisor-ww74q 1/1 Running 0 42s

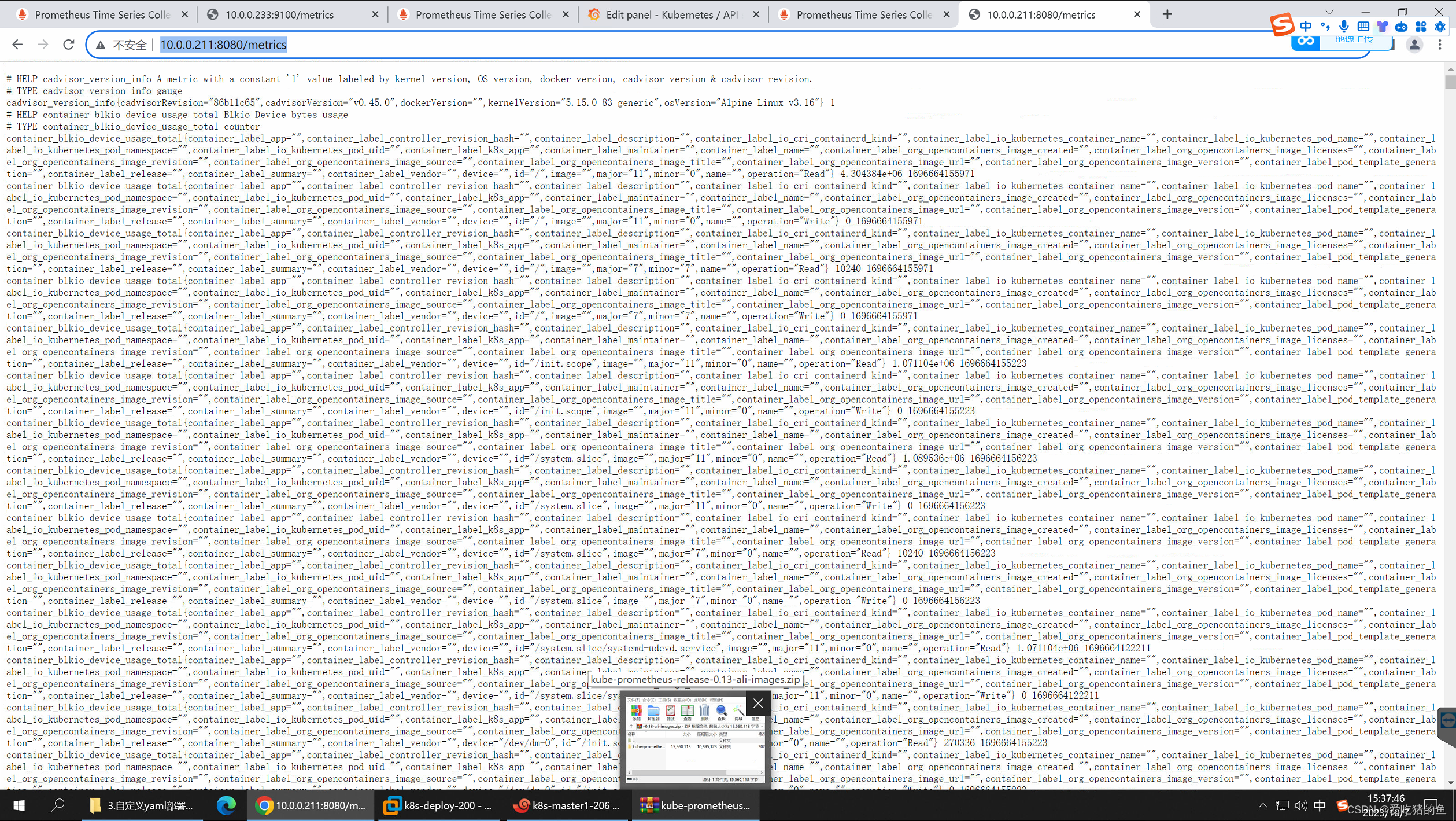

View the container metrics collected from the host in the browser

Per-node URL: http://10.0.0.211:8080/metrics

Deploy node-exporter

It collects host metrics such as CPU, memory and network interfaces

cat daemonset-deploy-node-exporter.yaml

apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: node-exporter
  namespace: monitoring
  labels:
    k8s-app: node-exporter
spec:
  selector:
    matchLabels:
      k8s-app: node-exporter
  template:
    metadata:
      labels:
        k8s-app: node-exporter
    spec:
      tolerations:
        - effect: NoSchedule
          key: node-role.kubernetes.io/master
        - effect: NoSchedule
          key: node-role.kubernetes.io/control-plane  # taint key used by newer kubeadm clusters (1.24+)
      containers:
        - image: registry.cn-hangzhou.aliyuncs.com/zhangshijie/node-exporter:v1.5.0
          imagePullPolicy: IfNotPresent
          name: prometheus-node-exporter
          ports:
            - containerPort: 9100
              hostPort: 9100
              protocol: TCP
              name: metrics
          volumeMounts:
            - mountPath: /host/proc
              name: proc
            - mountPath: /host/sys
              name: sys
            - mountPath: /host
              name: rootfs
          args:
            - --path.procfs=/host/proc
            - --path.sysfs=/host/sys
            - --path.rootfs=/host
      volumes:  # mount these host directories into the container so node-exporter can read the host's metrics
        - name: proc
          hostPath:
            path: /proc
        - name: sys
          hostPath:
            path: /sys
        - name: rootfs
          hostPath:
            path: /
      hostNetwork: true
      hostPID: true

#---
#apiVersion: v1
#kind: Service
#metadata:
#  annotations:
#    prometheus.io/scrape: "true"
#  labels:
#    k8s-app: node-exporter
#  name: node-exporter
#  namespace: monitoring
#spec:
#  type: NodePort
#  ports:
#    - name: http
#      port: 9100
#      nodePort: 39100  # outside the default NodePort range 30000-32767; valid only if the apiserver's --service-node-port-range was widened
#      protocol: TCP
#  selector:
#    k8s-app: node-exporter

# Deploy

kubectl apply -f daemonset-deploy-node-exporter.yaml

# Check the pods

root@k8s-master1:/opt/1.prometheus-case-files# kubectl get pod -n monitoring |grep node

node-exporter-584xx 1/1 Running 0 2m6s

node-exporter-f8l52 1/1 Running 0 2m6s

node-exporter-gspcc 1/1 Running 0 2m6s

node-exporter-jlvfd 1/1 Running 0 2m6s

node-exporter-ssdnw 1/1 Running 0 2m6s

node-exporter-vh9vm 1/1 Running 0 2m6s

Deploy Prometheus Server

Edit the Prometheus Server configuration file

vim prometheus-cfg.yaml

kind: ConfigMap
apiVersion: v1
metadata:
  labels:
    app: prometheus
  name: prometheus-config
  namespace: monitoring
data:
  prometheus.yml: |
    global:
      scrape_interval: 15s
      scrape_timeout: 10s
      evaluation_interval: 1m
    scrape_configs:
      - job_name: mysql-monitor-172.31.2.102
        static_configs:
          - targets: ['172.31.2.102:9104']
      - job_name: 'kube-state-metrics'  # state metrics for containers and other Kubernetes objects
        static_configs:
          - targets: ['172.31.7.111:31666']
      - job_name: 'kubernetes-node'  # service discovery: nodes (node-exporter on port 9100)
        kubernetes_sd_configs:
          - role: node
        relabel_configs:
          - source_labels: [__address__]
            regex: '(.*):10250'
            replacement: '${1}:9100'
            target_label: __address__
            action: replace
          - action: labelmap
            regex: __meta_kubernetes_node_label_(.+)
      - job_name: 'kubernetes-cadvisor'  # service discovery: nodes (cAdvisor DaemonSet on port 8080)
        kubernetes_sd_configs:
          - role: node
        relabel_configs:
          - source_labels: [__address__]
            regex: '(.*):10250'
            replacement: '${1}:8080'
            target_label: __address__
            action: replace
          - action: labelmap
            regex: __meta_kubernetes_node_label_(.+)
      - job_name: 'kubernetes-node-cadvisor'
        kubernetes_sd_configs:
          - role: node
        scheme: https
        tls_config:
          ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
        bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
        relabel_configs:
          - action: labelmap
            regex: __meta_kubernetes_node_label_(.+)
          - target_label: __address__
            replacement: kubernetes.default.svc:443
          - source_labels: [__meta_kubernetes_node_name]
            regex: (.+)
            target_label: __metrics_path__
            replacement: /api/v1/nodes/${1}/proxy/metrics/cadvisor
      - job_name: 'kubernetes-apiserver'
        kubernetes_sd_configs:
          - role: endpoints
        scheme: https
        tls_config:
          ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
        bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
        relabel_configs:
          - source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]
            action: keep
            regex: default;kubernetes;https
      - job_name: 'kubernetes-service-endpoints'
        kubernetes_sd_configs:
          - role: endpoints
        relabel_configs:
          - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape]
            action: keep
            regex: true
          - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scheme]
            action: replace
            target_label: __scheme__
            regex: (https?)
          - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_path]
            action: replace
            target_label: __metrics_path__
            regex: (.+)
          - source_labels: [__address__, __meta_kubernetes_service_annotation_prometheus_io_port]
            action: replace
            target_label: __address__
            regex: ([^:]+)(?::\d+)?;(\d+)
            replacement: $1:$2
          - action: labelmap
            regex: __meta_kubernetes_service_label_(.+)
          - source_labels: [__meta_kubernetes_namespace]
            action: replace
            target_label: kubernetes_namespace
          - source_labels: [__meta_kubernetes_service_name]
            action: replace
            target_label: kubernetes_name
      - job_name: 'kubernetes-nginx-pods'
        kubernetes_sd_configs:
          - role: pod
            namespaces:  # optional; if omitted, pods in all namespaces are discovered
              names:
                - myserver
                - magedu
        relabel_configs:
          - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scrape]
            action: keep
            regex: true
          - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scheme]
            action: replace
            target_label: __scheme__
            regex: (https?)
          - source_labels: [__address__, __meta_kubernetes_pod_annotation_prometheus_io_port]
            action: replace
            target_label: __address__
            regex: ([^:]+)(?::\d+)?;(\d+)
            replacement: $1:$2
          - action: labelmap
            regex: __meta_kubernetes_pod_label_(.+)
          - source_labels: [__meta_kubernetes_namespace]
            action: replace
            target_label: kubernetes_namespace
          - source_labels: [__meta_kubernetes_pod_name]
            action: replace
            target_label: kubernetes_pod_name

# Create the ConfigMap

kubectl apply -f prometheus-cfg.yaml

Deploy the Prometheus Server Deployment

Prometheus Server can be pinned to a fixed node (its CPU usage spikes whenever it scrapes targets), and its data storage also needs to be planned.
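Besides the commented-out `nodeName` in the manifest below, a `nodeSelector` in the Pod template is another way to pin the Pod. A sketch, assuming the target node's `kubernetes.io/hostname` label is `172.31.7.113` (on clusters whose nodes are registered by IP, as here, the label value is usually that IP; check with `kubectl get node --show-labels`):

```yaml
# Deployment excerpt (sketch): schedule the Prometheus Pod only on the matching node
spec:
  template:
    spec:
      nodeSelector:
        kubernetes.io/hostname: 172.31.7.113   # assumption: this node's hostname label value
```

Unlike `nodeName`, which bypasses the scheduler, `nodeSelector` still lets the scheduler check the Pod's resource requests against the node.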

vim prometheus-deployment.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: prometheus-server
  namespace: monitoring
  labels:
    app: prometheus
spec:
  replicas: 1
  selector:
    matchLabels:
      app: prometheus
      component: server
    #matchExpressions:
    #- {key: app, operator: In, values: [prometheus]}
    #- {key: component, operator: In, values: [server]}
  template:
    metadata:
      labels:
        app: prometheus
        component: server
      annotations:
        prometheus.io/scrape: 'false'
    spec:
      #nodeName: 172.31.7.113  # run Prometheus on this specific node
      serviceAccountName: monitor
      containers:
        - name: prometheus
          image: registry.cn-hangzhou.aliyuncs.com/zhangshijie/prometheus:v2.42.0
          imagePullPolicy: IfNotPresent
          command:  # Prometheus startup arguments
            - prometheus
            - --config.file=/etc/prometheus/prometheus.yml
            - --storage.tsdb.path=/prometheus  # data directory
            - --storage.tsdb.retention.time=720h  # data retention period (replaces the deprecated --storage.tsdb.retention)
            - --web.enable-lifecycle
          resources:
            limits:
              memory: "2048Mi"
              cpu: "1"
            requests:
              memory: "2048Mi"
              cpu: "1"
          ports:
            - containerPort: 9090
              protocol: TCP
          volumeMounts:
            - mountPath: /etc/prometheus/prometheus.yml
              name: prometheus-config
              subPath: prometheus.yml
            - mountPath: /prometheus/
              name: prometheus-storage-volume
      volumes:
        - name: prometheus-config
          configMap:
            name: prometheus-config
            items:
              - key: prometheus.yml
                path: prometheus.yml
                mode: 0644
        - name: prometheus-storage-volume
          nfs:
            server: 10.0.0.200
            path: /data/k8sdata/prometheusdata
          #hostPath:
          #  path: /data/prometheusdata
          #  type: Directory

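# Prometheus runs as an unprivileged user, so the ownership of /data/k8sdata/prometheusdata must be changed.

# Run Prometheus in a container first to see which user it runs as (UID 65534 here), then give that user ownership of /data/k8sdata/prometheusdata

root@k8s-deploy:~# chown -R 65534:65534 /data/k8sdata/prometheusdata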

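# Create the monitoring ServiceAccount referenced by serviceAccountName: monitor

kubectl create serviceaccount monitor -n monitoring

# Grant the monitor ServiceAccount the cluster-admin role (very broad; a ClusterRoleBinding is cluster-scoped, so -n has no effect here)

kubectl create clusterrolebinding monitor-clusterrolebinding -n monitoring --clusterrole=cluster-admin --serviceaccount=monitoring:monitor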

# Deploy:

kubectl apply -f prometheus-deployment.yaml

# Check

root@k8s-master1:/opt/1.prometheus-case-files# kubectl get pod -n monitoring |grep prometheus

prometheus-server-5db694b586-ghcfv 1/1 Running 0 50s

# Check the logs for startup errors

kubectl logs -f prometheus-server-5db694b586-ghcfv -n monitoring

Create a Service for access from outside the cluster

root@k8s-master1:/opt/1.prometheus-case-files# cat prometheus-svc.yaml

---
apiVersion: v1
kind: Service
metadata:
  name: prometheus
  namespace: monitoring
  labels:
    app: prometheus
spec:
  type: NodePort
  ports:
    - port: 9090
      targetPort: 9090
      nodePort: 30090
      protocol: TCP
  selector:
    app: prometheus
    component: server

# Deploy

kubectl apply -f prometheus-svc.yaml

# Check

root@k8s-master1:/opt/1.prometheus-case-files# kubectl get svc -n monitoring

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

prometheus NodePort 10.10.202.14 <none> 9090:30090/TCP 29m

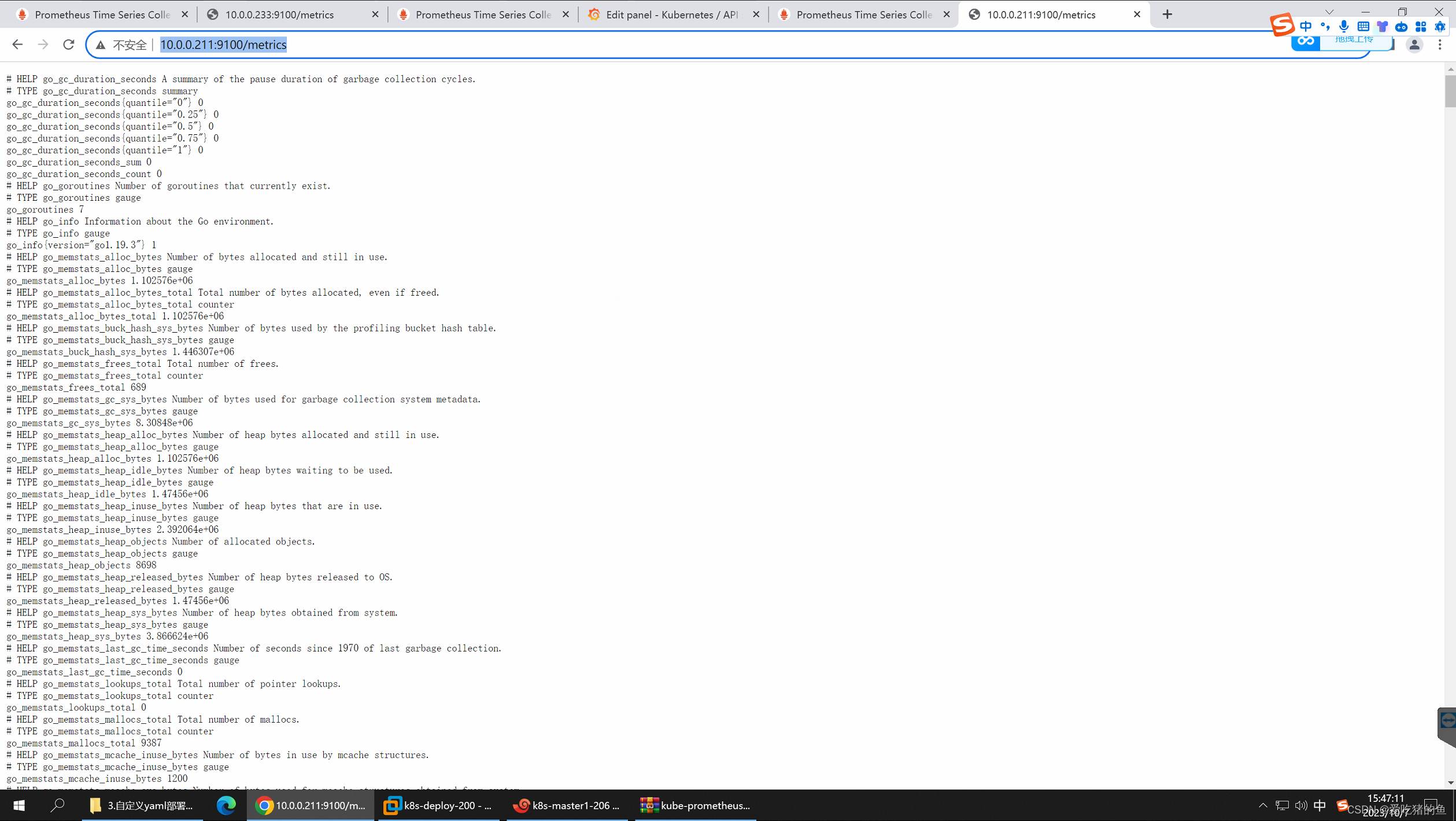

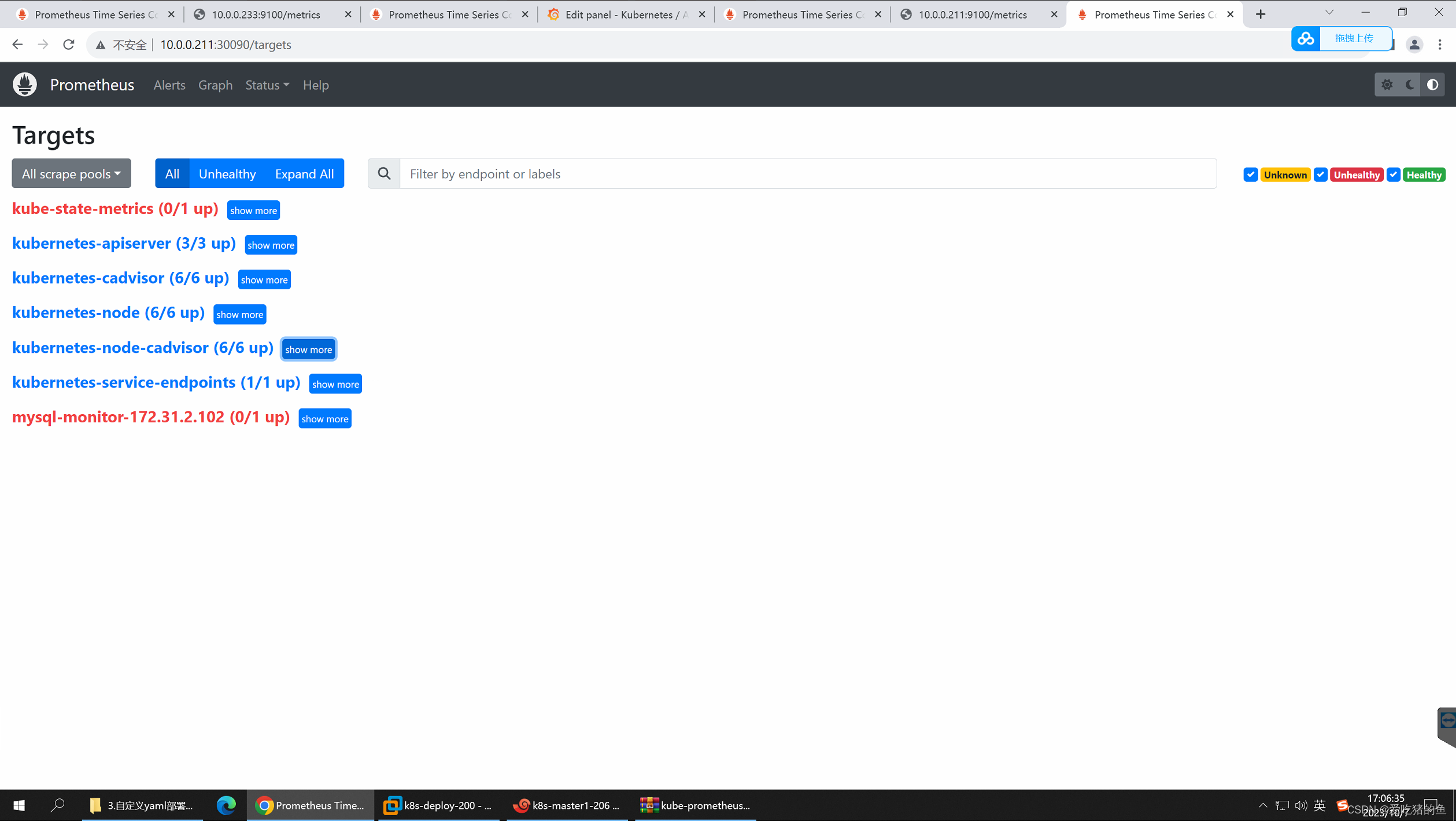

Verify in the web UI

Summary: Prometheus service discovery basics, relabeling basics and syntax

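Prometheus can obtain its scrape targets in several ways, such as static configuration and dynamic service discovery. It supports many service discovery mechanisms; the most commonly used are: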

https://prometheus.io/docs/prometheus/latest/configuration/configuration/#configuration-file

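1. kubernetes_sd_configs: service discovery based on the Kubernetes API, letting Prometheus dynamically discover monitored targets in Kubernetes

2. static_configs: static configuration; the targets are listed in the Prometheus configuration file

3. dns_sd_configs: discover targets through DNS

4. consul_sd_configs: Consul service discovery; targets are discovered dynamically from the services registered in Consul

5. file_sd_configs: discover targets from specified files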

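Static configuration (static_configs): every time a new target instance needs to be monitored, the configuration file must be edited by hand to add the target.

Consul service discovery (consul_sd_configs): Prometheus continuously watches Consul; when the services registered in Consul change, Prometheus automatically picks up all targets registered there.

Kubernetes service discovery (kubernetes_sd_configs): Prometheus talks to the Kubernetes API to dynamically discover all monitorable targets deployed in Kubernetes.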

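Discovery roles supported by kubernetes_sd_configs:

node  # nodes

service  # Services

pod  # Pods

endpoints  # the Pods behind a Service, via its Endpoints

endpointslice  # like endpoints, but via the EndpointSlice API (Endpoints split into slices)

ingress  # Ingresses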
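As an illustration of the `endpointslice` role, the kubernetes-apiserver job from the ConfigMap above could be written against EndpointSlices instead of Endpoints. A sketch, assuming Prometheus runs in-cluster with the same ServiceAccount mounts and that its RBAC also allows get/list/watch on `endpointslices` in the `discovery.k8s.io` API group; the job name is hypothetical, and the port-name meta label changes from `endpoint` to `endpointslice`:

```yaml
- job_name: 'kubernetes-apiserver-endpointslice'   # hypothetical job name
  kubernetes_sd_configs:
    - role: endpointslice
  scheme: https
  tls_config:
    ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
  bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
  relabel_configs:
    # keep only the "https" port of the default/kubernetes Service
    - source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name, __meta_kubernetes_endpointslice_port_name]
      action: keep
      regex: default;kubernetes;https
```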

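Introduction to relabeling and kubernetes_sd_configs

Prometheus relabeling (rewriting labels) is very powerful: before a target is scraped, it can dynamically rewrite the target's metadata labels, adding new labels or overwriting existing ones.

After Prometheus discovers targets from the Kubernetes API, every discovered target carries a set of raw metadata labels (the __meta_kubernetes_* labels are built from what the Kubernetes API server returns to Prometheus). Every target also has these default labels:

1. __address__: the target address in <host>:<port> format

2. __scheme__: the scheme used to scrape the target, HTTP or HTTPS

3. __metrics_path__: the URL path used to scrape the target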

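Relabeling basics: purpose

To make metrics easier to identify and to simplify later dashboards and alerting, Prometheus can modify the labels of discovered targets. Relabeling can happen at two stages:

1. relabel_configs: before the target is scraped (for example, to redefine label information such as the target IP or port before collecting data), relabel_configs can add, modify or delete labels, and can also keep only specific targets or filter targets out.

2. metric_relabel_configs: after the target has been scraped, i.e. once the metric samples have been fetched, metric_relabel_configs performs the final relabeling and filtering before the samples are stored.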
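A minimal sketch of the second stage, assuming you want to discard the Go garbage-collection duration series that Go-based exporters expose. The metric name is stored in the `__name__` label, so dropping on it removes those series after the scrape but before they are written to storage; the regex is only an illustrative pattern:

```yaml
- job_name: 'kubernetes-node'
  # ... kubernetes_sd_configs and relabel_configs as shown earlier ...
  metric_relabel_configs:
    - source_labels: [__name__]
      regex: 'go_gc_duration_seconds.*'   # illustrative pattern
      action: drop
```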

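Relabel fields:

source_labels: the source labels, i.e. label names before relabeling; their values are joined with ";" (the default separator) before regex is applied

target_label: the name of the label that receives the result of the action

regex: the regular expression matched against the joined source label values; it is anchored at both ends and defaults to (.*)

replacement: the value written to target_label; it can reference the regex capture groups, e.g. $1:$2 for a regex with two groups (default $1)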
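To see how these fields work together, here is the port-rewrite rule from the kubernetes-service-endpoints job with each step spelled out. The sample values in the comments (10.200.1.5:8080 and 9153) are made up for illustration:

```yaml
- source_labels: [__address__, __meta_kubernetes_service_annotation_prometheus_io_port]
  # values joined with ";" -> "10.200.1.5:8080;9153"
  regex: ([^:]+)(?::\d+)?;(\d+)
  # $1 = "10.200.1.5" (host), $2 = "9153" (annotation port); the old port ":8080" is discarded
  replacement: $1:$2
  # result "10.200.1.5:9153" is written into __address__
  target_label: __address__
  action: replace
```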

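Actions:

replace: match regex against the joined source label values and write replacement, which can reference the capture groups, into target_label. This is the default action.

keep: keep only targets whose source_labels match regex; targets that do not match are dropped, so only matching targets are scraped.

drop: drop targets whose source_labels match regex, so only non-matching targets are scraped.

labelmap: match regex against all label names, then copy the values of the matching labels to new label names given by replacement, which can reference the capture groups ($1, ${1}, ${2}, ...).

labelkeep: match regex against all label names; every label that does not match is removed from the label set.

labeldrop: match regex against all label names; every label that matches is removed from the label set.

hashmod: compute a hash of the joined source_labels values, take it modulo a user-defined modulus and write the result into target_label. This can be used to split targets into groups, for example to shard scraping across several Prometheus servers.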
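A common use of `hashmod` is sharding: several Prometheus servers share the same target list and each keeps only its own share. A sketch assuming two servers, where this one keeps shard 0 (the other would use `regex: '1'`). The `__tmp_hash` label name is an illustrative choice; labels starting with `__` are removed after relabeling, so it never reaches storage. The last rule shows `labeldrop` and assumes it is placed after a `labelmap` rule that copied node labels:

```yaml
relabel_configs:
  - source_labels: [__address__]
    modulus: 2                  # number of shards (Prometheus servers)
    target_label: __tmp_hash
    action: hashmod
  - source_labels: [__tmp_hash]
    regex: '0'                  # this server keeps shard 0 only
    action: keep
  # illustrative: remove node labels copied from beta.kubernetes.io/* (place after the labelmap rule)
  - regex: 'beta_kubernetes_io_.*'
    action: labeldrop
```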

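About annotations

About __meta_kubernetes_service_annotation_prometheus_io_scrape and __meta_kubernetes_service_annotation_prometheus_io_port:

In Kubernetes, when a Service carries the annotations prometheus.io/scrape and prometheus.io/port (with the pod role, the annotations go on the Pod template, e.g. in a Deployment), Prometheus exposes them as __meta_kubernetes_service_annotation_prometheus_io_scrape and __meta_kubernetes_service_annotation_prometheus_io_port. With the discovery rules above, a target is kept only if its prometheus.io/scrape annotation is "true"; its scrape port is then taken from prometheus.io/port (for example 9153). Only matching targets are kept, scraped and relabeled, so these annotations select exactly the intended targets and filter out all others.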
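A sketch of a Service that the kubernetes-service-endpoints job would pick up. The name `my-app`, the selector and the port are hypothetical; annotation values must be strings, hence the quotes:

```yaml
apiVersion: v1
kind: Service
metadata:
  name: my-app                     # hypothetical
  namespace: myserver
  annotations:
    prometheus.io/scrape: "true"   # matched by the keep rule (regex: true)
    prometheus.io/port: "9153"     # replaces the port in __address__
    prometheus.io/path: "/metrics" # optional; copied into __metrics_path__
spec:
  selector:
    app: my-app
  ports:
    - name: metrics
      port: 9153
      targetPort: 9153
```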

Prometheus service discovery for nodes, Pods, cAdvisor and more through the API server:

node

- job_name: 'kubernetes-node'  # job name
  kubernetes_sd_configs:  # discovery configuration
    - role: node  # discovery role
  relabel_configs:  # relabeling rules
    - source_labels: [__address__]  # source label
      regex: '(.*):10250'  # match addresses ending in :10250 (10250 is the kubelet port)
      replacement: '${1}:9100'  # rewrite to IP:9100, the node-exporter port
      target_label: __address__  # write the result back into __address__
      action: replace  # set target_label to replacement
    # copy the discovered node labels onto the target
    - action: labelmap
      regex: __meta_kubernetes_node_label_(.+)

pod

- job_name: 'kubernetes-nginx-pods'
  kubernetes_sd_configs:
    - role: pod
      namespaces:  # optional; if omitted, pods in all namespaces are discovered
        names:
          - myserver
          - magedu
  relabel_configs:
    - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scrape]
      action: keep
      regex: true
    - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scheme]
      action: replace
      target_label: __scheme__
      regex: (https?)
    - source_labels: [__address__, __meta_kubernetes_pod_annotation_prometheus_io_port]
      action: replace
      target_label: __address__
      regex: ([^:]+)(?::\d+)?;(\d+)
      replacement: $1:$2
    - action: labelmap
      regex: __meta_kubernetes_pod_label_(.+)
    - source_labels: [__meta_kubernetes_namespace]
      action: replace
      target_label: kubernetes_namespace
    - source_labels: [__meta_kubernetes_pod_name]
      action: replace
      target_label: kubernetes_pod_name

Cadvisor

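Collects metrics for the containers on each node

The certificate path in tls_config is the CA file every Pod uses when connecting to the API server. Unless automounting is disabled (automountServiceAccountToken: false), Kubernetes mounts the ServiceAccount credentials (ca.crt, namespace and token) into every Pod at /var/run/secrets/kubernetes.io/serviceaccount/ when it starts, whether or not the Pod uses them, so the CA certificate and token are available whenever the Pod calls the API server.

root@k8s-master1:/opt/1.prometheus-case-files# kubectl exec cadvisor-27rlx -n monitoring -- ls /var/run/secrets/kubernetes.io/serviceaccount/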

ca.crt

namespace

token

# Query the cAdvisor data through the Kubernetes API ($TOKEN must hold a token that is allowed to access nodes/proxy):

curl --cacert /etc/kubernetes/ssl/ca.pem -H "Authorization:Bearer $TOKEN" https://172.31.7.101:6443/api/v1/nodes/172.31.7.113/proxy/metrics/cadvisor

- job_name: 'kubernetes-node-cadvisor'
  kubernetes_sd_configs:
    - role: node
  scheme: https
  tls_config:
    ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
  bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
  relabel_configs:
    - action: labelmap
      regex: __meta_kubernetes_node_label_(.+)
    - target_label: __address__
      replacement: kubernetes.default.svc:443
    - source_labels: [__meta_kubernetes_node_name]
      regex: (.+)
      target_label: __metrics_path__
      replacement: /api/v1/nodes/${1}/proxy/metrics/cadvisor

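Prometheus deployed outside the Kubernetes cluster (binary install) with service discovery

Deploying Prometheus outside the Kubernetes cluster and implementing service discovery:

Create the service discovery ServiceAccount prometheus in namespace monitoring and grant it permissions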

cat prom-rbac.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  name: prometheus
  namespace: monitoring
---
apiVersion: v1
kind: Secret
type: kubernetes.io/service-account-token
metadata:
  name: monitoring-token
  namespace: monitoring
  annotations:
    kubernetes.io/service-account.name: "prometheus"
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: prometheus
rules:
  - apiGroups:
      - ""
    resources:
      - nodes
      - services
      - endpoints
      - pods
      - nodes/proxy
    verbs:
      - get
      - list
      - watch
  - apiGroups:
      - "extensions"
      - "networking.k8s.io"  # Ingress is served only from networking.k8s.io since Kubernetes 1.22
    resources:
      - ingresses
    verbs:
      - get
      - list
      - watch
  - apiGroups:
      - ""
    resources:
      - configmaps
      - nodes/metrics
    verbs:
      - get
  - nonResourceURLs:
      - /metrics
    verbs:
      - get
---
#apiVersion: rbac.authorization.k8s.io/v1beta1
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: prometheus
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: prometheus
subjects:
  - kind: ServiceAccount
    name: prometheus
    namespace: monitoring

# Create

kubectl apply -f prom-rbac.yaml

# Check the Secret

root@k8s-master1:/opt/1.prometheus-case-files# kubectl get secrets -n monitoring

NAME TYPE DATA AGE

monitoring-token kubernetes.io/service-account-token 3 58s

# Get the token

root@k8s-master1:/opt/1.prometheus-case-files# kubectl describe secret -n monitoring monitoring-token

Name: monitoring-token

Namespace: monitoring

Labels: <none>

Annotations: kubernetes.io/service-account.name: prometheus

kubernetes.io/service-account.uid: 624325fc-be1a-4246-a90e-150a42e61356

Type: kubernetes.io/service-account-token

Data

====

ca.crt: 1310 bytes

namespace: 10 bytes

token: eyJhbGciOiJSUzI1NiIsImtpZCI6IlEwRUo4T2s0TjZ6eUczOFIyX0FIMmlhTnpiTUlkWEVCSzNmVS0xWS1UclkifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJtb25pdG9yaW5nIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZWNyZXQubmFtZSI6Im1vbml0b3JpbmctdG9rZW4iLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC5uYW1lIjoicHJvbWV0aGV1cyIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50LnVpZCI6IjYyNDMyNWZjLWJlMWEtNDI0Ni1hOTBlLTE1MGE0MmU2MTM1NiIsInN1YiI6InN5c3RlbTpzZXJ2aWNlYWNjb3VudDptb25pdG9yaW5nOnByb21ldGhldXMifQ.Ci4gFBWu-lq0QM2hmm97b8Mz3_IE6IfoySdTfwcTzNnnD-Q_F-y8d2sgf9SOnOnL42RGXh7jm5Cb-lJoiqvoK0isgJEHrRG46JNz-FdNGuuHDLs8FRkSGDcUwP-FcRZR33jslVfnGHGPGDZQl0gudsIv2uQuLKDiwuTOAi8H3ttXgPmgRrRp3x8yP2SFE9F75jMNEoNsKEy3stkha1IU2qs0aS_5LNFPW2Bsku4a23hNoeW1dryrbKbXJ4TyRflCLXJyJrN88CAlyYRNnQ6jXcfrghY4h7Qs66g7rEz7qN5Jv_iBV86d3DK08P2DIxn0ivHQJfRkxoxknSI8d9ZTtA

Save the token to a file on the Prometheus server outside the cluster

vim /apps/prometheus/k8s.token

eyJhbGciOiJSUzI1NiIsImtpZCI6IlEwRUo4T2s0TjZ6eUczOFIyX0FIMmlhTnpiTUlkWEVCSzNmVS0xWS1UclkifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJtb25pdG9yaW5nIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZWNyZXQubmFtZSI6Im1vbml0b3JpbmctdG9rZW4iLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC5uYW1lIjoicHJvbWV0aGV1cyIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50LnVpZCI6IjYyNDMyNWZjLWJlMWEtNDI0Ni1hOTBlLTE1MGE0MmU2MTM1NiIsInN1YiI6InN5c3RlbTpzZXJ2aWNlYWNjb3VudDptb25pdG9yaW5nOnByb21ldGhldXMifQ.Ci4gFBWu-lq0QM2hmm97b8Mz3_IE6IfoySdTfwcTzNnnD-Q_F-y8d2sgf9SOnOnL42RGXh7jm5Cb-lJoiqvoK0isgJEHrRG46JNz-FdNGuuHDLs8FRkSGDcUwP-FcRZR33jslVfnGHGPGDZQl0gudsIv2uQuLKDiwuTOAi8H3ttXgPmgRrRp3x8yP2SFE9F75jMNEoNsKEy3stkha1IU2qs0aS_5LNFPW2Bsku4a23hNoeW1dryrbKbXJ4TyRflCLXJyJrN88CAlyYRNnQ6jXcfrghY4h7Qs66g7rEz7qN5Jv_iBV86d3DK08P2DIxn0ivHQJfRkxoxknSI8d9ZTtA

Edit the Prometheus configuration file

cat /apps/prometheus/prometheus.yml

# my global config
global:
  scrape_interval: 15s # Set the scrape interval to every 15 seconds. Default is every 1 minute.
  evaluation_interval: 15s # Evaluate rules every 15 seconds. The default is every 1 minute.
  # scrape_timeout is set to the global default (10s).

# Alertmanager configuration
alerting:
  alertmanagers:
    - static_configs:
        - targets:
          # - alertmanager:9093

# Load rules once and periodically evaluate them according to the global 'evaluation_interval'.
rule_files:
  # - "first_rules.yml"
  # - "second_rules.yml"

# A scrape configuration containing exactly one endpoint to scrape:
# Here it's Prometheus itself.
scrape_configs:
  # The job name is added as a label `job=<job_name>` to any timeseries scraped from this config.
  - job_name: "prometheus"
    # metrics_path defaults to '/metrics'
    # scheme defaults to 'http'.
    static_configs:
      - targets: ["localhost:9090"]

  # API server endpoint discovery
  - job_name: 'kubernetes-apiservers-monitor'
    kubernetes_sd_configs:
      - role: endpoints
        api_server: https://10.0.0.190:6443
        tls_config:
          insecure_skip_verify: true
        bearer_token_file: /apps/prometheus/k8s.token
    scheme: https
    tls_config:
      insecure_skip_verify: true
    bearer_token_file: /apps/prometheus/k8s.token
    relabel_configs:
      - source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]
        action: keep
        regex: default;kubernetes;https
      # The discovered port and scheme can be rewritten as needed; here the API server
      # addresses (port 6443) are rewritten so node-exporter on port 9100 of those nodes is scraped over HTTP
      - source_labels: [__address__]
        regex: '(.*):6443'
        replacement: '${1}:9100'
        target_label: __address__
        action: replace
      - source_labels: [__scheme__]
        regex: https
        replacement: http
        target_label: __scheme__
        action: replace

  # node discovery
  - job_name: 'kubernetes-nodes-monitor'
    scheme: http
    tls_config:
      insecure_skip_verify: true
    bearer_token_file: /apps/prometheus/k8s.token
    kubernetes_sd_configs:
      - role: node
        api_server: https://10.0.0.190:6443
        tls_config:
          insecure_skip_verify: true
        bearer_token_file: /apps/prometheus/k8s.token
    relabel_configs:
      - source_labels: [__address__]
        regex: '(.*):10250'
        replacement: '${1}:9100'
        target_label: __address__
        action: replace
      - source_labels: [__meta_kubernetes_node_label_failure_domain_beta_kubernetes_io_region]
        regex: '(.*)'
        replacement: '${1}'
        action: replace
        target_label: LOC
      - source_labels: [__meta_kubernetes_node_label_failure_domain_beta_kubernetes_io_region]
        regex: '(.*)'
        replacement: 'NODE'
        action: replace
        target_label: Type
      - source_labels: [__meta_kubernetes_node_label_failure_domain_beta_kubernetes_io_region]
        regex: '(.*)'
        replacement: 'K8S-test'
        action: replace
        target_label: Env
      - action: labelmap
        regex: __meta_kubernetes_node_label_(.+)

  # Pods in the specified namespaces
  - job_name: 'kubernetes-发现指定namespace的所有pod'  # "discover all pods in the specified namespaces"
    kubernetes_sd_configs:
      - role: pod
        api_server: https://10.0.0.190:6443
        tls_config:
          insecure_skip_verify: true
        bearer_token_file: /apps/prometheus/k8s.token
        namespaces:
          names:
            - myserver
            - magedu
    relabel_configs:
      - action: labelmap
        regex: __meta_kubernetes_pod_label_(.+)
      - source_labels: [__meta_kubernetes_namespace]
        action: replace
        target_label: kubernetes_namespace
      - source_labels: [__meta_kubernetes_pod_name]
        action: replace
        target_label: kubernetes_pod_name

  # Pods selected by discovery conditions (annotations)
  - job_name: 'kubernetes-指定发现条件的pod'  # "pods matching the specified discovery conditions"
    kubernetes_sd_configs:
      - role: pod
        api_server: https://10.0.0.190:6443
        tls_config:
          insecure_skip_verify: true
        bearer_token_file: /apps/prometheus/k8s.token
    relabel_configs:
      - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scrape]
        action: keep
        regex: true
      - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_path]
        action: replace
        target_label: __metrics_path__
        regex: (.+)
      - source_labels: [__address__, __meta_kubernetes_pod_annotation_prometheus_io_port]
        action: replace
        regex: ([^:]+)(?::\d+)?;(\d+)
        replacement: $1:$2
        target_label: __address__
      - action: labelmap
        regex: __meta_kubernetes_pod_label_(.+)
      - source_labels: [__meta_kubernetes_namespace]
        action: replace
        target_label: kubernetes_namespace
      - source_labels: [__meta_kubernetes_pod_name]
        action: replace
        target_label: kubernetes_pod_name
      - source_labels: [__meta_kubernetes_pod_label_pod_template_hash]
        regex: '(.*)'
        replacement: 'K8S-test'
        action: replace
        target_label: Env

# Restart Prometheus

systemctl restart prometheus.service

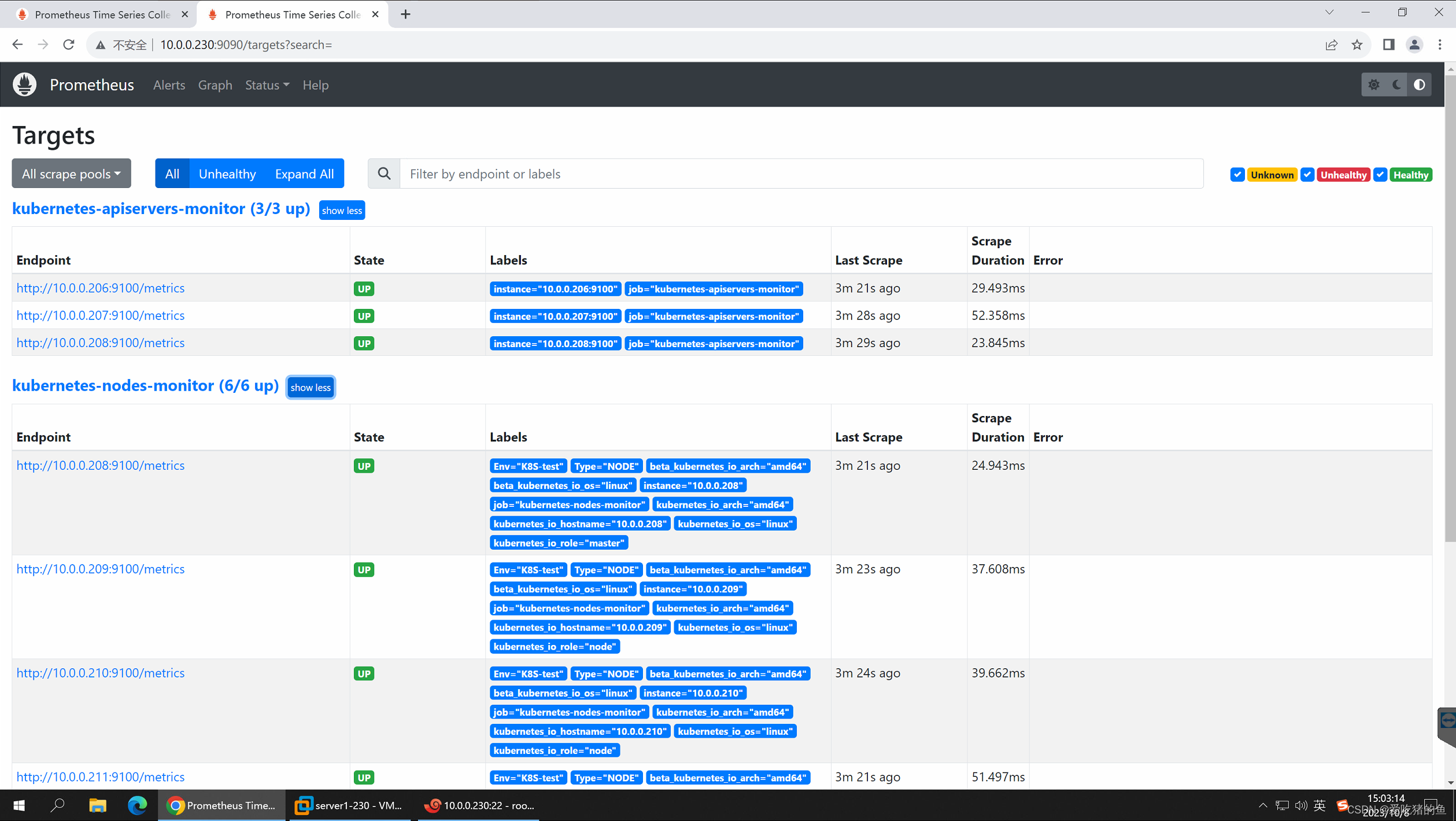

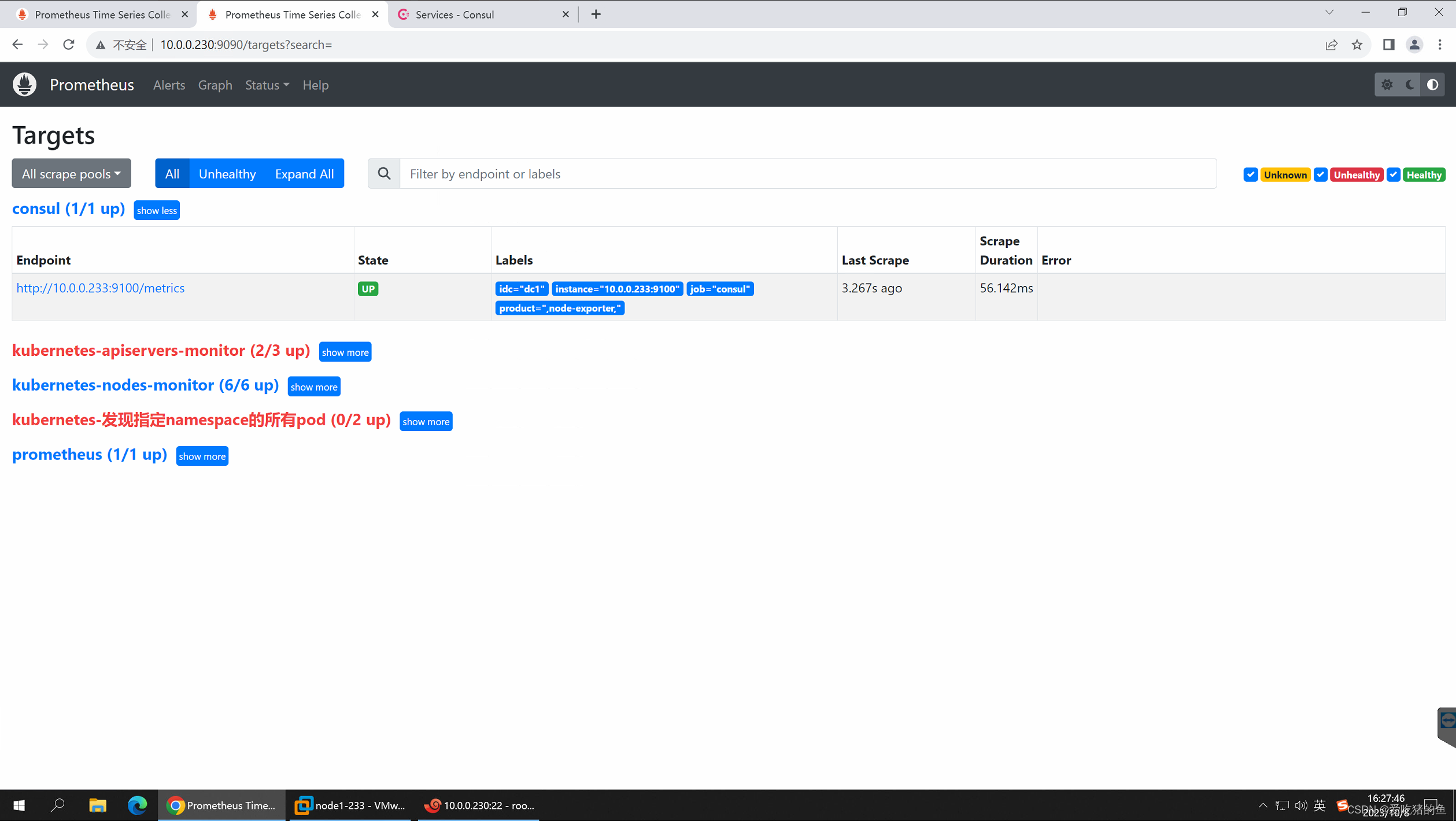

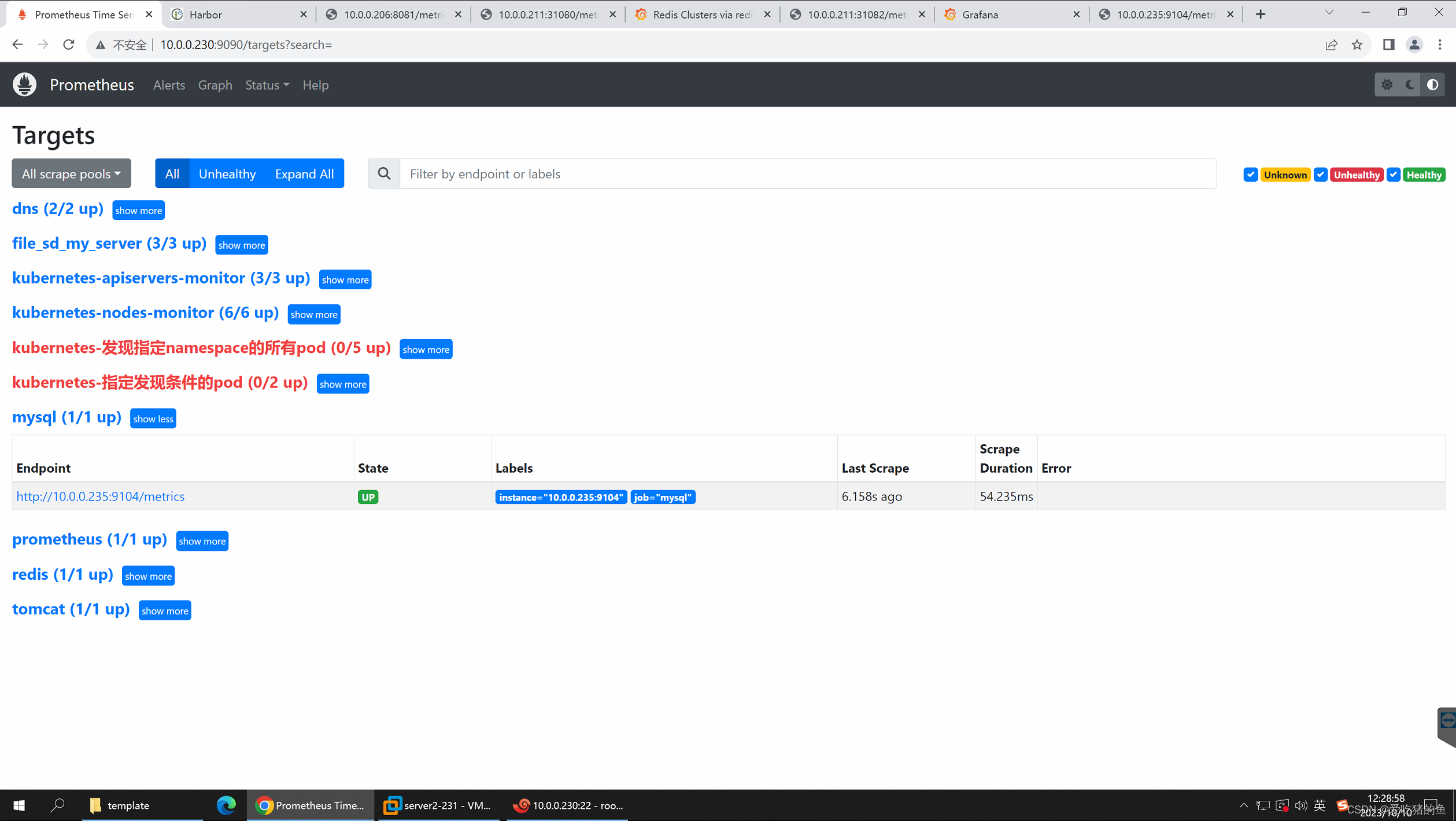

Verify in the web UI

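Prometheus service discovery based on Consul, file/HTTP and DNS

Consul-based service discovery (consul_sd_configs)

Some services cannot be discovered by Prometheus directly. They can be registered in Consul first and then discovered indirectly through Consul.

Deploy Consul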

cat docker-compose.yaml

version: '3.7'
services:
  consul1:
    image: consul:1.14.8
    container_name: consul-server
    command: agent -server -bootstrap -node=node1 -ui -bind=0.0.0.0 -client=0.0.0.0 -datacenter=dc1
    ports:
      - 8500:8500
    volumes:
      - /data/consul:/consul/data
      - /data/consul/log:/consul/log

mkdir -p /data/consul/log

docker-compose up -d

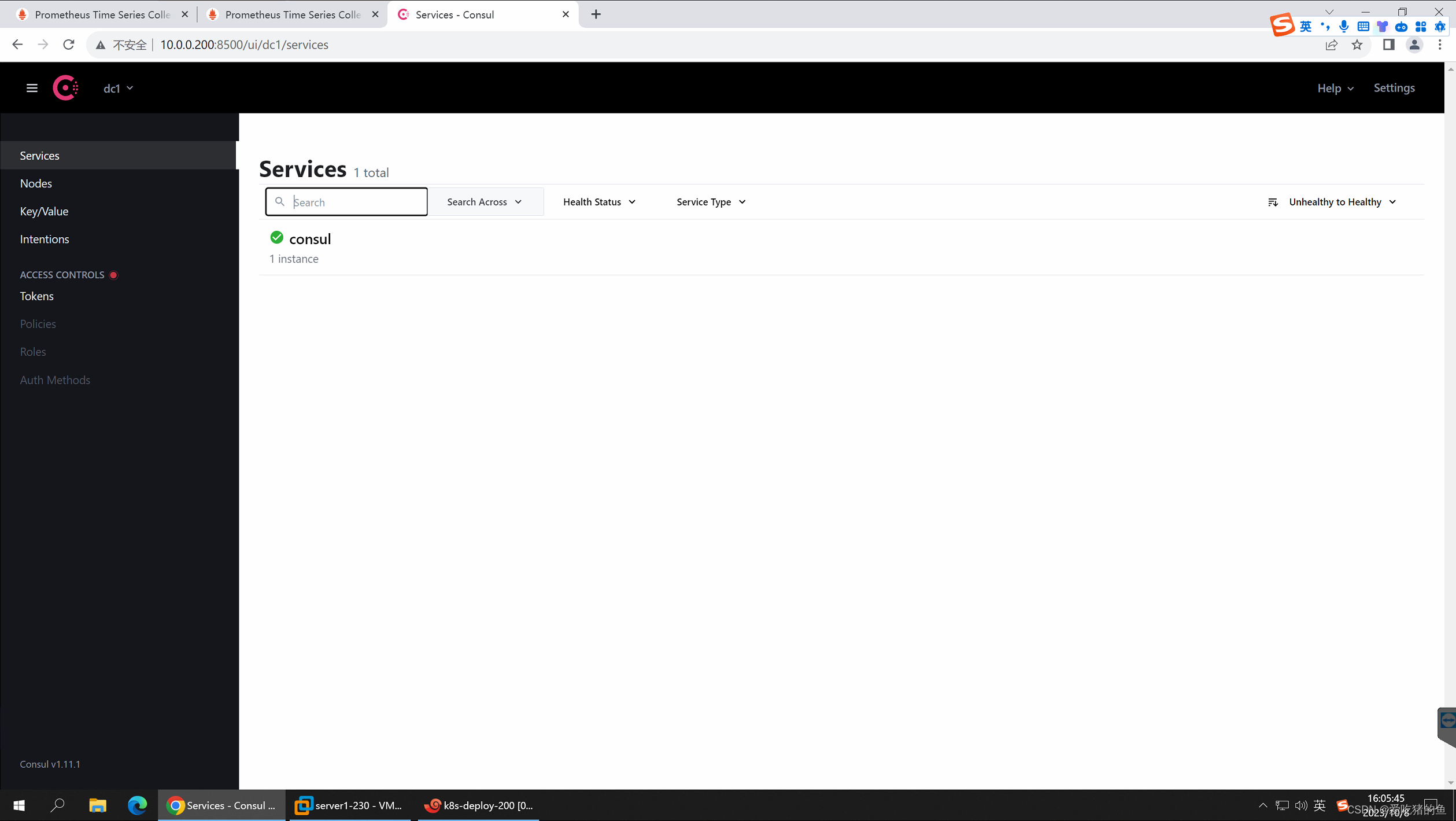

Verify in the web UI

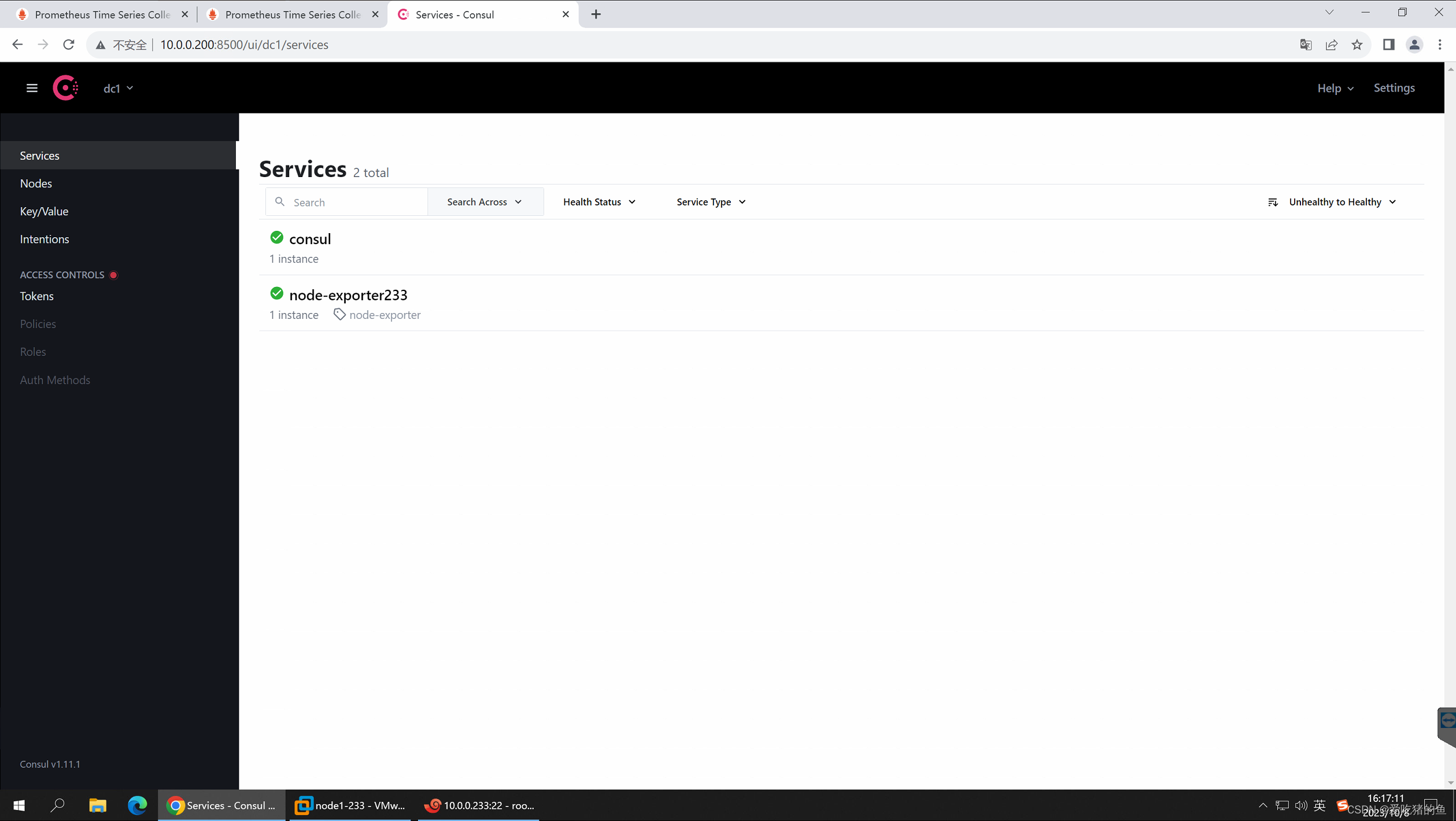

Register a service in Consul

#Register the node exporter at http://10.0.0.233:9100 in Consul

curl -X PUT -d '{"id": "node-exporter233","name": "node-exporter233","address": "10.0.0.233","port":9100,"tags": ["node-exporter"],"checks": [{"http": "http://10.0.0.233:9100/","interval": "5s"}]}' http://10.0.0.200:8500/v1/agent/service/register
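For scripting, the same registration can be built in Python with only the standard library. This is a sketch: it reuses the Consul address 10.0.0.200:8500 and the lowercase JSON keys from the curl command above (Consul's API documentation spells them ID, Name, Address and so on), and the helper names are hypothetical. It also builds the deregistration request that appears later in this section.

```python
import json
import urllib.request

CONSUL = "http://10.0.0.200:8500"  # Consul address used in this article


def register_request(service_id: str, address: str, port: int, tags: list[str]) -> urllib.request.Request:
    """Hypothetical helper: build the PUT /v1/agent/service/register request."""
    payload = {
        "id": service_id,
        "name": service_id,
        "address": address,
        "port": port,
        "tags": tags,
        # Consul marks the service unhealthy if this HTTP check fails
        "checks": [{"http": f"http://{address}:{port}/", "interval": "5s"}],
    }
    return urllib.request.Request(
        f"{CONSUL}/v1/agent/service/register",
        data=json.dumps(payload).encode(),
        headers={"Content-Type": "application/json"},
        method="PUT",
    )


def deregister_request(service_id: str) -> urllib.request.Request:
    """Hypothetical helper: build the PUT /v1/agent/service/deregister/<id> request."""
    return urllib.request.Request(
        f"{CONSUL}/v1/agent/service/deregister/{service_id}", method="PUT"
    )
```

Sending is a separate step, for example urllib.request.urlopen(register_request("node-exporter233", "10.0.0.233", 9100, ["node-exporter"])). Keeping request construction apart from sending makes the payload easy to inspect before it reaches Consul.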

Prometheus configuration

vim /apps/prometheus/prometheus.yml

- job_name: 'consul'
  honor_labels: true
  metrics_path: /metrics
  scheme: http
  consul_sd_configs:
    - server: 10.0.0.200:8500
      services: [] #services to discover; an empty list means all services, otherwise list them, e.g. [servicea, serviceb, servicec]
  relabel_configs:
    - source_labels: ['__meta_consul_tags']
      target_label: 'product'
    - source_labels: ['__meta_consul_dc']
      target_label: 'idc'
    - source_labels: ['__meta_consul_service']
      regex: 'consul'
      action: drop
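What the three relabel rules produce can be checked with a small Python model. It assumes Prometheus' default tag_separator "," and reflects that __meta_consul_tags carries the separator at both ends, so the product label for the service registered above comes out as ",node-exporter,". The helper names are hypothetical.

```python
import re


def consul_meta(service: str, dc: str, tags: list[str], sep: str = ",") -> dict[str, str]:
    """Hypothetical helper: the meta labels Prometheus attaches to a Consul target."""
    return {
        "__meta_consul_service": service,
        "__meta_consul_dc": dc,
        # tags are joined with the separator, which is also added at both ends
        "__meta_consul_tags": sep + sep.join(tags) + sep,
    }


def relabel(meta: dict[str, str]):
    # action: drop -> discard the target whose service name is exactly "consul"
    if re.fullmatch(r"consul", meta["__meta_consul_service"]):
        return None
    # the two rules without an explicit action default to replace with regex (.*)
    return {"product": meta["__meta_consul_tags"], "idc": meta["__meta_consul_dc"]}


print(relabel(consul_meta("node-exporter233", "dc1", ["node-exporter"])))
# {'product': ',node-exporter,', 'idc': 'dc1'}
```

If the bare tag is preferred, adding regex: ',(.+),' to the product rule (the replacement defaults to $1) strips the outer commas.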

Deregister the service from Consul

curl -X PUT http://10.0.0.200:8500/v1/agent/service/deregister/node-exporter233

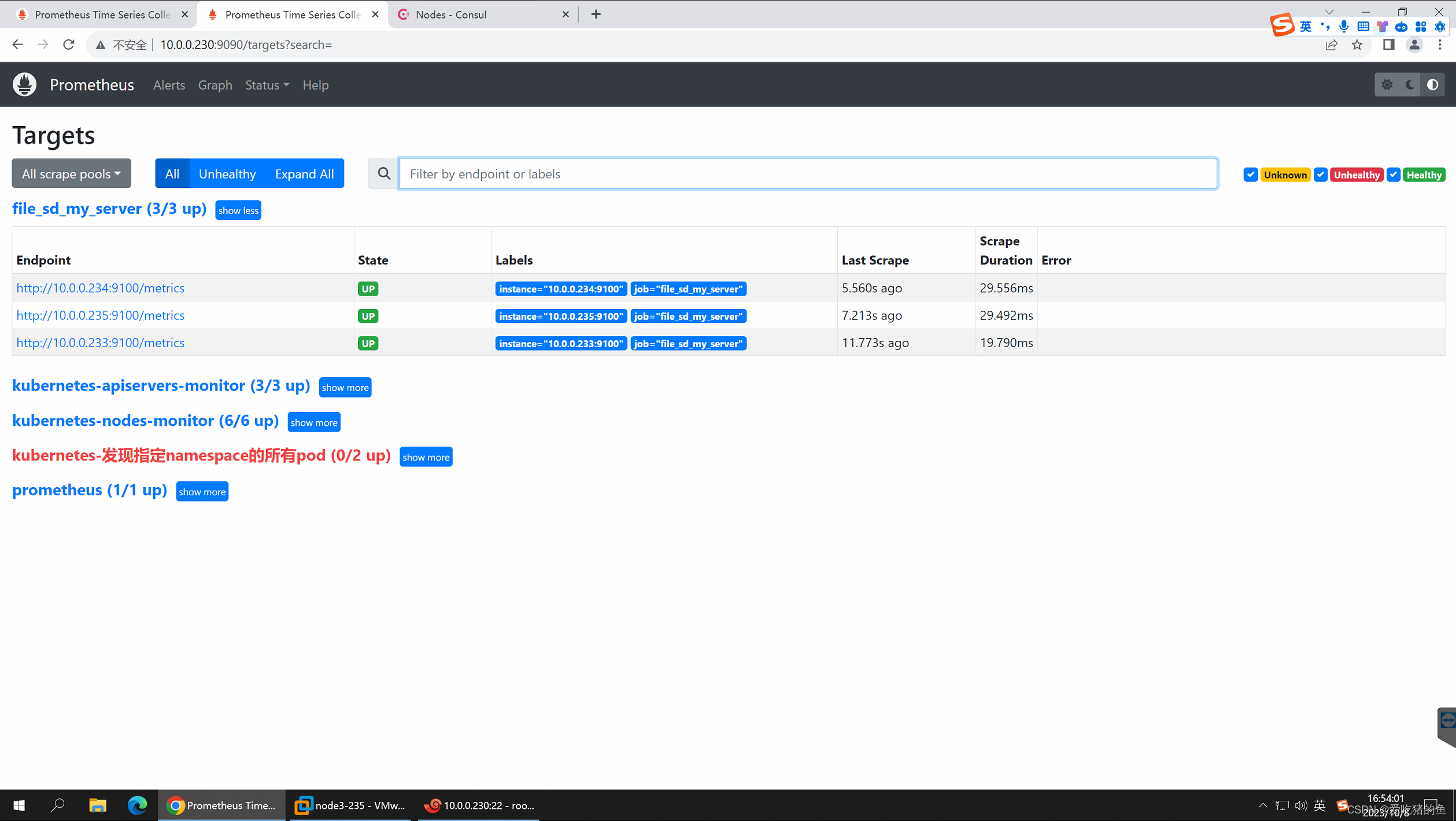

File-based discovery (file_sd_configs)

File-based discovery requires maintaining a JSON file (YAML is also accepted) on the Prometheus server host.

vim /apps/prometheus/sd_my_server.json

[
  {
    "targets": ["10.0.0.233:9100","10.0.0.234:9100","10.0.0.235:9100"]
  }
]

Prometheus configuration

vim /apps/prometheus/prometheus.yml

- job_name: 'file_sd_my_server'
  file_sd_configs:
    - files:
        - /apps/prometheus/sd_my_server.json
      refresh_interval: 10s #re-read interval; Prometheus also watches the file for changes
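Because Prometheus picks up changes to sd_my_server.json automatically, the file is usually generated by a script. A minimal Python sketch, assuming the script runs on the Prometheus host; write_file_sd is a hypothetical helper. It writes to a temporary file in the same directory and renames it over the target, so Prometheus never reads a half-written file.

```python
import json
import os
import tempfile
from typing import Optional


def write_file_sd(path: str, targets: list[str], labels: Optional[dict[str, str]] = None) -> None:
    """Hypothetical helper: atomically write one file_sd target group."""
    group: dict = {"targets": targets}
    if labels:
        group["labels"] = labels  # extra labels attached to every target in the group
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    try:
        with os.fdopen(fd, "w") as f:
            json.dump([group], f, indent=2)
        # mkstemp creates the file with mode 0600; widen it so a non-root Prometheus can read it
        os.chmod(tmp_path, 0o644)
        # a rename within one filesystem is atomic
        os.replace(tmp_path, path)
    except BaseException:
        os.unlink(tmp_path)
        raise
```

Usage on the Prometheus host: write_file_sd("/apps/prometheus/sd_my_server.json", ["10.0.0.233:9100", "10.0.0.234:9100", "10.0.0.235:9100"]). The temporary file does not match the configured file name, so Prometheus ignores it until the rename.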

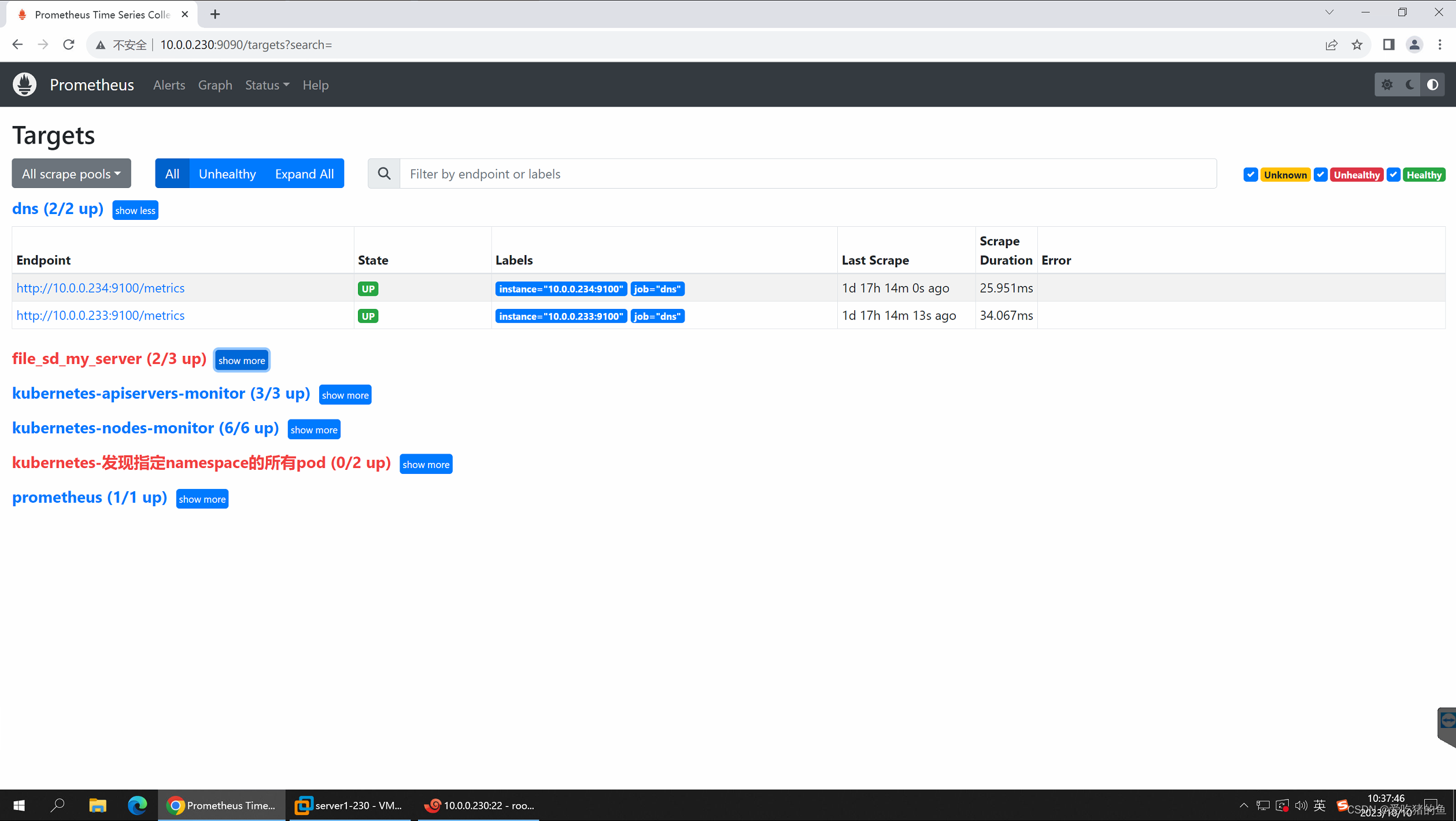

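DNS-based discovery (dns_sd_configs)

Service discovery via DNS:

DNS-based service discovery takes a set of DNS names and queries them periodically to obtain the list of targets. The names must be resolvable to IPs by the DNS server Prometheus queries.

This method supports only basic DNS A, AAAA, and SRV record queries (newer Prometheus releases also accept MX and NS).

A record: maps a domain name to an IP address.

SRV record: records which host provides a particular service. The format is _service._protocol.domain (for example: _example-server._tcp.www.mydns.com).

Prometheus relabels the discovered targets; during relabeling the following meta labels are available:

__meta_dns_name: the record name that produced the discovered target.

__meta_dns_srv_record_target: the target field of the SRV record

__meta_dns_srv_record_port: the port field of the SRV record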
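How a DNS answer turns into scrape targets can be sketched in Python. This is a simplified model, not Prometheus' implementation: the lookup itself is left out (answers are passed in), the __meta_dns_srv_record_target label is omitted for brevity, and the SRV host name node1.wang1.com is hypothetical. For A/AAAA records each address is paired with the job's port; for SRV records host and port both come from the record, with the trailing dot of the fully qualified name removed.

```python
import ipaddress


def a_targets(name: str, addresses: list[str], port: int) -> list[dict[str, str]]:
    """type: A / AAAA: each resolved address is paired with the job's port setting."""
    targets = []
    for addr in addresses:
        # IPv6 literals need brackets in host:port form
        host = f"[{addr}]" if ipaddress.ip_address(addr).version == 6 else addr
        targets.append({"__address__": f"{host}:{port}", "__meta_dns_name": name})
    return targets


def srv_targets(name: str, records: list[tuple[str, int]]) -> list[dict[str, str]]:
    """type: SRV: host and port both come from each (target, port) record."""
    targets = []
    for target, port in records:
        host = target.rstrip(".")  # "node1.wang1.com." -> "node1.wang1.com"
        targets.append({
            "__address__": f"{host}:{port}",
            "__meta_dns_name": name,
            "__meta_dns_srv_record_port": str(port),
        })
    return targets


print(a_targets("www.wang1.com", ["10.0.0.233"], 9100))
print(srv_targets("_prometheus._tcp.www.wang1.com", [("node1.wang1.com.", 9100)]))
```

The SRV variant shows why the port setting in an SRV job has no effect: the record already carries the port.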

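A-record discovery

#Prometheus queries the DNS servers listed in /etc/resolv.conf directly; /etc/hosts entries are only seen if that resolver serves them (e.g. the systemd-resolved stub on Ubuntu)

vim /etc/hosts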

10.0.0.233 www.wang1.com

10.0.0.234 www.wang2.com

#Edit the Prometheus configuration file

vim /apps/prometheus/prometheus.yml

- job_name: 'dns'
  metrics_path: "/metrics"
  dns_sd_configs:
    - names: ["www.wang1.com","www.wang2.com"]
      type: A
      port: 9100

Verify in the web UI

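SRV-record discovery

#Requires a DNS server that serves the SRV record

#Edit the Prometheus configuration file

vim /apps/prometheus/prometheus.yml

- job_name: 'dns-srv'
  metrics_path: "/metrics"
  dns_sd_configs:
    - names: ["_prometheus._tcp.www.wang1.com"]
      type: SRV
      port: 9100 #ignored for SRV queries; the port is taken from the SRV record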

Monitoring JVM and Tomcat with Prometheus

https://github.com/nlighten/tomcat_exporter

Active connections of a Java (Tomcat) service:

# TYPE tomcat_connections_active_total gauge
tomcat_connections_active_total{name="http-nio-8080",} 2.0

Heap memory usage of a Java service:

# TYPE jvm_memory_bytes_used gauge
jvm_memory_bytes_used{area="heap",} 2.4451216E7
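The two samples above follow the Prometheus text exposition format: metric name, an optional {label="value",...} block (a trailing comma is allowed), then the value. A deliberately simplified Python parser, for illustration only: it does not handle escaped quotes or "}" inside label values and ignores optional timestamps. For real work, the official prometheus_client package includes a full parser.

```python
import re

SAMPLE_RE = re.compile(
    r'^(?P<name>[a-zA-Z_:][a-zA-Z0-9_:]*)'  # metric name
    r'(?:\{(?P<labels>[^}]*)\})?'           # optional {label="value",...}
    r'\s+(?P<value>\S+)'                    # sample value
)
LABEL_RE = re.compile(r'([a-zA-Z_][a-zA-Z0-9_]*)="([^"]*)"')


def parse_sample(line: str):
    """Parse one sample line; comment lines (# HELP / # TYPE) return None."""
    line = line.strip()
    if not line or line.startswith("#"):
        return None
    m = SAMPLE_RE.match(line)
    if m is None:
        raise ValueError(f"not a sample line: {line!r}")
    labels = dict(LABEL_RE.findall(m.group("labels") or ""))
    return m.group("name"), labels, float(m.group("value"))


print(parse_sample('jvm_memory_bytes_used{area="heap",} 2.4451216E7'))
# ('jvm_memory_bytes_used', {'area': 'heap'}, 24451216.0)
```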

#Build the image:

root@k8s-master1:/opt/1.prometheus-case-files/app-monitor-case/tomcat# pwd

/opt/1.prometheus-case-files/app-monitor-case/tomcat

root@k8s-master1:/opt/1.prometheus-case-files/app-monitor-case/tomcat# ll

total 28

drwxr-xr-x 5 root root 4096 Mar 22 2023 ./

drwxr-xr-x 6 root root 4096 Mar 23 2023 ../

-rw-r--r-- 1 root root 6148 Dec 28 2022 .DS_Store

drwxr-xr-x 2 root root 4096 Oct 10 10:48 template/

drwxr-xr-x 3 root root 4096 Sep 24 16:25 tomcat-image/

drwxr-xr-x 2 root root 4096 Sep 24 17:06 yaml/

root@k8s-master1:/opt/1.prometheus-case-files/app-monitor-case/tomcat# cd tomcat-image/

root@k8s-master1:/opt/1.prometheus-case-files/app-monitor-case/tomcat/tomcat-image# ll

total 473772

drwxr-xr-x 3 root root 4096 Sep 24 16:25 ./

drwxr-xr-x 5 root root 4096 Mar 22 2023 ../

-rw-r--r-- 1 root root 759 Sep 24 16:22 Dockerfile

-rwxr-xr-x 1 root root 275 Sep 24 16:23 build-command.sh*

-rw-r--r-- 1 root root 3405 Dec 17 2021 metrics.war

drwxr-xr-x 2 root root 4096 Mar 22 2023 myapp/

-rw-r--r-- 1 root root 175 Jul 16 2021 myapp.tar.gz

-rwxr-xr-x 1 root root 125 Jul 16 2021 run_tomcat.sh*

-rw-r--r-- 1 root root 7592 Jul 16 2021 server.xml

-rw-r--r-- 1 root root 59477 Dec 17 2021 simpleclient-0.8.0.jar

-rw-r--r-- 1 root root 5840 Dec 17 2021 simpleclient_common-0.8.0.jar

-rw-r--r-- 1 root root 21767 Dec 17 2021 simpleclient_hotspot-0.8.0.jar

-rw-r--r-- 1 root root 7104 Dec 17 2021 simpleclient_servlet-0.8.0.jar

-rw-r--r-- 1 root root 484967424 Dec 17 2021 tomcat-8.5.73-jdk11-corretto.tar.gz

-rw-r--r-- 1 root root 19582 Dec 17 2021 tomcat_exporter_client-0.0.12.jar

-rw-r--r-- 1 root root 3405 Dec 17 2021 tomcat_exporter_servlet-0.0.12.war

root@k8s-master1:/opt/1.prometheus-case-files/app-monitor-case/tomcat/tomcat-image# cat Dockerfile

#FROM tomcat:8.5.73-jdk11-corretto

#FROM tomcat:8.5.73

FROM registry.cn-hangzhou.aliyuncs.com/zhangshijie/tomcat:8.5.73

LABEL maintainer="jack 2973707860@qq.com"

ADD server.xml /usr/local/tomcat/conf/server.xml

RUN mkdir /data/tomcat/webapps -p

ADD myapp /data/tomcat/webapps/myapp

ADD metrics.war /data/tomcat/webapps

ADD simpleclient-0.8.0.jar /usr/local/tomcat/lib/

ADD simpleclient_common-0.8.0.jar /usr/local/tomcat/lib/

ADD simpleclient_hotspot-0.8.0.jar /usr/local/tomcat/lib/

ADD simpleclient_servlet-0.8.0.jar /usr/local/tomcat/lib/

ADD tomcat_exporter_client-0.0.12.jar /usr/local/tomcat/lib/

#ADD run_tomcat.sh /apps/tomcat/bin/

EXPOSE 8080 8443 8009

#CMD ["/apps/tomcat/bin/catalina.sh","run"]

#CMD ["/apps/tomcat/bin/run_tomcat.sh"]

root@k8s-master1:/opt/1.prometheus-case-files/app-monitor-case/tomcat/tomcat-image# cat build-command.sh

#!/bin/bash

nerdctl build -t harbor.canghailyt.com/base/tomcat-app1:v1 .

nerdctl push harbor.canghailyt.com/base/tomcat-app1:v1

#docker build -t harbor.linuxarchitect.io/myserver/tomcat-app1:v1 .

#docker push harbor.linuxarchitect.io/myserver/tomcat-app1:v1

#Run the build

root@k8s-master1:/opt/1.prometheus-case-files/app-monitor-case/tomcat/tomcat-image# bash build-command.sh

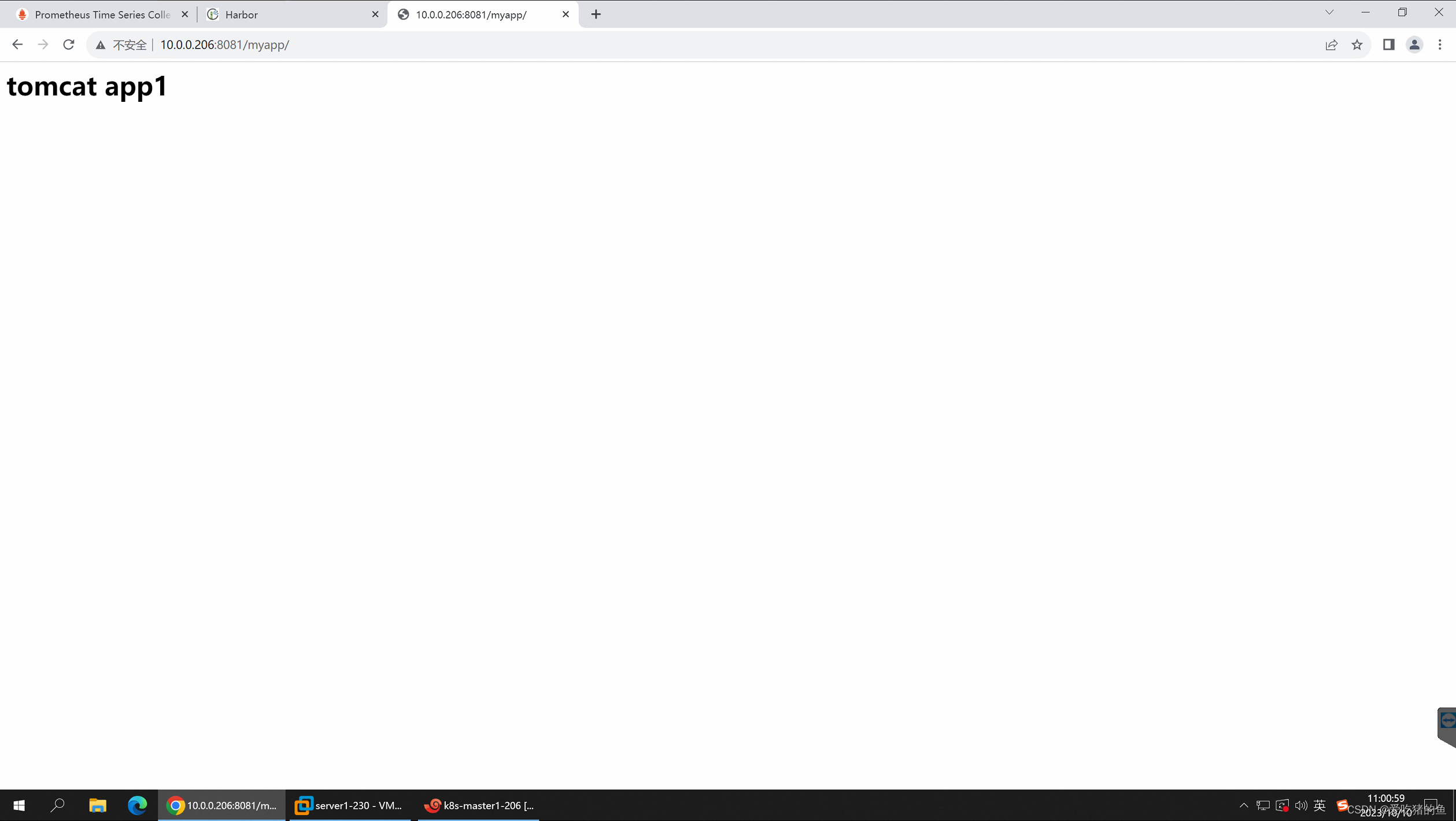

#Run it as a container (host port 8081 is mapped to Tomcat's port 8080)

nerdctl run -d -p 8081:8080 harbor.canghailyt.com/base/tomcat-app1:v1

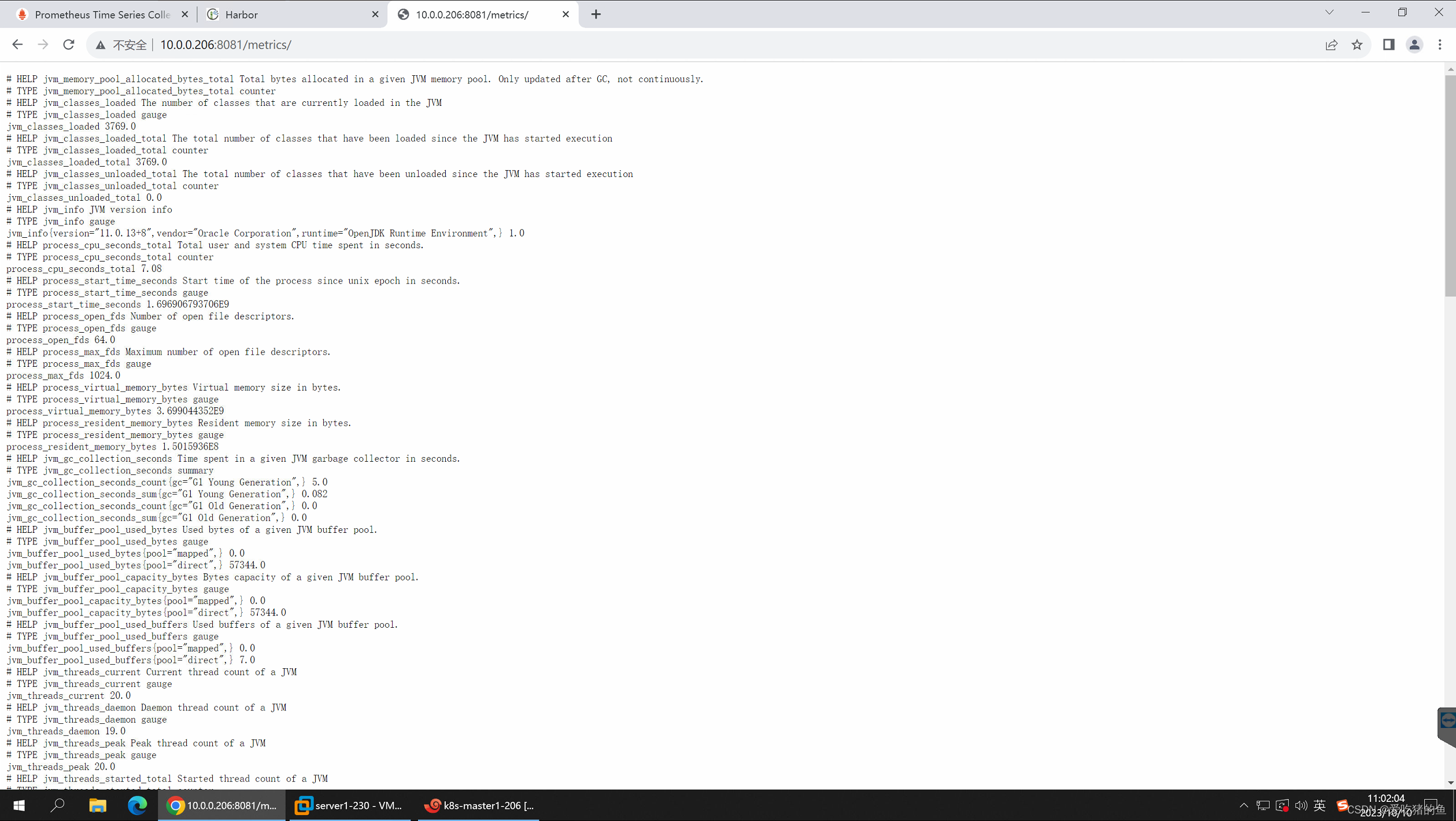

Test in the web UI

App page

Metrics page

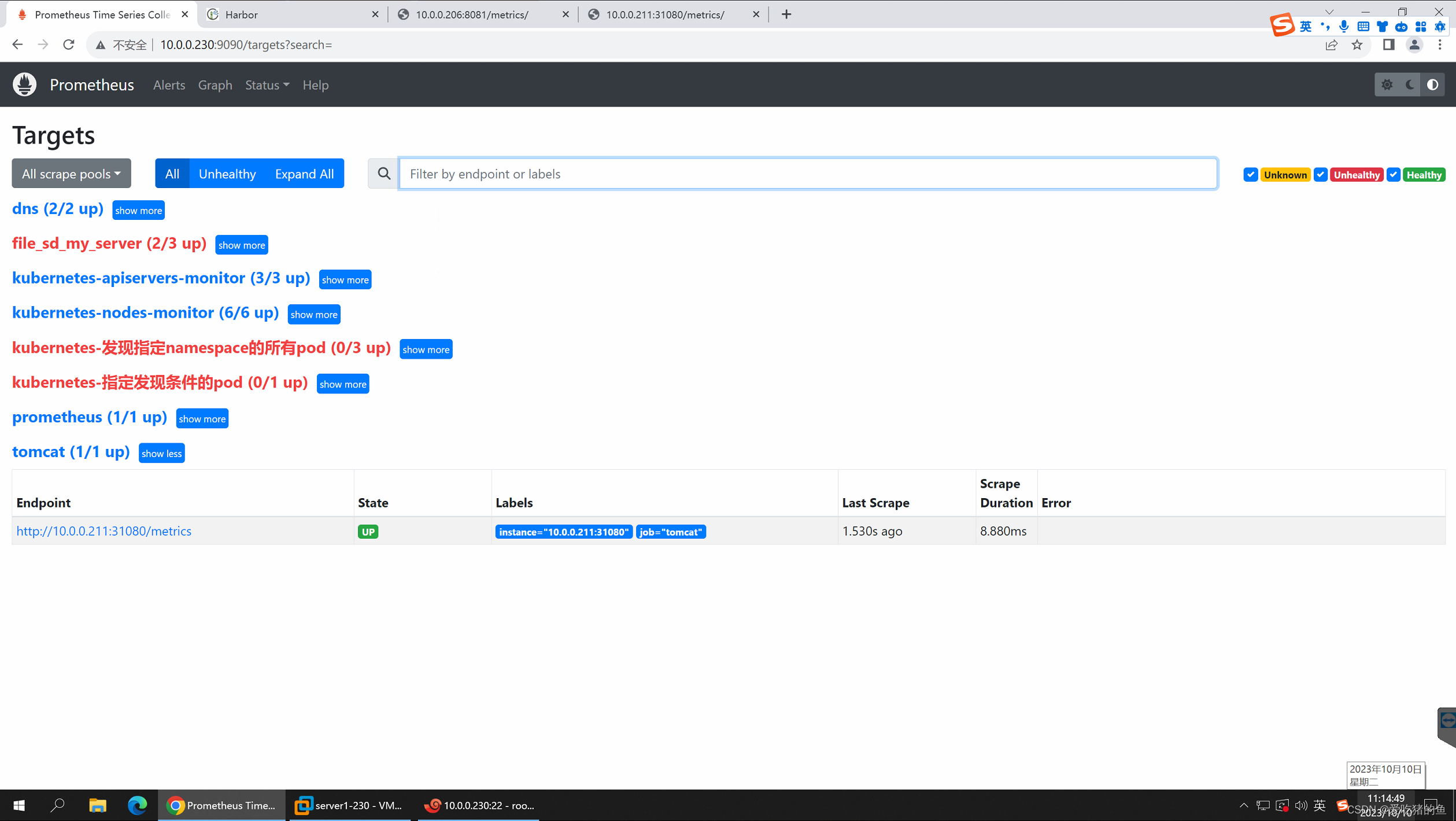

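Prometheus configuration

- job_name: 'tomcat'
  static_configs:
    #31080 appears to be a Kubernetes NodePort (presumably from the manifests in yaml/); for the standalone container above, the target would be <host IP>:8081
    - targets: ["10.0.0.211:31080"]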

Monitoring Redis, MySQL, and HAProxy with Prometheus

Monitoring Redis

https://github.com/oliver006/redis_exporter

root@k8s-master1:/opt/1.prometheus-case-files/app-monitor-case/redis/yaml# ll

total 20

drwxr-xr-x 2 root root 4096 Oct 10 11:37 ./

drwxr-xr-x 4 root root 4096 Mar 22 2023 ../

-rw-r--r-- 1 root root 715 Sep 24 17:07 redis-deployment.yaml

-rw-r--r-- 1 root root 335 Mar 22 2023 redis-exporter-svc.yaml

-rw-r--r-- 1 root root 305 Mar 22 2023 redis-redis-svc.yaml

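#If Redis is password-protected, redis_exporter must be given the password too (for example via its REDIS_PASSWORD environment variable)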

root@k8s-master1:/opt/1.prometheus-case-files/app-monitor-case/redis/yaml# cat redis-deployment.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: redis
  namespace: magedu
spec:
  replicas: 1
  selector:
    matchLabels:
      app: redis
  template:
    metadata:
      labels:
        app: redis
      annotations:
        prometheus.io/scrape: 'true'
        prometheus.io/port: "9121"
    spec:
      containers:
        - name: redis
          image: redis:4.0.14
          resources:
            requests:
              cpu: 200m
              memory: 156Mi
          ports:
            - containerPort: 6379
        - name: redis-exporter
          image: oliver006/redis_exporter:latest
          resources:
            requests:
              cpu: 100m
              memory: 128Mi
          ports:
            - containerPort: 9121

root@k8s-master1:/opt/1.prometheus-case-files/app-monitor-case/redis/yaml# cat redis-exporter-svc.yaml

kind: Service #resource kind
apiVersion: v1
metadata:
  annotations:
    prometheus.io/scrape: 'true'
    prometheus.io/port: "9121"
  name: redis-exporter-service
  namespace: magedu
spec:
  selector:
    app: redis
  ports:
    - nodePort: 31082
      name: prom
      port: 9121
      protocol: TCP
      targetPort: 9121
  type: NodePort

root@k8s-master1:/opt/1.prometheus-case-files/app-monitor-case/redis/yaml# cat redis-redis-svc.yaml

kind: Service #resource kind
apiVersion: v1
metadata:
  # annotations:
  #   prometheus.io/scrape: 'false'
  name: redis-redis-service
  namespace: magedu
spec:
  selector:
    app: redis
  ports:
    - nodePort: 31081
      name: redis
      port: 6379
      protocol: TCP
      targetPort: 6379
  type: NodePort

kubectl apply -f redis-deployment.yaml

kubectl apply -f redis-exporter-svc.yaml

kubectl apply -f redis-redis-svc.yaml

root@k8s-master1:/opt/1.prometheus-case-files/app-monitor-case/redis/yaml# kubectl get pod -n magedu

NAME                     READY   STATUS    RESTARTS   AGE
redis-6b6d8bf987-4jv45   2/2     Running   0          48s

root@k8s-master1:/opt/1.prometheus-case-files/app-monitor-case/redis/yaml# kubectl get svc -n magedu

NAME                     TYPE       CLUSTER-IP     EXTERNAL-IP   PORT(S)          AGE
redis-exporter-service   NodePort   10.10.240.77   <none>        9121:31082/TCP   53s
redis-redis-service      NodePort   10.10.55.58    <none>        6379:31081/TCP   44s

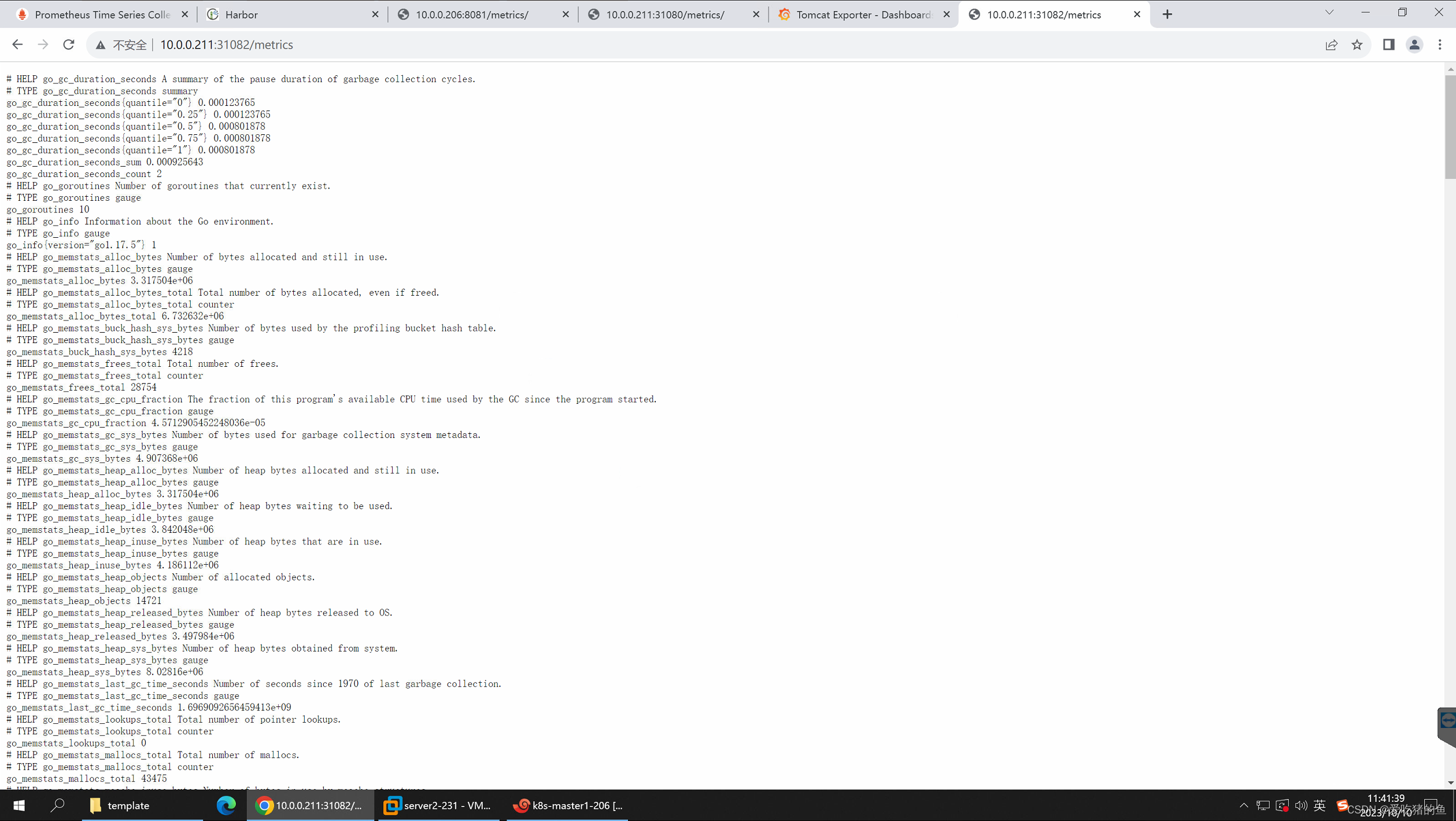

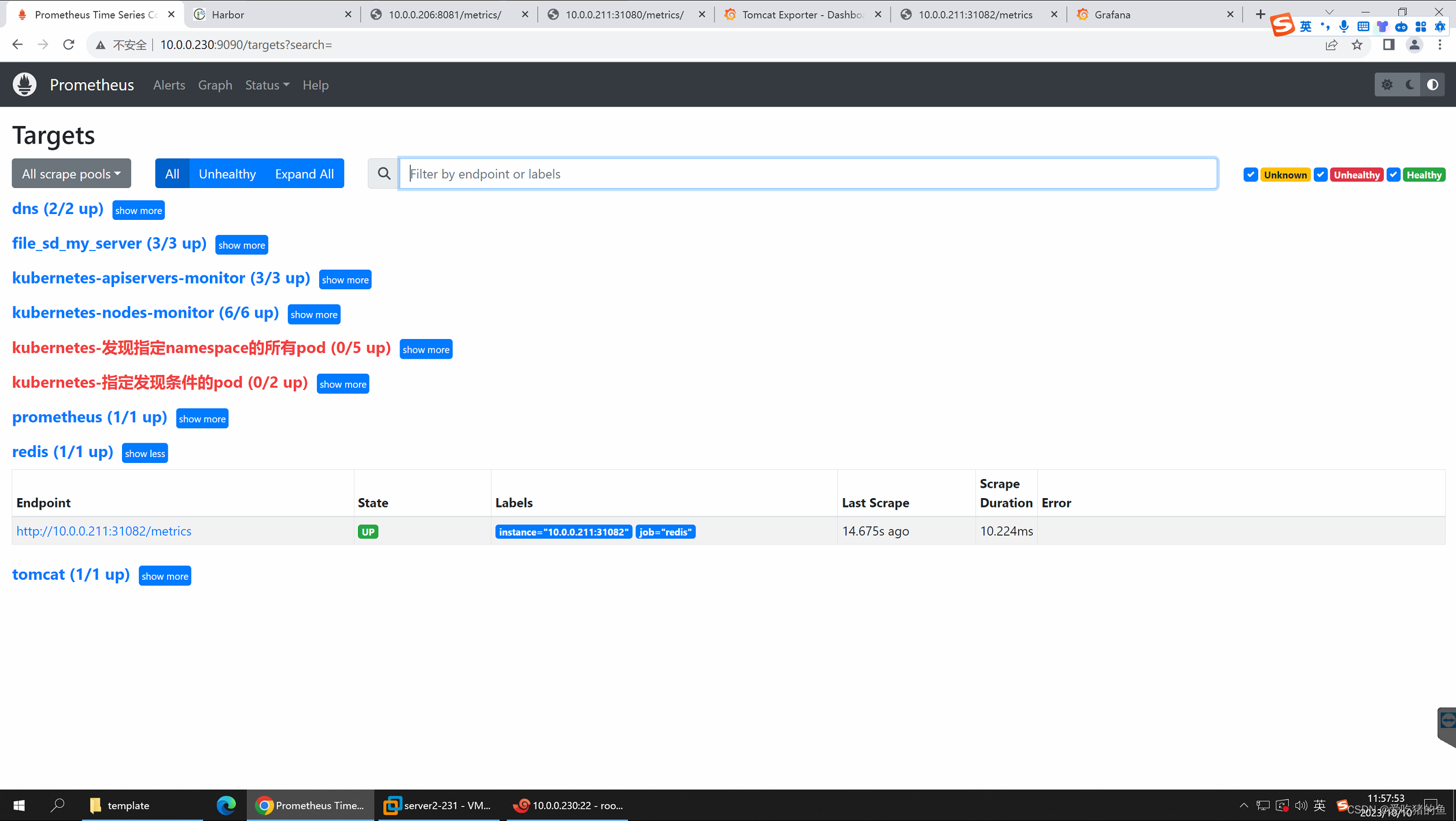

Test in the web UI

Prometheus configuration

- job_name: 'redis'
  static_configs:
    - targets: ["10.0.0.211:31082"]

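Monitoring MySQL

Monitor the running state of the MySQL service with mysqld_exporter

https://github.com/prometheus/mysqld_exporter

Note: make sure the mysqld_exporter version is compatible with your MySQL/MariaDB version.

Install MySQL

#Install MariaDB (MySQL-compatible)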

apt update

apt install mariadb-server

vim /etc/mysql/mariadb.conf.d/50-server.cnf

bind-address = 0.0.0.0

systemctl restart mysqld.service

#Create the monitoring account and grant it the required privileges:

root@ubuntu:~# mysql

Welcome to the MariaDB monitor. Commands end with ; or \g.

Your MariaDB connection id is 31

Server version: 10.6.12-MariaDB-0ubuntu0.22.04.1 Ubuntu 22.04

Copyright (c) 2000, 2018, Oracle, MariaDB Corporation Ab and others.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

MariaDB [(none)]> CREATE USER 'mysql_exporter'@'localhost' IDENTIFIED BY 'imnot007*';

Query OK, 0 rows affected (0.001 sec)

MariaDB [(none)]> GRANT PROCESS, REPLICATION CLIENT, SELECT ON *.* TO 'mysql_exporter'@'localhost';

Query OK, 0 rows affected (0.001 sec)

#Verify the privileges (quote the password so the shell does not treat * as a glob):

root@ubuntu:~# mysql -umysql_exporter -p'imnot007*' -hlocalhost

Welcome to the MariaDB monitor. Commands end with ; or \g.

Your MariaDB connection id is 32

Server version: 10.6.12-MariaDB-0ubuntu0.22.04.1 Ubuntu 22.04

Copyright (c) 2000, 2018, Oracle, MariaDB Corporation Ab and others.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

MariaDB [(none)]>

#Prepare the mysqld_exporter environment:

Install mysqld_exporter

https://github.com/prometheus/mysqld_exporter/releases #download page

tar xvf mysqld_exporter-0.14.0.linux-amd64.tar.gz

mv mysqld_exporter-0.14.0.linux-amd64/mysqld_exporter /usr/local/bin/

#Password-less login configuration for the mysql client:

vim /root/.my.cnf

[client]

user=mysql_exporter

password=imnot007*
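mysqld_exporter reads the same [client] section through --config.my-cnf, so a quick syntax check of the file can save a restart loop. A minimal Python sketch using configparser; read_client_credentials is a hypothetical helper, and configparser only approximates the MySQL option-file syntax (quoted values and !include directives are not handled). Since the file holds a plaintext password, chmod 600 /root/.my.cnf is advisable.

```python
import configparser


def read_client_credentials(text: str) -> tuple[str, str]:
    """Hypothetical helper: return (user, password) from the [client] section."""
    # allow_no_value: option files may contain bare flags without "="
    # interpolation=None: keep characters such as "%" in passwords literal
    cp = configparser.ConfigParser(allow_no_value=True, interpolation=None)
    cp.read_string(text)
    client = cp["client"]  # raises KeyError if the [client] section is missing
    return client["user"], client["password"]
```

Usage: read_client_credentials(open("/root/.my.cnf").read()) should return ("mysql_exporter", "imnot007*") for the file above.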

#Verify: running mysql now logs in as mysql_exporter without a password prompt:

root@ubuntu:~# mysql

Welcome to the MariaDB monitor. Commands end with ; or \g.

Your MariaDB connection id is 33

Server version: 10.6.12-MariaDB-0ubuntu0.22.04.1 Ubuntu 22.04

Copyright (c) 2000, 2018, Oracle, MariaDB Corporation Ab and others.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

MariaDB [(none)]>

#Write the mysqld_exporter systemd unit file

vim /etc/systemd/system/mysqld_exporter.service

[Unit]

Description=Prometheus MySQL Exporter

After=network.target

[Service]

ExecStart=/usr/local/bin/mysqld_exporter --config.my-cnf=/root/.my.cnf

[Install]

WantedBy=multi-user.target

systemctl daemon-reload && systemctl restart mysqld_exporter && systemctl enable mysqld_exporter
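Once the service is running, mysqld_exporter reports a mysql_up gauge that is 1 when it can connect to the database. A small Python check, assuming the exporter listens on its default port 9104 on the same host; gauge_value and mysql_up are hypothetical helpers, and only unlabelled samples are handled.

```python
import urllib.request


def gauge_value(metrics_text: str, name: str):
    """Return the value of an unlabelled sample such as 'mysql_up 1', or None."""
    for line in metrics_text.splitlines():
        if line.startswith("#"):
            continue  # skip HELP / TYPE comments
        parts = line.split()
        if len(parts) >= 2 and parts[0] == name:
            return float(parts[1])
    return None


def mysql_up(url: str = "http://127.0.0.1:9104/metrics", timeout: float = 5.0) -> bool:
    """Fetch the exporter's metrics page and report whether mysql_up equals 1."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        text = resp.read().decode()
    return gauge_value(text, "mysql_up") == 1.0
```

A value of 0 usually points at the credentials in /root/.my.cnf or the grants above rather than at the exporter itself.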

Configure Prometheus

- job_name: 'mysql'
  static_configs:
    - targets: ["10.0.0.235:9104"]

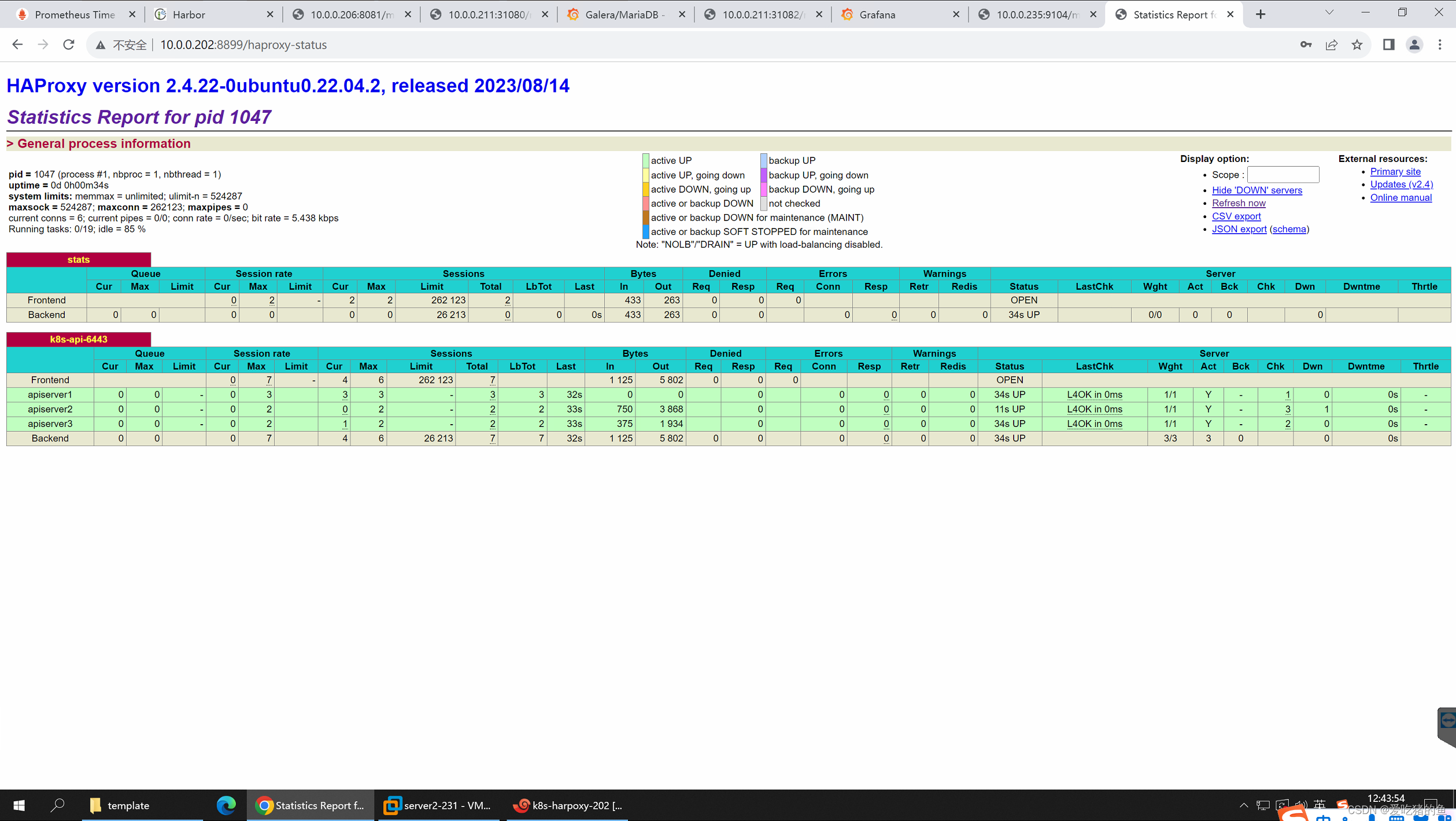

Monitoring HAProxy

Monitor HAProxy with haproxy_exporter

https://github.com/prometheus/haproxy_exporter

HAProxy can be monitored either through its stats page or through its stats socket file.

#Enable the stats page

vim /etc/haproxy/haproxy.cfg

listen stats
  bind :8899
  stats enable
  #stats hide-version
  stats uri /haproxy-status
  stats realm HAProxy\ Stats\ Page
  stats auth haadmin:123456
  stats auth admin:123456

systemctl restart haproxy

#Enable the stats socket file

global
  log /dev/log local0
  log /dev/log local1 notice
  chroot /var/lib/haproxy
  #enable the stats socket
  stats socket /run/haproxy/admin.sock mode 660 level admin expose-fd listeners
  stats timeout 30s
  user haproxy
  group haproxy
  daemon

Verify in the web UI

Deploy haproxy_exporter

Download: https://github.com/prometheus/haproxy_exporter

tar xvf haproxy_exporter-0.15.0.linux-amd64.tar.gz

mv haproxy_exporter-0.15.0.linux-amd64/haproxy_exporter /usr/local/bin/

#Option 1: stats socket (the exporter needs access to /run/haproxy/admin.sock, which is created with mode 660)

haproxy_exporter --haproxy.scrape-uri=unix:/run/haproxy/admin.sock

#Option 2: CSV export of the stats page (credentials from the stats auth lines above)

haproxy_exporter --haproxy.scrape-uri="http://admin:123456@127.0.0.1:8899/haproxy-status;csv" &
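Both start-up options consume the same CSV statistics: the ;csv suffix on the stats page, or the show stat command on the runtime API socket. A Python sketch that reads the socket and maps each proxy/server pair to its status. The helper names are hypothetical, only a few CSV columns are used, and reading the socket needs the same permissions as the exporter.

```python
import csv
import socket


def parse_stats_csv(text: str) -> dict[tuple[str, str], str]:
    """Map (pxname, svname) -> status from HAProxy's CSV statistics."""
    lines = text.splitlines()
    # the header starts with "# " and, like every row, ends with a trailing comma
    header = lines[0].lstrip("# ").rstrip(",").split(",")
    result = {}
    for row in csv.reader(lines[1:]):
        if not row:
            continue
        record = dict(zip(header, row))
        result[(record["pxname"], record["svname"])] = record.get("status", "")
    return result


def read_stats_socket(path: str = "/run/haproxy/admin.sock") -> str:
    """Send 'show stat' over the runtime API socket and return the CSV reply."""
    with socket.socket(socket.AF_UNIX, socket.SOCK_STREAM) as s:
        s.connect(path)
        s.sendall(b"show stat\n")
        chunks = []
        # HAProxy closes the connection after answering a single command
        while chunk := s.recv(4096):
            chunks.append(chunk)
    return b"".join(chunks).decode()
```

Usage on the HAProxy host: parse_stats_csv(read_stats_socket()). The same parser also accepts the body of http://127.0.0.1:8899/haproxy-status;csv.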

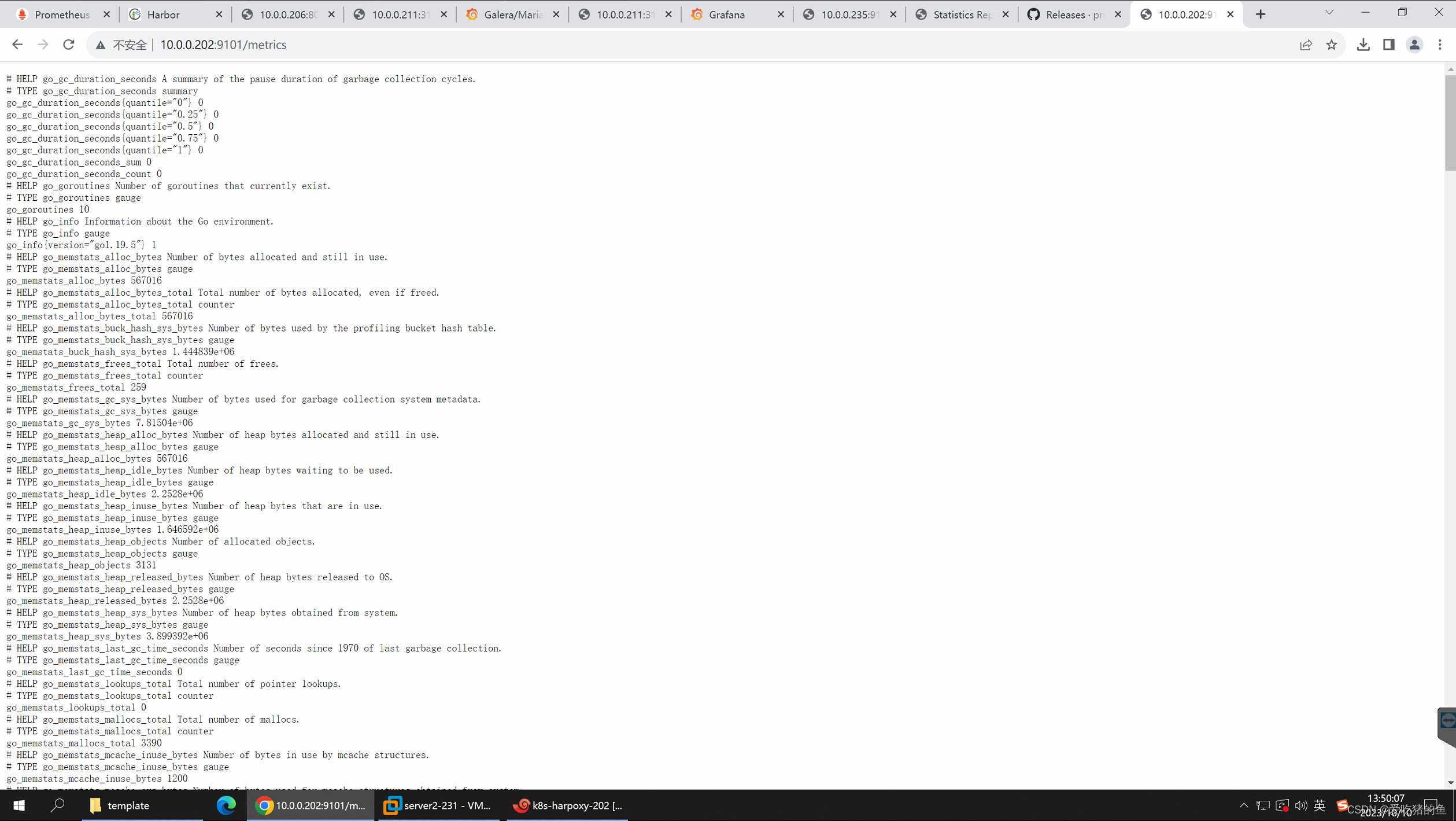

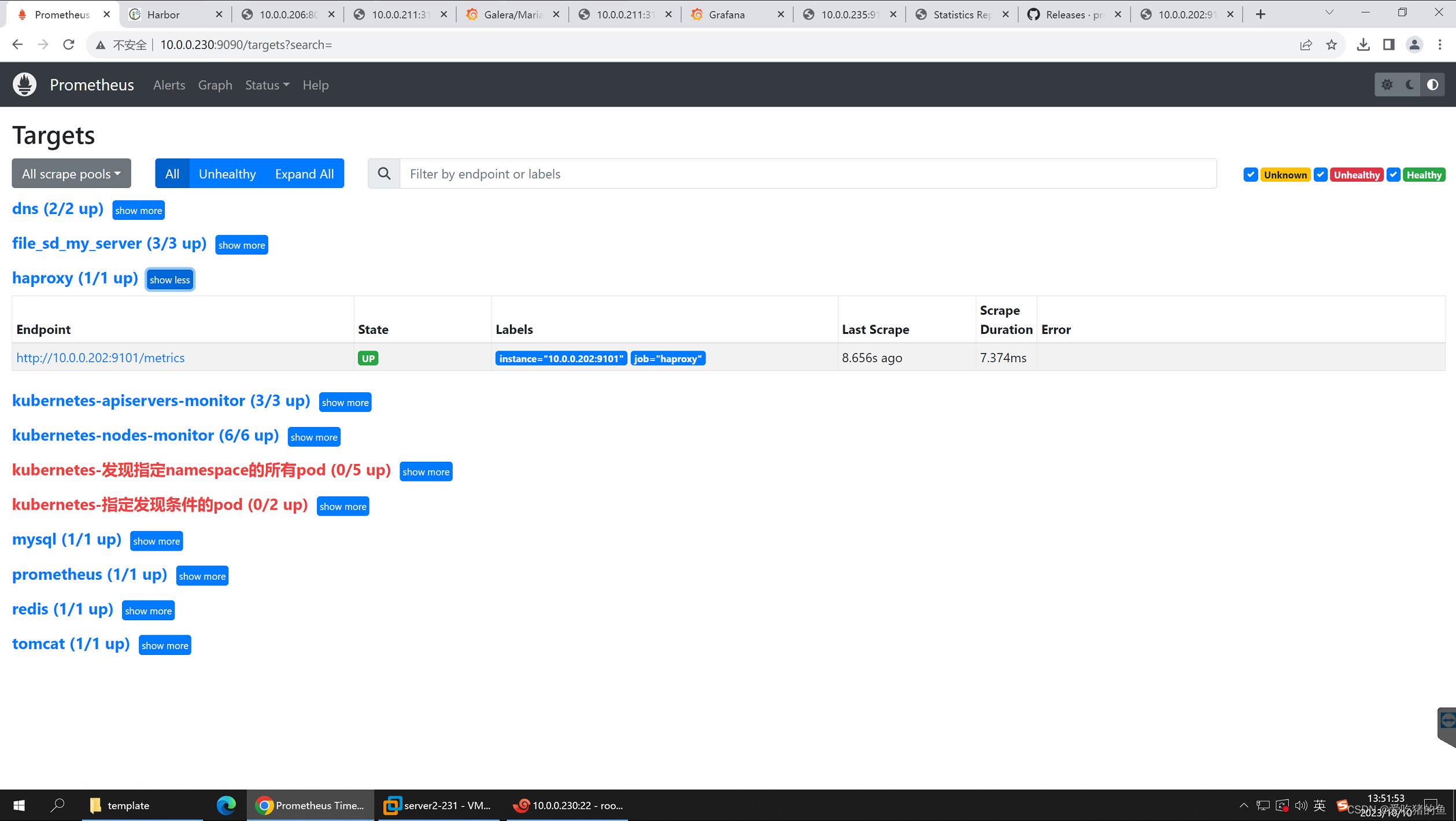

Configure Prometheus

- job_name: 'haproxy'
  static_configs:
    - targets: ["10.0.0.202:9101"]
