@[TOC]PaddlePaddle (飞桨) Technical Notes and Reflections

Getting Started

Reflections

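Promoted by my school, I started a seven-day learning journey and gradually realized that this may be the best course I have ever taken.

The pace is faster than most courses, but the teachers explain things extremely well, and the platform supports learning remarkably well: the entire environment runs in the cloud, and there is a class group where students can ask each other questions at any time.

There is also Sister Wen, who is as kind as she is lovely and writes awesome code, and the adorable class advisor (though a bit scary when angry).

After a few days I started to regret not discovering Paddle sooner; I had taken too many detours before. Learning from books was slow, and PaddlePaddle showed me what it means for 40 minutes to be worth three days of reading.

The class advisor has a lovely voice, Teacher Wen's lectures are vivid, and one of the male teachers has a lot of hair.

The teachers also pointed us to many good Chinese-language library docs, such as the one for json.

I suddenly felt I had found a far more efficient place to learn.

Although the camp lasted only seven days, its impact goes beyond what one or two hours of livestream can convey. This deep learning material seems directly applicable to my own small projects, such as mask detection and style transfer with Paddle models. Next, I need to spend more time and energy reading papers published abroad and solving specific problems in depth. Most importantly, I have to practice. Fortunately, the PaddlePaddle team is working on exactly this, and a group of like-minded peers is learning alongside me. They will answer every question, perhaps not right away, but you learn how to face difficulties and not give up easily!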

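## Day1

- Some simple Python syntax, which shows the course was designed with beginners in mind.

- A first look at Baidu AI Studio, which runs entirely in the cloud.

- The Day 1 homework was simple: print the 9×9 multiplication table, and find files with a specific name.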

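```python
# Homework 1: print the 9x9 multiplication table (mind the alignment)
def table():
    for i in range(1, 10):
        for j in range(1, 10):
            if j > i:
                break
            print(i, '*', j, '=', i * j, sep="", end="")
            if j == i:
                print()                # end of the row
            elif i * j >= 10:
                print(" ", end="")     # two-digit product: one space
            else:
                print("  ", end="")    # one-digit product: two spaces keep the columns aligned

if __name__ == '__main__':
    table()
```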
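A more compact variant of Homework 1, sketched as an alternative rather than the course's reference solution: each row is built with an f-string that pads the product to two characters, so the columns line up without the manual space logic. The function name `multiplication_table` is illustrative.

```python
def multiplication_table() -> str:
    """Return the 9x9 table as a string, one row per line."""
    rows = []
    for i in range(1, 10):
        # Pad the product to 2 characters so the columns line up, e.g. "3*2=6 "
        cells = [f"{i}*{j}={i * j:<2}" for j in range(1, i + 1)]
        rows.append(" ".join(cells).rstrip())
    return "\n".join(rows)

if __name__ == "__main__":
    print(multiplication_table())
```

Returning a string instead of printing makes the table easy to check or reuse.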

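Homework 2: find files with a specific name

- Traverse the files under the "Day1-homework" directory;
- find the files whose names contain "2020";
- save the file names to the list `result`;
- print them line by line as index and file name.

Note: the submission must include the output of running the code.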
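The four requirements above map fairly directly onto `pathlib`. A minimal sketch with the hypothetical helper name `find_files`: `Path.rglob("*")` walks the directory tree, `is_file()` keeps directories whose names contain "2020" out of the result, and `enumerate(..., start=1)` supplies the index. The matches are sorted so the numbering is stable between runs.

```python
from pathlib import Path
from typing import List, Tuple

def find_files(root: str, keyword: str) -> List[Tuple[int, str]]:
    """Return (index, path) pairs for regular files under root whose name contains keyword."""
    matches = sorted(p for p in Path(root).rglob("*") if p.is_file() and keyword in p.name)
    return [(i, str(p)) for i, p in enumerate(matches, start=1)]
```

Usage: `for index, path in find_files("Day1-homework", "2020"): print(index, path)`.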

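```python
# Import the os module
import os

# Directory to search
path = "Day1-homework"
# Substring to look for in file names
filename = "2020"
# List that stores the results
result = []

def findfiles():
    number = 0
    # os.walk yields (dirpath, dirnames, filenames); only the file names are checked,
    # so a directory whose name contains "2020" is not reported
    for dirpath, dirnames, filenames in os.walk(path):
        for name in filenames:
            if filename in name:
                number += 1
                result.append([number, os.path.join(dirpath, name)])
    # Print index and file name, one per line
    for index, file_path in result:
        print(index, file_path)

if __name__ == '__main__':
    findfiles()
```

## Day2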

- 马上就进入到爬虫任务中来

举例:

深度学习的一般过程

收集数据,尤其是有标签、高质量的数据是一件昂贵的工作。

爬虫的过程,就是模仿浏览器的行为,往目标站点发送请求,接收服务器的响应数据,提取需要的信息,并进行保存的过程。

Python为爬虫的实现提供了工具:requests模块、BeautifulSoup库

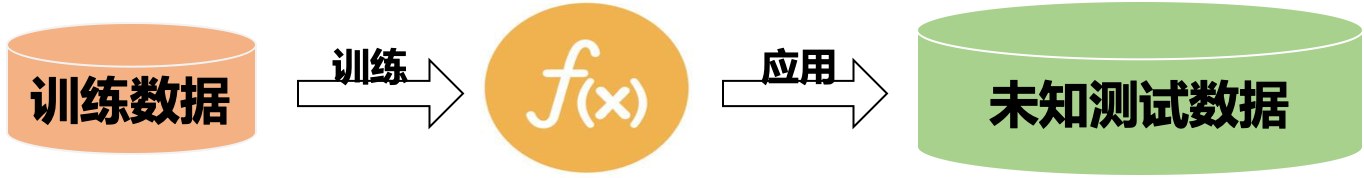

深度学习一般过程:

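Task description

This exercise uses Python to crawl the information on all contestants of 《青春有你2》 (Youth With You 2) from Baidu Baike.

Data source: https://baike.baidu.com/item/青春有你第二季

What happens when you browse the web:

- Ordinary user: open a browser --> send a request to the target site --> receive the response data --> render it on the page.
- Crawler: simulate a browser --> send a request to the target site --> receive the response data --> extract the useful data --> save it locally or to a database.

Crawling steps:

1. Send the request (`requests` module)
2. Get the response data (returned by the server)
3. Parse and extract the data (BeautifulSoup lookups or `re` regular expressions)
4. Save the data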
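Steps 3 and 4 can be tried in isolation without any network access. The sketch below swaps BeautifulSoup for the standard library's `html.parser` so it runs without third-party packages; the two-column layout (name, region) is a simplified, assumed stand-in for the Baike contestant table, and `TableCellParser`/`extract_and_save` are hypothetical names.

```python
import json
from html.parser import HTMLParser

class TableCellParser(HTMLParser):
    """Collect the text of every <td> cell, grouped by <tr> row."""

    def __init__(self):
        super().__init__()
        self.rows = []
        self._in_cell = False

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self.rows.append([])
        elif tag == "td" and self.rows:
            self.rows[-1].append("")
            self._in_cell = True

    def handle_endtag(self, tag):
        if tag == "td":
            self._in_cell = False

    def handle_data(self, data):
        # Text inside nested tags (e.g. <a>) still belongs to the current cell
        if self._in_cell:
            self.rows[-1][-1] += data.strip()

def extract_and_save(html: str, out_path: str) -> list:
    """Step 3: parse and extract; step 4: save as JSON. Returns the records."""
    parser = TableCellParser()
    parser.feed(html)
    # Header rows use <th>, so they have no <td> cells and are skipped here
    records = [{"name": r[0], "zone": r[1]} for r in parser.rows if len(r) >= 2]
    with open(out_path, "w", encoding="utf-8") as f:
        json.dump(records, f, ensure_ascii=False)
    return records
```

In the real crawler, step 1 (`requests.get`) would supply the `html` string.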

```python
import json
import re
import requests
import datetime
from bs4 import BeautifulSoup
import os

# Today's date, formatted like 20200420, used to name the output files
today = datetime.date.today().strftime('%Y%m%d')

def crawl_wiki_data():
    """
    Crawl the contestant information of 《青春有你2》 from Baidu Baike and return the HTML of the contestant table
    """
    headers = {  # pretend to be a browser
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/67.0.3396.99 Safari/537.36'
    }
    url = 'https://baike.baidu.com/item/青春有你第二季'
    try:
        # Send the GET request with the browser headers
        response = requests.get(url, headers=headers)
        print(response.status_code)
        # Passing a document (a string) to the BeautifulSoup constructor gives a document object
        soup = BeautifulSoup(response.text, 'lxml')
        # All <table> tags whose class is "table-view log-set-param"
        tables = soup.find_all('table', {'class': 'table-view log-set-param'})
        # Heading of the contestant table on the page ("participating trainees")
        crawl_table_title = "参赛学员"
        for table in tables:
            # Search backwards from the table for the enclosing <div> and its <h3> headings
            table_titles = table.find_previous('div').find_all('h3')
            for title in table_titles:
                if crawl_table_title in title.get_text():
                    return table
    except Exception as e:
        print(e)
    return None

def parse_wiki_data(table_html):
    '''
    Parse the contestant information from the HTML returned by Baidu Baike and save it
    as a JSON file named after the current date in the work directory
    '''
    bs = BeautifulSoup(str(table_html), 'lxml')
    all_trs = bs.find_all('tr')
    error_list = ['\'', '\"']
    stars = []
    for tr in all_trs[1:]:  # skip the header row
        all_tds = tr.find_all('td')
        star = {}
        # Name
        star["name"] = all_tds[0].text
        # Link to the contestant's own Baidu Baike page
        star["link"] = 'https://baike.baidu.com' + all_tds[0].find('a').get('href')
        # Place of origin
        star["zone"] = all_tds[1].text
        # Zodiac sign
        star["constellation"] = all_tds[2].text
        # Height
        star["height"] = all_tds[3].text
        # Weight
        star["weight"] = all_tds[4].text
        # Flower language; strip single and double quotes from it
        flower_word = all_tds[5].text
        for c in error_list:
            flower_word = flower_word.replace(c, '')
        star["flower_word"] = flower_word
        # Company: the link text if there is a link, otherwise the cell text
        if all_tds[6].find('a') is not None:
            star["company"] = all_tds[6].find('a').text
        else:
            star["company"] = all_tds[6].text
        stars.append(star)
    # Write the list of dicts directly; json.dump takes care of quoting and escaping
    os.makedirs('work', exist_ok=True)
    with open('work/' + today + '.json', 'w', encoding='UTF-8') as f:
        json.dump(stars, f, ensure_ascii=False)
```
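A note on converting the contestant list with `json.loads(str(stars).replace("\'","\""))`: the trick relies on Python's `repr` of the list looking like JSON, which only holds while no value contains a quote character (presumably why the flower-language field has its quotes stripped first). Serializing the list itself with `json.dump`/`json.dumps` has no such restriction. A small demonstration with made-up records; `via_str_replace` and `via_json` are illustrative names:

```python
import json

def via_str_replace(records):
    # Python repr of the list, single quotes swapped for double quotes, parsed as JSON
    return json.loads(str(records).replace("'", '"'))

def via_json(records):
    # Round trip through json.dumps, which quotes and escapes every string itself
    return json.loads(json.dumps(records, ensure_ascii=False))

plain = [{"name": "A", "flower_word": "sunflower"}]
# Hypothetical value containing an apostrophe
tricky = [{"name": "A", "flower_word": "it's sunny"}]

print(via_str_replace(plain) == plain)  # the trick works while no value contains a quote
print(via_json(tricky) == tricky)
try:
    via_str_replace(tricky)
except json.JSONDecodeError as err:
    print("str/replace conversion failed:", err)
```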

```python
def crawl_pic_urls():
    '''
    Crawl each contestant's pictures from Baidu Baike and save them
    '''
    with open('work/' + today + '.json', 'r', encoding='UTF-8') as file:
        json_array = json.loads(file.read())
    headers = {
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/67.0.3396.99 Safari/537.36'
    }
    for star in json_array:
        name = star['name']
        link = star['link']
        # Collect the URLs of all of this contestant's pictures in pic_urls
        pic_urls = []
        response = requests.get(link, headers=headers)
        soup = BeautifulSoup(response.text, 'lxml')
        # The summary picture links to the contestant's photo album page
        summary_pic = soup.find('div', {'class': 'summary-pic'})
        if summary_pic is None or summary_pic.find('a') is None:
            continue
        album_url = 'https://baike.baidu.com' + summary_pic.find('a').get('href')
        # Open the album page
        response = requests.get(album_url, headers=headers)
        soup = BeautifulSoup(response.text, 'lxml')
        for item in soup.find_all('a', {'class': 'pic-item'}):
            img = item.find('img')
            if img is None or img.get('src') is None:
                continue
            pic_urls.append(img.get('src'))
        # Download every picture in pic_urls into a folder named after the contestant
        down_pic(name, pic_urls)

def down_pic(name, pic_urls):
    '''
    Download all pictures in pic_urls and save them in a folder named after the contestant
    '''
    path = 'work/' + 'pics/' + name + '/'
    if not os.path.exists(path):
        os.makedirs(path)
    for i, pic_url in enumerate(pic_urls):
        try:
            pic = requests.get(pic_url, timeout=15)
            string = str(i + 1) + '.jpg'
            with open(path + string, 'wb') as f:
                f.write(pic.content)
            print('Downloaded picture %s: %s' % (str(i + 1), str(pic_url)))
        except Exception as e:
            print('Failed to download picture %s: %s' % (str(i + 1), str(pic_url)))
            print(e)
            continue

def show_pic_path(path):
    '''
    Walk through every crawled picture and print its absolute path
    '''
    pic_num = 0
    for (dirpath, dirnames, filenames) in os.walk(path):
        for filename in filenames:
            pic_num += 1
            print("Picture %d: %s" % (pic_num, os.path.join(dirpath, filename)))
    print("Crawled %d pictures of 《青春有你2》 contestants in total" % pic_num)

if __name__ == '__main__':
    # Crawl the contestant information of 《青春有你2》 from Baidu Baike and get the HTML
    html = crawl_wiki_data()
    # Parse the HTML and save the contestant information as a JSON file
    parse_wiki_data(html)
    # Crawl and save pictures from each contestant's Baidu Baike page
    crawl_pic_urls()
    # Print the paths of the crawled pictures
    show_pic_path('/home/aistudio/work/pics/')
    print("All information crawled!")
```

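## Day3

- Visualize the contestants of 《青春有你2》: a bar chart of where they come from and a pie chart of their weight distribution.

Task description:

Based on the contestant information of 《青春有你2》 crawled from Baidu Baike with Python on Day 2, carry out a data-visualization analysis.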

```python
# Bar chart of the contestants' regions
import os
import json
import matplotlib.pyplot as plt

# Display matplotlib figures inline (notebook magic)
%matplotlib inline

with open('data/data31557/20200422.json', 'r', encoding='UTF-8') as file:
    json_array = json.loads(file.read())

# Bar chart: the x axis is the region, the y axis is the number of contestants from it
zones = []
for star in json_array:
    zone = star['zone']
    zones.append(zone)
print(len(zones))
print(zones)

zone_list = []
count_list = []
for zone in zones:
    if zone not in zone_list:
        count = zones.count(zone)
        zone_list.append(zone)
        count_list.append(count)
print(zone_list)
print(count_list)

# Use a font that can display Chinese characters
plt.rcParams['font.sans-serif'] = ['SimHei']  # default font
plt.figure(figsize=(20, 15))
plt.bar(range(len(count_list)), count_list, tick_label=zone_list, facecolor='#9999ff', edgecolor='white')
# Rotate the x tick labels (rotation is in degrees) and set the tick font size
plt.xticks(rotation=45, fontsize=20)
plt.yticks(fontsize=20)
plt.title('''《青春有你2》参赛选手''', fontsize=24)
os.makedirs('/home/aistudio/work/result', exist_ok=True)
plt.savefig('/home/aistudio/work/result/bar_result.jpg')
plt.show()
```

109

['中国湖北', '中国四川', '中国山东', '中国浙江', '中国山东', '中国台湾', '中国陕西', '中国广东', '中国黑龙江', '中国上海', '中国四川', '中国山东', '中国安徽', '中国安徽', '中国安徽', '中国北京', '中国贵州', '中国吉林', '中国四川', '中国四川', '中国江苏', '中国山东', '中国山东', '中国山东', '中国山东', '中国江苏', '中国四川', '中国山东', '中国山东', '中国广东', '中国浙江', '中国河南', '中国安徽', '中国河南', '中国北京', '中国北京', '马来西亚', '中国湖北', '中国四川', '中国天津', '中国黑龙江', '中国四川', '中国陕西', '中国辽宁', '中国湖南', '中国上海', '中国贵州', '中国山东', '中国湖北', '中国黑龙江', '中国黑龙江', '中国上海', '中国浙江', '中国湖南', '中国台湾', '中国台湾', '中国台湾', '中国台湾', '中国山东', '中国北京', '中国北京', '中国浙江', '中国河南', '中国河南', '中国福建', '中国河南', '中国北京', '中国山东', '中国四川', '中国安徽', '中国河南', '中国四川', '中国湖北', '中国四川', '中国陕西', '中国湖南', '中国四川', '中国台湾', '中国湖北', '中国广西', '中国江西', '中国湖南', '中国湖北', '中国北京', '中国陕西', '中国上海', '中国四川', '中国山东', '中国辽宁', '中国辽宁', '中国台湾', '中国浙江', '中国北京', '中国黑龙江', '中国北京', '中国安徽', '中国河北', '马来西亚', '中国四川', '中国湖南', '中国台湾', '中国广东', '中国上海', '中国四川', '日本', '中国辽宁', '中国黑龙江', '中国浙江', '中国台湾']

['中国湖北', '中国四川', '中国山东', '中国浙江', '中国台湾', '中国陕西', '中国广东', '中国黑龙江', '中国上海', '中国安徽', '中国北京', '中国贵州', '中国吉林', '中国江苏', '中国河南', '马来西亚', '中国天津', '中国辽宁', '中国湖南', '中国福建', '中国广西', '中国江西', '中国河北', '日本']

[6, 14, 13, 6, 9, 4, 3, 6, 5, 6, 9, 2, 1, 2, 6, 2, 1, 4, 5, 1, 1, 1, 1, 1]
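Both tallies in this section can be written a little more compactly. The sketch below (hypothetical helper names, not the course's code) uses `collections.Counter` for the region counts instead of calling `list.count()` once per region, and pulls the number out of a weight string with a regular expression. That second part matters if any entry is not exactly two digits followed by a unit: `float(weight[:2])` would read an assumed value like "46.5kg" as 46, and "100kg" as 10. The bin boundaries match the pie-chart code.

```python
import re
from collections import Counter
from typing import Dict, Iterable

def count_zones(zones: Iterable[str]) -> Dict[str, int]:
    """Region -> number of contestants, in order of first appearance."""
    # One pass over the data instead of one list.count() call per region
    return dict(Counter(zones))

def weight_bins(weights: Iterable[str]) -> Dict[str, int]:
    """Sort weight strings such as '48kg' or '46.5kg' into the four pie-chart bins."""
    bins = {"<45kg": 0, "45~50kg": 0, "50~55kg": 0, ">55kg": 0}
    for w in weights:
        match = re.search(r"\d+(?:\.\d+)?", w)
        if match is None:
            continue  # no number in the field: leave it out of the chart
        kg = float(match.group())
        # Same boundaries as the pie-chart code: <45, <=50, <=55, otherwise >55
        if kg < 45:
            bins["<45kg"] += 1
        elif kg <= 50:
            bins["45~50kg"] += 1
        elif kg <= 55:
            bins["50~55kg"] += 1
        else:
            bins[">55kg"] += 1
    return bins
```

`list(counts.keys())` and `list(counts.values())` can then feed `plt.bar(..., tick_label=...)`, and `list(bins.values())` with `labels=list(bins)` can feed `plt.pie`.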

```python
# Visualize the contestants' weight distribution as a pie chart
with open('data/data31557/20200422.json', 'r', encoding='UTF-8') as file:
    json_array = json.loads(file.read())

weight_list = []
for star in json_array:
    weight = star['weight']
    weight_list.append(weight)

low45 = 0
between45_50 = 0
between50_55 = 0
high55 = 0
for weight in weight_list:
    # Assumes entries like "48kg": only the first two characters are parsed as kilograms
    weight = float(weight[:2])
    if weight < 45:
        low45 += 1
    elif weight <= 50:
        between45_50 += 1
    elif weight <= 55:
        between50_55 += 1
    else:
        high55 += 1
print(low45, between45_50, between50_55, high55)

plt.rcParams['font.sans-serif'] = ['SimHei']  # default font that can display Chinese
plt.figure(figsize=(10, 10))
plt.pie([low45, between45_50, between50_55, high55],
        labels=['<45kg', '45~50kg', '50~55kg', '>55kg'],
        explode=[0, 0.04, 0, 0], radius=0.5, shadow=True, autopct='%1.1f%%')
plt.title('青春有你体重分布饼图')      # chart title
plt.savefig('./青春有你体重分布饼图')  # save the figure
plt.show()
```

## Day4: Contestant Recognition for 《青春有你2》

```python
# For a persistent install, install into a persistent path, as in the example below:
!mkdir -p /home/aistudio/external-libraries
!pip install beautifulsoup4 -t /home/aistudio/external-libraries
!pip install lxml -t /home/aistudio/external-libraries
import sys
sys.path.append('/home/aistudio/external-libraries')

import json
import re
import requests
import datetime
from bs4 import BeautifulSoup
import os

today = datetime.date.today().strftime('%Y%m%d')

# Class label of each contestant
name_num_dic = {'虞书欣': 0,
                '许佳琪': 1,
                '赵小棠': 2,
                '安崎': 3,
                '王承渲': 4}

# Crawl the picture pages and build the training and validation sets
headers = {  # pretend to be a browser
    'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/67.0.3396.99 Safari/537.36'
}

# Each line of tupianurl.txt is "<page url> <contestant name>"
with open('dataset/' + 'tupianurl.txt', 'r', encoding='UTF-8') as file:
    url = file.readlines()
for i in range(len(url)):
    url[i] = url[i].strip('\n')
print(url)
urls_name = [line.split(" ") for line in url[:5]]

# Start from empty list files ('w' also creates them if they do not exist yet)
open('dataset/validate_list.txt', 'w').close()
open('dataset/train_list.txt', 'w').close()

for url, name in urls_name:
    pic_urls = []
    response = requests.get(url, headers=headers)
    soup = BeautifulSoup(response.text, 'lxml')
    star_pictures = soup.find_all('img')
    # Collect the src of every <img>
    for picture in star_pictures:
        src = picture.get('src')
        if src is not None:
            pic_urls.append(str(src))
    # Download the pictures one by one
    path_train = 'dataset/' + 'train/' + name + '/'
    path_validate = 'dataset/' + 'validate/' + name + '/'
    if not os.path.exists(path_train):
        os.makedirs(path_train)
    if not os.path.exists(path_validate):
        os.makedirs(path_validate)
    for i, pic_url in enumerate(pic_urls):
        try:
            pic = requests.get(pic_url, timeout=15)
            string = str(i + 1) + '.jpg'
            if i < 6:
                # The first 6 pictures go to the validation set
                with open(path_validate + string, 'wb') as f:
                    f.write(pic.content)
                print("Downloaded a validation picture of " + name)
                with open('dataset/validate_list.txt', 'a+') as f:
                    f.write('validate/' + name + '/' + string + ' ' + str(name_num_dic[name]) + '\n')
            elif i < 50:
                # Pictures 7 to 50 go to the training set
                with open(path_train + string, 'wb') as f:
                    f.write(pic.content)
                print("Downloaded a training picture of " + name)
                with open('dataset/train_list.txt', 'a+') as f:
                    f.write('train/' + name + '/' + string + ' ' + str(name_num_dic[name]) + '\n')
        except Exception as e:
            print("Failed to download a picture of " + name)
            print(e)
            continue
```
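The list files written above hold one `relative/path label` pair per line. If pictures are added or removed by hand afterwards, the list can be regenerated from the folders instead. A minimal sketch, assuming the `dataset/<split>/<contestant name>/*.jpg` layout the crawler creates; `write_list_file` is a hypothetical helper, not part of PaddleHub.

```python
import os
from typing import Dict, List

def write_list_file(base_dir: str, split: str, label_map: Dict[str, int]) -> List[str]:
    """Write <base_dir>/<split>_list.txt with one "split/name/file label" line per .jpg.

    Assumes images live in <base_dir>/<split>/<contestant name>/. Returns the lines written.
    """
    lines = []
    for name, label in label_map.items():
        folder = os.path.join(base_dir, split, name)
        if not os.path.isdir(folder):
            continue  # no pictures were downloaded for this contestant
        for filename in sorted(os.listdir(folder)):
            if filename.lower().endswith(".jpg"):
                # Paths are relative to base_dir and use "/" like the crawler's list files
                lines.append(f"{split}/{name}/{filename} {label}")
    with open(os.path.join(base_dir, f"{split}_list.txt"), "w", encoding="utf-8") as f:
        f.write("\n".join(lines) + ("\n" if lines else ""))
    return lines
```

For example, `write_list_file("dataset", "train", name_num_dic)` would rebuild `dataset/train_list.txt`.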

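```python
# When running in a CPU environment, be sure to execute this command
%set_env CPU_NUM=1
# Install PaddleHub
!pip install paddlehub==1.6.0 -i https://pypi.tuna.tsinghua.edu.cn/simple
```

Step 1: Basic setup (load the data files, import the Python packages)

```python
!unzip -o file.zip -d ./dataset/
```

unzip: cannot find or open file.zip, file.zip.zip or file.zip.ZIP.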

```python
import paddlehub as hub
```

Step 2: Load the pretrained model

Next, we choose a suitable pretrained model in PaddleHub to fine-tune. Since this is an image classification task, we use the classic ResNet-50 as the pretrained model. PaddleHub provides a wide range of pretrained image classification models, including PNASNet from the latest neural architecture search family; we recommend trying different pretrained models to get better performance.

```python
module = hub.Module(name="resnet_v2_50_imagenet")
```

[2020-04-26 19:17:00,122] [ INFO] - Installing resnet_v2_50_imagenet module

Downloading resnet_v2_50_imagenet

[==================================================] 100.00%

Uncompress /home/aistudio/.paddlehub/tmp/tmpnqfjsfbx/resnet_v2_50_imagenet

[==================================================] 100.00%

[2020-04-26 19:17:11,030] [ INFO] - Successfully installed resnet_v2_50_imagenet-1.0.1

Step 3: Data preparation

Next, we load the image dataset. We use our own custom data; see PaddleHub's guide on adapting custom datasets for details.

```python
from paddlehub.dataset.base_cv_dataset import BaseCVDataset

class DemoDataset(BaseCVDataset):
    def __init__(self):
        # Where the dataset is stored
        self.dataset_dir = "dataset"
        super(DemoDataset, self).__init__(
            base_path=self.dataset_dir,              # the "dataset" folder
            train_list_file="train_list.txt",        # training set: the practice drills
            validate_list_file="validate_list.txt",  # validation set: the regular quizzes
            test_list_file="test_list.txt",          # test set: the final exam; lines like "dataset/test/yushuxin.jpg 0"
            label_list_file="label_list.txt",        # labels: [虞书欣, 许佳琪, 赵小棠, 安崎, 王承渲]
        )

dataset = DemoDataset()
```

Step 4: Create the data reader

Next, we create an image classification reader. The reader preprocesses the data in the dataset, then organizes it in a specific format and feeds it to the model for training. When creating an image classification reader, we need to specify the size of the input images.

```python
data_reader = hub.reader.ImageClassificationReader(
    image_width=module.get_expected_image_width(),
    image_height=module.get_expected_image_height(),
    images_mean=module.get_pretrained_images_mean(),
    images_std=module.get_pretrained_images_std(),
    dataset=dataset)
```

[2020-04-26 19:17:11,318] [ INFO] - Dataset label map = {'虞书欣': 0, '许佳琪': 1, '赵小棠': 2, '安崎': 3, '王承渲': 4}

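Step 5: Configure the run

Before fine-tuning, we can set some runtime options. In the PaddleHub tutorial's configuration:

- use_cuda: False means training on the CPU. If your machine has a GPU and the GPU build of PaddlePaddle is installed, we recommend setting it to True;
- epoch: the number of training epochs;
- batch_size: the size of each batch fed to the model, 32 in the tutorial. The model processes a batch in parallel, so a larger batch_size trains more efficiently, but it also uses more memory, and too large a batch_size can run out of memory. Choosing a suitable batch_size is therefore an important step;
- log_interval: print a training log every 10 steps;
- eval_interval: evaluate on the validation set every 50 steps;
- checkpoint_dir: save the trained parameters and data to the cv_finetune_turtorial_demo directory;
- strategy: fine-tune with DefaultFinetuneStrategy.

The code below uses its own values instead (3 epochs, batch_size 3, evaluation every 10 steps, checkpoints in cv_v2). For more run options, see RunConfig. PaddleHub also provides several optimization strategies, such as AdamWeightDecayStrategy, ULMFiTStrategy and DefaultFinetuneStrategy; see the strategy documentation for details.

```python
config = hub.RunConfig(
    use_cuda=False,          # whether to train on a GPU; default False
    num_epoch=3,             # number of fine-tuning epochs
    checkpoint_dir="cv_v2",  # checkpoint directory (generated automatically if not set); must be changed to re-run training from scratch
    batch_size=3,            # batch size; adjust it to your hardware when using a GPU
    eval_interval=10,        # evaluate on the validation set every N steps (default 100)
    strategy=hub.finetune.strategy.DefaultFinetuneStrategy())  # fine-tuning optimization strategy
```

[2020-04-26 19:25:06,961] [ INFO] - Checkpoint dir: cv_v2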
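These settings also appear to account for the step counts in the training log that follows. The arithmetic below is an inference from the numbers, not documented PaddleHub behavior, and it assumes every download in the crawler succeeded, so indices 6 to 49 give 44 training pictures for each of the 5 contestants (220 in total). Then 220 × 3 epochs / batch size 3 = 220, matching `step n / 220`; if the final partial batch of each epoch is still run, 3 × ceil(220 / 3) = 222, consistent with the last checkpoint name `step_222`. `step_counts` is a hypothetical helper.

```python
import math
from typing import Tuple

def step_counts(num_examples: int, num_epoch: int, batch_size: int) -> Tuple[int, int]:
    """(examples * epochs // batch_size, epochs * ceil(examples / batch_size))."""
    nominal = num_examples * num_epoch // batch_size
    with_last_partial_batch = num_epoch * math.ceil(num_examples / batch_size)
    return nominal, with_last_partial_batch

# Assumption: all downloads succeeded, so pictures with index 6..49 (44 per contestant)
# ended up in the training set for all 5 contestants
train_images = 5 * (50 - 6)
print(train_images, step_counts(train_images, num_epoch=3, batch_size=3))
```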

Step 6: Build the fine-tuning Task

With a suitable pretrained model and the dataset to transfer to in place, we build a Task. Our dataset is a five-class task (one class per contestant), while the downloaded classification module is a 1000-class model trained on ImageNet, so we adapt the model with a light fine-tune that turns it into a five-class classifier:

1. Get the module's context, including the input and output variables and the Paddle Program;
2. Find the feature-map extraction layer feature_map among the output variables;
3. Attach a fully connected layer after feature_map to produce the Task.

```python
input_dict, output_dict, program = module.context(trainable=True)
img = input_dict["image"]
feature_map = output_dict["feature_map"]  # feature-map extraction layer
feed_list = [img.name]                     # e.g. "@HUB_resnet_v2_50_imagenet@image"
task = hub.ImageClassifierTask(
    data_reader=data_reader,
    feed_list=feed_list,
    feature=feature_map,
    num_classes=dataset.num_labels,
    config=config)
```

[2020-04-26 19:25:10,014] [ INFO] - 267 pretrained paramaters loaded by PaddleHub

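Step 7: Start fine-tuning

We use the finetune_and_eval interface to train the model. During fine-tuning, this interface periodically evaluates the model, so we can follow how performance changes over the whole training run.

```python
run_states = task.finetune_and_eval()
```

[2020-04-26 19:25:17,068] [ INFO] - Strategy with slanted triangle learning rate, L2 regularization,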

[2020-04-26 19:25:17,100] [ INFO] - Try loading checkpoint from cv_v2/ckpt.meta

[2020-04-26 19:25:17,101] [ INFO] - PaddleHub model checkpoint not found, start from scratch...

[2020-04-26 19:25:17,163] [ INFO] - PaddleHub finetune start

[2020-04-26 19:25:30,025] [ TRAIN] - step 10 / 220: loss=1.37691 acc=0.46667 [step/sec: 0.78]

[2020-04-26 19:25:30,026] [ INFO] - Evaluation on dev dataset start

share_vars_from is set, scope is ignored.

[2020-04-26 19:25:33,727] [ EVAL] - [dev dataset evaluation result] loss=1.35510 acc=0.36667 [step/sec: 2.99]

[2020-04-26 19:25:33,728] [ EVAL] - best model saved to cv_v2/best_model [best acc=0.36667]

[2020-04-26 19:25:46,897] [ TRAIN] - step 20 / 220: loss=1.52444 acc=0.40000 [step/sec: 0.80]

[2020-04-26 19:25:46,899] [ INFO] - Evaluation on dev dataset start

[2020-04-26 19:25:50,227] [ EVAL] - [dev dataset evaluation result] loss=1.40791 acc=0.43333 [step/sec: 3.02]

[2020-04-26 19:25:50,228] [ EVAL] - best model saved to cv_v2/best_model [best acc=0.43333]

[2020-04-26 19:26:03,143] [ TRAIN] - step 30 / 220: loss=1.33692 acc=0.46667 [step/sec: 0.80]

[2020-04-26 19:26:03,144] [ INFO] - Evaluation on dev dataset start

[2020-04-26 19:26:06,543] [ EVAL] - [dev dataset evaluation result] loss=1.43014 acc=0.53333 [step/sec: 2.96]

[2020-04-26 19:26:06,544] [ EVAL] - best model saved to cv_v2/best_model [best acc=0.53333]

[2020-04-26 19:26:19,430] [ TRAIN] - step 40 / 220: loss=1.34856 acc=0.50000 [step/sec: 0.80]

[2020-04-26 19:26:19,432] [ INFO] - Evaluation on dev dataset start

[2020-04-26 19:26:22,812] [ EVAL] - [dev dataset evaluation result] loss=1.54921 acc=0.40000 [step/sec: 2.97]

[2020-04-26 19:26:35,316] [ TRAIN] - step 50 / 220: loss=1.13991 acc=0.60000 [step/sec: 0.80]

[2020-04-26 19:26:35,317] [ INFO] - Evaluation on dev dataset start

[2020-04-26 19:26:38,666] [ EVAL] - [dev dataset evaluation result] loss=1.69096 acc=0.60000 [step/sec: 3.00]

[2020-04-26 19:26:38,667] [ EVAL] - best model saved to cv_v2/best_model [best acc=0.60000]

[2020-04-26 19:26:51,673] [ TRAIN] - step 60 / 220: loss=1.12802 acc=0.50000 [step/sec: 0.80]

[2020-04-26 19:26:51,674] [ INFO] - Evaluation on dev dataset start

[2020-04-26 19:26:54,906] [ EVAL] - [dev dataset evaluation result] loss=1.60980 acc=0.56667 [step/sec: 3.11]

[2020-04-26 19:27:07,255] [ TRAIN] - step 70 / 220: loss=1.25939 acc=0.63333 [step/sec: 0.81]

[2020-04-26 19:27:07,256] [ INFO] - Evaluation on dev dataset start

[2020-04-26 19:27:10,541] [ EVAL] - [dev dataset evaluation result] loss=1.65182 acc=0.46667 [step/sec: 3.06]

[2020-04-26 19:27:22,332] [ TRAIN] - step 80 / 220: loss=0.87910 acc=0.66667 [step/sec: 0.80]

[2020-04-26 19:27:22,333] [ INFO] - Evaluation on dev dataset start

[2020-04-26 19:27:25,647] [ EVAL] - [dev dataset evaluation result] loss=1.60258 acc=0.46667 [step/sec: 3.04]

[2020-04-26 19:27:37,897] [ TRAIN] - step 90 / 220: loss=0.82920 acc=0.70000 [step/sec: 0.82]

[2020-04-26 19:27:37,898] [ INFO] - Evaluation on dev dataset start

[2020-04-26 19:27:41,180] [ EVAL] - [dev dataset evaluation result] loss=1.33638 acc=0.46667 [step/sec: 3.06]

[2020-04-26 19:27:53,355] [ TRAIN] - step 100 / 220: loss=1.02337 acc=0.56667 [step/sec: 0.82]

[2020-04-26 19:27:53,356] [ INFO] - Evaluation on dev dataset start

[2020-04-26 19:27:56,741] [ EVAL] - [dev dataset evaluation result] loss=1.57412 acc=0.40000 [step/sec: 2.97]

[2020-04-26 19:28:08,922] [ TRAIN] - step 110 / 220: loss=0.76328 acc=0.66667 [step/sec: 0.82]

[2020-04-26 19:28:08,923] [ INFO] - Evaluation on dev dataset start

[2020-04-26 19:28:12,561] [ EVAL] - [dev dataset evaluation result] loss=1.86589 acc=0.46667 [step/sec: 2.76]

[2020-04-26 19:28:25,031] [ TRAIN] - step 120 / 220: loss=0.90081 acc=0.63333 [step/sec: 0.80]

[2020-04-26 19:28:25,033] [ INFO] - Evaluation on dev dataset start

[2020-04-26 19:28:28,384] [ EVAL] - [dev dataset evaluation result] loss=2.21186 acc=0.40000 [step/sec: 3.00]

[2020-04-26 19:28:40,850] [ TRAIN] - step 130 / 220: loss=1.06672 acc=0.53333 [step/sec: 0.80]

[2020-04-26 19:28:40,851] [ INFO] - Evaluation on dev dataset start

[2020-04-26 19:28:44,178] [ EVAL] - [dev dataset evaluation result] loss=1.67196 acc=0.46667 [step/sec: 3.02]

[2020-04-26 19:28:56,606] [ TRAIN] - step 140 / 220: loss=1.31448 acc=0.60000 [step/sec: 0.80]

[2020-04-26 19:28:56,608] [ INFO] - Evaluation on dev dataset start

[2020-04-26 19:28:59,900] [ EVAL] - [dev dataset evaluation result] loss=1.56059 acc=0.46667 [step/sec: 3.05]

[2020-04-26 19:29:11,533] [ TRAIN] - step 150 / 220: loss=0.83359 acc=0.83333 [step/sec: 0.80]

[2020-04-26 19:29:11,534] [ INFO] - Evaluation on dev dataset start

[2020-04-26 19:29:14,855] [ EVAL] - [dev dataset evaluation result] loss=1.43374 acc=0.53333 [step/sec: 3.03]

[2020-04-26 19:29:26,882] [ TRAIN] - step 160 / 220: loss=0.74533 acc=0.80000 [step/sec: 0.83]

[2020-04-26 19:29:26,883] [ INFO] - Evaluation on dev dataset start

[2020-04-26 19:29:30,220] [ EVAL] - [dev dataset evaluation result] loss=1.27921 acc=0.46667 [step/sec: 3.01]

[2020-04-26 19:29:42,285] [ TRAIN] - step 170 / 220: loss=0.77313 acc=0.80000 [step/sec: 0.83]

[2020-04-26 19:29:42,286] [ INFO] - Evaluation on dev dataset start

[2020-04-26 19:29:45,692] [ EVAL] - [dev dataset evaluation result] loss=1.26914 acc=0.46667 [step/sec: 2.95]

[2020-04-26 19:29:57,953] [ TRAIN] - step 180 / 220: loss=0.82522 acc=0.76667 [step/sec: 0.82]

[2020-04-26 19:29:57,954] [ INFO] - Evaluation on dev dataset start

[2020-04-26 19:30:01,298] [ EVAL] - [dev dataset evaluation result] loss=1.39048 acc=0.53333 [step/sec: 3.01]

[2020-04-26 19:30:13,589] [ TRAIN] - step 190 / 220: loss=0.97577 acc=0.63333 [step/sec: 0.81]

[2020-04-26 19:30:13,590] [ INFO] - Evaluation on dev dataset start

[2020-04-26 19:30:16,874] [ EVAL] - [dev dataset evaluation result] loss=1.71017 acc=0.53333 [step/sec: 3.06]

[2020-04-26 19:30:29,006] [ TRAIN] - step 200 / 220: loss=1.22012 acc=0.66667 [step/sec: 0.82]

[2020-04-26 19:30:29,007] [ INFO] - Evaluation on dev dataset start

[2020-04-26 19:30:32,378] [ EVAL] - [dev dataset evaluation result] loss=1.20058 acc=0.50000 [step/sec: 2.98]

[2020-04-26 19:30:44,568] [ TRAIN] - step 210 / 220: loss=0.70932 acc=0.83333 [step/sec: 0.82]

[2020-04-26 19:30:44,569] [ INFO] - Evaluation on dev dataset start

[2020-04-26 19:30:47,903] [ EVAL] - [dev dataset evaluation result] loss=1.25520 acc=0.50000 [step/sec: 3.02]

[2020-04-26 19:31:00,132] [ TRAIN] - step 220 / 220: loss=1.51449 acc=0.53333 [step/sec: 0.82]

[2020-04-26 19:31:00,133] [ INFO] - Evaluation on dev dataset start

[2020-04-26 19:31:03,397] [ EVAL] - [dev dataset evaluation result] loss=1.65621 acc=0.46667 [step/sec: 3.08]

[2020-04-26 19:31:05,115] [ INFO] - Evaluation on dev dataset start

[2020-04-26 19:31:08,466] [ EVAL] - [dev dataset evaluation result] loss=1.71872 acc=0.50000 [step/sec: 3.00]

[2020-04-26 19:31:08,467] [ INFO] - Load the best model from cv_v2/best_model

[2020-04-26 19:31:08,712] [ INFO] - Evaluation on test dataset start

[2020-04-26 19:31:09,440] [ EVAL] - [test dataset evaluation result] loss=2.61351 acc=0.33333 [step/sec: 3.74]

[2020-04-26 19:31:09,441] [ INFO] - Saving model checkpoint to cv_v2/step_222

[2020-04-26 19:31:10,097] [ INFO] - PaddleHub finetune finished.

Step 8: Predict

Once fine-tuning is finished, we use the model for prediction. First, read the test images with the following code:

```python
import numpy as np

# Each line of test_list.txt is "<image path> <label>"; keep only the paths
with open("dataset/test_list.txt", "r") as f:
    filepath = f.readlines()
data = [line.split(" ")[0] for line in filepath[:5]]

label_map = dataset.label_dict()
index = 0
run_states = task.predict(data=data)
results = [run_state.run_results for run_state in run_states]
for batch_result in results:
    print(batch_result)
    # The index of the highest probability in each row is the predicted class
    batch_result = np.argmax(batch_result, axis=2)[0]
    print(batch_result)
    for result in batch_result:
        index += 1
        result = label_map[result]
        print("input %i is %s, and the predict result is %s" %
              (index, data[index - 1], result))
```

[2020-04-26 19:35:04,521] [ INFO] - PaddleHub predict start

[2020-04-26 19:35:04,522] [ INFO] - The best model has been loaded

share_vars_from is set, scope is ignored.

[2020-04-26 19:35:05,337] [ INFO] - PaddleHub predict finished.

[array([[0.35639164, 0.12188639, 0.14282106, 0.31989613, 0.0590048 ],

[0.22618689, 0.49202806, 0.03302279, 0.02794226, 0.22082007],

[0.05379125, 0.67873746, 0.14674082, 0.11127331, 0.00945712]],

dtype=float32)]

[0 1 1]

input 1 is dataset/test/yushuxin.jpg, and the predict result is 虞书欣

input 2 is dataset/test/xujiaqi.jpg, and the predict result is 许佳琪

input 3 is dataset/test/zhaoxiaotang.jpg, and the predict result is 许佳琪

[array([[0.43066207, 0.14861931, 0.09600072, 0.21908128, 0.10563661],

[0.03933568, 0.6435218 , 0.26945707, 0.04617555, 0.00150993]],

dtype=float32)]

[0 1]

input 4 is dataset/test/anqi.jpg, and the predict result is 虞书欣

input 5 is dataset/test/wangchengxuan.jpg, and the predict result is 许佳琪
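How to read the prediction output above: each row is one image's probability over the five labels, and the predicted class is the arg-max of the row, which is what `np.argmax` computes. The sketch below redoes that in plain Python with the probabilities copied from the output, and scores them. The true labels are an assumption: the test file names (yushuxin, xujiaqi, zhaoxiaotang, anqi, wangchengxuan) are taken to be the contestants with labels 0 to 4 in the label-map order; `decode` and `accuracy` are hypothetical helpers.

```python
from typing import List, Sequence

LABELS = ["虞书欣", "许佳琪", "赵小棠", "安崎", "王承渲"]  # order from the dataset label map

def decode(probs: Sequence[Sequence[float]]) -> List[int]:
    """Arg-max of each probability row."""
    return [max(range(len(row)), key=row.__getitem__) for row in probs]

def accuracy(pred: Sequence[int], truth: Sequence[int]) -> float:
    return sum(p == t for p, t in zip(pred, truth)) / len(truth)

# Probabilities copied from the prediction output (two batches: 3 images, then 2)
batch1 = [[0.35639164, 0.12188639, 0.14282106, 0.31989613, 0.0590048],
          [0.22618689, 0.49202806, 0.03302279, 0.02794226, 0.22082007],
          [0.05379125, 0.67873746, 0.14674082, 0.11127331, 0.00945712]]
batch2 = [[0.43066207, 0.14861931, 0.09600072, 0.21908128, 0.10563661],
          [0.03933568, 0.6435218, 0.26945707, 0.04617555, 0.00150993]]
pred = decode(batch1) + decode(batch2)
# Assumed ground truth: test images are contestants 0..4 in order
truth = [0, 1, 2, 3, 4]
print([LABELS[p] for p in pred], accuracy(pred, truth))
```

Under that assumption, two of the five test images are classified correctly.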

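## Day5

Final project:

Step 1: crawl the comment data of iQIYI's 《青春有你2》 (reference link: https://www.iqiyi.com/v_19ryfkiv8w.html#curid=15068699100_9f9bab7e0d1e30c494622af777f4ba39)

- Crawl the comments under any full episode
- At least 1,000 comments

Step 2: word-frequency statistics and visualization

- Data preprocessing: clean special characters out of the comments (e.g. @#¥% and emoji), and store the cleaned result in a txt file
- Chinese word segmentation: add new words (e.g. 青你, short for the show; 奥利给 and 冲鸭, internet slang cheers) and remove stop words (e.g. 哦 "oh", 因此 "therefore", 不然 "otherwise", 也好 "as well", 但是 "but")
- Count the top 10 most frequent words
- Visualize the high-frequency words

Step 3: draw a word cloud

- Generate a word cloud from the word frequencies
- Optional: add a background image and shape the word cloud to its outline

Step 4: use PaddleHub to moderate the comments

Configuration and preparation needed:

- jieba for Chinese word segmentation
- wordcloud for drawing the word cloud
- a Chinese font for the visualization
- a Chinese stop-word list, found among public online resources
- a list of new words, built from your own segmentation results
- a background image for the word cloud (extra, not required; it can be made with PaddleHub's matting)
- PaddleHub setup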

```python
from __future__ import print_function
import requests
import json
import re                        # regular expressions
import time                      # time utilities
import jieba                     # Chinese word segmentation
import numpy as np
import matplotlib
import matplotlib.pyplot as plt
import matplotlib.font_manager as font_manager
from PIL import Image
from wordcloud import WordCloud  # word cloud drawing
import paddlehub as hub
from bs4 import BeautifulSoup

# Request the iQIYI comment API and return the response text
def getMovieinfo(url):
    '''
    Request the iQIYI comment API and return the response text
    param url: the comment API url
    :return: response text, or None if the body is empty
    '''
    # Keep the session
    session = requests.Session()
    headers = {
        'user-agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/78.0.3904.108 Safari/537.36'
    }
    response = session.get(url, headers=headers)
    # A non-empty body means the request returned data
    if response.content:
        return response.text
    return None  # otherwise there is nothing to return

# Parse the JSON data and collect the comments
def saveMovieInfoToFile(lastId, arr):
    '''
    Parse the JSON data and collect the comments
    param lastId: id of the last comment fetched   arr: list that stores the comment texts
    :return: the new lastId
    '''
    url = ('https://sns-comment.iqiyi.com/v3/comment/get_comments.action?agent_type=118&agent_version=9.11.5'
           '&business_type=17&content_id=15068699100&hot_size=0&page=&page_size=20&types=time'
           '&callback=jsonp_1587997260157_26200&last_id=')
    url += str(lastId)
    responseTxt = getMovieinfo(url)
    # The API answers with JSONP: cut out the {"data": ...} object and turn it into a dict
    location = str(responseTxt).find("\"data\"")
    responseStr = str(responseTxt)[location - 1:]
    location_end = responseStr.find(') }catch(e){};')
    responseStr = responseStr[:location_end]
    responseJson = json.loads(responseStr)
    comments = responseJson['data']['comments']
    for item in comments:
        if 'content' in item.keys():
            print(item['content'])  # print the comment
            arr.append(item['content'])
        lastId = str(item['id'])
    return lastId
```
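`saveMovieInfoToFile` locates `"data"` and cuts at `) }catch(e){};`, which depends on the exact layout of the JSONP response. A minimal alternative sketch: start decoding right after the callback's opening parenthesis with `json.JSONDecoder.raw_decode`, which parses one JSON value and ignores whatever follows it. The sample response in the test is hypothetical; its shape is assumed from the strings the course code searches for, and `parse_jsonp` is an illustrative name.

```python
import json

def parse_jsonp(text: str) -> dict:
    """Extract the JSON object passed to a JSONP callback, e.g. 'cb({"a": 1})'.

    Works with or without a surrounding 'try{ ... }catch(e){};' because decoding
    starts right after the first '(' and stops at the end of the object.
    """
    start = text.index("(") + 1
    # raw_decode does not skip leading whitespace, so strip it first
    obj, _ = json.JSONDecoder().raw_decode(text[start:].lstrip())
    return obj
```

With it, the slicing in `saveMovieInfoToFile` could become `responseJson = parse_jsonp(responseTxt)`.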

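```python
# Remove special characters from the text
def clear_special_char(content):
    '''
    Clean special characters with regular expressions
    param content: original text
    return: cleaned text
    Data preprocessing: remove special characters from the comments (e.g. @#¥%, emoji);
    the cleaned result is stored in a txt file
    '''
    # Drop HTML-like tags, &nbsp; entities, tabs and carriage returns
    s = re.sub(r"</?.+?>|&nbsp;|\t|\r", "", content)
    s = re.sub(r"\n", " ", s)
    # Keep only CJK Unified Ideographs (U+4E00 to U+9FA5), ASCII letters and digits;
    # this also removes punctuation, emoji and control characters
    s = re.sub('[^\u4e00-\u9fa5a-zA-Z0-9]', '', s)
    # Drop English letters
    s = re.sub('[a-zA-Z]', '', s)
    # Drop comments that consist only of a number
    s = re.sub(r'^\d+(\.\d+)?$', '', s)
    return s
```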
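A compact, testable sketch of the same cleaning idea (hypothetical name `clean_comment`). It also shows why the Chinese range should start at U+4E00, the first CJK Unified Ideograph: a class starting at U+4C00 would also let through part of CJK Extension A and the Yijing hexagram symbols (U+4DC0 to U+4DFF).

```python
import re

# Characters to drop: anything that is not a CJK Unified Ideograph (U+4E00..U+9FA5) or a digit
NOT_CJK_OR_DIGIT = re.compile(r"[^\u4e00-\u9fa50-9]")

def clean_comment(text: str) -> str:
    """Strip HTML-like tags, then everything except common Chinese characters and digits."""
    text = re.sub(r"</?.+?>", "", text)    # tags such as <br> or </p>
    text = NOT_CJK_OR_DIGIT.sub("", text)  # punctuation, emoji, spaces, Latin letters
    return re.sub(r"^\d+$", "", text)      # a comment made only of digits carries no words
```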

```python
def fenci(text):
    '''
    Segment text with jieba
    param text: sentence or text to segment
    return: list of tokens
    '''
    jieba.load_userdict('add_words.txt')   # add the custom dictionary of new words
    seg = jieba.lcut(text, cut_all=False)  # precise mode
    return seg

def stopwordslist(file_path):
    '''
    Build the stop-word list
    param file_path: path of the stop-word file
    return: list of stop words
    '''
    with open(file_path, encoding='UTF-8') as f:
        stopwords = [line.strip() for line in f]
    return stopwords

def movestopwords(sentence, stopwords, counts):
    '''
    Remove stop words and count word frequencies
    param sentence: list of tokens   stopwords: list of stop words   counts: word-frequency dict, updated in place
    return: counts
    '''
    for word in sentence:
        # Skip stop words and single-character tokens
        if word not in stopwords and len(word) != 1:
            counts[word] = counts.get(word, 0) + 1
    return counts
```
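The stop-word filtering and the top-N selection done later in `drawcounts` can be combined with `collections.Counter`. A minimal sketch, assuming the comments have already been segmented (the token lists in the test are made-up stand-ins for jieba output); the stop words are passed as a set because membership tests on a list are linear. `top_words` is a hypothetical helper.

```python
from collections import Counter
from typing import Iterable, List, Set, Tuple

def top_words(token_lists: Iterable[List[str]], stopwords: Set[str], n: int = 10) -> List[Tuple[str, int]]:
    """Count tokens that are not stop words and longer than one character; return the n most common."""
    counts = Counter(
        word
        for tokens in token_lists
        for word in tokens
        if word not in stopwords and len(word) > 1
    )
    return counts.most_common(n)
```

The returned `(word, count)` pairs are in the same shape as `c_order[:num]` in `drawcounts`.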

```python
# Find the path of the configuration file matplotlib loads
import matplotlib
matplotlib.matplotlib_fname()
# If needed, clear matplotlib's cache with: rm -r ~/.cache/matplotlib
```

```python
def drawcounts(counts, num):
    '''
    Plot the word-frequency bar chart
    param counts: word-frequency dict   num: plot the top N
    return: None
    '''
    x_axis = []
    y_axis = []
    c_order = sorted(counts.items(), key=lambda x: x[1], reverse=True)
    print(c_order)
    for c in c_order[:num]:
        x_axis.append(c[0])  # word on the x axis
        y_axis.append(c[1])  # count on the y axis
    # Display Chinese characters
    matplotlib.rcParams['font.sans-serif'] = ['SimHei']  # default font
    matplotlib.rcParams['font.family'] = 'sans-serif'
    matplotlib.rcParams['axes.unicode_minus'] = False    # keep the minus sign '-' from rendering as a box in saved figures
    plt.bar(x_axis, y_axis)
    plt.show()
```

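```python
def drawcloud(word_f):
    '''
    Draw a word cloud from the word frequencies
    param word_f: word-frequency dict
    return: None
    '''
    # Load the background image; its shape becomes the mask
    cloud_mask = np.array(Image.open('zzz.png'))
    # Words to hide (东西 "stuff", 这是 "this is")
    st = set(['东西', '这是'])
    # Build the WordCloud object
    wc = WordCloud(background_color="white",
                   mask=cloud_mask,
                   max_words=150,
                   font_path='simhei.ttf',
                   min_font_size=10,
                   max_font_size=100,
                   width=400,    # ignored when a mask is given; the mask's size is used
                   height=200,
                   relative_scaling=0.3)
    # fit_words() does not apply WordCloud's stopwords option, so filter the frequencies here
    wc.fit_words({w: c for w, c in word_f.items() if w not in st})
    wc.to_file('pic.png')
```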

```python
def text_detection(test_text, file_path):
    '''
    Moderate the comments with a PaddleHub model
    return: None (prints the comments classified as porn, with their probabilities)
    '''
    porn_detection_lstm = hub.Module(name="porn_detection_lstm")
    with open(file_path, 'r', encoding='utf-8') as f:
        for line in f:
            # Skip lines that are empty or a single character after stripping whitespace
            if len(line.strip()) <= 1:
                continue
            test_text.append(line.strip())
    input_dict = {"text": test_text}
    # Set use_gpu=False on a CPU-only machine
    results = porn_detection_lstm.detection(data=input_dict, use_gpu=True, batch_size=1)
    for index, item in enumerate(results):
        if item['porn_detection_key'] == 'porn':
            print(item['text'], ':', item['porn_probs'])
```

```python
# Comments are paginated, so the iQIYI comment API must be requested several times to collect
# several pages; some comments contain emoji and other special characters.
# num is the number of pages: at 10 comments per page, set num=100 to crawl 1000 comments
# (the request URL above asks for page_size=20)
if __name__ == "__main__":
    num = 100
    lastId = '0'  # lastId is the API's pagination cursor
    arr = []
    for i in range(num):
        lastId = saveMovieInfoToFile(lastId, arr)
        # Frequent requests to the iQIYI API occasionally fail with connection errors;
        # sleep 0.5 s to space the requests out
        time.sleep(0.5)
    print(arr)

    # Remove special characters and save the cleaned comments
    # with open('aqy.txt', 'a', encoding='utf-8') as f:
    #     for item in arr:
    #         Item = clear_special_char(item)
    #         if Item.strip() != '':
    #             try:
    #                 f.write(Item + '\n')
    #             except Exception as e:
    #                 print('contains special characters')
    # print('Total comments crawled:', len(arr))

    # with open('aqy.txt', 'r', encoding='utf-8') as f:
    #     counts = {}
    #     for line in f:
    #         words = fenci(line)
    #         stopwords = stopwordslist('cn_stopwords.txt')
    #         movestopwords(words, stopwords, counts)
    # drawcounts(counts, 10)  # plot the top 10 high-frequency words
    # drawcloud(counts)       # draw the word cloud

    # Moderate the comments with PaddleHub
    # file_path = 'aqy.txt'
    # test_text = []
    # text_detection(test_text, file_path)
```
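The main loop always requests `num` pages, even after the target is reached or the API stops returning new comments. A minimal sketch of cursor-based pagination that stops early instead; `fetch_page` is a hypothetical stand-in for `saveMovieInfoToFile` that returns one page of comments plus the next `last_id`, and the fake pager in the test replaces the real API.

```python
import time
from typing import Callable, List, Optional, Tuple

# fetch_page(last_id) -> (comments on this page, next last_id or None)
FetchPage = Callable[[str], Tuple[List[str], Optional[str]]]

def crawl_comments(fetch_page: FetchPage, target: int, max_pages: int = 200, delay: float = 0.5) -> List[str]:
    """Follow the last_id cursor until `target` comments are collected or pages run out."""
    comments: List[str] = []
    last_id: Optional[str] = "0"
    for _ in range(max_pages):
        if last_id is None or len(comments) >= target:
            break
        page, next_id = fetch_page(last_id)
        if not page or next_id == last_id:
            break  # no new data: stop instead of requesting the same page again
        comments.extend(page)
        last_id = next_id
        time.sleep(delay)  # space requests out, like the 0.5 s sleep in the main loop
    return comments[:target]
```

With the assignment's goal, this would be called with `target=1000`.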
