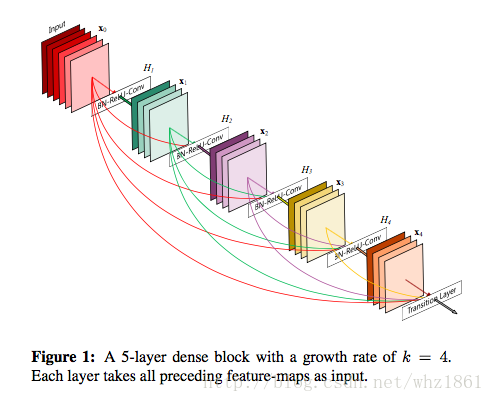

- In this paper, we embrace this observation and introduce the Dense Convolutional Network (DenseNet), which connects each layer to every other layer in a feed-forward fashion.

- DenseNets have several compelling advantages: they alleviate the vanishing-gradient problem, strengthen feature propagation, encourage feature reuse, and substantially reduce the number of parameters.

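Idea

The idea behind DenseNet grew out of the authors' earlier work on stochastic depth (Deep Networks with Stochastic Depth). That work improved ResNet by randomly dropping some intermediate layers during training, and found that this markedly improved ResNet's generalization.

Note: in Deep Networks with Stochastic Depth, randomly removing many layers during training did not hurt convergence, which suggests that ResNet carries considerable redundancy. Removing a few intermediate layers also had little effect on the final result, which suggests that each ResNet layer learns only a small amount of feature information. [This is probably thanks to ResNet's skip connections.]

This led the authors to connect each layer directly to all preceding layers. DenseNet nevertheless differs from ResNet: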

- To ensure maximum information flow between layers in the network, we connect all layers (with matching feature-map sizes) directly with each other.

- Crucially, in contrast to ResNets, we never combine features through summation before they are passed into a layer; instead, we combine features by concatenating them.
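The difference between summation and concatenation can be made concrete with a minimal sketch. It uses plain Python lists to stand in for feature channels; the function names `combine_by_sum` and `combine_by_concat` and the sample values are illustrative assumptions, not something taken from the paper.

```python
def combine_by_sum(x, fx):
    """ResNet-style combination: element-wise sum of the input and the layer output.

    Both inputs must have the same number of channels, and so does the result.
    """
    if len(x) != len(fx):
        raise ValueError("summation needs matching channel counts")
    return [a + b for a, b in zip(x, fx)]


def combine_by_concat(features):
    """DenseNet-style combination: concatenate earlier outputs along the channel axis.

    Every earlier feature is kept unchanged, so the channel count grows.
    """
    combined = []
    for f in features:
        combined.extend(f)
    return combined


x0 = [1.0, 2.0]    # hypothetical 2-channel input
x1 = [0.5, -1.0]   # hypothetical output of the first layer

summed = combine_by_sum(x0, x1)          # still 2 channels, originals mixed together
stacked = combine_by_concat([x0, x1])    # 4 channels, originals preserved as-is
print(summed, stacked)
```

With summation the next layer sees only the mixed result, whereas with concatenation it can still read each earlier feature separately, which is what makes feature reuse possible.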

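Advantages

- Fewer parameters: at the same accuracy on ImageNet, DenseNet needs only half as many parameters as ResNet.

- Information flow: DenseNet improves the flow of information and gradients through the network.

- Regularization: it reduces overfitting.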

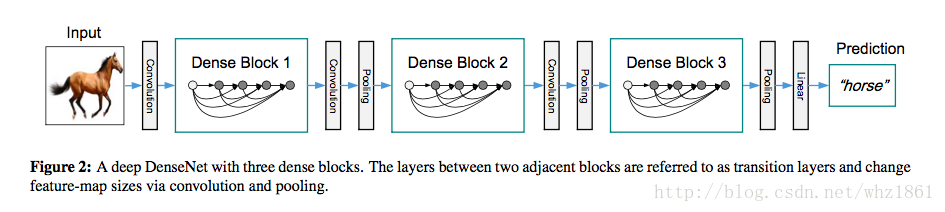

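Network structure

DenseNet alternates conventional convolution and pooling operations with Dense Blocks, which makes it possible to increase the network depth effectively.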
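The alternating layout can be sketched as a channel-count plan. This is only an illustration under stated assumptions: the function name `plan_densenet`, the initial channel count, the block sizes, and the per-layer growth rate are hypothetical values chosen for the example, and each convolution + pooling stage is modelled as leaving the channel count unchanged for simplicity.

```python
def plan_densenet(init_channels, block_sizes, growth_rate):
    """Return a list of (stage_name, in_channels, out_channels) tuples.

    Assumptions (not taken from the text above): each layer inside a
    Dense Block adds `growth_rate` channels by concatenation, and each
    conv + pooling stage between blocks keeps the channel count as it is.
    """
    plan = []
    channels = init_channels
    for i, num_layers in enumerate(block_sizes, start=1):
        out_channels = channels + num_layers * growth_rate
        plan.append((f"dense_block_{i}", channels, out_channels))
        channels = out_channels
        # A conv + pooling stage sits between consecutive Dense Blocks.
        if i < len(block_sizes):
            plan.append((f"conv_pool_{i}", channels, channels))
    return plan


plan = plan_densenet(init_channels=16, block_sizes=[4, 4, 4], growth_rate=12)
for stage in plan:
    print(stage)
```

Because every layer in a block only appends a small number of channels, depth can be added by stacking more layers or blocks while the width grows linearly.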

- Structure comparison

| Network | Description |
|---|---|
| ResNet | xl=Hl( |
