文章目录

前言

在项目的末尾需要做大屏展示时,遇到了需要将hive的维度表导入到MySQL中。这里除了使用Sqoop外,我们当然还可以使用java来操作:调用jdbc来操作即可;这里又突然想到如果用MyBatis来操作呢,想到了就去实现,下面就是实现过程。

可能出现的问题:

如果hive表数据过多的话,程序在向hive取数据时就长时间无响应!

一、思路整理

首先我们得知道取数据和插入数据时都有可能导致程序的崩溃(数据量过大,插入时长度限制等);既然如此那退而求其次,我们在取数据和插入数据都一批次一批次的操作,这样程序总不会罢工了吧!

- Hive

a.设定每次拿50000条数据,既然要分批次拿,使用开窗给每行数据加上序号;

b.将将序号取余50000并向下取整,保证每个序号有50000条数据;

c.将数据导入中间表供取数据时使用,取数据时根据序号取就ok了;

中间表建立方法参考

create table dwd_inters.tmp_dwd_events as

select

b.*,floor(b.rank/50000) flag

from(

select

a.*,row_number() over() rank

from

dwd_inters.dwd_events a)b

- mysql

与hive类似,hive过来的数据每次分批插入;这里因为插入语句的长度限制,每次向mysql插入5000条数据;

二、Dependency

- mysql-connector-java ==>MySQL连接

- mybatis ==>MyBatis包

- druid ==>阿里数据库连接池

- hive-jdbc ==>hive连接

<!-- mysql-connector-java -->

<dependency>

<groupId>mysql</groupId>

<artifactId>mysql-connector-java</artifactId>

<version>5.1.31</version>

</dependency>

<!-- https://mvnrepository.com/artifact/org.mybatis/mybatis -->

<dependency>

<groupId>org.mybatis</groupId>

<artifactId>mybatis</artifactId>

<version>3.4.6</version>

</dependency>

<!-- https://mvnrepository.com/artifact/com.alibaba/druid -->

<dependency>

<groupId>com.alibaba</groupId>

<artifactId>druid</artifactId>

<version>1.1.10</version>

</dependency>

<!-- https://mvnrepository.com/artifact/org.apache.hive/hive-jdbc -->

<dependency>

<groupId>org.apache.hive</groupId>

<artifactId>hive-jdbc</artifactId>

<version>1.1.0</version>

</dependency>

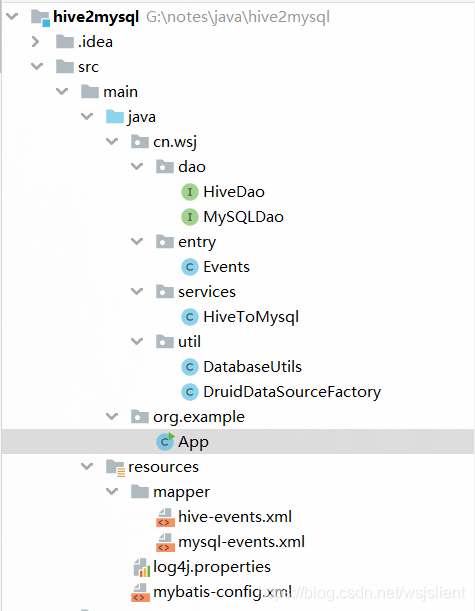

三、Code

包结构图:

3.1 实体类

根据对应的表字段写即可;

package cn.wsj.entry;

public class Events {

private String event_id;

private String userid;

private String starttime;

private String city;

private String states;

private String zip;

private String country;

private String lat;

private String lng;

private String features;

}

3.2 DAO

a. HiveDao

package cn.wsj.dao;

import cn.wsj.entry.Events;

import java.util.List;

public interface HiveDao {

public List<Events> showAll(int num);

}

b. MySQLDao

package cn.wsj.dao;

import cn.wsj.entry.Events;

import java.util.List;

public interface MySQLDao {

public void batchInsert(List<Events> events);

}

3.3 配置文件

a.mybatis-config.xml

<?xml version="1.0" encoding="UTF-8" ?>

<!DOCTYPE configuration PUBLIC

"-//mybatis.org//DTD Config 3.0//EN"

"http://mybatis.org/dtd/mybatis-3-config.dtd">

<configuration>

<typeAliases>

<typeAlias type="cn.wsj.util.DruidDataSourceFactory" alias="DRUID"></typeAlias>

<typeAlias type="cn.wsj.entry.Events" alias="event"></typeAlias>

</typeAliases>

<environments default="wsj">

<environment id="wsj2hive">

<transactionManager type="JDBC"></transactionManager>

<!-- 配置数据库连接信息 -->

<dataSource type="DRUID">

<property name="driver" value="org.apache.hive.jdbc.HiveDriver"/>

<property name="url" value="jdbc:hive2://192.168.237.160:10000/dwd_inters"/>

<property name="username" value=""/>

<property name="password" value=""/>

</dataSource>

</environment>

<environment id="wsj2mysql">

<transactionManager type="JDBC"></transactionManager>

<!-- 配置数据库连接信息 -->

<dataSource type="DRUID">

<property name="driver" value="com.mysql.jdbc.Driver"/>

<property name="url" value="jdbc:mysql://192.168.237.160:3306/ms_dm_inters"/>

<property name="username" value="root"/>

<property name="password" value="root"/>

</dataSource>

</environment>

</environments>

<!-- mybatis的mapper文件,每个xml配置文件对应一个接口 -->

<mappers>

<mapper resource="mapper/hive-events.xml"></mapper>

<mapper resource="mapper/mysql-events.xml"></mapper>

</mappers>

</configuration>

b. hive-events.xml

<?xml version="1.0" encoding="UTF-8" ?>

<!DOCTYPE mapper PUBLIC

"-//mybatis.org//DTD Mapper 3.0//EN"

"http://mybatis.org/dtd/mybatis-3-mapper.dtd">

<mapper namespace="cn.dao.HiveEventDao">

<select id="findAll" resultType="event" parameterType="int">

select

event_id,userid,starttime,city,states,zip,country,lat,lng,features

from

dwd_inters.tmp_dwd_events

where

flag=#{flag}

</select>

</mapper>

c. mysql-events.xml

<?xml version="1.0" encoding="UTF-8" ?>

<!DOCTYPE mapper PUBLIC

"-//mybatis.org//DTD Mapper 3.0//EN"

"http://mybatis.org/dtd/mybatis-3-mapper.dtd">

<mapper namespace="cn.dao.MySQLEventDAO">

<select id="findAll" resultType="event">

select * from dm_events_bak

</select>

<insert id="batchInsert" parameterType="java.util.List">

insert into dm_events_bak2 values

<foreach collection="list" item="eve" separator=",">

(#{eve.event_id},#{eve.userid},#{eve.starttime},#{eve.city},#{eve.states},#{eve.zip},#{eve.country},#{eve.lat},#{eve.lng},#{eve.features})

</foreach>

</insert>

</mapper>

3.4 工具类

a. DruidDataSourceFactory

package cn.wsj.util;

import com.alibaba.druid.pool.DruidDataSource;

import org.apache.ibatis.datasource.DataSourceFactory;

import javax.sql.DataSource;

import java.sql.SQLException;

import java.util.Properties;

public class DruidDataSourceFactory implements DataSourceFactory {

private Properties prop;

@Override

public void setProperties(Properties properties) {

this.prop =properties;

}

@Override

public DataSource getDataSource() {

DruidDataSource druid = new DruidDataSource();

druid.setDriverClassName(this.prop.getProperty("driver"));

druid.setUrl(this.prop.getProperty("url"));

druid.setUsername(this.prop.getProperty("username"));

druid.setPassword(this.prop.getProperty("password"));

try {

druid.init();

} catch (SQLException e) {

e.printStackTrace();

}

return druid;

}

}

b. DatabaseUtils

package cn.wsj.util;

import org.apache.ibatis.io.Resources;

import org.apache.ibatis.session.SqlSession;

import org.apache.ibatis.session.SqlSessionFactory;

import org.apache.ibatis.session.SqlSessionFactoryBuilder;

import java.io.IOException;

import java.io.InputStream;

public class DatabaseUtils {

private static final String configpath = "mybatis-config.xml";

public static SqlSession getSession(String db){

InputStream is = null;

try {

is = Resources.getResourceAsStream(configpath);

} catch (IOException e) {

e.printStackTrace();

}

//根据传入的数据库名建立不同的会话连接工厂对象

SqlSessionFactory factory = new SqlSessionFactoryBuilder().build(is,db.equals("mysql")?"wsj2mysql":"wsj2hive");

SqlSession session = factory.openSession();

return session;

}

public static void closeSession(SqlSession session){

session.close();

}

}

3.5 服务类

- HiveToMysql

package cn.wsj.services;

import cn.wsj.dao.HiveDao;

import cn.wsj.dao.MySQLDao;

import cn.wsj.entry.Events;

import cn.wsj.util.DatabaseUtils;

import org.apache.ibatis.session.SqlSession;

import java.util.ArrayList;

import java.util.List;

public class HiveToMysql {

static HiveDao hiveDao;

//这里将hive保持,避免重复创建

static {

SqlSession hiveSession = DatabaseUtils.getSession("hive");

hiveDao = hiveSession.getMapper(HiveDao.class);

}

public void loadData(){

//准备mysql链接

SqlSession session = DatabaseUtils.getSession("mysql");

MySQLDao mySQLDao = session.getMapper(MySQLDao.class);

for (int i = 0; i <63 ; i++) {

//hive取数据,一次50000条数据

//表中三百万多条数据,一次性拿取数据就会导致系统长时间等待

//原表数据经过处理,开窗取row_number(),之后将序号取余50000并向下取整,保证每个序号有50000条数据

//将数据导入中间表供导入时使用,虽然都走mapreduce但相比从原表直接拿更加快速

List<Events> events = hiveDao.showAll(i);

//mysql插入数据,一次5000条

List<Events> tmpData = new ArrayList<>();

for (int j = 1; j <= events.size(); j++) {

tmpData.add(events.get(j - 1));

if (j % 5000 == 0 || j == events.size()) {

mySQLDao.batchInsert(tmpData);

session.commit();

tmpData.clear();

}

}

}

}

}

3.6 测试类App

package org.example;

import cn.wsj.services.HiveToMysql;

public class App {

public static void main(String[] args) {

HiveToMysql htm = new HiveToMysql();

htm.loadData();

}

}

TIPS:这种方法只是一种想法的实现,并不见得速度很快;使用Sqoop是最快的,或者jdbc采用a批量插入ddBatch()的方法也可以提高一些速度(与mybatis的相比都是在向Hive取数据时耗费了大量时间,因为走的时MapReduce,而我们的Sqoop比较凶残,虽然也是MapReduce,但别人是文件对拷,速度快的飞起! )

PS:如果有写错或者写的不好的地方,欢迎各位大佬在评论区留下宝贵的意见或者建议,敬上!如果这篇博客对您有帮助,希望您可以顺手帮我点个赞!不胜感谢!

| 原创作者:wsjslient |

1万+

1万+

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?