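This article briefly analyzes a commonly used FFmpeg function: avformat_find_stream_info(). The function reads a portion of the audio/video data and derives stream information from it. avformat_find_stream_info() is declared in libavformat/avformat.h, as shown below.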

- int avformat_find_stream_info(AVFormatContext *ic, AVDictionary **options);

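A brief explanation of its parameters:

ic: the input AVFormatContext.

options: additional options. I have not studied them in depth, but the code below indexes them per stream (options[i]), so when non-NULL this is an array with one dictionary per stream, holding options for that stream's decoder.

On success the function returns a value greater than or equal to 0; on failure it returns a negative error code.

For a typical usage example, see:

The Simplest FFmpeg + SDL Video Player ver2 (using SDL 2.0)

PS: Because this function is fairly complex, I have only read part of its code. I will analyze it further when time permits.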

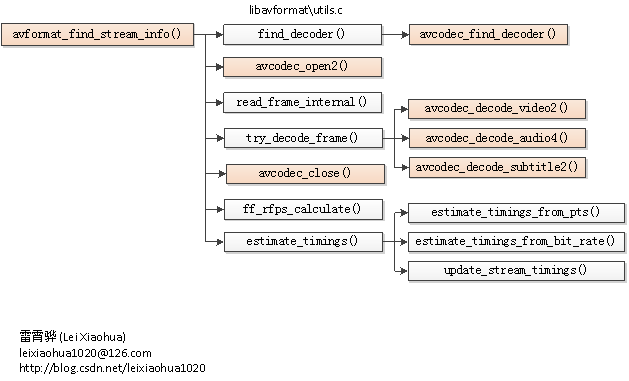

函数调用关系图

函数的调用关系如下图所示。

avformat_find_stream_info()

avformat_find_stream_info() is defined in libavformat/utils.c. Its code is fairly long and is shown below.

- int avformat_find_stream_info(AVFormatContext *ic, AVDictionary **options)

- {

- int i, count, ret = 0, j;

- int64_t read_size;

- AVStream *st;

- AVPacket pkt1, *pkt;

- int64_t old_offset = avio_tell(ic->pb);

-

- int orig_nb_streams = ic->nb_streams;

- int flush_codecs;

- int64_t max_analyze_duration = ic->max_analyze_duration2;

- int64_t probesize = ic->probesize2;

-

-

- if (!max_analyze_duration)

- max_analyze_duration = ic->max_analyze_duration;

- if (ic->probesize)

- probesize = ic->probesize;

- flush_codecs = probesize > 0;

-

-

- av_opt_set(ic, "skip_clear", "1", AV_OPT_SEARCH_CHILDREN);

-

-

- if (!max_analyze_duration) {

- if (!strcmp(ic->iformat->name, "flv") && !(ic->ctx_flags & AVFMTCTX_NOHEADER)) {

- max_analyze_duration = 10*AV_TIME_BASE;

- } else

- max_analyze_duration = 5*AV_TIME_BASE;

- }

-

-

- if (ic->pb)

- av_log(ic, AV_LOG_DEBUG, "Before avformat_find_stream_info() pos: %"PRId64" bytes read:%"PRId64" seeks:%d\n",

- avio_tell(ic->pb), ic->pb->bytes_read, ic->pb->seek_count);

-

-

- for (i = 0; i < ic->nb_streams; i++) {

- const AVCodec *codec;

- AVDictionary *thread_opt = NULL;

- st = ic->streams[i];

-

-

- if (st->codec->codec_type == AVMEDIA_TYPE_VIDEO ||

- st->codec->codec_type == AVMEDIA_TYPE_SUBTITLE) {

-

-

- if (!st->codec->time_base.num)

- st->codec->time_base = st->time_base;

- }

-

- if (!st->parser && !(ic->flags & AVFMT_FLAG_NOPARSE)) {

- st->parser = av_parser_init(st->codec->codec_id);

- if (st->parser) {

- if (st->need_parsing == AVSTREAM_PARSE_HEADERS) {

- st->parser->flags |= PARSER_FLAG_COMPLETE_FRAMES;

- } else if (st->need_parsing == AVSTREAM_PARSE_FULL_RAW) {

- st->parser->flags |= PARSER_FLAG_USE_CODEC_TS;

- }

- } else if (st->need_parsing) {

- av_log(ic, AV_LOG_VERBOSE, "parser not found for codec "

- "%s, packets or times may be invalid.\n",

- avcodec_get_name(st->codec->codec_id));

- }

- }

- codec = find_decoder(ic, st, st->codec->codec_id);

-

-

-

-

- av_dict_set(options ? &options[i] : &thread_opt, "threads", "1", 0);

-

-

- if (ic->codec_whitelist)

- av_dict_set(options ? &options[i] : &thread_opt, "codec_whitelist", ic->codec_whitelist, 0);

-

-

-

- if (st->codec->codec_type == AVMEDIA_TYPE_SUBTITLE

- && codec && !st->codec->codec) {

- if (avcodec_open2(st->codec, codec, options ? &options[i] : &thread_opt) < 0)

- av_log(ic, AV_LOG_WARNING,

- "Failed to open codec in av_find_stream_info\n");

- }

-

-

-

- if (!has_codec_parameters(st, NULL) && st->request_probe <= 0) {

- if (codec && !st->codec->codec)

- if (avcodec_open2(st->codec, codec, options ? &options[i] : &thread_opt) < 0)

- av_log(ic, AV_LOG_WARNING,

- "Failed to open codec in av_find_stream_info\n");

- }

- if (!options)

- av_dict_free(&thread_opt);

- }

-

-

- for (i = 0; i < ic->nb_streams; i++) {

- #if FF_API_R_FRAME_RATE

- ic->streams[i]->info->last_dts = AV_NOPTS_VALUE;

- #endif

- ic->streams[i]->info->fps_first_dts = AV_NOPTS_VALUE;

- ic->streams[i]->info->fps_last_dts = AV_NOPTS_VALUE;

- }

-

-

- count = 0;

- read_size = 0;

- for (;;) {

- if (ff_check_interrupt(&ic->interrupt_callback)) {

- ret = AVERROR_EXIT;

- av_log(ic, AV_LOG_DEBUG, "interrupted\n");

- break;

- }

-

-

-

- for (i = 0; i < ic->nb_streams; i++) {

- int fps_analyze_framecount = 20;

-

-

- st = ic->streams[i];

- if (!has_codec_parameters(st, NULL))

- break;

-

-

-

- if (av_q2d(st->time_base) > 0.0005)

- fps_analyze_framecount *= 2;

- if (!tb_unreliable(st->codec))

- fps_analyze_framecount = 0;

- if (ic->fps_probe_size >= 0)

- fps_analyze_framecount = ic->fps_probe_size;

- if (st->disposition & AV_DISPOSITION_ATTACHED_PIC)

- fps_analyze_framecount = 0;

-

- if (!(st->r_frame_rate.num && st->avg_frame_rate.num) &&

- st->info->duration_count < fps_analyze_framecount &&

- st->codec->codec_type == AVMEDIA_TYPE_VIDEO)

- break;

- if (st->parser && st->parser->parser->split &&

- !st->codec->extradata)

- break;

- if (st->first_dts == AV_NOPTS_VALUE &&

- !(ic->iformat->flags & AVFMT_NOTIMESTAMPS) &&

- st->codec_info_nb_frames < ic->max_ts_probe &&

- (st->codec->codec_type == AVMEDIA_TYPE_VIDEO ||

- st->codec->codec_type == AVMEDIA_TYPE_AUDIO))

- break;

- }

- if (i == ic->nb_streams) {

-

-

- if (!(ic->ctx_flags & AVFMTCTX_NOHEADER)) {

-

- ret = count;

- av_log(ic, AV_LOG_DEBUG, "All info found\n");

- flush_codecs = 0;

- break;

- }

- }

-

- if (read_size >= probesize) {

- ret = count;

- av_log(ic, AV_LOG_DEBUG,

- "Probe buffer size limit of %"PRId64" bytes reached\n", probesize);

- for (i = 0; i < ic->nb_streams; i++)

- if (!ic->streams[i]->r_frame_rate.num &&

- ic->streams[i]->info->duration_count <= 1 &&

- ic->streams[i]->codec->codec_type == AVMEDIA_TYPE_VIDEO &&

- strcmp(ic->iformat->name, "image2"))

- av_log(ic, AV_LOG_WARNING,

- "Stream #%d: not enough frames to estimate rate; "

- "consider increasing probesize\n", i);

- break;

- }

-

-

-

-

- ret = read_frame_internal(ic, &pkt1);

- if (ret == AVERROR(EAGAIN))

- continue;

-

-

- if (ret < 0) {

-

- break;

- }

-

-

- if (ic->flags & AVFMT_FLAG_NOBUFFER)

- free_packet_buffer(&ic->packet_buffer, &ic->packet_buffer_end);

- {

- pkt = add_to_pktbuf(&ic->packet_buffer, &pkt1,

- &ic->packet_buffer_end);

- if (!pkt) {

- ret = AVERROR(ENOMEM);

- goto find_stream_info_err;

- }

- if ((ret = av_dup_packet(pkt)) < 0)

- goto find_stream_info_err;

- }

-

-

- st = ic->streams[pkt->stream_index];

- if (!(st->disposition & AV_DISPOSITION_ATTACHED_PIC))

- read_size += pkt->size;

-

-

- if (pkt->dts != AV_NOPTS_VALUE && st->codec_info_nb_frames > 1) {

-

- if (st->info->fps_last_dts != AV_NOPTS_VALUE &&

- st->info->fps_last_dts >= pkt->dts) {

- av_log(ic, AV_LOG_DEBUG,

- "Non-increasing DTS in stream %d: packet %d with DTS "

- "%"PRId64", packet %d with DTS %"PRId64"\n",

- st->index, st->info->fps_last_dts_idx,

- st->info->fps_last_dts, st->codec_info_nb_frames,

- pkt->dts);

- st->info->fps_first_dts =

- st->info->fps_last_dts = AV_NOPTS_VALUE;

- }

-

-

-

- if (st->info->fps_last_dts != AV_NOPTS_VALUE &&

- st->info->fps_last_dts_idx > st->info->fps_first_dts_idx &&

- (pkt->dts - st->info->fps_last_dts) / 1000 >

- (st->info->fps_last_dts - st->info->fps_first_dts) /

- (st->info->fps_last_dts_idx - st->info->fps_first_dts_idx)) {

- av_log(ic, AV_LOG_WARNING,

- "DTS discontinuity in stream %d: packet %d with DTS "

- "%"PRId64", packet %d with DTS %"PRId64"\n",

- st->index, st->info->fps_last_dts_idx,

- st->info->fps_last_dts, st->codec_info_nb_frames,

- pkt->dts);

- st->info->fps_first_dts =

- st->info->fps_last_dts = AV_NOPTS_VALUE;

- }

-

-

-

- if (st->info->fps_first_dts == AV_NOPTS_VALUE) {

- st->info->fps_first_dts = pkt->dts;

- st->info->fps_first_dts_idx = st->codec_info_nb_frames;

- }

- st->info->fps_last_dts = pkt->dts;

- st->info->fps_last_dts_idx = st->codec_info_nb_frames;

- }

- if (st->codec_info_nb_frames>1) {

- int64_t t = 0;

-

-

- if (st->time_base.den > 0)

- t = av_rescale_q(st->info->codec_info_duration, st->time_base, AV_TIME_BASE_Q);

- if (st->avg_frame_rate.num > 0)

- t = FFMAX(t, av_rescale_q(st->codec_info_nb_frames, av_inv_q(st->avg_frame_rate), AV_TIME_BASE_Q));

-

-

- if ( t == 0

- && st->codec_info_nb_frames>30

- && st->info->fps_first_dts != AV_NOPTS_VALUE

- && st->info->fps_last_dts != AV_NOPTS_VALUE)

- t = FFMAX(t, av_rescale_q(st->info->fps_last_dts - st->info->fps_first_dts, st->time_base, AV_TIME_BASE_Q));

-

-

- if (t >= max_analyze_duration) {

- av_log(ic, AV_LOG_VERBOSE, "max_analyze_duration %"PRId64" reached at %"PRId64" microseconds\n",

- max_analyze_duration,

- t);

- if (ic->flags & AVFMT_FLAG_NOBUFFER)

- av_packet_unref(pkt);

- break;

- }

- if (pkt->duration) {

- st->info->codec_info_duration += pkt->duration;

- st->info->codec_info_duration_fields += st->parser && st->need_parsing && st->codec->ticks_per_frame ==2 ? st->parser->repeat_pict + 1 : 2;

- }

- }

- #if FF_API_R_FRAME_RATE

- if (st->codec->codec_type == AVMEDIA_TYPE_VIDEO)

- ff_rfps_add_frame(ic, st, pkt->dts);

- #endif

- if (st->parser && st->parser->parser->split && !st->codec->extradata) {

- int i = st->parser->parser->split(st->codec, pkt->data, pkt->size);

- if (i > 0 && i < FF_MAX_EXTRADATA_SIZE) {

- if (ff_alloc_extradata(st->codec, i))

- return AVERROR(ENOMEM);

- memcpy(st->codec->extradata, pkt->data,

- st->codec->extradata_size);

- }

- }

-

-

-

-

-

-

-

-

-

-

-

- try_decode_frame(ic, st, pkt,

- (options && i < orig_nb_streams) ? &options[i] : NULL);

-

-

- if (ic->flags & AVFMT_FLAG_NOBUFFER)

- av_packet_unref(pkt);

-

-

- st->codec_info_nb_frames++;

- count++;

- }

-

-

- if (flush_codecs) {

- AVPacket empty_pkt = { 0 };

- int err = 0;

- av_init_packet(&empty_pkt);

-

-

- for (i = 0; i < ic->nb_streams; i++) {

-

-

- st = ic->streams[i];

-

-

-

- if (st->info->found_decoder == 1) {

- do {

- err = try_decode_frame(ic, st, &empty_pkt,

- (options && i < orig_nb_streams)

- ? &options[i] : NULL);

- } while (err > 0 && !has_codec_parameters(st, NULL));

-

-

- if (err < 0) {

- av_log(ic, AV_LOG_INFO,

- "decoding for stream %d failed\n", st->index);

- }

- }

- }

- }

-

-

-

- for (i = 0; i < ic->nb_streams; i++) {

- st = ic->streams[i];

- avcodec_close(st->codec);

- }

-

-

- ff_rfps_calculate(ic);

-

-

- for (i = 0; i < ic->nb_streams; i++) {

- st = ic->streams[i];

- if (st->codec->codec_type == AVMEDIA_TYPE_VIDEO) {

- if (st->codec->codec_id == AV_CODEC_ID_RAWVIDEO && !st->codec->codec_tag && !st->codec->bits_per_coded_sample) {

- uint32_t tag= avcodec_pix_fmt_to_codec_tag(st->codec->pix_fmt);

- if (avpriv_find_pix_fmt(avpriv_get_raw_pix_fmt_tags(), tag) == st->codec->pix_fmt)

- st->codec->codec_tag= tag;

- }

-

-

-

- if (st->info->codec_info_duration_fields &&

- !st->avg_frame_rate.num &&

- st->info->codec_info_duration) {

- int best_fps = 0;

- double best_error = 0.01;

-

-

- if (st->info->codec_info_duration >= INT64_MAX / st->time_base.num / 2||

- st->info->codec_info_duration_fields >= INT64_MAX / st->time_base.den ||

- st->info->codec_info_duration < 0)

- continue;

- av_reduce(&st->avg_frame_rate.num, &st->avg_frame_rate.den,

- st->info->codec_info_duration_fields * (int64_t) st->time_base.den,

- st->info->codec_info_duration * 2 * (int64_t) st->time_base.num, 60000);

-

-

-

-

- for (j = 0; j < MAX_STD_TIMEBASES; j++) {

- AVRational std_fps = { get_std_framerate(j), 12 * 1001 };

- double error = fabs(av_q2d(st->avg_frame_rate) /

- av_q2d(std_fps) - 1);

-

-

- if (error < best_error) {

- best_error = error;

- best_fps = std_fps.num;

- }

- }

- if (best_fps)

- av_reduce(&st->avg_frame_rate.num, &st->avg_frame_rate.den,

- best_fps, 12 * 1001, INT_MAX);

- }

-

-

- if (!st->r_frame_rate.num) {

- if ( st->codec->time_base.den * (int64_t) st->time_base.num

- <= st->codec->time_base.num * st->codec->ticks_per_frame * (int64_t) st->time_base.den) {

- st->r_frame_rate.num = st->codec->time_base.den;

- st->r_frame_rate.den = st->codec->time_base.num * st->codec->ticks_per_frame;

- } else {

- st->r_frame_rate.num = st->time_base.den;

- st->r_frame_rate.den = st->time_base.num;

- }

- }

- } else if (st->codec->codec_type == AVMEDIA_TYPE_AUDIO) {

- if (!st->codec->bits_per_coded_sample)

- st->codec->bits_per_coded_sample =

- av_get_bits_per_sample(st->codec->codec_id);

-

- switch (st->codec->audio_service_type) {

- case AV_AUDIO_SERVICE_TYPE_EFFECTS:

- st->disposition = AV_DISPOSITION_CLEAN_EFFECTS;

- break;

- case AV_AUDIO_SERVICE_TYPE_VISUALLY_IMPAIRED:

- st->disposition = AV_DISPOSITION_VISUAL_IMPAIRED;

- break;

- case AV_AUDIO_SERVICE_TYPE_HEARING_IMPAIRED:

- st->disposition = AV_DISPOSITION_HEARING_IMPAIRED;

- break;

- case AV_AUDIO_SERVICE_TYPE_COMMENTARY:

- st->disposition = AV_DISPOSITION_COMMENT;

- break;

- case AV_AUDIO_SERVICE_TYPE_KARAOKE:

- st->disposition = AV_DISPOSITION_KARAOKE;

- break;

- }

- }

- }

-

-

- if (probesize)

- estimate_timings(ic, old_offset);

-

-

- av_opt_set(ic, "skip_clear", "0", AV_OPT_SEARCH_CHILDREN);

-

-

- if (ret >= 0 && ic->nb_streams)

-

- ret = -1;

- for (i = 0; i < ic->nb_streams; i++) {

- const char *errmsg;

- st = ic->streams[i];

- if (!has_codec_parameters(st, &errmsg)) {

- char buf[256];

- avcodec_string(buf, sizeof(buf), st->codec, 0);

- av_log(ic, AV_LOG_WARNING,

- "Could not find codec parameters for stream %d (%s): %s\n"

- "Consider increasing the value for the 'analyzeduration' and 'probesize' options\n",

- i, buf, errmsg);

- } else {

- ret = 0;

- }

- }

-

-

- compute_chapters_end(ic);

-

-

- find_stream_info_err:

- for (i = 0; i < ic->nb_streams; i++) {

- st = ic->streams[i];

- if (ic->streams[i]->codec->codec_type != AVMEDIA_TYPE_AUDIO)

- ic->streams[i]->codec->thread_count = 0;

- if (st->info)

- av_freep(&st->info->duration_error);

- av_freep(&ic->streams[i]->info);

- }

- if (ic->pb)

- av_log(ic, AV_LOG_DEBUG, "After avformat_find_stream_info() pos: %"PRId64" bytes read:%"PRId64" seeks:%d frames:%d\n",

- avio_tell(ic->pb), ic->pb->bytes_read, ic->pb->seek_count, count);

- return ret;

- }

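Because avformat_find_stream_info() is long, it is hard to analyze in full, so only its key points are recorded here. The function mainly fills in the AVStream structure of each media stream (audio/video). Skimming the code shows that it already performs decoder lookup, decoder opening, reading of audio/video frames, and decoding of those frames. In other words, the function effectively walks through the entire decoding pipeline. Apart from assigning member variables, its key steps are the following.

1. Find the decoder: find_decoder()

2. Open the decoder: avcodec_open2()

3. Read one complete frame of compressed data: read_frame_internal()

Note: av_read_frame() internally calls read_frame_internal().

4. Decode some of the compressed data: try_decode_frame()

The sections below take a brief look at the code of several key functions in this flow.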

find_decoder()

find_decoder() finds a suitable decoder. It is defined as follows.

- static const AVCodec *find_decoder(AVFormatContext *s, AVStream *st, enum AVCodecID codec_id)

- {

- if (st->codec->codec)

- return st->codec->codec;

-

-

- switch (st->codec->codec_type) {

- case AVMEDIA_TYPE_VIDEO:

- if (s->video_codec) return s->video_codec;

- break;

- case AVMEDIA_TYPE_AUDIO:

- if (s->audio_codec) return s->audio_codec;

- break;

- case AVMEDIA_TYPE_SUBTITLE:

- if (s->subtitle_codec) return s->subtitle_codec;

- break;

- }

-

-

- return avcodec_find_decoder(codec_id);

- }

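As the code shows, if the given AVStream already has a decoder attached, the function returns it directly. Otherwise it checks whether a decoder has been forced for that media type on the AVFormatContext (video_codec, audio_codec, or subtitle_codec) and returns it if set. Only when neither applies does it call avcodec_find_decoder() to look up a decoder by codec ID. avcodec_find_decoder() is an FFmpeg API function and is not analyzed in detail here.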
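The lookup order in find_decoder() can be illustrated without linking against FFmpeg. The sketch below is a simplified model, not FFmpeg code: the types `codec`, `stream_model`, and `format_model`, the function `pick_decoder`, and the registry `lookup_by_id` with its made-up IDs are all hypothetical stand-ins for AVCodec, AVStream, AVFormatContext, find_decoder(), and avcodec_find_decoder().

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical stand-ins for FFmpeg's AVCodec, AVStream and AVFormatContext. */
enum media_type { MEDIA_VIDEO, MEDIA_AUDIO, MEDIA_SUBTITLE, MEDIA_DATA };

struct codec { const char *name; };

struct stream_model {
    enum media_type type;
    const struct codec *attached;        /* models st->codec->codec */
};

struct format_model {
    const struct codec *forced_video;    /* models s->video_codec */
    const struct codec *forced_audio;    /* models s->audio_codec */
    const struct codec *forced_subtitle; /* models s->subtitle_codec */
};

/* Hypothetical registry lookup standing in for avcodec_find_decoder().
 * The IDs 1 and 2 are made up for this sketch. */
const struct codec *lookup_by_id(int codec_id)
{
    static const struct codec known[] = { { "h264" }, { "aac" } };
    if (codec_id == 1) return &known[0];
    if (codec_id == 2) return &known[1];
    return NULL;
}

/* Same priority order as find_decoder():
 * 1. decoder already attached to the stream,
 * 2. decoder forced on the format context for this media type,
 * 3. registry lookup by codec ID. */
const struct codec *pick_decoder(const struct format_model *s,
                                 const struct stream_model *st,
                                 int codec_id)
{
    assert(s != NULL && st != NULL);

    if (st->attached)
        return st->attached;

    switch (st->type) {
    case MEDIA_VIDEO:
        if (s->forced_video) return s->forced_video;
        break;
    case MEDIA_AUDIO:
        if (s->forced_audio) return s->forced_audio;
        break;
    case MEDIA_SUBTITLE:
        if (s->forced_subtitle) return s->forced_subtitle;
        break;
    default:
        break;
    }
    return lookup_by_id(codec_id);
}
```

With an empty `format_model` and a stream that has no attached decoder, `pick_decoder` falls through to the registry. Setting `forced_video` overrides the registry, and an attached decoder overrides both.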

read_frame_internal()

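read_frame_internal() reads one frame of compressed bitstream data. The FFmpeg API function av_read_frame() calls read_frame_internal() internally. For background, see the article:

A Brief Analysis of the FFmpeg Source Code: av_read_frame()

read_frame_internal() and av_read_frame() can therefore be regarded as essentially equivalent in function.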

try_decode_frame()

The purpose of try_decode_frame() can be read literally from its name: "try to decode some frames". It is defined as follows.

-

- static int try_decode_frame(AVFormatContext *s, AVStream *st, AVPacket *avpkt,

- AVDictionary **options)

- {

- const AVCodec *codec;

- int got_picture = 1, ret = 0;

- AVFrame *frame = av_frame_alloc();

- AVSubtitle subtitle;

- AVPacket pkt = *avpkt;

-

-

- if (!frame)

- return AVERROR(ENOMEM);

-

-

- if (!avcodec_is_open(st->codec) &&

- st->info->found_decoder <= 0 &&

- (st->codec->codec_id != -st->info->found_decoder || !st->codec->codec_id)) {

- AVDictionary *thread_opt = NULL;

-

-

- codec = find_decoder(s, st, st->codec->codec_id);

-

-

- if (!codec) {

- st->info->found_decoder = -st->codec->codec_id;

- ret = -1;

- goto fail;

- }

-

-

-

-

- av_dict_set(options ? options : &thread_opt, "threads", "1", 0);

- if (s->codec_whitelist)

- av_dict_set(options ? options : &thread_opt, "codec_whitelist", s->codec_whitelist, 0);

- ret = avcodec_open2(st->codec, codec, options ? options : &thread_opt);

- if (!options)

- av_dict_free(&thread_opt);

- if (ret < 0) {

- st->info->found_decoder = -st->codec->codec_id;

- goto fail;

- }

- st->info->found_decoder = 1;

- } else if (!st->info->found_decoder)

- st->info->found_decoder = 1;

-

-

- if (st->info->found_decoder < 0) {

- ret = -1;

- goto fail;

- }

-

-

- while ((pkt.size > 0 || (!pkt.data && got_picture)) &&

- ret >= 0 &&

- (!has_codec_parameters(st, NULL) || !has_decode_delay_been_guessed(st) ||

- (!st->codec_info_nb_frames &&

- st->codec->codec->capabilities & CODEC_CAP_CHANNEL_CONF))) {

- got_picture = 0;

- switch (st->codec->codec_type) {

- case AVMEDIA_TYPE_VIDEO:

- ret = avcodec_decode_video2(st->codec, frame,

- &got_picture, &pkt);

- break;

- case AVMEDIA_TYPE_AUDIO:

- ret = avcodec_decode_audio4(st->codec, frame, &got_picture, &pkt);

- break;

- case AVMEDIA_TYPE_SUBTITLE:

- ret = avcodec_decode_subtitle2(st->codec, &subtitle,

- &got_picture, &pkt);

- ret = pkt.size;

- break;

- default:

- break;

- }

- if (ret >= 0) {

- if (got_picture)

- st->nb_decoded_frames++;

- pkt.data += ret;

- pkt.size -= ret;

- ret = got_picture;

- }

- }

-

-

- if (!pkt.data && !got_picture)

- ret = -1;

-

-

- fail:

- av_frame_free(&frame);

- return ret;

- }

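As the definition of try_decode_frame() shows, the function first checks whether the stream's decoder has been opened and, if not, opens it. It then calls a different decoding function depending on the stream type: avcodec_decode_video2() for video, avcodec_decode_audio4() for audio, and avcodec_decode_subtitle2() for subtitles. The decoding loop keeps running as long as the while() condition holds: there is still packet data to consume (or, for an empty flush packet, the previous call still produced a frame), no error has occurred, and the stream's parameters are still incomplete.

The while() condition includes has_codec_parameters(), which checks whether the required members of the AVStream have been set. This function is used in several places inside avformat_find_stream_info(). A brief look at it follows.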

has_codec_parameters()

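has_codec_parameters() checks whether the essential codec parameters of an AVStream have all been set. When one is missing it returns 0 and, if errmsg_ptr is non-NULL, reports the reason through it. It is defined as follows.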

- static int has_codec_parameters(AVStream *st, const char **errmsg_ptr)

- {

- AVCodecContext *avctx = st->codec;

-

-

- #define FAIL(errmsg) do { \

- if (errmsg_ptr) \

- *errmsg_ptr = errmsg; \

- return 0; \

- } while (0)

-

-

- if ( avctx->codec_id == AV_CODEC_ID_NONE

- && avctx->codec_type != AVMEDIA_TYPE_DATA)

- FAIL("unknown codec");

- switch (avctx->codec_type) {

- case AVMEDIA_TYPE_AUDIO:

- if (!avctx->frame_size && determinable_frame_size(avctx))

- FAIL("unspecified frame size");

- if (st->info->found_decoder >= 0 &&

- avctx->sample_fmt == AV_SAMPLE_FMT_NONE)

- FAIL("unspecified sample format");

- if (!avctx->sample_rate)

- FAIL("unspecified sample rate");

- if (!avctx->channels)

- FAIL("unspecified number of channels");

- if (st->info->found_decoder >= 0 && !st->nb_decoded_frames && avctx->codec_id == AV_CODEC_ID_DTS)

- FAIL("no decodable DTS frames");

- break;

- case AVMEDIA_TYPE_VIDEO:

- if (!avctx->width)

- FAIL("unspecified size");

- if (st->info->found_decoder >= 0 && avctx->pix_fmt == AV_PIX_FMT_NONE)

- FAIL("unspecified pixel format");

- if (st->codec->codec_id == AV_CODEC_ID_RV30 || st->codec->codec_id == AV_CODEC_ID_RV40)

- if (!st->sample_aspect_ratio.num && !st->codec->sample_aspect_ratio.num && !st->codec_info_nb_frames)

- FAIL("no frame in rv30/40 and no sar");

- break;

- case AVMEDIA_TYPE_SUBTITLE:

- if (avctx->codec_id == AV_CODEC_ID_HDMV_PGS_SUBTITLE && !avctx->width)

- FAIL("unspecified size");

- break;

- case AVMEDIA_TYPE_DATA:

- if (avctx->codec_id == AV_CODEC_ID_NONE) return 1;

- }

-

-

- return 1;

- }

estimate_timings()

estimate_timings() is called near the end of avformat_find_stream_info() and estimates the duration of the AVFormatContext and of each AVStream. Its code is shown below.

- static void estimate_timings(AVFormatContext *ic, int64_t old_offset)

- {

- int64_t file_size;

-

-

-

- if (ic->iformat->flags & AVFMT_NOFILE) {

- file_size = 0;

- } else {

- file_size = avio_size(ic->pb);

- file_size = FFMAX(0, file_size);

- }

-

-

- if ((!strcmp(ic->iformat->name, "mpeg") ||

- !strcmp(ic->iformat->name, "mpegts")) &&

- file_size && ic->pb->seekable) {

-

- estimate_timings_from_pts(ic, old_offset);

- ic->duration_estimation_method = AVFMT_DURATION_FROM_PTS;

- } else if (has_duration(ic)) {

-

-

- fill_all_stream_timings(ic);

- ic->duration_estimation_method = AVFMT_DURATION_FROM_STREAM;

- } else {

-

- estimate_timings_from_bit_rate(ic);

- ic->duration_estimation_method = AVFMT_DURATION_FROM_BITRATE;

- }

- update_stream_timings(ic);

-

-

- {

- int i;

- AVStream av_unused *st;

- for (i = 0; i < ic->nb_streams; i++) {

- st = ic->streams[i];

- av_dlog(ic, "%d: start_time: %0.3f duration: %0.3f\n", i,

- (double) st->start_time / AV_TIME_BASE,

- (double) st->duration / AV_TIME_BASE);

- }

- av_dlog(ic,

- "stream: start_time: %0.3f duration: %0.3f bitrate=%d kb/s\n",

- (double) ic->start_time / AV_TIME_BASE,

- (double) ic->duration / AV_TIME_BASE,

- ic->bit_rate / 1000);

- }

- }

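The code of estimate_timings() shows three estimation methods:

(1) From PTS (presentation timestamps). This method calls estimate_timings_from_pts(). The basic idea is to read the PTS of the AVPacket at the end of the stream and the PTS of the AVPacket at the start, and subtract the two to get the duration. It is used only for the mpeg and mpegts formats, and only when the file size is known and the input is seekable.

(2) From known stream durations. This method calls fill_all_stream_timings(). I have not read its code closely, but judging from the function's comment, when some streams have duration information it is copied to the other streams.

(3) From the bitrate. This method calls estimate_timings_from_bit_rate(). The basic idea is to take the total file size and the overall bitrate and divide the former by the latter to get the duration.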
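The branch order in estimate_timings() can be condensed into a small decision function. This is a sketch that mirrors only the if/else chain in the listing above. The enum `duration_method` and the function `choose_duration_method` are hypothetical names, and the three flags passed in stand for the checks on file_size, ic->pb->seekable, and has_duration(ic).

```c
#include <assert.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical labels for the three estimation paths. */
enum duration_method { FROM_PTS, FROM_STREAM, FROM_BITRATE };

/* Mirrors the if / else-if / else chain of estimate_timings():
 * PTS-based estimation is tried first, but only for "mpeg" and "mpegts"
 * inputs with a known file size and a seekable I/O context. */
enum duration_method choose_duration_method(const char *format_name,
                                            int64_t file_size,
                                            int seekable,
                                            int has_duration)
{
    assert(format_name != NULL);

    if ((!strcmp(format_name, "mpeg") || !strcmp(format_name, "mpegts")) &&
        file_size > 0 && seekable)
        return FROM_PTS;

    if (has_duration)
        return FROM_STREAM;

    return FROM_BITRATE;
}
```

Note that a non-seekable mpegts input (for example a live stream) never takes the PTS path, so it falls back to stream durations or, failing that, to the bitrate estimate.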

estimate_timings_from_bit_rate()

The code of estimate_timings_from_bit_rate(), the simplest of the methods above, is shown here.

- static void estimate_timings_from_bit_rate(AVFormatContext *ic)

- {

- int64_t filesize, duration;

- int i, show_warning = 0;

- AVStream *st;

-

-

-

- if (ic->bit_rate <= 0) {

- int bit_rate = 0;

- for (i = 0; i < ic->nb_streams; i++) {

- st = ic->streams[i];

- if (st->codec->bit_rate > 0) {

- if (INT_MAX - st->codec->bit_rate < bit_rate) {

- bit_rate = 0;

- break;

- }

- bit_rate += st->codec->bit_rate;

- }

- }

- ic->bit_rate = bit_rate;

- }

-

-

-

- if (ic->duration == AV_NOPTS_VALUE &&

- ic->bit_rate != 0) {

- filesize = ic->pb ? avio_size(ic->pb) : 0;

- if (filesize > ic->data_offset) {

- filesize -= ic->data_offset;

- for (i = 0; i < ic->nb_streams; i++) {

- st = ic->streams[i];

- if ( st->time_base.num <= INT64_MAX / ic->bit_rate

- && st->duration == AV_NOPTS_VALUE) {

- duration = av_rescale(8 * filesize, st->time_base.den,

- ic->bit_rate *

- (int64_t) st->time_base.num);

- st->duration = duration;

- show_warning = 1;

- }

- }

- }

- }

- if (show_warning)

- av_log(ic, AV_LOG_WARNING,

- "Estimating duration from bitrate, this may be inaccurate\n");

- }

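As the code shows, the function does two things:

(1) If the AVFormatContext has no bit_rate, the bit_rate values of all AVStreams are added up and used as the AVFormatContext's bit_rate.

(2) The file size (minus data_offset, the position where the packet data starts) is divided by the bitrate to obtain the duration. Specifically:

AVStream->duration=(filesize*8/bit_rate)/time_base

PS:

1) filesize is multiplied by 8 to convert bytes to bits.

2) The computation is carried out by av_rescale(). x=av_rescale(a,b,c) means x=a*b/c.

3) The division by time_base is needed because the duration in AVStream is expressed in units of the stream's time_base. Note that this differs from the duration in AVFormatContext, which is expressed in units of 1/AV_TIME_BASE seconds (AV_TIME_BASE is fixed at 1000000), i.e. microseconds.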
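To make the formula concrete, here is a small worked example in plain C. It is a sketch under stated assumptions: `rescale_nearest` is a hypothetical simplified replacement for av_rescale() (round to nearest, no overflow protection), and `duration_from_bitrate` and `ticks_to_microseconds` are illustrative helper names that do not exist in FFmpeg.

```c
#include <assert.h>
#include <stdint.h>

/* Simplified stand-in for av_rescale(): a * b / c rounded to nearest.
 * Assumes non-negative inputs and that a * b fits in 64 bits. */
static int64_t rescale_nearest(int64_t a, int64_t b, int64_t c)
{
    assert(a >= 0 && b >= 0 && c > 0);
    return (a * b + c / 2) / c;
}

/* Duration in time_base ticks, following
 * duration = 8 * (filesize - data_offset) * tb_den / (bit_rate * tb_num).
 * Returns -1 (a sentinel chosen for this sketch) when no payload exists. */
int64_t duration_from_bitrate(int64_t filesize_bytes, int64_t data_offset,
                              int64_t bit_rate, int tb_num, int tb_den)
{
    assert(bit_rate > 0 && tb_num > 0 && tb_den > 0);
    if (filesize_bytes <= data_offset)
        return -1;
    return rescale_nearest(8 * (filesize_bytes - data_offset), tb_den,
                           bit_rate * (int64_t)tb_num);
}

/* Convert stream ticks to microseconds, the unit used by
 * AVFormatContext->duration (AV_TIME_BASE = 1000000). */
int64_t ticks_to_microseconds(int64_t ticks, int tb_num, int tb_den)
{
    assert(ticks >= 0 && tb_num > 0 && tb_den > 0);
    return rescale_nearest(ticks * tb_num, 1000000, tb_den);
}
```

For a 1,000,000-byte file at 800,000 bit/s, the payload is 8,000,000 bits, which is 10 seconds. With a time_base of 1/1000 that is 10000 ticks, and with a time_base of 1/90000 it is 900000 ticks. Both convert to 10,000,000 microseconds.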

This concludes the analysis of the main functions involved in avformat_find_stream_info().

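Demuxing is done with FFmpeg.

Reposted from: http://jiya.io/archives/vlc_optimize_1.html

0x00 Preliminaries

Version: ffmpeg 2.2.0

File: vlc src/module/demux/avformat/demux.c

Function: OpenDemux

0x01 Background

FFmpeg's avformat_open_input and avformat_find_stream_info open a stream and analyze its stream information, respectively. When the initial information is insufficient, avformat_find_stream_info has to call read_frame_internal internally to read stream data, analyze it, and then fill in the core data structure AVFormatContext. Because packets have to be read, avformat_find_stream_info can introduce significant latency. There are several ways to reduce it:

- Set the probesize member of AVFormatContext to limit the maximum amount of data read inside avformat_find_stream_info: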

AVFormatContext *fmt_ctx = NULL;

ret = avformat_open_input(&fmt_ctx, url, input_fmt, NULL);

fmt_ctx->probesize = 4096;

ret = avformat_find_stream_info(fmt_ctx, NULL);

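This approach has a drawback: when the probe size is set too small, avformat_find_stream_info may stop after reading a single frame, and in some cases that is not enough data to analyze the stream.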
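The effect of a small probesize can be seen in a simplified model of the read loop. The sketch below only reproduces the `read_size >= probesize` check that runs before each read. It ignores the other exits of the real loop ("all info found", max_analyze_duration, read errors), and `packets_read_before_limit` is a hypothetical name.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Counts how many packets the probing loop would read before the
 * probesize check stops it. The check happens before each read, so the
 * packet that crosses the limit is still read in full. */
size_t packets_read_before_limit(const int *packet_sizes, size_t n,
                                 int64_t probesize)
{
    int64_t read_size = 0;
    size_t count = 0;

    assert(packet_sizes != NULL || n == 0);

    for (;;) {
        if (read_size >= probesize)   /* models "Probe buffer size limit reached" */
            break;
        if (count == n)               /* models end of input */
            break;
        read_size += packet_sizes[count];
        count++;
    }
    return count;
}
```

With a first packet of 6000 bytes and a probesize of 4096, the loop stops after one packet. If that packet does not carry enough information, the stream parameters stay incomplete.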

- Set the flags member of AVFormatContext so that packets read inside avformat_find_stream_info are not placed in the AVFormatContext buffer packet_buffer:

AVFormatContext *fmt_ctx = NULL;

ret = avformat_open_input(&fmt_ctx, url, input_fmt, NULL);

fmt_ctx->flags |= AVFMT_FLAG_NOBUFFER;

ret = avformat_find_stream_info(fmt_ctx, NULL);

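Looking inside avformat_find_stream_info shows that once AVFMT_FLAG_NOBUFFER is set, packets are not added to the buffer. Every frame read inside avformat_find_stream_info is then used only for analysis and is never displayed. The following excerpt from avformat_find_stream_info makes this clear: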

if (ic->flags & AVFMT_FLAG_NOBUFFER) {

pkt = &pkt1;

} else {

pkt = add_to_pktbuf(&ic->packet_buffer, &pkt1,

&ic->packet_buffer_end);

if ((ret = av_dup_packet(pkt)) < 0)

goto find_stream_info_err;

}

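When many packets are read, the trial decoding and analysis inside avformat_find_stream_info are themselves time-consuming (I have not measured this). That suggests an extreme solution: skip avformat_find_stream_info altogether and initialize the decoding environment manually.

0x02 Solution

Precondition: the stream parameters of the sender are known.

In my environment the stream is: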

audio: AAC 44100Hz 2 channel 16bit

video: H264 640*480 30fps

Live stream

After calling avformat_open_input, do not go on to call avformat_find_stream_info. The code is as follows:

AVFormatContext *fmt_ctx = NULL;

ret = avformat_open_input(&fmt_ctx, url, input_fmt, NULL);

fmt_ctx->probesize = 4096;

ret = init_decode(fmt_ctx);

init_decode is a function I implemented myself. Its code, together with its helpers, is as follows:

enum {

FLV_TAG_TYPE_AUDIO = 0x08,

FLV_TAG_TYPE_VIDEO = 0x09,

FLV_TAG_TYPE_META = 0x12,

};

static AVStream *create_stream(AVFormatContext *s, int codec_type)

{

AVStream *st = avformat_new_stream(s, NULL);

if (!st)

return NULL;

st->codec->codec_type = codec_type;

return st;

}

static int get_video_extradata(AVFormatContext *s, int video_index)

{

int type, size, flags, pos, stream_type;

int ret = -1;

int64_t dts;

bool got_extradata = false;

if (!s || video_index < 0 || video_index > 2)

return ret;

for (;; avio_skip(s->pb, 4)) {

pos = avio_tell(s->pb);

type = avio_r8(s->pb);

size = avio_rb24(s->pb);

dts = avio_rb24(s->pb);

dts |= (int64_t)avio_r8(s->pb) << 24; /* extended timestamp byte; widen before shifting */

avio_skip(s->pb, 3);

if (0 == size)

break;

if (FLV_TAG_TYPE_AUDIO == type || FLV_TAG_TYPE_META == type) {

avio_seek(s->pb, size, SEEK_CUR);

} else if (type == FLV_TAG_TYPE_VIDEO) {

size -= 5;

s->streams[video_index]->codec->extradata = xmalloc(size + FF_INPUT_BUFFER_PADDING_SIZE);

if (NULL == s->streams[video_index]->codec->extradata)

break;

memset(s->streams[video_index]->codec->extradata, 0, size + FF_INPUT_BUFFER_PADDING_SIZE);

memcpy(s->streams[video_index]->codec->extradata, s->pb->buf_ptr + 5, size);

s->streams[video_index]->codec->extradata_size = size;

ret = 0;

got_extradata = true;

} else {

break;

}

if (got_extradata)

break;

}

return ret;

}

static int init_decode(AVFormatContext *s)

{

int video_index = -1;

int audio_index = -1;

int ret = -1;

if (!s)

return ret;

if (0 == s->nb_streams) {

create_stream(s, AVMEDIA_TYPE_VIDEO);

create_stream(s, AVMEDIA_TYPE_AUDIO);

video_index = 0;

audio_index = 1;

} else if (1 == s->nb_streams) {

if (AVMEDIA_TYPE_VIDEO == s->streams[0]->codec->codec_type) {

create_stream(s, AVMEDIA_TYPE_AUDIO);

video_index = 0;

audio_index = 1;

} else if (AVMEDIA_TYPE_AUDIO == s->streams[0]->codec->codec_type) {

create_stream(s, AVMEDIA_TYPE_VIDEO);

video_index = 1;

audio_index = 0;

}

} else if (2 == s->nb_streams) {

if (AVMEDIA_TYPE_VIDEO == s->streams[0]->codec->codec_type) {

video_index = 0;

audio_index = 1;

} else if (AVMEDIA_TYPE_VIDEO == s->streams[1]->codec->codec_type) {

video_index = 1;

audio_index = 0;

}

}

if (video_index != 0 && video_index != 1)

return ret;

s->streams[audio_index]->codec->codec_id = AV_CODEC_ID_AAC;

s->streams[audio_index]->codec->sample_rate = 44100;

s->streams[audio_index]->codec->time_base.den = 44100;

s->streams[audio_index]->codec->time_base.num = 1;

s->streams[audio_index]->codec->bits_per_coded_sample = 16;

s->streams[audio_index]->codec->channels = 2;

s->streams[audio_index]->codec->channel_layout = AV_CH_LAYOUT_STEREO; /* value 3: front left | front right */

s->streams[audio_index]->pts_wrap_bits = 32;

s->streams[audio_index]->time_base.den = 1000;

s->streams[audio_index]->time_base.num = 1;

s->streams[video_index]->codec->codec_id = AV_CODEC_ID_H264;

s->streams[video_index]->codec->width = 640;

s->streams[video_index]->codec->height = 480;

s->streams[video_index]->codec->ticks_per_frame = 2;

s->streams[video_index]->codec->pix_fmt = AV_PIX_FMT_YUV420P; /* value 0 */

s->streams[video_index]->pts_wrap_bits = 32;

s->streams[video_index]->time_base.den = 1000;

s->streams[video_index]->time_base.num = 1;

s->streams[video_index]->avg_frame_rate.num = 90; /* AVRational is num/den: 90/3 = 30 fps */

s->streams[video_index]->avg_frame_rate.den = 3;

s->streams[video_index]->r_frame_rate.num = 60; /* 60/2 = 30 fps */

s->streams[video_index]->r_frame_rate.den = 2;

ret = get_video_extradata(s, video_index);

s->nb_streams = 2;

s->pb->buf_ptr = s->pb->buf_end;

s->pb->pos = s->pb->buf_end;

return ret;

}

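Analysis:

The init_decode function does the following:

1. After avformat_open_input returns, the number of streams inside the AVFormatContext cannot be known in advance, so it has to be checked. Any missing stream is created with create_stream, and video_index and audio_index are set accordingly.

2. Initialize the audio and video stream parameters from the known information.

3. H264 decoding requires the SPS/PPS, which are carried in the first video tag received. They are obtained through get_video_extradata:

3.1 The data read through the avio_* functions is already in FLV format, so if the tag read is an audio tag or a metadata tag, its data is skipped and reading continues.

3.2 If it is a video tag, its data is read into s->streams[video_index]->codec->extradata and the loop exits.

4. Update the AVFormatContext information.

5. Empty the buffer.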
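The header fields that get_video_extradata reads with avio_r8 and avio_rb24 follow the FLV tag layout: a 1-byte tag type, a 3-byte big-endian data size, a 3-byte timestamp plus a 1-byte timestamp extension holding the upper 8 bits, and a 3-byte stream ID. The 4 bytes skipped between tags are the PreviousTagSize field. The sketch below parses that 11-byte header from a memory buffer instead of an AVIOContext. The struct `flv_tag_header` and the function `parse_flv_tag_header` are hypothetical names introduced for illustration.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

enum {
    FLV_TAG_TYPE_AUDIO = 0x08,
    FLV_TAG_TYPE_VIDEO = 0x09,
    FLV_TAG_TYPE_META  = 0x12,
};

#define FLV_TAG_HEADER_SIZE 11

/* Hypothetical container for the decoded header fields. */
struct flv_tag_header {
    uint8_t  type;          /* one of FLV_TAG_TYPE_* */
    uint32_t data_size;     /* payload size in bytes (24-bit, big-endian) */
    uint32_t timestamp_ms;  /* 24-bit timestamp plus 8-bit extension */
    uint32_t stream_id;     /* 24-bit, normally 0 */
};

/* Read a 24-bit big-endian integer from p. */
static uint32_t read_be24(const uint8_t *p)
{
    return ((uint32_t)p[0] << 16) | ((uint32_t)p[1] << 8) | (uint32_t)p[2];
}

/* Parse one FLV tag header. Returns 0 on success, -1 if the buffer
 * holds fewer than FLV_TAG_HEADER_SIZE bytes. */
int parse_flv_tag_header(const uint8_t *buf, size_t len,
                         struct flv_tag_header *out)
{
    assert(buf != NULL && out != NULL);
    if (len < FLV_TAG_HEADER_SIZE)
        return -1;

    out->type         = buf[0];
    out->data_size    = read_be24(buf + 1);
    out->timestamp_ms = read_be24(buf + 4) | ((uint32_t)buf[7] << 24);
    out->stream_id    = read_be24(buf + 8);
    return 0;
}
```

A caller would dispatch on `type`: skip `data_size` bytes for audio and metadata tags, and for a video tag treat the payload after its first 5 bytes as the extradata, as get_video_extradata does.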

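If the video tag is H263-encoded, init_decode can initialize the decoding environment without calling get_video_extradata (codec_id must be set to AV_CODEC_ID_FLV1).

In most cases the custom init_decode function can replace avformat_find_stream_info to reduce latency. It comes with many restrictions, of course, so whether it fits depends on the requirements of the project.

0x03 Summary

init_decode can be adapted to streams sent by a variety of devices. Many details have not been covered yet and need to be followed up in later work.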
