AudioTrack is used to play back PCM audio streams. Typical usage looks like this:

| // Query the minimum buffer size required for these parameters
int bufsize = AudioTrack.getMinBufferSize(
        8000,                                     // sample rate
        AudioFormat.CHANNEL_CONFIGURATION_STEREO, // two channels (stereo)
        AudioFormat.ENCODING_PCM_16BIT);          // sample precision

// Create the AudioTrack
AudioTrack trackplayer = new AudioTrack(
        AudioManager.STREAM_MUSIC,                // stream type
        8000,
        AudioFormat.CHANNEL_CONFIGURATION_STEREO,
        AudioFormat.ENCODING_PCM_16BIT,
        bufsize,
        AudioTrack.MODE_STREAM);

// Start playback
trackplayer.play();

......
// Write data to the track
trackplayer.write(bytes_pkg, 0, bytes_pkg.length);
......

// Stop playback and release resources
trackplayer.stop();
trackplayer.release();

|
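To get a feel for the numbers behind the parameters above (8000 Hz, stereo, 16-bit PCM), the following is a minimal arithmetic sketch in plain Java. It uses no Android classes; the class name `PcmFrameMath` and the 4096-byte buffer are hypothetical, chosen only for illustration. The value actually returned by `getMinBufferSize` depends on the device and is not computed here.

```java
// Plain-Java arithmetic sketch; PcmFrameMath is a hypothetical helper, not an Android class.
final class PcmFrameMath {
    /** Bytes occupied by one frame (one sample for every channel). */
    static int bytesPerFrame(int channelCount, int bitsPerSample) {
        return channelCount * (bitsPerSample / 8);
    }

    /** Bytes consumed per second of playback. */
    static int bytesPerSecond(int sampleRateHz, int channelCount, int bitsPerSample) {
        return sampleRateHz * bytesPerFrame(channelCount, bitsPerSample);
    }

    /** Milliseconds of audio that a buffer of the given size can hold. */
    static long bufferDurationMs(int bufferBytes, int sampleRateHz,
                                 int channelCount, int bitsPerSample) {
        return 1000L * bufferBytes
                / bytesPerSecond(sampleRateHz, channelCount, bitsPerSample);
    }

    public static void main(String[] args) {
        int rate = 8000, channels = 2, bits = 16;
        System.out.println("bytes per frame:  " + bytesPerFrame(channels, bits));
        System.out.println("bytes per second: " + bytesPerSecond(rate, channels, bits));
        // 4096 is an assumed buffer size, not a value returned by getMinBufferSize.
        System.out.println("4096-byte buffer: "
                + bufferDurationMs(4096, rate, channels, bits) + " ms");
    }
}
```

With these parameters one frame is 4 bytes, so one second of audio is 32000 bytes and a 4096-byte buffer would hold 128 ms of sound.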

A demo program, MediaAudioTrackTest.java, can also be found under frameworks\base\media\tests\MediaFrameworkTest\src\com\android\mediaframeworktest\functional\audio.

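For data transfer it supports two approaches, push and pull: the application either actively writes data to the track, or supplies it passively when the track asks for it.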
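The difference between the two transfer styles can be sketched with a small plain-Java model. Everything here (`TransferModes`, `ModelTrack`, `DataCallback`, `onMoreData`, `runPull`) is a hypothetical stand-in invented for illustration and is not the Android API; the model only shows who drives the data flow in each case.

```java
import java.io.ByteArrayOutputStream;
import java.util.Arrays;

/** Hypothetical model of push vs. pull transfer; not the Android API. */
final class TransferModes {
    /** Pull mode: the track calls back into the application to get data. */
    interface DataCallback {
        /** Fills buf and returns the number of bytes provided, or 0 when exhausted. */
        int onMoreData(byte[] buf);
    }

    /** Stand-in for a track; it simply records the bytes it was given. */
    static final class ModelTrack {
        private final ByteArrayOutputStream played = new ByteArrayOutputStream();

        /** Push mode: the application decides when to hand data over. */
        int write(byte[] data, int offset, int length) {
            played.write(data, offset, length);
            return length;
        }

        /** Pull mode: the track drives the loop and requests data as needed. */
        void runPull(DataCallback cb, int chunkBytes) {
            byte[] buf = new byte[chunkBytes];
            int n;
            while ((n = cb.onMoreData(buf)) > 0) {
                played.write(buf, 0, n);
            }
        }

        byte[] played() {
            return played.toByteArray();
        }
    }

    /** Builds a callback that serves a fixed array chunk by chunk. */
    static DataCallback fromArray(byte[] source) {
        int[] pos = {0}; // read position captured by the lambda
        return buf -> {
            int n = Math.min(buf.length, source.length - pos[0]);
            System.arraycopy(source, pos[0], buf, 0, n);
            pos[0] += n;
            return n;
        };
    }

    public static void main(String[] args) {
        byte[] pcm = {1, 2, 3, 4, 5, 6, 7, 8, 9, 10};

        ModelTrack pushTrack = new ModelTrack();
        pushTrack.write(pcm, 0, pcm.length);          // application pushes

        ModelTrack pullTrack = new ModelTrack();
        pullTrack.runPull(fromArray(pcm), 4);         // track pulls 4 bytes at a time

        System.out.println(Arrays.equals(pushTrack.played(), pullTrack.played()));
    }
}
```

Both paths deliver the same bytes; what changes is which side owns the loop.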

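For playback, AudioTrack also supports two modes, static and streaming: either all the data is loaded up front, or playback can begin once only part of the data has been loaded.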
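As a rough illustration of the two playback modes, here is a plain-Java model. The names `PlaybackModes`, `StaticModel`, `StreamModel`, and `streamAll` are hypothetical and do not exist in Android; the sketch assumes only what the paragraph above states, namely that static mode loads everything once while streaming mode works through a limited buffer that is refilled as it plays.

```java
/** Hypothetical model of static vs. streaming playback; not the Android API. */
final class PlaybackModes {
    /** Static mode: the whole clip is loaded once and can then be replayed. */
    static final class StaticModel {
        private final byte[] clip;

        StaticModel(byte[] clip) {
            this.clip = clip.clone();
        }

        /** Each play() covers the same preloaded data; returns bytes played. */
        int play() {
            return clip.length;
        }
    }

    /** Streaming mode: a bounded buffer is refilled while playback drains it. */
    static final class StreamModel {
        private final int capacity;
        private int filled;       // bytes waiting to be played
        private long totalPlayed; // bytes already consumed

        StreamModel(int capacity) {
            if (capacity <= 0) {
                throw new IllegalArgumentException("capacity must be positive");
            }
            this.capacity = capacity;
        }

        /** Accepts at most the free space; returns the bytes actually taken. */
        int write(int length) {
            int n = Math.min(length, capacity - filled);
            filled += n;
            return n;
        }

        /** Simulates the output side consuming everything buffered so far. */
        void drain() {
            totalPlayed += filled;
            filled = 0;
        }

        long totalPlayed() {
            return totalPlayed;
        }
    }

    /** Feeds totalBytes through the stream; returns the number of write calls. */
    static int streamAll(StreamModel track, int totalBytes) {
        int remaining = totalBytes;
        int writes = 0;
        while (remaining > 0) {
            remaining -= track.write(remaining);
            writes++;
            track.drain();
        }
        return writes;
    }

    public static void main(String[] args) {
        StaticModel clip = new StaticModel(new byte[10000]);
        System.out.println("static, bytes per play: " + clip.play());

        StreamModel stream = new StreamModel(4096);
        System.out.println("streaming, writes needed: " + streamAll(stream, 10000));
        System.out.println("streaming, bytes played:  " + stream.totalPlayed());
    }
}
```

In this model a 10000-byte clip needs a single load in static mode, but three writes through an assumed 4096-byte buffer in streaming mode.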

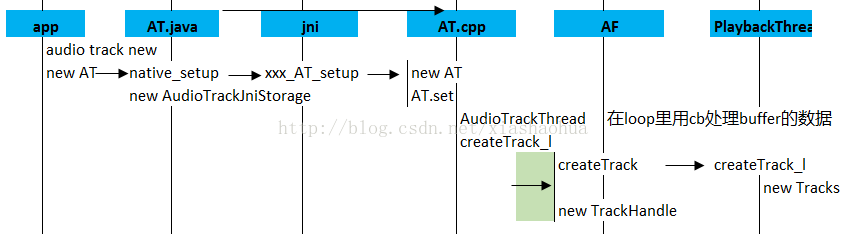

The AudioTrack creation flow is as follows:

Some of the functions involved are described below:

The main call chain of createTrack_l is as follows:

| status_t AudioTrack::createTrack_l()
{
    ……
    sp<IAudioTrack> track = audioFlinger->createTrack(streamType, mSampleRate, mFormat,
            mChannelMask, &temp, &trackFlags, mSharedBuffer, output, mClientPid, tid,
            &mSessionId, mClientUid, &status);

    // update proxy
    if (mSharedBuffer == 0) {
        mStaticProxy.clear();
        mProxy = new AudioTrackClientProxy(cblk, buffers, frameCount, mFrameSize);
    } else {
        mStaticProxy = new StaticAudioTrackClientProxy(cblk, buffers, frameCount, mFrameSize);
        mProxy = mStaticProxy;
    }
    ……
}

|

The call then goes through binder to AudioFlinger::createTrack, which mainly does the following:

| PlaybackThread *thread = checkPlaybackThread_l(output);

// Mainly creates a playback Track
track = thread->createTrack_l(client, streamType, sampleRate, format,
        channelMask, frameCount, sharedBuffer, lSessionId, flags, tid,
        clientUid, &lStatus);

setAudioHwSyncForSession_l(thread, lSessionId);

// return handle to client
// TrackHandle acts as the server side of IAudioTrack: it exposes the interface
// to AT (AudioTrack) and bridges the calls inside AF (AudioFlinger)
trackHandle = new TrackHandle(track);

|
