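3. A "mobile spam SMS" dataset is stored in the file sms_spam.csv. The file has 5537 rows and 2 columns: type (ham = non-spam message, spam = spam message) and text (the content of the message).

(1) Perform text mining on the "mobile spam SMS" dataset.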

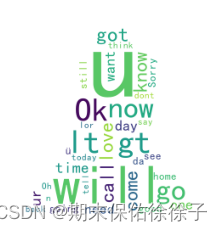

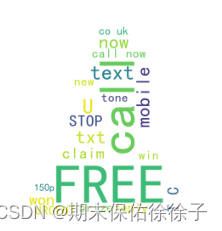

(2) Split the data into non-spam (ham) and spam messages, and draw a word cloud for each.
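One common reading of "text mining" in part (1) is: tokenize each message, drop stop words, reduce words to a stem, and count what is left. The following is a minimal, standard-library-only sketch of that idea. The names `STOP_WORDS`, `tokenize`, `crude_stem`, and `top_terms` are hypothetical, the regex tokenizer and suffix stripper are rough stand-ins for NLTK's `word_tokenize` and `PorterStemmer`, and the three sample messages are made up.

```python
import re
from collections import Counter

# Hypothetical mini stop-word list; the exercise itself loads one from a file.
STOP_WORDS = {"the", "a", "to", "is", "you", "and", "for", "your"}

def tokenize(text):
    """Lowercase the text and split it into alphabetic word tokens."""
    return re.findall(r"[a-z']+", text.lower())

def crude_stem(word):
    """Very rough suffix stripping; a stand-in for a real stemmer."""
    for suffix in ("ing", "ed", "s"):
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word

def top_terms(messages, n=5):
    """Count stemmed, non-stop-word tokens over all messages."""
    counts = Counter()
    for message in messages:
        tokens = [t for t in tokenize(message) if t not in STOP_WORDS]
        counts.update(crude_stem(t) for t in tokens if len(t) >= 2)
    return counts.most_common(n)

# Made-up sample messages, not taken from sms_spam.csv.
sample = [
    "Free prize waiting for you, call now",
    "Are you calling me tonight?",
    "Call to claim your free prizes",
]
print(top_terms(sample, 3))
```

Because "call" and "calling" collapse to the same stem, the count reflects the underlying word rather than its surface forms, which is the main reason to stem before counting.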

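'''
3. A "mobile spam SMS" dataset is stored in the file sms_spam.csv. The file has 5537 rows and 2 columns:
type (ham = non-spam message, spam = spam message) and text (the content of the message).
(1) Perform text mining on the "mobile spam SMS" dataset.
(2) Split the data into non-spam (ham) and spam messages, and draw a word cloud for each.
'''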

# Libraries
import nltk
import pandas as pd
from nltk.tokenize import word_tokenize
from nltk.stem import PorterStemmer
from collections import Counter
import imageio
import matplotlib.pyplot as plt
from wordcloud import WordCloud, STOPWORDS, ImageColorGenerator

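# NLTK data: tokenizer models used by word_tokenize
# (newer NLTK releases may also require nltk.download('punkt_tab'))
nltk.download('punkt')

# Data
sms = pd.read_csv('sms_spam.csv')

# Stop words, one per line. The separator 'bucunzai' never occurs in the file,
# so every line is read as a single field; a multi-character separator needs
# the Python parsing engine.
stop = pd.read_csv('C:/Users/86186/Desktop/大二 下/数据挖掘/第7章/Estopwords.txt',
                   sep='bucunzai', encoding='utf_8', header=None, engine='python')
stopwords = [' '] + list(stop[0])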

# Per-message stop-word removal
# Tokenize each message separately
word2 = []
for i in range(len(sms)):
    word2.append(word_tokenize(sms['text'][i]))

# Drop stop words from each token list
word22 = []
for tokens in word2:
    word21 = []
    for n in tokens:
        if n not in stopwords:
            word21.append(n)
    word22.append(word21)
print('Per-message stop-word removal: see word22')

# Stemming
word3 = []
stemmer = PorterStemmer()
for tokens in word22:
    word31 = []
    for j in tokens:
        word31.append(stemmer.stem(j))
    word3.append(word31)
print('Per-message stemming: see word3')

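# Word frequencies (top 25)
# word12 is never defined earlier in the script; it is assumed here to be the
# flat list of all tokens left after stop-word removal (word22 flattened).
word12 = [w for tokens in word22 for w in tokens]
word_words = [x for x in word12 if len(x) >= 2]
counter1 = Counter(word_words)
counter2 = counter1.most_common(25)
print(counter2)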

# (2)
# Split the messages by type
ham = sms.loc[sms['type'] == 'ham', 'text'].tolist()
spam = sms.loc[sms['type'] == 'spam', 'text'].tolist()

# Join all ham messages, tokenize, and drop stop words
ham1 = " ".join(ham)
ham11 = word_tokenize(ham1)
ham12 = [word for word in ham11 if word not in stopwords]
print('Stop-word removal over all ham text: see ham12')

# Same steps for the spam messages
spam1 = " ".join(spam)
spam11 = word_tokenize(spam1)
spam12 = [word for word in spam11 if word not in stopwords]
print('Stop-word removal over all spam text: see spam12')

# Word cloud function
def word__cloud(text, title='Word cloud', out_file='wordcloud.jpg'):
    # Font settings (only matter if the title contains Chinese characters)
    plt.rcParams['font.sans-serif'] = ['SimHei']
    plt.rcParams['axes.unicode_minus'] = False
    plt.figure(figsize=(10, 4))
    # Mask image that gives the cloud its shape
    maskImg = imageio.imread('C:/Users/86186/Desktop/大二 下/数据挖掘/第7章/renwu.png')
    wc = WordCloud(font_path='C:/Windows/Fonts/simhei.ttf', background_color='white',
                   max_words=10000, mask=maskImg, max_font_size=120, min_font_size=10,
                   random_state=42, width=1200, height=900)
    # Generate the word cloud from the text
    wc.generate(text)
    # Color generator built from the mask image; it is not applied here, but
    # wc.recolor(color_func=image_colors) would take the colors from the mask
    image_colors = ImageColorGenerator(maskImg)
    plt.imshow(wc)
    plt.axis('off')
    plt.title(title, fontsize=20)
    plt.show()
    # Save each cloud under its own name so the second call does not
    # overwrite the first
    wc.to_file(out_file)

# ham1 and spam1 are the raw joined texts; WordCloud filters its built-in
# STOPWORDS list by default
word__cloud(ham1, 'Word cloud: ham', 'wordcloud_ham.jpg')
word__cloud(spam1, 'Word cloud: spam', 'wordcloud_spam.jpg')
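As a side note on part (2), the split by type and the word counting can also be done in one pass, producing one word-to-count mapping per class. The sketch below uses only the standard library; `SAMPLE_CSV` is a tiny made-up stand-in for sms_spam.csv (same two columns), and `frequencies_by_type` is a hypothetical helper name, not something from the script above.

```python
import csv
import io
from collections import Counter, defaultdict

# Tiny made-up stand-in for sms_spam.csv (same two columns: type, text).
SAMPLE_CSV = """type,text
ham,see you at lunch
spam,win cash now
ham,lunch at noon
spam,claim your cash prize now
"""

def frequencies_by_type(csv_file):
    """Return {type: Counter of lowercase words} for a type,text CSV."""
    freqs = defaultdict(Counter)
    for row in csv.DictReader(csv_file):
        # Whitespace splitting is a simplification of real tokenization.
        freqs[row["type"]].update(row["text"].lower().split())
    return freqs

freqs = frequencies_by_type(io.StringIO(SAMPLE_CSV))
for label, counter in freqs.items():
    print(label, counter.most_common(3))
```

Each `Counter` is a plain word-to-count mapping, which is the kind of input `WordCloud.generate_from_frequencies` accepts, so the two clouds could be drawn from `freqs['ham']` and `freqs['spam']` after any stop-word filtering, instead of from the raw joined text.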
