A Three-Layer Neural Network in Python

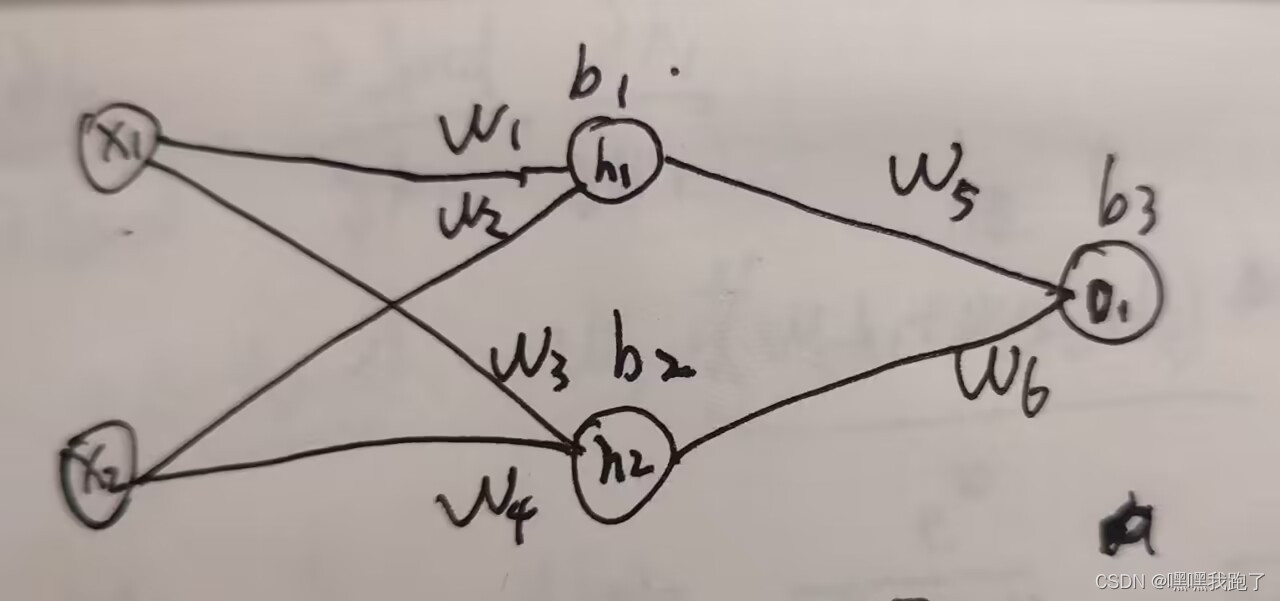

1. Diagram of the input, hidden, and output layers

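```python
# x1 in the figure is x[0]
# x2 in the figure is x[1]
# w1, w2, w3, w4, w5, w6 are the weights
# b1, b2, b3 are the biases
h1 = sigmoid(x[0]*self.w1 + x[1]*self.w2 + self.b1)
h2 = sigmoid(x[0]*self.w3 + x[1]*self.w4 + self.b2)  # hidden neuron h2 uses its own bias b2
o1 = sigmoid(h1*self.w5 + h2*self.w6 + self.b3)
```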
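To make the three formulas concrete, the sketch below evaluates them once by hand. The parameter values are hypothetical, picked only so the arithmetic is easy to follow (weights of 0 or 1, all biases 0, input x = [2, 3]), and the block defines its own `sigmoid` so that it runs on its own.

```python
import math

def sigmoid(z: float) -> float:
    """Logistic activation 1 / (1 + e^-z)."""
    return 1.0 / (1.0 + math.exp(-z))

# Hypothetical parameter values, for illustration only
w1, w2, w3, w4, w5, w6 = 0.0, 1.0, 0.0, 1.0, 0.0, 1.0
b1, b2, b3 = 0.0, 0.0, 0.0

x = [2.0, 3.0]  # x1 = x[0], x2 = x[1]

h1 = sigmoid(x[0] * w1 + x[1] * w2 + b1)  # sigmoid(3)
h2 = sigmoid(x[0] * w3 + x[1] * w4 + b2)  # sigmoid(3)
o1 = sigmoid(h1 * w5 + h2 * w6 + b3)      # sigmoid(h2)

print(round(h1, 4), round(h2, 4), round(o1, 4))  # 0.9526 0.9526 0.7216
```

Each hidden neuron squashes a weighted sum of the two inputs, and the output neuron does the same with the two hidden activations.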

2. Initialization

```python
def __init__(self):
    # Every weight and bias starts as a random draw from the standard normal distribution
    self.w1 = np.random.normal()
    self.w2 = np.random.normal()
    self.w3 = np.random.normal()
    self.w4 = np.random.normal()
    self.w5 = np.random.normal()
    self.w6 = np.random.normal()
    self.b1 = np.random.normal()
    self.b2 = np.random.normal()
    self.b3 = np.random.normal()
```
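If you want runs to be reproducible, the parameters can be drawn from a seeded generator. Calling `np.random.seed(...)` before `myNN()` would do this for the class as written; the sketch below instead uses NumPy's `np.random.default_rng`. The helper `init_params` and its dict layout are hypothetical, not part of the original code.

```python
import numpy as np

PARAM_NAMES = ("w1", "w2", "w3", "w4", "w5", "w6", "b1", "b2", "b3")

def init_params(seed=None):
    """Hypothetical helper: draw all nine parameters from the standard normal
    distribution, as __init__ does, but from a seeded Generator."""
    rng = np.random.default_rng(seed)
    # Generator.normal() with no size argument returns a single float
    return {name: rng.normal() for name in PARAM_NAMES}

p1 = init_params(seed=42)
p2 = init_params(seed=42)
print(p1 == p2)  # True: same seed, same starting weights
```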

3. Forward propagation

```python
# Compute the prediction
def feedForward(self, x):
    h1 = sigmoid(x[0]*self.w1 + x[1]*self.w2 + self.b1)
    h2 = sigmoid(x[0]*self.w3 + x[1]*self.w4 + self.b2)
    o1 = sigmoid(h1*self.w5 + h2*self.w6 + self.b3)
    ypred = o1
    return ypred
```
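The same forward pass can also be run on a whole batch at once with matrix products. The sketch below is an illustration under an assumed layout, not part of the original code: w1 to w4 are packed into a 2×2 matrix `W_hidden` whose column j holds the weights into hidden neuron j, and the function name `feed_forward_vectorized` is hypothetical. It checks itself against the scalar formulas.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def feed_forward_vectorized(X, W_hidden, b_hidden, w_out, b_out):
    """Forward pass for a batch X of shape (n, 2).

    W_hidden: (2, 2), [[w1, w3], [w2, w4]], column j feeds hidden neuron j
    b_hidden: (2,), [b1, b2]
    w_out:    (2,), [w5, w6]
    b_out:    scalar b3
    """
    H = sigmoid(X @ W_hidden + b_hidden)  # (n, 2): columns are h1, h2
    return sigmoid(H @ w_out + b_out)     # (n,): o1 for every row

rng = np.random.default_rng(0)
w1, w2, w3, w4, w5, w6, b1, b2, b3 = rng.normal(size=9)
X = np.array([[-2.0, -1.0], [25.0, 6.0]])

vec = feed_forward_vectorized(X, np.array([[w1, w3], [w2, w4]]),
                              np.array([b1, b2]), np.array([w5, w6]), b3)

# Same computation with the scalar formulas, one row at a time
loop = []
for x in X:
    h1 = sigmoid(x[0] * w1 + x[1] * w2 + b1)
    h2 = sigmoid(x[0] * w3 + x[1] * w4 + b2)
    loop.append(sigmoid(h1 * w5 + h2 * w6 + b3))

print(np.allclose(vec, loop))  # True
```

The matrix form does the same arithmetic; it just lets NumPy handle every sample in one call instead of a Python loop (the original uses `np.apply_along_axis` for the same purpose).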

4. Backpropagation

```python
def train(self, data, all_y_trues):
    ...
    for x, ytrue in zip(data, all_y_trues):
        h1 = sigmoid(x[0]*self.w1 + x[1]*self.w2 + self.b1)
        h2 = sigmoid(x[0]*self.w3 + x[1]*self.w4 + self.b2)
        o1 = sigmoid(h1*self.w5 + h2*self.w6 + self.b3)

        d_L_d_ypred = -2 * (ytrue - o1)

        # der_sigmoid is applied to the activations o1, h1, h2 (sigmoid outputs)
        d_ypred_d_h1 = self.w5 * der_sigmoid(o1)
        d_ypred_d_h2 = self.w6 * der_sigmoid(o1)
        d_ypred_d_w5 = h1 * der_sigmoid(o1)
        d_ypred_d_w6 = h2 * der_sigmoid(o1)
        d_ypred_d_b3 = der_sigmoid(o1)

        d_h1_d_w1 = x[0] * der_sigmoid(h1)
        d_h1_d_w2 = x[1] * der_sigmoid(h1)
        d_h1_d_b1 = der_sigmoid(h1)

        d_h2_d_w3 = x[0] * der_sigmoid(h2)
        d_h2_d_w4 = x[1] * der_sigmoid(h2)
        d_h2_d_b2 = der_sigmoid(h2)

        # Compute the gradients: the partial derivatives of the loss with respect to
        # each weight and bias, i.e. how strongly each parameter affects the loss.
        # The partial derivatives are obtained with the chain rule.
        d_L_d_w1 = d_L_d_ypred * d_ypred_d_h1 * d_h1_d_w1
        d_L_d_w2 = d_L_d_ypred * d_ypred_d_h1 * d_h1_d_w2
        d_L_d_w3 = d_L_d_ypred * d_ypred_d_h2 * d_h2_d_w3
        d_L_d_w4 = d_L_d_ypred * d_ypred_d_h2 * d_h2_d_w4
        d_L_d_w5 = d_L_d_ypred * d_ypred_d_w5
        d_L_d_w6 = d_L_d_ypred * d_ypred_d_w6
        d_L_d_b1 = d_L_d_ypred * d_ypred_d_h1 * d_h1_d_b1
        d_L_d_b2 = d_L_d_ypred * d_ypred_d_h2 * d_h2_d_b2
        d_L_d_b3 = d_L_d_ypred * d_ypred_d_b3
```
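For w1, the product formed above is the chain rule written out below. This is a sketch assuming the per-sample loss L = (y_true − ŷ)², which is the loss that `d_L_d_ypred = -2 * (ytrue - o1)` differentiates, with x_1 standing for `x[0]`. It also uses σ'(z) = σ(z)(1 − σ(z)), which is why `der_sigmoid` is applied to the activations `o1` and `h1` rather than to the weighted sums. The three bracketed factors are `d_L_d_ypred`, `d_ypred_d_h1` and `d_h1_d_w1`; the other eight gradients follow the same pattern.

$$
\begin{aligned}
\hat{y} &= o_1 = \sigma(w_5 h_1 + w_6 h_2 + b_3), \qquad h_1 = \sigma(w_1 x_1 + w_2 x_2 + b_1), \qquad L = (y_{\text{true}} - \hat{y})^2 \\
\frac{\partial L}{\partial w_1}
&= \frac{\partial L}{\partial \hat{y}} \cdot \frac{\partial \hat{y}}{\partial h_1} \cdot \frac{\partial h_1}{\partial w_1}
= \bigl[-2\,(y_{\text{true}} - \hat{y})\bigr] \cdot \bigl[w_5\,\hat{y}\,(1 - \hat{y})\bigr] \cdot \bigl[x_1\,h_1\,(1 - h_1)\bigr]
\end{aligned}
$$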

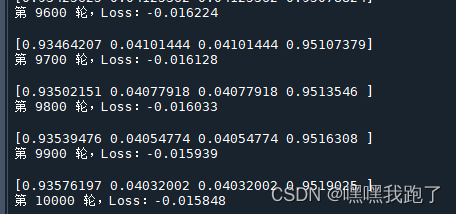

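```python
# Runs once per epoch, after the per-sample loop; i is the epoch counter
if i % 100 == 0:
    ypreds = np.apply_along_axis(self.feedForward, 1, data)
    # Compute the loss value with the loss function
    loss = Loss_MSE(ypreds, all_y_trues)
    print(ypreds)
    print("Epoch %d, Loss: %.6f\n" % (i, loss))
```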
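A common way to confirm that hand-derived gradients like these are correct is a numerical gradient check: nudge one parameter by a small ε in each direction and compare the central difference (L(θ+ε) − L(θ−ε)) / 2ε with the chain-rule value. The sketch below is self-contained and stores the nine parameters in a dict (a hypothetical layout, not the class above); it uses Alice's row from the dataset and random parameters.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

NAMES = ["w1", "w2", "w3", "w4", "w5", "w6", "b1", "b2", "b3"]

def forward(p, x):
    h1 = sigmoid(x[0] * p["w1"] + x[1] * p["w2"] + p["b1"])
    h2 = sigmoid(x[0] * p["w3"] + x[1] * p["w4"] + p["b2"])
    o1 = sigmoid(h1 * p["w5"] + h2 * p["w6"] + p["b3"])
    return h1, h2, o1

def loss(p, x, y_true):
    """Per-sample squared error (y_true - o1)^2."""
    return (y_true - forward(p, x)[2]) ** 2

def analytic_grads(p, x, y_true):
    """Chain-rule gradients, mirroring the d_L_d_* expressions in train()."""
    h1, h2, o1 = forward(p, x)
    d_L_d_ypred = -2 * (y_true - o1)
    d_o1 = o1 * (1 - o1)  # sigmoid' written in terms of the output o1
    d_h1 = h1 * (1 - h1)
    d_h2 = h2 * (1 - h2)
    return {
        "w1": d_L_d_ypred * p["w5"] * d_o1 * x[0] * d_h1,
        "w2": d_L_d_ypred * p["w5"] * d_o1 * x[1] * d_h1,
        "w3": d_L_d_ypred * p["w6"] * d_o1 * x[0] * d_h2,
        "w4": d_L_d_ypred * p["w6"] * d_o1 * x[1] * d_h2,
        "w5": d_L_d_ypred * h1 * d_o1,
        "w6": d_L_d_ypred * h2 * d_o1,
        "b1": d_L_d_ypred * p["w5"] * d_o1 * d_h1,
        "b2": d_L_d_ypred * p["w6"] * d_o1 * d_h2,
        "b3": d_L_d_ypred * d_o1,
    }

def numeric_grads(p, x, y_true, eps=1e-6):
    """Central finite differences, one parameter at a time."""
    grads = {}
    for name in NAMES:
        plus, minus = dict(p), dict(p)
        plus[name] += eps
        minus[name] -= eps
        grads[name] = (loss(plus, x, y_true) - loss(minus, x, y_true)) / (2 * eps)
    return grads

rng = np.random.default_rng(1)
params = {name: rng.normal() for name in NAMES}
x, y_true = np.array([-2.0, -1.0]), 1.0  # Alice's row from the dataset

ga = analytic_grads(params, x, y_true)
gn = numeric_grads(params, x, y_true)
max_err = max(abs(ga[n] - gn[n]) for n in NAMES)
print(f"max |analytic - numeric| = {max_err:.2e}")
```

If a factor in the chain rule were wrong (for example, a hidden neuron reading the wrong bias), the affected entries would disagree far beyond the tiny finite-difference error.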

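5. Updating the weights and biases

```python
learnrate = 0.01

# Inside the per-sample loop, once the d_L_d_* gradients have been computed:
# move each parameter a small step against its own gradient
self.w1 -= learnrate * d_L_d_w1
self.w2 -= learnrate * d_L_d_w2
self.w3 -= learnrate * d_L_d_w3
self.w4 -= learnrate * d_L_d_w4
self.w5 -= learnrate * d_L_d_w5
self.w6 -= learnrate * d_L_d_w6
self.b1 -= learnrate * d_L_d_b1
self.b2 -= learnrate * d_L_d_b2
self.b3 -= learnrate * d_L_d_b3
```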
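The update rule is gradient descent: each parameter moves a small step against its own gradient, `w -= learnrate * d_L_d_w`. Subtracting `learnrate * w` instead never looks at the loss and just shrinks every parameter toward 0. The toy sketch below contrasts the two on a one-parameter loss f(w) = (w − 3)², an assumed example chosen because its minimum (w = 3) is known.

```python
def gradient_descent(w0, grad, lr=0.1, steps=200):
    """Repeat the update w <- w - lr * grad(w)."""
    w = w0
    for _ in range(steps):
        w -= lr * grad(w)
    return w

# Toy loss f(w) = (w - 3)^2: gradient 2 * (w - 3), minimum at w = 3
w_gd = gradient_descent(10.0, lambda w: 2 * (w - 3))

# Subtracting lr * w instead of lr * gradient ignores the loss entirely:
# every step multiplies w by (1 - lr), so w decays toward 0
w_decay = gradient_descent(10.0, lambda w: w)

print(round(w_gd, 6), round(w_decay, 6))  # 3.0 0.0
```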

6. Dataset

```python
# Define dataset
data = np.array([
    [-2, -1],   # Alice: preprocessed height/weight data
    [25, 6],    # Bob
    [19, 4],    # Charlie
    [-15, -6],  # Diana
])

all_y_trues = np.array([
    1,  # Alice: female
    0,  # Bob: male
    0,  # Charlie
    1,  # Diana
])
```

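7. Summary

Working through Victor Zhou's blog post (https://victorzhou.com/blog/intro-to-neural-networks/), I implemented a three-layer neural network in Python.

Next, I plan to work through the three basic tasks in the PyTorch documentation (images/video, audio, and text) and will post updates.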

8. Results

9. Full source code

```python
import numpy as np

def sigmoid(x):
    # Activation function: 1 / (1 + e^(-x))
    return 1/(1+np.exp(-x))

def der_sigmoid(x):
    # Derivative of the sigmoid written in terms of its OUTPUT:
    # if s = sigmoid(z), then sigmoid'(z) = s * (1 - s).
    # x must therefore be an activation (h1, h2 or o1), not a raw weighted sum.
    return x*(1-x)

def Loss_MSE(ytrue, ypred):
    # Mean squared error: square each difference, then average
    return ((ytrue-ypred)**2).mean()

class myNN:
    def __init__(self):
        # 2 inputs -> hidden neurons h1, h2 -> output neuron o1
        self.w1 = np.random.normal()
        self.w2 = np.random.normal()
        self.w3 = np.random.normal()
        self.w4 = np.random.normal()
        self.w5 = np.random.normal()
        self.w6 = np.random.normal()
        self.b1 = np.random.normal()
        self.b2 = np.random.normal()
        self.b3 = np.random.normal()

    def feedForward(self, x):
        h1 = sigmoid(x[0]*self.w1 + x[1]*self.w2 + self.b1)
        h2 = sigmoid(x[0]*self.w3 + x[1]*self.w4 + self.b2)
        o1 = sigmoid(h1*self.w5 + h2*self.w6 + self.b3)
        ypred = o1
        return ypred

    def train(self, data, all_y_trues):
        learnrate = 0.01
        for i in range(10001):  # epochs
            # Stochastic gradient descent: update after every sample
            for x, ytrue in zip(data, all_y_trues):
                # Forward pass
                h1 = sigmoid(x[0]*self.w1 + x[1]*self.w2 + self.b1)
                h2 = sigmoid(x[0]*self.w3 + x[1]*self.w4 + self.b2)
                o1 = sigmoid(h1*self.w5 + h2*self.w6 + self.b3)

                # Partial derivatives
                d_L_d_ypred = -2 * (ytrue - o1)

                d_ypred_d_h1 = self.w5 * der_sigmoid(o1)
                d_ypred_d_h2 = self.w6 * der_sigmoid(o1)
                d_ypred_d_w5 = h1 * der_sigmoid(o1)
                d_ypred_d_w6 = h2 * der_sigmoid(o1)
                d_ypred_d_b3 = der_sigmoid(o1)

                d_h1_d_w1 = x[0] * der_sigmoid(h1)
                d_h1_d_w2 = x[1] * der_sigmoid(h1)
                d_h1_d_b1 = der_sigmoid(h1)

                d_h2_d_w3 = x[0] * der_sigmoid(h2)
                d_h2_d_w4 = x[1] * der_sigmoid(h2)
                d_h2_d_b2 = der_sigmoid(h2)

                # Gradient-descent update of every weight and bias (chain rule)
                self.w1 -= learnrate * d_L_d_ypred * d_ypred_d_h1 * d_h1_d_w1
                self.w2 -= learnrate * d_L_d_ypred * d_ypred_d_h1 * d_h1_d_w2
                self.w3 -= learnrate * d_L_d_ypred * d_ypred_d_h2 * d_h2_d_w3
                self.w4 -= learnrate * d_L_d_ypred * d_ypred_d_h2 * d_h2_d_w4
                self.w5 -= learnrate * d_L_d_ypred * d_ypred_d_w5
                self.w6 -= learnrate * d_L_d_ypred * d_ypred_d_w6
                self.b1 -= learnrate * d_L_d_ypred * d_ypred_d_h1 * d_h1_d_b1
                self.b2 -= learnrate * d_L_d_ypred * d_ypred_d_h2 * d_h2_d_b2
                self.b3 -= learnrate * d_L_d_ypred * d_ypred_d_b3

            # Every 100 epochs, report predictions and loss on the whole dataset
            if i % 100 == 0:
                ypreds = np.apply_along_axis(self.feedForward, 1, data)
                loss = Loss_MSE(ypreds, all_y_trues)
                print(ypreds)
                print("Epoch %d, Loss: %.6f\n" % (i, loss))

# Define dataset
data = np.array([
    [-2, -1],   # Alice
    [25, 6],    # Bob
    [19, 4],    # Charlie
    [-15, -6],  # Diana
])

all_y_trues = np.array([
    1,  # Alice
    0,  # Bob
    0,  # Charlie
    1,  # Diana
])

nn = myNN()
nn.train(data, all_y_trues)
```
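A quick numeric check shows why the loss must square the differences (`** 2`) rather than multiply them by 2 (`* 2`): with multiplication, positive and negative errors cancel, so predicting 0.5 for every sample would score a loss of 0. The prediction values below are hypothetical.

```python
import numpy as np

ytrue = np.array([1, 0, 0, 1])
ypred = np.array([0.5, 0.5, 0.5, 0.5])  # hypothetical, maximally uncertain predictions

mse = ((ytrue - ypred) ** 2).mean()     # each squared error is 0.25, so the mean is 0.25
doubled = ((ytrue - ypred) * 2).mean()  # errors become +1, -1, -1, +1 and cancel to 0

print(mse, doubled)  # 0.25 0.0
```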
