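My first time trying a Kaggle competition, starting from the practice area~

I did the simplest practice problem: handwritten digit recognition (Digit Recognizer).

I tried KNN, Bayes, Logistic Regression, and SVM.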

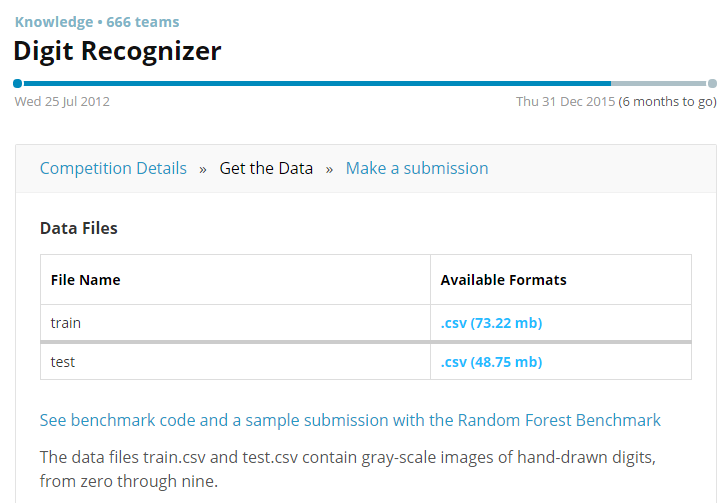

Get the practice data from the competition home page (digit-recognizer-data).

Download the train.csv and test.csv files.

train.csv

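train.csv holds 42000 × 785 values (42,000 rows, 785 columns, after the header row).

Each row represents one image made up of 784 pixels (values 0 to 255).

Column 0 is the true digit (the label).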
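To make the row layout concrete, here is a minimal sketch that splits one train.csv line into its label and a 28 × 28 pixel grid. It assumes the 784 pixels are stored row by row (pixel k at row k // 28, column k % 28); the helper name `parse_train_row` and the sample line are made up for illustration.

```python
def parse_train_row(line):
    """Split one train.csv data line into (label, 28x28 list of pixel ints)."""
    values = [int(v) for v in line.strip().split(',')]
    label, pixels = values[0], values[1:]
    if len(pixels) != 784:
        raise ValueError("expected 784 pixel values, got %d" % len(pixels))
    # assumed row-major layout: pixel k sits at row k // 28, column k % 28
    image = [pixels[r * 28:(r + 1) * 28] for r in range(28)]
    return label, image

# Hypothetical sample line: label 7, one bright pixel at index 30, the rest 0
sample = "7," + ",".join("255" if k == 30 else "0" for k in range(784))
label, image = parse_train_row(sample)
print(label, image[1][2])  # 7 255  (30 // 28 = 1, 30 % 28 = 2)
```

test.csv rows have the same layout minus the leading label, so the same slicing applies with `pixels = values`.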

test.csv

test.csv holds 28000 × 784 values.

Compared with the training data it lacks column 0 (the label), so these are the rows we have to predict~

KNN

First, turn each image's pixel values into a 0/1 pattern like 010010010: every pixel value greater than 0 becomes 1.

Convert train.csv into a 42000 × 784 matrix.
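The 0/1 rule above amounts to a single comparison per pixel, so with NumPy it can be done for the whole file at once instead of in a per-pixel loop. This is a sketch assuming NumPy's `np.loadtxt` is used for parsing; `load_binarized` is a hypothetical helper name, not the author's code.

```python
import io
import numpy as np

def load_binarized(source, has_label=True):
    """Load a Kaggle digit CSV and binarize pixels (value > 0 -> 1).

    source: a filename or file-like object; the header row is skipped.
    Returns (X, y); y is None when has_label is False (test.csv).
    """
    data = np.loadtxt(source, delimiter=',', skiprows=1, dtype=int, ndmin=2)
    if has_label:
        y, pixels = data[:, 0], data[:, 1:]
    else:
        y, pixels = None, data
    X = (pixels > 0).astype(int)   # same "greater than 0 becomes 1" rule
    return X, y

# Tiny in-memory example with 4 "pixels" per row instead of 784
csv_text = "label,p0,p1,p2,p3\n3,0,12,255,0\n8,7,0,0,1\n"
X, y = load_binarized(io.StringIO(csv_text))
print(X.tolist(), y.tolist())  # [[0, 1, 1, 0], [1, 0, 0, 1]] [3, 8]
```

For the real files this would be `load_binarized("train.csv")` and `load_binarized("test.csv", has_label=False)`.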

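```python
from numpy import zeros

def csv2vector(filename, index=0):
    # index=1 for train.csv (column 0 holds the label), index=0 for test.csv
    with open(filename) as fr:
        filelines = fr.readlines()
    del filelines[0]                          # drop the CSV header row
    lenlines = len(filelines)
    returnVect = zeros((lenlines, 784))
    labellist = [0] * lenlines
    for i in range(lenlines):
        # strip() removes the trailing newline; without it the last pixel
        # reads as '0\n' and is wrongly set to 1
        linearr = filelines[i].strip().split(',')
        if len(linearr) < 784:
            continue                          # skip malformed or empty lines
        if index == 1:
            labellist[i] = int(linearr[0])    # true digit
        for j in range(index, len(linearr)):
            # binarize: any non-zero pixel becomes 1
            returnVect[i, j - index] = 1 if linearr[j] != '0' else 0
    return returnVect, labellist
```

Then hold out part of the training data as test data and check the accuracy.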

```python
>>> trainSet, trainlabel = knn.csv2vector("train.csv", 1)
>>> knn.testknn(trainSet[20000:30000], trainlabel[20000:30000], trainSet[0:20000], trainlabel[0:20000])
```

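Here rows 0 to 19999 (20,000 images) are the training data and rows 20000 to 29999 (10,000 images) are the test data, to see how well it does~

This takes quite a while to run~. If the accuracy looks good, you can go straight to the test.csv data and submit the results (how to submit is explained later).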
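Once there are predictions for the 28,000 test.csv rows, they need to be written to a CSV for upload. A minimal sketch, assuming the Digit Recognizer submission format is a header `ImageId,Label` followed by one row per test image with a 1-based `ImageId` in test.csv order; `write_submission` is a hypothetical helper, not part of the original post.

```python
import csv
import io

def write_submission(predictions, out):
    """Write predictions in the assumed 'ImageId,Label' submission format.

    predictions: predicted digits in test.csv row order.
    out: any writable text file object.
    """
    writer = csv.writer(out, lineterminator='\n')
    writer.writerow(['ImageId', 'Label'])
    # assumed: ImageId starts at 1 and follows test.csv row order
    for image_id, label in enumerate(predictions, start=1):
        writer.writerow([image_id, int(label)])

# Example with three made-up predictions, written to memory
buf = io.StringIO()
write_submission([2, 0, 9], buf)
print(buf.getvalue())
```

For a real upload, open the file with `open("submission.csv", "w", newline="")` and pass it as `out`.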

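```python
import time

def testknn(testSet, testlabel, trainSet, trainlabel):
    # classify0(inX, dataSet, labels, k) is the k-NN classifier defined
    # elsewhere in knn.py (not shown in this post)
    start = time.perf_counter()   # time.clock() was removed in Python 3.8
    num = 0
    for i in range(len(testSet)):
        tmp = classify0(testSet[i], trainSet, trainlabel, 3)
        if tmp != testlabel[i]:
            num = num + 1
            print(tmp, ",", testlabel[i])    # predicted, actual
    print("error:", num)
    print("error percent:%f" % (float(num) / len(testSet)))
    end = time.perf_counter()
    print("time: %.1fs" % (end - start))
```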
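`testknn` depends on `classify0`, which the post never shows. Below is a hypothetical reconstruction with the same call signature, assuming a plain k-NN: Euclidean distance to every training row, then a majority vote among the k closest. It is a sketch, not the author's implementation.

```python
from collections import Counter
import numpy as np

def classify0(inX, dataSet, labels, k):
    """Plain k-NN: return the majority label among the k nearest rows.

    inX: one sample vector; dataSet: N x d array; labels: length-N sequence.
    """
    diff = np.asarray(dataSet, dtype=float) - np.asarray(inX, dtype=float)
    distances = np.sqrt((diff ** 2).sum(axis=1))   # one distance per training row
    nearest = np.argsort(distances)[:k]            # indices of the k closest rows
    votes = Counter(labels[i] for i in nearest)    # counted closest-first
    # on a tie, the label seen first (i.e. the closer one) wins
    return votes.most_common(1)[0][0]

# Toy 2-D example: two points labelled 1 near the origin, two labelled 7 far away
trainSet = np.array([[0, 0], [0, 1], [5, 5], [6, 5]])
trainlabel = [1, 1, 7, 7]
print(classify0([0.2, 0.4], trainSet, trainlabel, 3))  # 1
```

Each prediction computes 20,000 distances of length 784, which is why the 10,000-image check takes a while.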

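This post records the author's first Kaggle competition, Digit Recognizer, trying four algorithms for handwritten digit recognition: KNN, Bayes, Logistic Regression, and SVM. KNN reached an accuracy of 0.95543 on part of the data, naive Bayes performed poorly, Logistic Regression reached about 0.90, and SVM with default parameters reached 0.94 while training quickly. The author recognizes that reaching high accuracy will take more in-depth work and plans to keep optimizing the algorithms.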
