Setting Up and Testing Storm with Docker (Windows Edition)

1. Install Docker Desktop

Installation is not covered here; refer to the official Docker documentation: Install Docker Desktop on Windows | Docker Docs

Once installation finishes, launch Docker Desktop.

2. Set up Storm with Docker Compose

For details, see the description of the storm image in the Docker Hub repository. Here is my yml file, which brings up the whole stack in one step:

version: '3.1'

services:
  zookeeper:
    image: zookeeper:3.8.0
    container_name: zookeeper
    restart: always

  nimbus:
    image: storm:2.2.0
    container_name: nimbus
    command: storm nimbus
    depends_on:
      - zookeeper
    links:
      - zookeeper
    restart: always
    ports:
      - 6627:6627

  supervisor:
    image: storm:2.2.0
    container_name: supervisor
    command: storm supervisor
    depends_on:
      - nimbus
      - zookeeper
    links:
      - nimbus
      - zookeeper
    restart: always

  ui:
    image: storm:2.2.0
    container_name: ui
    command: storm ui
    depends_on:
      - nimbus
      - zookeeper
    links:
      - nimbus
      - zookeeper
    restart: always
    ports:
      - 8080:8080

Save the file as ex.yml, then open Windows PowerShell and run the following command:

docker-compose -f D:\docker_desktop\ex.yml up -d

Adjust the path to match your own directory.

The command to stop the services defined in the yml file is:

docker-compose -f D:\docker_desktop\ex.yml down

Run it whenever you need to shut Storm down.

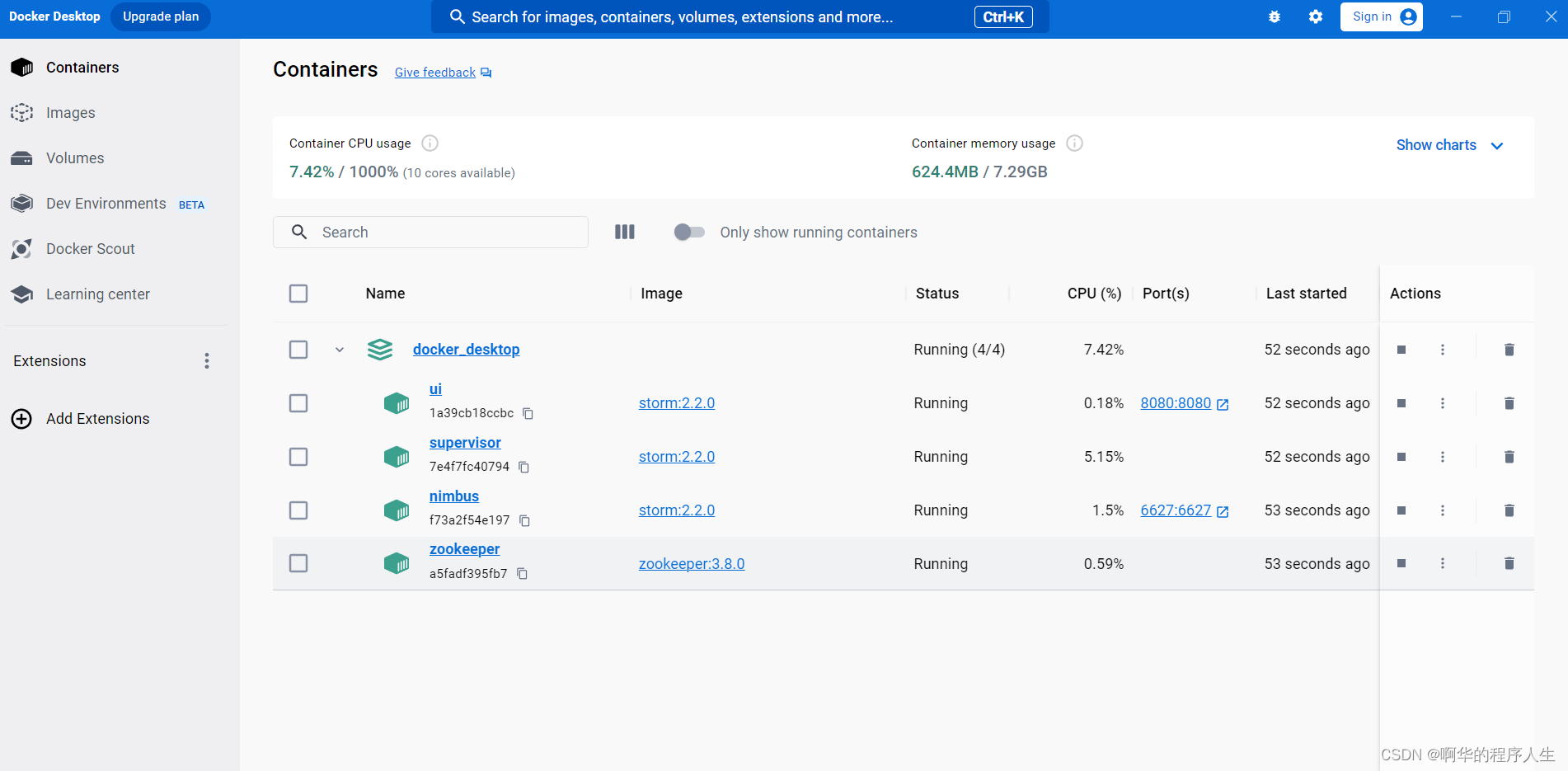

After running the startup command for the yml file, Docker Desktop shows that Storm is up and running, as in the screenshot below:

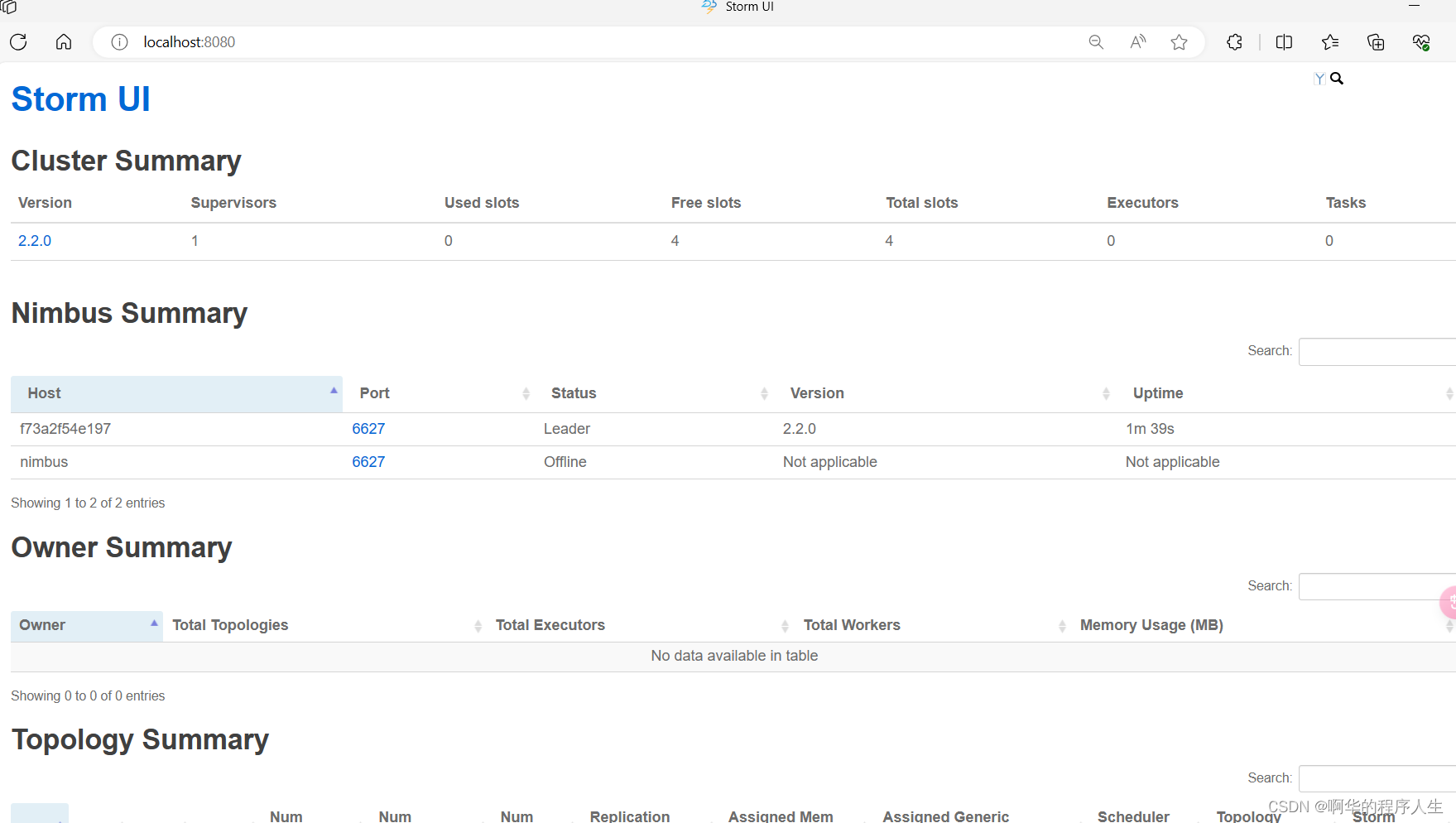

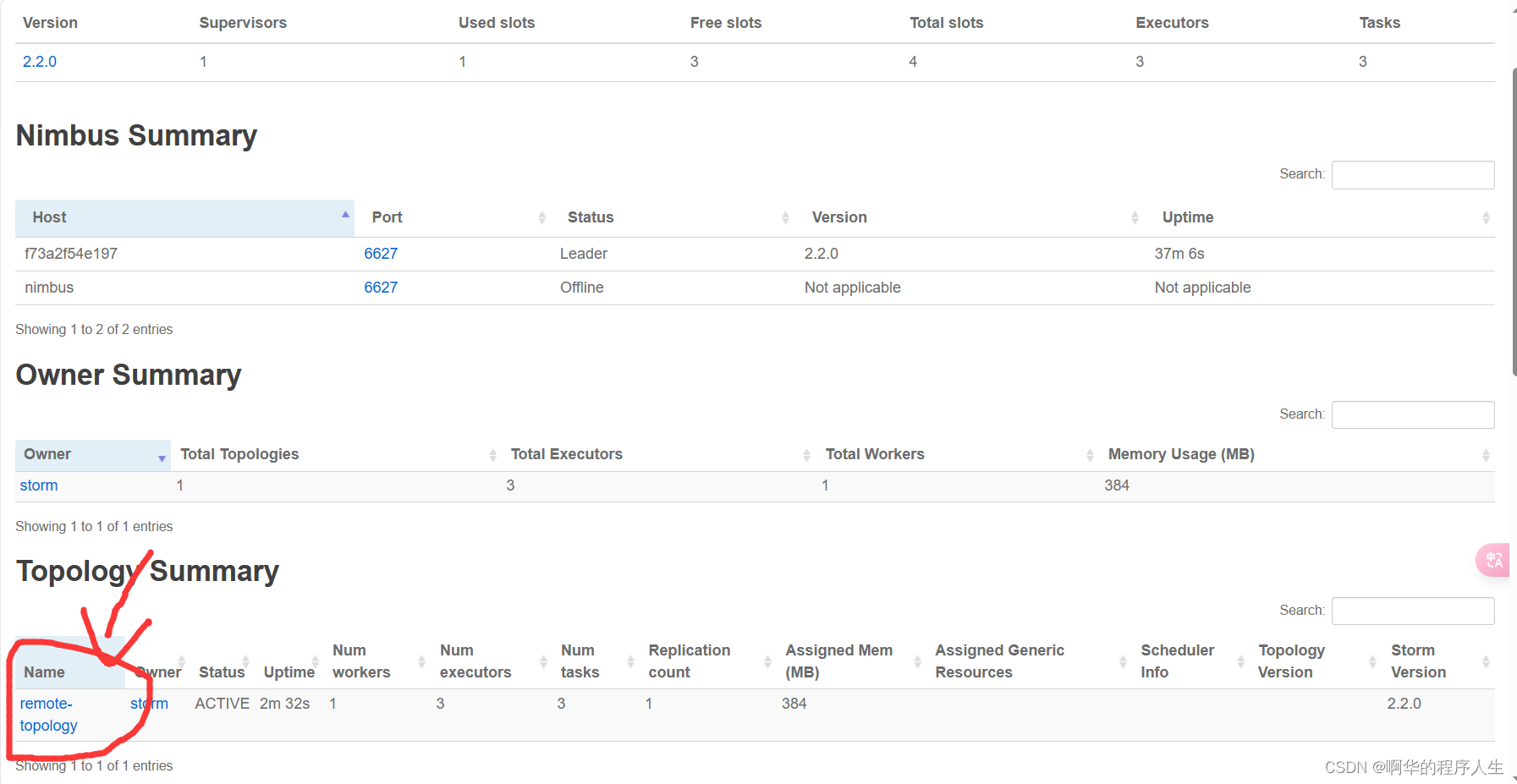

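To open the Storm UI, click the 8080 port link shown in the screenshot above. The UI looks like this:

If the Storm UI loads, the installation succeeded.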
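As a cross-check that does not depend on the browser, the two ports published in the compose file (6627 for Nimbus, 8080 for the UI) can be probed from Java. This is only a minimal sketch: the class name `PortCheck`, the helper `isOpen`, and the 2-second timeout are assumptions made for illustration, and it assumes the containers run on the local machine.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

public class PortCheck {

    /** Returns true if a TCP connection to host:port succeeds within timeoutMs. */
    public static boolean isOpen(String host, int port, int timeoutMs) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMs);
            return true;
        } catch (IOException e) {
            return false; // refused, timed out, or unreachable
        }
    }

    public static void main(String[] args) {
        // Ports taken from the compose file: Nimbus and the Storm UI.
        System.out.println("nimbus 6627 reachable: " + isOpen("127.0.0.1", 6627, 2000));
        System.out.println("ui     8080 reachable: " + isOpen("127.0.0.1", 8080, 2000));
    }
}
```

An open port only shows that something is listening; the Storm UI page remains the better indicator that the cluster itself is healthy.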

3. Local test in Java

For details, refer to the official Storm documentation: Tutorial (apache.org)

Let's start testing.

Create a Maven project and add the following dependencies:

<dependency>
    <groupId>org.apache.storm</groupId>
    <artifactId>storm-core</artifactId>
    <version>2.2.0</version> <!-- use the Storm version you need -->
</dependency>

<dependency>
    <groupId>org.apache.storm</groupId>
    <artifactId>storm-client</artifactId>
    <version>2.2.0</version>
    <scope>provided</scope>
</dependency>

Create a spout class to read the data. This example reads a CSV file of stock information:

import org.apache.storm.spout.SpoutOutputCollector;
import org.apache.storm.task.TopologyContext;
import org.apache.storm.topology.OutputFieldsDeclarer;
import org.apache.storm.topology.base.BaseRichSpout;
import org.apache.storm.tuple.Fields;
import org.apache.storm.tuple.Values;
import org.apache.storm.utils.Utils;

import java.io.BufferedReader;
import java.io.FileInputStream;
import java.io.InputStreamReader;
import java.util.Map;

public class CSVReaderSpout extends BaseRichSpout {

    private SpoutOutputCollector collector;
    private String[] files = {"H:\\a-storm测试\\股票数据2.csv"}; // path to your CSV file

    @Override
    public void open(Map<String, Object> conf, TopologyContext context, SpoutOutputCollector collector) {
        this.collector = collector;
    }

    @Override
    public void nextTuple() {
        String file = files[0];
        // Read the file as GBK; try-with-resources closes the reader automatically.
        try (BufferedReader br = new BufferedReader(
                new InputStreamReader(new FileInputStream(file), "GBK"))) {
            br.readLine(); // skip the header row
            String line;
            while ((line = br.readLine()) != null) {
                collector.emit(new Values(line)); // one tuple per CSV row
                Utils.sleep(1);
            }
            Utils.sleep(1000); // brief pause after the last row before shutting down
        } catch (Exception e) {
            e.printStackTrace();
        } finally {
            // Once all the data has been read, stop and terminate the whole program.
            System.exit(0);
        }
    }

    @Override
    public void declareOutputFields(OutputFieldsDeclarer declarer) {
        declarer.declare(new Fields("line")); // name of the field carried by each emitted tuple
    }
}
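Storm calls `nextTuple()` repeatedly from a single thread, and its documentation asks that the method not block, whereas the spout above reads the whole file inside one call. One alternative is to keep the reader open between calls and hand out one line per call. The sketch below shows only that reading pattern in plain Java, without Storm on the classpath. `LineCursor` and `poll()` are hypothetical names, and the sample rows are made up; in a real spout, `open()` would create the cursor and `nextTuple()` would emit whatever `poll()` returns.

```java
import java.io.BufferedReader;
import java.io.ByteArrayInputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.InputStreamReader;
import java.io.UncheckedIOException;
import java.nio.charset.Charset;
import java.nio.charset.StandardCharsets;

/** Hands out one CSV data line per call, skipping the header row. */
public class LineCursor implements AutoCloseable {

    private final BufferedReader reader;
    private boolean headerSkipped = false;

    public LineCursor(InputStream in, Charset charset) {
        this.reader = new BufferedReader(new InputStreamReader(in, charset));
    }

    /** Returns the next data line, or null once the input is exhausted. */
    public String poll() {
        try {
            if (!headerSkipped) {
                reader.readLine(); // discard the header row
                headerSkipped = true;
            }
            return reader.readLine();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    @Override
    public void close() {
        try {
            reader.close();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        // Made-up sample rows; the real file would be opened with Charset.forName("GBK").
        String csv = "time,code,name,price,volume\n"
                   + "2023-01-03 09:30,600000,A,10.0,100\n"
                   + "2023-01-03 10:15,600001,B,20.0,50\n";
        InputStream in = new ByteArrayInputStream(csv.getBytes(StandardCharsets.UTF_8));
        try (LineCursor cursor = new LineCursor(in, StandardCharsets.UTF_8)) {
            String line;
            while ((line = cursor.poll()) != null) {
                System.out.println(line); // a spout would emit the line here instead
            }
        }
    }
}
```

With this shape, each `nextTuple()` call does a bounded amount of work and returns, and the decision about what to do at end of file is left to the caller.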

Create a bolt class that receives the data and computes the statistics:

import org.apache.storm.task.OutputCollector;
import org.apache.storm.task.TopologyContext;
import org.apache.storm.topology.OutputFieldsDeclarer;
import org.apache.storm.topology.base.BaseRichBolt;
import org.apache.storm.tuple.Tuple;

import java.text.SimpleDateFormat;
import java.util.Date;
import java.util.HashMap;
import java.util.Map;

public class StockStatisticsBolt extends BaseRichBolt {

    int count = 0; // total number of tuples received
    int fg = 0;    // tuples received since the last printout

    private Map<String, Double> stockTypeVolume = new HashMap<>();
    private Map<String, Double> stockTypeAmount = new HashMap<>();
    private Map<String, Double> hourVolume = new HashMap<>();
    private Map<String, Double> hourAmount = new HashMap<>();

    @Override
    public void prepare(Map<String, Object> map, TopologyContext topologyContext, OutputCollector outputCollector) {
        // Nothing to set up: this bolt does not emit tuples.
    }

    @Override
    public void execute(Tuple tuple) {
        count++;
        String line = tuple.getStringByField("line");
        try {
            // Parse the CSV row: time, stock code, stock name, price, trade volume
            String[] parts = line.split(",");
            String time = parts[0];
            String stockCode = parts[1];
            String stockName = parts[2];
            double price = Double.parseDouble(parts[3]);
            double tradeVolume = Double.parseDouble(parts[4]);
            double amount = tradeVolume * price;

            // Accumulate trade volume and total traded amount per stock;
            // merge() inserts the value if the key is new, otherwise adds to it.
            stockTypeVolume.merge(stockCode, tradeVolume, Double::sum);
            stockTypeAmount.merge(stockCode, amount, Double::sum);

            // Parse the timestamp and extract the hour
            SimpleDateFormat dateFormat = new SimpleDateFormat("yyyy-MM-dd HH:mm");
            Date date = dateFormat.parse(time);
            String hour = new SimpleDateFormat("HH").format(date);

            // Accumulate trade volume and total traded amount per hour
            hourVolume.merge(hour, tradeVolume, Double::sum);
            hourAmount.merge(hour, amount, Double::sum);
        } catch (Exception e) {
            e.printStackTrace();
        } finally {
            // Print the running statistics once every 10,000 tuples
            if (fg < 9999) {
                fg++;
            } else {
                fg = 0;
                printResults();
            }
        }
    }

    @Override
    public void declareOutputFields(OutputFieldsDeclarer declarer) {
        // No tuples are emitted to a downstream bolt, so this method can stay empty.
    }

    // Prints the statistics collected so far
    public void printResults() {
        System.out.println("Stock Type Statistics:");
        for (Map.Entry<String, Double> entry : stockTypeVolume.entrySet()) {
            String stockCode = entry.getKey();
            double volume = entry.getValue();
            double amount = stockTypeAmount.get(stockCode);
            System.out.println("Stock Code: " + stockCode + ", Volume: " + volume + ", Amount: " + amount);
        }

        System.out.println("Hourly Statistics:");
        for (Map.Entry<String, Double> entry : hourVolume.entrySet()) {
            String hour = entry.getKey();
            double volume = entry.getValue();
            double amount = hourAmount.get(hour);
            System.out.println("Hour: " + hour + ", Volume: " + volume + ", Amount: " + amount);
        }

        System.out.println("Total number of stock records: " + count);
    }
}
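The aggregation inside `execute` does not depend on Storm, so it can be tried out on its own. The sketch below is a plain-Java restatement of the same idea: total traded amount (price × volume) per stock code and per hour, using `Map.merge` and `java.time`. `StockAggregator`, `accept`, and the sample rows are made up for illustration; the column order (time, code, name, price, volume) and the `yyyy-MM-dd HH:mm` timestamp format are taken from the bolt above.

```java
import java.time.LocalDateTime;
import java.time.format.DateTimeFormatter;
import java.util.Map;
import java.util.TreeMap;

public class StockAggregator {

    private static final DateTimeFormatter TIME_FORMAT =
            DateTimeFormatter.ofPattern("yyyy-MM-dd HH:mm");

    // TreeMap keeps the keys sorted, so the printout order is stable.
    public final Map<String, Double> amountByStock = new TreeMap<>();
    public final Map<String, Double> amountByHour = new TreeMap<>();

    /** Folds one CSV row (time,code,name,price,volume) into the running totals. */
    public void accept(String line) {
        String[] parts = line.split(",");
        LocalDateTime time = LocalDateTime.parse(parts[0], TIME_FORMAT);
        String stockCode = parts[1];
        double amount = Double.parseDouble(parts[3]) * Double.parseDouble(parts[4]);

        // merge() inserts the value if the key is absent, otherwise adds it to the total.
        amountByStock.merge(stockCode, amount, Double::sum);
        amountByHour.merge(String.format("%02d", time.getHour()), amount, Double::sum);
    }

    public static void main(String[] args) {
        StockAggregator agg = new StockAggregator();
        agg.accept("2023-01-03 09:30,600000,A,10.0,100");
        agg.accept("2023-01-03 09:45,600000,A,11.0,100");
        agg.accept("2023-01-03 10:15,600001,B,20.0,50");
        System.out.println(agg.amountByStock);
        System.out.println(agg.amountByHour);
    }
}
```

Keeping the arithmetic in a class like this makes it possible to check the totals against a handful of known rows before wiring the logic into a topology.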

Finally, create a Topology class that wires the spout and the bolt together:

import org.apache.storm.Config;
import org.apache.storm.LocalCluster;
import org.apache.storm.StormSubmitter;
import org.apache.storm.topology.TopologyBuilder;
import org.apache.storm.utils.Utils;

public class StockAnalysisTopology {

    public static void main(String[] args) {
        try {
            TopologyBuilder builder = new TopologyBuilder();
            builder.setSpout("1spout", new CSVReaderSpout());
            builder.setBolt("2bolt", new StockStatisticsBolt()).shuffleGrouping("1spout");

            Config config = new Config();
            //config.setDebug(true);

            if (args != null && args.length > 0) {
                // A topology name was passed in: submit to a real cluster.
                config.setNumWorkers(1); // number of worker processes
                StormSubmitter.submitTopology(args[0], config, builder.createTopology());
            } else {
                // No arguments: run inside an in-process local cluster.
                LocalCluster cluster = null;
                try {
                    config.setNumWorkers(4);
                    cluster = new LocalCluster();
                    cluster.submitTopology("1topology", config, builder.createTopology());
                    // Let the topology run for a while (the spout exits the JVM when the file ends)
                    Utils.sleep(600000);
                } catch (Exception e) {
                    e.printStackTrace();
                }
            }
        } catch (Exception e) {
            e.printStackTrace();
        }
    }
}

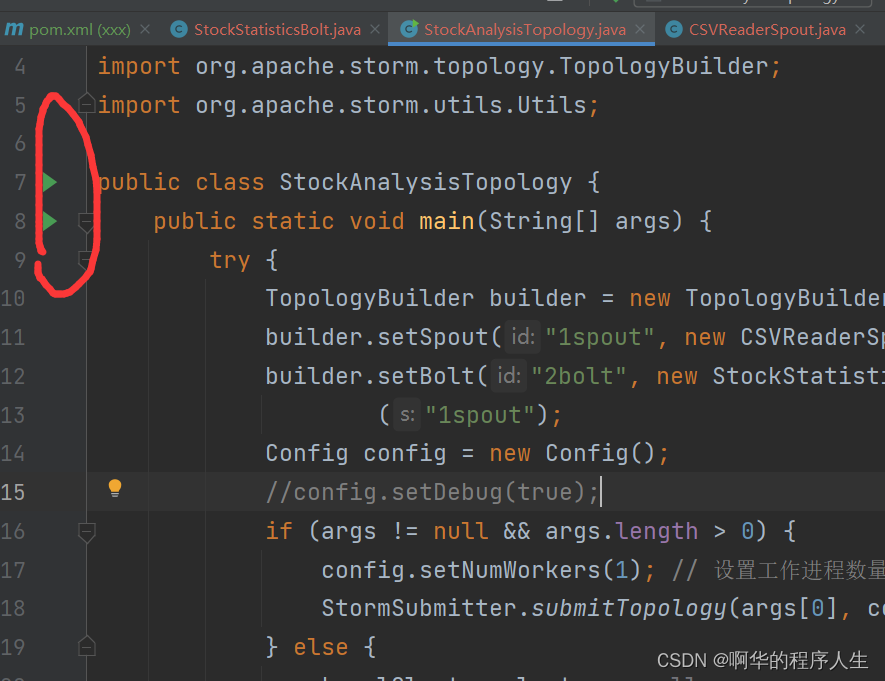

Check that none of the code reports errors. If everything is clean, run the main method of the StockAnalysisTopology class directly, as shown below:

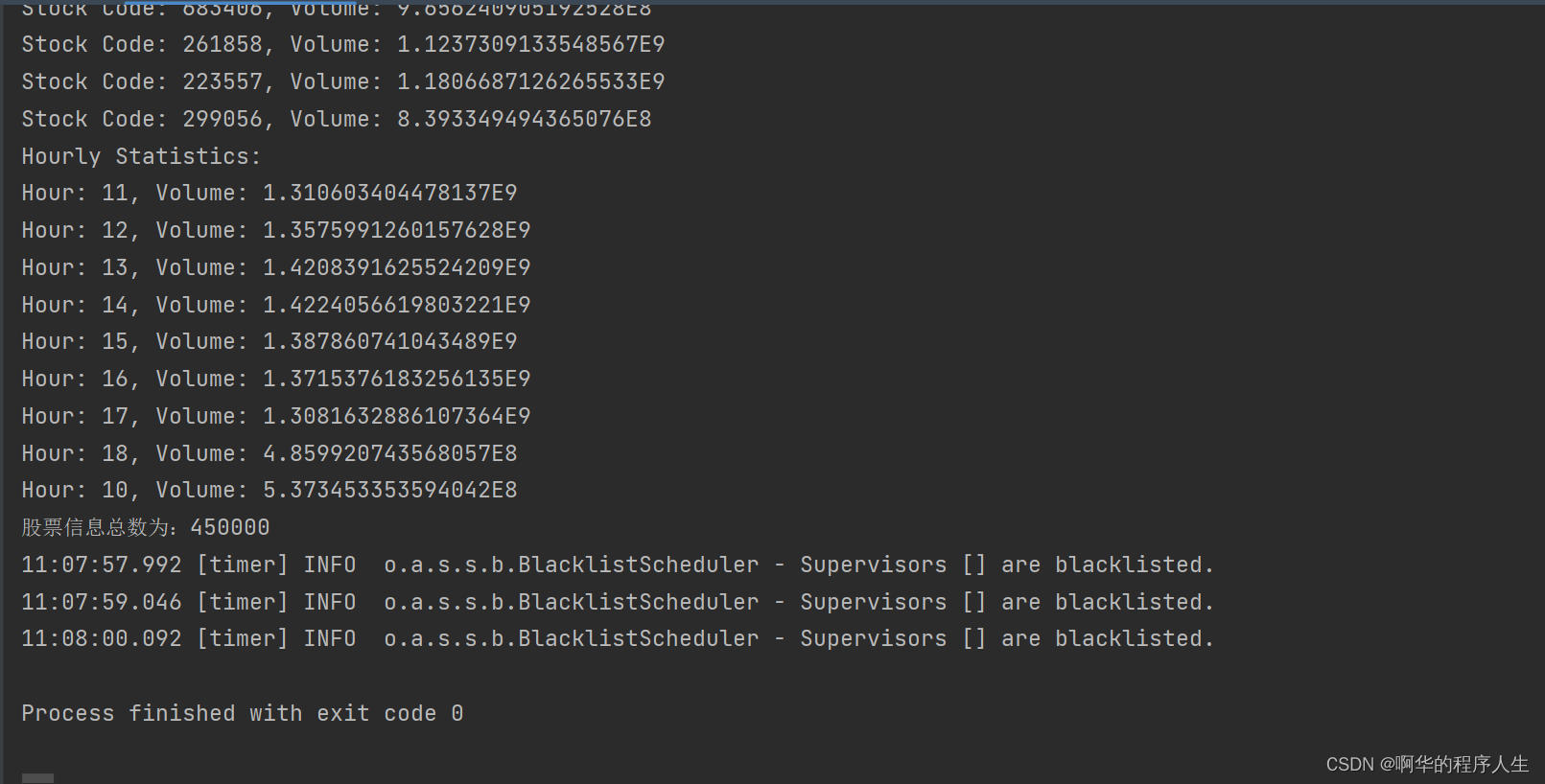

When the run finishes, you should see the following result:

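Next, connect to the remote Storm cluster. First package the project as a jar; in practice the jar only needs to contain the two classes, the spout and the bolt. (A plain package build is enough.)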
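Since the jar only needs to carry the spout and bolt classes, it can be worth confirming that they are actually inside before submitting. The following is a minimal sketch using the JDK's `java.util.jar` API. `JarCheck` and `missingEntries` are hypothetical names, and the entry names assume that both classes live in the default package, as in the code above.

```java
import java.io.IOException;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.jar.JarFile;

public class JarCheck {

    /** Returns the required entries that are NOT present in the jar. */
    public static List<String> missingEntries(Path jar, List<String> required) throws IOException {
        List<String> missing = new ArrayList<>();
        try (JarFile jarFile = new JarFile(jar.toFile())) {
            for (String name : required) {
                if (jarFile.getEntry(name) == null) {
                    missing.add(name);
                }
            }
        }
        return missing;
    }

    public static void main(String[] args) throws IOException {
        if (args.length != 1) {
            System.err.println("usage: java JarCheck <path-to-jar>");
            return;
        }
        // Entry names assume the classes are in the default package.
        List<String> required = Arrays.asList("CSVReaderSpout.class", "StockStatisticsBolt.class");
        System.out.println("missing: " + missingEntries(Paths.get(args[0]), required));
    }
}
```

An empty list means both classes were found; if the classes are moved into a package, the entry names need the package path as a prefix.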

Then modify the StockAnalysisTopology code as shown below, adjusting it to your own setup.

import org.apache.storm.Config;
import org.apache.storm.StormSubmitter;
import org.apache.storm.shade.com.google.common.collect.ImmutableList;
import org.apache.storm.topology.TopologyBuilder;

public class StockAnalysisTopology {

    public static void main(String[] args) {
        String nimbusHost = "127.0.0.1";    // change to your Nimbus host name or IP address
        String zookeeperHost = "127.0.0.1"; // change to your ZooKeeper host name or IP address

        try {
            TopologyBuilder builder = new TopologyBuilder();
            builder.setSpout("csv-reader-spout", new CSVReaderSpout());
            builder.setBolt("stock-statistics-bolt", new StockStatisticsBolt()).shuffleGrouping("csv-reader-spout");

            Config config = new Config();
            config.setDebug(true);

            // Point the client at the Nimbus host and the ZooKeeper server
            config.put(Config.NIMBUS_SEEDS, ImmutableList.of(nimbusHost));
            config.put(Config.STORM_ZOOKEEPER_SERVERS, ImmutableList.of(zookeeperHost));

            // Number of worker processes for the topology (3 here)
            //config.setNumWorkers(3);

            // Path of the packaged jar that the client uploads to Nimbus
            System.setProperty("storm.jar", "D:\\Idea\\java项目\\xxx\\target\\xxx-1.0-SNAPSHOT.jar");

            StormSubmitter.submitTopology("remote-topology", config, builder.createTopology());
        } catch (Exception e) {
            e.printStackTrace();
        }
    }
}

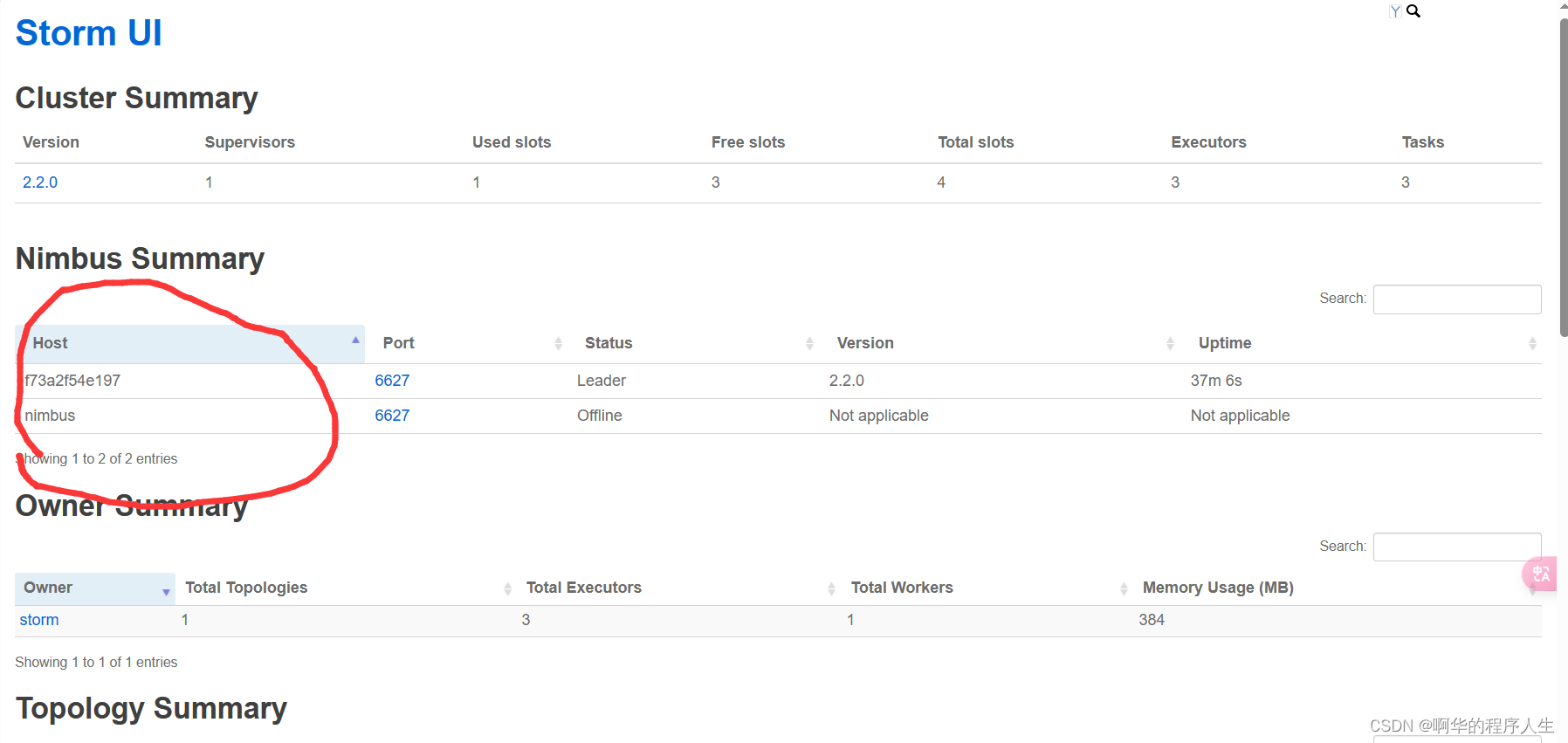

Before running this code, look up the host name (or IP) of the Nimbus leader in the Storm UI, as shown below:

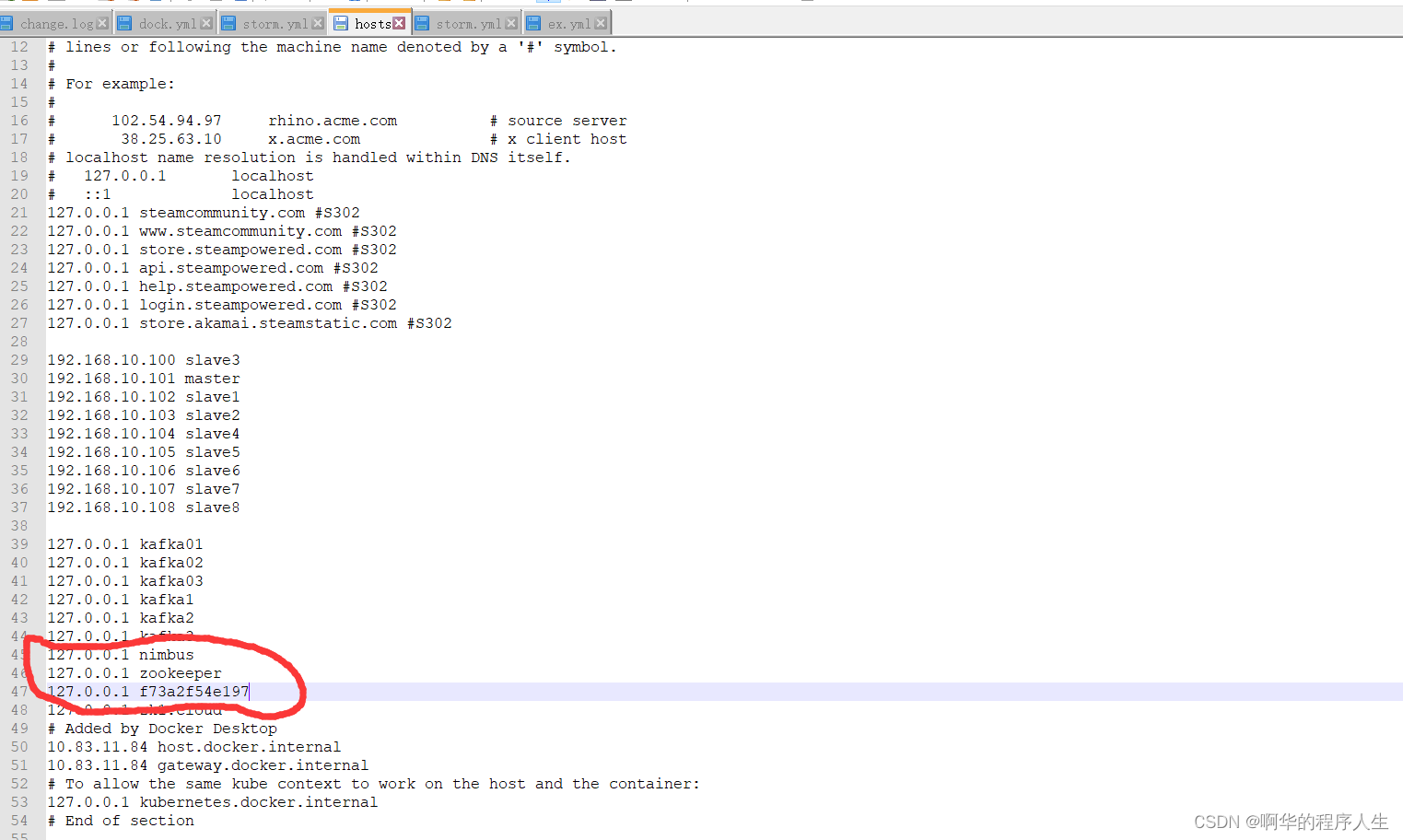

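Add the leader host from above to the hosts file, which is located at C:\Windows\System32\drivers\etc\hosts.

Edit the file as shown below, adding entries for both your nimbus and zookeeper hosts, and then save it.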
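After saving the hosts file, one way to confirm that the new names resolve is a quick lookup from Java, presumably the same lookup the Storm client performs when it connects to the leader by the name Nimbus reports. This is a small sketch using `InetAddress`; `HostsCheck` and `resolve` are hypothetical names, and the host names are passed as arguments because the actual leader name is whatever your own Storm UI shows.

```java
import java.net.InetAddress;
import java.net.UnknownHostException;
import java.util.Optional;

public class HostsCheck {

    /** Returns the resolved address for a host name, or empty if it does not resolve. */
    public static Optional<String> resolve(String host) {
        try {
            return Optional.of(InetAddress.getByName(host).getHostAddress());
        } catch (UnknownHostException e) {
            return Optional.empty();
        }
    }

    public static void main(String[] args) {
        // Usage: java HostsCheck <nimbus-host-name> <zookeeper-host-name>
        for (String host : args) {
            System.out.println(host + " -> " + resolve(host).orElse("NOT RESOLVABLE"));
        }
    }
}
```

If a name prints as not resolvable, the hosts entry is probably missing or misspelled, and the submission is likely to fail with an unknown-host error.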

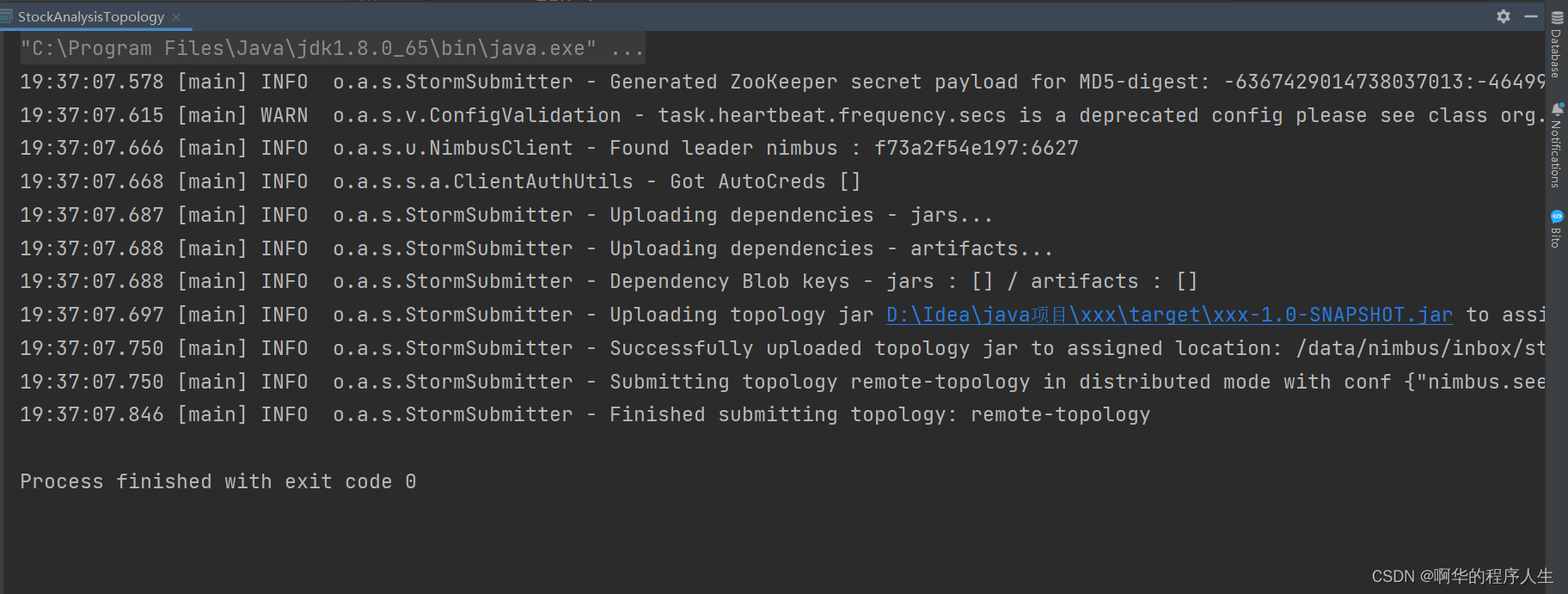

Finally, run the code above. The result looks like this:

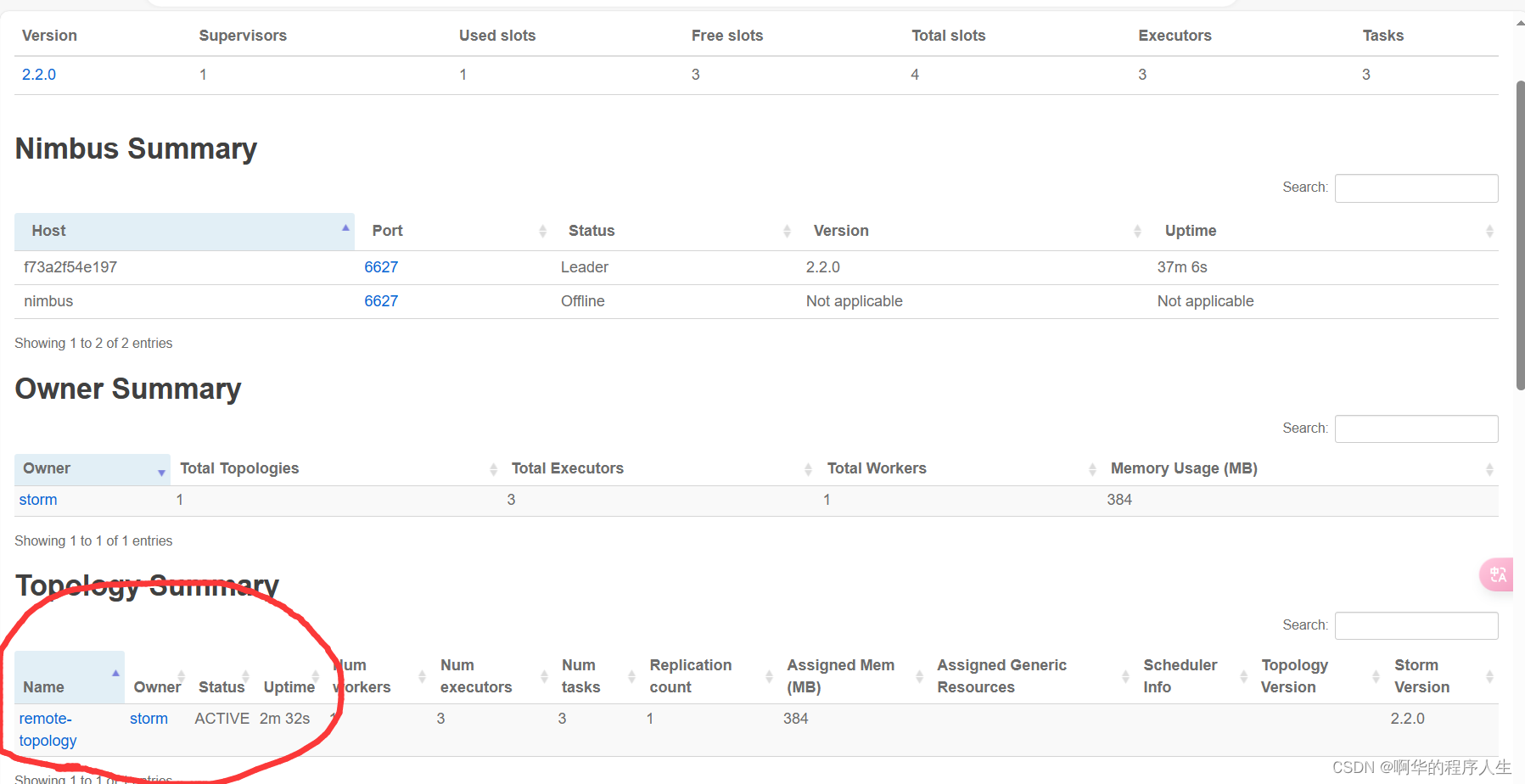

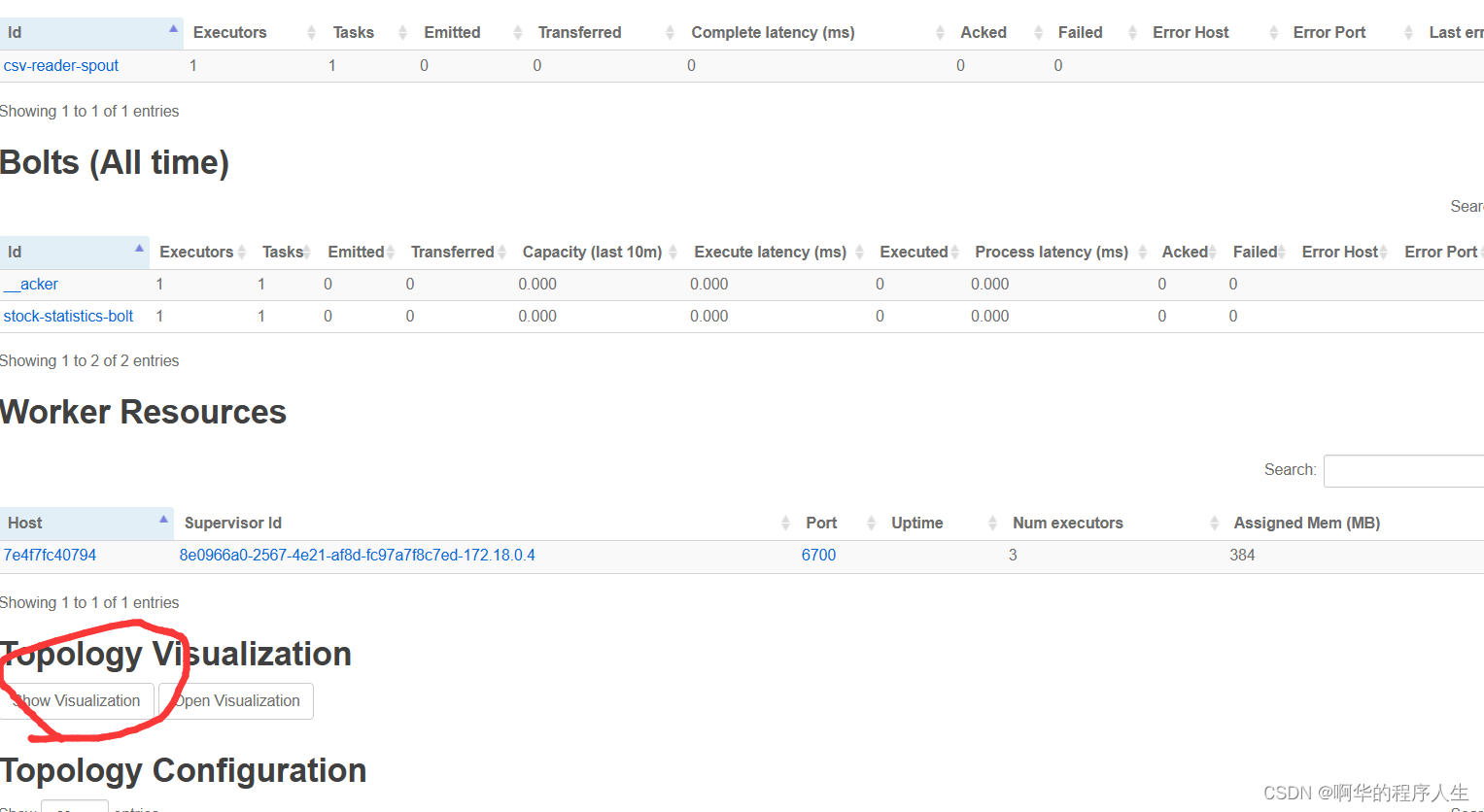

The submission ran successfully. Next, go back to the Storm UI and check that the topology is listed, as shown below:

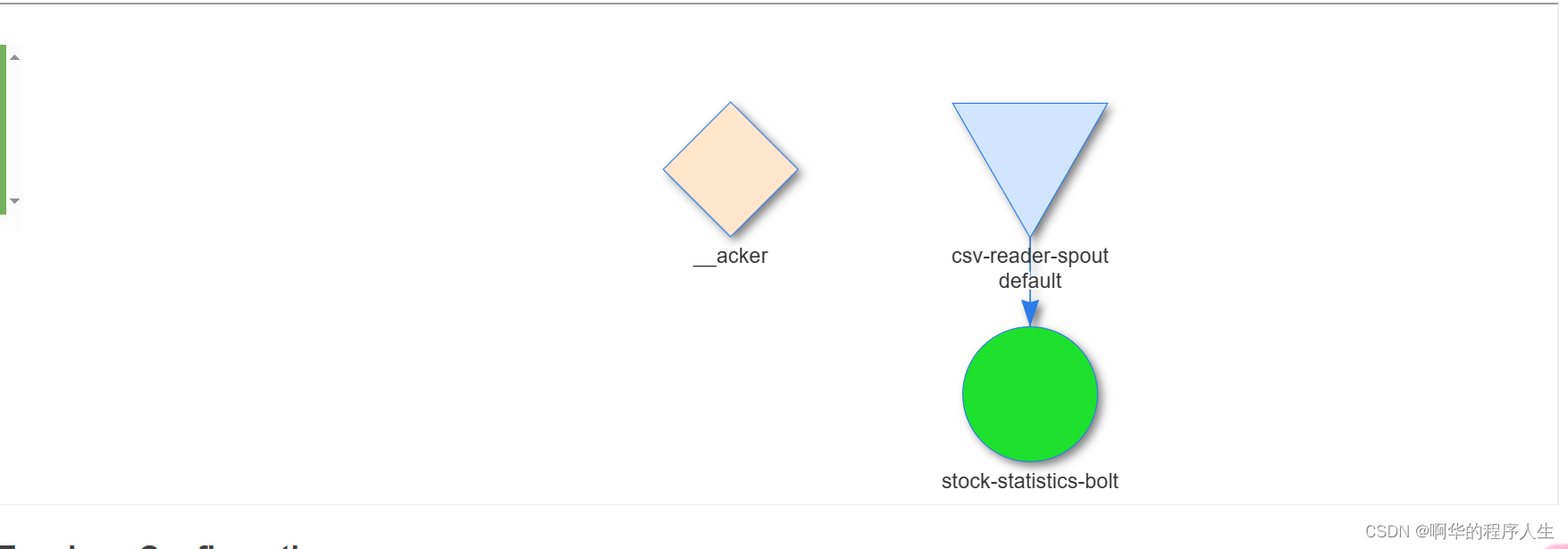

The topology has been submitted successfully. Let's also look at its structure:

That wraps up this round of environment setup and testing.

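After submitting the topology to the cluster, I found that it cannot read the local file; see: http://t.csdnimg.cn/9e53J

It simply cannot: once submitted, the spout runs inside the supervisor container, which has no access to a path on the Windows host.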
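One possible way around this, which is not taken from the linked post, is to bundle the CSV inside the jar and read it from the classpath rather than from a Windows path, since the jar is what gets shipped to the cluster. The following is a minimal sketch under that assumption; `ResourceLines`, `readLines`, and the resource name `stocks.csv` are hypothetical.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStream;
import java.io.InputStreamReader;
import java.io.UncheckedIOException;
import java.nio.charset.Charset;
import java.util.ArrayList;
import java.util.List;

public class ResourceLines {

    /** Reads all lines of a classpath resource; throws if the resource is not found. */
    public static List<String> readLines(ClassLoader loader, String resource, Charset charset) {
        InputStream in = loader.getResourceAsStream(resource);
        if (in == null) {
            throw new IllegalArgumentException("resource not found on classpath: " + resource);
        }
        List<String> lines = new ArrayList<>();
        try (BufferedReader reader = new BufferedReader(new InputStreamReader(in, charset))) {
            String line;
            while ((line = reader.readLine()) != null) {
                lines.add(line);
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return lines;
    }

    public static void main(String[] args) {
        // "stocks.csv" is a hypothetical file placed under src/main/resources before packaging.
        List<String> lines = readLines(
                ResourceLines.class.getClassLoader(), "stocks.csv", Charset.forName("GBK"));
        System.out.println("read " + lines.size() + " lines");
    }
}
```

This only suits data small enough to package with the code; for larger or changing files, mounting a host directory into the supervisor container would be another option to explore.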
