1. Prepare the environment

Operating system: Mac OS X 10.11.6

JDK:1.8.0_111

Hadoop:2.7.3

2. Configure SSH

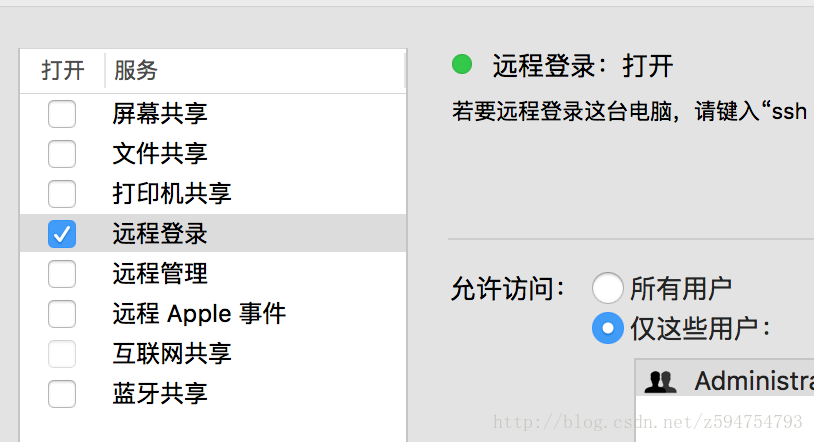

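First, make sure remote login is possible:

Open System Preferences > Sharing and enable Remote Login.

Then run the following in a terminal: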

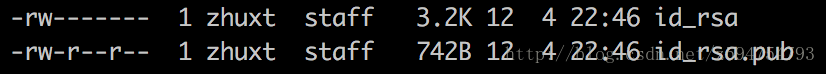

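ssh-keygen -t rsa

Press Enter at every prompt to accept the defaults.

This generates two files in the ~/.ssh directory: id_rsa (the private key) and id_rsa.pub (the public key).

Then run:

cat ~/.ssh/id_rsa.pub > ~/.ssh/authorized_keys

3. Verify SSH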

ssh localhost

Last login: Sat Dec 17 14:25:32 2016
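If `ssh localhost` still asks for a password instead of printing a login line like the one above, two common causes are a key that never reached `authorized_keys` and file modes that are too open (with the default `StrictModes` setting, sshd ignores key files that other users can write to). The following is a minimal sketch, not part of the original setup: `install_pubkey` is a hypothetical helper that appends the key only if it is missing and then tightens the permissions.

```shell
#!/bin/sh
# install_pubkey is a hypothetical helper, not part of OpenSSH.
# $1 = public key file, $2 = authorized_keys file.
install_pubkey() {
  pub="$1"
  auth="$2"
  ssh_dir=$(dirname "$auth")
  mkdir -p "$ssh_dir"
  touch "$auth"
  # Append only if the exact key line is not already present.
  grep -qxF -e "$(cat "$pub")" "$auth" || cat "$pub" >> "$auth"
  # Restrict access to the owner so sshd accepts the files.
  chmod 700 "$ssh_dir"
  chmod 600 "$auth"
}

# Usage with the paths from this article:
# install_pubkey ~/.ssh/id_rsa.pub ~/.ssh/authorized_keys
```

Unlike the plain `>` redirect, this keeps any keys that are already in `authorized_keys` and can be run repeatedly without creating duplicate entries.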

4. Install Hadoop

Download link:

Hadoop 2.7.3

Extract the archive into the directory where you want Hadoop installed:

tar -zxf hadoop-2.7.3.tar.gz

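ln -s hadoop-2.7.3 hadoop

5. Edit the configuration files

The configuration files are in the etc/hadoop directory of the Hadoop installation. If the Java environment variable is not already configured, add the following to hadoop-env.sh:

export JAVA_HOME=/Library/Java/JavaVirtualMachines/jdk1.8.0_111.jdk/Contents/Home

core-site.xml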

This file specifies the NameNode host name and port, and the Hadoop temporary directory.

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://localhost:9000</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>file:/Users/zhuxt/Documents/hadoop/tmp</value>

</property>

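</configuration>

hdfs-site.xml

The default replication factor is 3; set it to 1 here, because a single-node setup has only one DataNode. dfs.namenode.name.dir specifies where the fsimage is stored, and dfs.datanode.data.dir specifies where the block files are stored. Both accept multiple directories separated by commas. As in core-site.xml, the properties go inside the <configuration> element.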

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>file:/Users/zhuxt/Documents/hadoop/tmp/hdfs/name</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>file:/Users/zhuxt/Documents/hadoop/tmp/hdfs/data</value>

</property>

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>localhost:9001</value>

</property>

<property>

<name>dfs.webhdfs.enabled</name>

<value>true</value>

</property>

YARN configuration

mapred-site.xml

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>mapreduce.admin.user.env</name>

<value>HADOOP_MAPRED_HOME=$HADOOP_COMMON_HOME</value>

</property>

<property>

<name>yarn.app.mapreduce.am.env</name>

<value>HADOOP_MAPRED_HOME=$HADOOP_COMMON_HOME</value>

</property>

yarn-site.xml

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

6. Configure the Hadoop environment variables

sudo vim /etc/profile

export HADOOP_HOME=/Users/zhuxt/Documents/hadoop

export PATH=$PATH:$HADOOP_HOME/sbin:$HADOOP_HOME/bin

source /etc/profile
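Before moving on, it can be worth checking that the variables actually took effect in the current shell. The sketch below is only an illustration: `path_contains` and `check_hadoop_env` are hypothetical helper names, and the check assumes the same `bin` and `sbin` layout used in the exports above.

```shell
#!/bin/sh
# path_contains: succeeds if directory $1 is an entry of the
# colon-separated list $2 (defaults to the current $PATH).
path_contains() {
  case ":${2-$PATH}:" in
    *":$1:"*) return 0 ;;
    *) return 1 ;;
  esac
}

# check_hadoop_env: prints one line per problem found and
# returns non-zero if anything is missing.
check_hadoop_env() {
  rc=0
  if [ -z "${HADOOP_HOME:-}" ]; then
    echo "HADOOP_HOME is not set"
    return 1
  fi
  for dir in "$HADOOP_HOME/bin" "$HADOOP_HOME/sbin"; do
    if ! path_contains "$dir"; then
      echo "not on PATH: $dir"
      rc=1
    fi
  done
  return $rc
}

# Usage: check_hadoop_env && echo "Hadoop environment looks fine"
```

If the function reports a problem in a newly opened terminal, the profile file was probably not read by that shell, and running the `source` command again should fix it for the session.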

7. Run Hadoop

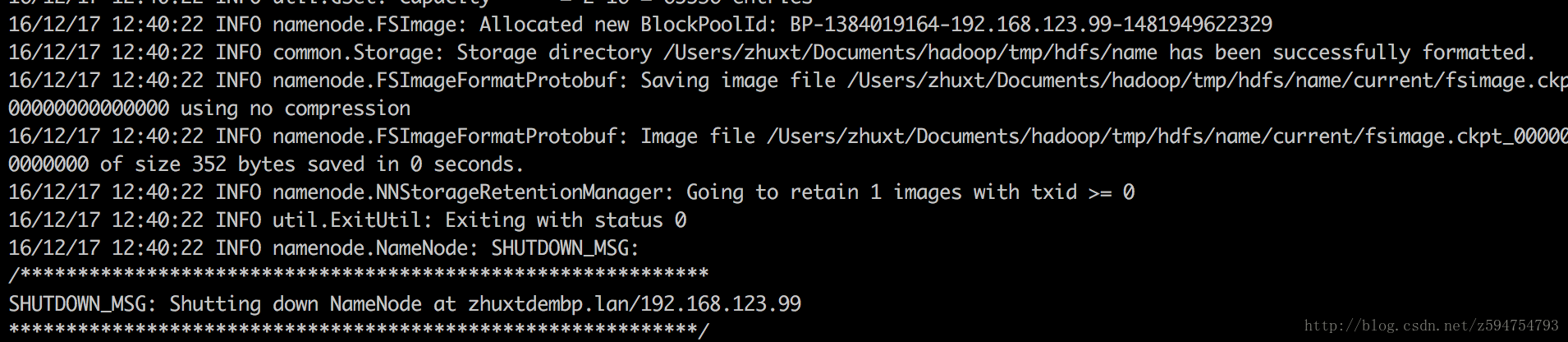

Format HDFS:

hdfs namenode -format

Start HDFS:

start-dfs.sh

Start YARN:

start-yarn.sh
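After both scripts have run, five Java processes should be up: NameNode, DataNode and SecondaryNameNode from `start-dfs.sh`, and ResourceManager and NodeManager from `start-yarn.sh`. The `jps` tool from the JDK lists them. As a rough sketch, the hypothetical helper `missing_daemons` below reads `jps` output and prints whichever of the five is absent.

```shell
#!/bin/sh
# missing_daemons is a hypothetical helper. It reads jps-style output
# ("<pid> <ClassName>" per line) on stdin and prints every expected
# Hadoop daemon that does not appear in it.
missing_daemons() {
  running=$(awk '{print $2}')
  for name in NameNode DataNode SecondaryNameNode ResourceManager NodeManager; do
    # -x matches whole lines, so NameNode does not match SecondaryNameNode.
    echo "$running" | grep -qx "$name" || echo "$name"
  done
}

# Usage on the machine running Hadoop:
# jps | missing_daemons
```

Empty output means all five daemons were found. If one is listed, the log files under the `logs` directory of the Hadoop installation are usually the first place to look.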

Run a test example

Create the HDFS directory:

hdfs dfs -mkdir -p /user/zhuxt/input

Upload the test files:

hdfs dfs -put $HADOOP_HOME/etc/hadoop/*.xml input
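The upload command uses the relative path `input`, while the directory was created as `/user/zhuxt/input`. The two match because HDFS resolves relative paths against the current user's home directory, `/user/<username>`. The sketch below only mimics that rule locally for illustration. `hdfs_abs_path` is a hypothetical helper, not a Hadoop command, and `zhuxt` is the user name taken from this article.

```shell
#!/bin/sh
# hdfs_abs_path is a hypothetical helper that mimics how HDFS resolves
# paths: absolute paths are kept, relative ones go under /user/<name>.
# $1 = path, $2 = user name (defaults to the current local user).
hdfs_abs_path() {
  target="$1"
  user="${2:-$(id -un)}"
  case "$target" in
    /*) echo "$target" ;;
    *)  echo "/user/$user/$target" ;;
  esac
}

hdfs_abs_path input zhuxt      # prints /user/zhuxt/input
hdfs_abs_path /tmp/data zhuxt  # prints /tmp/data
```

If your local user name differs from `zhuxt`, create the directory under your own name, or the relative `input` path will not point at it.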

Run the example jar (the jar version must match the installed Hadoop release, 2.7.3 here):

hadoop jar $HADOOP_HOME/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.3.jar grep input output 'dfs[a-z.]+'
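A MapReduce job refuses to start if its output directory already exists, so running the example a second time fails until `output` is removed. The wrapper below is a sketch under a few assumptions: `run_cmd` and `run_grep_example` are hypothetical names, the jar is assumed to be the examples jar shipped with the 2.7.3 release installed in this article, and `DRY_RUN=1` only prints the commands so they can be reviewed first.

```shell
#!/bin/sh
# run_cmd: print the command when DRY_RUN=1, otherwise execute it.
run_cmd() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# run_grep_example: clear the previous output, then rerun the grep job.
# Assumes HADOOP_HOME is set and the examples jar is version 2.7.3.
run_grep_example() {
  jar="$HADOOP_HOME/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.3.jar"
  # -f keeps the command quiet when the directory does not exist yet.
  run_cmd hdfs dfs -rm -r -f output
  run_cmd hadoop jar "$jar" grep input output 'dfs[a-z.]+'
}

# Usage:
# DRY_RUN=1; run_grep_example   # show the commands only
# DRY_RUN=0; run_grep_example   # actually run them
```

Deleting `output` discards the previous results, so copy anything you want to keep out of HDFS before rerunning.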

View the result:

hdfs dfs -cat output/part-r-00000

16/12/17 12:56:25 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

1 dfsadmin

1 dfs.webhdfs.enabled

1 dfs.replication

1 dfs.namenode.secondary.http

1 dfs.namenode.name.dir

1 dfs.datanode.data.dir

Done.
