MapReduce: Common Friends

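Background

Most social networking sites today offer a common friends (mutual friends) feature, which helps users share pictures, messages, and videos with their friends.

Definition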

Let $U = \{U_1, U_2, \ldots, U_n\}$ be the set of all users. The goal is to find the common friends of every pair $(U_i, U_j)$ with $i \neq j$.
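Stated a little more formally: writing $F(U_i)$ for the friend list of user $U_i$ and $\mathrm{CF}$ for the result (both symbols are introduced here for illustration only and are not used in the original text), the quantity computed for each pair is an intersection of two friend lists:

$$
\mathrm{CF}(U_i, U_j) = F(U_i) \cap F(U_j), \qquad i \neq j
$$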

For example:

User 1's friend list contains 100, 200, 300, 400, 500

User 2's friend list contains 200, 300

The common friends of the two users are therefore 200 and 300.
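The example above amounts to a set intersection. The following is a minimal sketch in plain Java (no Hadoop required); the class name `CommonFriendsExample` and the method `commonFriends` are illustrative names, not part of the original code.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Set;
import java.util.TreeSet;

public class CommonFriendsExample {

    // Returns the IDs that appear in both friend lists, in ascending order.
    static Set<Long> commonFriends(List<Long> friendsOfA, List<Long> friendsOfB) {
        Set<Long> common = new TreeSet<>(friendsOfA);
        common.retainAll(friendsOfB); // keep only the IDs also present in B
        return common;
    }

    public static void main(String[] args) {
        List<Long> user1 = Arrays.asList(100L, 200L, 300L, 400L, 500L);
        List<Long> user2 = Arrays.asList(200L, 300L);
        System.out.println(commonFriends(user1, user2)); // prints [200, 300]
    }
}
```

On a single machine this is all that is needed; MapReduce becomes useful when the friend lists are too large or too numerous to intersect in one process.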

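Sample input (each line holds a user ID followed by the IDs of that user's friends, separated by spaces)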

100 200 300 400 500 600

200 100 300 400

300 100 200 400 500

400 100 200 300

500 100 300

600 100

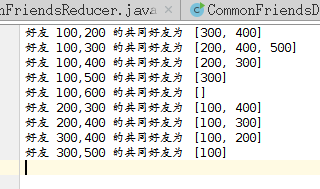

Sample output

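Mapper stage task

In the mapper stage, each input line is split into tokens, and buildSortedKey() orders the two IDs of each pair so that (100,200) and (200,100) are emitted under one identical key rather than two different ones. The mapper then sends key-value pairs of the form <(user1, user2), friends> to the reducer, where friends is the friend list of the user on the current input line.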
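To make the emitted records concrete, here is a small plain-Java sketch of what the mapper produces for one input line. It runs without Hadoop. The class name `MapperEmitSketch`, the method `emit`, and the `key -> value` string format are assumptions made for this illustration; only `buildSortedKey` mirrors a name from the original code.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

public class MapperEmitSketch {

    // Orders the pair numerically so (200,100) and (100,200) give one key.
    static String buildSortedKey(String person, String friend) {
        long p = Long.parseLong(person);
        long f = Long.parseLong(friend);
        return p < f ? person + "," + friend : friend + "," + person;
    }

    // Returns the "key -> value" records the mapper would emit for one line.
    static List<String> emit(String line) {
        String[] tokens = line.trim().split("\\s+");
        String person = tokens[0];
        // A user with a single friend cannot share a friend with that friend,
        // so the friend list is left empty in that case.
        String friends = tokens.length == 2
                ? ""
                : String.join(",", Arrays.copyOfRange(tokens, 1, tokens.length));
        List<String> records = new ArrayList<>();
        for (int i = 1; i < tokens.length; i++) {
            records.add(buildSortedKey(person, tokens[i]) + " -> " + friends);
        }
        return records;
    }

    public static void main(String[] args) {
        for (String record : emit("200 100 300 400")) {
            System.out.println(record);
        }
        // prints:
        // 100,200 -> 100,300,400
        // 200,300 -> 100,300,400
        // 200,400 -> 100,300,400
    }
}
```

Note that the IDs are compared as numbers, not as strings: a plain string comparison would place "100" before "90", which is harmless for key consistency but gives a less natural ordering.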

Mapper stage code

import java.io.IOException;

import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

public class CommonFriendsMapper extends Mapper<LongWritable, Text, Text, Text> {

    // Joins the friend IDs (every token after the first) with commas.
    // A user with exactly one friend cannot have a common friend with that
    // friend, so an empty list is returned in that case.
    static String getFriends(String[] tokens) {
        if (tokens.length == 2) return "";
        StringBuilder builder = new StringBuilder();
        for (int i = 1; i < tokens.length; i++) {
            builder.append(tokens[i]);
            if (i < (tokens.length - 1)) {
                builder.append(",");
            }
        }
        return builder.toString();
    }

    // Puts the smaller ID first so both users of a pair produce the same key.
    static String buildSortedKey(String person, String friend) {
        long p = Long.parseLong(person);
        long f = Long.parseLong(friend);
        if (p < f) {
            return person + "," + friend;
        } else {
            return friend + "," + person;
        }
    }

    @Override
    public void map(LongWritable key, Text value, Context context)
            throws IOException, InterruptedException {
        // Input line format: <person> <friend1> <friend2> ...
        String[] tokens = value.toString().trim().split("\\s+");
        String friends = getFriends(tokens);
        String person = tokens[0];
        for (int i = 1; i < tokens.length; i++) {
            String friend = tokens[i];
            String reduceKeyAsString = buildSortedKey(person, friend);
            context.write(new Text(reduceKeyAsString), new Text(friends));
        }
    }
}

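Reducer stage task

In this stage the reducer processes the <(user1, user2), friends> key-value pairs sent by the mapper and uses a Map (a HashMap) to work out the common friends of user1 and user2.

The approach is as follows:

numOfValues counts how many values (friend lists) arrive for the same key, and a HashMap counts how many of those lists each friend appears in. A friend whose count equals numOfValues appears in every list and is therefore a common friend of the two users. If any received list is empty, one of the two users has only a single friend, so the reducer outputs an empty result for that pair.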
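The counting idea can be tried in isolation with the plain-Java sketch below, which takes the friend lists received for one key and returns the friends present in all of them. The class name `ReducerCountSketch` and the method `commonFriends` are illustrative assumptions; a `TreeMap` is used here instead of a `HashMap` only to make the output order deterministic.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

public class ReducerCountSketch {

    // friendLists holds the comma-separated lists received for one pair key.
    static List<String> commonFriends(List<String> friendLists) {
        Map<String, Integer> counts = new TreeMap<>();
        int numOfValues = 0;
        for (String friends : friendLists) {
            if (friends.isEmpty()) {
                return new ArrayList<>(); // one user has only a single friend
            }
            for (String friend : friends.split(",")) {
                counts.merge(friend, 1, Integer::sum);
            }
            numOfValues++;
        }
        // Keep the friends that occurred in every list.
        List<String> common = new ArrayList<>();
        for (Map.Entry<String, Integer> entry : counts.entrySet()) {
            if (entry.getValue() == numOfValues) {
                common.add(entry.getKey());
            }
        }
        return common;
    }

    public static void main(String[] args) {
        // The two values that arrive for key (100,200) in the sample input.
        List<String> values = Arrays.asList("200,300,400,500,600", "100,300,400");
        System.out.println(commonFriends(values)); // prints [300, 400]
    }
}
```

This approach relies on friendships being symmetric in the input. If only one of the two users lists the other, a single value arrives for the key and every friend in it would be reported as common.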

Reducer stage code

import java.io.IOException;
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

public class CommonFriendsReducer extends Reducer<Text, Text, Text, Text> {

    @Override
    public void reduce(Text key, Iterable<Text> values, Context context)
            throws IOException, InterruptedException {
        // friend ID -> number of friend lists in which it appears
        Map<String, Integer> map = new HashMap<String, Integer>();
        int numOfValues = 0;
        for (Text value : values) {
            String friends = value.toString();
            // An empty list means one user has only this single friend,
            // so the pair has no common friends.
            if (friends.equals("")) {
                context.write(new Text("Common friends of " + key), new Text("[]"));
                return;
            }
            addFriends(map, friends);
            numOfValues++;
        }

        // A friend present in every list is common to both users.
        List<String> commonFriends = new ArrayList<String>();
        for (Map.Entry<String, Integer> entry : map.entrySet()) {
            if (entry.getValue() == numOfValues) {
                commonFriends.add(entry.getKey());
            }
        }
        context.write(new Text("Common friends of " + key), new Text(commonFriends.toString()));
    }

    // Increments the occurrence count of every friend in a comma-separated list.
    static void addFriends(Map<String, Integer> map, String friendsList) {
        String[] friends = friendsList.split(",");
        for (String friend : friends) {
            Integer count = map.get(friend);
            if (count == null) {
                map.put(friend, 1);
            } else {
                map.put(friend, ++count);
            }
        }
    }
}

Complete code

import com.deng.FileUtil; // the author's own helper class, used to delete the old output directory

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;
import java.util.*;

public class CommonFriendsDriver {

    public static class CommonFriendsMapper extends Mapper<LongWritable, Text, Text, Text> {

        // Joins the friend IDs with commas; empty if the user has a single friend.
        static String getFriends(String[] tokens) {
            if (tokens.length == 2) return "";
            StringBuilder builder = new StringBuilder();
            for (int i = 1; i < tokens.length; i++) {
                builder.append(tokens[i]);
                if (i < (tokens.length - 1)) {
                    builder.append(",");
                }
            }
            return builder.toString();
        }

        // Puts the smaller ID first so both users of a pair produce the same key.
        static String buildSortedKey(String person, String friend) {
            long p = Long.parseLong(person);
            long f = Long.parseLong(friend);
            if (p < f) {
                return person + "," + friend;
            } else {
                return friend + "," + person;
            }
        }

        @Override
        public void map(LongWritable key, Text value, Context context)
                throws IOException, InterruptedException {
            String[] tokens = value.toString().trim().split("\\s+");
            String friends = getFriends(tokens);
            String person = tokens[0];
            for (int i = 1; i < tokens.length; i++) {
                String friend = tokens[i];
                String reduceKeyAsString = buildSortedKey(person, friend);
                context.write(new Text(reduceKeyAsString), new Text(friends));
            }
        }
    }

    public static class CommonFriendsReducer extends Reducer<Text, Text, Text, Text> {

        @Override
        public void reduce(Text key, Iterable<Text> values, Context context)
                throws IOException, InterruptedException {
            Map<String, Integer> map = new HashMap<String, Integer>();
            int numOfValues = 0;
            for (Text value : values) {
                String friends = value.toString();
                if (friends.equals("")) {
                    context.write(new Text("Common friends of " + key), new Text("[]"));
                    return;
                }
                addFriends(map, friends);
                numOfValues++;
            }

            List<String> commonFriends = new ArrayList<String>();
            for (Map.Entry<String, Integer> entry : map.entrySet()) {
                if (entry.getValue() == numOfValues) {
                    commonFriends.add(entry.getKey());
                }
            }
            context.write(new Text("Common friends of " + key), new Text(commonFriends.toString()));
        }

        static void addFriends(Map<String, Integer> map, String friendsList) {
            String[] friends = friendsList.split(",");
            for (String friend : friends) {
                Integer count = map.get(friend);
                if (count == null) {
                    map.put(friend, 1);
                } else {
                    map.put(friend, ++count);
                }
            }
        }
    }

    public static void main(String[] args) throws Exception {
        // Remove any previous output directory; the job fails if it already exists.
        FileUtil.deleteDirs("output");
        Configuration conf = new Configuration();
        // Input and output paths are hard-coded here instead of being read from args.
        String[] otherArgs = new String[]{"input/CommonFriends.txt", "output"};
        if (otherArgs.length != 2) {
            System.err.println("Invalid arguments");
            System.exit(2);
        }
        // Job.getInstance replaces the deprecated Job constructor.
        Job job = Job.getInstance(conf, "CommonFriendsDriver");
        FileInputFormat.addInputPath(job, new Path(otherArgs[0]));
        FileOutputFormat.setOutputPath(job, new Path(otherArgs[1]));
        job.setJarByClass(CommonFriendsDriver.class);
        job.setMapperClass(CommonFriendsMapper.class);
        job.setReducerClass(CommonFriendsReducer.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(Text.class);
        System.exit(job.waitForCompletion(true) ? 0 : 1);
    }
}
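To check the logic without a Hadoop installation, the whole pipeline can be simulated in memory. The sketch below is not the author's code: the class `LocalCommonFriends` and its methods `mapAndShuffle`, `reduce`, and `run` are hypothetical names, and a `TreeMap` stands in for the shuffle phase that groups values by key.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

public class LocalCommonFriends {

    static String buildSortedKey(String a, String b) {
        return Long.parseLong(a) < Long.parseLong(b) ? a + "," + b : b + "," + a;
    }

    // Map + shuffle: group each user's friend list under every sorted pair key.
    static Map<String, List<String>> mapAndShuffle(List<String> lines) {
        Map<String, List<String>> grouped = new TreeMap<>();
        for (String line : lines) {
            String[] tokens = line.trim().split("\\s+");
            String friends = tokens.length == 2
                    ? ""
                    : String.join(",", Arrays.copyOfRange(tokens, 1, tokens.length));
            for (int i = 1; i < tokens.length; i++) {
                grouped.computeIfAbsent(buildSortedKey(tokens[0], tokens[i]),
                        k -> new ArrayList<>()).add(friends);
            }
        }
        return grouped;
    }

    // Reduce: keep the friends that occur in every list received for the key.
    static List<String> reduce(List<String> friendLists) {
        Map<String, Integer> counts = new TreeMap<>();
        for (String friends : friendLists) {
            if (friends.isEmpty()) {
                return new ArrayList<>();
            }
            for (String friend : friends.split(",")) {
                counts.merge(friend, 1, Integer::sum);
            }
        }
        List<String> common = new ArrayList<>();
        for (Map.Entry<String, Integer> e : counts.entrySet()) {
            if (e.getValue() == friendLists.size()) {
                common.add(e.getKey());
            }
        }
        return common;
    }

    static Map<String, List<String>> run(List<String> lines) {
        Map<String, List<String>> result = new TreeMap<>();
        for (Map.Entry<String, List<String>> e : mapAndShuffle(lines).entrySet()) {
            result.put(e.getKey(), reduce(e.getValue()));
        }
        return result;
    }

    public static void main(String[] args) {
        List<String> sample = Arrays.asList(
                "100 200 300 400 500 600",
                "200 100 300 400",
                "300 100 200 400 500",
                "400 100 200 300",
                "500 100 300",
                "600 100");
        run(sample).forEach((pair, common) -> System.out.println(pair + "\t" + common));
    }
}
```

Run on the sample input, this yields nine pairs; for instance the pair 100,200 maps to [300, 400], and the pair 100,600 maps to an empty list because user 600 has only one friend.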
