Gradient Boosting with XGBoost

The experiments were run in Google Colab.

XGBoost parameters

For background, see the following blog post:

Author: 大石头的笔记

Link: https://juejin.cn/post/6844904134336839693

Source: Juejin (掘金)

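XGBoost parameters fall into three types: general parameters, booster parameters, and learning task parameters.

- General parameters: control which booster is used during boosting. The common boosters are the tree model (tree) and the linear model (linear model).

- Booster parameters: depend on which booster was chosen.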

- Learning Task parameters: define the learning scenario. For example, a regression task may use different parameters than a ranking task.

- Beyond these, there may be further parameters that are used on the command line.
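As a rough illustration of the three parameter types, the sketch below groups a few well-known XGBoost parameters into separate dictionaries and merges them into one flat dictionary. The grouping variables and the `build_params` helper are hypothetical names used only for this example, and the values are placeholders rather than tuned settings.

```python
# Hypothetical grouping of XGBoost parameters by the three types listed above.
general_params = {
    "booster": "gbtree",   # tree booster; "gblinear" would select the linear model
    "verbosity": 1,
}
booster_params = {
    "eta": 0.1,            # learning rate of the tree booster
    "max_depth": 3,
    "subsample": 1.0,
}
task_params = {
    "objective": "reg:squarederror",  # regression with squared error
    "eval_metric": "rmse",
}


def build_params(*groups):
    """Merge parameter groups into one flat dict, rejecting duplicate keys."""
    merged = {}
    for group in groups:
        overlap = merged.keys() & group.keys()
        if overlap:
            raise ValueError(f"duplicate parameter(s): {sorted(overlap)}")
        merged.update(group)
    return merged


params = build_params(general_params, booster_params, task_params)
print(params)
```

A dictionary like `params` can be passed as the first argument to `xgb.train(params, dtrain)`, where `dtrain` is an `xgb.DMatrix`. The scikit-learn wrapper used later in this post takes the same settings as keyword arguments instead.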

import pandas as pd

import numpy as np

df = pd.read_csv('/content/drive/MyDrive/NOx/dataset/NOx_df.csv')

df

| | Unnamed: 0 | t | mainsteam | fuel | o2 | primaryfan | fan | Acoal | Bcoal | Ccoal | Dcoal | brunout | NOx1 | NOx2 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 0 | 0.000000 | 0.039618 | 0.162146 | 0.655239 | 0.132023 | 0.061273 | 0.658795 | 0.418702 | 0.440941 | 0.000062 | 0.1 | 0.435172 | 0.405764 |

| 1 | 1 | 0.000001 | 0.039614 | 0.162172 | 0.653935 | 0.129584 | 0.060510 | 0.654308 | 0.415185 | 0.440287 | 0.000062 | 0.1 | 0.435172 | 0.405764 |

| 2 | 2 | 0.000002 | 0.039610 | 0.162199 | 0.652631 | 0.127146 | 0.059748 | 0.649822 | 0.411667 | 0.439633 | 0.000062 | 0.1 | 0.435172 | 0.405764 |

| 3 | 3 | 0.000003 | 0.039606 | 0.162226 | 0.651328 | 0.134634 | 0.058985 | 0.645336 | 0.411790 | 0.440069 | 0.000062 | 0.1 | 0.435172 | 0.405764 |

| 4 | 4 | 0.000005 | 0.039602 | 0.162252 | 0.650024 | 0.142122 | 0.058222 | 0.640850 | 0.411913 | 0.440505 | 0.000062 | 0.1 | 0.435172 | 0.405764 |

| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |

| 863995 | 863995 | 0.999995 | 0.342407 | 0.336440 | 0.523509 | 0.168394 | 0.085292 | 0.285160 | 0.396835 | 0.421643 | 0.369558 | 1.0 | 0.529368 | 0.587031 |

| 863996 | 863996 | 0.999997 | 0.343297 | 0.337215 | 0.522751 | 0.158369 | 0.083910 | 0.272471 | 0.396628 | 0.421126 | 0.368794 | 1.0 | 0.529574 | 0.588070 |

| 863997 | 863997 | 0.999998 | 0.344188 | 0.337989 | 0.521994 | 0.148344 | 0.082527 | 0.259781 | 0.396420 | 0.420609 | 0.368030 | 1.0 | 0.530418 | 0.588785 |

| 863998 | 863998 | 0.999999 | 0.345079 | 0.338763 | 0.521236 | 0.131015 | 0.084145 | 0.268946 | 0.395324 | 0.422463 | 0.368412 | 1.0 | 0.531262 | 0.589500 |

| 863999 | 863999 | 1.000000 | 0.345969 | 0.339538 | 0.520478 | 0.113685 | 0.085763 | 0.278111 | 0.394229 | 0.424317 | 0.368794 | 1.0 | 0.532105 | 0.590214 |

864000 rows × 14 columns

Select the features and the target

X = df[["t",'mainsteam','fuel','o2','primaryfan','fan','Acoal','Bcoal','Ccoal','Dcoal','brunout']].values

y = df[['NOx1']].values

X.shape, y.shape

((864000, 11), (864000, 1))

Split into training and test sets

from sklearn.model_selection import train_test_split

# Split the dataset: 70% training data, 30% test data

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3)

X_train.shape, X_test.shape, y_train.shape, y_test.shape

((604800, 11), (259200, 11), (604800, 1), (259200, 1))
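One caveat: `train_test_split` shuffles rows by default, and the rows here look time-ordered (the `t` column rises steadily from 0 to 1, and neighboring rows are nearly identical). If that reading is right, a random split can place near-duplicate neighbors in both sets and make the test score look better than it would on unseen future data. The sketch below shows a chronological split as an alternative. The `chronological_split` helper and the toy arrays are hypothetical and stand in for the real `X` and `y`.

```python
import numpy as np


def chronological_split(X, y, test_size=0.3):
    """Split without shuffling: the earliest rows train, the latest rows test."""
    n_test = int(round(len(X) * test_size))
    split = len(X) - n_test
    return X[:split], X[split:], y[:split], y[split:]


# Toy stand-in for the real data: 10 time-ordered samples with 2 features.
X_demo = np.arange(20, dtype=float).reshape(10, 2)
y_demo = np.arange(10, dtype=float).reshape(10, 1)

X_tr, X_te, y_tr, y_te = chronological_split(X_demo, y_demo, test_size=0.3)
print(X_tr.shape, X_te.shape, y_tr.shape, y_te.shape)
```

Passing `shuffle=False` to `train_test_split` gives the same kind of ordered split without a custom helper.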

Gradient boosting regression with XGBoost

import xgboost as xgb

model_r = xgb.XGBRegressor()

model_r

XGBRegressor(base_score=0.5, booster='gbtree', colsample_bylevel=1,

colsample_bynode=1, colsample_bytree=1, gamma=0,

importance_type='gain', learning_rate=0.1, max_delta_step=0,

max_depth=3, min_child_weight=1, missing=None, n_estimators=100,

n_jobs=1, nthread=None, objective='reg:linear', random_state=0,

reg_alpha=0, reg_lambda=1, scale_pos_weight=1, seed=None,

silent=None, subsample=1, verbosity=1)

model_r.fit(X_train, y_train) # fit the model on the training data

model_r.score(X_test, y_test) # R² (coefficient of determination) on the test data

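The model scores 0.8071 (80.71%) on the test set. For a regressor, `score` returns the coefficient of determination R², not a classification accuracy.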

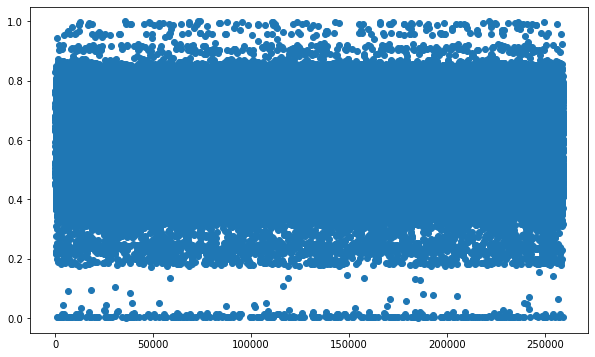
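For a regressor, `score` reports the coefficient of determination, R² = 1 - SS_res / SS_tot. The sketch below computes it by hand, together with the RMSE, on a few made-up values. The helper names and the toy arrays are hypothetical and are not results from the NOx dataset.

```python
import numpy as np


def r2_manual(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    # Flatten both inputs: subtracting an (n, 1) array from an (n,) array
    # would broadcast to (n, n) and silently give a wrong result.
    y_true = np.asarray(y_true, dtype=float).ravel()
    y_pred = np.asarray(y_pred, dtype=float).ravel()
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return float(1.0 - ss_res / ss_tot)


def rmse_manual(y_true, y_pred):
    """Root mean squared error, in the same units as the target."""
    y_true = np.asarray(y_true, dtype=float).ravel()
    y_pred = np.asarray(y_pred, dtype=float).ravel()
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))


# Toy values: the target has shape (n, 1) like y_test, predictions shape (n,).
y_true_demo = np.array([[1.0], [2.0], [3.0], [4.0]])
y_pred_demo = np.array([1.1, 1.9, 3.2, 3.8])

r2_demo = r2_manual(y_true_demo, y_pred_demo)
rmse_demo = rmse_manual(y_true_demo, y_pred_demo)
print(r2_demo, rmse_demo)
```

Reporting RMSE next to R² can help, since RMSE is expressed in the units of the (here normalized) target.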

Generate predictions

y_ = model_r.predict(X_test)

y_, y_test

import matplotlib.pyplot as plt

from matplotlib.pylab import rcParams

%matplotlib inline

epochs = range(0, y_test.shape[0])

plt.figure(figsize=(10, 6))

plt.scatter(epochs, y_test)

plt.figure(figsize=(10, 6))

plt.scatter(epochs, y_)
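Because the test rows were shuffled by the split, the sample index on the x-axis of these plots carries no time meaning. A possible refinement, sketched below, reorders the test samples by the `t` feature (column 0 of `X`, following the feature list used earlier) so that actual and predicted values can be drawn against time on the same axes. The `order_by_time` helper and the toy arrays are hypothetical.

```python
import numpy as np


def order_by_time(X, y_true, y_pred, time_col=0):
    """Return time values, targets and predictions sorted by the time column."""
    order = np.argsort(X[:, time_col])
    return X[order, time_col], np.ravel(y_true)[order], np.ravel(y_pred)[order]


# Toy stand-in for X_test, y_test and y_: three shuffled samples.
X_demo = np.array([[0.7, 1.0], [0.1, 2.0], [0.4, 3.0]])
y_true_demo = np.array([[30.0], [10.0], [20.0]])
y_pred_demo = np.array([29.0, 11.0, 20.5])

t_sorted, y_true_sorted, y_pred_sorted = order_by_time(
    X_demo, y_true_demo, y_pred_demo
)
print(t_sorted, y_true_sorted, y_pred_sorted)
```

With the notebook's variables, the call would be `order_by_time(X_test, y_test, y_)`. Calling `plt.plot(t_sorted, y_true_sorted)` and then `plt.plot(t_sorted, y_pred_sorted)` in one figure overlays the two curves.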
