1. pom file

<?xml version="1.0" encoding="UTF-8"?>
<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 https://maven.apache.org/xsd/maven-4.0.0.xsd">
    <modelVersion>4.0.0</modelVersion>
    <parent>
        <groupId>org.springframework.boot</groupId>
        <artifactId>spring-boot-starter-parent</artifactId>
        <version>3.4.3</version>
        <relativePath/> <!-- lookup parent from repository -->
    </parent>
    <groupId>com.langfuse</groupId>
    <artifactId>spring-ai-demo</artifactId>
    <version>0.0.1-SNAPSHOT</version>
    <name>spring-ai-demo</name>
    <description>Spring AI demo project for Spring Boot</description>
    <url/>
    <properties>
        <java.version>21</java.version>
    </properties>
    <dependencyManagement>
        <dependencies>
            <dependency>
                <groupId>io.opentelemetry.instrumentation</groupId>
                <artifactId>opentelemetry-instrumentation-bom</artifactId>
                <version>2.14.0</version>
                <type>pom</type>
                <scope>import</scope>
            </dependency>
        </dependencies>
    </dependencyManagement>
    <dependencies>
        <dependency>
            <groupId>org.springframework.boot</groupId>
            <artifactId>spring-boot-starter</artifactId>
        </dependency>
        <dependency>
            <groupId>org.springframework.ai</groupId>
            <artifactId>spring-ai-openai-spring-boot-starter</artifactId>
            <version>1.0.0-M6</version>
            <exclusions>
                <exclusion>
                    <groupId>org.springframework.ai</groupId>
                    <artifactId>spring-ai-openai</artifactId>
                </exclusion>
            </exclusions>
        </dependency>
        <dependency>
            <groupId>org.springframework.ai</groupId>
            <artifactId>spring-ai-openai</artifactId>
            <version>1.0.0-M6-XIN</version>
        </dependency>
        <dependency>
            <groupId>com.squareup.okhttp3</groupId>
            <artifactId>okhttp</artifactId>
            <version>4.12.0</version>
        </dependency>
        <dependency>
            <groupId>io.projectreactor.netty</groupId>
            <artifactId>reactor-netty</artifactId>
            <version>1.3.0-M1</version>
        </dependency>
        <!-- Spring MVC web starter (servlet-based, not reactive); serves the REST endpoint of this demo -->
        <dependency>
            <groupId>org.springframework.boot</groupId>
            <artifactId>spring-boot-starter-web</artifactId>
        </dependency>
        <dependency>
            <groupId>io.opentelemetry.instrumentation</groupId>
            <artifactId>opentelemetry-spring-boot-starter</artifactId>
        </dependency>
        <!-- Spring Boot Actuator for observability support -->
        <dependency>
            <groupId>org.springframework.boot</groupId>
            <artifactId>spring-boot-starter-actuator</artifactId>
        </dependency>
        <!-- Micrometer Observation -> OpenTelemetry bridge -->
        <dependency>
            <groupId>io.micrometer</groupId>
            <artifactId>micrometer-tracing-bridge-otel</artifactId>
        </dependency>
        <!-- OpenTelemetry OTLP exporter for traces -->
        <dependency>
            <groupId>io.opentelemetry</groupId>
            <artifactId>opentelemetry-exporter-otlp</artifactId>
        </dependency>
    </dependencies>
    <build>
        <plugins>
            <plugin>
                <groupId>org.springframework.boot</groupId>
                <artifactId>spring-boot-maven-plugin</artifactId>
            </plugin>
        </plugins>
    </build>
</project>

2. Configuration file

spring:
  application:
    name: java-demo
  ai:
    chat:
      observations:
        include-prompt: true # Include prompt content in tracing (disabled by default for privacy)
        include-completion: true # Include completion content in tracing (disabled by default)
    openai:
      api-key: "not empty"
      base-url: http://192.168.3.100:9997
      # Example configuration for other LLMs that expose an OpenAI-compatible API
      chat:
        options:
          # Model ID; replace it with your actual endpoint ID
          model: qwen2-instruct
management:
  tracing:
    sampling:
      probability: 1.0 # Sample 100% of requests for full tracing (adjust in production as needed)
  observations:
    annotations:
      enabled: true # Enable @Observed (if you use observation annotations in code)
# sk-lf-c8d2601e-xxxx-xxxx-b10f-9275c48878a7
# pk-lf-b23b07b2-oooo-oooo-a689-c658d36ab616
# http://192.168.3.100:33000
# pk-lf-b23b07b2-oooo-oooo-a689-c658d36ab616:sk-lf-c8d2601e-xxxx-xxxx-b10f-9275c48878a7
# sk-lf-29ac1c23-xxxx-xxxx-b5cb-606fcff3be92
# pk-lf-2efef304-oooo-oooo-9bb5-84147f8dce94
# pk-lf-2efef304-oooo-oooo-9bb5-84147f8dce94:sk-lf-29ac1c23-xxxx-xxxx-b5cb-606fcff3be92
# pk-lf-2cbd8bc2-xxxx-xxxx-bad8-9eab53553926
# sk-lf-11e8581c-oooo-oooo-9b02-5d89cefff193
# pk-lf-2cbd8bc2-xxxx-xxxx-bad8-9eab53553926:sk-lf-11e8581c-oooo-oooo-9b02-5d89cefff193
otel:
  exporter:
    otlp:
      endpoint: http://117.xx.xxx.4:3000/api/public/otel
      headers:
        Authorization: Basic cGstbGYtMmNiZDhiYzItMWMzYS00NmE3LWJhZDgtOWVhYjUzNTUzOTI2OnNrLWxmLTExZTg1ODFjLWYxM2MtNGZlMC05YjAyLTVkODljZWZmZjE5Mw==
        # Authorization = "Basic", then a space, then the Base64 encoding of pk:sk
server:
  port: 58000

3. Service code

package com.langfuse.springai;

import org.slf4j.Logger;
import org.slf4j.LoggerFactory;
import org.springframework.ai.chat.client.ChatClient;
import org.springframework.stereotype.Service;

@Service
public class ChatService {

    private static final Logger LOGGER = LoggerFactory.getLogger(ChatService.class);

    private final ChatClient chatClient;

    // The ChatClient.Builder is injected by Spring and built once for this service.
    public ChatService(ChatClient.Builder builder) {
        this.chatClient = builder.build();
    }

    // Sends a fixed prompt to the configured model and returns the text of its reply.
    public String testAiCall() {
        LOGGER.info("Invoking LLM");
        String answer = chatClient.prompt("Reply with the word 'java'").call().content();
        LOGGER.info("AI answered: {}", answer);
        return answer;
    }
}

Controller code

package com.langfuse.springai;

import org.springframework.web.bind.annotation.GetMapping;
import org.springframework.web.bind.annotation.RequestMapping;
import org.springframework.web.bind.annotation.RestController;

@RestController
@RequestMapping("/v1/chat")
public class ChatController {

    private final ChatService chatService;

    public ChatController(ChatService chatService) {
        this.chatService = chatService;
    }

    @GetMapping
    public String get() {
        return chatService.testAiCall();
    }
}

Main application class

package com.langfuse.springai;

import org.springframework.boot.SpringApplication;
import org.springframework.boot.autoconfigure.SpringBootApplication;

@SpringBootApplication
public class SpringAiDemoApplication {

    public static void main(String[] args) {
        SpringApplication.run(SpringAiDemoApplication.class, args);
    }
}

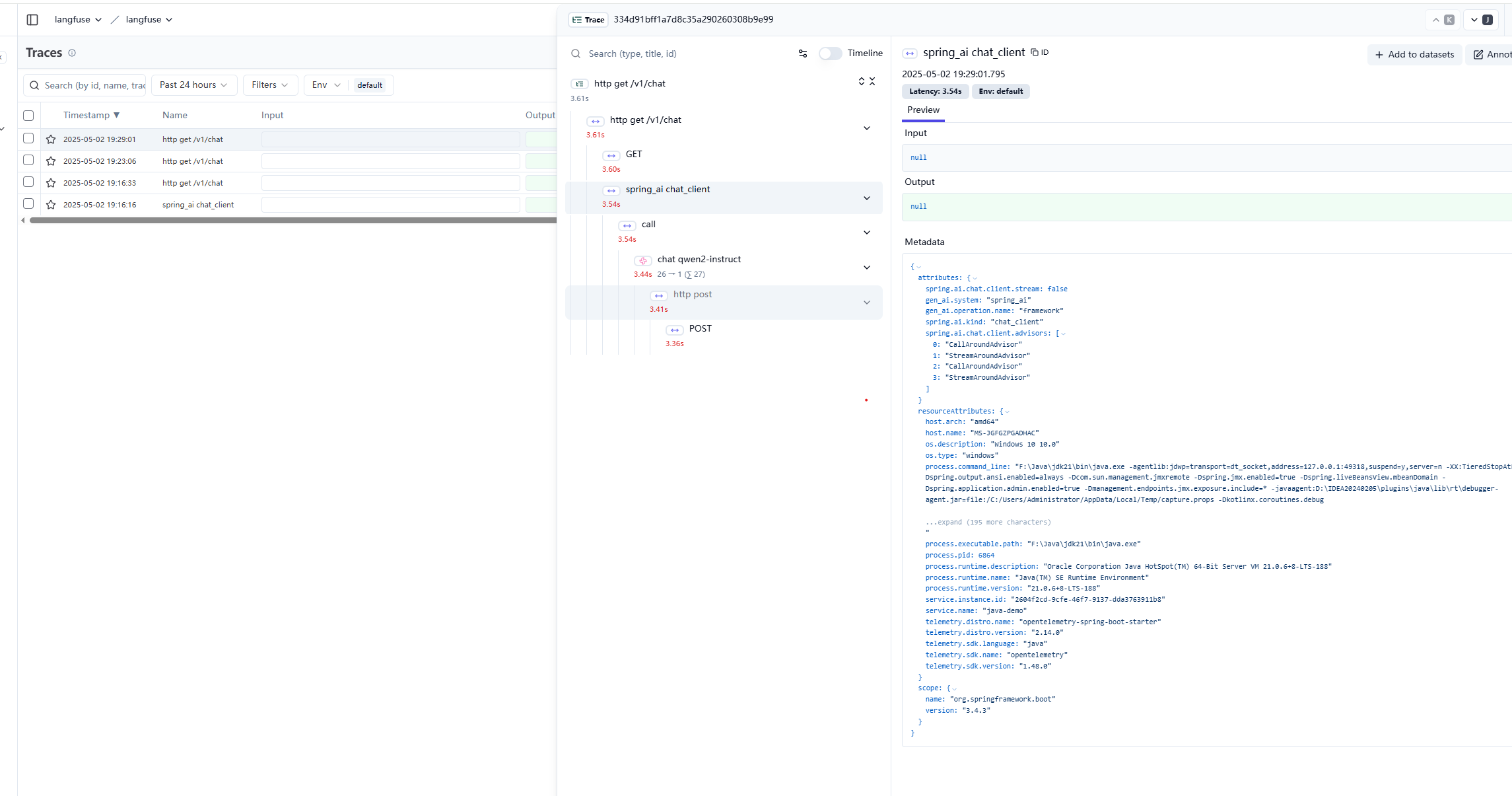

4. Access URL

http://localhost:58000/v1/chat
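
Besides opening this URL in a browser, the endpoint can also be called from code. The following is a minimal sketch using the JDK's built-in `java.net.http.HttpClient`, assuming the application is running locally on port 58000. The class name `ChatEndpointClient` and the 30-second timeout are illustrative choices, not part of the original project.

```java
import java.io.IOException;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.time.Duration;

// Hypothetical client for the demo endpoint; not part of the original project.
final class ChatEndpointClient {

    private ChatEndpointClient() {
    }

    // Builds a GET request for <baseUrl>/v1/chat, the path mapped by ChatController.
    static HttpRequest buildRequest(String baseUrl) {
        return HttpRequest.newBuilder(URI.create(baseUrl + "/v1/chat"))
                .timeout(Duration.ofSeconds(30)) // assumed timeout; LLM calls can be slow
                .GET()
                .build();
    }

    public static void main(String[] args) throws InterruptedException {
        HttpClient client = HttpClient.newHttpClient();
        try {
            HttpResponse<String> response = client.send(
                    buildRequest("http://localhost:58000"),
                    HttpResponse.BodyHandlers.ofString());
            System.out.println(response.statusCode() + " " + response.body());
        } catch (IOException e) {
            // Typically means the Spring Boot application is not running.
            System.out.println("Request failed: " + e);
        }
    }
}
```

Each successful call goes through `ChatService.testAiCall()`, so it should produce one trace on the Langfuse side.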

5. Langfuse URL

http://xxx.xxx.xx.4:3000/project/cma6p4ytt0005o207mv7dm6rw/traces

The traces recorded for the calls above are listed on this page.
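
The `Authorization` header in the configuration file (section 2) is the word `Basic`, a space, and the Base64 encoding of `pk:sk`. A minimal sketch of how that value could be computed in Java is shown here. The class name `LangfuseAuthHeader` and the keys passed in `main` are placeholders, not values from the original project.

```java
import java.nio.charset.StandardCharsets;
import java.util.Base64;

// Hypothetical helper; not part of the original project.
final class LangfuseAuthHeader {

    private LangfuseAuthHeader() {
    }

    // Returns "Basic " followed by base64(publicKey + ":" + secretKey).
    static String basicAuth(String publicKey, String secretKey) {
        String credentials = publicKey + ":" + secretKey;
        String encoded = Base64.getEncoder()
                .encodeToString(credentials.getBytes(StandardCharsets.UTF_8));
        return "Basic " + encoded;
    }

    public static void main(String[] args) {
        // Placeholder keys; substitute the key pair of your own Langfuse project.
        System.out.println(basicAuth("pk-lf-example", "sk-lf-example"));
    }
}
```

The printed string can be pasted as the value of `otel.exporter.otlp.headers.Authorization`.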
