Background

- Developed by Jay Earley in 1970

- No need to convert grammar to CNF

- Left to right

Complexity

fast than O(n3) in many cases

Earley Parser

- look for both full and partial constituents

- when reading word k, it has already identified all hypotheses that are consistent with words 1 to k-1

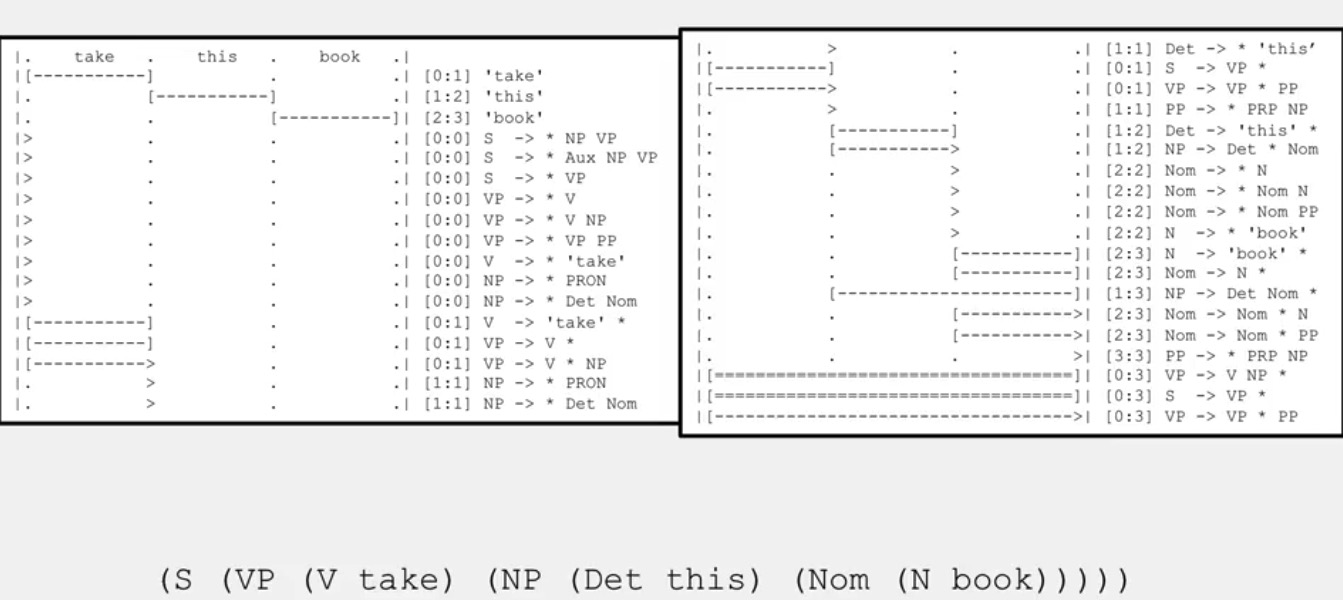

Data structure

- It uses dynamic programming table, just like CKY

- Example entry in column 1:

- [0:1] VP -> VP . PP

- created when processing word 1

- corresponds to words 0 to 1 (the part on the left of . represents the part that we have found, thus VP, and if we found later PP, we will find the whole non terminal)

- the dot(.) separates the completed(known) part from the incomplete(possibly unattainable) part

3 types of entries

- ‘scan’- for words

- ‘predict’ - for non-terminals

- ‘complete’ - otherwise

Example

Take this book.

at the end we could find that it is either a verb phrase or a sentence.

The problem of CFG

Agreement

- Number

- Chen is/ People are

- Person

- I am/ Chen is

- was/ is/ will be

- Case

- Gender

Combinatorial explosion

- Many combinations of rules are needed to express agreement

- S -> NP VP

- S -> 1sgNP 1sgVP

- S -> 2sgNP 2sgVP

- …

Subcategorization frames

For different type of words, the rules we have are different.

- direct object

- prepositional phrase

- predictive adjective

- bare infinitive

- to-infinitive

- participial phrase

- that-clause

- question-form clause

CFG independence assumption

The probability of different non terminals are not independent in the context of rules.

Remark: The solution of it is the Lexicalized CFG(PCFG).

Conclusion

Because the possibilities of combinations, the number of the parses of a sentence is exponential, so to find all the parses, the you have to spend exponential time.

330

330

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?