Caffe (CNN, deep learning) 介绍

Caffe -----------Convolution Architecture For Feature Embedding (Extraction)

- Caffe 是什么东东?

- CNN (Deep Learning) 工具箱

- C++ 语言架构

- CPU 和GPU 无缝交换

- Python 和matlab的封装

- 但是,Decaf只是CPU 版本。

-

为什么要用Caffe?

- 运算速度快。简单 友好的架构 用到的一些库:

- Google Logging library (Glog): 一个C++语言的应用级日志记录框架,提供了C++风格的流操作和各种助手宏.

- lebeldb(数据存储): 是一个google实现的非常高效的kv数据库,单进程操作。

- CBLAS library(CPU版本的矩阵操作)

- CUBLAS library (GPU 版本的矩阵操作)

-

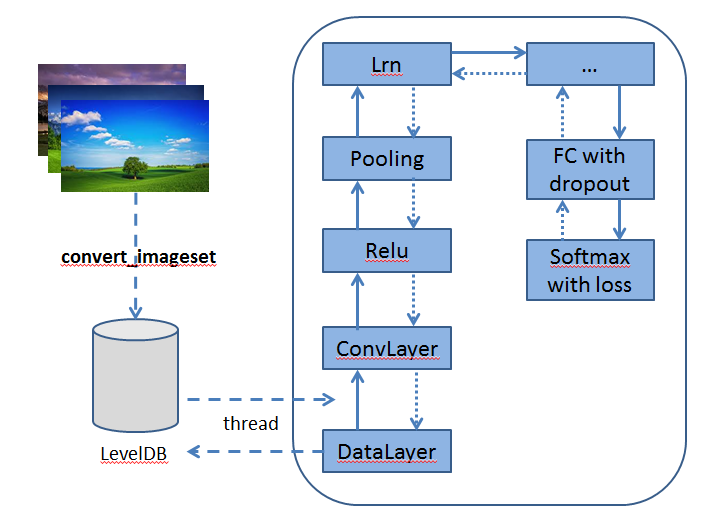

Caffe 架构

- 预处理图像的leveldb构建

输入:一批图像和label (2和3)

输出:leveldb (4)

指令里包含如下信息:- conver_imageset (构建leveldb的可运行程序)

- train/ (此目录放处理的jpg或者其他格式的图像)

- label.txt (图像文件名及其label信息)

- 输出的leveldb文件夹的名字

- CPU/GPU (指定是在cpu上还是在gpu上运行code)

-

CNN网络配置文件

- Imagenet_solver.prototxt (包含全局参数的配置的文件)

- Imagenet.prototxt (包含训练网络的配置的文件)

- Imagenet_val.prototxt (包含测试网络的配置文件)

Caffe深度学习之图像分类模型AlexNet解读

在imagenet上的图像分类challenge上Alex提出的alexnet网络结构模型赢得了2012届的冠军。要研究CNN类型DL网络模型在图像分类上的应用,就逃不开研究alexnet,这是CNN在图像分类上的经典模型(DL火起来之后)。

在DL开源实现caffe的model样例中,它也给出了alexnet的复现,具体网络配置文件如下train_val.prototxt98

接下来本文将一步步对该网络配置结构中各个层进行详细的解读(训练阶段):

-

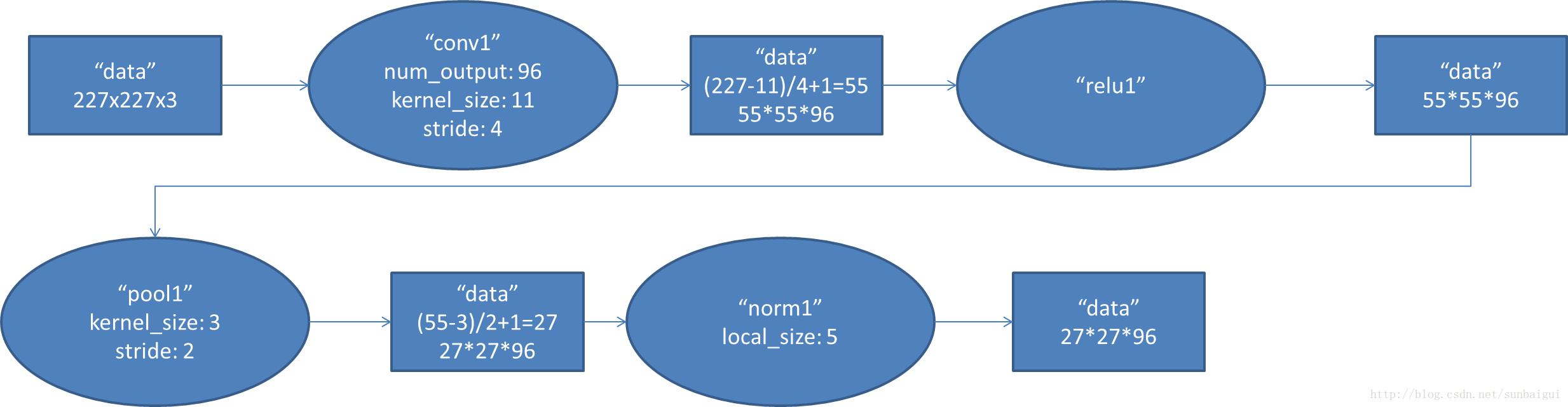

conv1阶段DFD(data flow diagram):

-

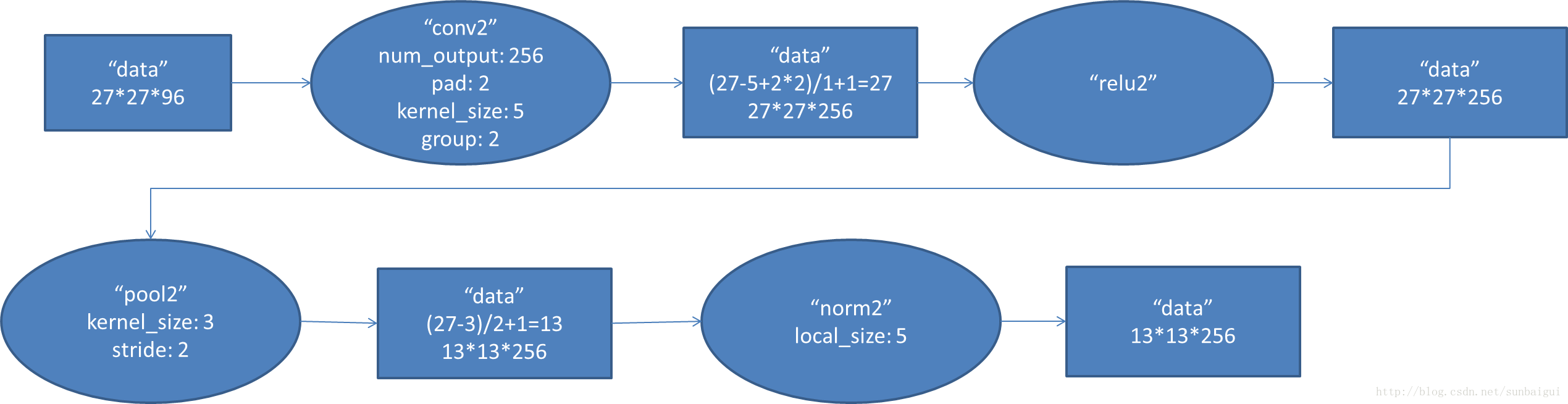

conv2阶段DFD(data flow diagram):

-

conv3阶段DFD(data flow diagram):

-

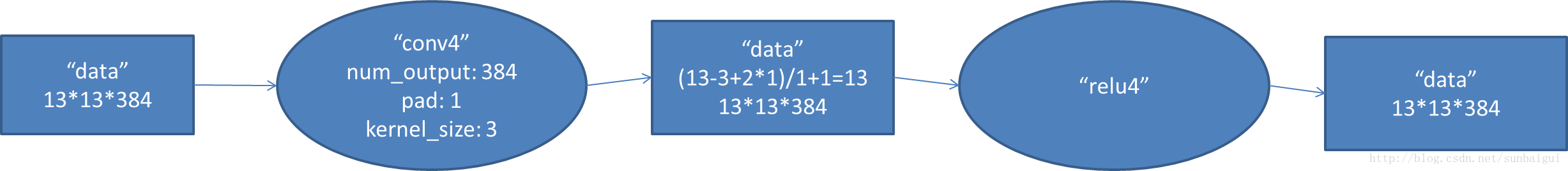

conv4阶段DFD(data flow diagram):

-

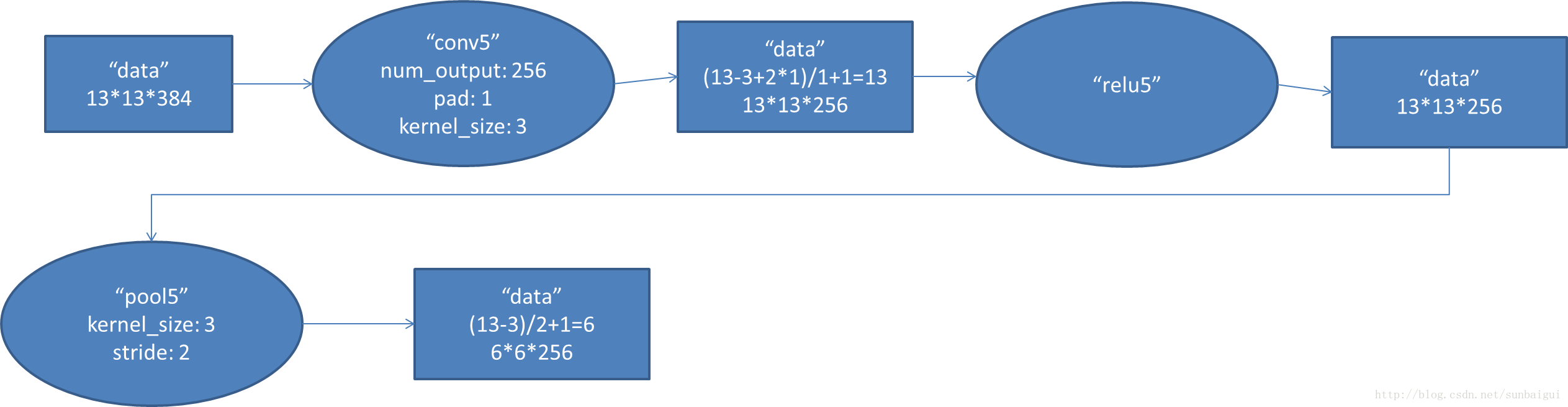

conv5阶段DFD(data flow diagram):

-

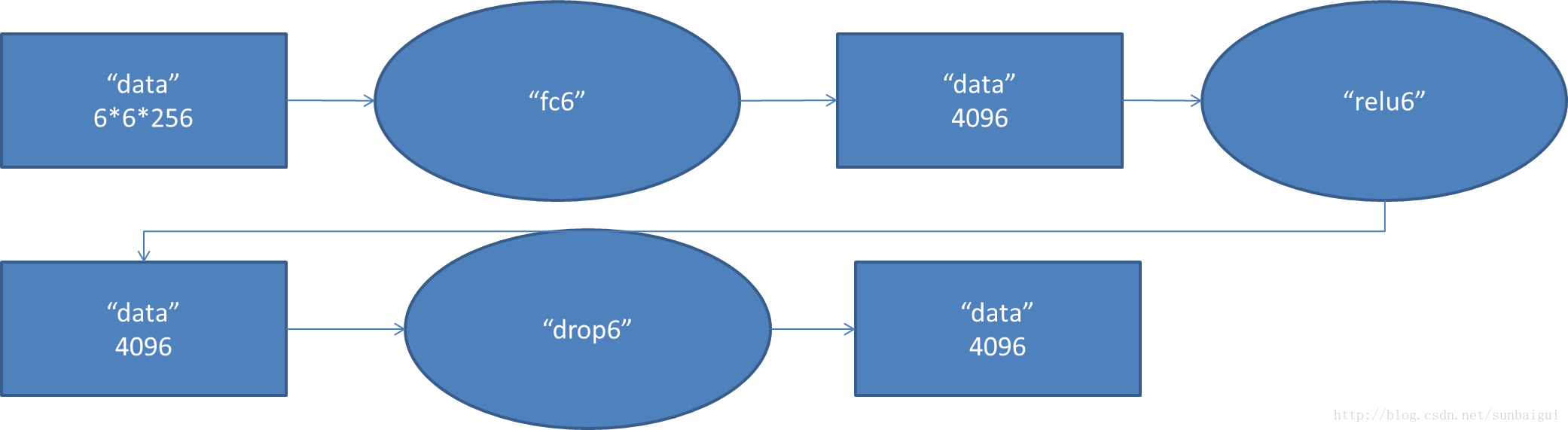

fc6阶段DFD(data flow diagram):

-

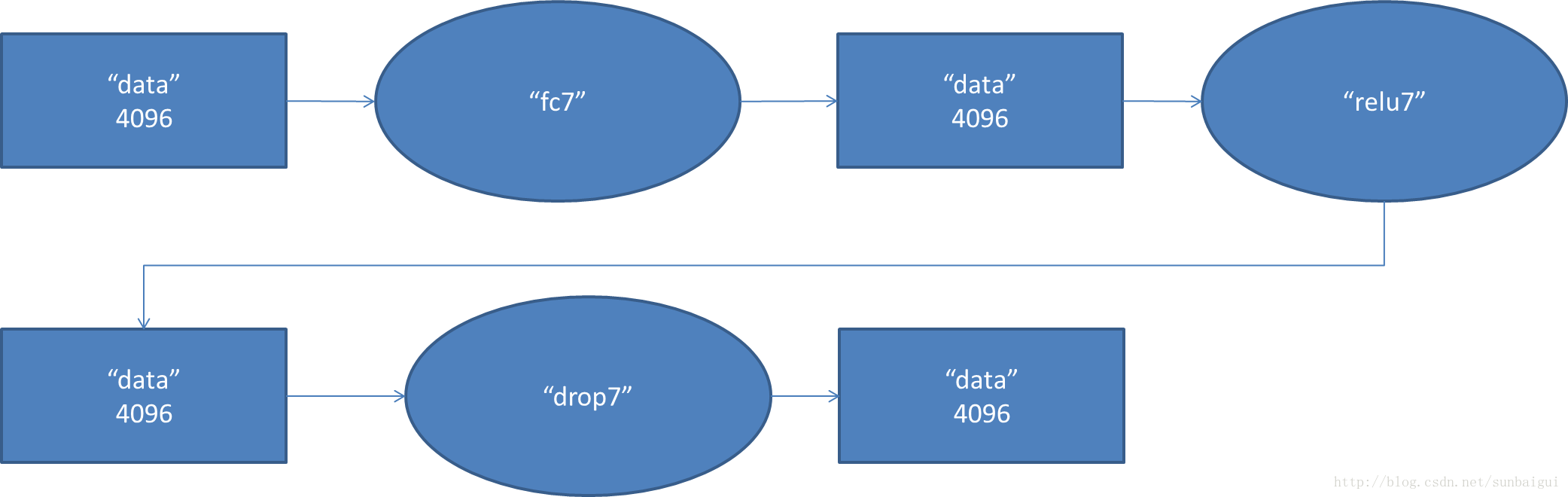

fc7阶段DFD(data flow diagram):

-

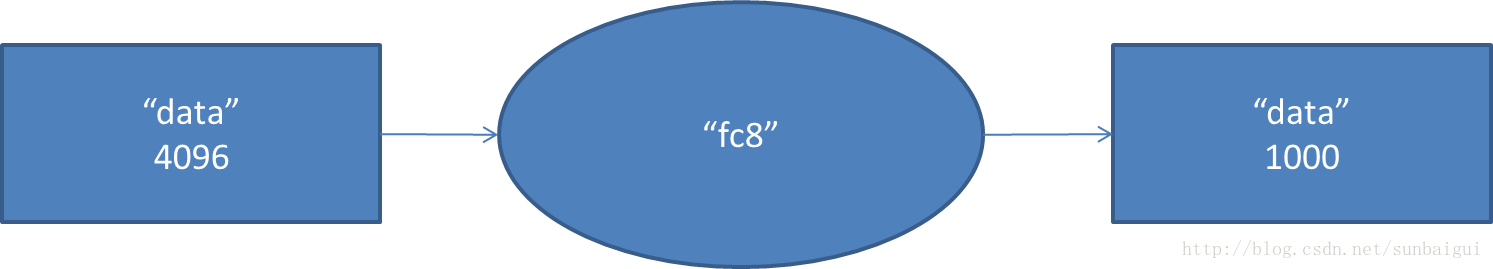

fc8阶段DFD(data flow diagram):

各种layer的operation更多解释可以参考Caffe Layer Catalogue82

从计算该模型的数据流过程中,该模型参数大概5kw+。

caffe的输出中也有包含这块的内容日志,详情如下:

I0721 10:38:15.326920 4692 net.cpp:125] Top shape: 256 3 227 227 (39574272)

I0721 10:38:15.326971 4692 net.cpp:125] Top shape: 256 1 1 1 (256)

I0721 10:38:15.326982 4692 net.cpp:156] data does not need backward computation.

I0721 10:38:15.327003 4692 net.cpp:74] Creating Layer conv1

I0721 10:38:15.327011 4692 net.cpp:84] conv1 <- data

I0721 10:38:15.327033 4692 net.cpp:110] conv1 -> conv1

I0721 10:38:16.721956 4692 net.cpp:125] Top shape: 256 96 55 55 (74342400)

I0721 10:38:16.722030 4692 net.cpp:151] conv1 needs backward computation.

I0721 10:38:16.722059 4692 net.cpp:74] Creating Layer relu1

I0721 10:38:16.722070 4692 net.cpp:84] relu1 <- conv1

I0721 10:38:16.722082 4692 net.cpp:98] relu1 -> conv1 (in-place)

I0721 10:38:16.722096 4692 net.cpp:125] Top shape: 256 96 55 55 (74342400)

I0721 10:38:16.722105 4692 net.cpp:151] relu1 needs backward computation.

I0721 10:38:16.722116 4692 net.cpp:74] Creating Layer pool1

I0721 10:38:16.722125 4692 net.cpp:84] pool1 <- conv1

I0721 10:38:16.722133 4692 net.cpp:110] pool1 -> pool1

I0721 10:38:16.722167 4692 net.cpp:125] Top shape: 256 96 27 27 (17915904)

I0721 10:38:16.722187 4692 net.cpp:151] pool1 needs backward computation.

I0721 10:38:16.722205 4692 net.cpp:74] Creating Layer norm1

I0721 10:38:16.722221 4692 net.cpp:84] norm1 <- pool1

I0721 10:38:16.722234 4692 net.cpp:110] norm1 -> norm1

I0721 10:38:16.722251 4692 net.cpp:125] Top shape: 256 96 27 27 (17915904)

I0721 10:38:16.722260 4692 net.cpp:151] norm1 needs backward computation.

I0721 10:38:16.722272 4692 net.cpp:74] Creating Layer conv2

I0721 10:38:16.722280 4692 net.cpp:84] conv2 <- norm1

I0721 10:38:16.722290 4692 net.cpp:110] conv2 -> conv2

I0721 10:38:16.725225 4692 net.cpp:125] Top shape: 256 256 27 27 (47775744)

I0721 10:38:16.725242 4692 net.cpp:151] conv2 needs backward computation.

I0721 10:38:16.725253 4692 net.cpp:74] Creating Layer relu2

I0721 10:38:16.725261 4692 net.cpp:84] relu2 <- conv2

I0721 10:38:16.725270 4692 net.cpp:98] relu2 -> conv2 (in-place)

I0721 10:38:16.725280 4692 net.cpp:125] Top shape: 256 256 27 27 (47775744)

I0721 10:38:16.725288 4692 net.cpp:151] relu2 needs backward computation.

I0721 10:38:16.725298 4692 net.cpp:74] Creating Layer pool2

I0721 10:38:16.725307 4692 net.cpp:84] pool2 <- conv2

I0721 10:38:16.725317 4692 net.cpp:110] pool2 -> pool2

I0721 10:38:16.725329 4692 net.cpp:125] Top shape: 256 256 13 13 (11075584)

I0721 10:38:16.725338 4692 net.cpp:151] pool2 needs backward computation.

I0721 10:38:16.725358 4692 net.cpp:74] Creating Layer norm2

I0721 10:38:16.725368 4692 net.cpp:84] norm2 <- pool2

I0721 10:38:16.725378 4692 net.cpp:110] norm2 -> norm2

I0721 10:38:16.725389 4692 net.cpp:125] Top shape: 256 256 13 13 (11075584)

I0721 10:38:16.725399 4692 net.cpp:151] norm2 needs backward computation.

I0721 10:38:16.725409 4692 net.cpp:74] Creating Layer conv3

I0721 10:38:16.725419 4692 net.cpp:84] conv3 <- norm2

I0721 10:38:16.725427 4692 net.cpp:110] conv3 -> conv3

I0721 10:38:16.735193 4692 net.cpp:125] Top shape: 256 384 13 13 (16613376)

I0721 10:38:16.735213 4692 net.cpp:151] conv3 needs backward computation.

I0721 10:38:16.735224 4692 net.cpp:74] Creating Layer relu3

I0721 10:38:16.735234 4692 net.cpp:84] relu3 <- conv3

I0721 10:38:16.735242 4692 net.cpp:98] relu3 -> conv3 (in-place)

I0721 10:38:16.735250 4692 net.cpp:125] Top shape: 256 384 13 13 (16613376)

I0721 10:38:16.735258 4692 net.cpp:151] relu3 needs backward computation.

I0721 10:38:16.735302 4692 net.cpp:74] Creating Layer conv4

I0721 10:38:16.735312 4692 net.cpp:84] conv4 <- conv3

I0721 10:38:16.735321 4692 net.cpp:110] conv4 -> conv4

I0721 10:38:16.743952 4692 net.cpp:125] Top shape: 256 384 13 13 (16613376)

I0721 10:38:16.743988 4692 net.cpp:151] conv4 needs backward computation.

I0721 10:38:16.744000 4692 net.cpp:74] Creating Layer relu4

I0721 10:38:16.744010 4692 net.cpp:84] relu4 <- conv4

I0721 10:38:16.744020 4692 net.cpp:98] relu4 -> conv4 (in-place)

I0721 10:38:16.744030 4692 net.cpp:125] Top shape: 256 384 13 13 (16613376)

I0721 10:38:16.744038 4692 net.cpp:151] relu4 needs backward computation.

I0721 10:38:16.744050 4692 net.cpp:74] Creating Layer conv5

I0721 10:38:16.744057 4692 net.cpp:84] conv5 <- conv4

I0721 10:38:16.744067 4692 net.cpp:110] conv5 -> conv5

I0721 10:38:16.748935 4692 net.cpp:125] Top shape: 256 256 13 13 (11075584)

I0721 10:38:16.748955 4692 net.cpp:151] conv5 needs backward computation.

I0721 10:38:16.748965 4692 net.cpp:74] Creating Layer relu5

I0721 10:38:16.748975 4692 net.cpp:84] relu5 <- conv5

I0721 10:38:16.748983 4692 net.cpp:98] relu5 -> conv5 (in-place)

I0721 10:38:16.748998 4692 net.cpp:125] Top shape: 256 256 13 13 (11075584)

I0721 10:38:16.749011 4692 net.cpp:151] relu5 needs backward computation.

I0721 10:38:16.749022 4692 net.cpp:74] Creating Layer pool5

I0721 10:38:16.749030 4692 net.cpp:84] pool5 <- conv5

I0721 10:38:16.749039 4692 net.cpp:110] pool5 -> pool5

I0721 10:38:16.749050 4692 net.cpp:125] Top shape: 256 256 6 6 (2359296)

I0721 10:38:16.749058 4692 net.cpp:151] pool5 needs backward computation.

I0721 10:38:16.749074 4692 net.cpp:74] Creating Layer fc6

I0721 10:38:16.749083 4692 net.cpp:84] fc6 <- pool5

I0721 10:38:16.749091 4692 net.cpp:110] fc6 -> fc6

I0721 10:38:17.160079 4692 net.cpp:125] Top shape: 256 4096 1 1 (1048576)

I0721 10:38:17.160148 4692 net.cpp:151] fc6 needs backward computation.

I0721 10:38:17.160166 4692 net.cpp:74] Creating Layer relu6

I0721 10:38:17.160177 4692 net.cpp:84] relu6 <- fc6

I0721 10:38:17.160190 4692 net.cpp:98] relu6 -> fc6 (in-place)

I0721 10:38:17.160202 4692 net.cpp:125] Top shape: 256 4096 1 1 (1048576)

I0721 10:38:17.160212 4692 net.cpp:151] relu6 needs backward computation.

I0721 10:38:17.160222 4692 net.cpp:74] Creating Layer drop6

I0721 10:38:17.160230 4692 net.cpp:84] drop6 <- fc6

I0721 10:38:17.160238 4692 net.cpp:98] drop6 -> fc6 (in-place)

I0721 10:38:17.160258 4692 net.cpp:125] Top shape: 256 4096 1 1 (1048576)

I0721 10:38:17.160265 4692 net.cpp:151] drop6 needs backward computation.

I0721 10:38:17.160277 4692 net.cpp:74] Creating Layer fc7

I0721 10:38:17.160286 4692 net.cpp:84] fc7 <- fc6

I0721 10:38:17.160295 4692 net.cpp:110] fc7 -> fc7

I0721 10:38:17.342094 4692 net.cpp:125] Top shape: 256 4096 1 1 (1048576)

I0721 10:38:17.342157 4692 net.cpp:151] fc7 needs backward computation.

I0721 10:38:17.342175 4692 net.cpp:74] Creating Layer relu7

I0721 10:38:17.342185 4692 net.cpp:84] relu7 <- fc7

I0721 10:38:17.342198 4692 net.cpp:98] relu7 -> fc7 (in-place)

I0721 10:38:17.342208 4692 net.cpp:125] Top shape: 256 4096 1 1 (1048576)

I0721 10:38:17.342217 4692 net.cpp:151] relu7 needs backward computation.

I0721 10:38:17.342228 4692 net.cpp:74] Creating Layer drop7

I0721 10:38:17.342236 4692 net.cpp:84] drop7 <- fc7

I0721 10:38:17.342245 4692 net.cpp:98] drop7 -> fc7 (in-place)

I0721 10:38:17.342254 4692 net.cpp:125] Top shape: 256 4096 1 1 (1048576)

I0721 10:38:17.342262 4692 net.cpp:151] drop7 needs backward computation.

I0721 10:38:17.342274 4692 net.cpp:74] Creating Layer fc8

I0721 10:38:17.342283 4692 net.cpp:84] fc8 <- fc7

I0721 10:38:17.342291 4692 net.cpp:110] fc8 -> fc8

I0721 10:38:17.343199 4692 net.cpp:125] Top shape: 256 22 1 1 (5632)

I0721 10:38:17.343214 4692 net.cpp:151] fc8 needs backward computation.

I0721 10:38:17.343231 4692 net.cpp:74] Creating Layer loss

I0721 10:38:17.343240 4692 net.cpp:84] loss <- fc8

I0721 10:38:17.343250 4692 net.cpp:84] loss <- label

I0721 10:38:17.343264 4692 net.cpp:151] loss needs backward computation.

I0721 10:38:17.343305 4692 net.cpp:173] Collecting Learning Rate and Weight Decay.

I0721 10:38:17.343327 4692 net.cpp:166] Network initialization done.

I0721 10:38:17.343335 4692 net.cpp:167] Memory required for Data 1073760256CIFAR-10在caffe上进行训练与学习

使用数据库:CIFAR-10

60000张 32X32 彩色图像 10类,50000张训练,10000张测试

准备

在终端运行以下指令:

cd $CAFFE_ROOT/data/cifar10

./get_cifar10.sh

cd $CAFFE_ROOT/examples/cifar10

./create_cifar10.sh其中CAFFE_ROOT是caffe-master在你机子的地址

运行之后,将会在examples中出现数据库文件./cifar10-leveldb和数据库图像均值二进制文件./mean.binaryproto

模型

该CNN由卷积层,POOLing层,非线性变换层,在顶端的局部对比归一化线性分类器组成。该模型的定义在CAFFE_ROOT/examples/cifar10 directory’s cifar10_quick_train.prototxt中,可以进行修改。其实后缀为prototxt很多都是用来修改配置的。

训练和测试

训练这个模型非常简单,当我们写好参数设置的文件cifar10_quick_solver.prototxt和定义的文件cifar10_quick_train.prototxt和cifar10_quick_test.prototxt后,运行train_quick.sh或者在终端输入下面的命令:

cd $CAFFE_ROOT/examples/cifar10

./train_quick.sh即可,train_quick.sh是一个简单的脚本,会把执行的信息显示出来,培训的工具是train_net.bin,cifar10_quick_solver.prototxt作为参数。

然后出现类似以下的信息:这是搭建模型的相关信息

I0317 21:52:48.945710 2008298256 net.cpp:74] Creating Layer conv1

I0317 21:52:48.945716 2008298256 net.cpp:84] conv1 <- data

I0317 21:52:48.945725 2008298256 net.cpp:110] conv1 -> conv1

I0317 21:52:49.298691 2008298256 net.cpp:125] Top shape: 100 32 32 32 (3276800)

I0317 21:52:49.298719 2008298256 net.cpp:151] conv1 needs backward computation.接着:

0317 21:52:49.309370 2008298256 net.cpp:166] Network initialization done.

I0317 21:52:49.309376 2008298256 net.cpp:167] Memory required for Data 23790808

I0317 21:52:49.309422 2008298256 solver.cpp:36] Solver scaffolding done.

I0317 21:52:49.309447 2008298256 solver.cpp:47] Solving CIFAR10_quick_train之后,训练开始

I0317 21:53:12.179772 2008298256 solver.cpp:208] Iteration 100, lr = 0.001

I0317 21:53:12.185698 2008298256 solver.cpp:65] Iteration 100, loss = 1.73643

...

I0317 21:54:41.150030 2008298256 solver.cpp:87] Iteration 500, Testing net

I0317 21:54:47.129461 2008298256 solver.cpp:114] Test score #0: 0.5504

I0317 21:54:47.129500 2008298256 solver.cpp:114] Test score #1: 1.27805其中每100次迭代次数显示一次训练时lr(learningrate),和loss(训练损失函数),每500次测试一次,输出score 0(准确率)和score 1(测试损失函数)

当5000次迭代之后,正确率约为75%,模型的参数存储在二进制protobuf格式在cifar10_quick_iter_5000

然后,这个模型就可以用来运行在新数据上了。

其他

另外,更改cifar*solver.prototxt文件可以使用CPU训练,

# solver mode: CPU or GPU

solver_mode: CPU可以看看CPU和GPU训练的差别。

主要资料来源:caffe官网教程

from: http://suanfazu.com/t/caffe/281/5

Caffe (CNN, deep learning) 介绍

Caffe -----------Convolution Architecture For Feature Embedding (Extraction)

- Caffe 是什么东东?

- CNN (Deep Learning) 工具箱

- C++ 语言架构

- CPU 和GPU 无缝交换

- Python 和matlab的封装

- 但是,Decaf只是CPU 版本。

-

为什么要用Caffe?

- 运算速度快。简单 友好的架构 用到的一些库:

- Google Logging library (Glog): 一个C++语言的应用级日志记录框架,提供了C++风格的流操作和各种助手宏.

- lebeldb(数据存储): 是一个google实现的非常高效的kv数据库,单进程操作。

- CBLAS library(CPU版本的矩阵操作)

- CUBLAS library (GPU 版本的矩阵操作)

-

Caffe 架构

- 预处理图像的leveldb构建

输入:一批图像和label (2和3)

输出:leveldb (4)

指令里包含如下信息:- conver_imageset (构建leveldb的可运行程序)

- train/ (此目录放处理的jpg或者其他格式的图像)

- label.txt (图像文件名及其label信息)

- 输出的leveldb文件夹的名字

- CPU/GPU (指定是在cpu上还是在gpu上运行code)

-

CNN网络配置文件

- Imagenet_solver.prototxt (包含全局参数的配置的文件)

- Imagenet.prototxt (包含训练网络的配置的文件)

- Imagenet_val.prototxt (包含测试网络的配置文件)

Caffe深度学习之图像分类模型AlexNet解读

在imagenet上的图像分类challenge上Alex提出的alexnet网络结构模型赢得了2012届的冠军。要研究CNN类型DL网络模型在图像分类上的应用,就逃不开研究alexnet,这是CNN在图像分类上的经典模型(DL火起来之后)。

在DL开源实现caffe的model样例中,它也给出了alexnet的复现,具体网络配置文件如下train_val.prototxt98

接下来本文将一步步对该网络配置结构中各个层进行详细的解读(训练阶段):

-

conv1阶段DFD(data flow diagram):

-

conv2阶段DFD(data flow diagram):

-

conv3阶段DFD(data flow diagram):

-

conv4阶段DFD(data flow diagram):

-

conv5阶段DFD(data flow diagram):

-

fc6阶段DFD(data flow diagram):

-

fc7阶段DFD(data flow diagram):

-

fc8阶段DFD(data flow diagram):

各种layer的operation更多解释可以参考Caffe Layer Catalogue82

从计算该模型的数据流过程中,该模型参数大概5kw+。

caffe的输出中也有包含这块的内容日志,详情如下:

I0721 10:38:15.326920 4692 net.cpp:125] Top shape: 256 3 227 227 (39574272)

I0721 10:38:15.326971 4692 net.cpp:125] Top shape: 256 1 1 1 (256)

I0721 10:38:15.326982 4692 net.cpp:156] data does not need backward computation.

I0721 10:38:15.327003 4692 net.cpp:74] Creating Layer conv1

I0721 10:38:15.327011 4692 net.cpp:84] conv1 <- data

I0721 10:38:15.327033 4692 net.cpp:110] conv1 -> conv1

I0721 10:38:16.721956 4692 net.cpp:125] Top shape: 256 96 55 55 (74342400)

I0721 10:38:16.722030 4692 net.cpp:151] conv1 needs backward computation.

I0721 10:38:16.722059 4692 net.cpp:74] Creating Layer relu1

I0721 10:38:16.722070 4692 net.cpp:84] relu1 <- conv1

I0721 10:38:16.722082 4692 net.cpp:98] relu1 -> conv1 (in-place)

I0721 10:38:16.722096 4692 net.cpp:125] Top shape: 256 96 55 55 (74342400)

I0721 10:38:16.722105 4692 net.cpp:151] relu1 needs backward computation.

I0721 10:38:16.722116 4692 net.cpp:74] Creating Layer pool1

I0721 10:38:16.722125 4692 net.cpp:84] pool1 <- conv1

I0721 10:38:16.722133 4692 net.cpp:110] pool1 -> pool1

I0721 10:38:16.722167 4692 net.cpp:125] Top shape: 256 96 27 27 (17915904)

I0721 10:38:16.722187 4692 net.cpp:151] pool1 needs backward computation.

I0721 10:38:16.722205 4692 net.cpp:74] Creating Layer norm1

I0721 10:38:16.722221 4692 net.cpp:84] norm1 <- pool1

I0721 10:38:16.722234 4692 net.cpp:110] norm1 -> norm1

I0721 10:38:16.722251 4692 net.cpp:125] Top shape: 256 96 27 27 (17915904)

I0721 10:38:16.722260 4692 net.cpp:151] norm1 needs backward computation.

I0721 10:38:16.722272 4692 net.cpp:74] Creating Layer conv2

I0721 10:38:16.722280 4692 net.cpp:84] conv2 <- norm1

I0721 10:38:16.722290 4692 net.cpp:110] conv2 -> conv2

I0721 10:38:16.725225 4692 net.cpp:125] Top shape: 256 256 27 27 (47775744)

I0721 10:38:16.725242 4692 net.cpp:151] conv2 needs backward computation.

I0721 10:38:16.725253 4692 net.cpp:74] Creating Layer relu2

I0721 10:38:16.725261 4692 net.cpp:84] relu2 <- conv2

I0721 10:38:16.725270 4692 net.cpp:98] relu2 -> conv2 (in-place)

I0721 10:38:16.725280 4692 net.cpp:125] Top shape: 256 256 27 27 (47775744)

I0721 10:38:16.725288 4692 net.cpp:151] relu2 needs backward computation.

I0721 10:38:16.725298 4692 net.cpp:74] Creating Layer pool2

I0721 10:38:16.725307 4692 net.cpp:84] pool2 <- conv2

I0721 10:38:16.725317 4692 net.cpp:110] pool2 -> pool2

I0721 10:38:16.725329 4692 net.cpp:125] Top shape: 256 256 13 13 (11075584)

I0721 10:38:16.725338 4692 net.cpp:151] pool2 needs backward computation.

I0721 10:38:16.725358 4692 net.cpp:74] Creating Layer norm2

I0721 10:38:16.725368 4692 net.cpp:84] norm2 <- pool2

I0721 10:38:16.725378 4692 net.cpp:110] norm2 -> norm2

I0721 10:38:16.725389 4692 net.cpp:125] Top shape: 256 256 13 13 (11075584)

I0721 10:38:16.725399 4692 net.cpp:151] norm2 needs backward computation.

I0721 10:38:16.725409 4692 net.cpp:74] Creating Layer conv3

I0721 10:38:16.725419 4692 net.cpp:84] conv3 <- norm2

I0721 10:38:16.725427 4692 net.cpp:110] conv3 -> conv3

I0721 10:38:16.735193 4692 net.cpp:125] Top shape: 256 384 13 13 (16613376)

I0721 10:38:16.735213 4692 net.cpp:151] conv3 needs backward computation.

I0721 10:38:16.735224 4692 net.cpp:74] Creating Layer relu3

I0721 10:38:16.735234 4692 net.cpp:84] relu3 <- conv3

I0721 10:38:16.735242 4692 net.cpp:98] relu3 -> conv3 (in-place)

I0721 10:38:16.735250 4692 net.cpp:125] Top shape: 256 384 13 13 (16613376)

I0721 10:38:16.735258 4692 net.cpp:151] relu3 needs backward computation.

I0721 10:38:16.735302 4692 net.cpp:74] Creating Layer conv4

I0721 10:38:16.735312 4692 net.cpp:84] conv4 <- conv3

I0721 10:38:16.735321 4692 net.cpp:110] conv4 -> conv4

I0721 10:38:16.743952 4692 net.cpp:125] Top shape: 256 384 13 13 (16613376)

I0721 10:38:16.743988 4692 net.cpp:151] conv4 needs backward computation.

I0721 10:38:16.744000 4692 net.cpp:74] Creating Layer relu4

I0721 10:38:16.744010 4692 net.cpp:84] relu4 <- conv4

I0721 10:38:16.744020 4692 net.cpp:98] relu4 -> conv4 (in-place)

I0721 10:38:16.744030 4692 net.cpp:125] Top shape: 256 384 13 13 (16613376)

I0721 10:38:16.744038 4692 net.cpp:151] relu4 needs backward computation.

I0721 10:38:16.744050 4692 net.cpp:74] Creating Layer conv5

I0721 10:38:16.744057 4692 net.cpp:84] conv5 <- conv4

I0721 10:38:16.744067 4692 net.cpp:110] conv5 -> conv5

I0721 10:38:16.748935 4692 net.cpp:125] Top shape: 256 256 13 13 (11075584)

I0721 10:38:16.748955 4692 net.cpp:151] conv5 needs backward computation.

I0721 10:38:16.748965 4692 net.cpp:74] Creating Layer relu5

I0721 10:38:16.748975 4692 net.cpp:84] relu5 <- conv5

I0721 10:38:16.748983 4692 net.cpp:98] relu5 -> conv5 (in-place)

I0721 10:38:16.748998 4692 net.cpp:125] Top shape: 256 256 13 13 (11075584)

I0721 10:38:16.749011 4692 net.cpp:151] relu5 needs backward computation.

I0721 10:38:16.749022 4692 net.cpp:74] Creating Layer pool5

I0721 10:38:16.749030 4692 net.cpp:84] pool5 <- conv5

I0721 10:38:16.749039 4692 net.cpp:110] pool5 -> pool5

I0721 10:38:16.749050 4692 net.cpp:125] Top shape: 256 256 6 6 (2359296)

I0721 10:38:16.749058 4692 net.cpp:151] pool5 needs backward computation.

I0721 10:38:16.749074 4692 net.cpp:74] Creating Layer fc6

I0721 10:38:16.749083 4692 net.cpp:84] fc6 <- pool5

I0721 10:38:16.749091 4692 net.cpp:110] fc6 -> fc6

I0721 10:38:17.160079 4692 net.cpp:125] Top shape: 256 4096 1 1 (1048576)

I0721 10:38:17.160148 4692 net.cpp:151] fc6 needs backward computation.

I0721 10:38:17.160166 4692 net.cpp:74] Creating Layer relu6

I0721 10:38:17.160177 4692 net.cpp:84] relu6 <- fc6

I0721 10:38:17.160190 4692 net.cpp:98] relu6 -> fc6 (in-place)

I0721 10:38:17.160202 4692 net.cpp:125] Top shape: 256 4096 1 1 (1048576)

I0721 10:38:17.160212 4692 net.cpp:151] relu6 needs backward computation.

I0721 10:38:17.160222 4692 net.cpp:74] Creating Layer drop6

I0721 10:38:17.160230 4692 net.cpp:84] drop6 <- fc6

I0721 10:38:17.160238 4692 net.cpp:98] drop6 -> fc6 (in-place)

I0721 10:38:17.160258 4692 net.cpp:125] Top shape: 256 4096 1 1 (1048576)

I0721 10:38:17.160265 4692 net.cpp:151] drop6 needs backward computation.

I0721 10:38:17.160277 4692 net.cpp:74] Creating Layer fc7

I0721 10:38:17.160286 4692 net.cpp:84] fc7 <- fc6

I0721 10:38:17.160295 4692 net.cpp:110] fc7 -> fc7

I0721 10:38:17.342094 4692 net.cpp:125] Top shape: 256 4096 1 1 (1048576)

I0721 10:38:17.342157 4692 net.cpp:151] fc7 needs backward computation.

I0721 10:38:17.342175 4692 net.cpp:74] Creating Layer relu7

I0721 10:38:17.342185 4692 net.cpp:84] relu7 <- fc7

I0721 10:38:17.342198 4692 net.cpp:98] relu7 -> fc7 (in-place)

I0721 10:38:17.342208 4692 net.cpp:125] Top shape: 256 4096 1 1 (1048576)

I0721 10:38:17.342217 4692 net.cpp:151] relu7 needs backward computation.

I0721 10:38:17.342228 4692 net.cpp:74] Creating Layer drop7

I0721 10:38:17.342236 4692 net.cpp:84] drop7 <- fc7

I0721 10:38:17.342245 4692 net.cpp:98] drop7 -> fc7 (in-place)

I0721 10:38:17.342254 4692 net.cpp:125] Top shape: 256 4096 1 1 (1048576)

I0721 10:38:17.342262 4692 net.cpp:151] drop7 needs backward computation.

I0721 10:38:17.342274 4692 net.cpp:74] Creating Layer fc8

I0721 10:38:17.342283 4692 net.cpp:84] fc8 <- fc7

I0721 10:38:17.342291 4692 net.cpp:110] fc8 -> fc8

I0721 10:38:17.343199 4692 net.cpp:125] Top shape: 256 22 1 1 (5632)

I0721 10:38:17.343214 4692 net.cpp:151] fc8 needs backward computation.

I0721 10:38:17.343231 4692 net.cpp:74] Creating Layer loss

I0721 10:38:17.343240 4692 net.cpp:84] loss <- fc8

I0721 10:38:17.343250 4692 net.cpp:84] loss <- label

I0721 10:38:17.343264 4692 net.cpp:151] loss needs backward computation.

I0721 10:38:17.343305 4692 net.cpp:173] Collecting Learning Rate and Weight Decay.

I0721 10:38:17.343327 4692 net.cpp:166] Network initialization done.

I0721 10:38:17.343335 4692 net.cpp:167] Memory required for Data 1073760256CIFAR-10在caffe上进行训练与学习

使用数据库:CIFAR-10

60000张 32X32 彩色图像 10类,50000张训练,10000张测试

准备

在终端运行以下指令:

cd $CAFFE_ROOT/data/cifar10

./get_cifar10.sh

cd $CAFFE_ROOT/examples/cifar10

./create_cifar10.sh其中CAFFE_ROOT是caffe-master在你机子的地址

运行之后,将会在examples中出现数据库文件./cifar10-leveldb和数据库图像均值二进制文件./mean.binaryproto

模型

该CNN由卷积层,POOLing层,非线性变换层,在顶端的局部对比归一化线性分类器组成。该模型的定义在CAFFE_ROOT/examples/cifar10 directory’s cifar10_quick_train.prototxt中,可以进行修改。其实后缀为prototxt很多都是用来修改配置的。

训练和测试

训练这个模型非常简单,当我们写好参数设置的文件cifar10_quick_solver.prototxt和定义的文件cifar10_quick_train.prototxt和cifar10_quick_test.prototxt后,运行train_quick.sh或者在终端输入下面的命令:

cd $CAFFE_ROOT/examples/cifar10

./train_quick.sh即可,train_quick.sh是一个简单的脚本,会把执行的信息显示出来,培训的工具是train_net.bin,cifar10_quick_solver.prototxt作为参数。

然后出现类似以下的信息:这是搭建模型的相关信息

I0317 21:52:48.945710 2008298256 net.cpp:74] Creating Layer conv1

I0317 21:52:48.945716 2008298256 net.cpp:84] conv1 <- data

I0317 21:52:48.945725 2008298256 net.cpp:110] conv1 -> conv1

I0317 21:52:49.298691 2008298256 net.cpp:125] Top shape: 100 32 32 32 (3276800)

I0317 21:52:49.298719 2008298256 net.cpp:151] conv1 needs backward computation.接着:

0317 21:52:49.309370 2008298256 net.cpp:166] Network initialization done.

I0317 21:52:49.309376 2008298256 net.cpp:167] Memory required for Data 23790808

I0317 21:52:49.309422 2008298256 solver.cpp:36] Solver scaffolding done.

I0317 21:52:49.309447 2008298256 solver.cpp:47] Solving CIFAR10_quick_train之后,训练开始

I0317 21:53:12.179772 2008298256 solver.cpp:208] Iteration 100, lr = 0.001

I0317 21:53:12.185698 2008298256 solver.cpp:65] Iteration 100, loss = 1.73643

...

I0317 21:54:41.150030 2008298256 solver.cpp:87] Iteration 500, Testing net

I0317 21:54:47.129461 2008298256 solver.cpp:114] Test score #0: 0.5504

I0317 21:54:47.129500 2008298256 solver.cpp:114] Test score #1: 1.27805其中每100次迭代次数显示一次训练时lr(learningrate),和loss(训练损失函数),每500次测试一次,输出score 0(准确率)和score 1(测试损失函数)

当5000次迭代之后,正确率约为75%,模型的参数存储在二进制protobuf格式在cifar10_quick_iter_5000

然后,这个模型就可以用来运行在新数据上了。

其他

另外,更改cifar*solver.prototxt文件可以使用CPU训练,

# solver mode: CPU or GPU

solver_mode: CPU可以看看CPU和GPU训练的差别。

主要资料来源:caffe官网教程

4万+

4万+

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?