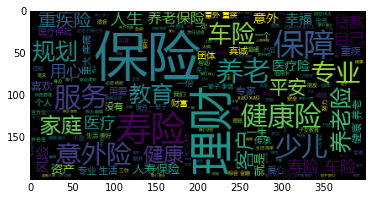

#Picture first

#Code

#Only time, re and requests are used by the scraping code below
import time
import re
import requests
from bs4 import BeautifulSoup
import pandas as pd
import numpy as np
from selenium import webdriver
from selenium.webdriver.common.keys import Keys
from selenium.webdriver.common.action_chains import ActionChains
from selenium.webdriver.support.select import Select
#driver=webdriver.Chrome()

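#Collect the profile URL of every agent from the paginated listing
url_list=[]
#The pattern depends on the exact whitespace in the listing page's HTML
p_url=re.compile(r"""class="name">\r\n\t\t\t\t\t\t\t<a href="([\s\S]*?)" target="_blank">""")
for i in range(1,1742):
    print(i)
    page_url='https://www.bxd365.com/agent/0-0-0/'+str(i)+'.html'
    #page_url='https://www.bxd365.com/agent/0-0-0/2.html'
    try:
        page=requests.get(page_url,timeout=15)
    except requests.RequestException:
        #Wait five seconds and retry once
        time.sleep(5)
        page=requests.get(page_url,timeout=15)
    page.encoding='utf-8'
    html=page.text
    url_list.extend(p_url.findall(html))
print(len(url_list))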
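The loop above retries a failed request exactly once, so a second failure in a row stops the whole crawl. A minimal sketch of a reusable retry helper follows. The name `fetch_with_retry` and its parameters are hypothetical, not part of the original post, and the demonstration uses a fake fetch function instead of a real network call.

```python
import time


def fetch_with_retry(fetch, url, retries=3, delay=5.0, sleep=time.sleep):
    """Call fetch(url) up to `retries` times, pausing `delay` seconds after each failure."""
    if retries < 1:
        raise ValueError("retries must be at least 1")
    last_error = None
    for attempt in range(1, retries + 1):
        try:
            return fetch(url)
        except Exception as exc:  # with requests, narrow this to requests.RequestException
            last_error = exc
            if attempt < retries:
                sleep(delay)
    # Every attempt failed: surface the last error to the caller.
    raise last_error


# Demonstration with a fake fetch function that fails twice, then succeeds.
attempts = []


def flaky_fetch(url):
    attempts.append(url)
    if len(attempts) < 3:
        raise ConnectionError("simulated network failure")
    return "<html>page for " + url + "</html>"


html = fetch_with_retry(flaky_fetch, "https://example.com/1.html", delay=0)
print(len(attempts), html)
```

With requests, the same helper could be called as `fetch_with_retry(lambda u: requests.get(u, timeout=15), page_url)`.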

#Visit each agent's profile page and extract the personal signature
count=0
content_dict={}
#"个性签名" is the page's label for the personal signature
p_content=re.compile(r"""个性签名:</span>\r\n\t\t\t\t\t\t<a class="f14co2 cu">\r\n\t\t\t\t\t\t\t([\s\S]*?)\t\t\t\t\t\t</a>""")
for url in url_list:
    #url='https://bxd574268973.bxd365.com/'
    try:
        page=requests.get(url,timeout=15)
    except requests.RequestException:
        #Wait five seconds and retry once
        time.sleep(5)
        page=requests.get(url,timeout=15)
    page.encoding='utf-8'
    html=page.text
    content=p_content.findall(html)
    if len(content)>0:
        content_dict[url]=content[0]
    #Progress output
    print(url)
    count+=1
    print(count)
print(len(content_dict))
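The signature pattern above hard-codes the exact run of `\r\n` and tab characters between the tags, so it stops matching if the page's whitespace changes even slightly. A sketch of a more tolerant variant follows. The sample HTML strings are hypothetical, built from the shape the original pattern expects rather than from the live page, and `extract_signature` is an illustrative name.

```python
import re

# Accept either an ASCII or a full-width colon after the label,
# and any run of whitespace around the tags.
SIGNATURE_RE = re.compile(
    r'个性签名[::]</span>\s*<a class="f14co2 cu">\s*(.*?)\s*</a>',
    re.S,
)


def extract_signature(html):
    """Return the signature text, or None when the page has no signature block."""
    match = SIGNATURE_RE.search(html)
    return match.group(1) if match else None


# Hypothetical snippets shaped like the markup the original pattern expects.
tabbed = ('<span>个性签名:</span>\r\n\t\t\t\t\t\t<a class="f14co2 cu">'
          '\r\n\t\t\t\t\t\t\t专业服务\t\t\t\t\t\t</a>')
spaced = '<span>个性签名:</span> <a class="f14co2 cu"> 专业服务 </a>'

print(extract_signature(tabbed) == extract_signature(spaced))
```

Because the captured group is surrounded by `\s*`, the result also comes back without the leading and trailing whitespace that the original capture would include.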

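#Concatenate the signatures into one string and count how many are kept
result=''
count=0
for i in content_dict.values():
    #Exclude this one signature ("Insurance is an umbrella on a sunny day and a seat belt in a car")
    if i!='保险是晴天的一把伞,是汽车的安全带':
        result=result+i
        count=count+1
print(count)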
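The scraping step leaves the combined signatures in memory as `result`, while the word-cloud step reads a text file from disk. The post does not show how that file was produced. One possible bridge, as a sketch only: write the string out with an explicit encoding and read it back the same way. The helper names and the file name `signatures.txt` are hypothetical.

```python
import tempfile
from pathlib import Path


def save_text(text, path):
    """Write text to path as UTF-8, replacing any existing file."""
    Path(path).write_text(text, encoding="utf-8")


def load_text(path):
    """Read the file back using the same encoding it was written with."""
    return Path(path).read_text(encoding="utf-8")


# Round trip through a temporary directory.
original = "保险是晴天的一把伞"
with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "signatures.txt"
    save_text(original, path)
    restored = load_text(path)

print(restored == original)
```

Naming the encoding on both sides matters for Chinese text, because `open()` without an `encoding` argument falls back to the platform's locale encoding, which may differ between the machine that writes the file and the one that reads it.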

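print(result)

import matplotlib.pyplot as plt #plotting library
import jieba #Chinese word segmentation library
from wordcloud import WordCloud #word cloud library
#1. Read in the txt text data
with open(r'C:/Users/Administrator/Desktop/保险代理人个性标签.txt',"r") as f:
    text=f.read()
#2. Segment with jieba; the default is accurate mode. A custom dictionary userdict.txt can be added with jieba.load_userdict(file_name), where file_name is a file-like object or the path to the custom dictionary
#The custom dictionary uses the same format as the default dictionary dict.txt: one word per line, each line having three space-separated parts in fixed order: word, frequency (optional), part of speech (optional)
cut_text= jieba.cut(text)
result= "/".join(cut_text)#The segments must be joined with a separator into one string, otherwise the word cloud cannot be drawn
#print(result)
my_wordcloud = WordCloud(font_path='C:/Users/Administrator/Desktop/msyh.ttf').generate(result)
plt.imshow(my_wordcloud)
plt.axis("off")
plt.show()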
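The comment on the join step says the segments need a separator before the word cloud can be drawn. A rough way to see why is sketched below. It uses a plain regular expression as a simplified stand-in for word splitting (an assumption; WordCloud's own tokenizer is not reproduced here) and a hand-segmented phrase in place of real `jieba.cut()` output.

```python
import re
from collections import Counter


def split_words(text):
    """Split on runs of word characters; a simplified stand-in tokenizer."""
    return re.findall(r"\w+", text)


# Hand-segmented for illustration; real segments would come from jieba.cut().
segments = ["保险", "是", "晴天", "的", "一把", "伞", "保险", "是", "安全带"]

unseparated = "".join(segments)
separated = "/".join(segments)

# Without a separator the whole phrase is treated as a single "word".
print(len(split_words(unseparated)))
# With a separator each segment is counted on its own.
print(len(split_words(separated)))

# Word frequencies are what determine the size of each word in the cloud.
top_two = Counter(split_words(separated)).most_common(2)
print(top_two)
```

Chinese text has no spaces between words, so without the separator a splitter of this kind has no way to tell where one word ends and the next begins.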
