[Machine Learning Experiment 1] Batch gradient descent and stochastic gradient descent

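Batch gradient descent accumulates the parameter updates over the whole training set and then applies them in one step. It suits the case where the entire training set is known up front, but it is a poor fit for large-scale data, because every single update needs a full pass over the data.

Stochastic gradient descent updates the parameters immediately after each training sample, one sample at a time. It is commonly used on large training sets, but its updates are noisy, so it tends to hover around the minimum rather than land on it exactly, and on non-convex objectives it can easily settle in a local optimum.

For details, see the lecture notes of Andrew Ng's Machine Learning course (reference [1]).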
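For reference, the two update rules for least-squares linear regression can be written as follows. The notation is an assumption borrowed from the CS229 notes: learning rate $\alpha$, hypothesis $h_\theta(x) = \theta^T x$, and $m$ training examples.

```latex
% Batch gradient descent: one update per pass, summed over all m examples
\theta_j := \theta_j + \alpha \sum_{i=1}^{m} \bigl(y^{(i)} - h_\theta(x^{(i)})\bigr)\, x_j^{(i)}
\qquad \text{(for every } j\text{)}

% Stochastic gradient descent: one update per example
\text{for } i = 1, \dots, m: \quad
\theta_j := \theta_j + \alpha \bigl(y^{(i)} - h_\theta(x^{(i)})\bigr)\, x_j^{(i)}
\qquad \text{(for every } j\text{)}
```

The only difference is where the sum sits: batch descent sums the per-example terms before touching theta, while stochastic descent applies each term as soon as it is computed.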

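Possible improvements

1) Verify sample reliability and feature completeness

The data may contain outliers. An outlier can be a measurement error, or it can come from a feature the samples do not capture. For example, a garment scores 1 for color and 1 for fabric, yet sells for 100 million yuan, because it carries Yao Ming's autograph. That feature was never considered, so it shows up as training error. The cause of each outlier in the samples should be identified.

2) Improve batch gradient descent

3) Improve stochastic gradient descent

Find a suitable training path (the order in which samples are learned) that maximizes the chance of reaching the global optimum.

4) Test whether the hypothesis is reasonable

Check whether H(X) is a reasonable hypothesis.

5) Dimension expansion

Dimension expansion and overfitting: adding dimensions improves the fit on the training set but hurts applicability to the test set. How can a reasonable balance be found?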

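Below is an experiment I ran.

Suppose we have training samples for pricing clothes, stored as matrix in the code. The first column is the color score and the second column is the fabric-quality score; for example, the first sample (1, 4) is a garment that scores 1 for color and 4 for fabric. What we train is theta, the weights of color and fabric in the price. These weights are unknown and must be learned from the known true prices of the four samples: 19 yuan, ..., 20 yuan.

Both batch gradient descent and stochastic gradient descent yield theta_C = (3, 4)^T.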

/*

Matrix_A     theta_C     Matrix_A*theta_C

1 4                      19
2 5             ?        26
5 1             ?        19
4 2                      20

*/
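As a cross-check on the target value, the same least-squares problem can also be solved in closed form. The sketch below is not part of the original experiment: `solve_normal_2d` is a hypothetical helper that solves the normal equations (X^T X) theta = X^T y for two features with Cramer's rule, assuming the two feature columns are not collinear.

```c
#include <assert.h>
#include <math.h>

/* Hypothetical helper: closed-form least squares for two features,
 * solving (X^T X) theta = X^T y with Cramer's rule. */
void solve_normal_2d(int m, double x[][2], const double y[], double theta[2])
{
    double a = 0.0, b = 0.0, d = 0.0;  /* X^T X = [[a, b], [b, d]] */
    double p = 0.0, q = 0.0;           /* X^T y = [p, q] */
    for (int i = 0; i < m; ++i) {
        a += x[i][0] * x[i][0];
        b += x[i][0] * x[i][1];
        d += x[i][1] * x[i][1];
        p += x[i][0] * y[i];
        q += x[i][1] * y[i];
    }
    double det = a * d - b * b;
    assert(fabs(det) > 1e-12);  /* feature columns must not be collinear */
    theta[0] = (p * d - b * q) / det;
    theta[1] = (a * q - b * p) / det;
}
```

Called with the four samples and prices from the comment above, it gives the value that both gradient descent variants should approach.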

Batch gradient descent:

- #include "stdio.h"
- int main(void)
- {
-     float matrix[4][2]={{1,4},{2,5},{5,1},{4,2}};
-     float result[4]={19,26,19,20};
-     float theta[2]={2,5}; //initialized theta {2,5}, we use the algorithm to get {3,4} to fit the model
-     float learning_rate = 0.01;
-     float loss = 1000.0; //set a loss big enough
-     for(int i = 0;i<100&&loss>0.0001;++i)
-     {
-         float update[2]={0.0,0.0}; //accumulated over all samples before theta changes
-         for(int j = 0;j<4;++j)
-         {
-             float h = 0.0;
-             for(int k=0;k<2;++k)
-             {
-                 h += matrix[j][k]*theta[k];
-             }
-             float error = result[j]-h;
-             for(int k=0;k<2;++k)
-             {
-                 update[k] += error*matrix[j][k];
-             }
-         }
-         for(int k=0;k<2;++k)
-         {
-             theta[k] += learning_rate*update[k]; //one batch update per pass over the data
-         }
-         printf("*************************************\n");
-         printf("theta now: %f,%f\n",theta[0],theta[1]);
-         loss = 0.0; //sum of squared errors with the updated theta
-         for(int j = 0;j<4;++j)
-         {
-             float sum=0.0;
-             for(int k = 0;k<2;++k)
-             {
-                 sum += matrix[j][k]*theta[k];
-             }
-             loss += (sum-result[j])*(sum-result[j]);
-         }
-         printf("loss now: %f\n",loss);
-     }
-     return 0;
- }

Stochastic gradient descent:

- #include "stdio.h"

- int main(void)

- {

- float matrix[4][2]={{1,4},{2,5},{5,1},{4,2}};

- float result[4]={19,26,19,20};

- float theta[2]={2,5};

- float loss = 10.0;

- for(int i =0 ;i<100&&loss>0.001;++i)

- {

- float error_sum=0.0;

- int j=i%4;

- {

- float h = 0.0;

- for(int k=0;k<2;++k)

- {

- h += matrix[j][k]*theta[k];

- }

- error_sum = result[j]-h;

- for(int k=0;k<2;++k)

- {

- theta[k] = theta[k]+0.01*(error_sum)*matrix[j][k];

- }

- }

- printf("%f,%f\n",theta[0],theta[1]);

- loss = 0.0; //reuse the outer loss so the loop condition sees it

- for(int j = 0;j<4;++j)

- {

- float sum=0.0;

- for(int k = 0;k<2;++k)

- {

- sum += matrix[j][k]*theta[k];

- }

- loss += (sum-result[j])*(sum-result[j]);

- }

- printf("%f\n",loss);

- }

- return 0;

- }

References:

[1] http://www.stanford.edu/class/cs229/notes/cs229-notes1.pdf

[2] http://www.cnblogs.com/rocketfan/archive/2011/02/27/1966325.html

[3] http://www.dsplog.com/2011/10/29/batch-gradient-descent/

[4] http://ygc.name/2011/03/22/machine-learning-ex2-linear-regression/

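[Machine Learning Experiment 2] Solving a classification problem with logistic regression

A classification problem is similar to a regression problem; the difference is that y takes discrete values. In binary classification, for example, y is either 0 or 1.

The method comes from Part II, "Classification and logistic regression", of notes 1 of Andrew Ng's Machine Learning course.

The experiment shows that repeated iterations maximize the likelihood and make the classifier effective. The data is artificial and has no physical meaning. The first column of matrix is x0, fixed at 1; the second column is the discriminative one; the third and fourth columns are non-discriminative. The parameters that end up dominating the prediction are theta[0] and theta[1].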

- #include "stdio.h"

- #include "math.h"

- double matrix[6][4]={{1,47,76,24}, //include x0=1

- {1,46,77,23},

- {1,48,74,22},

- {1,34,76,21},

- {1,35,75,24},

- {1,34,77,25},

- };

- double result[]={1,1,1,0,0,0};

- double theta[]={1,1,1,1}; // include theta0

- double function_g(double x) //logistic function g(x) = 1/(1+e^(-x))

- {

- return 1.0/(1.0+exp(-x)); //exp() from math.h replaces the truncated constant 2.718281828

- }

- int main(void)

- {

- double likelyhood = 0.0;

- float sum=0.0;

- for(int j = 0;j<6;++j)

- {

- double xi = 0.0;

- for(int k=0;k<4;++k)

- {

- xi += matrix[j][k]*theta[k];

- }

- printf("sample %d,%f\n",j,function_g(xi));

- sum += result[j]*log(function_g(xi)) + (1-result[j])*log(1-function_g(xi)) ;

- }

- printf("%f\n",sum);

- for(int i =0 ;i<1000;++i)

- {

- double error_sum=0.0;

- int j=i%6;

- {

- double h = 0.0;

- for(int k=0;k<4;++k)

- {

- h += matrix[j][k]*theta[k];

- }

- error_sum = result[j]-function_g(h);

- for(int k=0;k<4;++k)

- {

- theta[k] = theta[k]+0.001*(error_sum)*matrix[j][k];

- }

- }

- printf("theta now:%f,%f,%f,%f\n",theta[0],theta[1],theta[2],theta[3]);

- float sum=0.0;

- for(int j = 0;j<6;++j)

- {

- double xi = 0.0;

- for(int k=0;k<4;++k)

- {

- xi += matrix[j][k]*theta[k];

- }

- printf("sample output now: %d,%f\n",j,function_g(xi));

- sum += result[j]*log(function_g(xi)) + (1-result[j])*log(1-function_g(xi)) ;

- }

- printf("maximize the log likelihood now:%f\n",sum);

- printf("************************************\n");

- }

- return 0;

- }

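The magical softmax regression: I spent a whole evening failing to get it to work, got up at 3 a.m. to keep going, and have only just got it running. I think I finally understand it thoroughly, haha. The code tries out the softmax regression algorithm mentioned in lecture 4 of Andrew Ng's course, with reference to http://ufldl.stanford.edu/wiki/index.php/Softmax_Regression

It finally converged to this result, which feels great.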

smaple 0: 0.983690,0.004888,0.011422,likelyhood:-0.016445

smaple 1: 0.940236,0.047957,0.011807,likelyhood:-0.061625

smaple 2: 0.818187,0.001651,0.180162,likelyhood:-0.200665

smaple 3: 0.000187,0.999813,0.000000,likelyhood:-0.000187

smaple 4: 0.007913,0.992087,0.000000,likelyhood:-0.007945

smaple 5: 0.001585,0.998415,0.000000,likelyhood:-0.001587

smaple 6: 0.020159,0.000001,0.979840,likelyhood:-0.020366

smaple 7: 0.018230,0.000000,0.981770,likelyhood:-0.018398

smaple 8: 0.025072,0.000000,0.974928,likelyhood:-0.025392

- #include "stdio.h"

- #include "math.h"

- double matrix[9][4]={{1,47,76,24}, //include x0=1

- {1,46,77,23},

- {1,48,74,22},

- {1,34,76,21},

- {1,35,75,24},

- {1,34,77,25},

- {1,55,76,21},

- {1,56,74,22},

- {1,55,72,22},

- };

- double result[]={1,

- 1,

- 1,

- 2,

- 2,

- 2,

- 3,

- 3,

- 3,};

- double theta[2][4]={

- {0.3,0.3,0.01,0.01},

- {0.5,0.5,0.01,0.01}}; // include theta0

- double function_g(double x)

- {

- double ex = pow(2.718281828,x);

- return ex/(1+ex);

- }

- double function_e(double x)

- {

- return pow(2.718281828,x);

- }

- int main(void)

- {

- double likelyhood = 0.0;

- for(int j = 0;j<9;++j)

- {

- double sum = 1.0; // start at 1: class 3 has theta fixed to 0, so exp(theta3*x) = exp(0) = 1

- for(int l = 0;l<2;++l)

- {

- double xi = 0.0;

- for(int k=0;k<4;++k)

- {

- xi += matrix[j][k]*theta[l][k];

- }

- sum += function_e(xi);

- }

- double xi = 0.0;

- for(int k=0;k<4;++k)

- {

- xi += matrix[j][k]*theta[0][k];

- }

- double p1 = function_e(xi)/sum;

- xi = 0.0;

- for(int k=0;k<4;++k)

- {

- xi += matrix[j][k]*theta[1][k];

- }

- double p2 = function_e(xi)/sum;

- double p3 = 1-p1-p2;

- double ltheta = 0.0;

- if(result[j]==1)

- ltheta = log(p1);

- else if(result[j]==2)

- ltheta = log(p2);

- else if(result[j]==3)

- ltheta = log(p3);

- else

- {}

- printf("smaple %d: %f,%f,%f,likelyhood:%f\n",j,p1,p2,p3,ltheta);

- }

- for(int i =0 ;i<1000;++i)

- {

- for(int j=0;j<9;++j)

- {

- double sum = 1.0; // start at 1: class 3 has theta fixed to 0, so exp(theta3*x) = exp(0) = 1

- for(int l = 0;l<2;++l)

- {

- double xi = 0.0;

- for(int k=0;k<4;++k)

- {

- xi += matrix[j][k]*theta[l][k];

- }

- sum += function_e(xi);

- }

- double xi = 0.0;

- for(int k=0;k<4;++k)

- {

- xi += matrix[j][k]*theta[0][k];

- }

- double p1 = function_e(xi)/sum;

- xi = 0.0;

- for(int k=0;k<4;++k)

- {

- xi += matrix[j][k]*theta[1][k];

- }

- double p2 = function_e(xi)/sum;

- double p3 = 1-p1-p2;

- for(int m = 0; m<4; ++m)

- {

- if(result[j]==1)

- {

- theta[0][m] = theta[0][m] + 0.001*(1-p1)*matrix[j][m];

- }

- else

- {

- theta[0][m] = theta[0][m] + 0.001*(-p1)*matrix[j][m];

- }

- if(result[j]==2)

- {

- theta[1][m] = theta[1][m] + 0.001*(1-p2)*matrix[j][m];

- }

- else

- {

- theta[1][m] = theta[1][m] + 0.001*(-p2)*matrix[j][m];

- }

- }

- }

- double likelyhood = 0.0;

- for(int j = 0;j<9;++j)

- {

- double sum = 1.0; // start at 1: class 3 has theta fixed to 0, so exp(theta3*x) = exp(0) = 1

- for(int l = 0;l<2;++l)

- {

- double xi = 0.0;

- for(int k=0;k<4;++k)

- {

- xi += matrix[j][k]*theta[l][k];

- }

- sum += function_e(xi);

- }

- double xi = 0.0;

- for(int k=0;k<4;++k)

- {

- xi += matrix[j][k]*theta[0][k];

- }

- double p1 = function_e(xi)/sum;

- xi = 0.0;

- for(int k=0;k<4;++k)

- {

- xi += matrix[j][k]*theta[1][k];

- }

- double p2 = function_e(xi)/sum;

- double p3 = 1-p1-p2;

- double ltheta = 0.0;

- if(result[j]==1)

- ltheta = log(p1);

- else if(result[j]==2)

- ltheta = log(p2);

- else if(result[j]==3)

- ltheta = log(p3);

- else

- {}

- printf("smaple %d: %f,%f,%f,likelyhood:%f\n",j,p1,p2,p3,ltheta);

- }

- }

- return 0;

- }

[Machine Learning Experiment 4] Linear programming

- #include "stdio.h"

-

- int main(void)

- {

- /*float constrain_set[3][7]={

- {-3,-6,-2,0,0,0,0}, //x0-3x1-6x2-2x3=0 object function

- {3,4,1,1,0,2,0}, //0x0+3x1+4x2+x3+x4=2 constrain 1

- {1,3,2,0,1,1,0} //0x0+x1+3x2+2x3+x5=1 constrain 2

- };

- int b = 5;

- int beta = 6;

- int row=3;*/

- float constrain_set[][7]={ //add the last column to store beta,no use for problem defination

- {-4,-3,0,0,0,0 ,0},//x0-4x1-3x2=0

- { 3, 4,1,0,0,12,0},//0x0+3x1+4x2+x3=12

- { 3, 3,0,1,0,10,0},//0x0+3x1+3x2+x4=10

- { 4, 2,0,0,1,8,0}, //0x0+4x1+2x2+x5=8

- };

- int b=5;

- int beta=6;

- int row=4;

-

-

- bool quit = false;

- for(;!quit;)

- {

- //find the min_value && negative, define column

- float min_value = 0.0;

- int min_column = 0;

- for(int i=0;i<b;++i)

- {

- if(constrain_set[0][i] < min_value)

- {

- min_value = constrain_set[0][i] ;

- min_column = i;

- }

- }

- if(min_value>=0.0)

- {

- break;

- }

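- //find the min_beta among rows with a positive pivot-column entry, define row

- int min_row = -1;

- float min_beta = 0.0;

- for(int i=1;i<row;++i)

- {

- if(constrain_set[i][min_column] <= 0.0) continue; //this row puts no limit on the entering variable

- constrain_set[i][beta] = constrain_set[i][b]/constrain_set[i][min_column];

- if(min_row<0 || constrain_set[i][beta]<min_beta)

- {

- min_beta = constrain_set[i][beta];

- min_row = i;

- }

- }

- if(min_row<0)

- {

- printf("unbounded\n"); //no row limits the entering variable

- break;

- }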

- float c = constrain_set[min_row][min_column];

- for(int i=0;i<=b;++i)

- {

- constrain_set[min_row][i] /= c;

- }

- for(int i=0;i<row;++i)

- {

- if(i==min_row)continue;

- c = constrain_set[i][min_column];

- for(int j=0;j<=b;++j)

- {

- constrain_set[i][j]-=constrain_set[min_row][j]*c;

- }

- }

- printf("maxz:%f\n",constrain_set[0][b]);

- }

- return 0;

-

- }


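The code above solves the following two linear programming examples. The first comes from reference 1 and the second from reference 2; the algorithm is mainly inspired by reference 2.

max z = 3x1+6x2+2x3

subject to:

3x1+4x2+x3 <= 2

x1+3x2+2x3 <= 1

x1>=0,x2>=0,x3>=0

and

max z=4x1+3x2

subject to:

3x1+4x2<=12

3x1+3x2<=10

4x1+2x2<=8

x1>=0,x2>=0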

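The rules for choosing the pivot are:

Entering column: the objective-row coefficient is negative and the smallest, for example -6. A negative coefficient means that increasing this variable improves the objective, and the most negative one looks like the largest improvement.

Leaving row: the row whose beta (right-hand side divided by the pivot-column entry) is smallest. This keeps every right-hand side non-negative after the pivot, so the solution stays feasible.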
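The two rules can be sketched as a pair of helper functions. The names `choose_entering` and `choose_leaving` and the `COLS` constant are assumptions for illustration; the tableau layout (objective in row 0, right-hand side in column `rhs`, one spare column) follows the listing above. Restricting the ratio test to positive pivot-column entries is the standard textbook form of the rule.

```c
#include <assert.h>

#define COLS 7  /* tableau width in the listing: 5 variables, RHS, one spare column */

/* Entering column: most negative coefficient in the objective row (row 0).
 * Returns -1 when no coefficient is negative, i.e. the tableau is optimal. */
int choose_entering(float t[][COLS], int nvars)
{
    int col = -1;
    float min_value = 0.0f;
    for (int j = 0; j < nvars; ++j) {
        if (t[0][j] < min_value) { min_value = t[0][j]; col = j; }
    }
    return col;
}

/* Leaving row: smallest ratio rhs / pivot-column entry, looking only at rows
 * whose pivot-column entry is positive. Returns -1 if there is no such row,
 * which means the objective is unbounded in this direction. */
int choose_leaving(float t[][COLS], int rows, int col, int rhs)
{
    assert(col >= 0 && col < rhs);
    int row = -1;
    float best = 0.0f;
    for (int i = 1; i < rows; ++i) {
        if (t[i][col] <= 0.0f) continue;
        float ratio = t[i][rhs] / t[i][col];
        if (row < 0 || ratio < best) { best = ratio; row = i; }
    }
    return row;
}
```

On the second example, the first pivot picks column 0 (coefficient -4) and row 3 (ratio 8/4 = 2, against 12/3 and 10/3 for the other rows).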

References

1) Lu Kaicheng, Combinatorial Mathematics (《组合数学》), 4th edition

2) L. R. Foulds, Optimization Techniques: An Introduction, Springer-Verlag New York Inc., 1981

Notes:

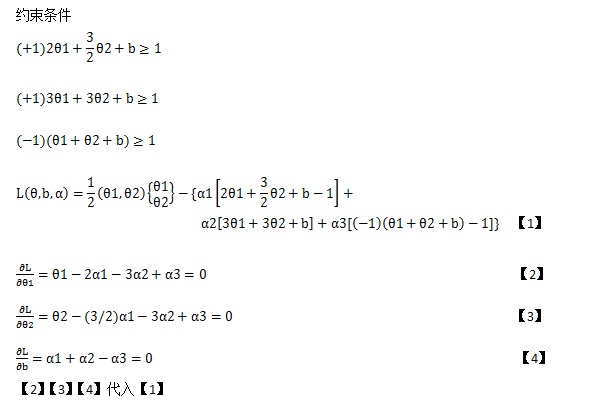

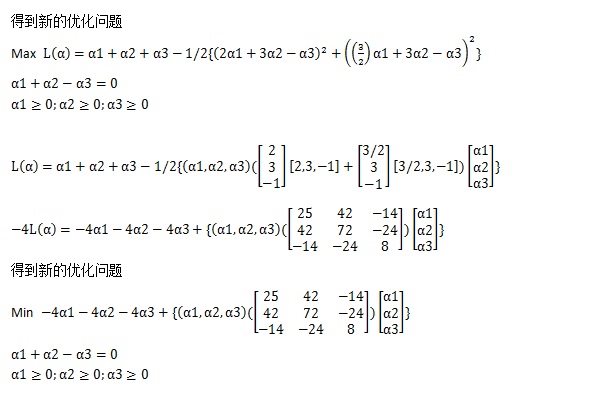

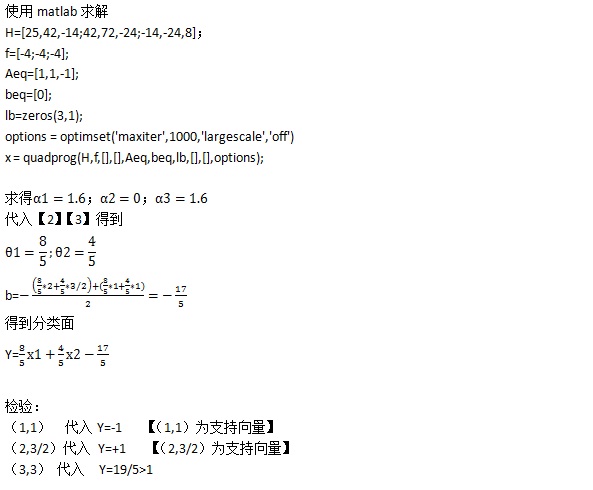

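1) α2=0 means the second sample does not lie on the margin boundary (it is not a support vector); for the points that do lie on it, every αi is non-zero.

2) The matrix of the quadratic term can be obtained by multiplying and summing matrices, as in the example above.

3) The objective function is negated to fit MATLAB's standard form.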

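Suppose we have the following training data and the following hypothesis:

| Training data | Result |
|---|---|
| X11, X12, X13, …, X1n | Y1 |
| X21, X22, X23, …, X2n | Y2 |
| … | … |
| Xm1, Xm2, Xm3, …, Xmn | Ym |

Hypothesis: H(Xi) = θ0+θ1*Xi1+θ2*Xi2+…+θn*Xin

From the training data we need to obtain the coefficient array (θ0, θ1, …, θn).

To predict for new data, take the inner product of the new data with the coefficient array, <(1,N1,N2,…,Nn),(θ0,θ1,…,θn)>; the result is the predicted value.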
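The prediction step is a single inner product. A minimal sketch, where `predict` is a hypothetical helper name and the leading 1 that pairs with θ0 is implied rather than stored in the input:

```c
#include <assert.h>

/* Prediction as the inner product <(1, x1..xn), (theta0..thetan)>.
 * theta has n + 1 entries; x has n entries (the leading 1 is implied). */
double predict(int n, const double theta[], const double x[])
{
    assert(n >= 0);
    double h = theta[0];            /* theta0 * 1 */
    for (int j = 0; j < n; ++j)
        h += theta[j + 1] * x[j];
    return h;
}
```

For example, with coefficients (1, 3, 4) and new data (2, 5), the prediction is 1 + 3*2 + 4*5 = 27.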

This uses the LMS (least mean squares) algorithm.

For the experiment, see: http://blog.csdn.net/pennyliang/article/details/6998517

Reference: Andrew Ng's Machine Learning notes, <Supervised Learning, Part I: Linear Regression>

To be continued

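[Machine learning] SVM experiment, continued

Suppose we apply a polynomial kernel (the kernel method) to the same samples as before.

Suppose a new element (0,0) needs to be classified.

Then Y(0,0) = [...], so (0,0) is a negative example.

With the kernel method, plugging in the support vectors no longer gives +1 or -1, i.e. Y(1,1) != -1 and Y(2,3/2) != 1. I have not figured out why yet, and hope someone can tell me.

The kernel function maps x1,x2 to x1*x1, x1*x2, x2*x1, x2*x2, so the original samples become

4,3,3,9/4 +

9,9,9,9 +

1,1,1,1 -

Solving in the same way as before gives:

Y = <(4,3,3,9/4),(x1,x2,x3,x4)> - <(1,1,1,1),(x1,x2,x3,x4)> + b

which gives b = -17.53125

Y = <(4,3,3,9/4),(x1,x2,x3,x4)> - <(1,1,1,1),(x1,x2,x3,x4)> - 17.53125

Dividing by 9.28125 gives:

Y = <(0.4310,0.3232,0.3232,0.2424),(x1,x2,x3,x4)> - <(0.1077,0.1077,0.1077,0.1077),(x1,x2,x3,x4)> - 1.8889

So:

Y(2,3/2) = +1

Y(1,1) = -1

So there is no need to worry about whether the support vectors evaluate to exactly +1 or -1. It is enough that positive examples give at least +r and negative examples at most -r; the smallest positive value r and the largest negative value -r are both attained at support vectors.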
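The numbers above can be checked with a few lines of code. The sketch below assumes the kernel is K(u, v) = (u . v)^2, which is the kernel whose explicit feature map is exactly (x1*x1, x1*x2, x2*x1, x2*x2); the function names are hypothetical. The assertion inside `decision` confirms that the kernel shortcut and the explicit map agree.

```c
#include <assert.h>
#include <math.h>

/* Degree-2 homogeneous polynomial kernel K(u, v) = (u . v)^2 on 2-D points. */
double poly2_kernel(const double u[2], const double v[2])
{
    double dot = u[0] * v[0] + u[1] * v[1];
    return dot * dot;
}

/* Inner product after the explicit map (x1, x2) -> (x1*x1, x1*x2, x2*x1, x2*x2). */
double mapped_dot(const double u[2], const double v[2])
{
    double fu[4] = {u[0]*u[0], u[0]*u[1], u[1]*u[0], u[1]*u[1]};
    double fv[4] = {v[0]*v[0], v[0]*v[1], v[1]*v[0], v[1]*v[1]};
    double s = 0.0;
    for (int i = 0; i < 4; ++i) s += fu[i] * fv[i];
    return s;
}

/* Decision value from the text: support vectors (2, 3/2) [+] and (1, 1) [-],
 * b = -17.53125, rescaled by 9.28125 so the support vectors give +1 and -1. */
double decision(const double x[2])
{
    const double sv_pos[2] = {2.0, 1.5};
    const double sv_neg[2] = {1.0, 1.0};
    /* the kernel must equal the inner product in the mapped space */
    assert(fabs(poly2_kernel(sv_pos, x) - mapped_dot(sv_pos, x)) < 1e-9);
    return (poly2_kernel(sv_pos, x) - poly2_kernel(sv_neg, x) - 17.53125) / 9.28125;
}
```

Under these assumptions, `decision` returns +1 at (2, 3/2), -1 at (1, 1), a value above 1 at (3, 3), and about -1.8889 at (0, 0), which maps to the zero vector so only the offset remains.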

SVM experiment, continued again (SMO)

- #include "stdio.h"

- #include <vector>

- using namespace std;

-

- float function(float alfa[5],float H[5][5],float sign[5])

- {

-

- float ret = alfa[0]+alfa[1]+alfa[2]+alfa[3]+alfa[4];

- for(int j=0;j<5;++j)

- {

- float t=0.0;

- for(int i=0;i<5;++i)

- {

- t+=sign[i]*alfa[i]*H[j][i];

- }

- ret += -1*(t*alfa[j]*sign[j])/2;

- }

- return ret;

- }

- int main(void)

- {

- float matrix[5][4]={

- {1,5,1},

- {1,2,1},

- {2,2,-1},

- {2,1,-1},

- {1,1,-1}};

- float H[5][5];

- vector<float> c1;

- vector<float> c2;

- for(int i=0;i<5;++i)

- {

- c1.push_back(matrix[i][0]);

- c2.push_back(matrix[i][1]);

- }

- for(int i=0;i<5;++i)

- {

- for(int j=0;j<5;++j)

- {

- H[i][j]=c1[i]*c1[j]+c2[i]*c2[j];

- printf("%f\t",H[i][j]);

- }

- printf("\n");

- }

- float alfa[5]={3,3,2,2,2};

- float sign[5];

- for(int i=0;i<5;++i)

- sign[i]=matrix[i][2];

- float last_r = function(alfa,H,sign);

- float new_r;

- float con_r;

- for(int i=0;i<5;++i)

- {

- for(int j=0;j<5;j++)

- {

- printf("%f,alfa={%f,%f,%f,%f,%f}\n",last_r,alfa[0],alfa[1],alfa[2],alfa[3],alfa[4]);

- if(i==j) continue;

- else if((alfa[i]<0.01&&alfa[i]>-0.01)&&(alfa[j]<0.01&&alfa[j]>-0.01)) continue;

- else if((alfa[j]>0.01)&&(alfa[i]<0.01&&alfa[i]>-0.01))

- {

- while(alfa[j]>0.01){

- alfa[i]+=0.1;

- new_r = function(alfa,H,sign);

- if( new_r > last_r )

- {

- alfa[j] -= 0.1*sign[i]*sign[j];

- last_r = function(alfa,H,sign);

- }

- else

- {

- alfa[i]-=0.1;

- break;

- }

- };

- }

- else if((alfa[i]>0.01)&&(alfa[j]<0.01&&alfa[j]>-0.01))

- {

- while(alfa[i]>0.01){

- alfa[j]+=0.1;

- new_r = function(alfa,H,sign);

- if( new_r > last_r )

- {

- alfa[i] -= 0.1*sign[i]*sign[j];

- last_r = function(alfa,H,sign);

- }

- else

- {

- alfa[j]-=0.1;

- break;

- }

- };

- }

- else

- {

-

- alfa[j]+=0.1;

- new_r = function(alfa,H,sign);

- alfa[j]-=0.2;

- con_r = function(alfa,H,sign);

- alfa[j]+=0.1;

-

- if(new_r>con_r&&new_r>last_r)

- {

- while(alfa[i]>0.01&&alfa[j]>0.01)

- {

- alfa[j] += 0.1;

- alfa[i] -= 0.1*sign[i]*sign[j];

- new_r = function(alfa,H,sign);

- if(new_r > last_r)

- {

- last_r = new_r;

- }

- else

- {

- alfa[j] -= 0.1;

- alfa[i] += 0.1*sign[i]*sign[j];

- break;

- }

- };

-

- }

- else if(con_r>new_r&&con_r>last_r)

- {

- while(alfa[i]>0.01&&alfa[j]>0.01)

- {

- alfa[j] -= 0.1;

- alfa[i] += 0.1*sign[i]*sign[j];

- con_r = function(alfa,H,sign);

- if(con_r > last_r)

- {

- last_r = con_r;

- }

- else

- {

- alfa[j] += 0.1;

- alfa[i] -= 0.1*sign[i]*sign[j];

- break;

- }

- }

- }

- else

- {}

- }

-

- }

- }

- printf("%f,alfa={%f,%f,%f,%f,%f}\n",last_r,alfa[0],alfa[1],alfa[2],alfa[3],alfa[4]);

- return 0;

- }


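I won't explain the code. It is purely an experiment to verify an idea, not meant for practical use, and leaves huge room for optimization. For details, see the various papers on the subject.

Machine Learning Experiment 6: Understanding kernel functions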

- #include"stdio.h"

- #include <math.h>

- double function(double matrix[5][4],double theta[3],int sample_i)

- {

- double ret=0.0;

- for(int i=0;i<3;++i)

- {

- ret+=matrix[sample_i][i]*theta[i];

- }

- return ret;

- }

- double theta[3]={1,1,1};

- int main(void)

- {

- double matrix[5][4]={

- {1,1,1,1},

- {1,1,4,2},

- {1,1,9,3},

- {1,1,16,4},

- {1,1,25,5},

- };

-

- double alfa = 0.1;

- double c = 0;

- double d = 0.5;

-

- double loss = 0.0;

- for(int j = 0;j<5;++j)

- {

- double sum=function(matrix,theta,j);

- loss += pow((pow(sum+c,d)-matrix[j][3]),2);

- }

- printf("loss : %lf\n",loss);

- for(int i=0;i<200;++i)

- {

- for(int sample_i = 0; sample_i<5;sample_i++)

- {

- double result = function(matrix,theta,sample_i)+c;

- for(int j=0;j<3;++j)

- {

- theta[j] = theta[j] - alfa*(pow(result,d)-matrix[sample_i][3])*d*pow((result),d-1)*matrix[sample_i][j];

- }

- }

- double loss = 0.0;

- for(int j = 0;j<5;++j)

- {

- double sum=function(matrix,theta,j);

- loss += pow((pow(sum+c,d)-matrix[j][3]),2);

- }

- printf("%d,loss now: %lf,%lf,%lf,%lf\n",i,loss,theta[0],theta[1],theta[2]);

-

- }

- return 0;

- }


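The above is an experiment with a polynomial kernel function. I won't explain it for now and will write a full blog post about it later.

This experiment is meant to strengthen the understanding of the vector dot product.

The experiment was influenced by the Multivariable Calculus course.

I found the course on NetEase Open Courses: http://v.163.com/special/opencourse/multivariable.html

MIT page: http://ocw.mit.edu/courses/mathematics/18-02sc-multivariable-calculus-fall-2010/

Machine Learning Experiment 7: Matrix inversion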

- #include"stdio.h"

- #include <math.h>

- double function(double matrix[3][6],double theta[3],int sample_i)

- {

- double ret=0.0;

- for(int i=0;i<3;++i)

- {

- ret+=matrix[sample_i][i]*theta[i];

- }

- return ret;

- }

- double theta[3]={1,1,1};

- int main(void)

- {

- double matrix[3][6]={

- {1,2,3,1,0,0},

- {2,1,1,0,1,0},

- {3,1,2,0,0,1},

- };

-

- double alfa = 0.1;

- double c = 0;

- double d = 1;

- for(int z=0;z<3;++z)

- {

- double loss = 0.0;

- for(int j = 0;j<3;++j)

- {

- double sum=function(matrix,theta,j);

- loss += pow((pow(sum+c,d)-matrix[j][z+3]),2);

- }

- //printf("loss : %lf\n",loss);

- for(int i=0;i<200;++i)

- {

- for(int sample_i = 0; sample_i<3;sample_i++)

- {

- double result = function(matrix,theta,sample_i)+c;

- for(int j=0;j<3;++j)

- {

- theta[j] = theta[j] - alfa*(pow(result,d)-matrix[sample_i][z+3])*d*pow((result),d-1)*matrix[sample_i][j];

- }

- }

- double loss = 0.0;

- for(int j = 0;j<3;++j)

- {

- double sum=function(matrix,theta,j);

- loss += pow((pow(sum+c,d)-matrix[j][z+3]),2);

- }

- //printf("%d,loss now: %lf,%lf,%lf,%lf\n",i,loss,theta[0],theta[1],theta[2]);

-

- }

- printf("%lf,%lf,%lf\n",theta[0],theta[1],theta[2]);

- }

- return 0;

- }

-

The code above is a slight modification of Experiment 6.


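Find the inverse of a matrix, as in the following example:

$$
\begin{pmatrix} 1 & 2 & 3 \\ 2 & 1 & 1 \\ 3 & 1 & 2 \end{pmatrix}
\begin{pmatrix} x_1 & y_1 & z_1 \\ x_2 & y_2 & z_2 \\ x_3 & y_3 & z_3 \end{pmatrix}
=
\begin{pmatrix} 1 & 0 & 0 \\ 0 & 1 & 0 \\ 0 & 0 & 1 \end{pmatrix}
$$

The exact result should be:

-1/4 1/4 1/4

1/4 7/4 -5/4

1/4 -5/4 3/4

The result of the iteration is

-0.249835,0.250747,0.249447

0.247973,1.740834,-1.243221

0.253544,-1.233976,0.738149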
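For comparison with the iterated values, the inverse can also be computed directly. The sketch below uses Gauss-Jordan elimination with partial pivoting on the augmented matrix [A | I], the same layout the experiment stores in `matrix`. This is a different technique from the gradient iteration in the experiment, and `invert3` is a hypothetical helper name.

```c
#include <assert.h>
#include <math.h>

#define N 3

/* Gauss-Jordan elimination with partial pivoting on [A | I].
 * Writes A^{-1} to inv; A must be invertible. */
void invert3(double a[N][N], double inv[N][N])
{
    double aug[N][2 * N];
    for (int i = 0; i < N; ++i)
        for (int j = 0; j < N; ++j) {
            aug[i][j] = a[i][j];
            aug[i][j + N] = (i == j) ? 1.0 : 0.0;
        }
    for (int col = 0; col < N; ++col) {
        int piv = col;                       /* row with the largest entry in this column */
        for (int r = col + 1; r < N; ++r)
            if (fabs(aug[r][col]) > fabs(aug[piv][col])) piv = r;
        assert(fabs(aug[piv][col]) > 1e-12); /* otherwise A is singular */
        for (int j = 0; j < 2 * N; ++j) {    /* swap the pivot row into place */
            double tmp = aug[col][j];
            aug[col][j] = aug[piv][j];
            aug[piv][j] = tmp;
        }
        double p = aug[col][col];
        for (int j = 0; j < 2 * N; ++j) aug[col][j] /= p;
        for (int r = 0; r < N; ++r) {        /* clear the column in every other row */
            if (r == col) continue;
            double f = aug[r][col];
            for (int j = 0; j < 2 * N; ++j) aug[r][j] -= f * aug[col][j];
        }
    }
    for (int i = 0; i < N; ++i)
        for (int j = 0; j < N; ++j)
            inv[i][j] = aug[i][j + N];
}
```

Because the example matrix is symmetric, its inverse is symmetric too, so the per-column output of the iteration can be compared with the rows of the direct result without transposing.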

