Updated: 2017-07-11, 2017-07-12

0. Neural network architecture

Any neural network is defined by four elements: the number of layers (num_layers), the layer sizes (sizes), the biases (biases), and the weights (weights).

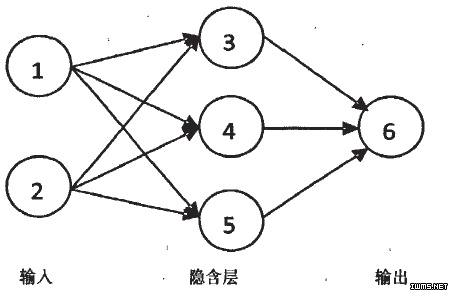

Note: in the figure above, sizes is [2, 3, 1].

1. Initializing the Network object

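The relationship between one layer and the next is given by Equation (1), where a is the activation of the previous layer, a' the activation of the next layer, w and b the weights and biases between them, and σ the sigmoid function:

$$a' = \sigma(w a + b) \tag{1}$$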

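Hidden layer: the first neuron takes a 1×2 weight matrix (the word "matrix" is omitted from here on) and a 1×1 bias, and produces a 1×1 output. Taking the three hidden neurons together, the weights are 3×2 (3 is the number of hidden-layer neurons, 2 the number of input-layer neurons), the biases are 3×1 (3 is the number of hidden-layer neurons, 1 is fixed), and the output is 3×1.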

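Output layer: there is only one neuron. The weights are 1×3 (1 is the number of output-layer neurons, 3 the number of hidden-layer neurons), the bias is 1×1 (the first 1 is the number of output-layer neurons, the second 1 is fixed), and the output is 1×1.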

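Biases (b for short): 3×1 and 1×1, so self.biases = [(3×1), (1×1)], where (3×1) denotes an array of shape 3×1.

Weights (w for short): 3×2 and 1×3, so self.weights = [(3×2), (1×3)].

The a in the relation: 2×1 (input-layer activation) and 3×1 (hidden-layer activation), so a = [(2×1), (3×1)].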
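The shape bookkeeping above can be checked numerically. The following is a minimal sketch, assuming NumPy and randomly initialised parameters; the names `w_hidden`, `b_hidden`, `w_out`, `b_out`, `a_in`, `a_hidden`, and `a_out` are illustrative and do not come from the original code.

```python
import numpy as np

def sigmoid(z):
    # Logistic function, applied elementwise
    return 1.0 / (1.0 + np.exp(-z))

rng = np.random.default_rng(0)

# Parameters for a [2, 3, 1] network, with the shapes derived above
w_hidden = rng.standard_normal((3, 2))  # hidden neurons x input neurons
b_hidden = rng.standard_normal((3, 1))
w_out = rng.standard_normal((1, 3))     # output neurons x hidden neurons
b_out = rng.standard_normal((1, 1))

a_in = np.array([[0.5], [-1.0]])                       # 2x1 input column
a_hidden = sigmoid(np.dot(w_hidden, a_in) + b_hidden)  # (3x2)(2x1) + 3x1
a_out = sigmoid(np.dot(w_out, a_hidden) + b_out)       # (1x3)(3x1) + 1x1

print(a_in.shape, a_hidden.shape, a_out.shape)  # (2, 1) (3, 1) (1, 1)
```

Each matrix product consumes the column count of the weights and yields the row count, which is why the weight matrix is sized (this layer) × (previous layer).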

The code for the Network object is as follows [2][3]:

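import numpy as np

class Network:

    def __init__(self, sizes):
        # sizes lists the number of neurons in each layer, e.g. [2, 3, 1]
        self.num_layers = len(sizes)
        self.sizes = sizes
        # One (y, 1) bias column for every layer except the input layer
        self.biases = [np.random.randn(y, 1) for y in sizes[1:]]
        # One (x, y) weight matrix per pair of adjacent layers:
        # x = neurons in this layer, y = neurons in the previous layer
        self.weights = [np.random.randn(x, y)
                        for x, y in zip(sizes[1:], sizes[:-1])]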
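The `zip` call in the constructor is what pairs each layer with the one before it. A small sketch of that pairing on its own, using only built-in Python; the variable names `pairs` and `bias_shapes` are illustrative:

```python
sizes = [2, 3, 1]

# sizes[1:]  -> [3, 1]  (every layer except the input layer)
# sizes[:-1] -> [2, 3]  (every layer except the output layer)
# Each pair is (neurons in this layer, neurons in the previous layer),
# which is exactly the shape of the corresponding weight matrix.
pairs = list(zip(sizes[1:], sizes[:-1]))
print(pairs)  # [(3, 2), (1, 3)]

# Bias shapes follow from sizes[1:] alone.
bias_shapes = [(y, 1) for y in sizes[1:]]
print(bias_shapes)  # [(3, 1), (1, 1)]
```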

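2. Network layers

Here we set up a network with the following structure: 2 neurons in the first layer, 3 in the second layer, and 1 in the last layer, as shown in Figure 1.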

net = Network([2, 3, 1])

3. The sigmoid function

def sigmoid(z):
    # Logistic function; applied elementwise when z is a NumPy array
    return 1.0 / (1.0 + np.exp(-z))

4. The feedforward function

Implementation of Equation (1) [4]:

def feedforward(self, a):
    # Method of Network: apply Equation (1) once per layer
    for w, b in zip(self.weights, self.biases):
        a = sigmoid(np.dot(w, a) + b)
    return a

5. Cost function
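No formula is given for the cost here. As an assumption, the sketch below uses the quadratic (mean squared error) cost that is commonly paired with this kind of network code, C = (1/2n) Σ ‖y − a‖²; `quadratic_cost` is a hypothetical helper name, not part of the original code.

```python
import numpy as np

def quadratic_cost(outputs, targets):
    """Average of 0.5 * ||y - a||^2 over all examples (assumed cost)."""
    n = len(outputs)
    total = sum(0.5 * np.linalg.norm(y - a) ** 2
                for a, y in zip(outputs, targets))
    return total / n

# Two toy examples: network outputs a and desired outputs y
outputs = [np.array([[0.8]]), np.array([[0.1]])]
targets = [np.array([[1.0]]), np.array([[0.0]])]
cost = quadratic_cost(outputs, targets)
print(cost)
```

With these toy values the two per-example terms are 0.5 × 0.2² and 0.5 × 0.1², so the average is about 0.0125.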

6. Backpropagation

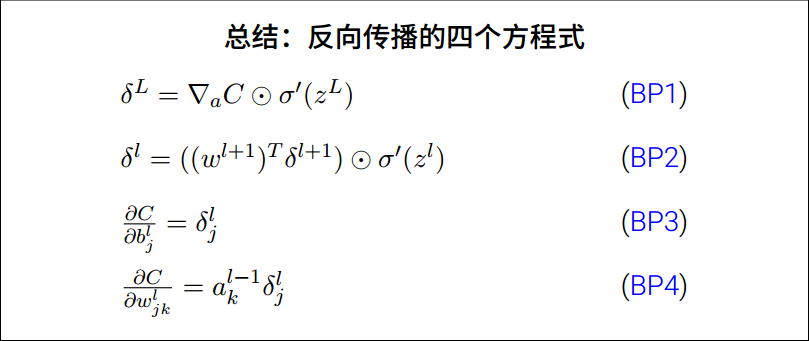

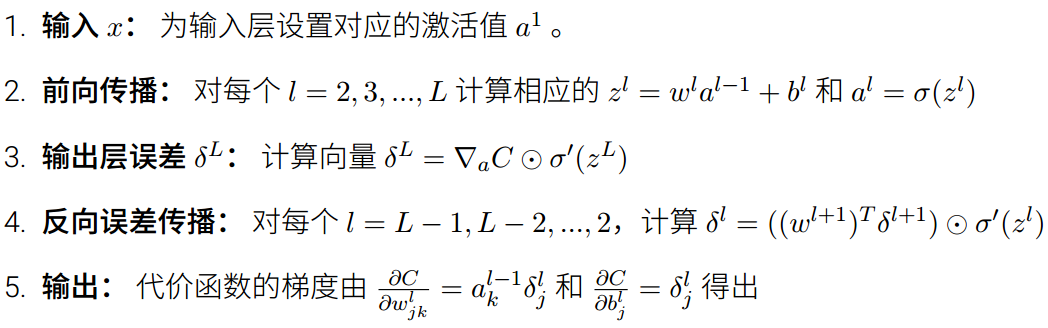

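Backpropagation is really about understanding how changes in the weights and biases affect the cost function. Ultimately, it amounts to computing the partial derivatives of the cost function with respect to the weights and the biases. Because computing these partial derivatives directly is impractical, the error at each neuron is computed first, and the partial derivatives are then obtained from it. The four backpropagation equations are as follows: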
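The equations themselves are not reproduced in the text. Assuming the usual notation, where $\delta^l$ is the error in layer $l$, $z^l$ the weighted input, $a^l$ the activation, $L$ the output layer, and $\odot$ the elementwise product, they are commonly written as:

$$
\begin{aligned}
\delta^L &= \nabla_a C \odot \sigma'(z^L) \\
\delta^l &= \left((w^{l+1})^T \delta^{l+1}\right) \odot \sigma'(z^l) \\
\frac{\partial C}{\partial b^l_j} &= \delta^l_j \\
\frac{\partial C}{\partial w^l_{jk}} &= a^{l-1}_k \, \delta^l_j
\end{aligned}
$$

The first equation gives the error at the output layer, the second propagates it back one layer, and the last two convert the error into the gradients with respect to the biases and the weights.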

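The backpropagation equations give a method for computing the gradient of the cost function. The algorithm is described as follows: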
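One way the algorithm can be sketched in code is shown below. This is a minimal illustration under stated assumptions, not the original implementation: it assumes the quadratic cost 0.5 × ‖a − y‖² for a single example, and the standalone function `backprop(weights, biases, x, y)` is a hypothetical signature chosen so the block runs on its own.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def sigmoid_prime(z):
    return sigmoid(z) * (1 - sigmoid(z))

def backprop(weights, biases, x, y):
    """Return (nabla_b, nabla_w): gradients of the assumed quadratic
    cost 0.5 * ||a - y||^2 for one training example (x, y)."""
    # Step 1: feedforward, storing every weighted input z and activation a
    activation = x
    activations = [x]
    zs = []
    for w, b in zip(weights, biases):
        z = np.dot(w, activation) + b
        zs.append(z)
        activation = sigmoid(z)
        activations.append(activation)

    nabla_b = [None] * len(biases)
    nabla_w = [None] * len(weights)

    # Step 2: error at the output layer
    delta = (activations[-1] - y) * sigmoid_prime(zs[-1])
    nabla_b[-1] = delta
    nabla_w[-1] = np.dot(delta, activations[-2].T)

    # Step 3: propagate the error backwards, one layer at a time
    for l in range(2, len(weights) + 1):
        delta = np.dot(weights[-l + 1].T, delta) * sigmoid_prime(zs[-l])
        nabla_b[-l] = delta
        nabla_w[-l] = np.dot(delta, activations[-l - 1].T)

    return nabla_b, nabla_w

# Usage on a [2, 3, 1] network with random parameters
rng = np.random.default_rng(0)
sizes = [2, 3, 1]
biases = [rng.standard_normal((n, 1)) for n in sizes[1:]]
weights = [rng.standard_normal((n, m))
           for n, m in zip(sizes[1:], sizes[:-1])]
x = np.array([[0.5], [-1.0]])
y = np.array([[1.0]])

nabla_b, nabla_w = backprop(weights, biases, x, y)
print([g.shape for g in nabla_w])  # [(3, 2), (1, 3)]
print([g.shape for g in nabla_b])  # [(3, 1), (1, 1)]
```

Each gradient has the same shape as the parameter it belongs to, so it can be subtracted from that parameter directly in a gradient-descent step.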

Derivative of the sigmoid function: sigmoid_prime

def sigmoid_prime(z):
    # Derivative of the sigmoid: sigma'(z) = sigma(z) * (1 - sigma(z))
    return sigmoid(z) * (1 - sigmoid(z))

7. Loading the MNIST data

"""

mnist_loader
~~~~~~~~~~~~

A library to load the MNIST image data.  For details of the data
structures that are returned, see the doc strings for ``load_data``
and ``load_data_wrapper``.  In practice, ``load_data_wrapper`` is the
function usually called by our neural network code.

"""

#### Libraries
# Standard library
import gzip
import pickle  # Python 3 replacement for the Python 2 cPickle module

# Third-party libraries
import numpy as np

def load_data():
    """Return the MNIST data as a tuple containing the training data,
    the validation data, and the test data.

    The ``training_data`` is returned as a tuple with two entries.
    The first entry contains the actual training images.  This is a
    numpy ndarray with 50,000 entries.  Each entry is, in turn, a
    numpy ndarray with 784 values, representing the 28 * 28 = 784
    pixels in a single MNIST image.

    The second entry in the ``training_data`` tuple is a numpy ndarray
    containing 50,000 entries.  Those entries are just the digit
    values (0...9) for the corresponding images contained in the first
    entry of the tuple.

    The ``validation_data`` and ``test_data`` are similar, except
    each contains only 10,000 images.

    This is a nice data format, but for use in neural networks it's
    helpful to modify the format of the ``training_data`` a little.
    That's done in the wrapper function ``load_data_wrapper()``, see
    below.
    """
    # encoding='latin1' is needed on Python 3 when the pickle file was
    # written by Python 2
    with gzip.open('../data/mnist.pkl.gz', 'rb') as f:
        training_data, validation_data, test_data = pickle.load(
            f, encoding='latin1')
    return (training_data, validation_data, test_data)

def load_data_wrapper():
    """Return a tuple containing ``(training_data, validation_data,
    test_data)``. Based on ``load_data``, but the format is more
    convenient for use in our implementation of neural networks.

    In particular, ``training_data`` is a list containing 50,000
    2-tuples ``(x, y)``.  ``x`` is a 784-dimensional numpy.ndarray
    containing the input image.  ``y`` is a 10-dimensional
    numpy.ndarray representing the unit vector corresponding to the
    correct digit for ``x``.

    ``validation_data`` and ``test_data`` are lists containing 10,000
    2-tuples ``(x, y)``.  In each case, ``x`` is a 784-dimensional
    numpy.ndarray containing the input image, and ``y`` is the
    corresponding classification, i.e., the digit values (integers)
    corresponding to ``x``.

    Obviously, this means we're using slightly different formats for
    the training data and the validation / test data.  These formats
    turn out to be the most convenient for use in our neural network
    code."""
    tr_d, va_d, te_d = load_data()
    # Reshape each flat 784-value image into a (784, 1) column vector
    training_inputs = [np.reshape(x, (784, 1)) for x in tr_d[0]]
    training_results = [vectorized_result(y) for y in tr_d[1]]
    # list(...) keeps the documented list type on Python 3, where zip is lazy
    training_data = list(zip(training_inputs, training_results))
    validation_inputs = [np.reshape(x, (784, 1)) for x in va_d[0]]
    validation_data = list(zip(validation_inputs, va_d[1]))
    test_inputs = [np.reshape(x, (784, 1)) for x in te_d[0]]
    test_data = list(zip(test_inputs, te_d[1]))
    return (training_data, validation_data, test_data)

def vectorized_result(j):
    """Return a 10-dimensional unit vector with a 1.0 in the jth
    position and zeroes elsewhere.  This is used to convert a digit
    (0...9) into a corresponding desired output from the neural
    network."""
    e = np.zeros((10, 1))
    e[j] = 1.0
    return e

References

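[1] Neural Networks and Deep Learning (神经网络与深度学习.pdf)

[2] Python beginner's tutorial

[3] Using the zip() function for parallel iteration in Python

[4] A collection of commonly used functions in Python and its libraries (NumPy)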
