1. neuron_layer.hpp, neuron_layer.cpp

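The class NeuronLayer publicly inherits from Layer. It takes exactly one input (bottom) blob and produces exactly one output (top) blob.

The .cpp file implements only one function, Reshape, which is declared as a virtual function of the Layer class in layer.hpp. Reshape gives the output blob the same shape as the input blob.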

hpp:

```cpp
#ifndef CAFFE_NEURON_LAYER_HPP_
#define CAFFE_NEURON_LAYER_HPP_

#include <vector>

#include "caffe/blob.hpp"
#include "caffe/layer.hpp"
#include "caffe/proto/caffe.pb.h"

namespace caffe {

/**
 * @brief An interface for layers that take one blob as input (@f$ x @f$)
 *        and produce one equally-sized blob as output (@f$ y @f$), where
 *        each element of the output depends only on the corresponding input
 *        element.
 */
template <typename Dtype>
class NeuronLayer : public Layer<Dtype> {
 public:
  explicit NeuronLayer(const LayerParameter& param)
     : Layer<Dtype>(param) {}
  virtual void Reshape(const vector<Blob<Dtype>*>& bottom,
      const vector<Blob<Dtype>*>& top);

  virtual inline int ExactNumBottomBlobs() const { return 1; }
  virtual inline int ExactNumTopBlobs() const { return 1; }
};

}  // namespace caffe

#endif  // CAFFE_NEURON_LAYER_HPP_
```

cpp:

```cpp
#include <vector>

#include "caffe/layers/neuron_layer.hpp"

namespace caffe {

template <typename Dtype>
void NeuronLayer<Dtype>::Reshape(const vector<Blob<Dtype>*>& bottom,
      const vector<Blob<Dtype>*>& top) {
  top[0]->ReshapeLike(*bottom[0]);
}

INSTANTIATE_CLASS(NeuronLayer);

}  // namespace caffe
```
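The NeuronLayer contract can be illustrated outside Caffe with a toy example. The contract is: exactly one bottom, exactly one top, and the top is shaped like the bottom. The sketch below is hypothetical. `MiniBlob` and `NeuronReshape` are made-up names, and `MiniBlob` only imitates the idea behind `Blob::ReshapeLike` (copy the shape and resize the storage). It is not Caffe's real `Blob` class.

```cpp
#include <cassert>
#include <vector>

// Hypothetical, heavily simplified stand-in for caffe::Blob:
// a shape plus a flat data buffer. NOT Caffe's real Blob class.
struct MiniBlob {
  std::vector<int> shape;
  std::vector<float> data;

  // Number of elements implied by the shape.
  int count() const {
    int n = 1;
    for (int d : shape) n *= d;
    return n;
  }

  // Imitates the idea of Blob::ReshapeLike: adopt the other blob's shape
  // and resize the buffer to the matching element count.
  void ReshapeLike(const MiniBlob& other) {
    shape = other.shape;
    data.resize(count());
  }
};

// Sketch of NeuronLayer::Reshape: one bottom, one top, top shaped like bottom.
void NeuronReshape(const std::vector<MiniBlob*>& bottom,
                   const std::vector<MiniBlob*>& top) {
  // Mirrors ExactNumBottomBlobs() == 1 and ExactNumTopBlobs() == 1.
  assert(bottom.size() == 1 && top.size() == 1);
  top[0]->ReshapeLike(*bottom[0]);
}
```

Every NeuronLayer subclass inherits this Reshape. As the relu_layer.hpp header shows, a subclass such as ReLULayer therefore only has to implement the element-wise forward and backward passes.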

2. relu_layer.hpp, relu_layer.cpp

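ReLULayer inherits from NeuronLayer, so it also takes one input blob and produces one output blob.

The .cpp file mainly implements the forward-propagation and backward-propagation functions.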

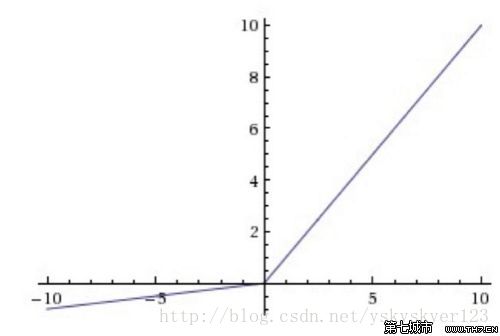

Leaky ReLU plot:

The definition of ReLUParameter in Caffe's caffe.proto:

```protobuf
message ReLUParameter {
  // Allow non-zero slope for negative inputs to speed up optimization
  // Described in:
  // Maas, A. L., Hannun, A. Y., & Ng, A. Y. (2013). Rectifier nonlinearities
  // improve neural network acoustic models. In ICML Workshop on Deep Learning
  // for Audio, Speech, and Language Processing.
  optional float negative_slope = 1 [default = 0];
  enum Engine {
    DEFAULT = 0;
    CAFFE = 1;
    CUDNN = 2;
  }
  optional Engine engine = 2 [default = DEFAULT];
}
```

So ReLULayer actually implements Leaky ReLU. Negative inputs are multiplied by negative_slope instead of being set to zero. With the default negative_slope = 0, it reduces to the standard ReLU.

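Let x be the input and y the output. Let $\frac{\partial E}{\partial x}$ denote the derivative of the loss function E with respect to the input x.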

Forward propagation:

$$ y = f(x) = \max(0, x) + \text{negative\_slope} \cdot \min(0, x) $$
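As a minimal sketch, the forward formula can be applied to a plain `std::vector<float>` instead of a Caffe blob. The function name `relu_forward` and the choice of `float` are assumptions made for illustration. The loop body is the same expression used in `ReLULayer::Forward_cpu`.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// Element-wise Leaky ReLU forward pass (hypothetical standalone sketch):
//   top[i] = max(0, bottom[i]) + negative_slope * min(0, bottom[i])
void relu_forward(const std::vector<float>& bottom, std::vector<float>& top,
                  float negative_slope) {
  // In Caffe, NeuronLayer::Reshape guarantees equal sizes; here we only check.
  assert(top.size() == bottom.size());
  for (std::size_t i = 0; i < bottom.size(); ++i) {
    top[i] = std::max(bottom[i], 0.0f) + negative_slope * std::min(bottom[i], 0.0f);
  }
}
```

With negative_slope = 0, the inputs {-4, -1, 0, 2} map to {0, 0, 0, 2}, which is the standard ReLU. With negative_slope = 0.5, they map to {-2, -0.5, 0, 2}.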

Backward propagation:

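$$
\frac{\partial E}{\partial x} =
\begin{cases}
\frac{\partial E}{\partial y}, & \text{if } x > 0 \\
\text{negative\_slope} \cdot \frac{\partial E}{\partial y}, & \text{if } x \le 0
\end{cases}
$$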
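The backward rule can be sketched the same way. Here `relu_backward` is a hypothetical standalone function on `std::vector<float>`. It computes the gradient factor with the same trick as `Backward_cpu`: the comparisons `(x > 0)` and `(x <= 0)` convert to 1 or 0, so exactly one of the two branches contributes. The case x = 0 falls into the negative_slope branch.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Element-wise Leaky ReLU backward pass (hypothetical standalone sketch):
//   dE/dx = dE/dy                   if x > 0
//   dE/dx = negative_slope * dE/dy  if x <= 0
// The branch is chosen from the forward input x (bottom data).
void relu_backward(const std::vector<float>& bottom_data,
                   const std::vector<float>& top_diff,
                   std::vector<float>& bottom_diff, float negative_slope) {
  assert(top_diff.size() == bottom_data.size());
  assert(bottom_diff.size() == bottom_data.size());
  for (std::size_t i = 0; i < bottom_data.size(); ++i) {
    // Each bool comparison converts to 0 or 1, so exactly one term survives.
    bottom_diff[i] = top_diff[i] * ((bottom_data[i] > 0.0f)
        + negative_slope * (bottom_data[i] <= 0.0f));
  }
}
```

Take x = {-3, 0, 5}, an upstream gradient of 2 everywhere, and negative_slope = 0.25. The result is {0.5, 0.5, 2}.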

hpp:

```cpp
#ifndef CAFFE_RELU_LAYER_HPP_
#define CAFFE_RELU_LAYER_HPP_

#include <vector>

#include "caffe/blob.hpp"
#include "caffe/layer.hpp"
#include "caffe/proto/caffe.pb.h"

#include "caffe/layers/neuron_layer.hpp"

namespace caffe {

/**
 * @brief Rectified Linear Unit non-linearity @f$ y = \max(0, x) @f$.
 *        The simple max is fast to compute, and the function does not saturate.
 */
template <typename Dtype>
class ReLULayer : public NeuronLayer<Dtype> {
 public:
  /**
   * @param param provides ReLUParameter relu_param,
   *     with ReLULayer options:
   *   - negative_slope (\b optional, default 0).
   *     the value @f$ \nu @f$ by which negative values are multiplied.
   */
  explicit ReLULayer(const LayerParameter& param)
      : NeuronLayer<Dtype>(param) {}

  virtual inline const char* type() const { return "ReLU"; }

 protected:
  /**
   * @param bottom input Blob vector (length 1)
   *   -# @f$ (N \times C \times H \times W) @f$
   *      the inputs @f$ x @f$
   * @param top output Blob vector (length 1)
   *   -# @f$ (N \times C \times H \times W) @f$
   *      the computed outputs @f$
   *        y = \max(0, x)
   *      @f$ by default.  If a non-zero negative_slope @f$ \nu @f$ is provided,
   *      the computed outputs are @f$ y = \max(0, x) + \nu \min(0, x) @f$.
   */
  virtual void Forward_cpu(const vector<Blob<Dtype>*>& bottom,
      const vector<Blob<Dtype>*>& top);
  virtual void Forward_gpu(const vector<Blob<Dtype>*>& bottom,
      const vector<Blob<Dtype>*>& top);

  /**
   * @brief Computes the error gradient w.r.t. the ReLU inputs.
   *
   * @param top output Blob vector (length 1), providing the error gradient with
   *      respect to the outputs
   *   -# @f$ (N \times C \times H \times W) @f$
   *      containing error gradients @f$ \frac{\partial E}{\partial y} @f$
   *      with respect to computed outputs @f$ y @f$
   * @param propagate_down see Layer::Backward.
   * @param bottom input Blob vector (length 1)
   *   -# @f$ (N \times C \times H \times W) @f$
   *      the inputs @f$ x @f$; Backward fills their diff with
   *      gradients @f$
   *        \frac{\partial E}{\partial x} = \left\{
   *        \begin{array}{lr}
   *            0 & \mathrm{if} \; x \le 0 \\
   *            \frac{\partial E}{\partial y} & \mathrm{if} \; x > 0
   *        \end{array} \right.
   *      @f$ if propagate_down[0], by default.
   *      If a non-zero negative_slope @f$ \nu @f$ is provided,
   *      the computed gradients are @f$
   *        \frac{\partial E}{\partial x} = \left\{
   *        \begin{array}{lr}
   *            \nu \frac{\partial E}{\partial y} & \mathrm{if} \; x \le 0 \\
   *            \frac{\partial E}{\partial y} & \mathrm{if} \; x > 0
   *        \end{array} \right.
   *      @f$.
   */
  virtual void Backward_cpu(const vector<Blob<Dtype>*>& top,
      const vector<bool>& propagate_down, const vector<Blob<Dtype>*>& bottom);
  virtual void Backward_gpu(const vector<Blob<Dtype>*>& top,
      const vector<bool>& propagate_down, const vector<Blob<Dtype>*>& bottom);
};

}  // namespace caffe

#endif  // CAFFE_RELU_LAYER_HPP_
```

cpp:

```cpp
#include <algorithm>
#include <vector>

#include "caffe/layers/relu_layer.hpp"

namespace caffe {

template <typename Dtype>
void ReLULayer<Dtype>::Forward_cpu(const vector<Blob<Dtype>*>& bottom,
    const vector<Blob<Dtype>*>& top) {
  const Dtype* bottom_data = bottom[0]->cpu_data();
  Dtype* top_data = top[0]->mutable_cpu_data();
  const int count = bottom[0]->count();
  Dtype negative_slope = this->layer_param_.relu_param().negative_slope();
  for (int i = 0; i < count; ++i) {
    top_data[i] = std::max(bottom_data[i], Dtype(0))
        + negative_slope * std::min(bottom_data[i], Dtype(0));
  }
}

template <typename Dtype>
void ReLULayer<Dtype>::Backward_cpu(const vector<Blob<Dtype>*>& top,
    const vector<bool>& propagate_down,
    const vector<Blob<Dtype>*>& bottom) {
  if (propagate_down[0]) {
    const Dtype* bottom_data = bottom[0]->cpu_data();
    const Dtype* top_diff = top[0]->cpu_diff();
    Dtype* bottom_diff = bottom[0]->mutable_cpu_diff();
    const int count = bottom[0]->count();
    Dtype negative_slope = this->layer_param_.relu_param().negative_slope();
    for (int i = 0; i < count; ++i) {
      bottom_diff[i] = top_diff[i] * ((bottom_data[i] > 0)
          + negative_slope * (bottom_data[i] <= 0));
    }
  }
}

#ifdef CPU_ONLY
STUB_GPU(ReLULayer);
#endif

INSTANTIATE_CLASS(ReLULayer);

}  // namespace caffe
```
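A finite-difference check is one way to confirm that Backward_cpu really computes the derivative of the Forward_cpu formula. The sketch below works on scalars and uses hypothetical helper names (`leaky_relu`, `leaky_relu_grad`, `numeric_grad`, `grad_check`). It is independent of Caffe's own test utilities. The step size h = 1e-4 and the tolerance 1e-6 are arbitrary choices.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>

// Scalar Leaky ReLU, same formula as Forward_cpu (standalone sketch, not Caffe code).
double leaky_relu(double x, double slope) {
  return std::max(x, 0.0) + slope * std::min(x, 0.0);
}

// Analytic dy/dx with the same case split as Backward_cpu:
// 1 if x > 0, slope if x <= 0.
double leaky_relu_grad(double x, double slope) {
  return (x > 0.0) ? 1.0 : slope;
}

// Central finite difference: (f(x + h) - f(x - h)) / (2h).
double numeric_grad(double x, double slope, double h = 1e-4) {
  assert(h > 0.0);  // step size must be positive
  return (leaky_relu(x + h, slope) - leaky_relu(x - h, slope)) / (2.0 * h);
}

// True if the analytic and numeric derivatives agree within tol.
bool grad_check(double x, double slope, double tol = 1e-6) {
  return std::fabs(leaky_relu_grad(x, slope) - numeric_grad(x, slope)) < tol;
}
```

`grad_check(1.5, 0.1)` and `grad_check(-2.0, 0.1)` succeed, while `grad_check(0.0, 0.1)` fails. At the kink x = 0, the central difference gives (1 + 0.1) / 2 = 0.55, whereas the analytic rule picks negative_slope. This is expected: f is not differentiable at 0, and the code simply uses the x <= 0 branch there.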
