docker network create --driver bridge --subnet 172.25.0.0/16 --gateway 172.25.0.1 bigdata
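The compose files later in this guide pin a static `ipv4_address` on every container, so it can help to sanity-check those addresses against the subnet before starting anything. The sketch below uses only Python's standard `ipaddress` module; `check_static_ips` is a hypothetical helper name introduced here for illustration, not part of Docker.

```python
import ipaddress

# Values copied from the `docker network create` command above.
SUBNET = ipaddress.ip_network("172.25.0.0/16")
GATEWAY = ipaddress.ip_address("172.25.0.1")


def check_static_ips(assigned):
    """Return a list of problems found in a {container_name: ip} mapping."""
    problems = []
    seen = {}
    for name, raw in assigned.items():
        ip = ipaddress.ip_address(raw)
        if ip not in SUBNET:
            problems.append(f"{name}: {ip} is outside {SUBNET}")
        elif ip in (SUBNET.network_address, SUBNET.broadcast_address, GATEWAY):
            problems.append(f"{name}: {ip} is reserved")
        if ip in seen:
            problems.append(f"{name}: {ip} is already used by {seen[ip]}")
        seen.setdefault(ip, name)
    return problems
```

Passing it the addresses you plan to use, for example `check_static_ips({"zoo1": "172.25.0.11", "broker1": "172.25.0.14"})`, returns an empty list when nothing collides.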

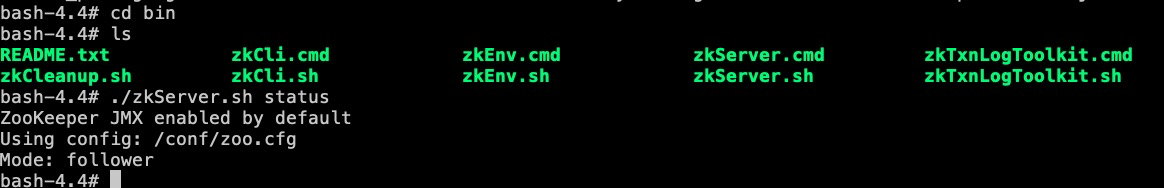

ZooKeeper setup

Pull the ZooKeeper image

# Pick whichever image tag suits your environment

docker pull zookeeper:3.4.13

Create three ZooKeeper containers with docker-compose

version: '2'
services:
  zoo1:
    image: zookeeper:3.4.13 # image name
    restart: always # restart automatically on failure
    hostname: zoo1
    container_name: zoo1
    privileged: true
    ports: # port mapping
      - 2181:2181
    volumes: # mounted data volumes
      - ./zoo1/data:/data
      - ./zoo1/datalog:/datalog
    environment:
      TZ: Asia/Shanghai
      ZOO_MY_ID: 1 # node ID
      ZOO_PORT: 2181 # ZooKeeper client port
      ZOO_SERVERS: server.1=zoo1:2888:3888 server.2=zoo2:2888:3888 server.3=zoo3:2888:3888 # list of ZooKeeper nodes
    networks:
      default:
        ipv4_address: 172.25.0.11
  zoo2:
    image: zookeeper:3.4.13
    restart: always
    hostname: zoo2
    container_name: zoo2
    privileged: true
    ports:
      - 2182:2181
    volumes:
      - ./zoo2/data:/data
      - ./zoo2/datalog:/datalog
    environment:
      TZ: Asia/Shanghai
      ZOO_MY_ID: 2
      ZOO_PORT: 2181
      ZOO_SERVERS: server.1=zoo1:2888:3888 server.2=zoo2:2888:3888 server.3=zoo3:2888:3888
    networks:
      default:
        ipv4_address: 172.25.0.12
  zoo3:
    image: zookeeper:3.4.13
    restart: always
    hostname: zoo3
    container_name: zoo3
    privileged: true
    ports:
      - 2183:2181
    volumes:
      - ./zoo3/data:/data
      - ./zoo3/datalog:/datalog
    environment:
      TZ: Asia/Shanghai
      ZOO_MY_ID: 3
      ZOO_PORT: 2181
      ZOO_SERVERS: server.1=zoo1:2888:3888 server.2=zoo2:2888:3888 server.3=zoo3:2888:3888
    networks:
      default:
        ipv4_address: 172.25.0.13
networks:
  default:
    external: # use the network created earlier
      name: bigdata

# Start the containers

docker-compose up -d

➜ zookeeper docker-compose up -d

Recreating 44dad6cddccd_zoo1 ... done

Recreating 9b0f2cfe666f_zoo3 ... done

Creating zoo2 ... done

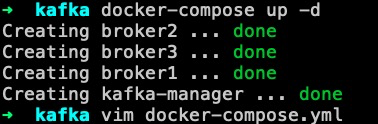
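To confirm that the three nodes actually formed an ensemble, one option is to ask each of them for its role with ZooKeeper's `srvr` four-letter command. The following is a minimal sketch, assuming the host ports 2181 to 2183 published by the compose file and assuming that `srvr` is allowed by the server's four-letter-word settings; the function names are hypothetical helpers, not part of any ZooKeeper client library.

```python
import socket


def four_letter_word(host, port, command="srvr", timeout=3.0):
    """Send a ZooKeeper four-letter command and return the text reply."""
    with socket.create_connection((host, port), timeout=timeout) as sock:
        sock.sendall(command.encode("ascii"))
        chunks = []
        while True:
            data = sock.recv(4096)
            if not data:  # the server closes the connection after replying
                break
            chunks.append(data)
    return b"".join(chunks).decode("utf-8", errors="replace")


def parse_mode(reply):
    """Extract the value of the 'Mode:' line, or None if it is absent."""
    for line in reply.splitlines():
        if line.startswith("Mode:"):
            return line.split(":", 1)[1].strip()
    return None


def ensemble_modes(ports=(2181, 2182, 2183), host="127.0.0.1"):
    """Map each published host port to the mode its node reports."""
    modes = {}
    for port in ports:
        try:
            modes[port] = parse_mode(four_letter_word(host, port))
        except OSError:
            modes[port] = "unreachable"
    return modes
```

Calling `ensemble_modes()` on the Docker host should, for a healthy three-node ensemble, show one `leader` and two `follower` entries.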

Kafka cluster setup

# Pull the Kafka and kafka-manager images

docker pull wurstmeister/kafka:2.12-2.3.1

docker pull sheepkiller/kafka-manager

Edit the docker-compose.yml file

version: '2'
services:
  broker1:
    image: wurstmeister/kafka:2.12-2.3.1
    restart: always # restart automatically on failure
    hostname: broker1 # node hostname
    container_name: broker1 # container name
    privileged: true # grant the container extended privileges
    ports:
      - "9091:9092" # map container port 9092 to host port 9091
    environment:
      KAFKA_BROKER_ID: 1
      KAFKA_LISTENERS: PLAINTEXT://broker1:9092
      KAFKA_ADVERTISED_LISTENERS: PLAINTEXT://broker1:9092
      KAFKA_ADVERTISED_HOST_NAME: broker1
      KAFKA_ADVERTISED_PORT: 9092
      KAFKA_ZOOKEEPER_CONNECT: zoo1:2181,zoo2:2181,zoo3:2181/kafka1 # the chroot is appended once, after the last host
      JMX_PORT: 9988 # JMX port that kafka-manager uses to talk to the broker
    volumes:
      - /var/run/docker.sock:/var/run/docker.sock
      - ./broker1:/kafka/kafka-logs-broker1
    external_links: # link to containers defined outside this compose file
      - zoo1
      - zoo2
      - zoo3
    networks:
      default:
        ipv4_address: 172.25.0.14
  broker2:
    image: wurstmeister/kafka:2.12-2.3.1
    restart: always
    hostname: broker2
    container_name: broker2
    privileged: true
    ports:
      - "9092:9092"
    environment:
      KAFKA_BROKER_ID: 2
      KAFKA_LISTENERS: PLAINTEXT://broker2:9092
      KAFKA_ADVERTISED_LISTENERS: PLAINTEXT://broker2:9092
      KAFKA_ADVERTISED_HOST_NAME: broker2
      KAFKA_ADVERTISED_PORT: 9092
      KAFKA_ZOOKEEPER_CONNECT: zoo1:2181,zoo2:2181,zoo3:2181/kafka1
      JMX_PORT: 9988
    volumes:
      - /var/run/docker.sock:/var/run/docker.sock
      - ./broker2:/kafka/kafka-logs-broker2
    external_links:
      - zoo1
      - zoo2
      - zoo3
    networks:
      default:
        ipv4_address: 172.25.0.15
  broker3:
    image: wurstmeister/kafka:2.12-2.3.1
    restart: always
    hostname: broker3
    container_name: broker3
    privileged: true
    ports:
      - "9093:9092"
    environment:
      KAFKA_BROKER_ID: 3
      KAFKA_LISTENERS: PLAINTEXT://broker3:9092
      KAFKA_ADVERTISED_LISTENERS: PLAINTEXT://broker3:9092
      KAFKA_ADVERTISED_HOST_NAME: broker3
      KAFKA_ADVERTISED_PORT: 9092
      KAFKA_ZOOKEEPER_CONNECT: zoo1:2181,zoo2:2181,zoo3:2181/kafka1
      JMX_PORT: 9988
    volumes:
      - /var/run/docker.sock:/var/run/docker.sock
      - ./broker3:/kafka/kafka-logs-broker3
    external_links:
      - zoo1
      - zoo2
      - zoo3
    networks:
      default:
        ipv4_address: 172.25.0.16
  kafka-manager:
    image: sheepkiller/kafka-manager:latest
    restart: always
    container_name: kafka-manager
    hostname: kafka-manager
    ports:
      - "9000:9000"
    links: # link to containers created by this compose file
      - broker1
      - broker2
      - broker3
    external_links: # link to containers defined outside this compose file
      - zoo1
      - zoo2
      - zoo3
    environment:
      ZK_HOSTS: zoo1:2181,zoo2:2181,zoo3:2181/kafka1
      KAFKA_BROKERS: broker1:9092,broker2:9092,broker3:9092
      APPLICATION_SECRET: letmein
      KM_ARGS: -Djava.net.preferIPv4Stack=true
    networks:
      default:
        ipv4_address: 172.25.0.10
networks:
  default:
    external: # use the network created earlier
      name: bigdata
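A detail that is easy to get wrong in `KAFKA_ZOOKEEPER_CONNECT` and `ZK_HOSTS` is the chroot suffix. Kafka's documentation gives the format as `host1:port1,host2:port2,host3:port3/chroot`, with the path written once after the last host, and to my understanding the ZooKeeper client treats everything from the first `/` onward as the chroot. The sketch below models that rule in plain Python; both function names are hypothetical helpers for illustration, not ZooKeeper or Kafka APIs.

```python
def build_zk_connect(hosts, port=2181, chroot=""):
    """Join host:port pairs with commas and append the chroot once at the end."""
    if chroot and not chroot.startswith("/"):
        raise ValueError("chroot must start with '/'")
    servers = ",".join(f"{host}:{port}" for host in hosts)
    return servers + chroot


def split_zk_connect(connect):
    """Split a connect string at the first '/' into (server list, chroot or None)."""
    servers, slash, path = connect.partition("/")
    chroot = slash + path if path else None
    return servers.split(","), chroot
```

For example, `split_zk_connect("zoo1:2181/kafka1,zoo2:2181/kafka1")` yields a single server, `zoo1:2181`, and the chroot `/kafka1,zoo2:2181/kafka1`, which shows why repeating the suffix after every host does not do what it appears to.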

# Start the containers

docker-compose up -d

Check that local port 9000 is now listening:

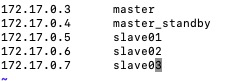
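If you would rather script this check than read it off a screenshot, a plain TCP connect is enough to tell whether a published port is accepting connections. This is a minimal sketch using the standard `socket` module; `port_open` and `report` are hypothetical helper names, and the port list in the usage note assumes the mappings from the compose file above.

```python
import socket


def port_open(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def report(ports, host="127.0.0.1"):
    """Map each port to whether it currently accepts connections."""
    return {port: port_open(host, port) for port in ports}
```

On the Docker host, `report([9000, 9091, 9092, 9093])` covers kafka-manager and the three brokers. A successful connect only shows that something is listening; it does not prove the service behind the port is healthy.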

Hadoop high-availability cluster setup

Create the cluster with docker-compose

version: '2'
services:
  master:
    image: hadoop:latest
    restart: always # restart automatically on failure
    hostname: master # node hostname
    container_name: master # container name
    privileged: true # grant the container extended privileges
    networks:
      default:
        ipv4_address: 172.25.0.3
  master_standby:
    image: hadoop:latest
    restart: always
    hostname: master_standby
    container_name: master_standby
    privileged: true
    networks:
      default:
        ipv4_address: 172.25.0.4
  slave01:
    image: hadoop:latest
    restart: always
    hostname: slave01
    container_name: slave01
    privileged: true
    networks:
      default:
        ipv4_address: 172.25.0.5
  slave02:
    image: hadoop:latest
    restart: always
    container_name: slave02
    hostname: slave02
    networks:
      default:
        ipv4_address: 172.25.0.6
  slave03:
    image: hadoop:latest
    restart: always
    container_name: slave03
    hostname: slave03
    networks:
      default:
        ipv4_address: 172.25.0.7
# Assumed: the static addresses above need the same external network
# that the ZooKeeper and Kafka compose files use.
networks:
  default:
    external:
      name: bigdata

Alternatively, create the containers from the command line

# Create the master node

docker run -tid --name master --privileged=true hadoop:latest /usr/sbin/init

# Create the hot-standby master_standby node

docker run -tid --name master_standby --privileged=true hadoop:latest /usr/sbin/init

# Create three slave nodes

docker run -tid --name slave01 --privileged=true hadoop:latest /usr/sbin/init

docker run -tid --name slave02 --privileged=true hadoop:latest /usr/sbin/init

docker run -tid --name slave03 --privileged=true hadoop:latest /usr/sbin/init

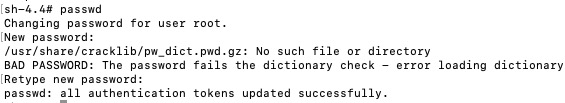
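The plain `docker run` commands above leave the containers on Docker's default bridge and do not set a hostname. If you want the command-line route to match the compose file, the `--network`, `--ip`, and `--hostname` flags of `docker run` can carry the same settings. The sketch below only builds the command strings, assuming the `bigdata` network and the addresses from the compose file; `docker_run_command` is a hypothetical helper name.

```python
import shlex

# Node names and static addresses as listed in the Hadoop compose file.
NODES = {
    "master": "172.25.0.3",
    "master_standby": "172.25.0.4",
    "slave01": "172.25.0.5",
    "slave02": "172.25.0.6",
    "slave03": "172.25.0.7",
}


def docker_run_command(name, ip, network="bigdata", image="hadoop:latest"):
    """Build a `docker run` command that pins the hostname, network, and IP."""
    argv = [
        "docker", "run", "-tid",
        "--name", name,
        "--hostname", name,
        "--network", network,
        "--ip", ip,
        "--privileged=true",
        image, "/usr/sbin/init",
    ]
    # Quote each argument so the line can be pasted into a shell safely.
    return " ".join(shlex.quote(arg) for arg in argv)


if __name__ == "__main__":
    for node, address in NODES.items():
        print(docker_run_command(node, address))
```

The script prints the commands instead of running them, so you can review each line before pasting it into a shell.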

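Configure passwordless SSH login on every node

ssh-keygen -t rsa

# Then keep pressing Enter until the key pair is generated, as in the screenshot

# Do this on every machine

Copy each node's public key into the authorized_keys file on every machine

One small catch: first check whether passwd is installed. If it is not, run the following command:

yum install passwd

# Then set a password

passwd

# Each machine's public key must be added to authorized_keys on itself and on every other machine

# This avoids unnecessary trouble when installing other components later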

cat id_rsa.pub >> .ssh/authorized_keys
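Because `cat ... >>` appends blindly, re-running it leaves duplicate entries in `authorized_keys`. A small merge step avoids that. The following is a sketch of the idea in plain Python; `merge_authorized_keys` is a hypothetical helper, and the key strings in the usage note are placeholders, not real keys.

```python
def merge_authorized_keys(existing, public_keys):
    """Return authorized_keys content in which every key line appears once.

    `existing` is the current file content; `public_keys` is an iterable of
    public-key lines collected from the nodes. Order of first appearance is kept.
    """
    merged = []
    for line in list(existing.splitlines()) + list(public_keys):
        line = line.strip()
        if line and line not in merged:
            merged.append(line)
    return "".join(line + "\n" for line in merged)
```

For example, merging `"ssh-rsa AAA root@master\n"` with the keys of `master` and `slave01` keeps the existing line and appends only the new one. Note that sshd's default `StrictModes` setting also expects `~/.ssh` and `authorized_keys` not to be writable by other users.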

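Edit /etc/hosts

Note: the hostname master_standby contains an underscore, which may not be accepted. On some machines HDFS formatting rejects it as an invalid hostname, so it is best to avoid special characters in hostnames.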
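The hosts entries follow directly from the static addresses in the Hadoop compose file, and the underscore problem can be caught before formatting HDFS. The sketch below renders the entries and checks each name against the usual hostname rule (letters, digits, and hyphens, with no leading or trailing hyphen). The helper names are hypothetical, and `master-standby` in the usage note is only an illustrative replacement, not a name used elsewhere in this guide.

```python
import re

# Node names and static addresses as listed in the Hadoop compose file.
NODES = {
    "master": "172.25.0.3",
    "master_standby": "172.25.0.4",
    "slave01": "172.25.0.5",
    "slave02": "172.25.0.6",
    "slave03": "172.25.0.7",
}

# One hostname label: 1 to 63 letters, digits, or hyphens, not starting or ending with a hyphen.
_LABEL = re.compile(r"(?!-)[A-Za-z0-9-]{1,63}(?<!-)")


def is_valid_hostname(name):
    """Return True if every dot-separated label of `name` is a valid hostname label."""
    if not name or len(name) > 253:
        return False
    return all(_LABEL.fullmatch(label) for label in name.split("."))


def render_hosts(nodes):
    """Render `ip<TAB>name` lines for /etc/hosts from a {name: ip} mapping."""
    return "".join(f"{ip}\t{name}\n" for name, ip in nodes.items())


def invalid_names(nodes):
    """List the node names that fail the hostname check."""
    return [name for name in nodes if not is_valid_hostname(name)]
```

With the mapping above, `invalid_names(NODES)` flags `master_standby`, while a name such as `master-standby` would pass.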

# Copy /etc/hosts to every node

scp /etc/hosts master_standby:/etc/

scp /etc/hosts slave01:/etc/

scp /etc/hosts slave02:/etc/

scp /etc/hosts slave03:/etc/

Configure Hadoop

# Extract the Hadoop archive

tar -zxvf hadoop-2.8.5.tar.gz

Configure environment variables

# Edit the shell profile

vim ~/.bashrc

# Add the following lines

export HADOOP_HOME=/usr/local/hadoop-2.8.5

export CLASSPATH=.:$HADOOP_HOME/lib:$CLASSPATH

export PATH=$PATH:$HADOOP_HOME/bin

export PATH=$PATH:$HADOOP_HOME/sbin

export HADOOP_MAPRED_HOME=$HADOOP_HOME

export HADOOP_COMMON_HOME=$HADOOP_HOME

export HADOOP_HDFS_HOME=$HADOOP_HOME

export YARN_HOME=$HADOOP_HOME

export HADOOP_ROOT_LOGGER=INFO,console

export HADOOP_COMMON_LIB_NATIVE_DIR=$HADOOP_HOME/lib/native

export HADOOP_OPTS="-Djava.library.path=$HADOOP_HOME/lib"

# Copy this file to the other machines

scp ~/.bashrc <hostname>:~/

# Run the hadoop command to verify the setup

Configuration files

hdfs-site.xml

<configuration>

<!-- Nameservice ID; must match the name used in fs.defaultFS in core-site.xml -->

<property>

<name>dfs.nameservices</name>

<value>mycluster</value>

</property>

<!-- The two NameNodes in the nameservice: nn1 and nn2 -->

<property>

<name>dfs.ha.namenodes.mycluster</name>

<value>nn1,nn2</value>

</property>

<!-- mycluster.nn1 Namenode's RPC Address-->

<property>

<name>dfs.namenode.rpc-address.mycluster.nn1</name>

<value>master:9000</value>

</property>

<!-- mycluster.nn1 Namenode's Http Address-->

<property>

<name>dfs.namenode.http-address.mycluster.nn1</name>

<value>master:50070</value>

</property>

<!-- mycluster.nn2 Namenode's RPC Address-->

<property>

<name>dfs.namenode.rpc-address.mycluster.nn2</name>

<value>master_standby:9000</value>

</property>

<!-- mycluster.nn2 Namenode's Http Address-->

<property>

<name>dfs.namenode.http-address.mycluster.nn2</name>

<value>master_standby:50070</value>

</property>

<!-- JournalNode quorum where the NameNodes store their shared edit log -->

<property>

<name>dfs.namenode.shared.edits.dir</name>

<value>qjournal://slave01:8485;slave02:8485;slave03:8485/mycluster</value>

</property>

<!-- Local directory where each JournalNode stores its data -->

<property>

<name>dfs.journalnode.edits.dir</name>

<value>/usr/local/hadoop-2.8.5/journaldata</value>

</property>

<!-- Enable automatic failover when the active NameNode fails -->

<property>

<name>dfs.ha.automatic-failover.enabled</name>

<value>true</value>

</property>

<!-- Proxy provider that clients use to find the active NameNode -->

<property>

<name>dfs.client.failover.proxy.provider.mycluster</name>

<value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>

</property>

<!-- Fencing method used to isolate the failed NameNode during failover -->

<property>

<name>dfs.ha.fencing.methods</name>

<value>sshfence</value>

</property>

<!-- Private key used by the sshfence method -->

<property>

<name>dfs.ha.fencing.ssh.private-key-files</name>

<value>/root/.ssh/id_rsa</value>

</property>

<property>

<name>dfs.ha.fencing.ssh.connect-timeout</name>

<value>30000</value>

</property>

</configuration>
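With this many interlocking property names, a typo in a nameservice or NameNode ID is easy to miss. The sketch below parses an `hdfs-site.xml` with the standard library and lists the per-NameNode keys that the file above defines but that are absent from the parsed file. It checks the naming pattern used in this guide, not every rule Hadoop enforces; `load_properties` and `missing_ha_keys` are hypothetical helper names.

```python
import xml.etree.ElementTree as ET


def load_properties(xml_text):
    """Parse Hadoop-style XML into a {name: value} dictionary."""
    root = ET.fromstring(xml_text)
    props = {}
    for prop in root.iter("property"):
        name = (prop.findtext("name") or "").strip()
        if name:
            props[name] = (prop.findtext("value") or "").strip()
    return props


def _split(value):
    """Split a comma-separated value into non-empty, stripped items."""
    return [item.strip() for item in value.split(",") if item.strip()]


def missing_ha_keys(props):
    """List the HA-related keys expected by this guide's layout but not present."""
    missing = []
    for ns in _split(props.get("dfs.nameservices", "")):
        nn_ids = _split(props.get(f"dfs.ha.namenodes.{ns}", ""))
        if not nn_ids:
            missing.append(f"dfs.ha.namenodes.{ns}")
        for nn in nn_ids:
            for kind in ("rpc-address", "http-address"):
                key = f"dfs.namenode.{kind}.{ns}.{nn}"
                if key not in props:
                    missing.append(key)
        provider = f"dfs.client.failover.proxy.provider.{ns}"
        if provider not in props:
            missing.append(provider)
    return missing
```

Reading the file with `load_properties(open("hdfs-site.xml").read())` and passing the result to `missing_ha_keys` should return an empty list for the configuration shown above.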

core-site.xml
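As a rough guide to what this file needs in an HA setup like this one, the sketch below renders two properties: `fs.defaultFS`, which has to point at the nameservice declared in hdfs-site.xml, and `ha.zookeeper.quorum`, which automatic failover relies on. Both values are assumptions derived from the rest of this guide (the `mycluster` nameservice and the three ZooKeeper containers); this is an illustration, not the author's actual file, and `render_site_xml` is a hypothetical helper.

```python
from xml.sax.saxutils import escape

# Assumed values, not taken from the author's file:
#   fs.defaultFS uses the nameservice ID "mycluster" from hdfs-site.xml
#   ha.zookeeper.quorum lists the three ZooKeeper containers set up earlier
CORE_SITE = {
    "fs.defaultFS": "hdfs://mycluster",
    "ha.zookeeper.quorum": "zoo1:2181,zoo2:2181,zoo3:2181",
}


def render_site_xml(props):
    """Render a {name: value} mapping as a Hadoop *-site.xml document."""
    lines = ["<configuration>"]
    for name, value in props.items():
        lines.append("  <property>")
        lines.append(f"    <name>{escape(name)}</name>")
        lines.append(f"    <value>{escape(value)}</value>")
        lines.append("  </property>")
    lines.append("</configuration>")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(render_site_xml(CORE_SITE), end="")
```

A real core-site.xml will usually carry further settings, such as a temporary directory, which are left out here because the guide does not state them.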

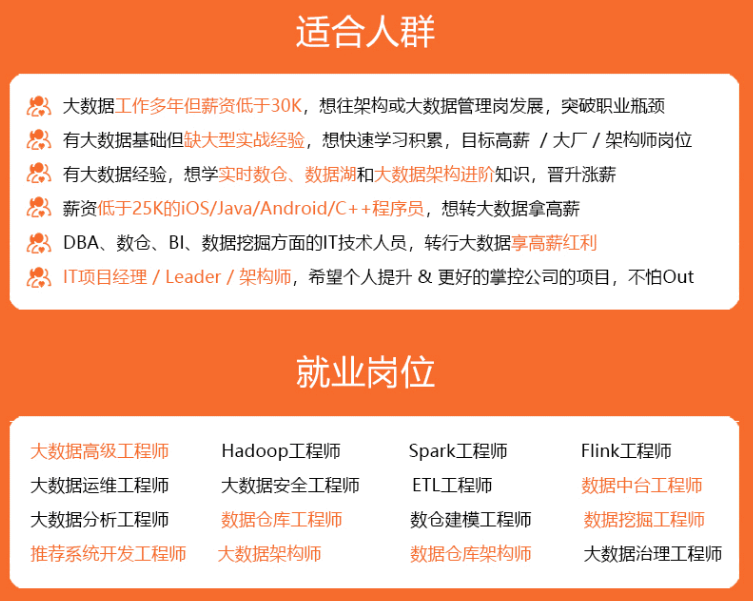

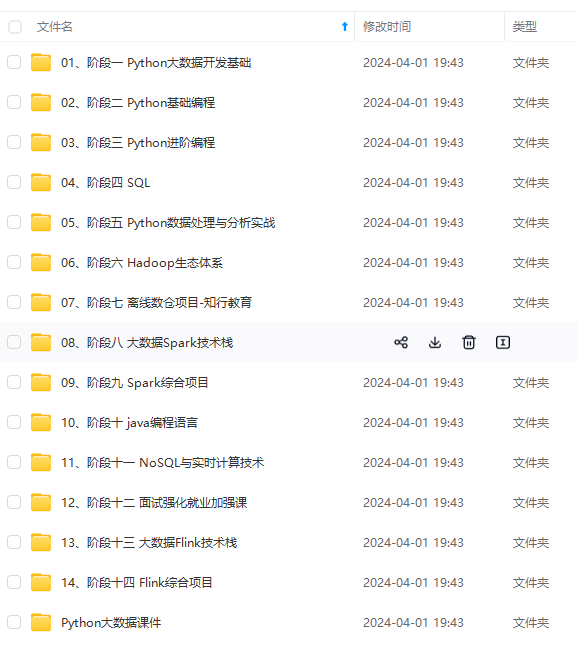

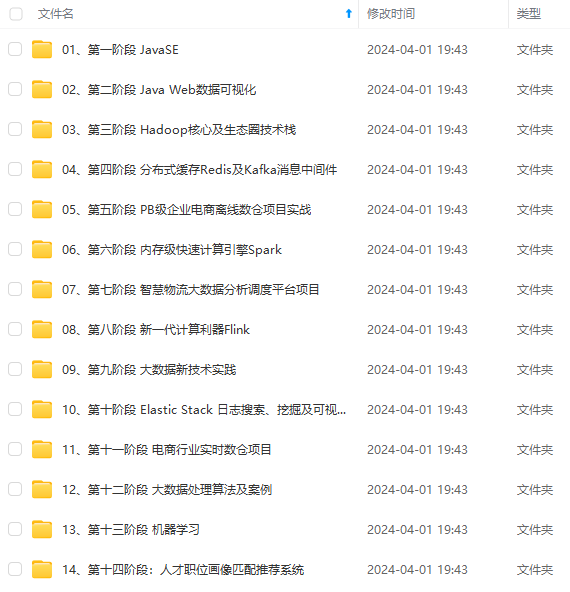
