Check-in~

My environment:

● Language: Python

● Editor: Jupyter Notebook

● Deep learning framework: PyTorch

>- **🍨 This post is a study log from the [🔗365-Day Deep Learning Training Camp](https://mp.weixin.qq.com/s/0dvHCaOoFnW8SCp3JpzKxg)**

>- **🍖 Original author: [K同学啊](https://mtyjkh.blog.csdn.net/)**

# 1. Preparation

# 1.1 Set up the environment

import torch

import torch.nn as nn

import matplotlib.pyplot as plt

import torchvision

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")  # use the GPU if available, otherwise the CPU

# 1.2 Import the data

# Use a torchvision dataset to download CIFAR-10, already split into training and test sets
# Use a DataLoader to load the data, with a basic batch_size

train_ds = torchvision.datasets.CIFAR10('data',
                                        train=True,
                                        transform=torchvision.transforms.ToTensor(),  # convert the data to tensors
                                        download=True)

test_ds = torchvision.datasets.CIFAR10('data',
                                       train=False,
                                       transform=torchvision.transforms.ToTensor(),  # convert the data to tensors
                                       download=True)

Downloading https://www.cs.toronto.edu/~kriz/cifar-10-python.tar.gz to data/cifar-10-python.tar.gz
100%|████████████████████████| 170498071/170498071 [00:34<00:00, 4977040.72it/s]
Extracting data/cifar-10-python.tar.gz to data
Files already downloaded and verified
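`transforms.ToTensor()` turns each image (a PIL image, or an `H x W x C` array of `uint8` values in 0-255) into a `float32` tensor of shape `C x H x W` with values scaled to [0, 1]. The NumPy sketch below reproduces that conversion for a `uint8` array; `to_chw_float` is a hypothetical helper written for illustration, not a torchvision function.

```python
import numpy as np

def to_chw_float(img_hwc: np.ndarray) -> np.ndarray:
    """Hypothetical helper mimicking ToTensor for a uint8 HWC image."""
    # Move the channel axis first: (H, W, C) -> (C, H, W)
    chw = img_hwc.transpose(2, 0, 1)
    # Scale 0..255 integers to 0.0..1.0 floats
    return chw.astype(np.float32) / 255.0

# A fake all-white 32x32 RGB image standing in for one CIFAR-10 sample
fake = np.full((32, 32, 3), 255, dtype=np.uint8)
out = to_chw_float(fake)
print(out.shape, out.dtype, out.max())
```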

batch_size = 32

train_dl = torch.utils.data.DataLoader(train_ds,
                                       batch_size=batch_size,
                                       shuffle=True)

test_dl = torch.utils.data.DataLoader(test_ds,
                                      batch_size=batch_size)

imgs, labels = next(iter(train_dl))
imgs.shape

torch.Size([32, 3, 32, 32])
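The shape `[32, 3, 32, 32]` reads as `[batch, channels, height, width]`: 32 RGB images of 32x32 pixels. With `batch_size = 32` and the DataLoader default `drop_last=False`, the number of batches per epoch is the dataset size divided by 32, rounded up, because the final partial batch is kept. A quick plain-Python check, using CIFAR-10's 50,000 training and 10,000 test images (`num_batches` is a hypothetical helper):

```python
import math

def num_batches(num_samples: int, batch_size: int) -> int:
    # DataLoader with drop_last=False keeps the final, smaller batch
    return math.ceil(num_samples / batch_size)

train_batches = num_batches(50_000, 32)
test_batches = num_batches(10_000, 32)
# Size of the last (partial) training batch
last_train_batch = 50_000 - (train_batches - 1) * 32
print(train_batches, test_batches, last_train_batch)
```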

# 1.3 Visualize the data

import numpy as np

# Create a figure 20 inches wide and 5 inches tall
plt.figure(figsize=(20, 5))
for i, img in enumerate(imgs[:20]):
    # Move the channel axis last: (C, H, W) -> (H, W, C), the layout imshow expects
    npimg = img.numpy().transpose((1, 2, 0))
    # Split the figure into 2 rows x 10 columns and draw subplot i+1
    plt.subplot(2, 10, i + 1)
    plt.imshow(npimg)
    plt.axis('off')
plt.show()
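The grid above shows the images without their labels. CIFAR-10 labels are integers 0-9, and torchvision's `CIFAR10` dataset exposes the matching names through its `classes` attribute (`train_ds.classes`). The sketch below hard-codes that list so it runs on its own; `label_names` is a hypothetical helper.

```python
# Class names in CIFAR-10 label order (the same order as train_ds.classes)
CIFAR10_CLASSES = ['airplane', 'automobile', 'bird', 'cat', 'deer',
                   'dog', 'frog', 'horse', 'ship', 'truck']

def label_names(labels):
    """Hypothetical helper: map integer labels (ints or 0-d tensors) to class names."""
    return [CIFAR10_CLASSES[int(label)] for label in labels]

print(label_names([0, 3, 9]))
```

Inside the plotting loop, `plt.title(CIFAR10_CLASSES[int(labels[i])])` would add each image's class as its subplot title.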

# 2. A simple CNN

# 2.1 Build the model

import torch.nn.functional as F

num_classes = 10  # number of image classes

class Model(nn.Module):
    def __init__(self):
        super().__init__()
        # Feature-extraction network
        self.conv1 = nn.Conv2d(3, 64, kernel_size=3)    # 1st conv layer: 3 input channels, 64 output channels, 3x3 kernel
        self.pool1 = nn.MaxPool2d(kernel_size=2)        # max-pooling layer with a 2x2 window
        self.conv2 = nn.Conv2d(64, 64, kernel_size=3)   # 2nd conv layer: 64 input channels, 64 output channels, 3x3 kernel
        self.pool2 = nn.MaxPool2d(kernel_size=2)
        self.conv3 = nn.Conv2d(64, 128, kernel_size=3)  # 3rd conv layer: 64 input channels, 128 output channels, 3x3 kernel
        self.pool3 = nn.MaxPool2d(kernel_size=2)

        # Classification network
        self.fc1 = nn.Linear(512, 256)          # 1st fully connected layer: 512 inputs (128 channels x 2 x 2), 256 outputs
        self.fc2 = nn.Linear(256, num_classes)  # 2nd fully connected layer: 256 inputs, num_classes outputs (one per class)

    # Forward pass
    def forward(self, x):
        x = self.pool1(F.relu(self.conv1(x)))
        x = self.pool2(F.relu(self.conv2(x)))
        x = self.pool3(F.relu(self.conv3(x)))
        x = torch.flatten(x, start_dim=1)
        x = F.relu(self.fc1(x))
        x = self.fc2(x)
        return x

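# In __init__, the layers of the network are defined: the convolutional layers conv1, conv2, conv3 and the pooling layers pool1, pool2, pool3 of the feature-extraction part, plus the fully connected layers fc1 and fc2 of the classification part.

# In forward, the forward-pass computation is defined: the input image goes through the convolution and pooling operations, is flattened into a 1-D vector per sample, and then passes through the two fully connected layers, which output one raw score (logit) per class.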
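Why does `fc1` take 512 inputs? With no padding and stride 1, each 3x3 convolution shrinks each side by 2, and each 2x2 max pool (whose stride defaults to its kernel size) halves it, rounding down. Tracing a 32x32 CIFAR-10 image through the three conv/pool stages in plain Python (`conv_out` and `pool_out` are illustrative helpers, not PyTorch functions):

```python
def conv_out(size: int, kernel: int = 3, padding: int = 0, stride: int = 1) -> int:
    # Output-size formula for Conv2d with dilation 1
    return (size + 2 * padding - kernel) // stride + 1

def pool_out(size: int, kernel: int = 2) -> int:
    # MaxPool2d uses stride = kernel_size by default, so a 2x2 pool floors size / 2
    return (size - kernel) // kernel + 1

size = 32
for stage in range(3):
    size = pool_out(conv_out(size))
    print(f"after stage {stage + 1}: {size}x{size}")  # 15x15, then 6x6, then 2x2

flatten_dim = 128 * size * size  # conv3 has 128 output channels
print(flatten_dim)
```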

# 2.2 Load and print the model

from torchinfo import summary

model = Model().to(device)
summary(model)

=================================================================
Layer (type:depth-idx)                   Param #
=================================================================
Model                                    --
├─Conv2d: 1-1                            1,792
├─MaxPool2d: 1-2                         --
├─Conv2d: 1-3                            36,928
├─MaxPool2d: 1-4                         --
├─Conv2d: 1-5                            73,856
├─MaxPool2d: 1-6                         --
├─Linear: 1-7                            131,328
├─Linear: 1-8                            2,570
=================================================================
Total params: 246,474
Trainable params: 246,474
Non-trainable params: 0
=================================================================
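The parameter counts in the summary can be checked by hand: a `Conv2d` layer has `in_channels * out_channels * k * k` weights plus `out_channels` biases, a `Linear` layer has `in_features * out_features` weights plus `out_features` biases, and the pooling layers have none. A plain-Python sketch (the helper names are illustrative):

```python
def conv_params(c_in: int, c_out: int, k: int) -> int:
    return c_in * c_out * k * k + c_out  # weights + biases

def linear_params(n_in: int, n_out: int) -> int:
    return n_in * n_out + n_out  # weights + biases

counts = [
    conv_params(3, 64, 3),    # conv1
    conv_params(64, 64, 3),   # conv2
    conv_params(64, 128, 3),  # conv3
    linear_params(512, 256),  # fc1
    linear_params(256, 10),   # fc2
]
print(counts, sum(counts))
```

In the notebook itself, `sum(p.numel() for p in model.parameters())` should give the same total.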

# 3. Train the model

# 3.1 Set the hyperparameters

loss_fn = nn.CrossEntropyLoss()  # create the loss function
learn_rate = 1e-2                # learning rate
opt = torch.optim.SGD(model.parameters(), lr=learn_rate)

# 3.2 Write the training function
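In the training step, `loss_fn(pred, y)` receives the raw scores (logits) that `fc2` outputs. `nn.CrossEntropyLoss` applies log-softmax internally and takes the negative log-probability of the true class (averaged over the batch by default), which is why the model has no softmax layer. A plain-Python sketch of the per-sample formula, `-log(softmax(logits)[target])`; `cross_entropy` is an illustrative helper, not the PyTorch implementation:

```python
import math

def cross_entropy(logits, target):
    """Per-sample cross-entropy on raw logits (sketch of what nn.CrossEntropyLoss computes)."""
    m = max(logits)  # subtract the max for numerical stability
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[target]  # equals -log softmax(logits)[target]

# Equal logits over 10 classes: the loss is log(10), about 2.303
uniform = cross_entropy([0.0] * 10, target=3)
# A confident, correct prediction gives a loss close to 0
confident = cross_entropy([10.0, 0.0, 0.0], target=0)
print(uniform, confident)
```

This also suggests why the first epoch's training loss (2.284) starts close to ln 10 ≈ 2.303: an untrained 10-class model makes nearly uniform predictions.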

# Training loop
def train(dataloader, model, loss_fn, optimizer):
    size = len(dataloader.dataset)  # size of the training set: 50,000 images
    num_batches = len(dataloader)   # number of batches: 1,563 (50,000 / 32 = 1,562.5, rounded up)

    train_loss, train_acc = 0, 0  # initialize the training loss and accuracy

    for X, y in dataloader:  # get a batch of images and their labels
        X, y = X.to(device), y.to(device)

        # Compute the prediction error
        pred = model(X)          # network output
        loss = loss_fn(pred, y)  # loss between the network output and the ground-truth labels y

        # Backpropagation
        optimizer.zero_grad()  # reset the accumulated gradients to zero
        loss.backward()        # backpropagate
        optimizer.step()       # update the parameters

        # Record accuracy and loss
        train_acc += (pred.argmax(1) == y).type(torch.float).sum().item()
        train_loss += loss.item()

    train_acc /= size
    train_loss /= num_batches

    return train_acc, train_loss

# 3.3 Write the test function

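# The test function is largely the same as the training function, but since it does not run gradient descent to update the network weights, it does not need an optimizer.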
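Inside `torch.no_grad()`, operations are not recorded for autograd, so outputs carry no gradient history and no computation graph is kept in memory; the forward values themselves are unchanged. A small check with a single `nn.Linear` layer, used here as an arbitrary stand-in for the CNN:

```python
import torch
import torch.nn as nn

layer = nn.Linear(4, 2)
x = torch.randn(3, 4)

out_train = layer(x)      # tracked: a loss built from it could call .backward()
with torch.no_grad():
    out_eval = layer(x)   # not tracked: no graph is built

print(out_train.requires_grad, out_eval.requires_grad)
print(torch.allclose(out_train, out_eval))
```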

def test(dataloader, model, loss_fn):
    size = len(dataloader.dataset)  # size of the test set: 10,000 images
    num_batches = len(dataloader)   # number of batches: 313 (10,000 / 32 = 312.5, rounded up)
    test_loss, test_acc = 0, 0

    # Disable gradient tracking when not training, to save memory and computation
    with torch.no_grad():
        for imgs, target in dataloader:
            imgs, target = imgs.to(device), target.to(device)

            # Compute the loss
            target_pred = model(imgs)
            loss = loss_fn(target_pred, target)

            test_loss += loss.item()
            test_acc += (target_pred.argmax(1) == target).type(torch.float).sum().item()

    test_acc /= size
    test_loss /= num_batches

    return test_acc, test_loss

# 3.4 Run the training
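The training loop calls `model.train()` before training and `model.eval()` before testing. These calls only flip the `training` flag of the module and its submodules, and that flag changes the behavior of layers such as `Dropout` and `BatchNorm`. This CNN has neither, so here the switch has no numerical effect, but it is a good habit. A sketch with a stand-alone `nn.Dropout` (not part of the model in this post):

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
dropout = nn.Dropout(p=0.5)
x = torch.ones(1000)

dropout.train()  # training mode: randomly zero elements, scale the rest by 1 / (1 - p)
y_train = dropout(x)

dropout.eval()   # eval mode: dropout is the identity
y_eval = dropout(x)

print(dropout.training, torch.equal(y_eval, x))
print(set(y_train.unique().tolist()))  # every value is 0.0 or 2.0
```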

epochs = 30
train_loss = []
train_acc = []
test_loss = []
test_acc = []

for epoch in range(epochs):
    model.train()
    epoch_train_acc, epoch_train_loss = train(train_dl, model, loss_fn, opt)

    model.eval()
    epoch_test_acc, epoch_test_loss = test(test_dl, model, loss_fn)

    train_acc.append(epoch_train_acc)
    train_loss.append(epoch_train_loss)
    test_acc.append(epoch_test_acc)
    test_loss.append(epoch_test_loss)

    template = ('Epoch:{:2d}, Train_acc:{:.1f}%, Train_loss:{:.3f}, Test_acc:{:.1f}%,Test_loss:{:.3f}')
    print(template.format(epoch + 1, epoch_train_acc * 100, epoch_train_loss, epoch_test_acc * 100, epoch_test_loss))
print('Done')

Epoch: 1, Train_acc:13.0%, Train_loss:2.284, Test_acc:17.9%,Test_loss:2.185
Epoch: 2, Train_acc:24.3%, Train_loss:2.030, Test_acc:26.7%,Test_loss:1.969
Epoch: 3, Train_acc:33.0%, Train_loss:1.821, Test_acc:38.0%,Test_loss:1.702
Epoch: 4, Train_acc:39.4%, Train_loss:1.661, Test_acc:41.1%,Test_loss:1.640
Epoch: 5, Train_acc:43.5%, Train_loss:1.550, Test_acc:44.1%,Test_loss:1.543
Epoch: 6, Train_acc:47.1%, Train_loss:1.460, Test_acc:49.7%,Test_loss:1.389
Epoch: 7, Train_acc:50.3%, Train_loss:1.379, Test_acc:51.6%,Test_loss:1.351
Epoch: 8, Train_acc:53.3%, Train_loss:1.303, Test_acc:52.0%,Test_loss:1.392
Epoch: 9, Train_acc:55.9%, Train_loss:1.239, Test_acc:56.3%,Test_loss:1.218
Epoch:10, Train_acc:58.2%, Train_loss:1.183, Test_acc:54.8%,Test_loss:1.279
Epoch:11, Train_acc:60.3%, Train_loss:1.128, Test_acc:57.8%,Test_loss:1.182
Epoch:12, Train_acc:62.1%, Train_loss:1.081, Test_acc:57.4%,Test_loss:1.247
Epoch:13, Train_acc:64.1%, Train_loss:1.035, Test_acc:61.6%,Test_loss:1.126
Epoch:14, Train_acc:65.3%, Train_loss:0.996, Test_acc:62.8%,Test_loss:1.084
Epoch:15, Train_acc:66.9%, Train_loss:0.954, Test_acc:63.3%,Test_loss:1.064
Epoch:16, Train_acc:68.3%, Train_loss:0.917, Test_acc:64.4%,Test_loss:1.030
Epoch:17, Train_acc:69.3%, Train_loss:0.883, Test_acc:64.0%,Test_loss:1.032
Epoch:18, Train_acc:70.6%, Train_loss:0.848, Test_acc:64.8%,Test_loss:1.013
Epoch:19, Train_acc:71.8%, Train_loss:0.817, Test_acc:65.0%,Test_loss:1.025
Epoch:20, Train_acc:72.8%, Train_loss:0.786, Test_acc:67.1%,Test_loss:0.943
Epoch:21, Train_acc:73.8%, Train_loss:0.754, Test_acc:67.1%,Test_loss:0.990
Epoch:22, Train_acc:74.7%, Train_loss:0.726, Test_acc:69.5%,Test_loss:0.891
Epoch:23, Train_acc:75.8%, Train_loss:0.697, Test_acc:68.2%,Test_loss:0.927
Epoch:24, Train_acc:76.8%, Train_loss:0.669, Test_acc:69.1%,Test_loss:0.925
Epoch:25, Train_acc:77.8%, Train_loss:0.642, Test_acc:69.4%,Test_loss:0.904
Epoch:26, Train_acc:78.6%, Train_loss:0.614, Test_acc:69.7%,Test_loss:0.916
Epoch:27, Train_acc:79.5%, Train_loss:0.587, Test_acc:69.3%,Test_loss:0.936
Epoch:28, Train_acc:80.6%, Train_loss:0.561, Test_acc:69.7%,Test_loss:0.932
Epoch:29, Train_acc:81.4%, Train_loss:0.535, Test_acc:69.8%,Test_loss:0.926
Epoch:30, Train_acc:82.3%, Train_loss:0.508, Test_acc:70.0%,Test_loss:0.972
Done
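In the log, training accuracy keeps rising (82.3% at epoch 30) while test accuracy hovers between 68% and 70% from about epoch 22 on, and test loss is lowest at epoch 22 (0.891) before drifting back up to 0.972. That widening train/test gap is a typical sign of overfitting. One simple way to read this off the recorded lists is to pick the epoch with the lowest test loss. The sketch below uses the `Test_loss` values for epochs 21-30 copied from the log; `best_epoch` is a hypothetical helper.

```python
def best_epoch(losses, first_epoch=1):
    """Hypothetical helper: return (epoch number, loss) for the lowest loss."""
    idx = min(range(len(losses)), key=lambda i: losses[i])
    return first_epoch + idx, losses[idx]

# Test_loss for epochs 21-30, copied from the training log
recent_test_loss = [0.990, 0.891, 0.927, 0.925, 0.904,
                    0.916, 0.936, 0.932, 0.926, 0.972]
print(best_epoch(recent_test_loss, first_epoch=21))
```

In the notebook, `best_epoch(test_loss)` on the full recorded list would work the same way.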

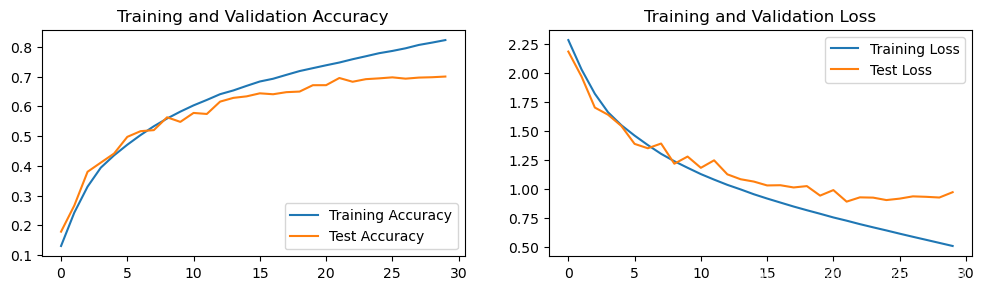

# 4. Visualize the results

import matplotlib.pyplot as plt
# Hide warnings
import warnings
warnings.filterwarnings("ignore")             # ignore warning messages
plt.rcParams['font.sans-serif'] = ['SimHei']  # font that can display Chinese labels
plt.rcParams['axes.unicode_minus'] = False    # display the minus sign correctly
plt.rcParams['figure.dpi'] = 100              # figure resolution

epochs_range = range(epochs)

plt.figure(figsize=(12, 3))
plt.subplot(1, 2, 1)
plt.plot(epochs_range, train_acc, label='Training Accuracy')
plt.plot(epochs_range, test_acc, label='Test Accuracy')
plt.legend(loc='lower right')
plt.title('Training and Validation Accuracy')

plt.subplot(1, 2, 2)
plt.plot(epochs_range, train_loss, label='Training Loss')
plt.plot(epochs_range, test_loss, label='Test Loss')
plt.legend(loc='upper right')
plt.title('Training and Validation Loss')
plt.show()
