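WordNet is a large English lexical database built in the 1980s by the team of George Miller, a renowned cognitive psychologist at Princeton University. Nouns, verbs, adjectives, and adverbs are stored in it as sets of synonyms (synsets).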

import nltk

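nltk.download('wordnet')

nltk.download('brown')  # the Brown corpus is read below

nltk.download('punkt')  # tokenizer models for sent_tokenize / word_tokenize; newer NLTK releases may ask for 'punkt_tab' instead

nltk.download('stopwords')  # stop word lists used later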

from nltk.corpus import wordnet as wn

from nltk.corpus import brown

print(brown.words())

3. Testing

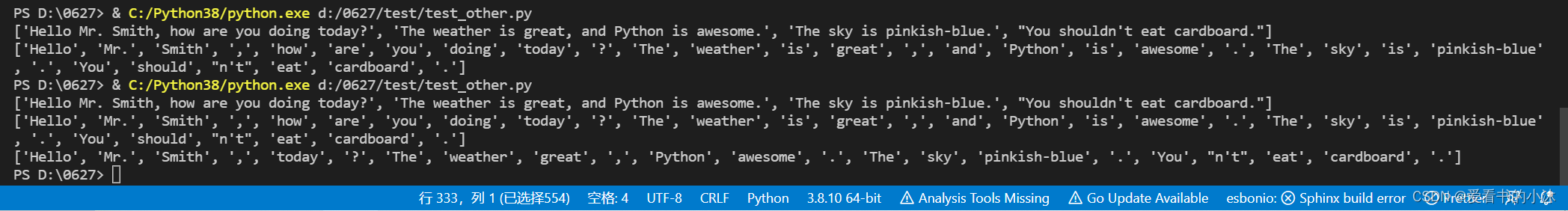

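3.1 Sentence and Word Tokenization

English sentence splitting: nltk.sent_tokenize splits a text into sentences.

English word tokenization: nltk.word_tokenize splits a sentence into words and punctuation tokens and returns them as a list.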

from nltk.tokenize import sent_tokenize, word_tokenize

EXAMPLE_TEXT = "Hello Mr. Smith, how are you doing today? The weather is great, and Python is awesome. The sky is pinkish-blue. You shouldn't eat cardboard."

print(sent_tokenize(EXAMPLE_TEXT))

print(word_tokenize(EXAMPLE_TEXT))
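To see why a dedicated tokenizer helps, here is a minimal sketch of a naive regex-based splitter applied to the same example text. It is not the algorithm NLTK uses; `naive_sent_split` and `naive_word_split` are hypothetical helpers written only for this comparison.

```python
import re

EXAMPLE_TEXT = ("Hello Mr. Smith, how are you doing today? "
                "The weather is great, and Python is awesome. "
                "The sky is pinkish-blue. You shouldn't eat cardboard.")

def naive_sent_split(text):
    """Split after '.', '?' or '!' followed by whitespace (hypothetical helper)."""
    return [s for s in re.split(r'(?<=[.?!])\s+', text) if s]

def naive_word_split(sentence):
    """Return words (allowing inner '-' or "'") and single punctuation marks."""
    return re.findall(r"\w+(?:[-']\w+)*|[^\w\s]", sentence)

naive_sentences = naive_sent_split(EXAMPLE_TEXT)
naive_words = naive_word_split("The sky is pinkish-blue.")

# The abbreviation "Mr." is wrongly treated as the end of a sentence,
# so the text is cut into five pieces instead of four.
print(naive_sentences)
print(naive_words)
```

The naive splitter breaks the first sentence after "Mr.", which is the kind of case a trained sentence tokenizer such as sent_tokenize is meant to handle.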

from nltk.corpus import stopwords

stop_word = set(stopwords.words('english'))  # all English stop words

word_tokens = word_tokenize(EXAMPLE_TEXT)  # all tokens of the example text

filtered_sentence = [w for w in word_tokens if w not in stop_word]  # tokens of the example text that are not stop words

print(filtered_sentence)

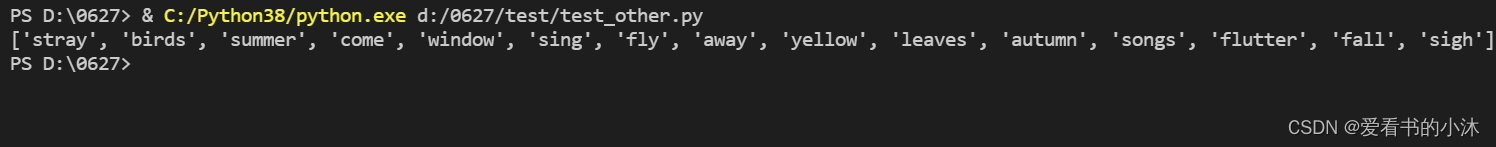

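3.2 Stop Word Filtering

Stop words: nltk.corpus.stopwords provides the list of English stop words.

The function below filters English stop words: it keeps only alphabetic tokens, lowercases them, and then removes every token that appears in the English stop word list loaded from the stopwords corpus.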

from nltk.tokenize import sent_tokenize, word_tokenize  # sentence and word tokenizers

from nltk.corpus import stopwords  # stop word corpus

def remove_stopwords(text):

    # text is a list of tokens; keep alphabetic tokens only and lowercase them
    text_lower = [w.lower() for w in text if w.isalpha()]

    stopword_set = set(stopwords.words('english'))

    result = [w for w in text_lower if w not in stopword_set]

    return result

example_text = "Stray birds of summer come to my window to sing and fly away. And yellow leaves of autumn,which have no songs,flutter and fall there with a sigh."

word_tokens = word_tokenize(example_text)

print(remove_stopwords(word_tokens))

from nltk.tokenize import sent_tokenize, word_tokenize  # sentence and word tokenizers

example_text = "Stray birds of summer come to my window to sing and fly away. And yellow leaves of autumn,which have no songs,flutter and fall there with a sigh."

word_tokens = word_tokenize(example_text)

from nltk.corpus import stopwords

test_words = [word.lower() for word in word_tokens]

test_words_set = set(test_words)

english_stopwords = set(stopwords.words('english'))  # build the stop word set once

test_words_set.intersection(english_stopwords)  # stop words that occur in the text

filtered = [w for w in test_words_set if w not in english_stopwords]

print(filtered)

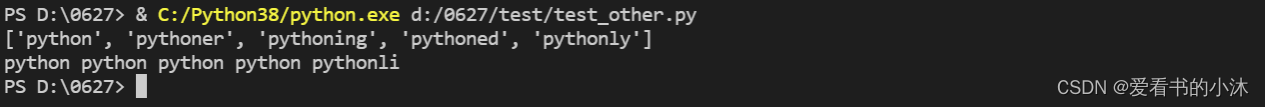
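The two approaches above behave differently: the list-based function keeps word order and drops punctuation, while the set-based version removes duplicates, keeps punctuation tokens, and has no guaranteed order. The sketch below illustrates this with a tiny hand-picked stop word set; `TOY_STOPWORDS` is an assumption made for the example and is not NLTK's stop word list.

```python
# A tiny hand-picked stop word set, used only for illustration
# (an assumption, not the list returned by stopwords.words('english')).
TOY_STOPWORDS = {"of", "to", "my", "and", "a", "which", "have", "no", "there", "with"}

# Tokens of the first example sentence, written out by hand.
tokens = ["Stray", "birds", "of", "summer", "come", "to", "my", "window",
          "to", "sing", "and", "fly", "away", "."]

# List-based filtering: keeps the original order and drops punctuation via isalpha().
ordered = [w.lower() for w in tokens
           if w.isalpha() and w.lower() not in TOY_STOPWORDS]

# Set-based filtering: removes duplicates, keeps punctuation, order not guaranteed.
unique = {w.lower() for w in tokens} - TOY_STOPWORDS

print(ordered)
print(sorted(unique))  # sorted only to make the printed order stable
```

If the filtered words are needed in reading order, for example for later stemming of a sentence, the list-based form is the safer choice.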

3.3 Stemming

Stemming removes affixes from a word to obtain its stem; for example, fishing and fished share the stem fish. NLTK provides PorterStemmer for stemming.

from nltk.stem import PorterStemmer

from nltk.tokenize import sent_tokenize,word_tokenize

ps = PorterStemmer()

example_words = ["python","pythoner","pythoning","pythoned","pythonly"]

print(example_words)

for w in example_words:

    print(ps.stem(w), end=' ')
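The idea of stripping affixes can be sketched with a toy suffix stripper. This is deliberately much simpler than the Porter algorithm and is not how PorterStemmer is implemented; `toy_stem` and its suffix list are hypothetical and chosen only for this illustration.

```python
def toy_stem(word, suffixes=("ing", "ed", "ly", "er", "s")):
    """Strip the first matching suffix, keeping at least three characters.

    A toy illustration of suffix stripping, not the Porter algorithm.
    """
    word = word.lower()
    for suffix in suffixes:
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[:-len(suffix)]
    return word

toy_stems = [toy_stem(w) for w in ["fishing", "fished", "fish", "pythonly"]]
print(toy_stems)
```

A real stemmer applies many more rules in several ordered steps, which is why PorterStemmer is preferable for actual text processing.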

from nltk.stem import PorterStemmer

from nltk.tokenize import sent_tokenize,word_tokenize

ps = PorterStemmer()

example_text = "Stray birds of summer come to my window to sing and fly away. And yellow leaves of autumn,which have no songs,flut
