Complete code for the ResNet18 and ResNet34 models

import tensorflow as tf
from tensorflow import keras
from tensorflow.keras import layers, Sequential


class BasicBlock(layers.Layer):
    """First residual block type: two 3x3 convolutions followed by an SE block."""

    def __init__(self, filter_num, stride=1):
        super(BasicBlock, self).__init__()
        self.conv1 = layers.Conv2D(filter_num, (3, 3), strides=stride, padding='same')
        self.bn1 = layers.BatchNormalization()
        self.relu = layers.Activation('relu')
        self.conv2 = layers.Conv2D(filter_num, (3, 3), strides=1, padding='same')
        self.bn2 = layers.BatchNormalization()
        # SE block: global pooling -> FC (reduction ratio 16) -> FC with sigmoid gate
        self.se_globalpool = keras.layers.GlobalAveragePooling2D()
        self.se_resize = keras.layers.Reshape((1, 1, filter_num))
        self.se_fc1 = keras.layers.Dense(units=filter_num // 16, activation='relu',
                                         use_bias=False)
        self.se_fc2 = keras.layers.Dense(units=filter_num, activation='sigmoid',
                                         use_bias=False)
        # Created once here rather than on every forward pass
        self.se_scale = keras.layers.Multiply()
        if stride != 1:
            # 1x1 convolution so the shortcut matches the downsampled main path
            self.downsample = Sequential()
            self.downsample.add(layers.Conv2D(filter_num, (1, 1), strides=stride))
        else:
            self.downsample = lambda x: x

    def call(self, inputs, training=None):
        out = self.conv1(inputs)
        out = self.bn1(out, training=training)
        out = self.relu(out)
        out = self.conv2(out)
        out = self.bn2(out, training=training)
        # SE block: rescale each channel of b by its learned gate in (0, 1)
        b = out
        out = self.se_globalpool(out)
        out = self.se_resize(out)
        out = self.se_fc1(out)
        out = self.se_fc2(out)
        out = self.se_scale([b, out])
        identity = self.downsample(inputs)
        output = layers.add([out, identity])
        output = tf.nn.relu(output)
        return output

class ResNet(keras.Model):
    """ResNet assembled from four stages of BasicBlock layers."""

    def __init__(self, layer_dims, num_classes=10):
        super(ResNet, self).__init__()
        # Preprocessing (stem) layers
        self.padding = keras.layers.ZeroPadding2D((3, 3))
        self.stem = Sequential([
            layers.Conv2D(64, (7, 7), strides=(2, 2)),
            layers.BatchNormalization(),
            layers.Activation('relu'),
            layers.MaxPool2D(pool_size=(3, 3), strides=(2, 2), padding='same')
        ])
        # Residual stages
        self.layer1 = self.build_resblock(64, layer_dims[0])
        self.layer2 = self.build_resblock(128, layer_dims[1], stride=2)
        self.layer3 = self.build_resblock(256, layer_dims[2], stride=2)
        self.layer4 = self.build_resblock(512, layer_dims[3], stride=2)
        # Global pooling
        self.avgpool = layers.GlobalAveragePooling2D()
        # Fully connected layer
        self.fc = layers.Dense(num_classes, activation=tf.keras.activations.softmax)

    def call(self, inputs, training=None):
        x = self.padding(inputs)
        x = self.stem(x, training=training)
        x = self.layer1(x, training=training)
        x = self.layer2(x, training=training)
        x = self.layer3(x, training=training)
        x = self.layer4(x, training=training)
        # Global pooling reduces [b, h, w, c] to [b, c]
        x = self.avgpool(x)
        x = self.fc(x)
        return x

    def build_resblock(self, filter_num, blocks, stride=1):
        res_blocks = Sequential()
        # Only the first block of a stage carries the stride
        res_blocks.add(BasicBlock(filter_num, stride))
        for _ in range(1, blocks):
            res_blocks.add(BasicBlock(filter_num, stride=1))
        return res_blocks

def ResNet18(num_classes=10):
    return ResNet([2, 2, 2, 2], num_classes=num_classes)


def ResNet34(num_classes=10):
    return ResNet([3, 4, 6, 3], num_classes=num_classes)


model = ResNet34(num_classes=1000)
model.build(input_shape=(1, 224, 224, 3))
model.summary()  # Print the network's layers and parameter counts
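To see what the `(1, 224, 224, 3)` input shape implies for the feature maps, here is a minimal pure-Python sketch that traces the spatial size through the padding layer, the stem, and the four stages. The helper names `conv_out` and `trace_spatial_size` are hypothetical and not part of the original code. The sketch assumes the usual TensorFlow rules for `'valid'` and `'same'` padding.

```python
import math


def conv_out(size, kernel, stride, padding):
    """Output length along one spatial axis for a conv or pooling layer."""
    if padding == "same":
        return math.ceil(size / stride)
    # "valid": no implicit padding
    return (size - kernel) // stride + 1


def trace_spatial_size(size=224):
    """Return (name, size) pairs for each step of the network above."""
    trace = [("input", size)]
    size += 2 * 3                                 # ZeroPadding2D((3, 3))
    trace.append(("padding", size))
    size = conv_out(size, 7, 2, "valid")          # 7x7 conv, stride 2, default 'valid'
    trace.append(("stem conv", size))
    size = conv_out(size, 3, 2, "same")           # 3x3 max pool, stride 2
    trace.append(("stem pool", size))
    stages = [("layer1", 1), ("layer2", 2), ("layer3", 2), ("layer4", 2)]
    for name, stride in stages:
        size = conv_out(size, 3, stride, "same")  # first block of the stage carries the stride
        trace.append((name, size))
    return trace


if __name__ == "__main__":
    for name, size in trace_spatial_size(224):
        print(f"{name:10s} {size}x{size}")
```

For a 224x224 input the trace ends at 7x7 after `layer4`, which is the map that `GlobalAveragePooling2D` then collapses to one value per channel.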

Complete code for ResNet50, ResNet101, and ResNet152

import tensorflow as tf
from tensorflow import keras
from tensorflow.keras import layers, Sequential


class Block(layers.Layer):
    """Second residual block type: 1x1 -> 3x3 -> 1x1 bottleneck followed by an SE block."""

    def __init__(self, filters, downsample=False, stride=1):
        super(Block, self).__init__()
        self.downsample = downsample
        self.conv1 = layers.Conv2D(filters, (1, 1), strides=stride, padding='same')
        self.bn1 = layers.BatchNormalization()
        self.relu = layers.Activation('relu')
        self.conv2 = layers.Conv2D(filters, (3, 3), strides=1, padding='same')
        self.bn2 = layers.BatchNormalization()
        self.conv3 = layers.Conv2D(4 * filters, (1, 1), strides=1, padding='same')
        self.bn3 = layers.BatchNormalization()
        # SE block: global pooling -> FC (reduction ratio 16) -> FC with sigmoid gate
        self.se_globalpool = keras.layers.GlobalAveragePooling2D()
        self.se_resize = keras.layers.Reshape((1, 1, 4 * filters))
        self.se_fc1 = keras.layers.Dense(units=4 * filters // 16, activation='relu',
                                         use_bias=False)
        self.se_fc2 = keras.layers.Dense(units=4 * filters, activation='sigmoid',
                                         use_bias=False)
        # Created once here rather than on every forward pass
        self.se_scale = keras.layers.Multiply()
        if self.downsample:
            # Projection shortcut: match the main path's channels and stride
            self.shortcut = Sequential()
            self.shortcut.add(layers.Conv2D(4 * filters, (1, 1), strides=stride))
            self.shortcut.add(layers.BatchNormalization(axis=3))

    def call(self, inputs, training=None):
        out = self.conv1(inputs)
        out = self.bn1(out, training=training)
        out = self.relu(out)
        out = self.conv2(out)
        out = self.bn2(out, training=training)
        out = self.relu(out)
        out = self.conv3(out)
        out = self.bn3(out, training=training)
        # SE block: rescale each channel of b by its learned gate in (0, 1)
        b = out
        out = self.se_globalpool(out)
        out = self.se_resize(out)
        out = self.se_fc1(out)
        out = self.se_fc2(out)
        out = self.se_scale([b, out])
        if self.downsample:
            shortcut = self.shortcut(inputs, training=training)
        else:
            shortcut = inputs
        output = layers.add([out, shortcut])
        output = tf.nn.relu(output)
        return output

class ResNet(keras.Model):
    """ResNet assembled from four stages of bottleneck Block layers."""

    def __init__(self, layer_dims, num_classes=10):
        super(ResNet, self).__init__()
        # Preprocessing (stem) layers
        self.padding = keras.layers.ZeroPadding2D((3, 3))
        self.stem = Sequential([
            layers.Conv2D(64, (7, 7), strides=(2, 2)),
            layers.BatchNormalization(),
            layers.Activation('relu'),
            layers.MaxPool2D(pool_size=(3, 3), strides=(2, 2), padding='same')
        ])
        # Residual stages
        self.layer1 = self.build_resblock(64, layer_dims[0], stride=1)
        self.layer2 = self.build_resblock(128, layer_dims[1], stride=2)
        self.layer3 = self.build_resblock(256, layer_dims[2], stride=2)
        self.layer4 = self.build_resblock(512, layer_dims[3], stride=2)
        # Global pooling
        self.avgpool = layers.GlobalAveragePooling2D()
        # Fully connected layer
        self.fc = layers.Dense(num_classes, activation=tf.keras.activations.softmax)

    def call(self, inputs, training=None):
        x = self.padding(inputs)
        x = self.stem(x, training=training)
        x = self.layer1(x, training=training)
        x = self.layer2(x, training=training)
        x = self.layer3(x, training=training)
        x = self.layer4(x, training=training)
        # Global pooling reduces [b, h, w, c] to [b, c]
        x = self.avgpool(x)
        x = self.fc(x)
        return x

    def build_resblock(self, filter_num, blocks, stride=1):
        res_blocks = Sequential()
        # The first block of every stage needs a projection shortcut: its output
        # has 4 * filter_num channels, which never equals the incoming channel
        # count (64 after the stem, then 256, 512, 1024). The original guard
        # "stride != 1 or filter_num * 4 != 64" was therefore always true.
        res_blocks.add(Block(filter_num, downsample=True, stride=stride))
        for _ in range(1, blocks):
            res_blocks.add(Block(filter_num, stride=1))
        return res_blocks
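The listing above stops at `build_resblock` and does not show constructors for the three named variants. As a hedged sketch, the stage configurations below are the standard ones from the ResNet paper; this is an assumption, since the original post does not list them. The small check confirms that they give the advertised depths when counting one stem convolution, the main-path convolutions of every block, and one fully connected layer. `BOTTLENECK_CONFIGS` and `weighted_depth` are hypothetical names introduced only for illustration.

```python
# Blocks per stage for the bottleneck variants (standard values, assumed here).
BOTTLENECK_CONFIGS = {
    "ResNet50": [3, 4, 6, 3],
    "ResNet101": [3, 4, 23, 3],
    "ResNet152": [3, 8, 36, 3],
}


def weighted_depth(layer_dims, convs_per_block=3):
    """Count the stem conv, the main-path convs of every block, and the final FC layer.

    Shortcut projections and SE layers are not counted.
    """
    return 1 + convs_per_block * sum(layer_dims) + 1


if __name__ == "__main__":
    for name, dims in BOTTLENECK_CONFIGS.items():
        print(name, dims, weighted_depth(dims))
    # The BasicBlock variants use two convolutions per block.
    print("ResNet18", weighted_depth([2, 2, 2, 2], convs_per_block=2))
    print("ResNet34", weighted_depth([3, 4, 6, 3], convs_per_block=2))
```

Under that assumption, a ResNet50 would be built with the `ResNet` class above as `ResNet(BOTTLENECK_CONFIGS["ResNet50"], num_classes=1000)`, followed by `model.build(input_shape=(1, 224, 224, 3))` as in the first listing.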

自我介绍一下,小编13年上海交大毕业,曾经在小公司待过,也去过华为、OPPO等大厂,18年进入阿里一直到现在。

深知大多数Python工程师,想要提升技能,往往是自己摸索成长或者是报班学习,但对于培训机构动则几千的学费,着实压力不小。自己不成体系的自学效果低效又漫长,而且极易碰到天花板技术停滞不前!

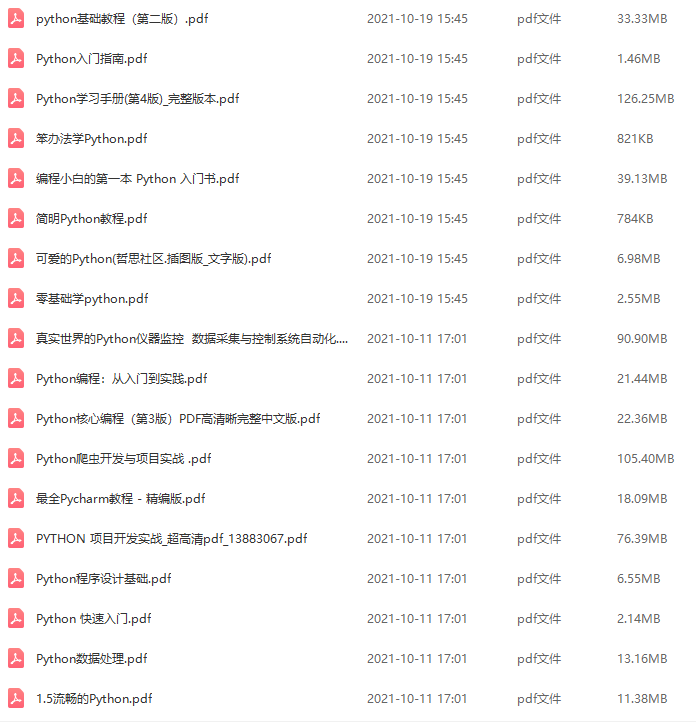

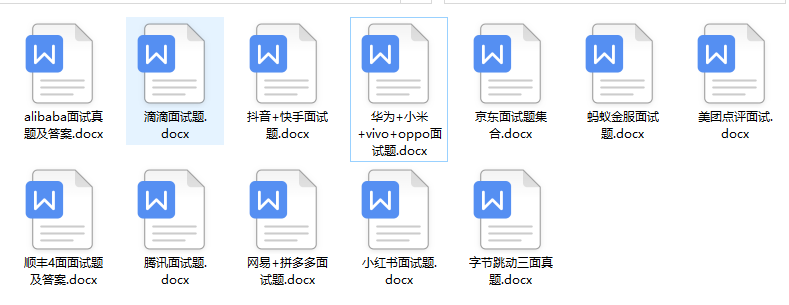

因此收集整理了一份《2024年Python开发全套学习资料》,初衷也很简单,就是希望能够帮助到想自学提升又不知道该从何学起的朋友,同时减轻大家的负担。

既有适合小白学习的零基础资料,也有适合3年以上经验的小伙伴深入学习提升的进阶课程,基本涵盖了95%以上Python开发知识点,真正体系化!

由于文件比较大,这里只是将部分目录大纲截图出来,每个节点里面都包含大厂面经、学习笔记、源码讲义、实战项目、讲解视频,并且后续会持续更新

如果你觉得这些内容对你有帮助,可以添加V获取:vip1024c (备注Python)

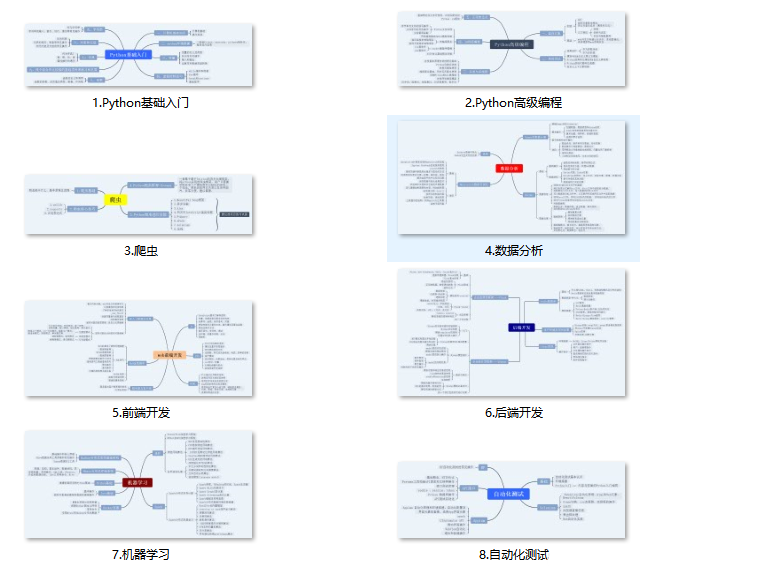

(1)Python所有方向的学习路线(新版)

这是我花了几天的时间去把Python所有方向的技术点做的整理,形成各个领域的知识点汇总,它的用处就在于,你可以按照上面的知识点去找对应的学习资源,保证自己学得较为全面。

最近我才对这些路线做了一下新的更新,知识体系更全面了。

(2)Python学习视频

包含了Python入门、爬虫、数据分析和web开发的学习视频,总共100多个,虽然没有那么全面,但是对于入门来说是没问题的,学完这些之后,你可以按照我上面的学习路线去网上找其他的知识资源进行进阶。

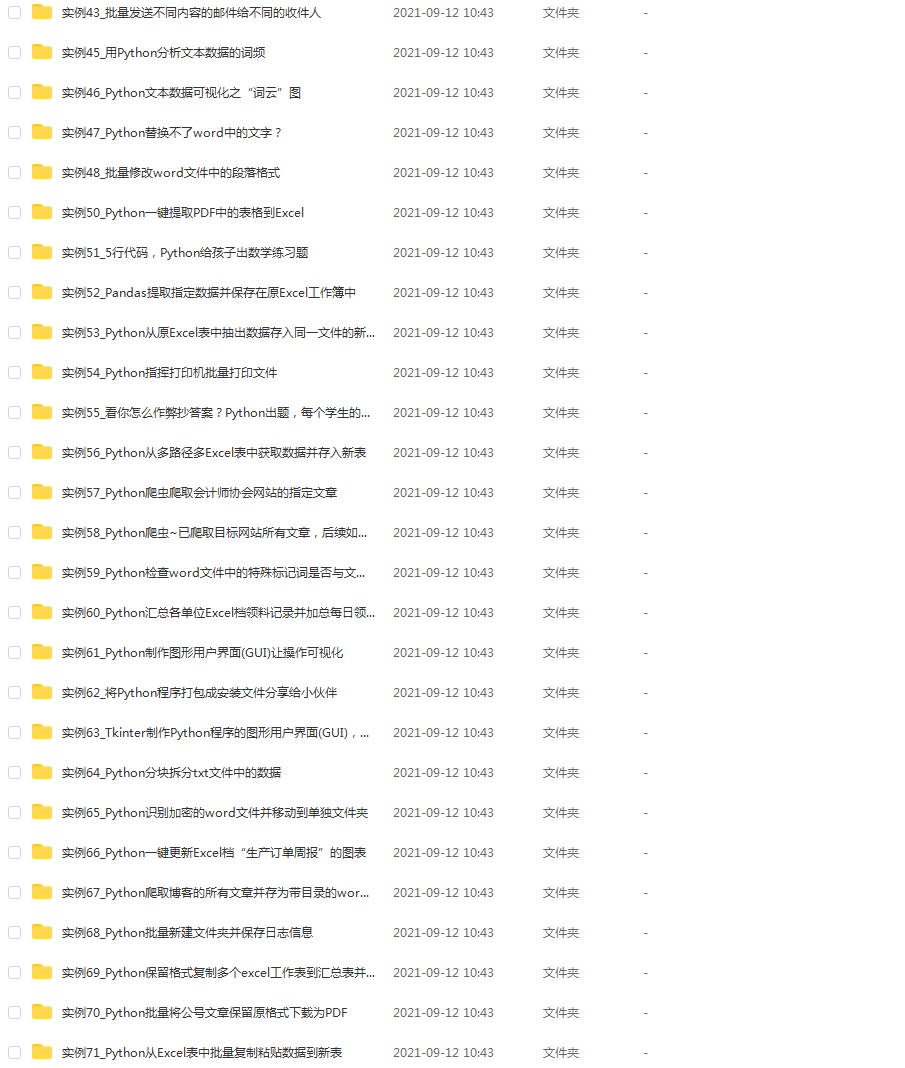

(3)100多个练手项目

我们在看视频学习的时候,不能光动眼动脑不动手,比较科学的学习方法是在理解之后运用它们,这时候练手项目就很适合了,只是里面的项目比较多,水平也是参差不齐,大家可以挑自己能做的项目去练练。
