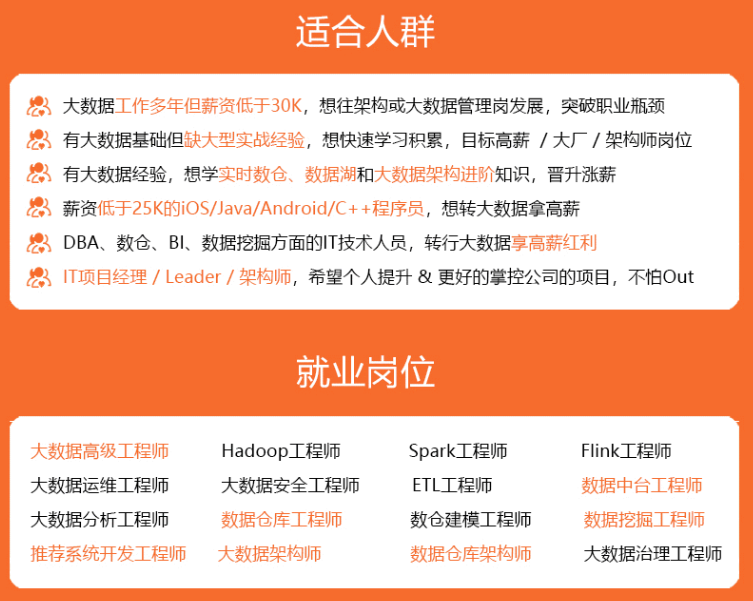

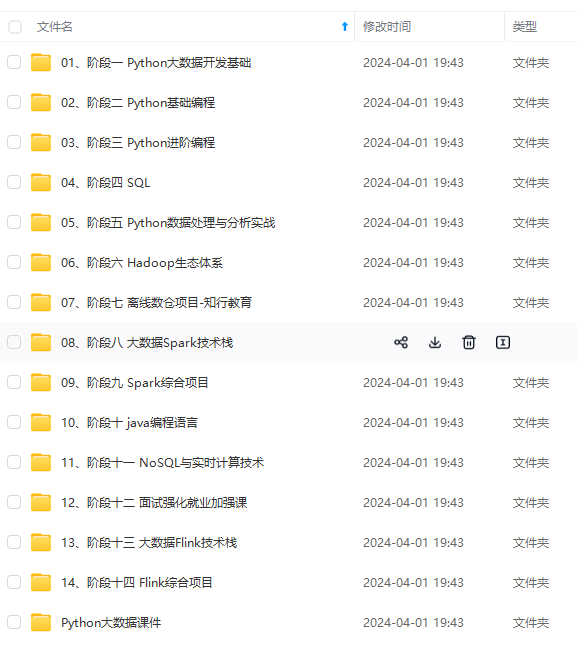

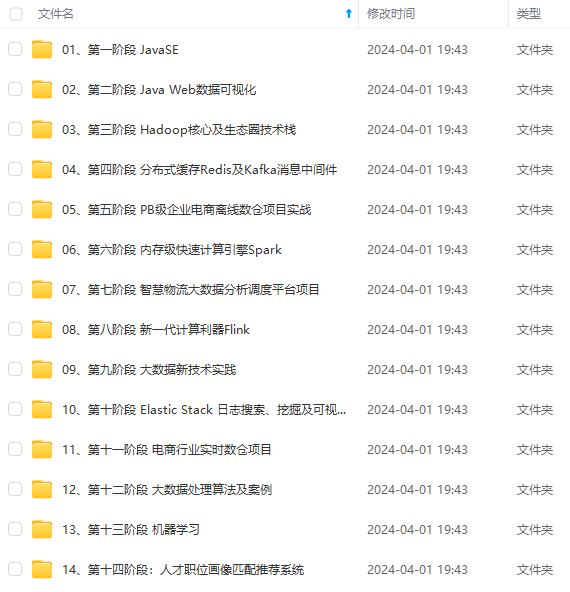

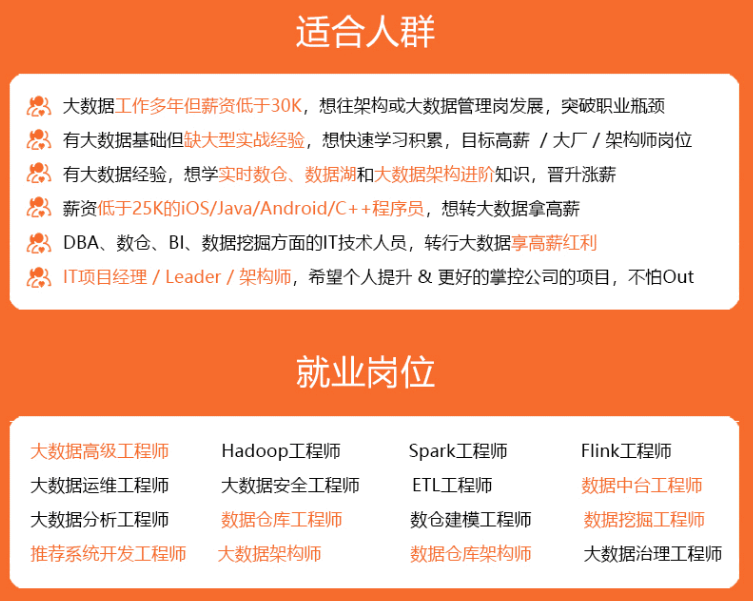

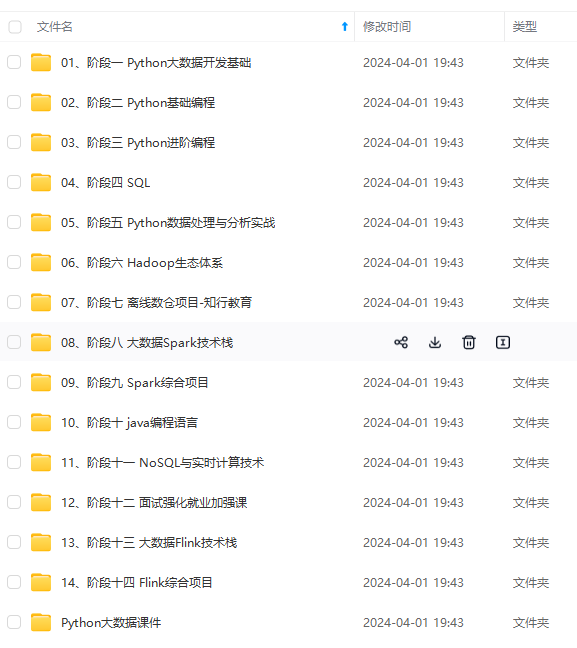

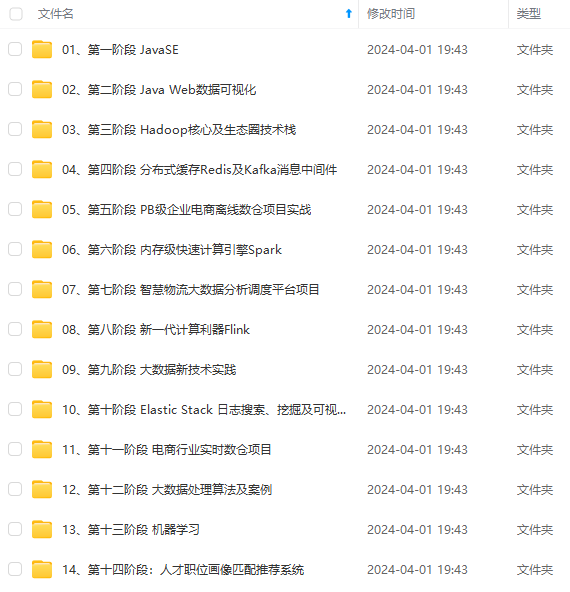

            cfg['data_root'], 1, cfg['batch_size'], shuffle=False, mode='test')
        return train_loader, val_loader, test_loader

    @staticmethod
    def build_model(cfg):
        model = Network()
        model.add(FCLayer(784, 10))
        return model

    @staticmethod
    def build_optimizer(model, cfg):
        return SGD(model, cfg['learning_rate'], cfg['momentum'])

    def train(self):
        max_epoch = self.cfg['max_epoch']
        epoch_train_loss, epoch_train_acc = [], []

        for epoch in range(max_epoch):
            iteration_train_loss, iteration_train_acc = [], []

            for iteration, (images, labels) in enumerate(self.train_loader):
                # forward pass
                logits = self.model.forward(images)
                loss, acc = self.criterion.forward(logits, labels)

                # backward pass
                delta = self.criterion.backward()
                self.model.backward(delta)

                # update the model weights
                self.optimizer.step()

                # record loss and accuracy
                iteration_train_loss.append(loss)
                iteration_train_acc.append(acc)

                # display iteration training info
                if iteration % self.cfg['display_freq'] == 0:
                    print("Epoch [{}][{}]\t Batch [{}][{}]\t Training Loss {:.4f}\t Accuracy {:.4f}".format(
                        epoch, max_epoch, iteration, len(self.train_loader), loss, acc))

            avg_train_loss, avg_train_acc = np.mean(iteration_train_loss), np.mean(iteration_train_acc)
            epoch_train_loss.append(avg_train_loss)
            epoch_train_acc.append(avg_train_acc)

            # validate
            avg_val_loss, avg_val_acc = self.validate()

            # display epoch training info
            print('\nEpoch [{}]\t Average training loss {:.4f}\t Average training accuracy {:.4f}'.format(
                epoch, avg_train_loss, avg_train_acc))

            # display epoch validation info
            print('Epoch [{}]\t Average validation loss {:.4f}\t Average validation accuracy {:.4f}\n'.format(
                epoch, avg_val_loss, avg_val_acc))

        return epoch_train_loss, epoch_train_acc

    def validate(self):
        logits_set, labels_set = [], []
        for images, labels in self.val_loader:
            logits = self.model.forward(images)
            logits_set.append(logits)
            labels_set.append(labels)

        logits = np.concatenate(logits_set)
        labels = np.concatenate(labels_set)
        loss, acc = self.criterion.forward(logits, labels)
        return loss, acc

    def test(self):
        logits_set, labels_set = [], []
        for images, labels in self.test_loader:
            logits = self.model.forward(images)
            logits_set.append(logits)
            labels_set.append(labels)

        logits = np.concatenate(logits_set)
        labels = np.concatenate(labels_set)
        loss, acc = self.criterion.forward(logits, labels)
        return loss, acc

if __name__ == '__main__':
    # You can modify the hyperparameters yourself.
    relu_cfg = {
        'data_root': 'data',
        'max_epoch': 10,
        'batch_size': 100,
        'learning_rate': 0.1,
        'momentum': 0.9,
        'display_freq': 50,
        'activation_function': 'relu',
    }

    runner = Solver(relu_cfg)
    relu_loss, relu_acc = runner.train()

    test_loss, test_acc = runner.test()
    print('Final test accuracy {:.4f}\n'.format(test_acc))

    # You can modify the hyperparameters yourself.
    sigmoid_cfg = {
        'data_root': 'data',
        'max_epoch': 10,
        'batch_size': 100,
        'learning_rate': 0.1,
        'momentum': 0.9,
        'display_freq': 50,
        'activation_function': 'sigmoid',
    }

    runner = Solver(sigmoid_cfg)
    sigmoid_loss, sigmoid_acc = runner.train()

    test_loss, test_acc = runner.test()
    print('Final test accuracy {:.4f}\n'.format(test_acc))

    plot_loss_and_acc({
        "relu": [relu_loss, relu_acc],
        "sigmoid": [sigmoid_loss, sigmoid_acc],
    })
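The configs above pass `learning_rate` and `momentum` to an `SGD` optimizer whose source is not shown in this section. As a rough illustration of what those two hyperparameters control, here is a minimal sketch of the classical momentum update. The function name `sgd_momentum_step` and its signature are hypothetical, and the actual `SGD` class may use a different formulation.

```python
import numpy as np

def sgd_momentum_step(weights, grad, velocity, learning_rate=0.1, momentum=0.9):
    """One classical-momentum update (hypothetical helper, not the post's SGD class).

    The velocity keeps a decaying sum of past gradient steps, and the
    weights move along the velocity instead of the raw gradient.
    """
    velocity = momentum * velocity - learning_rate * grad
    return weights + velocity, velocity

# Toy problem: minimise f(w) = 0.5 * ||w||^2, whose gradient is w itself.
w = np.array([1.0, -2.0])
v = np.zeros_like(w)
for _ in range(200):
    w, v = sgd_momentum_step(w, w, v)

print(np.linalg.norm(w))  # shrinks towards 0 as the iterations proceed
```

With `momentum=0` this reduces to plain gradient descent, which is a quick way to compare the two behaviours on the same toy problem.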

dataloader.py

import os
import struct
import numpy as np


class Dataset(object):
    def __init__(self, data_root, mode='train', num_classes=10):
        assert mode in ['train', 'val', 'test']

        # load images and labels
        kind = {'train': 'train', 'val': 'train', 'test': 't10k'}[mode]
        labels_path = os.path.join(data_root, '{}-labels-idx1-ubyte'.format(kind))
        images_path = os.path.join(data_root, '{}-images-idx3-ubyte'.format(kind))

        with open(labels_path, 'rb') as lbpath:
            magic, n = struct.unpack('>II', lbpath.read(8))
            labels = np.fromfile(lbpath, dtype=np.uint8)

        with open(images_path, 'rb') as imgpath:
            magic, num, rows, cols = struct.unpack('>IIII', imgpath.read(16))
            images = np.fromfile(imgpath, dtype=np.uint8).reshape(len(labels), 784)

        if mode == 'train':
            # training images and labels
            self.images = images[:55000]  # shape: (55000, 784)
            self.labels = labels[:55000]  # shape: (55000,)
        elif mode == 'val':
            # validation images and labels
            self.images = images[55000:]  # shape: (5000, 784)
            self.labels = labels[55000:]  # shape: (5000,)
        else:
            # test data
            self.images = images  # shape: (10000, 784)
            self.labels = labels  # shape: (10000,)

        self.num_classes = num_classes

    def __len__(self):
        return len(self.images)

    def __getitem__(self, idx):
        image = self.images[idx]
        label = self.labels[idx]

        # Normalize from [0, 255] to [0.0, 1.0], then subtract the per-image mean
        image = image / 255.0
        image = image - np.mean(image)

        return image, label
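The `Dataset` class reads the MNIST IDX files by unpacking a big-endian header with `struct.unpack` and treating the remaining bytes as `uint8` pixels. The sketch below shows the same header layout on a tiny in-memory stream so it can be run without the data files. The helper name `read_idx_images` and the fake two-image payload are made up for illustration; the magic number 2051 is the value the IDX format uses for image files.

```python
import io
import struct
import numpy as np

def read_idx_images(stream):
    """Parse an IDX3 image stream into an array of shape (num, rows * cols).

    Hypothetical helper for illustration; it mirrors the header handling
    in Dataset.__init__ but reads from any binary stream.
    """
    # Header: four big-endian unsigned 32-bit ints.
    magic, num, rows, cols = struct.unpack('>IIII', stream.read(16))
    if magic != 2051:  # 0x00000803: unsigned-byte data with 3 dimensions
        raise ValueError('unexpected magic number: {}'.format(magic))
    pixels = np.frombuffer(stream.read(num * rows * cols), dtype=np.uint8)
    return pixels.reshape(num, rows * cols)

# Build a tiny fake file in memory: two 2x2 images with pixel values 0..7.
fake = struct.pack('>IIII', 2051, 2, 2, 2) + bytes(range(8))
images = read_idx_images(io.BytesIO(fake))
print(images.shape)
```

Checking the magic number is optional, but it catches the common mistake of pointing the loader at a still-compressed `.gz` file.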

class IterationBatchSampler(object):
    def __init__(self, dataset, max_epoch, batch_size=2, shuffle=True):
        self.dataset = dataset
        self.batch_size = batch_size
        self.shuffle = shuffle

    def prepare_epoch_indices(self):
        indices = np.arange(len(self.dataset))

        if self.shuffle:
            np.random.shuffle(indices)

        # Cut after every batch_size indices; the last batch may be smaller.
        # (np.split with an integer count fails when the dataset size is not
        # a multiple of batch_size.)
        split_points = np.arange(self.batch_size, len(indices), self.batch_size)
        self.batch_indices = np.split(indices, split_points)

    def __iter__(self):
        return iter(self.batch_indices)

    def __len__(self):
        return len(self.batch_indices)

class Dataloader(object):
    def __init__(self, dataset, sampler):
        self.dataset = dataset
        self.sampler = sampler

    def __iter__(self):
        self.sampler.prepare_epoch_indices()

        for batch_indices in self.sampler:
            batch_images = []
            batch_labels = []
            for idx in batch_indices:
                img, label = self.dataset[idx]
                batch_images.append(img)
                batch_labels.append(label)

            batch_images = np.stack(batch_images)
            batch_labels = np.stack(batch_labels)

            yield batch_images, batch_labels

    def __len__(self):
        return len(self.sampler)


def build_dataloader(data_root, max_epoch, batch_size, shuffle=False, mode='train'):
    dataset = Dataset(data_root, mode)
    sampler = IterationBatchSampler(dataset, max_epoch, batch_size, shuffle)
    data_loader = Dataloader(dataset, sampler)
    return data_loader
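The sampler's job is to turn a (possibly shuffled) index array into per-batch index arrays. One detail worth seeing in isolation: `np.split` with an integer section count raises an error unless the array divides evenly, whereas passing explicit cut points allows a shorter final batch. The sketch below illustrates the cut-point form. The function `split_into_batches` and its `seed` parameter are assumptions for this example and are not part of the original module.

```python
import numpy as np

def split_into_batches(num_samples, batch_size, shuffle=False, seed=None):
    """Return a list of index arrays, each of length batch_size except
    possibly the last (hypothetical helper for illustration)."""
    indices = np.arange(num_samples)
    if shuffle:
        np.random.default_rng(seed).shuffle(indices)
    # Cut points at batch_size, 2 * batch_size, ... strictly below num_samples.
    cut_points = np.arange(batch_size, num_samples, batch_size)
    return np.split(indices, cut_points)

batches = split_into_batches(10, 4)
sizes = [len(b) for b in batches]
print(sizes)  # [4, 4, 2]
```

With the sizes used in this post (55000 training samples, batch size 100) the split is even either way, so the difference only shows up for other batch sizes.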

loss.py

import numpy as np

# a small number to prevent dividing by zero, maybe useful for you
EPS = 1e-11


class SoftmaxCrossEntropyLoss(object):

    def forward(self, logits, labels):
        """
        Inputs: (minibatch)
        - logits: forward results from the last FCLayer, shape (batch_size, 10)
        - labels: the ground truth label, shape (batch_size, )
        """

        ############################################################################
        # TODO: Put your code here
        # Calculate the average accuracy and loss over the minibatch
        # Return the loss and acc, which will be used in solver.py
        # Hint: Maybe you need to save some arrays for backward

        self.one_hot_labels = np.zeros_like(logits)
        self.one_hot_labels[np.arange(len(logits)), labels] = 1

        # subtract the row-wise max before exponentiating so np.exp cannot
        # overflow; the softmax output is unchanged by this shift
        shifted = logits - np.max(logits, axis=1, keepdims=True)
        self.prob = np.exp(shifted) / (EPS + np.exp(shifted).sum(axis=1, keepdims=True))

        # calculate the accuracy
        preds = np.argmax(self.prob, axis=1)  # self.prob, not logits.
        acc = np.mean(preds == labels)

        # calculate the loss
        loss = np.sum(-self.one_hot_labels * np.log(self.prob + EPS), axis=1)
        loss = np.mean(loss)
        ############################################################################

        return loss, acc

    def backward(self):
        ############################################################################
        # TODO: Put your code here
        # Calculate and return the gradient (have the same shape as logits)

        return self.prob - self.one_hot_labels
        ############################################################################
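The `backward` method returns `prob - one_hot_labels`, which is the gradient of the per-sample cross-entropy losses summed over the batch with respect to the logits. Since `forward` reports the mean loss, the gradient of that reported value would carry an extra `1 / batch_size` factor; whether that factor is applied elsewhere (for example in the layer or the optimizer) is not visible in this section. A finite-difference check is one way to confirm the formula. The sketch below is a standalone re-implementation for testing purposes, with hypothetical function names, and not the class above.

```python
import numpy as np

def softmax_cross_entropy(logits, labels):
    """Return per-sample losses and the analytic gradient prob - one_hot."""
    shifted = logits - logits.max(axis=1, keepdims=True)
    prob = np.exp(shifted) / np.exp(shifted).sum(axis=1, keepdims=True)
    rows = np.arange(len(labels))
    per_sample = -np.log(prob[rows, labels])
    one_hot = np.zeros_like(logits)
    one_hot[rows, labels] = 1
    return per_sample, prob - one_hot

def numerical_grad(logits, labels, h=1e-6):
    """Central-difference gradient of the summed loss w.r.t. each logit."""
    grad = np.zeros_like(logits)
    for idx in np.ndindex(*logits.shape):
        plus, minus = logits.copy(), logits.copy()
        plus[idx] += h
        minus[idx] -= h
        grad[idx] = (softmax_cross_entropy(plus, labels)[0].sum()
                     - softmax_cross_entropy(minus, labels)[0].sum()) / (2 * h)
    return grad

rng = np.random.default_rng(0)
logits = rng.normal(size=(4, 10))
labels = rng.integers(0, 10, size=4)

_, analytic = softmax_cross_entropy(logits, labels)
max_error = np.abs(analytic - numerical_grad(logits, labels)).max()
print(max_error)  # should be tiny if the analytic gradient is right
```

Each row of the analytic gradient sums to zero, because the softmax probabilities and the one-hot vector both sum to one; that is a second cheap sanity check.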

network.py

class Network(object):
    def __init__(self):
        self.layerList = []
        self.numLayer = 0

    def add(self, layer):
        self.numLayer += 1
        self.layerList.append(layer)
