
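The http.cookiejar module is large. Partway through, I realized it reads best from the bottom up: the later a definition appears, the closer it is to what users actually call, and the earlier it appears, the closer it is to the underlying standards.

So this overview starts from the end.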

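MozillaCookieJar and LWPCookieJar are both subclasses of FileCookieJar. They implement the concrete methods for saving cookies to a file. The two classes differ only in the file format they follow: the Mozilla cookies.txt format and the libwww-perl Set-Cookie3 format, respectively.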
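As a rough illustration of the two file-backed jars, the sketch below saves one cookie with each class and loads it back into a fresh jar. The helper names `make_cookie` and `round_trip`, the file names, and the cookie values are made up for this example; the far-future expiry is only there so that `save()` does not skip the cookie as a session or expired cookie.

```python
import os
import tempfile
from http.cookiejar import Cookie, LWPCookieJar, MozillaCookieJar


def make_cookie(name, value, domain="example.com"):
    """Build a minimal persistent cookie (hypothetical helper for this sketch)."""
    return Cookie(
        version=0, name=name, value=value,
        port=None, port_specified=False,
        domain=domain, domain_specified=False, domain_initial_dot=False,
        path="/", path_specified=True,
        secure=False,
        expires=4102444800,  # 2100-01-01 UTC, so the cookie is not treated as expired
        discard=False,       # session cookies are skipped by save() by default
        comment=None, comment_url=None,
        rest={},
    )


def round_trip(jar_class, filename):
    """Save one cookie with jar_class, then load it into a fresh jar."""
    jar = jar_class(filename)
    jar.set_cookie(make_cookie("session", "abc123"))
    jar.save()

    fresh = jar_class(filename)
    fresh.load()
    return [(c.name, c.value, c.domain) for c in fresh]


with tempfile.TemporaryDirectory() as tmp:
    mozilla_result = round_trip(MozillaCookieJar, os.path.join(tmp, "cookies.txt"))
    lwp_result = round_trip(LWPCookieJar, os.path.join(tmp, "cookies.lwp"))

print(mozilla_result)
print(lwp_result)
```

Both jars expose the same `save()` and `load()` calls, so switching formats is just a matter of switching the class.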

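FileCookieJar clearly exists to coordinate file-backed cookies. It defines the standard file operations and leaves the format-specific work to its subclasses.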
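A small sketch of that division of labour, assuming only the public `http.cookiejar` classes: the base class accepts a filename but cannot write a file itself, because `save()` is left to the subclasses. The filename is hypothetical and is never written.

```python
from http.cookiejar import FileCookieJar, LWPCookieJar, MozillaCookieJar

# Both concrete jars inherit from FileCookieJar.
subclass_flags = [issubclass(cls, FileCookieJar)
                  for cls in (MozillaCookieJar, LWPCookieJar)]

# The base class stores the filename but knows no file format,
# so calling save() on it directly raises NotImplementedError.
base = FileCookieJar("cookies-unused.txt")  # hypothetical filename, never written
try:
    base.save()
    base_can_save = True
except NotImplementedError:
    base_can_save = False

print(subclass_flags, base.filename, base_can_save)
```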

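The real abstraction is CookieJar. At this point a detailed study of CookieJar is probably unnecessary; learning it as you use it is enough.

CookieJar is a manager-style wrapper around the Cookie class.

Cookie holds the actual content. It is closer to a data structure, with a few very basic methods.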
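To make the "data structure plus manager" split concrete, here is a minimal sketch that builds two Cookie objects by hand and lets a CookieJar store, iterate over, and clear them. The helper `make_cookie` and all cookie names, values, and domains are invented for illustration. In normal use the jar creates cookies from HTTP responses rather than by hand.

```python
from http.cookiejar import Cookie, CookieJar


def make_cookie(name, value, domain):
    """Build a simple session cookie (hypothetical helper for this sketch)."""
    return Cookie(
        version=0, name=name, value=value,
        port=None, port_specified=False,
        domain=domain, domain_specified=False, domain_initial_dot=False,
        path="/", path_specified=True,
        secure=False,
        expires=None,   # no expiry time: a session cookie
        discard=True,
        comment=None, comment_url=None,
        rest={},
    )


jar = CookieJar()
jar.set_cookie(make_cookie("theme", "dark", "example.com"))
jar.set_cookie(make_cookie("lang", "en", "example.org"))

# The jar is iterable and sized; each item is a plain Cookie object.
names = sorted(cookie.name for cookie in jar)
count_before = len(jar)
first_as_text = str(next(iter(jar)))
any_expired = any(cookie.is_expired() for cookie in jar)

# clear() with a domain removes every cookie stored for that domain.
jar.clear("example.org")
count_after = len(jar)

print(names, count_before, count_after, first_as_text, any_expired)
```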

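Then come the policy classes. CookiePolicy defines the policy interface.

DefaultCookiePolicy is the class that actually implements the standard rules. I don't fully understand these policies yet; this part is already quite abstract.

I'll dig deeper when I actually need them.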
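One part of the policy that is easy to try out is the domain block-list and allow-list. The sketch below is only a small illustration with made-up domains. It relies on the `is_blocked` and `is_not_allowed` methods that appear in the source listing, where an entry with a leading dot matches any host ending in it and an entry without a dot must match exactly.

```python
from http.cookiejar import CookieJar, DefaultCookiePolicy

# Hypothetical domains, chosen only for this example.
policy = DefaultCookiePolicy(
    blocked_domains=[".ads.example.com", "tracker.example.net"],
)
jar = CookieJar(policy)  # the jar consults this policy when setting/returning cookies

blocked_checks = {
    host: policy.is_blocked(host)
    for host in (
        "banner.ads.example.com",   # ends with ".ads.example.com"
        "tracker.example.net",      # exact match of an undotted entry
        "sub.tracker.example.net",  # undotted entries do not match subdomains
        "www.example.com",          # not listed at all
    )
}

# With no allow-list configured, nothing counts as "not allowed".
not_allowed_default = policy.is_not_allowed("example.org")

# Once an allow-list is set, every domain outside it is rejected.
policy.set_allowed_domains([".example.com"])
not_allowed_after = {
    host: policy.is_not_allowed(host)
    for host in ("www.example.com", "example.org")
}

print(blocked_checks, not_allowed_default, not_allowed_after)
```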

Below is the source with my comments added. It reads more comfortably from the end.

r"""HTTP cookie handling for web clients.

This module has (now fairly distant) origins in Gisle Aas' Perl module
HTTP::Cookies, from the libwww-perl library.

Docstrings, comments and debug strings in this code refer to the
attributes of the HTTP cookie system as cookie-attributes, to distinguish
them clearly from Python attributes.

Class diagram (note that BSDDBCookieJar and the MSIE* classes are not
distributed with the Python standard library, but are available from
http://wwwsearch.sf.net/):

                        CookieJar____
                        /     \      \
            FileCookieJar      \      \
             /    |   \         \      \
 MozillaCookieJar | LWPCookieJar \      \
                  |               |      \
                  |   ---MSIEBase |       \
                  |  /      |     |        \
                  | /   MSIEDBCookieJar BSDDBCookieJar
                  |/
               MSIECookieJar

"""

# Exported classes, functions, etc.
__all__ = ['Cookie', 'CookieJar', 'CookiePolicy', 'DefaultCookiePolicy',
           'FileCookieJar', 'LWPCookieJar', 'LoadError', 'MozillaCookieJar']

import copy

import datetime

import re

import time

import urllib.parse

try:
    import threading as _threading  # probably a cross-platform compatibility fallback
except ImportError:
    import dummy_threading as _threading

import http.client # only for the default HTTP port

from calendar import timegm  # calendar module; timegm turns a UTC time tuple into epoch seconds

# In debug mode some extra diagnostic messages are emitted
debug = False   # set to True to enable debugging via the logging module
logger = None

# The logging module supports different levels, output formats, output targets, etc.
def _debug(*args):
    if not debug:
        return
    global logger
    if not logger:
        import logging
        logger = logging.getLogger("http.cookiejar")
    return logger.debug(*args)

# Default HTTP port
DEFAULT_HTTP_PORT = str(http.client.HTTP_PORT)
MISSING_FILENAME_TEXT = ("a filename was not supplied (nor was the CookieJar "
                         "instance initialised with one)")

# Report an unexpectedly caught exception as a warning
def _warn_unhandled_exception():
    # There are a few catch-all except: statements in this module, for
    # catching input that's bad in unexpected ways.  Warn if any
    # exceptions are caught there.
    import io, warnings, traceback
    f = io.StringIO()
    traceback.print_exc(None, f)
    msg = f.getvalue()
    warnings.warn("http.cookiejar bug!\n%s" % msg, stacklevel=2)

# Date/time conversion
# -----------------------------------------------------------------------------

EPOCH_YEAR = 1970
def _timegm(tt):
    year, month, mday, hour, min, sec = tt[:6]
    if ((year >= EPOCH_YEAR) and (1 <= month <= 12) and (1 <= mday <= 31) and
        (0 <= hour <= 24) and (0 <= min <= 59) and (0 <= sec <= 61)):
        return timegm(tt)
    else:
        return None

DAYS = ["Mon", "Tue", "Wed", "Thu", "Fri", "Sat", "Sun"]
MONTHS = ["Jan", "Feb", "Mar", "Apr", "May", "Jun",
          "Jul", "Aug", "Sep", "Oct", "Nov", "Dec"]
MONTHS_LOWER = []
for month in MONTHS: MONTHS_LOWER.append(month.lower())

# Return the time as a string in a fixed, ISO-like UTC format
def time2isoz(t=None):
    """Return a string representing time in seconds since epoch, t.

    If the function is called without an argument, it will use the current
    time.

    The format of the returned string is like "YYYY-MM-DD hh:mm:ssZ",
    representing Universal Time (UTC, aka GMT).  An example of this format is:

    1994-11-24 08:49:37Z

    """
    if t is None:
        dt = datetime.datetime.utcnow()
    else:
        dt = datetime.datetime.utcfromtimestamp(t)
    return "%04d-%02d-%02d %02d:%02d:%02dZ" % (
        dt.year, dt.month, dt.day, dt.hour, dt.minute, dt.second)

# Return the time as a string in the fixed Netscape cookie format
def time2netscape(t=None):
    """Return a string representing time in seconds since epoch, t.

    If the function is called without an argument, it will use the current
    time.

    The format of the returned string is like this:

    Wed, DD-Mon-YYYY HH:MM:SS GMT

    """
    if t is None:
        dt = datetime.datetime.utcnow()
    else:
        dt = datetime.datetime.utcfromtimestamp(t)
    return "%s %02d-%s-%04d %02d:%02d:%02d GMT" % (
        DAYS[dt.weekday()], dt.day, MONTHS[dt.month-1],
        dt.year, dt.hour, dt.minute, dt.second)

UTC_ZONES = {"GMT": None, "UTC": None, "UT": None, "Z": None}

TIMEZONE_RE = re.compile(r"^([-+])?(\d\d?):?(\d\d)?$", re.ASCII)

def offset_from_tz_string(tz):

offset = None

if tz in UTC_ZONES:

offset = 0

else:

m = TIMEZONE_RE.search(tz)

if m:

offset = 3600 * int(m.group(2))

if m.group(3):

offset = offset + 60 * int(m.group(3))

if m.group(1) == '-':

offset = -offset

return offset

# Convert the parsed date/time fields (strings) into seconds since the epoch
def _str2time(day, mon, yr, hr, min, sec, tz):
    # translate month name to number
    # month numbers start with 1 (January)
    try:
        mon = MONTHS_LOWER.index(mon.lower())+1
    except ValueError:
        # maybe it's already a number
        try:
            imon = int(mon)
        except ValueError:
            return None
        if 1 <= imon <= 12:
            mon = imon
        else:
            return None

    # make sure clock elements are defined
    if hr is None: hr = 0
    if min is None: min = 0
    if sec is None: sec = 0

    yr = int(yr)
    day = int(day)
    hr = int(hr)
    min = int(min)
    sec = int(sec)

    if yr < 1000:
        # find "obvious" year
        cur_yr = time.localtime(time.time())[0]
        m = cur_yr % 100
        tmp = yr
        yr = yr + cur_yr - m
        m = m - tmp
        if abs(m) > 50:
            if m > 0: yr = yr + 100
            else: yr = yr - 100

    # convert UTC time tuple to seconds since epoch (not timezone-adjusted)
    t = _timegm((yr, mon, day, hr, min, sec, tz))

    if t is not None:
        # adjust time using timezone string, to get absolute time since epoch
        if tz is None:
            tz = "UTC"
        tz = tz.upper()
        offset = offset_from_tz_string(tz)
        if offset is None:
            return None
        t = t - offset

    return t

STRICT_DATE_RE = re.compile(

r"^[SMTWF][a-z][a-z], (\d\d) ([JFMASOND][a-z][a-z]) "

"(\d\d\d\d) (\d\d):(\d\d):(\d\d) GMT$", re.ASCII)

WEEKDAY_RE = re.compile(

r"^(?:Sun|Mon|Tue|Wed|Thu|Fri|Sat)[a-z]*,?\s*", re.I | re.ASCII)

LOOSE_HTTP_DATE_RE = re.compile(

r"""^

(\d\d?) # day

(?:\s+|[-\/])

(\w+) # month

(?:\s+|[-\/])

(\d+) # year

(?:

(?:\s+|:) # separator before clock

(\d\d?):(\d\d) # hour:min

(?::(\d\d))? # optional seconds

)? # optional clock

\s*

([-+]?\d{2,4}|(?![APap][Mm]\b)[A-Za-z]+)? # timezone

\s*

(?:\(\w+\))? # ASCII representation of timezone in parens.

\s*$""", re.X | re.ASCII)

def http2time(text):

"""Returns time in seconds since epoch of time represented by a string.

Return value is an integer.

None is returned if the format of str is unrecognized, the time is outside

the representable range, or the timezone string is not recognized. If the

string contains no timezone, UTC is assumed.

The timezone in the string may be numerical (like "-0800" or "+0100") or a

string timezone (like "UTC", "GMT", "BST" or "EST"). Currently, only the

timezone strings equivalent to UTC (zero offset) are known to the function.

The function loosely parses the following formats:

Wed, 09 Feb 1994 22:23:32 GMT -- HTTP format

Tuesday, 08-Feb-94 14:15:29 GMT -- old rfc850 HTTP format

Tuesday, 08-Feb-1994 14:15:29 GMT -- broken rfc850 HTTP format

09 Feb 1994 22:23:32 GMT -- HTTP format (no weekday)

08-Feb-94 14:15:29 GMT -- rfc850 format (no weekday)

08-Feb-1994 14:15:29 GMT -- broken rfc850 format (no weekday)

The parser ignores leading and trailing whitespace. The time may be

absent.

If the year is given with only 2 digits, the function will select the

century that makes the year closest to the current date.

"""

# fast exit for strictly conforming string

m = STRICT_DATE_RE.search(text)

if m:

g = m.groups()

mon = MONTHS_LOWER.index(g[1].lower()) + 1

tt = (int(g[2]), mon, int(g[0]),

int(g[3]), int(g[4]), float(g[5]))

return _timegm(tt)

# No, we need some messy parsing...

# clean up

text = text.lstrip()

text = WEEKDAY_RE.sub("", text, 1) # Useless weekday

# tz is time zone specifier string

day, mon, yr, hr, min, sec, tz = [None]*7

# loose regexp parse

m = LOOSE_HTTP_DATE_RE.search(text)

if m is not None:

day, mon, yr, hr, min, sec, tz = m.groups()

else:

return None # bad format

return _str2time(day, mon, yr, hr, min, sec, tz)

ISO_DATE_RE = re.compile(

"""^

(\d{4}) # year

[-\/]?

(\d\d?) # numerical month

[-\/]?

(\d\d?) # day

(?:

(?:\s+|[-:Tt]) # separator before clock

(\d\d?):?(\d\d) # hour:min

(?::?(\d\d(?:\.\d*)?))? # optional seconds (and fractional)

)? # optional clock

\s*

([-+]?\d\d?:?(:?\d\d)?

|Z|z)? # timezone (Z is "zero meridian", i.e. GMT)

\s*$""", re.X | re. ASCII)

def iso2time(text):

"""

As for http2time, but parses the ISO 8601 formats:

1994-02-03 14:15:29 -0100 -- ISO 8601 format

1994-02-03 14:15:29 -- zone is optional

1994-02-03 -- only date

1994-02-03T14:15:29 -- Use T as separator

19940203T141529Z -- ISO 8601 compact format

19940203 -- only date

"""

# clean up

text = text.lstrip()

# tz is time zone specifier string

day, mon, yr, hr, min, sec, tz = [None]*7

# loose regexp parse

m = ISO_DATE_RE.search(text)

if m is not None:

# XXX there's an extra bit of the timezone I'm ignoring here: is

# this the right thing to do?

yr, mon, day, hr, min, sec, tz, _ = m.groups()

else:

return None # bad format

return _str2time(day, mon, yr, hr, min, sec, tz)

# Header parsing

# -----------------------------------------------------------------------------

# Return the part of the string that the match does not cover
def unmatched(match):
    """Return unmatched part of re.Match object."""
    start, end = match.span(0)
    return match.string[:start]+match.string[end:]

HEADER_TOKEN_RE = re.compile(r"^\s*([^=\s;,]+)")

HEADER_QUOTED_VALUE_RE = re.compile(r"^\s*=\s*\"([^\"\\]*(?:\\.[^\"\\]*)*)\"")

HEADER_VALUE_RE = re.compile(r"^\s*=\s*([^\s;,]*)")

HEADER_ESCAPE_RE = re.compile(r"\\(.)")

def split_header_words(header_values):

r"""Parse header values into a list of lists containing key,value pairs.

The function knows how to deal with ",", ";" and "=" as well as quoted

values after "=". A list of space separated tokens are parsed as if they

were separated by ";".

If the header_values passed as argument contains multiple values, then they

are treated as if they were a single value separated by comma ",".

This means that this function is useful for parsing header fields that

follow this syntax (BNF as from the HTTP/1.1 specification, but we relax

the requirement for tokens).

headers = #header

header = (token | parameter) *( [";"] (token | parameter))

token = 1*<any CHAR except CTLs or separators>

separators = "(" | ")" | "<" | ">" | "@"

| "," | ";" | ":" | "\" | <">

| "/" | "[" | "]" | "?" | "="

| "{" | "}" | SP | HT

quoted-string = ( <"> *(qdtext | quoted-pair ) <"> )

qdtext = <any TEXT except <">>

quoted-pair = "\" CHAR

parameter = attribute "=" value

attribute = token

value = token | quoted-string

Each header is represented by a list of key/value pairs. The value for a

simple token (not part of a parameter) is None. Syntactically incorrect

headers will not necessarily be parsed as you would want.

This is easier to describe with some examples:

>>> split_header_words(['foo="bar"; port="80,81"; discard, bar=baz'])

[[('foo', 'bar'), ('port', '80,81'), ('discard', None)], [('bar', 'baz')]]

>>> split_header_words(['text/html; charset="iso-8859-1"'])

[[('text/html', None), ('charset', 'iso-8859-1')]]

>>> split_header_words([r'Basic realm="\"foo\bar\""'])

[[('Basic', None), ('realm', '"foobar"')]]

"""

assert not isinstance(header_values, str)

result = []

for text in header_values:

orig_text = text

pairs = []

while text:

m = HEADER_TOKEN_RE.search(text)

if m:

text = unmatched(m)

name = m.group(1)

m = HEADER_QUOTED_VALUE_RE.search(text)

if m: # quoted value

text = unmatched(m)

value = m.group(1)

value = HEADER_ESCAPE_RE.sub(r"\1", value)

else:

m = HEADER_VALUE_RE.search(text)

if m: # unquoted value

text = unmatched(m)

value = m.group(1)

value = value.rstrip()

else:

# no value, a lone token

value = None

pairs.append((name, value))

elif text.lstrip().startswith(","):

# concatenated headers, as per RFC 2616 section 4.2

text = text.lstrip()[1:]

if pairs: result.append(pairs)

pairs = []

else:

# skip junk

non_junk, nr_junk_chars = re.subn("^[=\s;]*", "", text)

assert nr_junk_chars > 0, (

"split_header_words bug: '%s', '%s', %s" %

(orig_text, text, pairs))

text = non_junk

if pairs: result.append(pairs)

return result

HEADER_JOIN_ESCAPE_RE = re.compile(r"([\"\\])")

def join_header_words(lists):

"""Do the inverse (almost) of the conversion done by split_header_words.

Takes a list of lists of (key, value) pairs and produces a single header

value. Attribute values are quoted if needed.

>>> join_header_words([[("text/plain", None), ("charset", "iso-8859/1")]])

'text/plain; charset="iso-8859/1"'

>>> join_header_words([[("text/plain", None)], [("charset", "iso-8859/1")]])

'text/plain, charset="iso-8859/1"'

"""

headers = []

for pairs in lists:

attr = []

for k, v in pairs:

if v is not None:

if not re.search(r"^\w+$", v):

v = HEADER_JOIN_ESCAPE_RE.sub(r"\\\1", v) # escape " and \

v = '"%s"' % v

k = "%s=%s" % (k, v)

attr.append(k)

if attr: headers.append("; ".join(attr))

return ", ".join(headers)

def strip_quotes(text):

if text.startswith('"'):

text = text[1:]

if text.endswith('"'):

text = text[:-1]

return text

def parse_ns_headers(ns_headers):

"""Ad-hoc parser for Netscape protocol cookie-attributes.

The old Netscape cookie format for Set-Cookie can for instance contain

an unquoted "," in the expires field, so we have to use this ad-hoc

parser instead of split_header_words.

XXX This may not make the best possible effort to parse all the crap

that Netscape Cookie headers contain. Ronald Tschalar's HTTPClient

parser is probably better, so could do worse than following that if

this ever gives any trouble.

Currently, this is also used for parsing RFC 2109 cookies.

"""

known_attrs = ("expires", "domain", "path", "secure",

# RFC 2109 attrs (may turn up in Netscape cookies, too)

"version", "port", "max-age")

result = []

for ns_header in ns_headers:

pairs = []

version_set = False

for ii, param in enumerate(re.split(r";\s*", ns_header)):

param = param.rstrip()

if param == "": continue

if "=" not in param:

k, v = param, None

else:

k, v = re.split(r"\s*=\s*", param, 1)

k = k.lstrip()

if ii != 0:

lc = k.lower()

if lc in known_attrs:

k = lc

if k == "version":

# This is an RFC 2109 cookie.

v = strip_quotes(v)

version_set = True

if k == "expires":

# convert expires date to seconds since epoch

v = http2time(strip_quotes(v)) # None if invalid

pairs.append((k, v))

if pairs:

if not version_set:

pairs.append(("version", "0"))

result.append(pairs)

return result

IPV4_RE = re.compile(r"\.\d+$", re.ASCII)

def is_HDN(text):

"""Return True if text is a host domain name."""

# XXX

# This may well be wrong. Which RFC is HDN defined in, if any (for

# the purposes of RFC 2965)?

# For the current implementation, what about IPv6? Remember to look

# at other uses of IPV4_RE also, if change this.

if IPV4_RE.search(text):

return False

if text == "":

return False

if text[0] == "." or text[-1] == ".":

return False

return True

def domain_match(A, B):

"""Return True if domain A domain-matches domain B, according to RFC 2965.

A and B may be host domain names or IP addresses.

RFC 2965, section 1:

Host names can be specified either as an IP address or a HDN string.

Sometimes we compare one host name with another. (Such comparisons SHALL

be case-insensitive.) Host A's name domain-matches host B's if

* their host name strings string-compare equal; or

* A is a HDN string and has the form NB, where N is a non-empty

name string, B has the form .B', and B' is a HDN string. (So,

x.y.com domain-matches .Y.com but not Y.com.)

Note that domain-match is not a commutative operation: a.b.c.com

domain-matches .c.com, but not the reverse.

"""

# Note that, if A or B are IP addresses, the only relevant part of the

# definition of the domain-match algorithm is the direct string-compare.

A = A.lower()

B = B.lower()

if A == B:

return True

if not is_HDN(A):

return False

i = A.rfind(B)

if i == -1 or i == 0:

# A does not have form NB, or N is the empty string

return False

if not B.startswith("."):

return False

if not is_HDN(B[1:]):

return False

return True

def liberal_is_HDN(text):

"""Return True if text is a sort-of-like a host domain name.

For accepting/blocking domains.

"""

if IPV4_RE.search(text):

return False

return True

def user_domain_match(A, B):

"""For blocking/accepting domains.

A and B may be host domain names or IP addresses.

"""

A = A.lower()

B = B.lower()

if not (liberal_is_HDN(A) and liberal_is_HDN(B)):

if A == B:

# equal IP addresses

return True

return False

initial_dot = B.startswith(".")

if initial_dot and A.endswith(B):

return True

if not initial_dot and A == B:

return True

return False

cut_port_re = re.compile(r":\d+$", re.ASCII)

def request_host(request):

"""Return request-host, as defined by RFC 2965.

Variation from RFC: returned value is lowercased, for convenient

comparison.

"""

url = request.get_full_url()

host = urllib.parse.urlparse(url)[1]

if host == "":

host = request.get_header("Host", "")

# remove port, if present

host = cut_port_re.sub("", host, 1)

return host.lower()

def eff_request_host(request):

"""Return a tuple (request-host, effective request-host name).

As defined by RFC 2965, except both are lowercased.

"""

erhn = req_host = request_host(request)

if req_host.find(".") == -1 and not IPV4_RE.search(req_host):

erhn = req_host + ".local"

return req_host, erhn

def request_path(request):

"""Path component of request-URI, as defined by RFC 2965."""

url = request.get_full_url()

parts = urllib.parse.urlsplit(url)

path = escape_path(parts.path)

if not path.startswith("/"):

# fix bad RFC 2396 absoluteURI

path = "/" + path

return path

def request_port(request):

host = request.host

i = host.find(':')

if i >= 0:

port = host[i+1:]

try:

int(port)

except ValueError:

_debug("nonnumeric port: '%s'", port)

return None

else:

port = DEFAULT_HTTP_PORT

return port

# Characters in addition to A-Z, a-z, 0-9, '_', '.', and '-' that don't

# need to be escaped to form a valid HTTP URL (RFCs 2396 and 1738).

HTTP_PATH_SAFE = "%/;:@&=+$,!~*'()"

ESCAPED_CHAR_RE = re.compile(r"%([0-9a-fA-F][0-9a-fA-F])")

def uppercase_escaped_char(match):

return "%%%s" % match.group(1).upper()

def escape_path(path):

"""Escape any invalid characters in HTTP URL, and uppercase all escapes."""

# There's no knowing what character encoding was used to create URLs

# containing %-escapes, but since we have to pick one to escape invalid

# path characters, we pick UTF-8, as recommended in the HTML 4.0

# specification:

# http://www.w3.org/TR/REC-html40/appendix/notes.html#h-B.2.1

# And here, kind of: draft-fielding-uri-rfc2396bis-03

# (And in draft IRI specification: draft-duerst-iri-05)

# (And here, for new URI schemes: RFC 2718)

path = urllib.parse.quote(path, HTTP_PATH_SAFE)

path = ESCAPED_CHAR_RE.sub(uppercase_escaped_char, path)

return path

def reach(h):

"""Return reach of host h, as defined by RFC 2965, section 1.

The reach R of a host name H is defined as follows:

* If

- H is the host domain name of a host; and,

- H has the form A.B; and

- A has no embedded (that is, interior) dots; and

- B has at least one embedded dot, or B is the string "local".

then the reach of H is .B.

* Otherwise, the reach of H is H.

>>> reach("www.acme.com")

'.acme.com'

>>> reach("acme.com")

'acme.com'

>>> reach("acme.local")

'.local'

"""

i = h.find(".")

if i >= 0:

#a = h[:i] # this line is only here to show what a is

b = h[i+1:]

i = b.find(".")

if is_HDN(h) and (i >= 0 or b == "local"):

return "."+b

return h

def is_third_party(request):

"""

RFC 2965, section 3.3.6:

An unverifiable transaction is to a third-party host if its request-

host U does not domain-match the reach R of the request-host O in the

origin transaction.

"""

req_host = request_host(request)

if not domain_match(req_host, reach(request.origin_req_host)):

return True

else:

return False

# The Cookie class: very simple, it mostly just holds the cookie's attributes
class Cookie:
    """HTTP Cookie.

    This class represents both Netscape and RFC 2965 cookies.

    This is deliberately a very simple class.  It just holds attributes.  It's
    possible to construct Cookie instances that don't comply with the cookie
    standards.  CookieJar.make_cookies is the factory function for Cookie
    objects -- it deals with cookie parsing, supplying defaults, and
    normalising to the representation used in this class.  CookiePolicy is
    responsible for checking them to see whether they should be accepted from
    and returned to the server.

    Note that the port may be present in the headers, but unspecified ("Port"
    rather than "Port=80", for example); if this is the case, port is None.

    """

    def __init__(self, version, name, value,
                 port, port_specified,
                 domain, domain_specified, domain_initial_dot,
                 path, path_specified,
                 secure,
                 expires,
                 discard,
                 comment,
                 comment_url,
                 rest,
                 rfc2109=False,
                 ):

        if version is not None: version = int(version)  # version
        if expires is not None: expires = int(expires)  # expiry time
        if port is None and port_specified is True:  # port
            raise ValueError("if port is None, port_specified must be false")

        self.version = version  # cookie version
        self.name = name  # name
        self.value = value  # value
        self.port = port  # port
        self.port_specified = port_specified  # whether a port was explicitly specified
        # normalise case, as per RFC 2965 section 3.3.3
        self.domain = domain.lower()  # domain
        self.domain_specified = domain_specified  # whether a domain was explicitly specified
        # Sigh.  We need to know whether the domain given in the
        # cookie-attribute had an initial dot, in order to follow RFC 2965
        # (as clarified in draft errata).  Needed for the returned $Domain
        # value.
        self.domain_initial_dot = domain_initial_dot  # whether the domain began with a dot
        self.path = path  # path
        self.path_specified = path_specified  # whether a path was explicitly specified
        self.secure = secure  # secure flag: only return over a secure connection
        self.expires = expires  # expiry time, in seconds since the epoch
        self.discard = discard  # discard when the session ends
        self.comment = comment  # comment
        self.comment_url = comment_url
        self.rfc2109 = rfc2109

        self._rest = copy.copy(rest)

    # Does the cookie have the named non-standard attribute?
    def has_nonstandard_attr(self, name):
        return name in self._rest

    # Get a non-standard attribute
    def get_nonstandard_attr(self, name, default=None):
        return self._rest.get(name, default)

    # Set a non-standard attribute
    def set_nonstandard_attr(self, name, value):
        self._rest[name] = value

    # Has the cookie expired?
    def is_expired(self, now=None):
        if now is None: now = time.time()
        if (self.expires is not None) and (self.expires <= now):
            return True
        return False

    def __str__(self):
        if self.port is None: p = ""
        else: p = ":"+self.port
        limit = self.domain + p + self.path
        if self.value is not None:
            namevalue = "%s=%s" % (self.name, self.value)
        else:
            namevalue = self.name
        return "<Cookie %s for %s>" % (namevalue, limit)

    def __repr__(self):
        args = []
        for name in ("version", "name", "value",
                     "port", "port_specified",
                     "domain", "domain_specified", "domain_initial_dot",
                     "path", "path_specified",
                     "secure", "expires", "discard", "comment", "comment_url",
                     ):
            attr = getattr(self, name)
            args.append("%s=%s" % (name, repr(attr)))
        args.append("rest=%s" % repr(self._rest))
        args.append("rfc2109=%s" % repr(self.rfc2109))
        return "Cookie(%s)" % ", ".join(args)

# The cookie policy class: presumably the shared, standard interface for policy checks.
# There is also a default policy class below.
class CookiePolicy:
    """Defines which cookies get accepted from and returned to server.

    May also modify cookies, though this is probably a bad idea.

    The subclass DefaultCookiePolicy defines the standard rules for Netscape
    and RFC 2965 cookies -- override that if you want a customised policy.

    """
    # Return true if the cookie may be accepted from the server for this request.
    # Not implemented in this base class.
    def set_ok(self, cookie, request):
        """Return true if (and only if) cookie should be accepted from server.

        Currently, pre-expired cookies never get this far -- the CookieJar
        class deletes such cookies itself.

        """
        raise NotImplementedError()

    def return_ok(self, cookie, request):
        """Return true if (and only if) cookie should be returned to server."""
        raise NotImplementedError()

    def domain_return_ok(self, domain, request):
        """Return false if cookies should not be returned, given cookie domain.
        """
        return True

    def path_return_ok(self, path, request):
        """Return false if cookies should not be returned, given cookie path.
        """
        return True

# The policy class that implements the standard rules

class DefaultCookiePolicy(CookiePolicy):

"""Implements the standard rules for accepting and returning cookies."""

DomainStrictNoDots = 1

DomainStrictNonDomain = 2

DomainRFC2965Match = 4

DomainLiberal = 0

DomainStrict = DomainStrictNoDots|DomainStrictNonDomain

def __init__(self,

blocked_domains=None, allowed_domains=None,

netscape=True, rfc2965=False,

rfc2109_as_netscape=None,

hide_cookie2=False,

strict_domain=False,

strict_rfc2965_unverifiable=True,

strict_ns_unverifiable=False,

strict_ns_domain=DomainLiberal,

strict_ns_set_initial_dollar=False,

strict_ns_set_path=False,

):

"""Constructor arguments should be passed as keyword arguments only."""

self.netscape = netscape

self.rfc2965 = rfc2965

self.rfc2109_as_netscape = rfc2109_as_netscape

self.hide_cookie2 = hide_cookie2

self.strict_domain = strict_domain

self.strict_rfc2965_unverifiable = strict_rfc2965_unverifiable

self.strict_ns_unverifiable = strict_ns_unverifiable

self.strict_ns_domain = strict_ns_domain

self.strict_ns_set_initial_dollar = strict_ns_set_initial_dollar

self.strict_ns_set_path = strict_ns_set_path

if blocked_domains is not None:

self._blocked_domains = tuple(blocked_domains)

else:

self._blocked_domains = ()

if allowed_domains is not None:

allowed_domains = tuple(allowed_domains)

self._allowed_domains = allowed_domains

def blocked_domains(self):

"""Return the sequence of blocked domains (as a tuple)."""

return self._blocked_domains

def set_blocked_domains(self, blocked_domains):

"""Set the sequence of blocked domains."""

self._blocked_domains = tuple(blocked_domains)

def is_blocked(self, domain):

for blocked_domain in self._blocked_domains:

if user_domain_match(domain, blocked_domain):

return True

return False

def allowed_domains(self):

"""Return None, or the sequence of allowed domains (as a tuple)."""

return self._allowed_domains

def set_allowed_domains(self, allowed_domains):

"""Set the sequence of allowed domains, or None."""

if allowed_domains is not None:

allowed_domains = tuple(allowed_domains)

self._allowed_domains = allowed_domains

def is_not_allowed(self, domain):

if self._allowed_domains is None:

return False

for allowed_domain in self._allowed_domains:

if user_domain_match(domain, allowed_domain):

return False

return True

def set_ok(self, cookie, request):

"""

If you override .set_ok(), be sure to call this method. If it returns

false, so should your subclass (assuming your subclass wants to be more

strict about which cookies to accept).

"""

_debug(" - checking cookie %s=%s", cookie.name, cookie.value)

assert cookie.name is not None

for n in "version", "verifiability", "name", "path", "domain", "port":

fn_name = "set_ok_"+n

fn = getattr(self, fn_name)

if not fn(cookie, request):

return False

return True

def set_ok_version(self, cookie, request):

if cookie.version is None:

# Version is always set to 0 by parse_ns_headers if it's a Netscape

# cookie, so this must be an invalid RFC 2965 cookie.

_debug(" Set-Cookie2 without version attribute (%s=%s)",

cookie.name, cookie.value)

return False

if cookie.version > 0 and not self.rfc2965:

_debug(" RFC 2965 cookies are switched off")

return False

elif cookie.version == 0 and not self.netscape:

_debug(" Netscape cookies are switched off")

return False

return True

def set_ok_verifiability(self, cookie, request):

if request.unverifiable and is_third_party(request):

if cookie.version > 0 and self.strict_rfc2965_unverifiable:

_debug(" third-party RFC 2965 cookie during "

"unverifiable transaction")

return False

elif cookie.version == 0 and self.strict_ns_unverifiable:

_debug(" third-party Netscape cookie during "

"unverifiable transaction")

return False

return True

def set_ok_name(self, cookie, request):

# Try and stop servers setting V0 cookies designed to hack other

# servers that know both V0 and V1 protocols.

if (cookie.version == 0 and self.strict_ns_set_initial_dollar and

cookie.name.startswith("$")):

_debug(" illegal name (starts with '$'): '%s'", cookie.name)

return False

return True

def set_ok_path(self, cookie, request):

if cookie.path_specified:

req_path = request_path(request)

if ((cookie.version > 0 or

(cookie.version == 0 and self.strict_ns_set_path)) and

not req_path.startswith(cookie.path)):

_debug(" path attribute %s is not a prefix of request "

"path %s", cookie.path, req_path)

return False

return True

def set_ok_domain(self, cookie, request):

if self.is_blocked(cookie.domain):

_debug(" domain %s is in user block-list", cookie.domain)

return False

if self.is_not_allowed(cookie.domain):

_debug(" domain %s is not in user allow-list", cookie.domain)

return False

if cookie.domain_specified:

req_host, erhn = eff_request_host(request)

domain = cookie.domain

if self.strict_domain and (domain.count(".") >= 2):

# XXX This should probably be compared with the Konqueror

# (kcookiejar.cpp) and Mozilla implementations, but it's a

# losing battle.

i = domain.rfind(".")

j = domain.rfind(".", 0, i)

if j == 0: # domain like .foo.bar

tld = domain[i+1:]

sld = domain[j+1:i]

if sld.lower() in ("co", "ac", "com", "edu", "org", "net",

"gov", "mil", "int", "aero", "biz", "cat", "coop",

"info", "jobs", "mobi", "museum", "name", "pro",

"travel", "eu") and len(tld) == 2:

# domain like .co.uk

_debug(" country-code second level domain %s", domain)

return False

if domain.startswith("."):

undotted_domain = domain[1:]

else:

undotted_domain = domain

embedded_dots = (undotted_domain.find(".") >= 0)

if not embedded_dots and domain != ".local":

_debug(" non-local domain %s contains no embedded dot",

domain)

return False

if cookie.version == 0:

if (not erhn.endswith(domain) and

(not erhn.startswith(".") and

not ("."+erhn).endswith(domain))):

_debug(" effective request-host %s (even with added "

"initial dot) does not end with %s",

erhn, domain)

return False

if (cookie.version > 0 or

(self.strict_ns_domain & self.DomainRFC2965Match)):

if not domain_match(erhn, domain):

_debug(" effective request-host %s does not domain-match "

"%s", erhn, domain)

return False

if (cookie.version > 0 or

(self.strict_ns_domain & self.DomainStrictNoDots)):

host_prefix = req_host[:-len(domain)]

if (host_prefix.find(".") >= 0 and

not IPV4_RE.search(req_host)):

_debug(" host prefix %s for domain %s contains a dot",

host_prefix, domain)

return False

return True

def set_ok_port(self, cookie, request):

if cookie.port_specified:

req_port = request_port(request)

if req_port is None:

req_port = "80"

else:

req_port = str(req_port)

for p in cookie.port.split(","):

try:

int(p)

except ValueError:

_debug(" bad port %s (not numeric)", p)

return False

if p == req_port:

break

else:

_debug(" request port (%s) not found in %s",

req_port, cookie.port)

return False

return True

def return_ok(self, cookie, request):

"""

If you override .return_ok(), be sure to call this method. If it

returns false, so should your subclass (assuming your subclass wants to

be more strict about which cookies to return).

"""

# Path has already been checked by .path_return_ok(), and domain

# blocking done by .domain_return_ok().

_debug(" - checking cookie %s=%s", cookie.name, cookie.value)

for n in "version", "verifiability", "secure", "expires", "port", "domain":

fn_name = "return_ok_"+n

fn = getattr(self, fn_name)

if not fn(cookie, request):

return False

return True

    def return_ok_version(self, cookie, request):
        if cookie.version > 0 and not self.rfc2965:
            _debug(" RFC 2965 cookies are switched off")
            return False
        elif cookie.version == 0 and not self.netscape:
            _debug(" Netscape cookies are switched off")
            return False
        return True

    def return_ok_verifiability(self, cookie, request):
        if request.unverifiable and is_third_party(request):
            if cookie.version > 0 and self.strict_rfc2965_unverifiable:
                _debug(" third-party RFC 2965 cookie during unverifiable "
                       "transaction")
                return False
            elif cookie.version == 0 and self.strict_ns_unverifiable:
                _debug(" third-party Netscape cookie during unverifiable "
                       "transaction")
                return False
        return True

    def return_ok_secure(self, cookie, request):
        if cookie.secure and request.type != "https":
            _debug(" secure cookie with non-secure request")
            return False
        return True

    def return_ok_expires(self, cookie, request):
        if cookie.is_expired(self._now):
            _debug(" cookie expired")
            return False
        return True

    def return_ok_port(self, cookie, request):
        if cookie.port:
            req_port = request_port(request)
            if req_port is None:
                req_port = "80"
            for p in cookie.port.split(","):
                if p == req_port:
                    break
            else:
                # for/else: reached only when no listed port matched
                _debug(" request port %s does not match cookie port %s",
                       req_port, cookie.port)
                return False
        return True

    def return_ok_domain(self, cookie, request):
        req_host, erhn = eff_request_host(request)
        domain = cookie.domain
        # strict check of non-domain cookies: Mozilla does this, MSIE5 doesn't
        if (cookie.version == 0 and
            (self.strict_ns_domain & self.DomainStrictNonDomain) and
            not cookie.domain_specified and domain != erhn):
            _debug(" cookie with unspecified domain does not string-compare "
                   "equal to request domain")
            return False
        if cookie.version > 0 and not domain_match(erhn, domain):
            _debug(" effective request-host name %s does not domain-match "
                   "RFC 2965 cookie domain %s", erhn, domain)
            return False
        if cookie.version == 0 and not ("."+erhn).endswith(domain):
            _debug(" request-host %s does not match Netscape cookie domain "
                   "%s", req_host, domain)
            return False
        return True

    def domain_return_ok(self, domain, request):
        # Liberal check of the domain. This is here as an optimization to
        # avoid having to load lots of MSIE cookie files unless necessary.
        req_host, erhn = eff_request_host(request)
        if not req_host.startswith("."):
            req_host = "."+req_host
        if not erhn.startswith("."):
            erhn = "."+erhn
        if not (req_host.endswith(domain) or erhn.endswith(domain)):
            #_debug(" request domain %s does not match cookie domain %s",
            #       req_host, domain)
            return False
        if self.is_blocked(domain):
            _debug(" domain %s is in user block-list", domain)
            return False
        if self.is_not_allowed(domain):
            _debug(" domain %s is not in user allow-list", domain)
            return False
        return True

    def path_return_ok(self, path, request):
        _debug("- checking cookie path=%s", path)
        req_path = request_path(request)
        if not req_path.startswith(path):
            _debug(" %s does not path-match %s", req_path, path)
            return False
        return True

def vals_sorted_by_key(adict):
    keys = sorted(adict.keys())
    return map(adict.get, keys)

def deepvalues(mapping):
    """Iterates over nested mapping, depth-first, in sorted order by key."""
    values = vals_sorted_by_key(mapping)
    for obj in values:
        mapping = False
        try:
            obj.items
        except AttributeError:
            pass
        else:
            mapping = True
            yield from deepvalues(obj)
        if not mapping:
            yield obj

# Used as second parameter to dict.get() method, to distinguish absent
# dict key from one with a None value.
class Absent: pass

class CookieJar:
    """Collection of HTTP cookies.

    You may not need to know about this class: try
    urllib.request.build_opener(HTTPCookieProcessor).open(url).
    """

    # Various regular expressions
    non_word_re = re.compile(r"\W")
    quote_re = re.compile(r"([\"\\])")
    strict_domain_re = re.compile(r"\.?[^.]*")
    domain_re = re.compile(r"[^.]*")
    dots_re = re.compile(r"^\.+")
    magic_re = re.compile(r"^\#LWP-Cookies-(\d+\.\d+)", re.ASCII)

    # Initialization
    def __init__(self, policy=None):
        if policy is None:
            policy = DefaultCookiePolicy()  # fall back to the default cookie policy
        self._policy = policy
        # re-entrant lock guarding the cookie store across threads
        self._cookies_lock = _threading.RLock()
        # nested dicts organised as [domain][path][name]
        self._cookies = {}

    # Set the cookie policy
    def set_policy(self, policy):
        self._policy = policy

    def _cookies_for_domain(self, domain, request):
        cookies = []
        if not self._policy.domain_return_ok(domain, request):
            return []
        _debug("Checking %s for cookies to return", domain)
        cookies_by_path = self._cookies[domain]
        for path in cookies_by_path.keys():
            if not self._policy.path_return_ok(path, request):
                continue
            cookies_by_name = cookies_by_path[path]
            for cookie in cookies_by_name.values():
                if not self._policy.return_ok(cookie, request):
                    _debug(" not returning cookie")
                    continue
                _debug(" it's a match")
                cookies.append(cookie)
        return cookies

    def _cookies_for_request(self, request):
        """Return a list of cookies to be returned to server."""
        # Collect, across every stored domain, the cookies to send back to the server
        cookies = []
        for domain in self._cookies.keys():
            cookies.extend(self._cookies_for_domain(domain, request))
        return cookies

    def _cookie_attrs(self, cookies):
        """Return a list of cookie-attributes to be returned to server.

        like ['foo="bar"; $Path="/"', ...]

        The $Version attribute is also added when appropriate (currently only
        once per request).
        """
        # add cookies in order of most specific (ie. longest) path first
        cookies.sort(key=lambda a: len(a.path), reverse=True)
        version_set = False
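
To see the return-side checks above working together, here is a minimal sketch that drives them through the public `CookieJar.add_cookie_header()` entry point. The helpers `make_cookie` and `cookie_header_for` and the `example.com` / `example.org` hosts are illustrative assumptions, not part of the module. It only exercises the default policy with version-0 (Netscape-style) cookies, and the exact path and domain matching rules may differ slightly between Python releases.

```python
from http.cookiejar import Cookie, CookieJar, DefaultCookiePolicy
from urllib.request import Request


def make_cookie(name, value, domain, path="/", secure=False):
    """Hypothetical helper: build a version-0 session cookie with no expiry."""
    return Cookie(
        version=0, name=name, value=value,
        port=None, port_specified=False,
        domain=domain, domain_specified=True,
        domain_initial_dot=domain.startswith("."),
        path=path, path_specified=True,
        secure=secure, expires=None, discard=True,
        comment=None, comment_url=None, rest={},
    )


def cookie_header_for(jar, url):
    """Hypothetical helper: the Cookie header the jar attaches to url, or None."""
    request = Request(url)
    jar.add_cookie_header(request)  # runs domain_return_ok, path_return_ok, return_ok
    return request.get_header("Cookie")


jar = CookieJar(DefaultCookiePolicy())
# set_cookie() stores the cookie directly, without consulting the policy.
jar.set_cookie(make_cookie("session", "abc", ".example.com", secure=True))
jar.set_cookie(make_cookie("theme", "dark", ".example.com", path="/app"))

# Iterating a jar walks the [domain][path][name] dicts in sorted key order.
stored_names = [cookie.name for cookie in jar]

# Neither cookie qualifies: "session" is secure, "theme" is scoped to /app.
plain_root = cookie_header_for(jar, "http://www.example.com/")
# Path matches "theme"; "session" is still withheld from plain http.
plain_app = cookie_header_for(jar, "http://www.example.com/app/home")
# Both qualify; the cookie with the longer path is listed first.
secure_app = cookie_header_for(jar, "https://www.example.com/app/home")
# Different domain: rejected early by domain_return_ok.
other_site = cookie_header_for(jar, "https://www.example.org/app/home")

print(stored_names, plain_root, plain_app, secure_app, other_site)
```

Because every rejection happens inside the policy object, swapping in a stricter `DefaultCookiePolicy` subclass (one that overrides `return_ok` and still calls the base method, as the docstring above advises) changes which cookies are sent without touching the jar itself.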

### 最后

Python崛起并且风靡,因为优点多、应用领域广、被大牛们认可。学习 Python 门槛很低,但它的晋级路线很多,通过它你能进入机器学习、数据挖掘、大数据,CS等更加高级的领域。Python可以做网络应用,可以做科学计算,数据分析,可以做网络爬虫,可以做机器学习、自然语言处理、可以写游戏、可以做桌面应用…Python可以做的很多,你需要学好基础,再选择明确的方向。这里给大家分享一份全套的 Python 学习资料,给那些想学习 Python 的小伙伴们一点帮助!

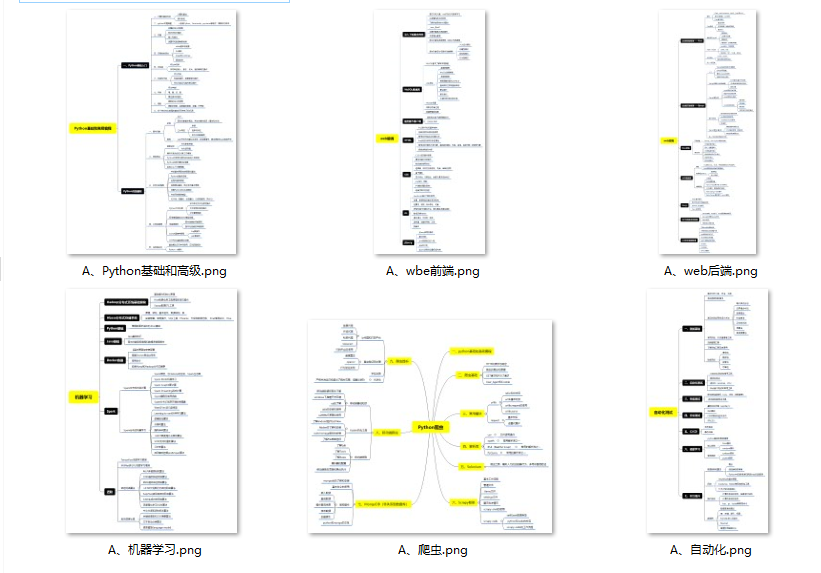

#### 👉Python所有方向的学习路线👈

Python所有方向的技术点做的整理,形成各个领域的知识点汇总,它的用处就在于,你可以按照上面的知识点去找对应的学习资源,保证自己学得较为全面。

#### 👉Python必备开发工具👈

工欲善其事必先利其器。学习Python常用的开发软件都在这里了,给大家节省了很多时间。

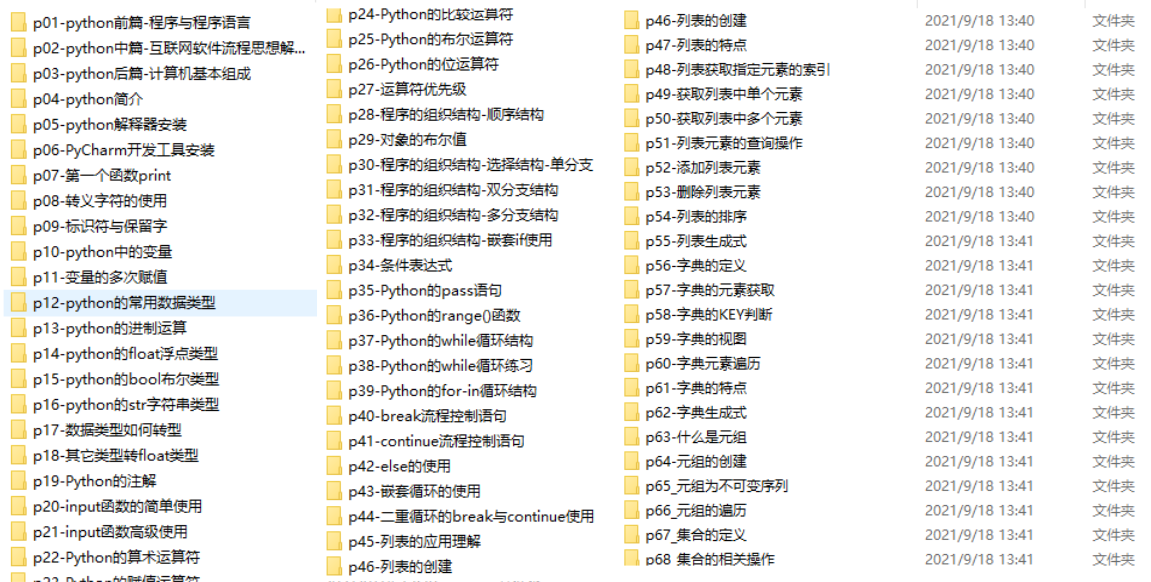

#### 👉Python全套学习视频👈

我们在看视频学习的时候,不能光动眼动脑不动手,比较科学的学习方法是在理解之后运用它们,这时候练手项目就很适合了。

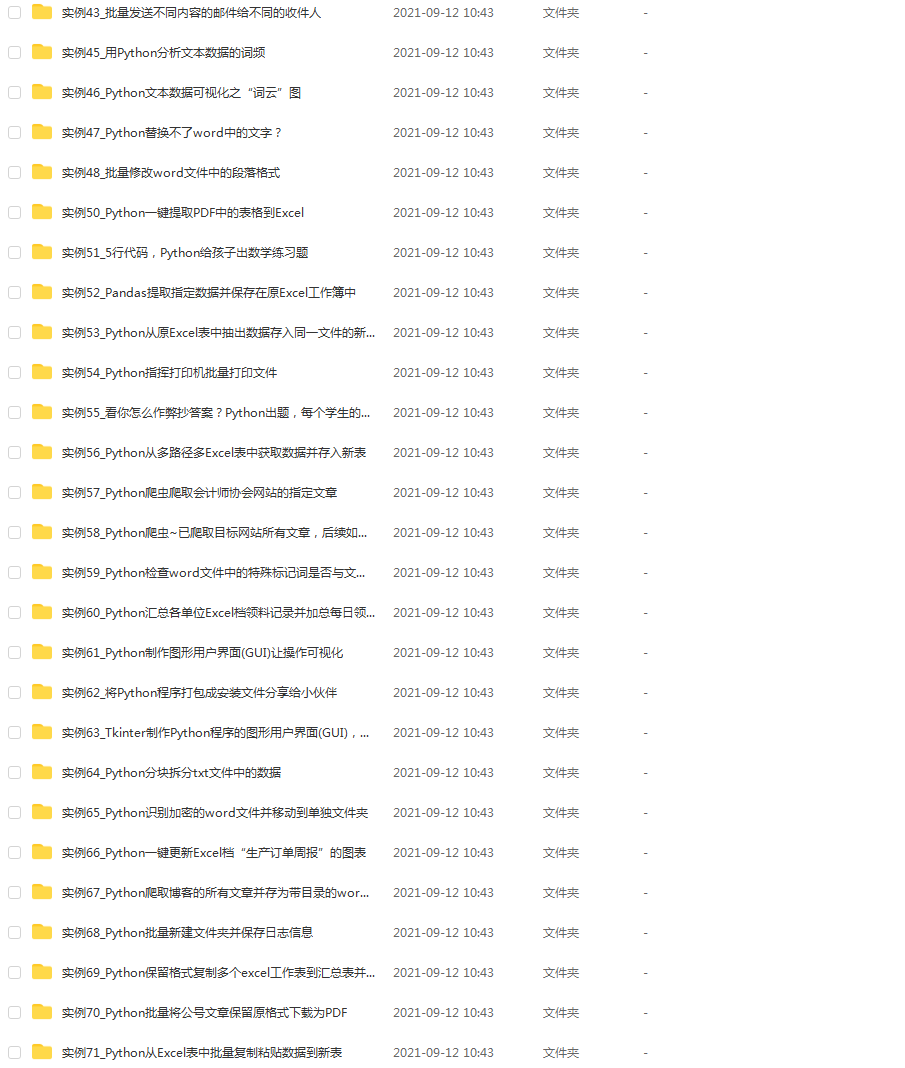

#### 👉实战案例👈

学python就与学数学一样,是不能只看书不做题的,直接看步骤和答案会让人误以为自己全都掌握了,但是碰到生题的时候还是会一筹莫展。

因此在学习python的过程中一定要记得多动手写代码,教程只需要看一两遍即可。

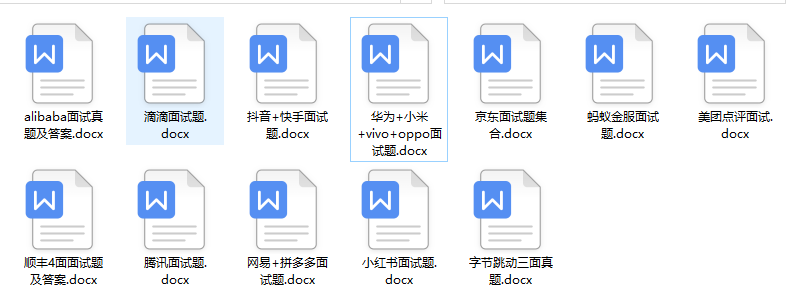

#### 👉大厂面试真题👈

我们学习Python必然是为了找到高薪的工作,下面这些面试题是来自阿里、腾讯、字节等一线互联网大厂最新的面试资料,并且有阿里大佬给出了权威的解答,刷完这一套面试资料相信大家都能找到满意的工作。

**[需要这份系统化学习资料的朋友,可以戳这里获取](https://bbs.csdn.net/forums/4304bb5a486d4c3ab8389e65ecb71ac0)**

**一个人可以走的很快,但一群人才能走的更远!不论你是正从事IT行业的老鸟或是对IT行业感兴趣的新人,都欢迎加入我们的的圈子(技术交流、学习资源、职场吐槽、大厂内推、面试辅导),让我们一起学习成长!**
