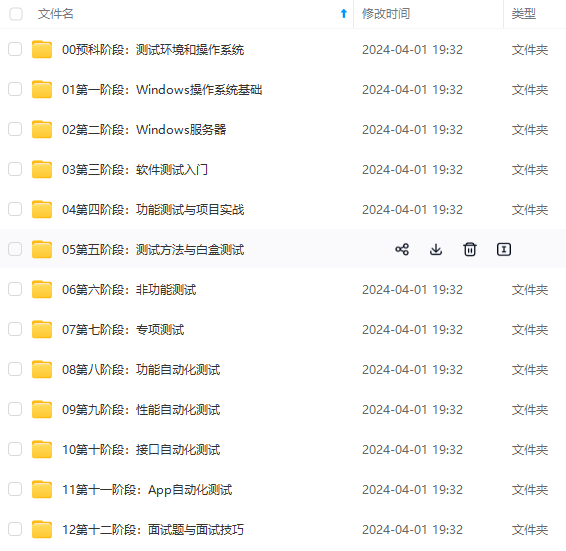

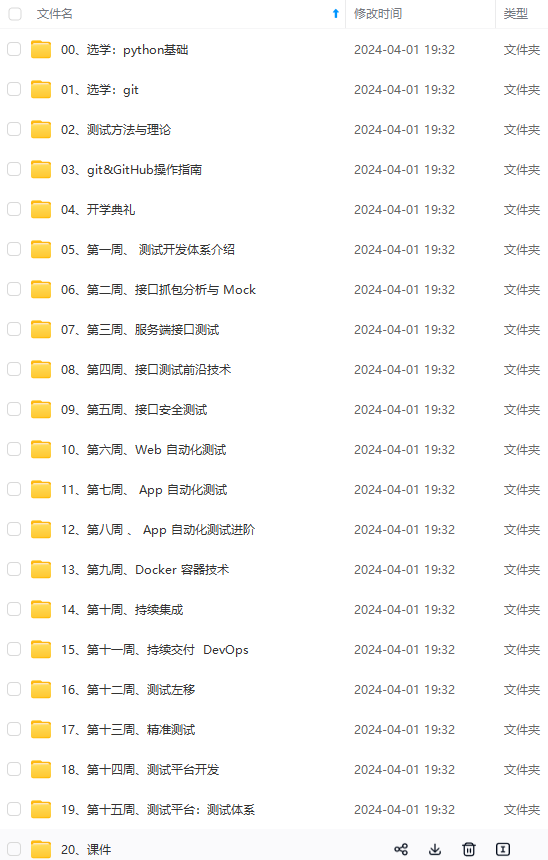

既有适合小白学习的零基础资料,也有适合3年以上经验的小伙伴深入学习提升的进阶课程,涵盖了95%以上软件测试知识点,真正体系化!

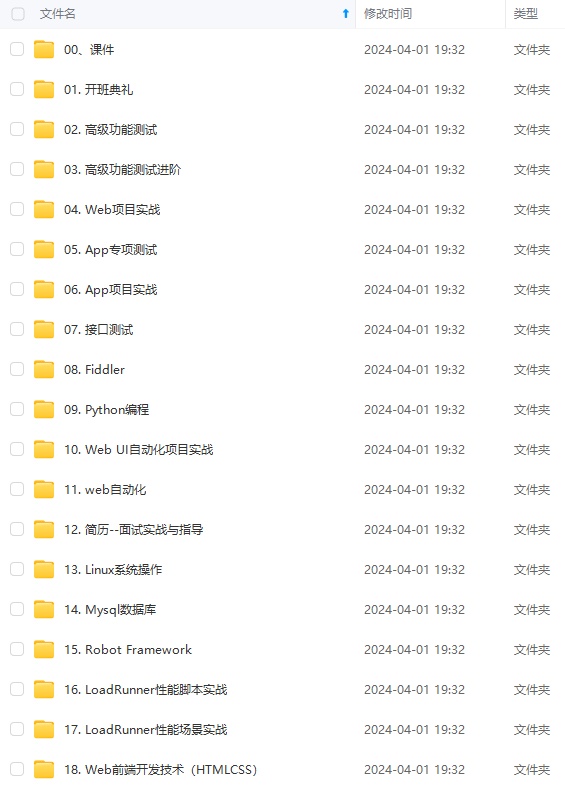

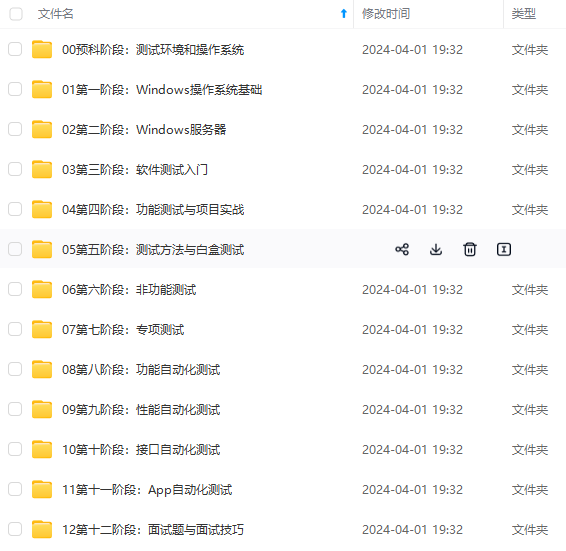

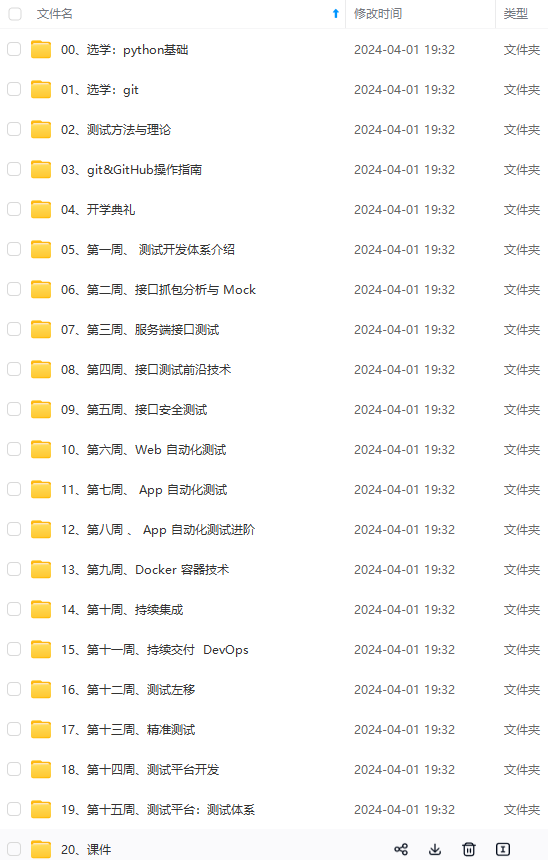

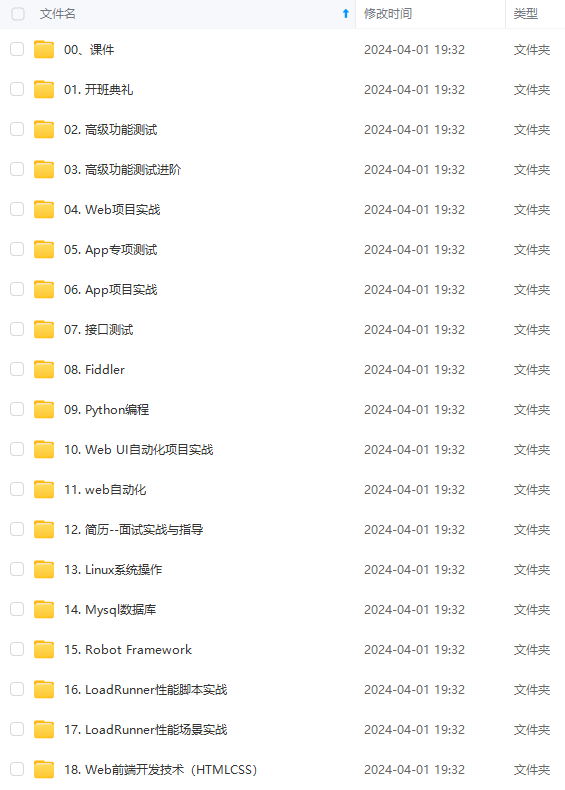

由于文件比较多,这里只是将部分目录截图出来,全套包含大厂面经、学习笔记、源码讲义、实战项目、大纲路线、讲解视频,并且后续会持续更新

7. Check the running status of containerd:

```bash
systemctl status containerd
```

### Install kubeadm

Note: run the following steps on all nodes.

1. Add the Kubernetes apt repository, using the Aliyun apt mirror in place of the upstream source:

```bash
apt update -y
apt-get install -y apt-transport-https
curl https://mirrors.aliyun.com/kubernetes/apt/doc/apt-key.gpg | apt-key add -
cat <<EOF >/etc/apt/sources.list.d/kubernetes.list
deb https://mirrors.aliyun.com/kubernetes/apt/ kubernetes-xenial main
EOF
```

2. List the available versions:

```bash
apt-get update
apt-cache madison kubectl | more
```
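`apt-cache madison` prints one `package | version | source` row per available version. To pick a value for `KUBERNETES_VERSION` in the next step, you can filter the version column by minor release. The sketch below assumes that three-column, `|`-separated layout. `madison_versions` is a hypothetical helper name.

```bash
# Hypothetical helper: read `apt-cache madison` output on stdin and print the
# versions that start with the given prefix (e.g. "1.28."), one per line.
madison_versions() {
  awk -F'|' -v p="$1" '{
    v = $2
    gsub(/[ \t]+/, "", v)          # trim the padding around the version column
    if (index(v, p) == 1) print v  # keep only versions in the requested release
  }'
}

# Assumed usage on a node:
#   apt-cache madison kubeadm | madison_versions 1.28.
```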

3. Install the pinned versions of kubeadm, kubelet and kubectl, then hold them:

```bash
export KUBERNETES_VERSION=1.28.1-00
apt update -y
apt-get install -y kubelet=${KUBERNETES_VERSION} kubeadm=${KUBERNETES_VERSION} kubectl=${KUBERNETES_VERSION}
apt-mark hold kubelet kubeadm kubectl
```
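`apt-mark hold` stops apt from upgrading these packages during routine `apt upgrade` runs, so the cluster version changes only when you upgrade it deliberately. The sketch below confirms that all three holds are in place. It reads the list that `apt-mark showhold` prints, one package name per line. `all_held` is a hypothetical helper name.

```bash
# Hypothetical helper: succeed only if every package given as an argument
# appears in the `apt-mark showhold` output read from stdin.
all_held() {
  held=$(cat)
  for pkg in "$@"; do
    if ! printf '%s\n' "$held" | grep -qxF "$pkg"; then
      echo "not held: $pkg" >&2
      return 1
    fi
  done
}

# Assumed usage on a node:
#   apt-mark showhold | all_held kubelet kubeadm kubectl && echo "all pinned"
```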

4. Enable and start the kubelet service:

```bash
systemctl enable --now kubelet
```

### Deploy haproxy and keepalived

Note: run the following steps on all master nodes.

1. Install haproxy and keepalived:

```bash
apt install -y haproxy keepalived
```

2. Create the haproxy configuration file. The configuration is identical on all 3 master nodes; adjust the variables to match your own environment. haproxy listens on port 6444 (`APISERVER_DEST_PORT`) and forwards to kube-apiserver on port 6443 (`APISERVER_SRC_PORT`). A separate port is needed because haproxy runs on the same hosts as kube-apiserver, which already listens on 6443.

```bash
export APISERVER_DEST_PORT=6444
export APISERVER_SRC_PORT=6443
export MASTER1_ADDRESS=192.168.72.30
export MASTER2_ADDRESS=192.168.72.31
export MASTER3_ADDRESS=192.168.72.32

cp /etc/haproxy/haproxy.cfg{,.bak}
cat >/etc/haproxy/haproxy.cfg<<EOF
global
    log 127.0.0.1 local0
    log 127.0.0.1 local1 notice
    maxconn 20000
    daemon
    spread-checks 2

defaults
    mode http
    log global
    option tcplog
    option dontlognull
    option http-server-close
    option redispatch
    timeout http-request 2s
    timeout queue 3s
    timeout connect 1s
    timeout client 1h
    timeout server 1h
    timeout http-keep-alive 1h
    timeout check 2s
    maxconn 18000

backend stats-back
    mode http
    balance roundrobin
    stats uri /haproxy/stats
    stats auth admin:1111

frontend stats-front
    bind *:8081
    mode http
    default_backend stats-back

frontend apiserver
    bind *:${APISERVER_DEST_PORT}
    mode tcp
    option tcplog
    default_backend apiserver

backend apiserver
    mode tcp
    option tcp-check
    balance roundrobin
    default-server inter 10s downinter 5s rise 2 fall 2 slowstart 60s maxconn 250 maxqueue 256 weight 100
    server kube-apiserver-1 ${MASTER1_ADDRESS}:${APISERVER_SRC_PORT} check
    server kube-apiserver-2 ${MASTER2_ADDRESS}:${APISERVER_SRC_PORT} check
    server kube-apiserver-3 ${MASTER3_ADDRESS}:${APISERVER_SRC_PORT} check
EOF
```
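The three `server` lines differ only in their index and address. The sketch below generates them from a list of master IPs, so a larger control plane does not need a new `MASTERn_ADDRESS` variable for each node. `haproxy_server_lines` is a hypothetical helper name, and the four-space indentation is an assumption about your config layout.

```bash
# Hypothetical helper: print one haproxy "server" line per master address.
# $1 is the kube-apiserver port; the remaining arguments are master IPs.
haproxy_server_lines() {
  port="$1"; shift
  i=1
  for addr in "$@"; do
    printf '    server kube-apiserver-%d %s:%s check\n' "$i" "$addr" "$port"
    i=$((i + 1))
  done
}
```

Because the heredoc delimiter is unquoted, a command substitution such as `$(haproxy_server_lines "${APISERVER_SRC_PORT}" 192.168.72.30 192.168.72.31 192.168.72.32)` inside it could replace the three hand-written lines. Afterwards, `haproxy -c -f /etc/haproxy/haproxy.cfg` checks the file's syntax before you start the service.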

3. Create the keepalived configuration file. The configuration is identical on all 3 master nodes; adjust the variables to match your own environment.

```bash
export APISERVER_VIP=192.168.72.200
export INTERFACE=ens33
export ROUTER_ID=51

cp /etc/keepalived/keepalived.conf{,.bak}
cat >/etc/keepalived/keepalived.conf<<EOF
global_defs {
    router_id ${ROUTER_ID}
    vrrp_version 2
    vrrp_garp_master_delay 1
    vrrp_garp_master_refresh 1
    script_user root
    enable_script_security
}

vrrp_script check_apiserver {
    script "/usr/bin/killall -0 haproxy"
    timeout 3
    interval 5  # check every 5 seconds
    fall 3      # require 3 failures for KO
    rise 2      # require 2 successes for OK
}

vrrp_instance lb-kube-vip {
    state BACKUP
    interface ${INTERFACE}
    virtual_router_id ${ROUTER_ID}
    priority 51
    advert_int 1
    nopreempt
    track_script {
        check_apiserver
    }
    authentication {
        auth_type PASS
        auth_pass 1111
    }
    virtual_ipaddress {
        ${APISERVER_VIP} dev ${INTERFACE}
    }
}
EOF
```

Note: every node is configured in the BACKUP state, and keepalived elects the MASTER node on its own according to priority.
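The haproxy and keepalived heredocs both use an unquoted `EOF`, so the shell expands every `${...}` before it writes the file. If one of the `export` lines was skipped, that variable silently becomes an empty string, and keepalived ends up with, for example, `interface ` and no value. The sketch below shows one way to make that fail loudly: run the rendering in a subshell with `set -u`. It renders only the `virtual_ipaddress` block, and `render_vip_block` is a hypothetical helper name.

```bash
# Hypothetical helper: render the virtual_ipaddress block of the keepalived
# config. The body is a subshell with `set -u`, so an unset APISERVER_VIP or
# INTERFACE aborts with an "unbound variable" error instead of expanding to "".
render_vip_block() (
  set -u
  cat <<EOF
    virtual_ipaddress {
        ${APISERVER_VIP} dev ${INTERFACE}
    }
EOF
)
```

The same idea can wrap the full config. For example, write `( set -u; cat <<EOF ... EOF ) > keepalived.conf.new && mv keepalived.conf.new /etc/keepalived/keepalived.conf`. This way a failed expansion never truncates the live file.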

4. Enable and start the haproxy and keepalived services:

```bash
systemctl enable --now haproxy
systemctl enable --now keepalived
```

5. Check all 3 master nodes to find which one holds the VIP `192.168.72.200`.

In the example below, the VIP is bound to the ens33 interface of master1. To confirm that the VIP fails over to another node automatically, reboot that node.

```
root@master1:~# ip a
1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000
    link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
    inet 127.0.0.1/8 scope host lo
       valid_lft forever preferred_lft forever
    inet6 ::1/128 scope host
       valid_lft forever preferred_lft forever
2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000
    link/ether 00:50:56:aa:75:9f brd ff:ff:ff:ff:ff:ff
    altname enp2s1
    inet 192.168.72.30/24 brd 192.168.72.255 scope global ens33
       valid_lft forever preferred_lft forever
    inet 192.168.72.200/32 scope global ens33
       valid_lft forever preferred_lft forever
    inet6 fe80::250:56ff:feaa:759f/64 scope link
       valid_lft forever preferred_lft forever
```
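When you test failover, you can script the VIP check instead of reading the `ip a` output on each master. The sketch below assumes `ip` output in the format shown above. `holds_vip` is a hypothetical helper name.

```bash
# Hypothetical helper: succeed if the IPv4 address in $1 appears on an
# "inet" line of `ip addr` output read from stdin.
holds_vip() {
  awk -v vip="$1" '
    $1 == "inet" { split($2, a, "/"); if (a[1] == vip) found = 1 }  # strip the /prefix length
    END { exit !found }                                              # exit 0 only if the VIP was seen
  '
}

# Assumed usage on each master:
#   ip -4 addr show dev ens33 | holds_vip 192.168.72.200 && echo "VIP is on $(hostname)"
```

The comparison is on the whole address, so `192.168.72.20` does not accidentally match `192.168.72.200`.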

### Pre-pull the Kubernetes images

Note: run the following steps on all nodes.

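By default, kubeadm pulls the images automatically when it initializes the cluster. Pulling them in advance shortens the first initialization. This step is optional.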

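1. Check the installed Kubernetes client version (recent kubectl releases no longer accept the `--short` flag):

```bash
kubectl version --client
```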

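2. List the container images required by that Kubernetes version.

The `registry.k8s.io` project is hosted on, and funded by donations from, Google GCP and AWS. As a result, `registry.k8s.io` is blocked in mainland China, and the official Kubernetes community has said it cannot do anything about this. Use the `--image-repository` flag to point kubeadm at the Aliyun Kubernetes image mirror in China instead.

```bash
kubeadm config images list \
  --kubernetes-version=v1.28.1 \
  --image-repository registry.aliyuncs.com/google_containers
```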
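Without `--image-repository`, kubeadm lists upstream names such as `registry.k8s.io/kube-apiserver:v1.28.1` and `registry.k8s.io/coredns/coredns:v1.10.1`. On the Aliyun mirror, every image sits directly under `google_containers`, including coredns, as the pulled-image listing in step 4 shows. The sketch below only illustrates that name mapping, for example when copying images into a private registry by hand. It is not kubeadm's own code, and `mirror_image` is a hypothetical helper name.

```bash
# Hypothetical helper: map an upstream registry.k8s.io image reference to the
# flat layout used by a mirror such as registry.aliyuncs.com/google_containers.
mirror_image() {
  mirror="$1"
  name=${2#registry.k8s.io/}   # drop the upstream registry host
  name=${name#coredns/}        # upstream nests coredns one level deeper
  printf '%s/%s\n' "$mirror" "$name"
}

# Assumed usage:
#   mirror_image registry.aliyuncs.com/google_containers registry.k8s.io/coredns/coredns:v1.10.1
#   -> registry.aliyuncs.com/google_containers/coredns:v1.10.1
```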

3. Run the following command on all nodes to pull the images in advance:

```bash
kubeadm config images pull \
  --kubernetes-version=v1.28.1 \
  --image-repository registry.aliyuncs.com/google_containers
```

4. List the pulled images:

```
root@master1:~# nerdctl -n k8s.io images | grep -v none
REPOSITORY                                                        TAG       IMAGE ID      CREATED             PLATFORM     SIZE       BLOB SIZE
registry.aliyuncs.com/google_containers/coredns                   v1.10.1   90d3eeb2e210  About a minute ago  linux/amd64  51.1 MiB   15.4 MiB
registry.aliyuncs.com/google_containers/etcd                      3.5.9-0   b124583790d2  About a minute ago  linux/amd64  283.8 MiB  98.1 MiB
registry.aliyuncs.com/google_containers/kube-apiserver            v1.28.1   1e9a3ea7d1d4  2 minutes ago       linux/amd64  123.1 MiB  33.0 MiB
registry.aliyuncs.com/google_containers/kube-controller-manager   v1.28.1   f6838231cb74  2 minutes ago       linux/amd64  119.5 MiB  31.8 MiB
registry.aliyuncs.com/google_containers/kube-proxy                v1.28.1   feb6017bf009  About a minute ago  linux/amd64  73.6 MiB   23.4 MiB
registry.aliyuncs.com/google_containers/kube-scheduler            v1.28.1   b76ea016d6b9  2 minutes ago       linux/amd64  60.6 MiB   17.9 MiB
registry.aliyuncs.com/google_containers/pause                     3.9       7031c1b28338  About a minute ago  linux/amd64  728.0 KiB  314.0 KiB
```
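To confirm that kubeadm has every image it needs, you can compare its expected list with the `nerdctl` listing. The sketch below assumes that `nerdctl images` prints a header row followed by REPOSITORY and TAG as the first two columns. `missing_images` is a hypothetical helper name.

```bash
# Hypothetical helper: read `nerdctl images` output on stdin and report every
# expected "repository:tag" (given as arguments) absent from the listing.
missing_images() {
  have=$(awk 'NR > 1 { print $1 ":" $2 }')   # skip the header; join REPOSITORY and TAG
  status=0
  for img in "$@"; do
    if ! printf '%s\n' "$have" | grep -qxF "$img"; then
      echo "missing: $img"
      status=1
    fi
  done
  return "$status"
}

# Assumed usage on a node:
#   nerdctl -n k8s.io images | missing_images $(kubeadm config images list \
#     --kubernetes-version=v1.28.1 \
#     --image-repository registry.aliyuncs.com/google_containers)
```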

### Create the cluster configuration file

Note: run the following steps only on the first master node.

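1. Generate the default cluster initialization configuration file, including the kube-proxy and kubelet component configurations:

```bash
kubeadm config print init-defaults --component-configs KubeProxyConfiguration,KubeletConfiguration > kubeadm.yaml
```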

2. Edit the cluster configuration file. After editing, it looks like this:

```bash
cat kubeadm.yaml
```

```yaml
apiVersion: kubeadm.k8s.io/v1beta3
bootstrapTokens:
- groups:
  - system:bootstrappers:kubeadm:default-node-token
  token: abcdef.0123456789abcdef
  ttl: 24h0m0s
  usages:
  - signing
  - authentication
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: 192.168.72.30
  bindPort: 6443
nodeRegistration:
  criSocket: unix:///var/run/containerd/containerd.sock
  imagePullPolicy: IfNotPresent
  name: master1
  taints: null
---
apiServer:
  timeoutForControlPlane: 4m0s
  certSANs:
  - master1
  - master2
  - master3
  - node1
  - 192.168.72.200
  - 192.168.72.30
  - 192.168.72.31
  - 192.168.72.32
  - 192.168.72.33
controlPlaneEndpoint: 192.168.72.200:6444
apiVersion: kubeadm.k8s.io/v1beta3
certificatesDir: /etc/kubernetes/pki
clusterName: kubernetes
controllerManager: {}
dns: {}
etcd:
  local:
    dataDir: /var/lib/etcd
imageRepository: registry.aliyuncs.com/google_containers
kind: ClusterConfiguration
kubernetesVersion: 1.28.1
networking:
  dnsDomain: cluster.local
  serviceSubnet: 10.96.0.0/12
  podSubnet: 10.244.0.0/16
scheduler: {}
---
apiVersion: kubelet.config.k8s.io/v1beta1
authentication:
  anonymous:
    enabled: false
  webhook:
    cacheTTL: 0s
    enabled: true
  x509:
    clientCAFile: /etc/kubernetes/pki/ca.crt
authorization:
  mode: Webhook
  webhook:
    cacheAuthorizedTTL: 0s
    cacheUnauthorizedTTL: 0s
cgroupDriver: systemd
clusterDNS:
- 10.96.0.10
clusterDomain: cluster.local
containerRuntimeEndpoint: ""
cpuManagerReconcilePeriod: 0s
evictionPressureTransitionPeriod: 0s
fileCheckFrequency: 0s
healthzBindAddress: 127.0.0.1
healthzPort: 10248
httpCheckFrequency: 0s
imageMinimumGCAge: 0s
kind: KubeletConfiguration
logging:
  flushFrequency: 0
  options:
    json:
      infoBufferSize: "0"
  verbosity: 0
memorySwap: {}
nodeStatusReportFrequency: 0s
nodeStatusUpdateFrequency: 0s
resolvConf: /run/systemd/resolve/resolv.conf
rotateCertificates: true
runtimeRequestTimeout: 0s
shutdownGracePeriod: 0s
shutdownGracePeriodCriticalPods: 0s
staticPodPath: /etc/kubernetes/manifests
streamingConnectionIdleTimeout: 0s
syncFrequency: 0s
volumeStatsAggPeriod: 0s
---
apiVersion: kubeproxy.config.k8s.io/v1alpha1
bindAddress: 0.0.0.0
bindAddressHardFail: false
clientConnection:
  acceptContentTypes: ""
  burst: 0
  contentType: ""
  kubeconfig: /var/lib/kube-proxy/kubeconfig.conf
  qps: 0
clusterCIDR: ""
configSyncPeriod: 0s
conntrack:
  maxPerCore: null
  min: null
  tcpCloseWaitTimeout: null
  tcpEstablishedTimeout: null
detectLocal:
  bridgeInterface: ""
  interfaceNamePrefix: ""
detectLocalMode: ""
enableProfiling: false
healthzBindAddress: ""
hostnameOverride: ""
iptables:
  localhostNodePorts: null
  masqueradeAll: false
  masqueradeBit: null
  minSyncPeriod: 0s
  syncPeriod: 0s
ipvs:
  excludeCIDRs: null
  minSyncPeriod: 0s
  scheduler: ""
  strictARP: false
  syncPeriod: 0s
  tcpFinTimeout: 0s
  tcpTimeout: 0s
  udpTimeout: 0s
kind: KubeProxyConfiguration
logging:
  flushFrequency: 0
  options:
    json:
      infoBufferSize: "0"
  verbosity: 0
metricsBindAddress: ""
mode: "ipvs"
nodePortAddresses: null
oomScoreAdj: null
portRange: ""
showHiddenMetricsForVersion: ""
winkernel:
  enableDSR: false
  forwardHealthCheckVip: false
  networkName: ""
  rootHnsEndpointName: ""
  sourceVip: ""
```

3. The parameters that must be changed from the defaults are:

InitConfiguration

```yaml
kind: InitConfiguration
apiVersion: kubeadm.k8s.io/v1beta3
localAPIEndpoint:
  advertiseAddress: 192.168.72.30   # IP address of this master node
  bindPort: 6443
nodeRegistration:
  name: master1                     # node name of this master
```

ClusterConfiguration

```yaml
kind: ClusterConfiguration
apiVersion: kubeadm.k8s.io/v1beta3
controlPlaneEndpoint: 192.168.72.200:6444   # keepalived VIP + haproxy frontend port
imageRepository: registry.aliyuncs.com/google_containers
kubernetesVersion: 1.28.1
networking:
  dnsDomain: cluster.local
  serviceSubnet: 10.96.0.0/12
  podSubnet: 10.244.0.0/16
apiServer:
  certSANs:                                 # extra names and IPs in the API server certificate
  - master1
  - master2
  - master3
  - node1
  - 192.168.72.200
  - 192.168.72.30
  - 192.168.72.31
  - 192.168.72.32
  - 192.168.72.33
```

KubeletConfiguration

```yaml
kind: KubeletConfiguration
apiVersion: kubelet.config.k8s.io/v1beta1
cgroupDriver: systemd
clusterDNS:
- 10.96.0.10
clusterDomain: cluster.local
containerRuntimeEndpoint: ""
```

KubeProxyConfiguration

```yaml
kind: KubeProxyConfiguration
apiVersion: kubeproxy.config.k8s.io/v1alpha1
mode: "ipvs"
```
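You can also apply these edits with a script instead of by hand. The sketch below assumes the 2-space indentation that `kubeadm config print init-defaults` uses and its usual placeholders, `advertiseAddress: 1.2.3.4` and `name: node`. `patch_kubeadm_defaults` is a hypothetical helper name. The sketch does not add `certSANs`, so you still need to edit that list by hand.

```bash
# Hypothetical helper: apply this guide's edits to init-defaults output
# (stdin -> stdout). Args: advertise address, node name, control-plane
# endpoint, Kubernetes version. Patterns rely on the 2-space YAML layout.
patch_kubeadm_defaults() {
  awk -v addr="$1" -v node="$2" -v endpoint="$3" -v ver="$4" '
    /^  advertiseAddress:/          { print "  advertiseAddress: " addr; next }
    /^  name:/                      { print "  name: " node; next }
    /^imageRepository:/             { print "imageRepository: registry.aliyuncs.com/google_containers"; next }
    /^kubernetesVersion:/           { print "kubernetesVersion: " ver; next }
    /^kind: ClusterConfiguration$/  { print; print "controlPlaneEndpoint: " endpoint; next }
    /^  serviceSubnet:/             { print; print "  podSubnet: 10.244.0.0/16"; next }
    /^mode:/                        { print "mode: \"ipvs\""; next }
    { print }
  '
}

# Assumed usage on the first master:
#   kubeadm config print init-defaults --component-configs KubeProxyConfiguration \
#     | patch_kubeadm_defaults 192.168.72.30 master1 192.168.72.200:6444 1.28.1 > kubeadm.yaml
```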

### Initialize the first master node

1. Run the following command on the first master node to initialize it:

```bash
kubeadm init --upload-certs --config kubeadm.yaml
```

If initialization fails, check the kubelet logs with the following command:

```bash
journalctl -xeu kubelet
```

2. From the output, record the `kubeadm join` commands for control-plane nodes and for worker nodes:

```
...
Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

  mkdir -p $HOME/.kube
  sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
  sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

  export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.
Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
  https://kubernetes.io/docs/concepts/cluster-administration/addons/

You can now join any number of the control-plane node running the following command on each as root:

  kubeadm join 192.168.72.200:6444 --token abcdef.0123456789abcdef \
        --discovery-token-ca-cert-hash sha256:293b145b86ee0839a650befd4d32d706852ac5c77848a55e0cb186be29ff38de \
        --control-plane --certificate-key 578ad0ee6a1052703962b0a8591d0036f23a514d4456bd08fa253eda00128fca

Please note that the certificate-key gives access to cluster sensitive data, keep it secret!
As a safeguard, uploaded-certs will be deleted in two hours; If necessary, you can use
"kubeadm init phase upload-certs --upload-certs" to reload certs afterward.

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.72.200:6444 --token abcdef.0123456789abcdef \
        --discovery-token-ca-cert-hash sha256:293b145b86ee0839a650befd4d32d706852ac5c77848a55e0cb186be29ff38de
```
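The recorded commands expire. The bootstrap token in this configuration has a 24-hour TTL (`ttl: 24h0m0s`), and the uploaded certificates are deleted after two hours. The sketch below rebuilds the control-plane join command. It assumes that `kubeadm token create --print-join-command` supplies the worker command and that the certificate key is the last line printed by `kubeadm init phase upload-certs --upload-certs`. `control_plane_join_cmd` is a hypothetical helper name.

```bash
# Hypothetical helper: append the control-plane flags to a worker join command.
control_plane_join_cmd() {
  worker_cmd="$1"   # e.g. output of: kubeadm token create --print-join-command
  cert_key="$2"     # e.g. last line of: kubeadm init phase upload-certs --upload-certs
  printf '%s --control-plane --certificate-key %s\n' "$worker_cmd" "$cert_key"
}

# Assumed usage on an existing master:
#   worker_cmd=$(kubeadm token create --print-join-command)
#   cert_key=$(kubeadm init phase upload-certs --upload-certs | tail -n 1)
#   control_plane_join_cmd "$worker_cmd" "$cert_key"
```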

3. After the master node is initialized, configure the kubectl client as the final hints in the output describe:

```bash
mkdir -p $HOME/.kube
cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
chown $(id -u):$(id -g) $HOME/.kube/config
```

4. Check the node status. The network plugin is not installed yet, so the node is `NotReady`:

```
root@master1:~# kubectl get nodes
NAME   STATUS     ROLES           AGE   VERSION
node   NotReady   control-plane   40s   v1.28.1
```

5. Check the pod status. The network plugin is not installed yet, so the coredns pods are `Pending`:

```
root@master1:~# kubectl get pods -A
NAMESPACE     NAME                           READY   STATUS    RESTARTS   AGE
kube-system   coredns-66f779496c-44fcc       0/1     Pending   0          32s
kube-system   coredns-66f779496c-9cjmf       0/1     Pending   0          32s
kube-system   etcd-node                      1/1     Running   1          44s
kube-system   kube-apiserver-node            1/1     Running   1          44s
kube-system   kube-controller-manager-node   1/1     Running   1          44s
kube-system   kube-proxy-g4kns               1/1     Running   0          32s
kube-system   kube-scheduler-node            1/1     Running   1          44s
```
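On a larger cluster, a small filter makes it easier to spot pods that are not `Running` than scanning the table by eye. The sketch below assumes the default column layout of `kubectl get pods -A`, where STATUS is the fourth column. `not_running_pods` is a hypothetical helper name. The STATUS column is a display value. If you want to filter on the pod phase instead, `kubectl get pods -A --field-selector=status.phase!=Running` is a built-in alternative.

```bash
# Hypothetical helper: print "namespace/name status" for every pod whose
# STATUS column (4th field of `kubectl get pods -A`) is not "Running".
not_running_pods() {
  awk 'NR > 1 && $4 != "Running" { print $1 "/" $2 " " $4 }'
}

# Assumed usage on the first master:
#   kubectl get pods -A | not_running_pods
```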

### Install the Calico network plugin

Note: run the following steps only on the first master node.

Reference: <https://projectcalico.docs.tigera.io/getting-started/kubernetes/quickstart>

1. Install helm on the first master node:

```bash
version=v3.12.3
```
