Today I want to share a service deployment framework: MindSpore Serving.

The concrete goal is a web page that can recognize images online.

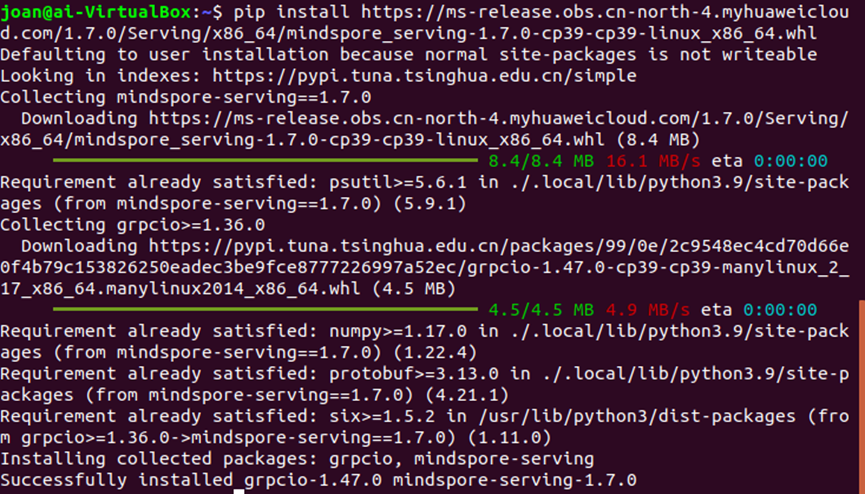

1. Installing MindSpore Serving

At present, MindSpore Serving can only be installed by pointing pip at a specific whl package:

pip install https://ms-release.obs.cn-north-4.myhuaweicloud.com/1.7.0/Serving/x86_64/mindspore_serving-1.7.0-cp39-cp39-linux_x86_64.whl
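The wheel name in the command above encodes which interpreter and platform it targets (cp39, linux_x86_64), so it is worth checking your environment before installing. Below is a minimal sketch of such a check. The helper names `parse_wheel_filename` and `matches_this_interpreter` are made up for illustration, and the comparison is deliberately rough: pip's real compatibility logic accepts more tags than an exact match.

```python
import platform
import sys
from typing import NamedTuple


class WheelTags(NamedTuple):
    name: str
    version: str
    python_tag: str
    abi_tag: str
    platform_tag: str


def parse_wheel_filename(filename: str) -> WheelTags:
    """Split a wheel filename into its dash-separated fields (hypothetical helper)."""
    if not filename.endswith(".whl"):
        raise ValueError(f"not a wheel file: {filename}")
    parts = filename[: -len(".whl")].split("-")
    if len(parts) == 6:
        # An optional build tag may sit between the version and the python tag.
        del parts[2]
    if len(parts) != 5:
        raise ValueError(f"unexpected wheel filename layout: {filename}")
    return WheelTags(*parts)


def matches_this_interpreter(tags: WheelTags) -> bool:
    """Rough check: exact match on CPython version and OS/architecture only."""
    current_python = f"cp{sys.version_info.major}{sys.version_info.minor}"
    current_platform = f"{platform.system().lower()}_{platform.machine().lower()}"
    return tags.python_tag == current_python and tags.platform_tag == current_platform


if __name__ == "__main__":
    wheel = "mindspore_serving-1.7.0-cp39-cp39-linux_x86_64.whl"
    tags = parse_wheel_filename(wheel)
    print(tags)
    print("looks installable here:", matches_this_interpreter(tags))
```

If the check prints False, the pip command is likely to refuse the wheel as unsupported on this platform; in that case a Python 3.9 environment on x86_64 Linux is the simplest way to match it.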

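2. Using MindSpore Serving

See the related documentation:

Deploying Inference Services with MindSpore Serving (MindSpore master documentation)

The quick-start example there is not very illustrative, so I went through the code repository on GitHub to see which samples are available.

Since I don't have a GPU, I picked resnet50 to try out: the CIFAR-10 dataset is small, and training on a CPU is not much trouble.

Below is the training code for the model: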

from typing import Type, Union, List, Optional

from mindspore import nn
from mindspore.train import Model
from mindvision.dataset import Cifar10
from mindvision.engine.callback import ValAccMonitor
from mindvision.classification.models.classifiers import BaseClassifier
from mindvision.classification.models.head import DenseHead
from mindvision.classification.models.neck import GlobalAvgPooling
from mindvision.classification.models.blocks import ConvNormActivation
from mindvision.classification.utils.model_urls import model_urls
from mindvision.utils.load_pretrained_model import LoadPretrainedModel


class ResidualBlockBase(nn.Cell):
    # The last convolution has the same number of kernels as the first one.
    expansion: int = 1

    def __init__(self, in_channel: int, out_channel: int,
                 stride: int = 1, norm: Optional[nn.Cell] = None,
                 down_sample: Optional[nn.Cell] = None) -> None:
        super(ResidualBlockBase, self).__init__()
        if not norm:
            norm = nn.BatchNorm2d

        self.conv1 = ConvNormActivation(in_channel, out_channel,
                                        kernel_size=3, stride=stride, norm=norm)
        self.conv2 = ConvNormActivation(out_channel, out_channel,
                                        kernel_size=3, norm=norm, activation=None)
        self.relu = nn.ReLU()
        self.down_sample = down_sample

    def construct(self, x):
        """ResidualBlockBase construct."""
        identity = x  # shortcut branch

        out = self.conv1(x)  # main branch, first layer: 3x3 convolution
        out = self.conv2(out)  # main branch, second layer: 3x3 convolution

        if self.down_sample is not None:
            # Reshape the shortcut so it can be added to the main branch.
            identity = self.down_sample(x)
        out += identity  # output is the sum of the main branch and the shortcut
        out = self.relu(out)

        return out
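To see why the block takes an optional `down_sample` cell, it helps to work out the tensor shapes on both branches. The sketch below is plain Python with no MindSpore dependency. It assumes each 3x3 convolution uses a padding of 1, so that only the stride changes the spatial size; that padding value is an assumption about `ConvNormActivation`, not something stated in this article. The function names are hypothetical.

```python
from typing import Tuple

Shape = Tuple[int, int, int]  # (channels, height, width)


def conv_output_size(size: int, kernel_size: int = 3,
                     stride: int = 1, padding: int = 1) -> int:
    """Standard convolution output-size formula (padding=1 is an assumption)."""
    return (size + 2 * padding - kernel_size) // stride + 1


def block_shapes(in_shape: Shape, out_channel: int,
                 stride: int = 1) -> Tuple[Shape, Shape]:
    """Return (main-branch shape, shortcut shape) for one residual block."""
    _, height, width = in_shape
    # conv1 applies the stride; conv2 always uses stride 1.
    height = conv_output_size(conv_output_size(height, stride=stride))
    width = conv_output_size(conv_output_size(width, stride=stride))
    return (out_channel, height, width), in_shape


def needs_down_sample(in_shape: Shape, out_channel: int, stride: int = 1) -> bool:
    """The element-wise add only works if both branches have the same shape."""
    main_shape, identity_shape = block_shapes(in_shape, out_channel, stride)
    return main_shape != identity_shape


if __name__ == "__main__":
    print(block_shapes((64, 32, 32), 64, stride=1))
    print(needs_down_sample((64, 32, 32), 64, stride=1))
    print(block_shapes((64, 32, 32), 128, stride=2))
    print(needs_down_sample((64, 32, 32), 128, stride=2))
```

Under these assumptions, a block that keeps the channel count and uses stride 1 can add the input directly, while a block that changes the channel count or the stride needs `down_sample` to bring the shortcut to the same shape before `out += identity`.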

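This article describes how to deploy an inference service with MindSpore Serving, covering installation, model conversion, resolving dependency problems, starting the service, and accessing it from a page. After running into an unsupported-device problem, I resolved it by setting device_type and using MindSpore Lite. Finally, I used Streamlit to build a simple web application for page access and model prediction.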
