Install the dependencies with pip: `pip install requests beautifulsoup4 lxml`

Open the browser's developer tools:

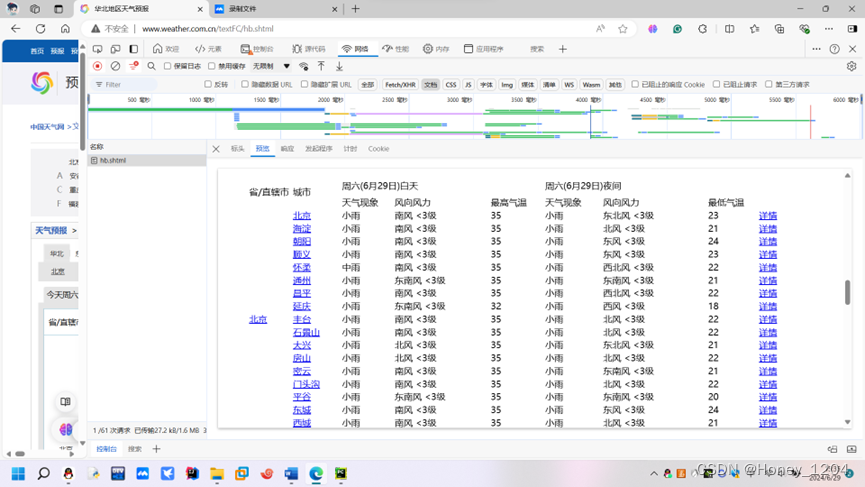

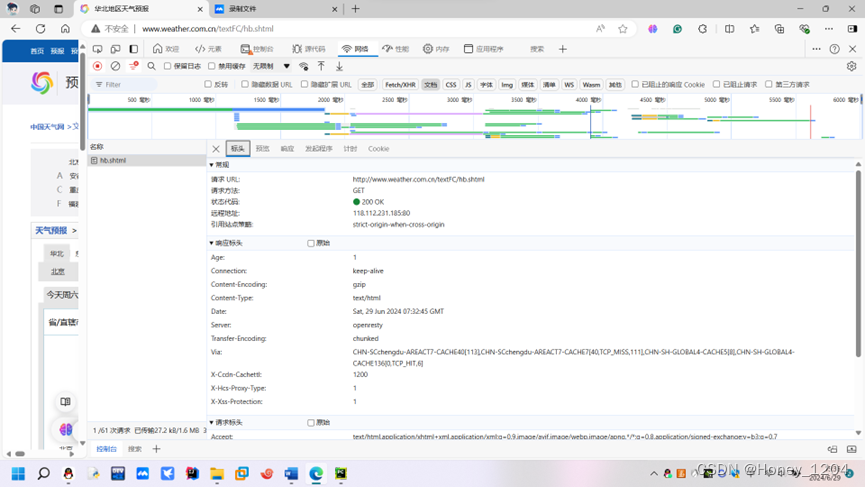

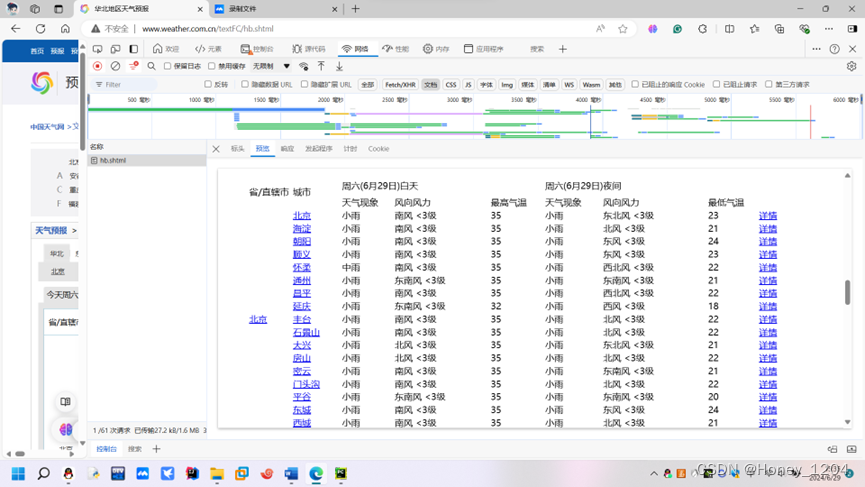

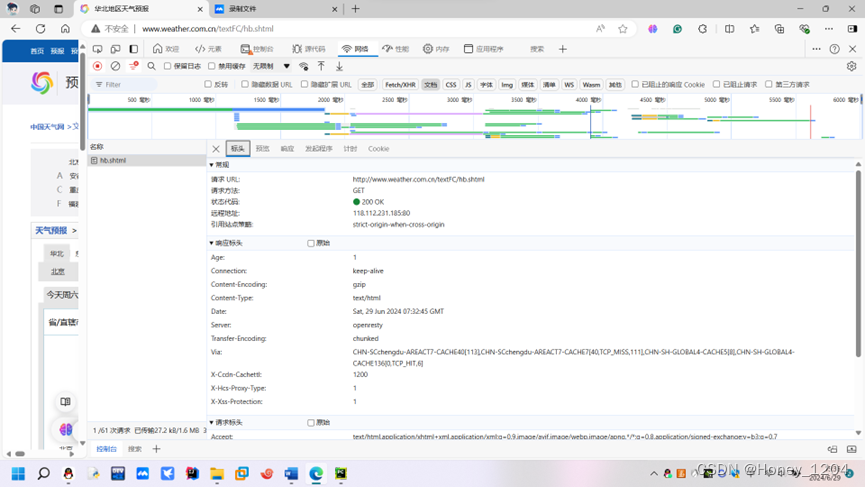

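Open the Network tab and refresh the page, then select the Doc filter and click hb.shtml in the list on the left. The Preview tab shows that all the data to be collected is contained in this page. The Headers tab shows the request URL, request method, Cookie, and User-Agent for this page.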
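The values read from the Headers tab can be carried over into a `requests` call. The following is a minimal sketch, not the author's code: the host in `URL` is a placeholder (the source only names the document `hb.shtml`), the `User-Agent` and `Cookie` strings are placeholders to be replaced with the values shown in your own browser, and GET is assumed as the request method.

```python
import requests

# Hypothetical URL: only the file name "hb.shtml" comes from the article.
URL = "https://example.com/hb.shtml"

# Placeholder values; copy the real ones from the Headers tab.
HEADERS = {
    "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64)",
    "Cookie": "name=value",
}


def build_request(url=URL, headers=HEADERS):
    """Prepare a GET request without sending it, so it can be inspected first."""
    return requests.Request("GET", url, headers=headers).prepare()


def fetch_html(url=URL, headers=HEADERS, timeout=10):
    """Send the prepared request and return the page text."""
    with requests.Session() as session:
        response = session.send(build_request(url, headers), timeout=timeout)
    response.raise_for_status()  # fail loudly on 4xx/5xx responses
    # Let requests guess the charset from the body; helps with non-UTF-8 pages.
    response.encoding = response.apparent_encoding
    return response.text
```

Calling `build_request()` alone makes no network traffic, which is handy for checking that the headers match what the browser sent before calling `fetch_html()`.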

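Click the "small mouse pointer" icon (the element picker) on the left to locate elements on the page. Narrow the search step by step until you find where each item to be scraped sits in the HTML.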
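Once the element picker has shown which tags hold the data, the same path can be written as a BeautifulSoup selector. The sketch below is illustrative only: `SAMPLE_HTML`, the `news-list` class, the `date` span, and the `parse_items` helper are all hypothetical stand-ins, since the article does not show the real page markup. Replace the selector with the one you found in the developer tools.

```python
from bs4 import BeautifulSoup, FeatureNotFound

# Hypothetical markup standing in for the real page structure.
SAMPLE_HTML = """
<ul class="news-list">
  <li><a href="/a/1.shtml">First headline</a><span class="date">2024-01-01</span></li>
  <li><a href="/a/2.shtml">Second headline</a><span class="date">2024-01-02</span></li>
</ul>
"""


def parse_items(html):
    """Return one dict per list item found under the (assumed) ul.news-list."""
    try:
        soup = BeautifulSoup(html, "lxml")  # parser installed earlier via pip
    except FeatureNotFound:
        soup = BeautifulSoup(html, "html.parser")  # stdlib fallback
    items = []
    for li in soup.select("ul.news-list > li"):
        link = li.find("a")
        if link is None:
            continue  # skip rows without a link
        date = li.find("span", class_="date")
        items.append({
            "title": link.get_text(strip=True),
            "href": link.get("href"),
            "date": date.get_text(strip=True) if date else None,
        })
    return items


if __name__ == "__main__":
    for item in parse_items(SAMPLE_HTML):
        print(item)
```

Narrowing from the container (`ul.news-list`) down to each row mirrors the step-by-step narrowing done with the element picker.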
