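By default, PyTorch tensors are stored on the CPU. A tensor's `shape`, `dtype`, and `device` attributes report its dimensions, element type, and the device it is stored on: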

tensor = torch.rand(3,4)

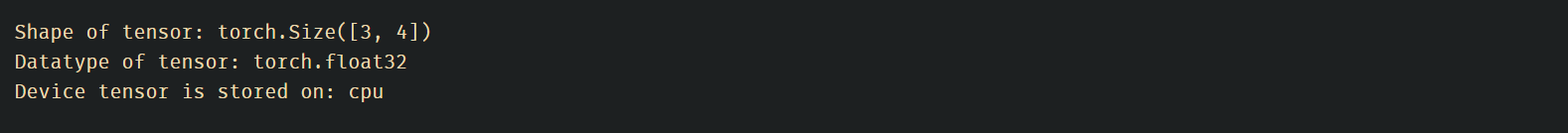

print(f"Shape of tensor: {tensor.shape}")

print(f"Datatype of tensor: {tensor.dtype}")

print(f"Device tensor is stored on: {tensor.device}")输出:
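To check the default placement for other factory functions as well, you can inspect `device` (and the related boolean `is_cuda`) on a few freshly created tensors. This is a minimal sketch that assumes the global defaults have not been changed, for example via `torch.set_default_device` or `torch.set_default_dtype`; the variable names are illustrative only.

```python
import torch

# Tensors created by factory functions are placed on the CPU unless a device
# is requested (assuming the global default device has not been changed).
a = torch.rand(3, 4)
b = torch.zeros(2, dtype=torch.int64)
c = torch.tensor([1.0, 2.0, 3.0])

for name, t in [("a", a), ("b", b), ("c", c)]:
    # t.device is a torch.device object; t.device.type is a string such as "cpu" or "cuda"
    print(f"{name}: shape={tuple(t.shape)}, dtype={t.dtype}, "
          f"device={t.device}, is_cuda={t.is_cuda}")
```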

If a GPU is available, move the tensor to it:

```python
# We move our tensor to the GPU if available
if torch.cuda.is_available():
    tensor = tensor.to('cuda')

print(f"Device tensor is stored on: {tensor.device}")
```
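`Tensor.to()` does not modify the tensor in place: it returns a tensor on the target device (or the original tensor itself if it is already there), which is why the result is assigned back to `tensor`. A common pattern, sketched below, is to choose the device once so the same script runs with or without a GPU. The helper `pick_device` is a hypothetical name used only for illustration, and the sketch assumes a single GPU (`"cuda"` means the current CUDA device).

```python
import torch

def pick_device() -> torch.device:
    # Hypothetical helper: prefer CUDA if available, otherwise fall back to the CPU.
    return torch.device("cuda" if torch.cuda.is_available() else "cpu")

device = pick_device()

tensor = torch.rand(3, 4)
moved = tensor.to(device)  # returns a tensor on `device`; `tensor` itself is unchanged

# Factory functions also accept a device argument, avoiding a later copy.
direct = torch.ones(3, 4, device=device)

print(f"original: {tensor.device}, moved: {moved.device}, direct: {direct.device}")

# If the tensor already has the requested device (and dtype), .to() returns the same object.
same = tensor.to("cpu")
print(same is tensor)
```

Comparing `device.type` rather than whole `torch.device` objects is deliberate: `torch.device("cuda")` carries no index, while a tensor moved there reports an indexed device such as `cuda:0`.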
