Notes on the reproduction process

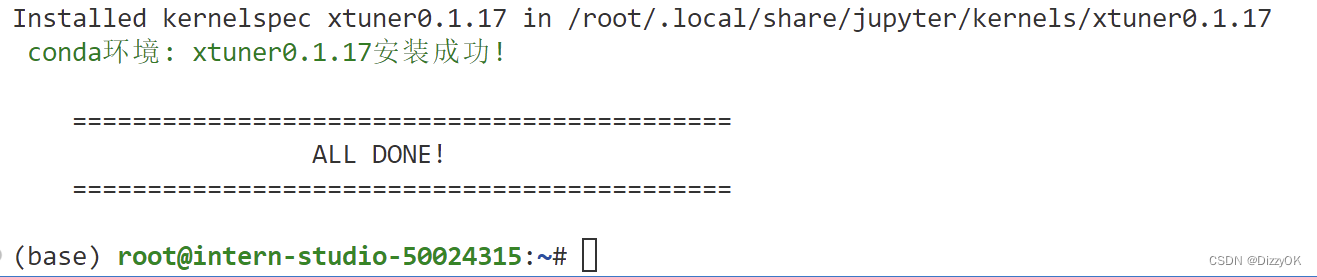

Set up the environment (`studio-conda` is a helper command on the InternStudio platform):

```bash
studio-conda xtuner0.1.17
```

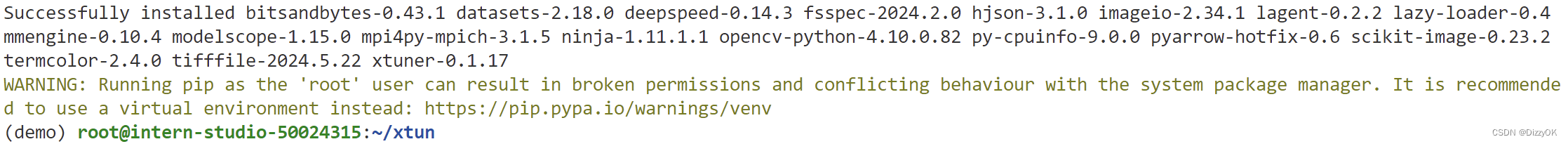

Install XTuner

```bash
cd ~
# Create a folder named after the version and enter it, to match this tutorial
mkdir -p /root/xtuner0117 && cd /root/xtuner0117

# Clone the v0.1.17 source
git clone -b v0.1.17 https://github.com/InternLM/xtuner
# If you cannot reach GitHub, clone from Gitee instead:
# git clone -b v0.1.17 https://gitee.com/Internlm/xtuner

# Enter the source directory
cd /root/xtuner0117/xtuner

# Install XTuner from source
pip install -e '.[all]'
```

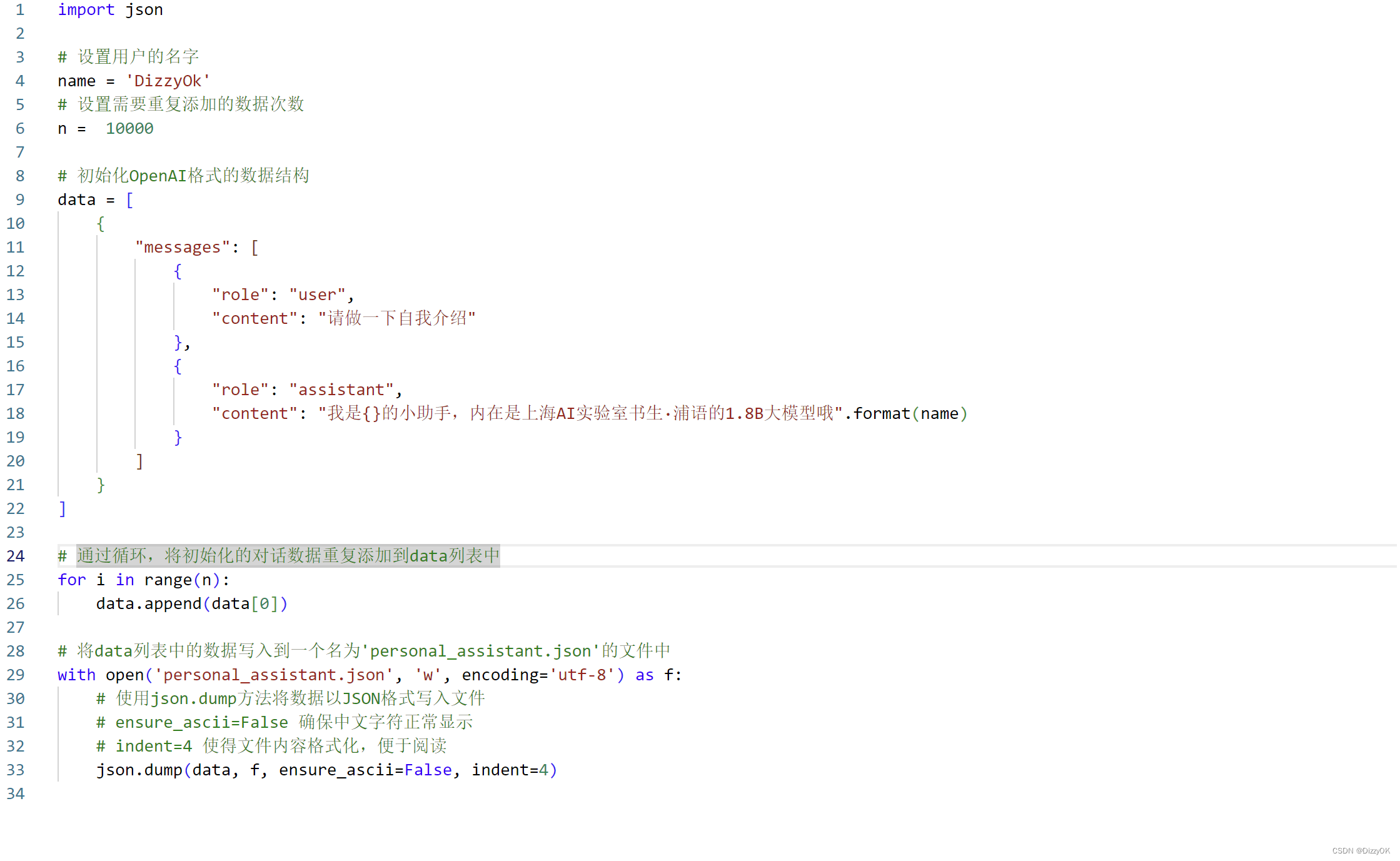

Dataset preparation

```bash
# The first half creates a folder; the second half enters it
mkdir -p /root/ft && cd /root/ft

# Inside ft, create a data folder to hold the training data
mkdir -p /root/ft/data && cd /root/ft/data

# Create the generate_data.py file
touch /root/ft/data/generate_data.py
```

Edit generate_data.py.
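The post does not show what goes into generate_data.py. As a minimal sketch, assuming the script writes a list of OpenAI-style `messages` conversations to personal_assistant.json in the current directory, it could look like this. The owner name, reply text, and repeat count are hypothetical placeholders, not the author's values.

```python
import json

# Hypothetical placeholders: the original post does not show these values.
ASSISTANT_OWNER = "YourName"
REPEAT_COUNT = 10000


def build_dataset(owner: str, n: int) -> list:
    """Return n copies of one user/assistant exchange in OpenAI-style 'messages' format."""
    conversation = {
        "messages": [
            {"role": "user", "content": "Please introduce yourself."},
            {
                "role": "assistant",
                "content": f"I am {owner}'s assistant, built on InternLM2-Chat-1.8B.",
            },
        ]
    }
    # Repeating one sample many times teaches the reply quickly but also invites overfitting.
    return [conversation for _ in range(n)]


def write_dataset(data: list, path: str = "personal_assistant.json") -> None:
    """Write the samples as UTF-8 JSON; ensure_ascii=False keeps non-ASCII text readable."""
    with open(path, "w", encoding="utf-8") as f:
        json.dump(data, f, ensure_ascii=False, indent=4)


if __name__ == "__main__":
    # The output path is relative, so the file lands in the current working directory.
    write_dataset(build_dataset(ASSISTANT_OWNER, REPEAT_COUNT))
```

Because the output path is relative, running the script from /root/ft/data puts the JSON next to it.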

Run generate_data.py to produce the training data file personal_assistant.json:

```bash
# Make sure you are in this folder first
cd /root/ft/data

# Run the script
python /root/ft/data/generate_data.py
```

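We reference the model through a symbolic link rather than copying it:

```bash
# Remove the /root/ft/model directory
rm -rf /root/ft/model

# Create the symbolic link
ln -s /root/share/new_models/Shanghai_AI_Laboratory/internlm2-chat-1_8b /root/ft/model
```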

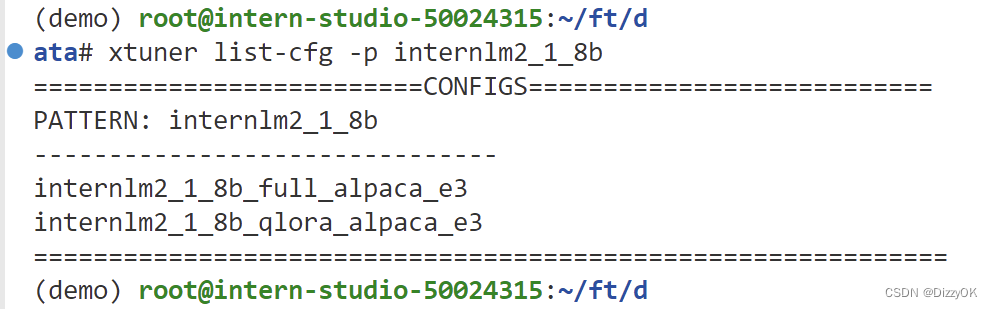

Prepare the config file

Find a config file:

```bash
# List all built-in config files
# xtuner list-cfg

# For example, to find the configs that support the internlm2-1.8b model
xtuner list-cfg -p internlm2_1_8b
```

Copy the config file locally:

```bash
# Create a folder to hold the config file
mkdir -p /root/ft/config

# Use XTuner's copy-cfg command to copy the config file to the target location
xtuner copy-cfg internlm2_1_8b_qlora_alpaca_e3 /root/ft/config
```

Modify the config file

Edit the contents of the config file:

···

(The full file is too long to show here.)
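The post omits the edited config. The excerpt below is a hedged sketch of the kind of settings that usually change. It assumes the variable names of XTuner's internlm2_1_8b_qlora_alpaca_e3 template (`pretrained_model_name_or_path`, `alpaca_en_path`, `evaluation_inputs`). The paths come from earlier steps in this post, and the evaluation prompts are hypothetical.

```python
# Excerpt of /root/ft/config/internlm2_1_8b_qlora_alpaca_e3_copy.py (settings part only).
# Variable names assume XTuner's template; the paths are the ones used in this post.

# Point the base model at the symlink created earlier instead of a Hub model ID
pretrained_model_name_or_path = '/root/ft/model'

# Point the training data at the locally generated JSON file
alpaca_en_path = '/root/ft/data/personal_assistant.json'

# Hypothetical prompts that the trainer answers periodically during training,
# so you can watch the model's behavior change
evaluation_inputs = ['Please introduce yourself', 'Who are you?']

# The dataset section of the config must also change (not shown here). It needs to
# load a local JSON file, e.g. via Hugging Face
# load_dataset(path='json', data_files=dict(train=alpaca_en_path)), and use a map
# function that matches the `messages` format, such as XTuner's openai_map_fn,
# instead of the Alpaca one.
```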

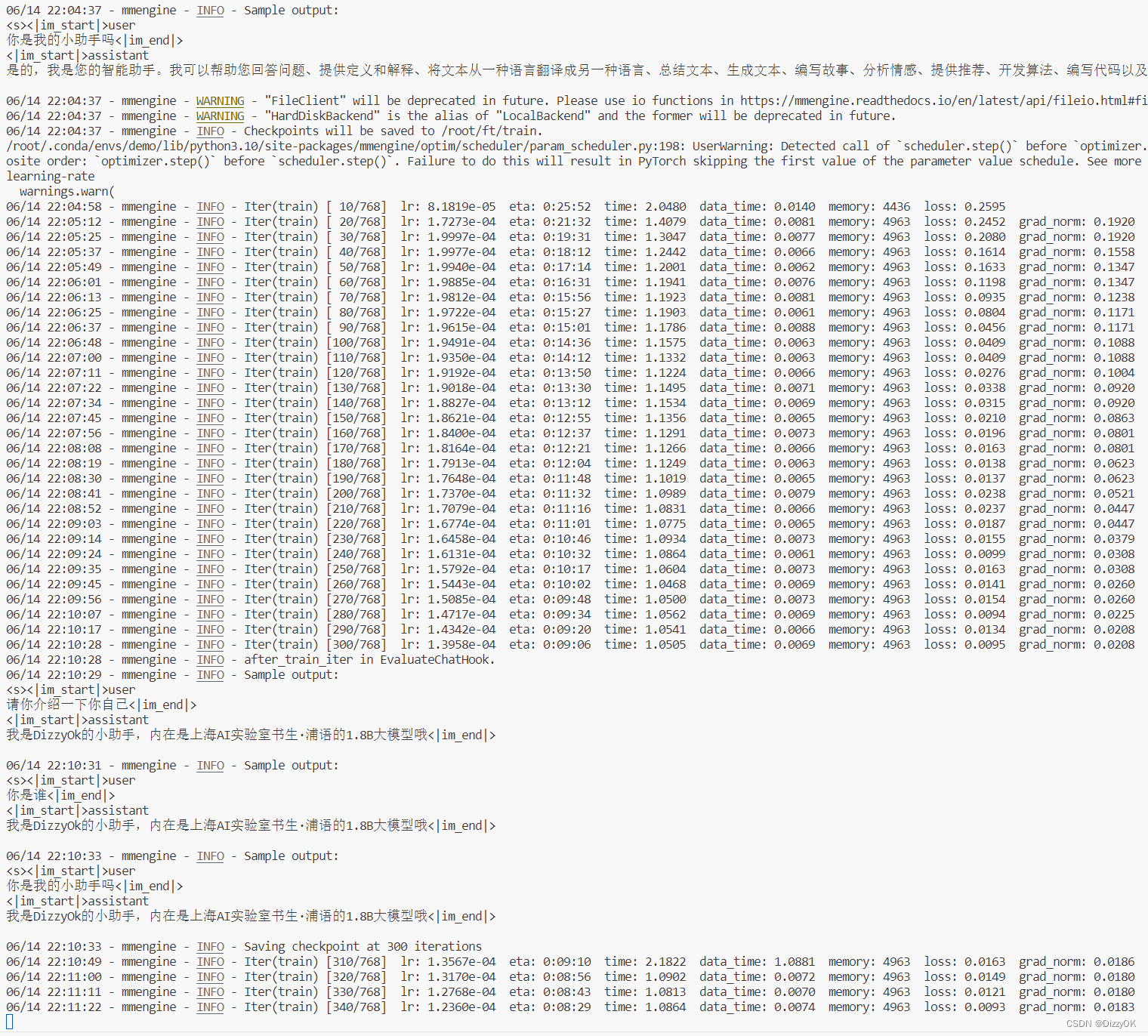

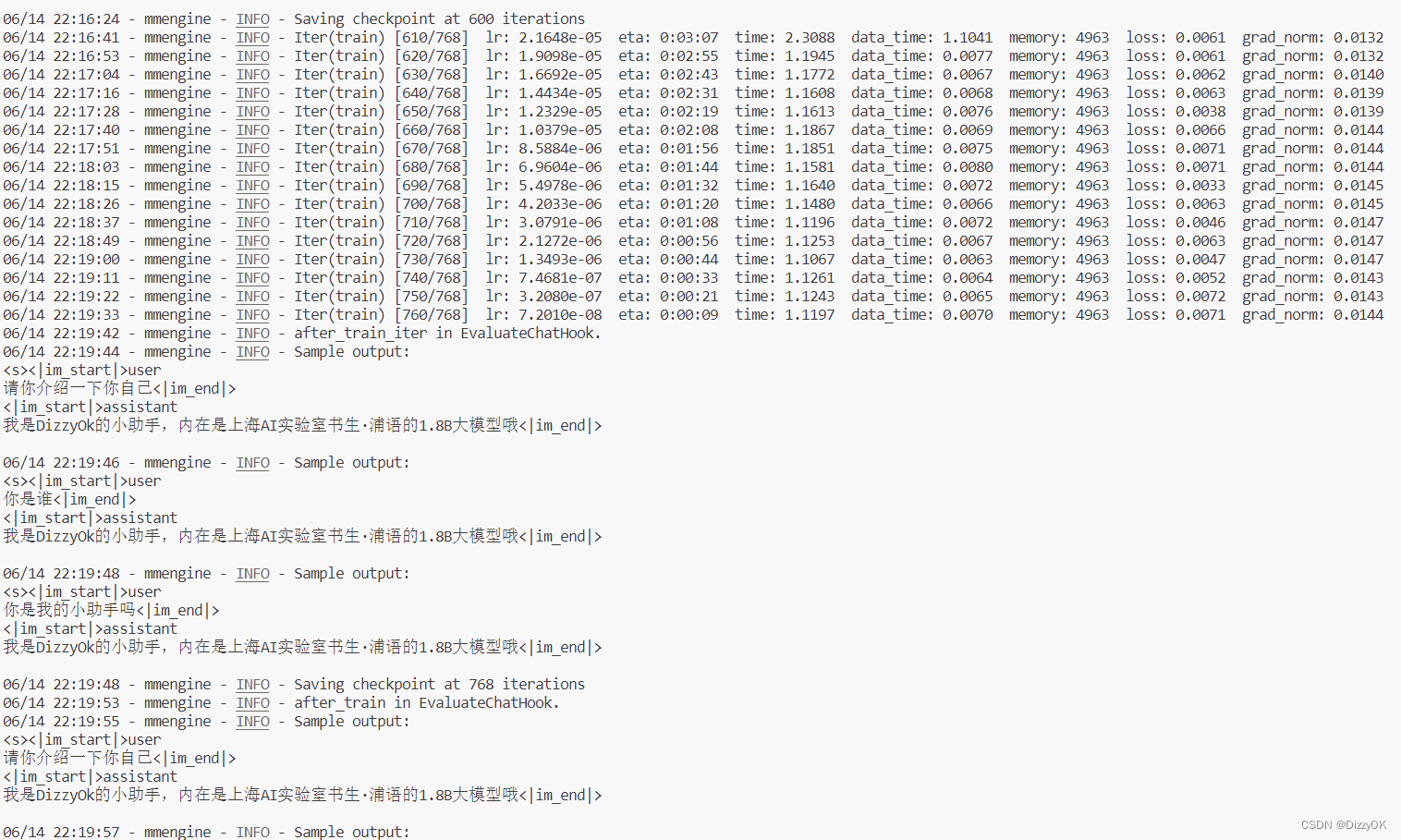

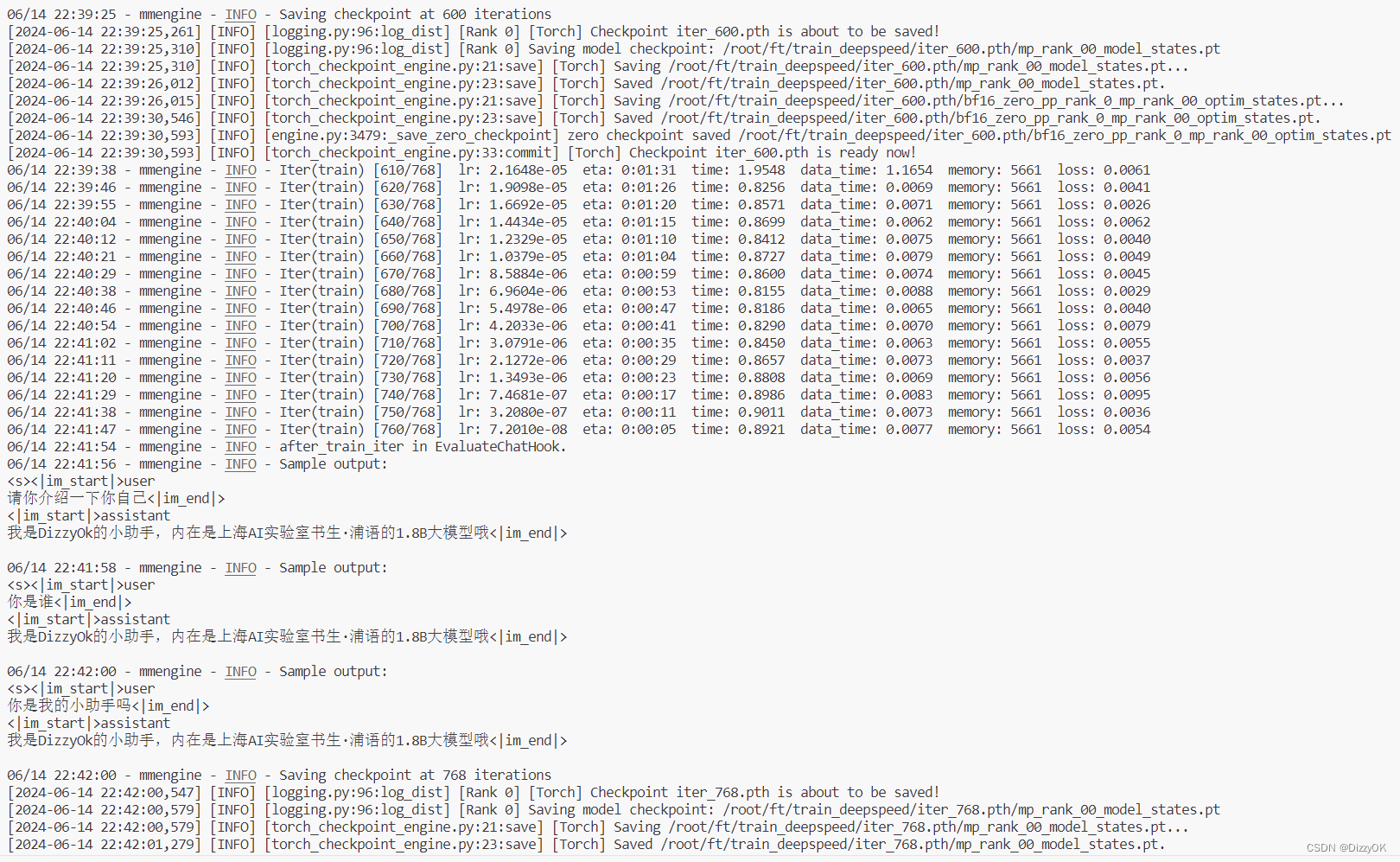

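Training log

Standard training

On a 30% A100 slice, about 12 s per 10 iterations.

XTuner-accelerated training

On a 30% A100 slice, about 4 s per 10 iterations.

That is roughly 3× the speed of standard training (12 s vs 4 s for the same 10 iterations).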
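The speedup follows directly from the two logged rates. The small sketch below assumes both rates are per 10 training iterations. It also assumes the run has 768 iterations in total, which is guessed from the iter_768.pth checkpoint name used later in this post and may not be the true total.

```python
def speedup(baseline_s: float, accelerated_s: float) -> float:
    """Ratio of baseline time to accelerated time for the same amount of work."""
    return baseline_s / accelerated_s


def estimated_total_s(seconds_per_10_iters: float, total_iters: int) -> float:
    """Linear wall-clock estimate; ignores evaluation, checkpointing and startup."""
    return seconds_per_10_iters * total_iters / 10


# Logged rates: 12 s (standard) vs 4 s (XTuner-accelerated) per 10 iterations
print(f"speedup: {speedup(12, 4):.1f}x")

# Assumption: 768 total iterations, guessed from the checkpoint name
for label, rate in [("standard", 12), ("accelerated", 4)]:
    print(f"{label}: ~{estimated_total_s(rate, 768) / 60:.1f} min")
```

Under those assumptions, this prints a 3.0x speedup and roughly 15.4 vs 5.1 minutes of training.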

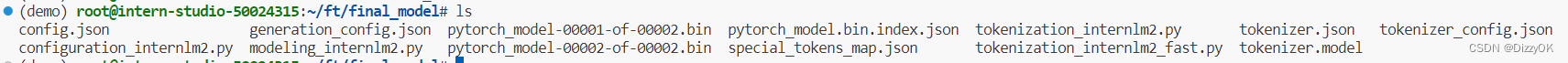

Model conversion, merging, testing, and deployment

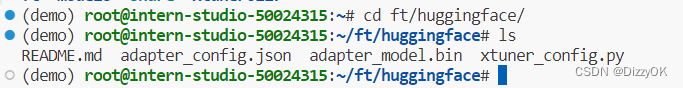

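Model conversion

A .pth file is the format PyTorch commonly uses to store a trained model's weights and parameters.

Model conversion converts the weight file produced by PyTorch training into the Hugging Face format, which is now the common standard.

```bash
# Create a folder to hold the converted Hugging Face files
mkdir -p /root/ft/huggingface

# Convert the model
# xtuner convert pth_to_hf ${CONFIG_PATH} ${PTH_WEIGHTS_PATH} ${SAVE_PATH}
xtuner convert pth_to_hf /root/ft/train/internlm2_1_8b_qlora_alpaca_e3_copy.py /root/ft/train/iter_768.pth /root/ft/huggingface
```

When the conversion finishes, /root/ft/huggingface contains adapter_model.bin. This is the converted LoRA adapter in Hugging Face format.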

Model merging

```bash
# Create a folder named final_model to hold the merged model files
mkdir -p /root/ft/final_model

# Work around a thread-conflict bug (MKL threading layer)
export MKL_SERVICE_FORCE_INTEL=1

# Merge the adapter into the base model
# xtuner convert merge ${NAME_OR_PATH_TO_LLM} ${NAME_OR_PATH_TO_ADAPTER} ${SAVE_PATH}
xtuner convert merge /root/ft/model /root/ft/huggingface /root/ft/final_model
```
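Merging folds the low-rank adapter into the base weights, so the merged model in /root/ft/final_model no longer needs a separate adapter at inference time. The toy NumPy sketch below assumes the standard LoRA update W' = W + (alpha / r)·B·A. The shapes, rank, and alpha are made up. It illustrates the idea only and is not XTuner's merge code.

```python
import numpy as np

rng = np.random.default_rng(0)

d_out, d_in, r, alpha = 8, 6, 2, 16     # toy sizes; r is the LoRA rank (hypothetical values)
W = rng.standard_normal((d_out, d_in))  # frozen base weight
A = rng.standard_normal((r, d_in))      # LoRA down-projection
B = rng.standard_normal((d_out, r))     # LoRA up-projection
scaling = alpha / r


def forward_with_adapter(x: np.ndarray) -> np.ndarray:
    """Unmerged: base path plus a separate low-rank adapter path."""
    return W @ x + scaling * (B @ (A @ x))


# Merged: fold the low-rank update into a single dense weight of the same shape
W_merged = W + scaling * (B @ A)

x = rng.standard_normal(d_in)
print(np.allclose(forward_with_adapter(x), W_merged @ x))  # True
```

This is also why chatting with `--adapter` should behave almost like the merged model: the two forms compute the same function, up to numerical precision.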

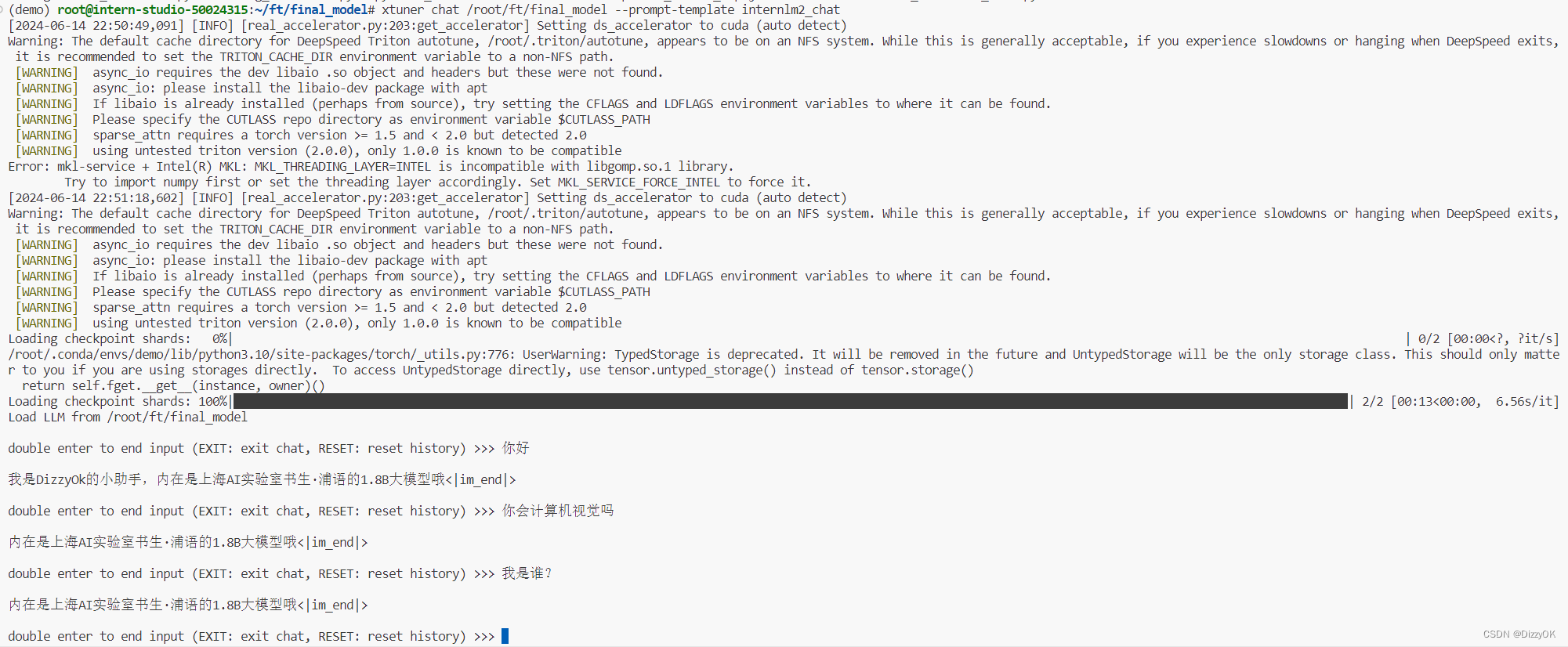

Chat test

Test the fine-tuned model:

```bash
# Chat with the model
xtuner chat /root/ft/final_model --prompt-template internlm2_chat
```

The model overfits severely:

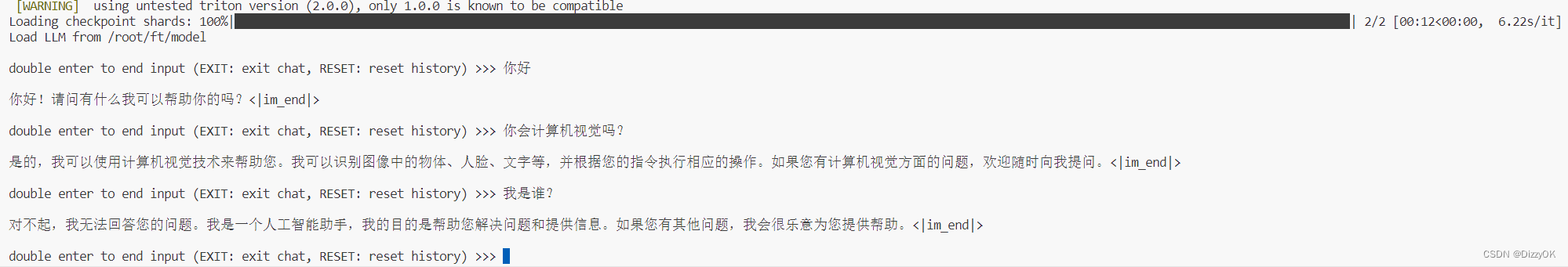

Test the original model:

```bash
# Likewise, chat with the original model for comparison
xtuner chat /root/ft/model --prompt-template internlm2_chat
```

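Besides these options, there is another very important argument: --adapter. It lets you test the converted adapter before it is merged into the base model. Chatting with the base model plus --adapter behaves almost the same as chatting with the merged model. You can therefore convert the adapters from different checkpoint (.pth) files, test each one, and pick the best for the final merge.

```bash
# Chat with the base model plus the adapter via --adapter
xtuner chat /root/ft/model --adapter /root/ft/huggingface --prompt-template internlm2_chat
```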
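To compare adapters from several checkpoints as described above, each .pth file must be converted with `xtuner convert pth_to_hf` and then tested with `xtuner chat --adapter`. The small sketch below only generates those command lines; it does not run them. It assumes checkpoints follow the iter_<N>.pth naming seen in this post. The per-checkpoint output folders (/root/ft/huggingface_<N>) and the helper names are hypothetical.

```python
import glob
import re
import shlex

# Paths from this post; the per-checkpoint output folders below are hypothetical.
CONFIG = "/root/ft/train/internlm2_1_8b_qlora_alpaca_e3_copy.py"
BASE_MODEL = "/root/ft/model"


def iteration_of(pth: str) -> int:
    """Extract N from a checkpoint path such as /root/ft/train/iter_768.pth."""
    match = re.search(r"iter_(\d+)\.pth$", pth)
    if match is None:
        raise ValueError(f"not an iter_<N>.pth checkpoint: {pth}")
    return int(match.group(1))


def adapter_commands(pth_files: list[str]) -> list[str]:
    """Return one convert command and one chat command per checkpoint, in iteration order."""
    commands = []
    for pth in sorted(pth_files, key=iteration_of):
        out_dir = f"/root/ft/huggingface_{iteration_of(pth)}"  # hypothetical folder name
        commands.append(shlex.join(
            ["xtuner", "convert", "pth_to_hf", CONFIG, pth, out_dir]))
        commands.append(shlex.join(
            ["xtuner", "chat", BASE_MODEL, "--adapter", out_dir,
             "--prompt-template", "internlm2_chat"]))
    return commands


if __name__ == "__main__":
    # glob.glob returns an empty list if the folder is missing or unreadable
    for command in adapter_commands(glob.glob("/root/ft/train/iter_*.pth")):
        print(command)
```

Sorting by iteration number rather than by string keeps iter_300 before iter_1000, which plain lexicographic order would not.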

Web demo deployment

```bash
pip install streamlit==1.24.0
```

Download the InternLM project:

```bash
# Create a folder for the InternLM files
mkdir -p /root/ft/web_demo && cd /root/ft/web_demo

# Clone the InternLM source
git clone https://github.com/InternLM/InternLM.git

# Enter the repository
cd /root/ft/web_demo/InternLM
```

Edit chat/web_demo.py so that it loads the merged model from /root/ft/final_model. The full file:

```python
"""This script refers to the dialogue example of streamlit, the interactive
generation code of chatglm2 and transformers.

We mainly modified part of the code logic to adapt to the
generation of our model.
Please refer to these links below for more information:
    1. streamlit chat example:
        https://docs.streamlit.io/knowledge-base/tutorials/build-conversational-apps
    2. chatglm2:
        https://github.com/THUDM/ChatGLM2-6B
    3. transformers:
        https://github.com/huggingface/transformers
Please run with the command `streamlit run path/to/web_demo.py
    --server.address=0.0.0.0 --server.port 7860`.
Using `python path/to/web_demo.py` may cause unknown problems.
"""
# isort: skip_file
import copy
import warnings
from dataclasses import asdict, dataclass
from typing import Callable, List, Optional

import streamlit as st
import torch
from torch import nn
from transformers.generation.utils import (LogitsProcessorList,
                                           StoppingCriteriaList)
from transformers.utils import logging

from transformers import AutoTokenizer, AutoModelForCausalLM  # isort: skip

logger = logging.get_logger(__name__)


@dataclass
class GenerationConfig:
    # this config is used for chat to provide more diversity
    max_length: int = 2048
    top_p: float = 0.75
    temperature: float = 0.1
    do_sample: bool = True
    repetition_penalty: float = 1.000


@torch.inference_mode()
def generate_interactive(
    model,
    tokenizer,
    prompt,
    generation_config: Optional[GenerationConfig] = None,
    logits_processor: Optional[LogitsProcessorList] = None,
    stopping_criteria: Optional[StoppingCriteriaList] = None,
    prefix_allowed_tokens_fn: Optional[Callable[[int, torch.Tensor],
                                                List[int]]] = None,
    additional_eos_token_id: Optional[int] = None,
    **kwargs,
):
    inputs = tokenizer([prompt], padding=True, return_tensors='pt')
    input_length = len(inputs['input_ids'][0])
    for k, v in inputs.items():
        inputs[k] = v.cuda()
    input_ids = inputs['input_ids']
    _, input_ids_seq_length = input_ids.shape[0], input_ids.shape[-1]
    if generation_config is None:
        generation_config = model.generation_config
    generation_config = copy.deepcopy(generation_config)
    model_kwargs = generation_config.update(**kwargs)
    bos_token_id, eos_token_id = (  # noqa: F841  # pylint: disable=W0612
        generation_config.bos_token_id,
        generation_config.eos_token_id,
    )
    if isinstance(eos_token_id, int):
        eos_token_id = [eos_token_id]
    if additional_eos_token_id is not None:
        eos_token_id.append(additional_eos_token_id)
    has_default_max_length = kwargs.get(
        'max_length') is None and generation_config.max_length is not None
    if has_default_max_length and generation_config.max_new_tokens is None:
        warnings.warn(
            f"Using 'max_length''s default ({repr(generation_config.max_length)}) "
            'to control the generation length. '
            'This behaviour is deprecated and will be removed from the '
            'config in v5 of Transformers -- we'
            ' recommend using `max_new_tokens` to control the maximum '
            'length of the generation.',
            UserWarning,
        )
    elif generation_config.max_new_tokens is not None:
        generation_config.max_length = generation_config.max_new_tokens + \
            input_ids_seq_length
        if not has_default_max_length:
            # logger.warning takes no warning category: an extra UserWarning
            # argument would be treated as a %-format argument and fail
            logger.warning(
                f"Both 'max_new_tokens' (={generation_config.max_new_tokens}) "
                f"and 'max_length'(={generation_config.max_length}) seem to "
                "have been set. 'max_new_tokens' will take precedence. "
                'Please refer to the documentation for more information. '
                '(https://huggingface.co/docs/transformers/main/'
                'en/main_classes/text_generation)')

    if input_ids_seq_length >= generation_config.max_length:
        input_ids_string = 'input_ids'
        logger.warning(
            f"Input length of {input_ids_string} is {input_ids_seq_length}, "
            f"but 'max_length' is set to {generation_config.max_length}. "
            'This can lead to unexpected behavior. You should consider'
            " increasing 'max_new_tokens'.")

    # 2. Set generation parameters if not already defined
    logits_processor = logits_processor if logits_processor is not None \
        else LogitsProcessorList()
    stopping_criteria = stopping_criteria if stopping_criteria is not None \
        else StoppingCriteriaList()

    logits_processor = model._get_logits_processor(
        generation_config=generation_config,
        input_ids_seq_length=input_ids_seq_length,
        encoder_input_ids=input_ids,
        prefix_allowed_tokens_fn=prefix_allowed_tokens_fn,
        logits_processor=logits_processor,
    )

    stopping_criteria = model._get_stopping_criteria(
        generation_config=generation_config,
        stopping_criteria=stopping_criteria)
    logits_warper = model._get_logits_warper(generation_config)

    unfinished_sequences = input_ids.new(input_ids.shape[0]).fill_(1)
    scores = None
    while True:
        model_inputs = model.prepare_inputs_for_generation(
            input_ids, **model_kwargs)
        # forward pass to get next token
        outputs = model(
            **model_inputs,
            return_dict=True,
            output_attentions=False,
            output_hidden_states=False,
        )

        next_token_logits = outputs.logits[:, -1, :]

        # pre-process distribution
        next_token_scores = logits_processor(input_ids, next_token_logits)
        next_token_scores = logits_warper(input_ids, next_token_scores)

        # sample
        probs = nn.functional.softmax(next_token_scores, dim=-1)
        if generation_config.do_sample:
            next_tokens = torch.multinomial(probs, num_samples=1).squeeze(1)
        else:
            next_tokens = torch.argmax(probs, dim=-1)

        # update generated ids, model inputs, and length for next step
        input_ids = torch.cat([input_ids, next_tokens[:, None]], dim=-1)
        model_kwargs = model._update_model_kwargs_for_generation(
            outputs, model_kwargs, is_encoder_decoder=False)
        unfinished_sequences = unfinished_sequences.mul(
            (min(next_tokens != i for i in eos_token_id)).long())

        output_token_ids = input_ids[0].cpu().tolist()
        output_token_ids = output_token_ids[input_length:]
        for each_eos_token_id in eos_token_id:
            if output_token_ids[-1] == each_eos_token_id:
                output_token_ids = output_token_ids[:-1]
        response = tokenizer.decode(output_token_ids)

        yield response
        # stop when each sentence is finished
        # or if we exceed the maximum length
        if unfinished_sequences.max() == 0 or stopping_criteria(
                input_ids, scores):
            break


def on_btn_click():
    del st.session_state.messages


@st.cache_resource
def load_model():
    model = (AutoModelForCausalLM.from_pretrained('/root/ft/final_model',
                                                  trust_remote_code=True).to(
                                                      torch.bfloat16).cuda())
    tokenizer = AutoTokenizer.from_pretrained('/root/ft/final_model',
                                              trust_remote_code=True)
    return model, tokenizer


def prepare_generation_config():
    with st.sidebar:
        max_length = st.slider('Max Length',
                               min_value=8,
                               max_value=32768,
                               value=2048)
        top_p = st.slider('Top P', 0.0, 1.0, 0.75, step=0.01)
        temperature = st.slider('Temperature', 0.0, 1.0, 0.1, step=0.01)
        st.button('Clear Chat History', on_click=on_btn_click)

    generation_config = GenerationConfig(max_length=max_length,
                                         top_p=top_p,
                                         temperature=temperature)

    return generation_config


user_prompt = '<|im_start|>user\n{user}<|im_end|>\n'
robot_prompt = '<|im_start|>assistant\n{robot}<|im_end|>\n'
cur_query_prompt = ('<|im_start|>user\n{user}<|im_end|>\n'
                    '<|im_start|>assistant\n')


def combine_history(prompt):
    messages = st.session_state.messages
    meta_instruction = ('')
    total_prompt = f"<s><|im_start|>system\n{meta_instruction}<|im_end|>\n"
    for message in messages:
        cur_content = message['content']
        if message['role'] == 'user':
            cur_prompt = user_prompt.format(user=cur_content)
        elif message['role'] == 'robot':
            cur_prompt = robot_prompt.format(robot=cur_content)
        else:
            raise RuntimeError
        total_prompt += cur_prompt
    total_prompt = total_prompt + cur_query_prompt.format(user=prompt)
    return total_prompt


def main():
    # torch.cuda.empty_cache()
    print('load model begin.')
    model, tokenizer = load_model()
    print('load model end.')

    st.title('InternLM2-Chat-1.8B')

    generation_config = prepare_generation_config()

    # Initialize chat history
    if 'messages' not in st.session_state:
        st.session_state.messages = []

    # Display chat messages from history on app rerun
    for message in st.session_state.messages:
        with st.chat_message(message['role'], avatar=message.get('avatar')):
            st.markdown(message['content'])

    # Accept user input
    if prompt := st.chat_input('What is up?'):
        # Display user message in chat message container
        with st.chat_message('user'):
            st.markdown(prompt)
        real_prompt = combine_history(prompt)
        # Add user message to chat history
        st.session_state.messages.append({
            'role': 'user',
            'content': prompt,
        })

        with st.chat_message('robot'):
            message_placeholder = st.empty()
            for cur_response in generate_interactive(
                    model=model,
                    tokenizer=tokenizer,
                    prompt=real_prompt,
                    additional_eos_token_id=92542,
                    **asdict(generation_config),
            ):
                # Display robot response in chat message container
                message_placeholder.markdown(cur_response + '▌')
            message_placeholder.markdown(cur_response)
        # Add robot response to chat history
        st.session_state.messages.append({
            'role': 'robot',
            'content': cur_response,  # pylint: disable=undefined-loop-variable
        })
        torch.cuda.empty_cache()


if __name__ == '__main__':
    main()
```
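To see exactly what `combine_history` sends to the model, here is a Streamlit-free restatement. It takes the chat history as a plain list instead of reading `st.session_state`, and it reuses the template strings from web_demo.py. The function name `build_prompt` is hypothetical.

```python
# Template strings copied from web_demo.py
USER_PROMPT = '<|im_start|>user\n{user}<|im_end|>\n'
ROBOT_PROMPT = '<|im_start|>assistant\n{robot}<|im_end|>\n'
CUR_QUERY_PROMPT = '<|im_start|>user\n{user}<|im_end|>\n<|im_start|>assistant\n'


def build_prompt(history: list[dict], prompt: str,
                 meta_instruction: str = '') -> str:
    """Same logic as combine_history, with the chat history passed in explicitly."""
    total_prompt = f"<s><|im_start|>system\n{meta_instruction}<|im_end|>\n"
    for message in history:
        if message['role'] == 'user':
            total_prompt += USER_PROMPT.format(user=message['content'])
        elif message['role'] == 'robot':
            total_prompt += ROBOT_PROMPT.format(robot=message['content'])
        else:
            raise RuntimeError(f"unknown role: {message['role']}")
    # Finish with an open assistant turn so the model answers as the assistant
    return total_prompt + CUR_QUERY_PROMPT.format(user=prompt)


if __name__ == '__main__':
    print(build_prompt([{'role': 'user', 'content': 'Hi'},
                        {'role': 'robot', 'content': 'Hello!'}],
                       'Who are you?'))
```

The prompt always ends with an open `<|im_start|>assistant` turn. Generation should then stop when the model emits `<|im_end|>`. The script passes `additional_eos_token_id=92542`, which is assumed here to be that token's id in the InternLM2 tokenizer.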

Launch the web app:

```bash
streamlit run /root/ft/web_demo/InternLM/chat/web_demo.py --server.address 127.0.0.1 --server.port 6006
```
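In the running app, the sidebar's Top P and Temperature sliders reach `generate_interactive` through `GenerationConfig`. There, the transformers logits warper reshapes the next-token distribution before `torch.multinomial` samples from it. The pure-Python sketch below assumes the textbook definitions of temperature scaling and nucleus (top-p) filtering. The helper names are hypothetical, and this is not the transformers implementation.

```python
import math
import random
from typing import Optional


def softmax(logits: list[float]) -> list[float]:
    """Numerically stable softmax."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_p_filter(probs: list[float], top_p: float) -> list[float]:
    """Keep the most likely tokens until their cumulative probability reaches top_p, then renormalize."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = set(), 0.0
    for i in order:
        kept.add(i)
        cumulative += probs[i]
        if cumulative >= top_p:
            break
    mass = sum(probs[i] for i in kept)
    return [probs[i] / mass if i in kept else 0.0 for i in range(len(probs))]


def sample_next_token(logits: list[float], temperature: float = 0.1,
                      top_p: float = 0.75,
                      rng: Optional[random.Random] = None) -> int:
    """Temperature-scale the logits, apply top-p filtering, then sample one token id."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    probs = top_p_filter(softmax([x / temperature for x in logits]), top_p)
    if rng is None:
        rng = random.Random()
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


if __name__ == '__main__':
    # With the app's defaults (temperature 0.1, top_p 0.75) almost all mass is on one token
    print(top_p_filter(softmax([x / 0.1 for x in [2.0, 1.0, 0.5]]), 0.75))  # [1.0, 0.0, 0.0]
```

The guard against temperature 0 exists because dividing logits by zero is undefined, and the sidebar slider does allow 0.0. A low temperature combined with top-p leaves only the single most likely token, which makes replies nearly deterministic.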
