1. Optimizers

An optimizer updates the network's parameters, using the gradients computed by backpropagation.
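To make the update concrete, here is a minimal sketch, assuming plain SGD with no momentum or weight decay. The tensor `w` and the toy loss are hypothetical and chosen only for illustration. It compares one `optim.step()` against the hand-computed rule `w - lr * grad`.

```python
import torch

# Hypothetical toy parameter; any tensor with requires_grad=True would do
w = torch.tensor([1.0, -2.0], requires_grad=True)
optim = torch.optim.SGD([w], lr=0.1)

loss = (w ** 2).sum()      # toy loss whose gradient is 2 * w
optim.zero_grad()
loss.backward()            # fills w.grad with [2.0, -4.0]

# Manual SGD update computed before the optimizer changes w
expected = w.detach() - 0.1 * w.grad

optim.step()               # the optimizer applies the same rule in place
print(torch.allclose(w.detach(), expected))
```

With momentum, weight decay, or a different optimizer such as Adam, the update rule is more involved, so this equality holds only for the plain SGD case assumed here.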

Step through the following code in a debugger:

import torch
import torchvision
from torch import nn
from torch.nn import Sequential, Conv2d, MaxPool2d, Flatten, Linear
from torch.utils.data import DataLoader

# CIFAR10 test split, with each image converted to a tensor
dataset = torchvision.datasets.CIFAR10("./dataset", train=False,
                                       transform=torchvision.transforms.ToTensor(),
                                       download=True)
dataloader = DataLoader(dataset, batch_size=1)


class Cow(nn.Module):
    def __init__(self):
        super(Cow, self).__init__()
        self.model1 = Sequential(
            Conv2d(3, 32, 5, padding=2),
            MaxPool2d(2),
            Conv2d(32, 32, 5, padding=2),
            MaxPool2d(2),
            Conv2d(32, 64, 5, padding=2),
            MaxPool2d(2),
            Flatten(),
            Linear(1024, 64),  # 64 channels * 4 * 4 pixels = 1024 features
            Linear(64, 10)
        )

    def forward(self, x):
        x = self.model1(x)
        return x


loss = nn.CrossEntropyLoss()
cow = Cow()
optim = torch.optim.SGD(cow.parameters(), lr=0.01)

for data in dataloader:
    imgs, targets = data
    outputs = cow(imgs)
    result_loss = loss(outputs, targets)
    optim.zero_grad()       # clear the gradients left over from the previous step
    result_loss.backward()  # compute the gradient of the loss for every parameter
    optim.step()            # update the parameters using those gradients
    print(result_loss)
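The loop above calls `optim.zero_grad()` before every `backward()`. The sketch below shows why: `backward()` adds to any gradient already stored on a parameter. The single-element tensor `w` is a hypothetical stand-in for the network's parameters.

```python
import torch

# Hypothetical one-element parameter standing in for the network weights
w = torch.tensor([3.0], requires_grad=True)
optim = torch.optim.SGD([w], lr=0.01)

(w * 2).sum().backward()
first = w.grad.clone()          # gradient of 2*w is 2

(w * 2).sum().backward()        # no reset in between
accumulated = w.grad.clone()    # the second gradient was added to the first

optim.zero_grad()               # reset before the next backward pass
(w * 2).sum().backward()
after_reset = w.grad.clone()    # back to the gradient of a single pass

print(first, accumulated, after_reset)
```

Stepping through the training loop in a debugger and watching a parameter's `grad` attribute before and after each of the three calls should show the same pattern.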

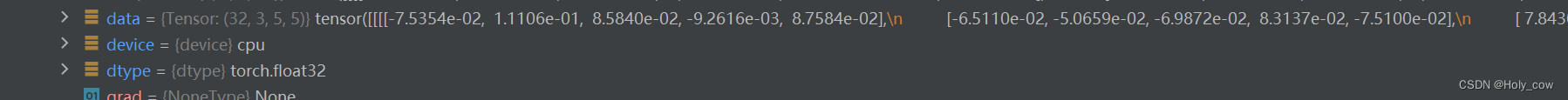

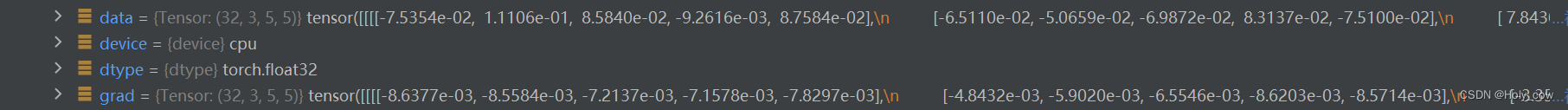

The result is as follows:

2. Training for Multiple Epochs

Code:

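for epoch in range(20):
    running_loss = 0.0  # sum of the batch losses in this epoch
    for data in dataloader:
        imgs, targets = data
        outputs = cow(imgs)
        result_loss = loss(outputs, targets)
        optim.zero_grad()
        result_loss.backward()
        optim.step()
        # result_loss is a tensor, so running_loss becomes a tensor that carries grad_fn
        running_loss = running_loss + result_loss
    print(running_loss)  # printed once per epoch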

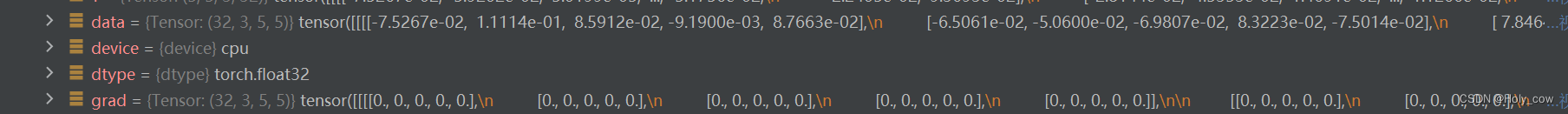

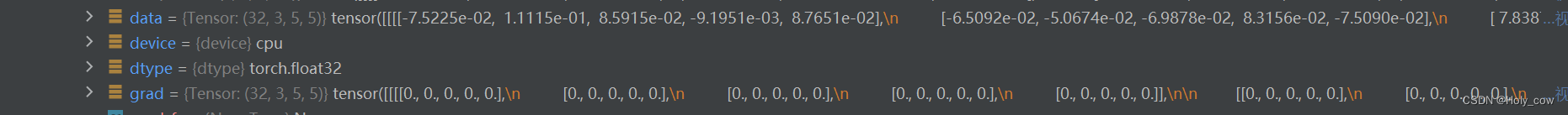

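A variable running_loss is defined to sum the loss over every batch in an epoch, and it is printed once per epoch. In this run the total falls over the first three epochs, then climbs steadily and becomes nan in the last three, so training diverged with these settings. The output is as follows: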

tensor(18701.4414, grad_fn=<AddBackward0>)

tensor(16160.4707, grad_fn=<AddBackward0>)

tensor(15474.5791, grad_fn=<AddBackward0>)

tensor(16005.3164, grad_fn=<AddBackward0>)

tensor(18091.4219, grad_fn=<AddBackward0>)

tensor(19951.2773, grad_fn=<AddBackward0>)

tensor(21966.7578, grad_fn=<AddBackward0>)

tensor(23601.1602, grad_fn=<AddBackward0>)

tensor(24501.1973, grad_fn=<AddBackward0>)

tensor(24851.4492, grad_fn=<AddBackward0>)

tensor(25541.3633, grad_fn=<AddBackward0>)

tensor(25367.8516, grad_fn=<AddBackward0>)

tensor(26323.0859, grad_fn=<AddBackward0>)

tensor(26919.5176, grad_fn=<AddBackward0>)

tensor(27632.3203, grad_fn=<AddBackward0>)

tensor(28924.0879, grad_fn=<AddBackward0>)

tensor(31648.1758, grad_fn=<AddBackward0>)

tensor(nan, grad_fn=<AddBackward0>)

tensor(nan, grad_fn=<AddBackward0>)

tensor(nan, grad_fn=<AddBackward0>)
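The `grad_fn=<AddBackward0>` in the output above shows that `running_loss` is a tensor still attached to the autograd graph. The sketch below is one possible variation, not the original code: it accumulates a plain Python float with `.item()` and stops once the total is no longer finite. The small `model`, `inputs`, and `targets` are hypothetical stand-ins for the `Cow` network and the CIFAR10 loader so that the example runs on its own.

```python
import math
import torch
from torch import nn

torch.manual_seed(0)

# Hypothetical stand-ins for the CIFAR10 data and the Cow network
inputs = torch.randn(8, 4)
targets = torch.randint(0, 3, (8,))
model = nn.Linear(4, 3)
loss_fn = nn.CrossEntropyLoss()
optim = torch.optim.SGD(model.parameters(), lr=0.01)

epoch_losses = []
for epoch in range(3):
    running_loss = 0.0
    for x, y in zip(inputs, targets):
        outputs = model(x.unsqueeze(0))            # batch of one sample
        result_loss = loss_fn(outputs, y.unsqueeze(0))
        optim.zero_grad()
        result_loss.backward()
        optim.step()
        running_loss += result_loss.item()         # plain float, no grad_fn
    if not math.isfinite(running_loss):
        print(f"epoch {epoch}: loss is not finite, stopping")
        break
    epoch_losses.append(running_loss)
    print(f"epoch {epoch}: total loss {running_loss:.4f}")
```

Stopping early only detects the divergence. It does not explain it. A smaller learning rate is a common first thing to try, though whether it fixes the run above is an assumption that would need testing.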
