1. Network Architecture

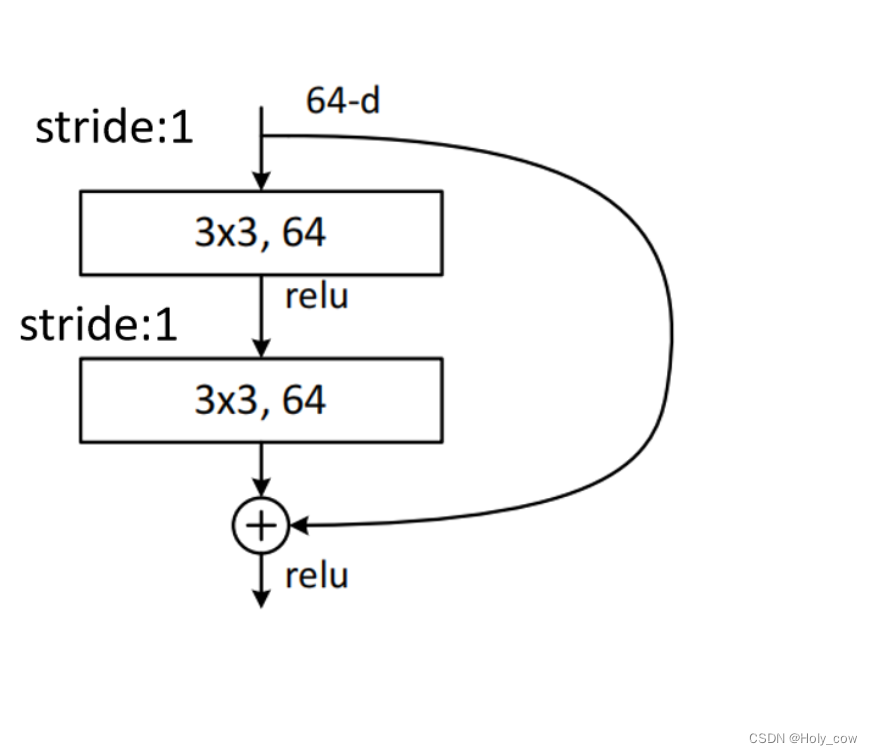

1.1 Residual Block

The code is as follows:

```python
import torch.nn as nn


class ResidualBlock(nn.Module):
    """Basic residual block: two 3x3 convolutions plus a shortcut connection."""

    def __init__(self, in_channels, out_channels, stride=1):
        super().__init__()
        # Main path: 3x3 conv (may downsample via stride) -> BN -> ReLU -> 3x3 conv -> BN
        self.conv1 = nn.Conv2d(in_channels, out_channels, kernel_size=3, stride=stride, padding=1, bias=False)
        self.bn1 = nn.BatchNorm2d(out_channels)
        self.relu = nn.ReLU(inplace=True)
        self.conv2 = nn.Conv2d(out_channels, out_channels, kernel_size=3, stride=1, padding=1, bias=False)
        self.bn2 = nn.BatchNorm2d(out_channels)

        # Shortcut: an empty Sequential returns its input unchanged (identity mapping)
        self.downsample = nn.Sequential()
        # If the spatial size or channel count changes, project the input with a
        # 1x1 convolution so it can be added to the main-path output
        if stride != 1 or in_channels != out_channels:
            self.downsample = nn.Sequential(
                nn.Conv2d(in_channels, out_channels, kernel_size=1, stride=stride, bias=False)
            )

    def forward(self, x):
        identity = x
        out = self.conv1(x)
        out = self.bn1(out)
        out = self.relu(out)
        out = self.conv2(out)
        out = self.bn2(out)
        out += self.downsample(identity)  # residual addition
        out = self.relu(out)
        return out
```
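The shortcut rule is easier to see with concrete numbers. The pure-Python sketch below needs no PyTorch and counts the learnable parameters of one `ResidualBlock` as written above. The helper name `residual_block_params` is hypothetical. Its `shortcut_bn` flag is an assumption that models torchvision's `BasicBlock`, where the 1x1 projection is followed by a BatchNorm layer. The block above omits that layer.

```python
def residual_block_params(in_channels, out_channels, stride=1, shortcut_bn=False):
    """Count learnable parameters of one basic residual block (bias-free convs)."""
    conv1 = in_channels * out_channels * 3 * 3   # 3x3 conv, may change channels
    conv2 = out_channels * out_channels * 3 * 3  # 3x3 conv, keeps channels
    bn = 2 * (2 * out_channels)                  # bn1 + bn2, each has weight and bias
    total = conv1 + conv2 + bn
    # Same condition as in ResidualBlock.__init__: project only when the shape changes
    if stride != 1 or in_channels != out_channels:
        total += in_channels * out_channels      # 1x1 projection conv, no bias
        if shortcut_bn:
            total += 2 * out_channels            # hypothetical BN on the shortcut (torchvision style)
    return total


print(residual_block_params(64, 64))                               # 73984 (identity shortcut)
print(residual_block_params(64, 128, stride=2))                    # 229888 (1x1 projection)
print(residual_block_params(64, 128, stride=2, shortcut_bn=True))  # 230144
```

The first two values match the per-layer counts reported for `layer1` and the first block of `layer2` in the run results.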

1.2 ResNet18 Architecture

The code is as follows:

```python
import torch
import torch.nn as nn

# Uses the ResidualBlock class defined in Section 1.1


class ResNet(nn.Module):
    """ResNet18: a 7x7 stem, four stages of two residual blocks each, and a linear classifier."""

    def __init__(self, num_classes=1000):
        super().__init__()
        # Stem: 7x7 conv with stride 2, then 3x3 max pooling with stride 2 (224 -> 112 -> 56)
        self.conv1 = nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3, bias=False)
        self.bn1 = nn.BatchNorm2d(64)
        self.relu = nn.ReLU(inplace=True)
        self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
        # Four stages of two blocks; stages 2-4 halve the spatial size and double the channels
        self.layer1 = self.make_layer(64, 64, blocks=2, stride=1)
        self.layer2 = self.make_layer(64, 128, blocks=2, stride=2)
        self.layer3 = self.make_layer(128, 256, blocks=2, stride=2)
        self.layer4 = self.make_layer(256, 512, blocks=2, stride=2)
        # Head: global average pooling followed by a fully connected classifier
        self.avgpool = nn.AdaptiveAvgPool2d((1, 1))
        self.fc = nn.Linear(512, num_classes)

    def make_layer(self, in_channels, out_channels, blocks, stride=1):
        # Only the first block may change the stride and channel count;
        # the remaining blocks map out_channels -> out_channels with stride 1
        layers = [ResidualBlock(in_channels, out_channels, stride)]
        for _ in range(1, blocks):
            layers.append(ResidualBlock(out_channels, out_channels))
        return nn.Sequential(*layers)

    def forward(self, x):
        out = self.conv1(x)
        out = self.bn1(out)
        out = self.relu(out)
        out = self.maxpool(out)
        out = self.layer1(out)
        out = self.layer2(out)
        out = self.layer3(out)
        out = self.layer4(out)
        out = self.avgpool(out)
        out = torch.flatten(out, 1)  # (N, 512, 1, 1) -> (N, 512)
        out = self.fc(out)
        return out
```
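The spatial sizes in the run results follow from the standard output-size formula for convolution and pooling, (H + 2p - k) // s + 1. The pure-Python sketch below traces a 224x224 input through the network using the kernel, stride and padding values from the code above. The helper names `out_size` and `spatial_trace` are hypothetical. The sketch also checks that the 1x1 stride-2 shortcut gives the same size as the 3x3 stride-2 main path, which is what lets `out += self.downsample(identity)` add two tensors of matching shape.

```python
def out_size(size, kernel, stride, padding=0):
    """Output height/width of a Conv2d or MaxPool2d (dilation=1, ceil_mode=False)."""
    return (size + 2 * padding - kernel) // stride + 1


def spatial_trace(size=224):
    """Return (stage name, spatial size) pairs for the ResNet18 above on a square input."""
    trace = []
    size = out_size(size, kernel=7, stride=2, padding=3)   # conv1
    trace.append(("conv1", size))
    size = out_size(size, kernel=3, stride=2, padding=1)   # maxpool
    trace.append(("maxpool", size))
    # 3x3 convs with stride 1 and padding 1 keep the size, so layer1 is unchanged
    trace.append(("layer1", out_size(size, kernel=3, stride=1, padding=1)))
    for stage in ("layer2", "layer3", "layer4"):
        main = out_size(size, kernel=3, stride=2, padding=1)  # first 3x3 conv of the stage
        shortcut = out_size(size, kernel=1, stride=2)         # 1x1 downsample conv
        assert main == shortcut, "residual addition needs matching shapes"
        size = main
        trace.append((stage, size))
    return trace


for name, size in spatial_trace():
    print(f"{name}: {size}x{size}")
# conv1: 112x112, maxpool: 56x56, layer1: 56x56, layer2: 28x28, layer3: 14x14, layer4: 7x7
```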

2. Run Results

```text
ResNet(
  (conv1): Conv2d(3, 64, kernel_size=(7, 7), stride=(2, 2), padding=(3, 3), bias=False)
  (bn1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
  (relu): ReLU(inplace=True)
  (maxpool): MaxPool2d(kernel_size=3, stride=2, padding=1, dilation=1, ceil_mode=False)
  (layer1): Sequential(
    (0): ResidualBlock(
      (conv1): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
      (bn1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (relu): ReLU(inplace=True)
      (conv2): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
      (bn2): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (downsample): Sequential()
    )
    (1): ResidualBlock(
      (conv1): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
      (bn1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (relu): ReLU(inplace=True)
      (conv2): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
      (bn2): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (downsample): Sequential()
    )
  )
  (layer2): Sequential(
    (0): ResidualBlock(
      (conv1): Conv2d(64, 128, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)
      (bn1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (relu): ReLU(inplace=True)
      (conv2): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
      (bn2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (downsample): Sequential(
        (0): Conv2d(64, 128, kernel_size=(1, 1), stride=(2, 2), bias=False)
      )
    )
    (1): ResidualBlock(
      (conv1): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
      (bn1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (relu): ReLU(inplace=True)
      (conv2): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
      (bn2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (downsample): Sequential()
    )
  )
  (layer3): Sequential(
    (0): ResidualBlock(
      (conv1): Conv2d(128, 256, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)
      (bn1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (relu): ReLU(inplace=True)
      (conv2): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
      (bn2): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (downsample): Sequential(
        (0): Conv2d(128, 256, kernel_size=(1, 1), stride=(2, 2), bias=False)
      )
    )
    (1): ResidualBlock(
      (conv1): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
      (bn1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (relu): ReLU(inplace=True)
      (conv2): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
      (bn2): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (downsample): Sequential()
    )
  )
  (layer4): Sequential(
    (0): ResidualBlock(
      (conv1): Conv2d(256, 512, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)
      (bn1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (relu): ReLU(inplace=True)
      (conv2): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
      (bn2): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (downsample): Sequential(
        (0): Conv2d(256, 512, kernel_size=(1, 1), stride=(2, 2), bias=False)
      )
    )
    (1): ResidualBlock(
      (conv1): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
      (bn1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (relu): ReLU(inplace=True)
      (conv2): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
      (bn2): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (downsample): Sequential()
    )
  )
  (avgpool): AdaptiveAvgPool2d(output_size=(1, 1))
  (fc): Linear(in_features=512, out_features=1000, bias=True)
)
```

```text
----------------------------------------------------------------
        Layer (type)               Output Shape         Param #
================================================================
            Conv2d-1         [-1, 64, 112, 112]           9,408
       BatchNorm2d-2         [-1, 64, 112, 112]             128
              ReLU-3         [-1, 64, 112, 112]               0
         MaxPool2d-4           [-1, 64, 56, 56]               0
            Conv2d-5           [-1, 64, 56, 56]          36,864
       BatchNorm2d-6           [-1, 64, 56, 56]             128
              ReLU-7           [-1, 64, 56, 56]               0
            Conv2d-8           [-1, 64, 56, 56]          36,864
       BatchNorm2d-9           [-1, 64, 56, 56]             128
             ReLU-10           [-1, 64, 56, 56]               0
    ResidualBlock-11           [-1, 64, 56, 56]               0
           Conv2d-12           [-1, 64, 56, 56]          36,864
      BatchNorm2d-13           [-1, 64, 56, 56]             128
             ReLU-14           [-1, 64, 56, 56]               0
           Conv2d-15           [-1, 64, 56, 56]          36,864
      BatchNorm2d-16           [-1, 64, 56, 56]             128
             ReLU-17           [-1, 64, 56, 56]               0
    ResidualBlock-18           [-1, 64, 56, 56]               0
           Conv2d-19          [-1, 128, 28, 28]          73,728
      BatchNorm2d-20          [-1, 128, 28, 28]             256
             ReLU-21          [-1, 128, 28, 28]               0
           Conv2d-22          [-1, 128, 28, 28]         147,456
      BatchNorm2d-23          [-1, 128, 28, 28]             256
           Conv2d-24          [-1, 128, 28, 28]           8,192
             ReLU-25          [-1, 128, 28, 28]               0
    ResidualBlock-26          [-1, 128, 28, 28]               0
           Conv2d-27          [-1, 128, 28, 28]         147,456
      BatchNorm2d-28          [-1, 128, 28, 28]             256
             ReLU-29          [-1, 128, 28, 28]               0
           Conv2d-30          [-1, 128, 28, 28]         147,456
      BatchNorm2d-31          [-1, 128, 28, 28]             256
             ReLU-32          [-1, 128, 28, 28]               0
    ResidualBlock-33          [-1, 128, 28, 28]               0
           Conv2d-34          [-1, 256, 14, 14]         294,912
      BatchNorm2d-35          [-1, 256, 14, 14]             512
             ReLU-36          [-1, 256, 14, 14]               0
           Conv2d-37          [-1, 256, 14, 14]         589,824
      BatchNorm2d-38          [-1, 256, 14, 14]             512
           Conv2d-39          [-1, 256, 14, 14]          32,768
             ReLU-40          [-1, 256, 14, 14]               0
    ResidualBlock-41          [-1, 256, 14, 14]               0
           Conv2d-42          [-1, 256, 14, 14]         589,824
      BatchNorm2d-43          [-1, 256, 14, 14]             512
             ReLU-44          [-1, 256, 14, 14]               0
           Conv2d-45          [-1, 256, 14, 14]         589,824
      BatchNorm2d-46          [-1, 256, 14, 14]             512
             ReLU-47          [-1, 256, 14, 14]               0
    ResidualBlock-48          [-1, 256, 14, 14]               0
           Conv2d-49            [-1, 512, 7, 7]       1,179,648
      BatchNorm2d-50            [-1, 512, 7, 7]           1,024
             ReLU-51            [-1, 512, 7, 7]               0
           Conv2d-52            [-1, 512, 7, 7]       2,359,296
      BatchNorm2d-53            [-1, 512, 7, 7]           1,024
           Conv2d-54            [-1, 512, 7, 7]         131,072
             ReLU-55            [-1, 512, 7, 7]               0
    ResidualBlock-56            [-1, 512, 7, 7]               0
           Conv2d-57            [-1, 512, 7, 7]       2,359,296
      BatchNorm2d-58            [-1, 512, 7, 7]           1,024
             ReLU-59            [-1, 512, 7, 7]               0
           Conv2d-60            [-1, 512, 7, 7]       2,359,296
      BatchNorm2d-61            [-1, 512, 7, 7]           1,024
             ReLU-62            [-1, 512, 7, 7]               0
    ResidualBlock-63            [-1, 512, 7, 7]               0
AdaptiveAvgPool2d-64            [-1, 512, 1, 1]               0
           Linear-65                 [-1, 1000]         513,000
================================================================
Total params: 11,687,720
Trainable params: 11,687,720
Non-trainable params: 0
----------------------------------------------------------------
Input size (MB): 0.57
Forward/backward pass size (MB): 61.45
Params size (MB): 44.59
Estimated Total Size (MB): 106.61
----------------------------------------------------------------

Process finished with exit code 0
```
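The parameter totals in the summary above can be checked by hand. The pure-Python sketch below sums the parameters stage by stage. The helper names `resnet18_params` and `to_mb` are hypothetical. The megabyte conversion assumes 4-byte float32 values and 1 MB = 1024**2 bytes, which reproduces the reported 44.59 MB and 0.57 MB. Setting `shortcut_bn=True` models torchvision-style projection shortcuts that include BatchNorm. That adds 2 x (128 + 256 + 512) = 1,792 parameters.

```python
def resnet18_params(num_classes=1000, shortcut_bn=False):
    """Count learnable parameters of the ResNet18 defined in this post."""

    def block(cin, cout, stride):
        n = cin * cout * 9 + cout * cout * 9 + 4 * cout  # two 3x3 convs + two BNs
        if stride != 1 or cin != cout:
            n += cin * cout                             # 1x1 projection shortcut
            if shortcut_bn:
                n += 2 * cout                           # assumed BN on the shortcut
        return n

    total = 3 * 64 * 7 * 7 + 2 * 64                     # stem conv1 + bn1
    stages = [(64, 64, 1), (64, 128, 2), (128, 256, 2), (256, 512, 2)]
    for cin, cout, stride in stages:
        total += block(cin, cout, stride) + block(cout, cout, 1)  # two blocks per stage
    total += 512 * num_classes + num_classes            # fc weight + bias
    return total


def to_mb(num_floats):
    """Size in MB of float32 values, using 1 MB = 1024**2 bytes."""
    return num_floats * 4 / 1024 ** 2


params = resnet18_params()
print(f"Total params: {params:,}")                          # Total params: 11,687,720
print(f"Params size (MB): {to_mb(params):.2f}")             # Params size (MB): 44.59
print(f"Input size (MB): {to_mb(3 * 224 * 224):.2f}")       # Input size (MB): 0.57
print(f"With BN shortcuts: {resnet18_params(shortcut_bn=True):,}")  # With BN shortcuts: 11,689,512
```

The BN-shortcut total, 11,689,512, matches the parameter count commonly reported for torchvision's `resnet18`.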

Appendix:

```python
import torch
import torch.nn as nn
from torchsummary import summary  # third-party package: pip install torchsummary


class ResidualBlock(nn.Module):
    """Basic residual block: two 3x3 convolutions plus a shortcut connection."""

    def __init__(self, in_channels, out_channels, stride=1):
        super().__init__()
        self.conv1 = nn.Conv2d(in_channels, out_channels, kernel_size=3, stride=stride, padding=1, bias=False)
        self.bn1 = nn.BatchNorm2d(out_channels)
        self.relu = nn.ReLU(inplace=True)
        self.conv2 = nn.Conv2d(out_channels, out_channels, kernel_size=3, stride=1, padding=1, bias=False)
        self.bn2 = nn.BatchNorm2d(out_channels)

        # Identity shortcut by default; 1x1 projection when the shape changes
        self.downsample = nn.Sequential()
        if stride != 1 or in_channels != out_channels:
            self.downsample = nn.Sequential(
                nn.Conv2d(in_channels, out_channels, kernel_size=1, stride=stride, bias=False)
            )

    def forward(self, x):
        identity = x
        out = self.conv1(x)
        out = self.bn1(out)
        out = self.relu(out)
        out = self.conv2(out)
        out = self.bn2(out)
        out += self.downsample(identity)  # residual addition
        out = self.relu(out)
        return out


class ResNet(nn.Module):
    """ResNet18: a 7x7 stem, four stages of two residual blocks each, and a linear classifier."""

    def __init__(self, num_classes=1000):
        super().__init__()
        self.conv1 = nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3, bias=False)
        self.bn1 = nn.BatchNorm2d(64)
        self.relu = nn.ReLU(inplace=True)
        self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
        self.layer1 = self.make_layer(64, 64, blocks=2, stride=1)
        self.layer2 = self.make_layer(64, 128, blocks=2, stride=2)
        self.layer3 = self.make_layer(128, 256, blocks=2, stride=2)
        self.layer4 = self.make_layer(256, 512, blocks=2, stride=2)
        self.avgpool = nn.AdaptiveAvgPool2d((1, 1))
        self.fc = nn.Linear(512, num_classes)

    def make_layer(self, in_channels, out_channels, blocks, stride=1):
        # The first block may downsample; the rest keep stride 1 and out_channels
        layers = [ResidualBlock(in_channels, out_channels, stride)]
        for _ in range(1, blocks):
            layers.append(ResidualBlock(out_channels, out_channels))
        return nn.Sequential(*layers)

    def forward(self, x):
        out = self.conv1(x)
        out = self.bn1(out)
        out = self.relu(out)
        out = self.maxpool(out)
        out = self.layer1(out)
        out = self.layer2(out)
        out = self.layer3(out)
        out = self.layer4(out)
        out = self.avgpool(out)
        out = torch.flatten(out, 1)  # (N, 512, 1, 1) -> (N, 512)
        out = self.fc(out)
        return out


device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')
model = ResNet().to(device)

print(model)
# torchsummary's summary() defaults to device="cuda"; pass the actual device type
# so the script also runs on CPU-only machines
summary(model, (3, 224, 224), device=device.type)
```
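The name "18" counts the weighted layers on the main path. These are the stem convolution, two 3x3 convolutions per residual block and the final fully connected layer. The 1x1 shortcut convolutions are conventionally not counted. The pure-Python sketch below, with a hypothetical helper `weighted_layers`, makes that count explicit for the `blocks=2` used in every stage above. It also shows the per-stage configuration [3, 4, 6, 3] that gives ResNet34. Building ResNet34 with the appendix code would require exposing the block counts as a constructor argument. The code above does not do that; it is an assumption about how one might extend it.

```python
def weighted_layers(blocks_per_stage):
    """Count conv + fc layers on the main path of a basic-block ResNet."""
    stem = 1                             # 7x7 conv1
    body = 2 * sum(blocks_per_stage)     # two 3x3 convs per residual block
    head = 1                             # final fully connected layer
    return stem + body + head


print(weighted_layers([2, 2, 2, 2]))     # 18 (blocks=2 in each of the four stages)
print(weighted_layers([3, 4, 6, 3]))     # 34 (ResNet34 configuration)
```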
