1、groupby

```
org.apache.spark.sql.AnalysisException: expression 'page_click.`time`' is neither present in the group by, nor is it an aggregate function. Add to group by or wrap in first() (or first_value) if you don't care which value you get.;
```

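Spark raises this error because the `time` column can hold several different values within each group, and Spark will not silently pick one. `time` must either be added to the GROUP BY or be aggregated. Use collect_set() to keep all distinct values, or first() if any single value will do.

MySQL does not raise an error here because, with ONLY_FULL_GROUP_BY disabled (the default before MySQL 5.7.5), it simply returns a value from the group. In practice this is often the first row's value, but MySQL does not guarantee which one.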
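Below is a minimal sketch of both fixes. It assumes a local SparkSession and a small hypothetical `page_click` DataFrame. The `user_id` column and the sample rows are made up; only the table name and `time` come from the error above. `collect_set` gathers the distinct values of each group into an array. `first` keeps one value per group, and Spark does not guarantee which one.

```scala
import org.apache.spark.sql.SparkSession
import org.apache.spark.sql.functions.{collect_set, count, first, sort_array}

val spark = SparkSession.builder().master("local[*]").appName("groupby-demo").getOrCreate()
import spark.implicits._

// Hypothetical page_click data: user_id and the rows are illustrative only
val pageClick = Seq(
  ("u1", "2021-01-01 10:00:00"),
  ("u1", "2021-01-01 11:00:00"),
  ("u2", "2021-01-02 09:30:00")
).toDF("user_id", "time")

// SELECT user_id, time ... GROUP BY user_id would fail with the AnalysisException above.
// Fix 1: collect every distinct time of the group into an array (sorted for stable output)
val allTimes = pageClick
  .groupBy("user_id")
  .agg(sort_array(collect_set("time")).as("times"), count("*").as("clicks"))

// Fix 2: keep one arbitrary time per group, similar to MySQL's permissive GROUP BY
val anyTime = pageClick
  .groupBy("user_id")
  .agg(first("time").as("time"), count("*").as("clicks"))

allTimes.orderBy("user_id").show(false)
anyTime.orderBy("user_id").show(false)
```

collect_set drops duplicates. If repeated values matter, collect_list keeps them.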

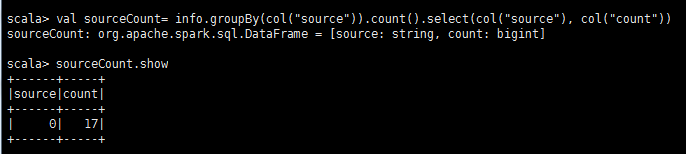

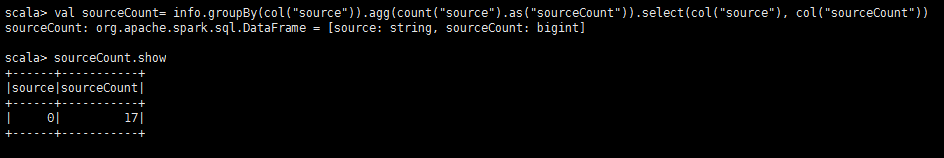

Correct:

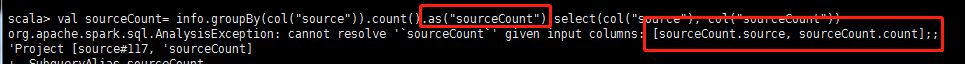

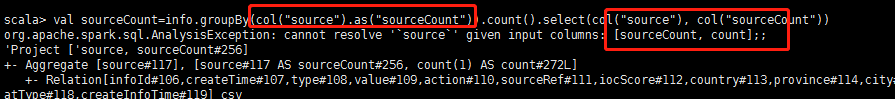

Wrong:

2、Dates

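Set the session time zone so that Spark interprets and displays timestamps in China Standard Time (UTC+8):

```scala
spark.conf.set("spark.sql.session.timeZone", "Asia/Shanghai")
```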

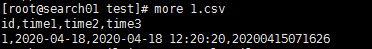

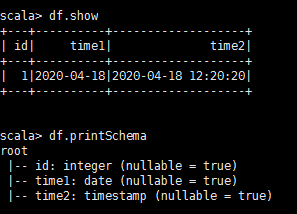
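The following minimal sketch shows what the setting does. It assumes a local SparkSession, and the helper `epochZeroIn` is hypothetical. The sketch formats the Unix epoch under two session time zones. `from_unixtime` renders its string in the session time zone, so the same instant comes out 8 hours apart.

```scala
import org.apache.spark.sql.SparkSession

val spark = SparkSession.builder().master("local[*]").appName("timezone-demo").getOrCreate()

// Hypothetical helper: set the session time zone, then format epoch second 0 as a string
def epochZeroIn(zone: String): String = {
  spark.conf.set("spark.sql.session.timeZone", zone)
  spark.sql("SELECT from_unixtime(0) AS t").head().getString(0)
}

val utc = epochZeroIn("UTC")                // 1970-01-01 00:00:00
val shanghai = epochZeroIn("Asia/Shanghai") // 1970-01-01 08:00:00
println(s"UTC: $utc, Asia/Shanghai: $shanghai")
```

The setting is per session, so it has to be applied again in every new SparkSession (or passed with `--conf` at submit time).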

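Read the CSV with an explicit schema (`time1` as a date, `time2` as a timestamp):

```scala
import org.apache.spark.sql.types.{DataTypes, StructField, StructType}

val schema = new StructType(Array(StructField("id", DataTypes.IntegerType),
  StructField("time1", DataTypes.DateType), StructField("time2", DataTypes.TimestampType)))

// header=true: treat the first line of the CSV as column names
val df = spark.read.option("header", "true").schema(schema).csv("/data/preHandle/1.csv")
```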
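The path above points at the author's own file. The sketch below tests a schema with `id` (int), `time1` (date) and `time2` (timestamp) against a few hypothetical in-memory CSV lines. It assumes a local SparkSession and uses `DataFrameReader.csv(Dataset[String])`, which accepts the same options as a file read. The explicit `dateFormat` and `timestampFormat` options are an added assumption so that parsing does not depend on the Spark version's defaults.

```scala
import org.apache.spark.sql.SparkSession
import org.apache.spark.sql.types.{DataTypes, StructField, StructType}

val spark = SparkSession.builder().master("local[*]").appName("csv-schema-demo").getOrCreate()
spark.conf.set("spark.sql.session.timeZone", "Asia/Shanghai")
import spark.implicits._

val schema = new StructType(Array(StructField("id", DataTypes.IntegerType),
  StructField("time1", DataTypes.DateType), StructField("time2", DataTypes.TimestampType)))

// Hypothetical CSV content standing in for a real file
val lines = Seq(
  "id,time1,time2",
  "1,2021-01-01,2021-01-01 10:00:00",
  "2,2024-01-01,2024-01-01 08:30:00"
).toDS()

// Explicit formats for the date and timestamp columns
val df = spark.read
  .option("header", "true")
  .option("dateFormat", "yyyy-MM-dd")
  .option("timestampFormat", "yyyy-MM-dd HH:mm:ss")
  .schema(schema)
  .csv(lines)

df.printSchema()
df.show(false)
```

Under the default PERMISSIVE mode, values that do not match these formats become null instead of failing the read.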

3、

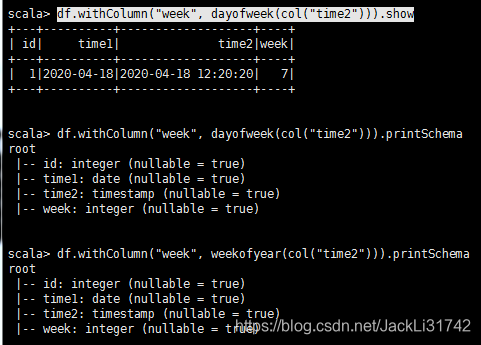

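```scala
import org.apache.spark.sql.functions.{col, dayofweek, weekofyear}

// dayofweek: 1 = Sunday, 2 = Monday, ..., 7 = Saturday
df.withColumn("week", dayofweek(col("time2"))).show()

// weekofyear: ISO 8601 week number (weeks start on Monday)
df.withColumn("week", weekofyear(col("time2"))).show()
```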
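The following minimal sketch makes the numbering conventions visible. It assumes a local SparkSession and two hand-picked dates. `dayofweek` counts from Sunday (1) to Saturday (7). `weekofyear` uses ISO 8601 weeks: a week starts on Monday, and week 1 is the first week with more than three days. As a result, early-January dates can land in week 52 or 53 of the previous year. The `iso_day` column is a hypothetical Monday-based variant computed by arithmetic, not a built-in function.

```scala
import org.apache.spark.sql.SparkSession
import org.apache.spark.sql.functions.{col, dayofweek, to_date, weekofyear}

val spark = SparkSession.builder().master("local[*]").appName("week-demo").getOrCreate()
import spark.implicits._

// 2021-01-01 is a Friday; 2024-01-01 is a Monday
val dates = Seq("2021-01-01", "2024-01-01").toDF("s").select(to_date(col("s")).as("d"))

val weeks = dates
  .withColumn("day_of_week", dayofweek(col("d")))   // 1 = Sunday ... 7 = Saturday
  .withColumn("week_of_year", weekofyear(col("d"))) // ISO 8601 week number
  // Hypothetical Monday-based numbering: 1 = Monday ... 7 = Sunday
  .withColumn("iso_day", (dayofweek(col("d")) + 5) % 7 + 1)

weeks.show()
```

For 2021-01-01 (a Friday) this gives day_of_week 6 and week_of_year 53. For 2024-01-01 (a Monday) it gives 2 and 1.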

4、

val df2=df.select(col("id").cast(
