import tensorflow as tf  # written against the TensorFlow 1.x API (tf.placeholder, tf.Session)

import numpy as np

import matplotlib.pyplot as plt

# 1-D dataset: y = x^2 + bias + Gaussian noise

x_data=np.linspace(-1,1,200)[np.newaxis,:]

bias=0.5

y_data=np.square(x_data)+bias+np.random.normal(0,0.05,x_data.shape)

class DNN:

    def __init__(self):
        # Keep the layer list on the instance; a class-level list would be
        # shared by every DNN object.
        self.args=[]
        self.layer_num=0

    def add_layer(self,input_size,output_size,activation_function=None):
        # Weights has shape [output_size, input_size] and bias is a column
        # vector, so each sample is expected as a column.
        self.layer_num=self.layer_num+1
        Weights=tf.Variable(tf.random_normal([output_size,input_size]))
        bias=tf.Variable(tf.zeros([output_size,1])+0.01)
        self.args.append({'Weights':Weights,'bias':bias,'act_fcn':activation_function})
        return {'Weights':Weights,'bias':bias,'act_fcn':activation_function}

    def forward_propagation(self,input_holder):
        # derive the output of a specific input
        result=input_holder
        for i in range(self.layer_num):
            if self.args[i]['act_fcn'] is None:
                # linear layer: no activation
                result=tf.matmul(self.args[i]['Weights'],result)+self.args[i]['bias']
            else:
                fcn=self.args[i]['act_fcn']
                result=fcn(tf.matmul(self.args[i]['Weights'],result)+self.args[i]['bias'])
        return result

#build the network

network=DNN()

network.add_layer(x_data.shape[0],10,activation_function=tf.nn.relu)

network.add_layer(10,10,activation_function=tf.nn.tanh)

network.add_layer(10,1,activation_function=None)

# input data set

x_data_holder=tf.placeholder(dtype=tf.float32,shape=x_data.shape)

# labels

y_data_holder=tf.placeholder(dtype=tf.float32,shape=y_data.shape)

#prediction

prediction=list(map(network.forward_propagation,tf.split(x_data_holder,x_data.shape[1],1)))

#loss function

loss=tf.reduce_mean(tf.square(tf.concat(prediction,axis=1)-y_data_holder))

train_step=tf.train.AdadeltaOptimizer(learning_rate=0.05).minimize(loss)

# training

sess=tf.Session()

## initialize the variables

init=tf.global_variables_initializer()

sess.run(init)

## train the model

loss_record=[]

prediction_record=[]

for episode in range(5000):
    print('episode = ', episode)
    _,loss_n,prediction_n =sess.run([train_step,loss,prediction],feed_dict={x_data_holder:x_data,y_data_holder:y_data})
    print(loss_n)
    loss_record.append(loss_n)
    prediction_record.append(prediction_n)

plt.figure(1)

plt.plot(loss_record)

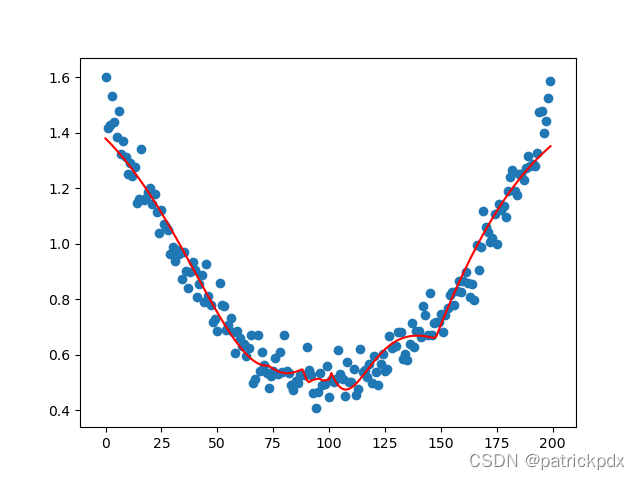

plt.figure(2)

plt.plot(np.arange(x_data.shape[1]),np.squeeze(prediction_record[-1]),'r-')

plt.scatter(np.arange(x_data.shape[1]),np.squeeze(y_data))

plt.show()
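The script feeds `forward_propagation` one column at a time through `tf.split`. Because `Weights` has shape `[output_size, input_size]` and `bias` has shape `[output_size, 1]`, the same arithmetic should also apply to the whole `(1, 200)` input at once, with the bias broadcasting across columns. The block below is a minimal NumPy sketch of that shape convention, not the TensorFlow code itself; `init_layer`, `forward`, and `relu` are hypothetical helper names introduced here for illustration only.

```python
import numpy as np

rng = np.random.default_rng(0)

def init_layer(input_size, output_size):
    # Same shape convention as add_layer: Weights is (output, input), bias is (output, 1).
    return {
        "Weights": rng.normal(size=(output_size, input_size)),
        "bias": np.full((output_size, 1), 0.01),
    }

def relu(z):
    return np.maximum(z, 0.0)

def forward(layers, activations, x):
    # x has shape (features, samples); every column is one sample.
    result = x
    for layer, act in zip(layers, activations):
        # The (output, 1) bias broadcasts across all sample columns.
        result = layer["Weights"] @ result + layer["bias"]
        if act is not None:
            result = act(result)
    return result

# Same layer sizes and activations as the network built in the script.
layers = [init_layer(1, 10), init_layer(10, 10), init_layer(10, 1)]
activations = [relu, np.tanh, None]

x = np.linspace(-1, 1, 200)[np.newaxis, :]   # shape (1, 200), like x_data

# One pass over the whole matrix.
batched = forward(layers, activations, x)

# Column-by-column pass, mirroring the tf.split + concat pattern.
per_column = np.concatenate(
    [forward(layers, activations, x[:, i:i + 1]) for i in range(x.shape[1])],
    axis=1,
)

print(batched.shape)                      # (1, 200)
print(np.allclose(batched, per_column))   # the two passes agree up to rounding
```

If the two results agree, the column-by-column split is a matter of graph construction rather than of the math: a single call on the full input builds one set of operations instead of 200.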

Training result:
