Setting Up and Testing a Spark Development Environment

(I did not use Maven; I added the jars to the project by hand.)

Environment

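CDH 5.8.4, managed by Cloudera Manager (CM)

Spark 1.6.0

Java 1.7

Scala 2.10.5, both locally and on the cluster. The IntelliJ IDEA Scala plugin is configured with the same version (the Scala versions must match).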
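A quick way to confirm that the local Scala matches the cluster is to print the version the program is actually running on. The sketch below is only an illustration: the object name `ScalaVersionCheck` and its helper functions are made up for this post, and the cluster version is hard-coded from the list above. It compares only the first two version components, on the assumption that what matters most is the binary version (2.10 here).

```scala
object ScalaVersionCheck {
  // Reduce a full version such as "2.10.5" to its binary version "2.10".
  def binaryVersion(full: String): String =
    full.split("\\.").take(2).mkString(".")

  // Two versions are treated as compatible when their binary versions are equal.
  def sameBinaryVersion(a: String, b: String): Boolean =
    binaryVersion(a) == binaryVersion(b)

  def main(args: Array[String]): Unit = {
    // Version of the Scala library on the current classpath.
    val local = scala.util.Properties.versionNumberString
    // Cluster version, copied by hand from the environment list (assumption).
    val cluster = "2.10.5"
    println(s"local Scala: $local, cluster Scala: $cluster")
    if (!sameBinaryVersion(local, cluster))
      println("WARNING: local and cluster Scala binary versions differ")
  }
}
```

Running it from the IDE shows which Scala library the project SDK really resolves to, which may differ from what the plugin settings suggest.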

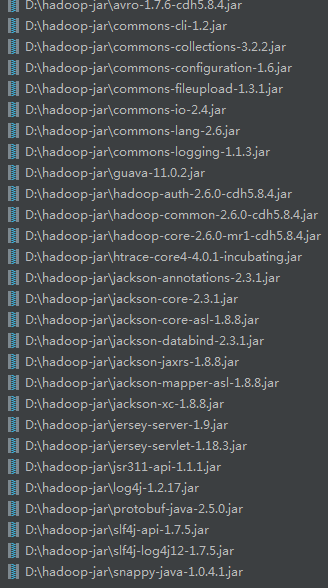

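Below are the errors I ran into and the jars I imported to fix them. All of the jars were downloaded from the cluster.

They are in the directory /opt/cloudera/parcels/CDH-5.8.4-1.cdh5.8.4.p0.5/jars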

1. Running locally

Missing Hadoop jars:

Error:scalac: Error: bad symbolic reference. A signature in SparkConf.class refers to term avro

in package org.apache which is not available.

Error:scalac: Error: bad symbolic reference. A signature in SparkRdd.class refers to term avro

in package org.apache which is not available.

Missing jar:

Error:scalac: Error: bad symbolic reference. A signature in SparkConf.class refers to term avro

in package org.apache which is not available.

It may be completely missing from the current classpath, or the version on

the classpath might be incompatible with the version used when compiling SparkConf.class.

scala.reflect.internal.Types$TypeError: bad symbolic reference. A signature in SparkConf.class refers to term avro

in package org.apache which is not available.

It may be completely missing from the current classpath, or the version on

the classpath might be incompatible with the version used when compiling SparkConf.class.

Missing jar:

Caused by: java.lang.ClassNotFoundException: com.google.common.collect.Maps

Missing jar:

Caused by: java.lang.ClassNotFoundException: org.apache.hadoop.util.PlatformName

Missing jar:

Caused by: java.lang.ClassNotFoundException: com.fasterxml.jackson.databind.Module

Missing jar:

Caused by: java.lang.ClassNotFoundException: com.fasterxml.jackson.core.Versioned

Missing jar:

Caused by: java.lang.ClassNotFoundException: com.fasterxml.jackson.annotation.JsonPropertyDescription

Missing jar:

Caused by: java.lang.ClassNotFoundException: org.apache.htrace.core.Tracer$Builder

Missing jar:

Caused by: java.lang.ClassNotFoundException: com.sun.jersey.spi.container.servlet.ServletContainer

Missing jar:

Caused by: java.lang.ClassNotFoundException: com.sun.jersey.spi.container.ContainerRequestFilter

Missing jar:

Caused by: java.lang.ClassNotFoundException: javax.ws.rs.WebApplicationException

Missing jar:

Caused by: java.lang.IllegalStateException: unread block data

Missing jar:

Caused by: java.lang.ClassNotFoundException: org.xerial.snappy.SnappyInputStream

Missing jar:

Error:scalac: bad symbolic reference. A signature in PairRDDFunctions.class refers to term mapred

in package org.apache.hadoop which is not available.

It may be completely missing from the current classpath, or the version on

the classpath might be incompatible with the version used when compiling PairRDDFunctions.class.
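Rather than hitting these ClassNotFoundException errors one run at a time, it can help to probe the classpath for all of the classes at once. The sketch below is an illustration, not part of the original setup: the object `ClasspathCheck` and its methods are hypothetical names, and the class list is simply copied from the error messages above. It does not say which jar provides each class, only which classes are still unresolved.

```scala
object ClasspathCheck {
  // Class names taken verbatim from the ClassNotFoundException messages.
  val requiredClasses: Seq[String] = Seq(
    "com.google.common.collect.Maps",
    "org.apache.hadoop.util.PlatformName",
    "com.fasterxml.jackson.databind.Module",
    "com.fasterxml.jackson.core.Versioned",
    "com.fasterxml.jackson.annotation.JsonPropertyDescription",
    "org.apache.htrace.core.Tracer$Builder",
    "com.sun.jersey.spi.container.servlet.ServletContainer",
    "com.sun.jersey.spi.container.ContainerRequestFilter",
    "javax.ws.rs.WebApplicationException",
    "org.xerial.snappy.SnappyInputStream"
  )

  // True if the class can be loaded; the class is not initialized.
  def isOnClasspath(className: String): Boolean =
    try {
      Class.forName(className, false, getClass.getClassLoader)
      true
    } catch {
      case _: ClassNotFoundException => false
      case _: LinkageError           => false // e.g. NoClassDefFoundError
    }

  // The subset of names that cannot be loaded.
  def missing(names: Seq[String]): Seq[String] =
    names.filterNot(isOnClasspath)

  def main(args: Array[String]): Unit = {
    val notFound = missing(requiredClasses)
    if (notFound.isEmpty) println("All listed classes are on the classpath.")
    else notFound.foreach(name => println(s"Not on classpath: $name"))
  }
}
```

Run it inside the same IDE module as the Spark job so that it sees the same set of imported jars.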

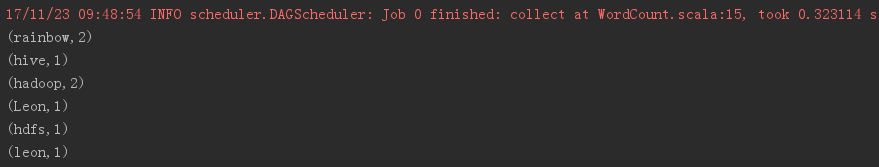

At this point the program runs successfully, although with warnings.

These are the jars I ended up with:

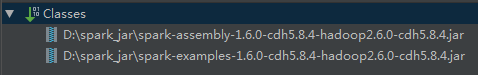

plus the Spark jars.

The next error:

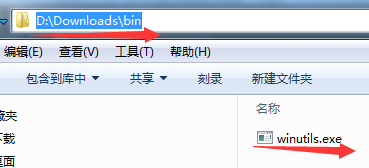

java.io.IOException: Could not locate executable null\bin\winutils.exe in the Hadoop binaries.

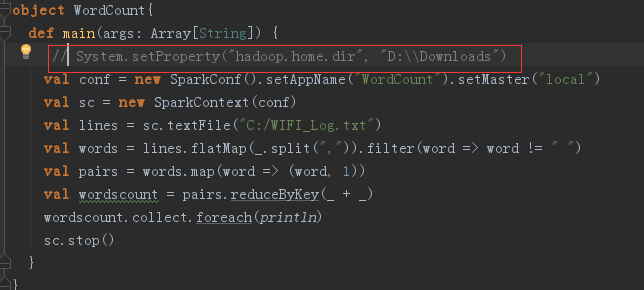

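To fix this, a line has to be added to the code (marked with a red box in the screenshot).

winutils.exe must sit inside a folder named bin, at the location shown below: create an empty folder named bin and download winutils.exe into it.

The program now runs successfully.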
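The `null` in `null\bin\winutils.exe` appears because Hadoop has no home directory to prepend: neither the `hadoop.home.dir` system property nor the `HADOOP_HOME` environment variable is set. A common fix is to set the property at the start of the program. The sketch below assumes that approach; the object `WinutilsSetup`, the method `configureHadoopHome`, and the path `D:\hadoop` are hypothetical and may not match the screenshot exactly.

```scala
import java.io.File

object WinutilsSetup {
  // Sets hadoop.home.dir only if <hadoopHome>\bin\winutils.exe exists.
  // Returns true when the property was set.
  def configureHadoopHome(hadoopHome: String): Boolean = {
    val winutils = new File(new File(hadoopHome, "bin"), "winutils.exe")
    if (winutils.isFile) {
      System.setProperty("hadoop.home.dir", new File(hadoopHome).getAbsolutePath)
      true
    } else {
      false
    }
  }

  def main(args: Array[String]): Unit = {
    // Hypothetical location: the folder that CONTAINS bin, not bin itself.
    val hadoopHome = "D:\\hadoop"
    if (!configureHadoopHome(hadoopHome))
      println(s"winutils.exe not found under $hadoopHome\\bin")
    // Create the SparkConf / SparkContext only after this point,
    // so that Hadoop sees the property when it initializes.
  }
}
```

Note that the property points at the parent of `bin`. Hadoop appends `\bin\winutils.exe` itself.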

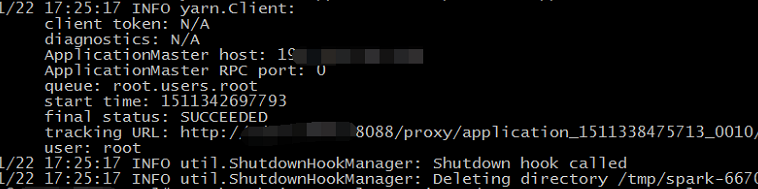

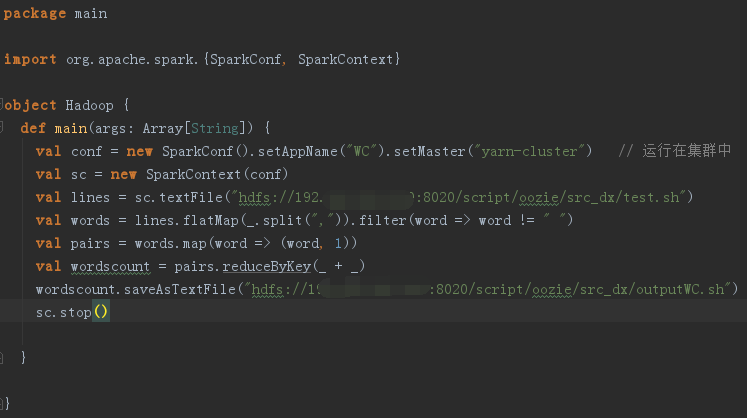

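2. Packaging a jar and submitting it to the cluster

The code is as follows. My Spark is the one installed through CM, and it is managed by YARN.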
