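When training deep learning models with PyTorch, you often need to print the model structure: each layer's name, shape, precision and parameter count, followed by the total parameter count.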

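The function below reports the following. The per-layer items are collected per parameter tensor, so a layer's `weight` and `bias` appear as separate entries.

- the model's total number of parameters
- the number of frozen (non-trainable) parameters
- the number of non-frozen (trainable) parameters
- the name of each layer
- the class name of each layer
- the shape of each layer
- the precision (dtype) of each layer
- the parameter count of each layer
- whether each layer is frozen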
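The three totals in the list above boil down to summing `numel()` over the model's parameters and splitting on `requires_grad`, where "frozen" means `requires_grad=False`. A minimal sketch, using a hypothetical helper `count_params` and a toy model whose first layer is frozen (neither appears in the original code):

```python
import torch.nn as nn


def count_params(model: nn.Module) -> dict:
    """Return total, trainable and frozen parameter counts as plain integers."""
    total = sum(p.numel() for p in model.parameters())
    trainable = sum(p.numel() for p in model.parameters() if p.requires_grad)
    return {"total": total, "trainable": trainable, "frozen": total - trainable}


# Hypothetical toy model: Linear(10, 20) holds 10*20 + 20 = 220 parameters,
# Linear(20, 5) holds 20*5 + 5 = 105 parameters; ReLU has none.
toy = nn.Sequential(nn.Linear(10, 20), nn.ReLU(), nn.Linear(20, 5))

# Freeze the first layer, as one might when fine-tuning only the head.
for p in toy[0].parameters():
    p.requires_grad_(False)

counts = count_params(toy)
print(counts)  # {'total': 325, 'trainable': 105, 'frozen': 220}
```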

Printing function

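```python
from typing import Dict, List, Tuple

import torch


def get_pytorch_model_info(model: torch.nn.Module) -> Tuple[Dict[str, str], List[Dict[str, str]]]:
    """
    Given a PyTorch model, return its total parameter count (formatted for readability)
    and, for each parameter tensor, its name, shape, precision, parameter count,
    whether it is trainable, and the class of the layer that owns it.

    :param model: PyTorch model
    :return: (total parameter info, list of per-tensor records [name, layer class,
             shape, dtype, parameter count, trainable])
    """
    params_list = []
    total_params = 0
    total_params_non_trainable = 0

    # Build the name -> module lookup once instead of on every iteration
    modules = dict(model.named_modules())

    for name, param in model.named_parameters():
        # Path of the module that owns this parameter, e.g. "layer1.0.conv1" for
        # "layer1.0.conv1.weight"; "" (the root model) if registered on the model itself
        layer_name = name.rpartition('.')[0]
        # Look up the owning module
        layer = modules[layer_name]
        # Class name of the owning module
        layer_class = layer.__class__.__name__

        params_count = param.numel()
        trainable = param.requires_grad

        params_list.append({
            'tensor': name,
            'layer_class': layer_class,
            'shape': str(list(param.size())),
            'precision': str(param.dtype).split('.')[-1],
            'params_count': str(params_count),
            'trainable': str(trainable),
        })

        total_params += params_count
        if not trainable:
            total_params_non_trainable += params_count

    total_params_trainable = total_params - total_params_non_trainable

    # format_size is defined in the complete test code below
    total_params_info = {
        'total_params': format_size(total_params),
        'total_params_trainable': format_size(total_params_trainable),
        'total_params_non_trainable': format_size(total_params_non_trainable),
    }
    return total_params_info, params_list
```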
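Parameter names from `named_parameters()` encode the full module path, so the first dotted component names only the top-level child: for ResNet-50, a tensor such as `layer1.0.conv1.weight` maps to the `layer1` `Sequential`, not to the `Conv2d` that actually owns it. The sketch below, on a hypothetical toy model, compares that prefix with the full owning path resolved through `nn.Module.get_submodule` (available in recent PyTorch versions; an empty path returns the model itself):

```python
import torch.nn as nn

# Hypothetical nested model: parameter names look like "block.0.weight".
model = nn.Sequential()
model.add_module("block", nn.Sequential(nn.Conv2d(3, 8, 3), nn.BatchNorm2d(8)))
model.add_module("head", nn.Linear(8, 2))

owners = {}
for name, _ in model.named_parameters():
    # First path component only: "block" for "block.0.weight".
    top_cls = type(model.get_submodule(name.split(".")[0])).__name__
    # Everything before the final attribute name: "block.0" for "block.0.weight".
    owner_cls = type(model.get_submodule(name.rpartition(".")[0])).__name__
    owners[name] = (top_cls, owner_cls)
    print(f"{name:16s} top-level={top_cls:12s} owner={owner_cls}")
```

Only the second lookup yields a per-layer class such as `Conv2d` or `BatchNorm2d`; BatchNorm's running statistics are buffers, so they do not show up in `named_parameters()` at all.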

Test example

We test it with ResNet-50:

```python
import torchvision

model = torchvision.models.resnet50(weights=None)
```
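Each `params_count` entry is simply `param.numel()`, i.e. the product of the entries in `shape`. As a hand check against two ResNet-50 layers (in torchvision, `conv1` is `Conv2d(3, 64, kernel_size=7, stride=2, padding=3, bias=False)` and `fc` is `Linear(2048, 1000)`), the sketch below rebuilds them as standalone layers so it runs without torchvision:

```python
import torch.nn as nn

# Standalone layers configured like ResNet-50's stem convolution and classifier head.
conv1 = nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3, bias=False)
fc = nn.Linear(2048, 1000)

conv1_params = sum(p.numel() for p in conv1.parameters())
fc_params = sum(p.numel() for p in fc.parameters())

# Conv2d weight: [out_channels, in_channels, kH, kW] -> 64 * 3 * 7 * 7, no bias.
print(list(conv1.weight.shape), conv1_params)  # [64, 3, 7, 7] 9408
# Linear weight: [out_features, in_features], plus a bias of length out_features.
print(list(fc.weight.shape), fc_params)        # [1000, 2048] 2049000
```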

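Complete test code:

```python
from typing import Dict, List, Tuple

import torch
import torchvision


def format_size(size):
    # Format the total parameter count as a short, human-readable string
    K, M, B = 1e3, 1e6, 1e9
    if size == 0:
        return '0'
    elif size < M:
        return f"{size / K:.1f}K"
    elif size < B:
        return f"{size / M:.1f}M"
    else:
        return f"{size / B:.1f}B"


def get_pytorch_model_info(model: torch.nn.Module) -> Tuple[Dict[str, str], List[Dict[str, str]]]:
    """
    Given a PyTorch model, return its total parameter count (formatted for readability)
    and, for each parameter tensor, its name, shape, precision, parameter count,
    whether it is trainable, and the class of the layer that owns it.

    :param model: PyTorch model
    :return: (total parameter info, list of per-tensor records [name, layer class,
             shape, dtype, parameter count, trainable])
    """
    params_list = []
    total_params = 0
    total_params_non_trainable = 0

    # Build the name -> module lookup once instead of on every iteration
    modules = dict(model.named_modules())

    for name, param in model.named_parameters():
        # Path of the module that owns this parameter ("" for the root model)
        layer_name = name.rpartition('.')[0]
        # Look up the owning module and take its class name
        layer_class = modules[layer_name].__class__.__name__

        params_count = param.numel()
        trainable = param.requires_grad

        params_list.append({
            'tensor': name,
            'layer_class': layer_class,
            'shape': str(list(param.size())),
            'precision': str(param.dtype).split('.')[-1],
            'params_count': str(params_count),
            'trainable': str(trainable),
        })

        total_params += params_count
        if not trainable:
            total_params_non_trainable += params_count

    total_params_trainable = total_params - total_params_non_trainable

    total_params_info = {
        'total_params': format_size(total_params),
        'total_params_trainable': format_size(total_params_trainable),
        'total_params_non_trainable': format_size(total_params_non_trainable),
    }
    return total_params_info, params_list


model = torchvision.models.resnet50(weights=None)
total_params_info, params_list = get_pytorch_model_info(model)
print(total_params_info)
for info in params_list:
    print(info)
```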
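To view `params_list` as an aligned text table rather than a list of dicts, one option is a small formatter. The helper `format_params_table` below is hypothetical, and a toy model stands in for ResNet-50 so the block runs on its own; its row keys mirror those built by `get_pytorch_model_info`, so the returned `params_list` can be passed in directly.

```python
from typing import Dict, List

import torch.nn as nn


def format_params_table(rows: List[Dict[str, str]]) -> str:
    """Render a list of dicts that share the same keys as a left-aligned text table."""
    if not rows:
        return ""
    headers = list(rows[0].keys())
    # Column width = the longest of the header and every cell in that column.
    widths = {h: max(len(h), *(len(str(r[h])) for r in rows)) for h in headers}
    lines = ["  ".join(h.ljust(widths[h]) for h in headers)]
    lines.append("  ".join("-" * widths[h] for h in headers))
    for r in rows:
        lines.append("  ".join(str(r[h]).ljust(widths[h]) for h in headers))
    return "\n".join(lines)


# Hypothetical toy model; the keys match the per-tensor records in the article.
toy = nn.Sequential(nn.Linear(4, 3), nn.ReLU(), nn.Linear(3, 2))
rows = [
    {
        "tensor": name,
        "layer_class": type(toy.get_submodule(name.rpartition(".")[0])).__name__,
        "shape": str(list(p.shape)),
        "precision": str(p.dtype).split(".")[-1],
        "params_count": str(p.numel()),
        "trainable": str(p.requires_grad),
    }
    for name, p in toy.named_parameters()
]
table = format_params_table(rows)
print(table)
```

Returning the string instead of printing inside the helper keeps it reusable, e.g. for writing the table to a log file.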

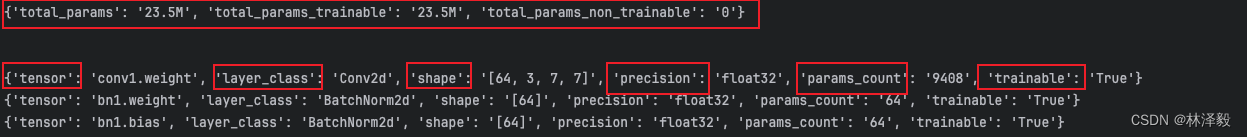

运行结果:

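This article presented a function for PyTorch models that reports the total, trainable and non-trainable parameter counts, along with each layer's name, shape, precision, parameter count and training status, and used ResNet-50 to show how to obtain these details.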
