0 Project dependencies

<properties>

<java.version>1.8</java.version>

</properties>

<dependencies>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-clients_2.12</artifactId>

<version>1.12.0</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-java</artifactId>

<version>1.12.0</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-streaming-java_2.12</artifactId>

<version>1.12.0</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-connector-jdbc_2.12</artifactId>

<version>1.12.0</version>

</dependency>

<dependency>

<groupId>mysql</groupId>

<artifactId>mysql-connector-java</artifactId>

<version>5.1.47</version>

</dependency>

</dependencies>

<build>

<plugins>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-compiler-plugin</artifactId>

<version>3.5.1</version>

<configuration>

<source>1.8</source>

<target>1.8</target>

</configuration>

</plugin>

</plugins>

</build>

1 Predefined Sources

- For file-based sources, to see the complete file printed to the console in its original order, call:

setParallelism(1)

import org.apache.flink.api.common.RuntimeExecutionMode;

import org.apache.flink.streaming.api.datastream.DataStream;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import java.util.Arrays;

public class SourceExamples {

public static void main(String[] args) throws Exception {

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

env.setRuntimeMode(RuntimeExecutionMode.STREAMING);

// From varargs elements

DataStream<String> ds1 = env.fromElements("hadoop,spark,flink", "hadoop spark hdfs", "flink");

// From a collection

DataStream<String> ds2 = env.fromCollection(Arrays.asList("hadoop,spark,flink", "hadoop spark hdfs", "flink"));

// From a local file

DataStream<String> ds3 = env.readTextFile("SourceExamples.java");

// From a file on HDFS

DataStream<String> ds4 = env.readTextFile("hdfs://localhost:9000/user/hadoop/flink/wordcount/output.txt");

// From a socket

DataStream<String> ds5 = env.socketTextStream("localhost", 9999);

ds1.print();

ds2.print();

ds3.print();

ds4.print();

ds5.print();

env.execute();

}

}
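Why the parallelism matters for ordered console output can be illustrated without Flink. The following is only a plain-Java analogy, not Flink code: each worker thread stands in for one parallel subtask, and the names `ParallelPrintSketch` and `emit` are made up for this sketch. With one worker the lines come out in input order; with several workers the same lines come out, but their relative order is not guaranteed.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.List;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.TimeUnit;

// Plain-Java analogy (NOT Flink code): one worker thread per "parallel subtask".
class ParallelPrintSketch {

    // Hands the lines out round-robin to `parallelism` workers and records
    // the order in which the workers emit them.
    static List<String> emit(List<String> lines, int parallelism) {
        List<String> printed = Collections.synchronizedList(new ArrayList<>());
        ExecutorService pool = Executors.newFixedThreadPool(parallelism);
        for (int w = 0; w < parallelism; w++) {
            final int worker = w;
            pool.submit(() -> {
                for (int i = worker; i < lines.size(); i += parallelism) {
                    printed.add(lines.get(i));
                }
            });
        }
        pool.shutdown();
        try {
            if (!pool.awaitTermination(10, TimeUnit.SECONDS)) {
                throw new IllegalStateException("workers did not finish in time");
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        return new ArrayList<>(printed);
    }

    public static void main(String[] args) {
        List<String> lines = Arrays.asList("line 1", "line 2", "line 3", "line 4");
        System.out.println(emit(lines, 1)); // one worker: input order is preserved
        System.out.println(emit(lines, 4)); // several workers: order may differ between runs
    }
}
```

In the Flink job itself, the corresponding step is calling setParallelism(1) on the operators involved, as the MySQL example later in this post does for both its source and its print sink.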

2 Custom Source

A custom source class extends RichParallelSourceFunction and overrides its run method (cancel must be implemented as well).

2.1 A custom class as the Source

import org.apache.flink.api.common.RuntimeExecutionMode;

import org.apache.flink.streaming.api.datastream.DataStream;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import org.apache.flink.streaming.api.functions.source.RichParallelSourceFunction;

import java.util.Random;

public class SourceExamplesUser {

public static void main(String[] args) throws Exception {

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

env.setRuntimeMode(RuntimeExecutionMode.AUTOMATIC);

DataStream<User> userDS = env.addSource(new UserSource());

userDS.print();

env.execute();

}

}

class User {

private int id;

private int gender;

public User(int id, int gender) {

this.id = id;

this.gender = gender;

}

@Override

public String toString() {

return "User{" +

"id=" + id +

", gender=" + gender +

'}';

}

}

class UserSource extends RichParallelSourceFunction<User> {

// volatile: cancel() is called from a different thread than run()
private volatile boolean flag = true;

@Override

public void run(SourceContext<User> out) throws Exception {

Random random = new Random();

while (flag){

Thread.sleep(1000);

int id = random.nextInt(100);

int gender = random.nextInt(2);

out.collect(new User(id,gender));

}

}

@Override

public void cancel() {

flag = false;

}

}

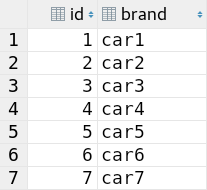
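The run/cancel pair in the source above is a cooperative-cancellation pattern: run loops on a flag, and cancel flips that flag from another thread. A minimal plain-Java sketch of the pattern follows. It assumes a hypothetical stand-in interface `SketchSource` in place of Flink's source interface, and the class names `CancelSketch` and `RandomIdSource` are likewise invented here, so it runs without a Flink dependency. The flag is declared volatile so that the write made in cancel is visible to the looping thread.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.Random;
import java.util.function.Consumer;

class CancelSketch {

    // Runs the source on a worker thread, cancels it after waitMillis,
    // and returns everything it emitted until then.
    static List<Integer> runFor(long waitMillis) {
        RandomIdSource source = new RandomIdSource();
        List<Integer> seen = Collections.synchronizedList(new ArrayList<>());
        Thread worker = new Thread(() -> {
            try {
                source.run(seen::add);
            } catch (Exception e) {
                throw new IllegalStateException(e);
            }
        });
        worker.start();
        try {
            Thread.sleep(waitMillis);
            source.cancel();          // called from a different thread than run()
            worker.join(5000);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        if (worker.isAlive()) {
            throw new IllegalStateException("source did not stop after cancel()");
        }
        return new ArrayList<>(seen);
    }

    public static void main(String[] args) {
        System.out.println("emitted before cancel: " + runFor(200).size());
    }
}

// Hypothetical stand-in for a source's run/cancel contract; NOT Flink's interface.
interface SketchSource<T> {
    void run(Consumer<T> out) throws Exception;
    void cancel();
}

class RandomIdSource implements SketchSource<Integer> {
    // volatile so the update made by cancel() is seen by the loop in run()
    private volatile boolean running = true;

    @Override
    public void run(Consumer<Integer> out) throws Exception {
        Random random = new Random();
        while (running) {
            out.accept(random.nextInt(100)); // ids in [0, 100), as in UserSource
            Thread.sleep(10);
        }
    }

    @Override
    public void cancel() {
        running = false;
    }
}
```

The same reasoning applies to the `flag` field of a real Flink source: without volatile, the looping thread is not guaranteed to observe the change made by cancel.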

2.2 MySQL as the Source

The MySQL table car (the code below reads its id and brand columns):

Code:

import org.apache.flink.api.common.RuntimeExecutionMode;

import org.apache.flink.configuration.Configuration;

import org.apache.flink.streaming.api.datastream.DataStream;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import org.apache.flink.streaming.api.functions.source.RichParallelSourceFunction;

import java.sql.Connection;

import java.sql.DriverManager;

import java.sql.PreparedStatement;

import java.sql.ResultSet;

import java.util.concurrent.TimeUnit;

public class SourceExamplesMySQL {

public static void main(String[] args) throws Exception {

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

env.setRuntimeMode(RuntimeExecutionMode.AUTOMATIC);

DataStream<Car> carDS = env.addSource(new MySQLSource()).setParallelism(1);

carDS.print().setParallelism(1);

env.execute();

}

}

class Car {

private int id;

private String brand;

public Car(int id, String brand) {

this.id = id;

this.brand = brand;

}

@Override

public String toString() {

return "Source.Car{" +

"id=" + id +

", brand='" + brand + '\'' +

'}';

}

}

class MySQLSource extends RichParallelSourceFunction<Car> {

// volatile: cancel() is called from a different thread than run()
private volatile boolean flag = true;

private Connection conn = null;

private PreparedStatement ps = null;

private ResultSet rs = null;

// open() is called once per parallel source instance, before run()

@Override

public void open(Configuration parameters) throws Exception {

// Load the driver and open the connection

conn = DriverManager.getConnection("jdbc:mysql://localhost:3306/hello_flink", "root", "root");

String sql = "select id,brand from car";

ps = conn.prepareStatement(sql);

}

@Override

public void run(SourceContext<Car> out) throws Exception {

while (flag) {

rs = ps.executeQuery();

while (rs.next()) {

int id = rs.getInt("id");

String brand = rs.getString("brand");

out.collect(new Car(id, brand));

}

TimeUnit.SECONDS.sleep(5);

}

}

@Override

public void cancel() {

flag = false;

}

@Override

public void close() throws Exception {

if (rs != null) rs.close();

if (ps != null) ps.close();

if (conn != null) conn.close();

}

}
