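TensorFlow 2.x removed the contrib module, so the sequence_loss_by_example function used in 1.x LSTM-RNN examples is no longer available. Here is a workaround that does not require downgrading from 2.x to 1.x.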

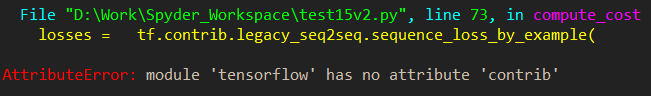

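While learning TensorFlow recently, I found that the LSTM-RNN example in Morvan's (莫烦) video tutorial uses sequence_loss_by_example() to compute the loss. The video was recorded with an older TensorFlow release, so running the example on 2.x raises the following error: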

AttributeError: module 'tensorflow_core.compat.v1' has no attribute 'contrib'

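This happens because the contrib module was removed in TensorFlow 2.x.

Solution:

In the folder "D:\DeepLearningTools\Anaconda3\envs\GPU\Lib\site-packages\tensorflow_core" (adjust the path to your own environment), create a file named "seq_loss.py" with the following complete contents: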

    from six.moves import xrange  # pylint: disable=redefined-builtin
    from six.moves import zip  # pylint: disable=redefined-builtin
    from tensorflow.python.framework import dtypes
    from tensorflow.python.framework import ops
    from tensorflow.python.ops import array_ops
    from tensorflow.python.ops import control_flow_ops
    from tensorflow.python.ops import embedding_ops
    from tensorflow.python.ops import math_ops
    from tensorflow.python.ops import nn_ops
    from tensorflow.python.ops import rnn
    from tensorflow.python.ops import rnn_cell_impl
    from tensorflow.python.ops import variable_scope
    from tensorflow.python.util import nest


    def sequence_loss_by_example(logits,
                                 targets,
                                 weights,
                                 average_across_timesteps=True,
                                 softmax_loss_function=None,
                                 name=None):
        if len(targets) != len(logits) or len(weights) != len(logits):
            raise ValueError("Lengths of logits, weights, and targets must be the same "
                             "%d, %d, %d." % (len(logits), len(weights), len(targets)))
        with ops.name_scope(name, "sequence_loss_by_example",
                            logits + targets + weights):
            log_perp_list = []
            for logit, target, weight in zip(logits, targets, weights):
                if softmax_loss_function is None:
                    # TODO(irving,ebrevdo): This reshape is needed because
                    # sequence_loss_by_example is called with scalars sometimes, which
                    # violates our general scalar strictness policy.
                    target = array_ops.reshape(target, [-1])
                    crossent = nn_ops.sparse_softmax_cross_entropy_with_logits(
                        labels=target, logits=logit)
                else:
                    crossent = softmax_loss_function(labels=target, logits=logit)
                log_perp_list.append(crossent * weight)
            log_perps = math_ops.add_n(log_perp_list)
            if average_across_timesteps:
                total_size = math_ops.add_n(weights)
                total_size += 1e-12  # Just to avoid division by 0 for all-0 weights.
                log_perps /= total_size
        return log_perps
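To make it easier to see what this function computes, here is a minimal plain-Python sketch of the same arithmetic for the default case (softmax_loss_function=None), with no TensorFlow dependency. The names `sparse_softmax_cross_entropy` and `sequence_loss_by_example_py` are hypothetical helpers introduced only for this illustration, and nested lists stand in for tensors; it is not a replacement for the module above.

```python
import math


def sparse_softmax_cross_entropy(logits_row, label):
    """Cross entropy of one example: -log(softmax(logits_row)[label])."""
    m = max(logits_row)  # subtract the max for numerical stability
    log_sum_exp = m + math.log(sum(math.exp(x - m) for x in logits_row))
    return log_sum_exp - logits_row[label]


def sequence_loss_by_example_py(logits, targets, weights,
                                average_across_timesteps=True):
    """Illustrative mirror of the weighted per-example sequence loss.

    logits:  list over timesteps of [batch][num_classes] floats
    targets: list over timesteps of [batch] integer class ids
    weights: list over timesteps of [batch] floats
    Returns a list with one loss value per batch element.
    """
    if len(targets) != len(logits) or len(weights) != len(logits):
        raise ValueError("Lengths of logits, weights, and targets must be the same")
    batch = len(targets[0])
    log_perps = [0.0] * batch
    # Sum the weighted cross entropy over all timesteps.
    for logit, target, weight in zip(logits, targets, weights):
        for i in range(batch):
            log_perps[i] += sparse_softmax_cross_entropy(logit[i], target[i]) * weight[i]
    if average_across_timesteps:
        for i in range(batch):
            # 1e-12 avoids division by zero when all weights are 0.
            total = sum(w[i] for w in weights) + 1e-12
            log_perps[i] /= total
    return log_perps


# Two timesteps, a batch of one, two classes with uniform logits.
logits = [[[0.0, 0.0]], [[0.0, 0.0]]]
targets = [[0], [1]]
weights = [[1.0], [1.0]]
losses = sequence_loss_by_example_py(logits, targets, weights)
```

With uniform logits over two classes, every timestep contributes a cross entropy of log 2, so the averaged loss for the single batch element is also approximately log 2.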

Then add the following import at the top of Morvan's example script:

from tensorflow_core import seq_loss

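Change the statement that computes the loss so that it calls the function from this module instead of the contrib version, keeping the same arguments: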

losses = seq_loss.sequence_loss_by_example

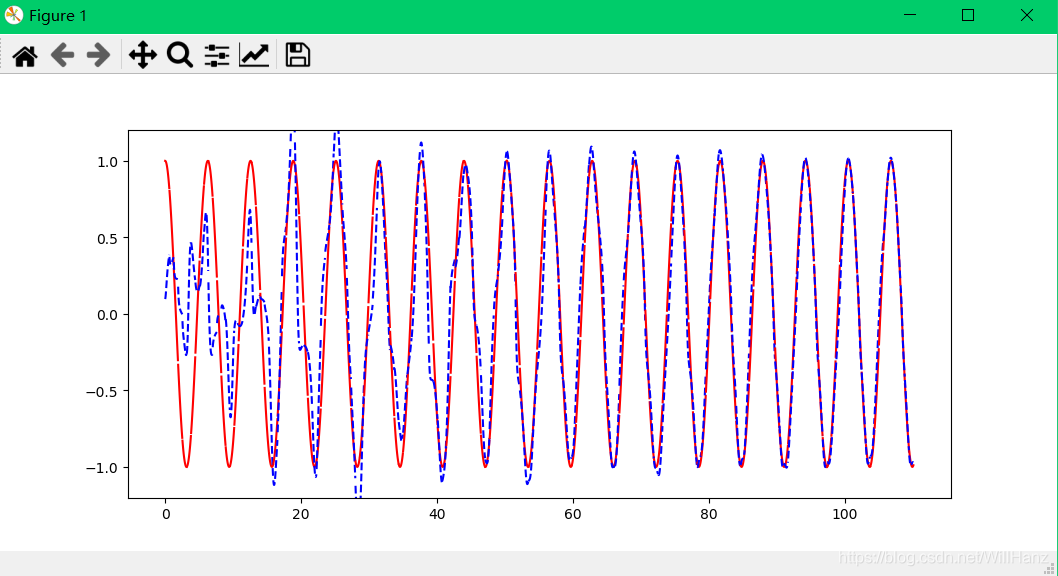

Run the script and it produces the correct result.
