背景

在翻译模型的基础上,实现对话生成网络,使用改造后的transformer对用户的提问和系统之前的回答分别进行encoder和decoder处理,decoder部分的数据经过处理后就是对当前用户提问的回答。

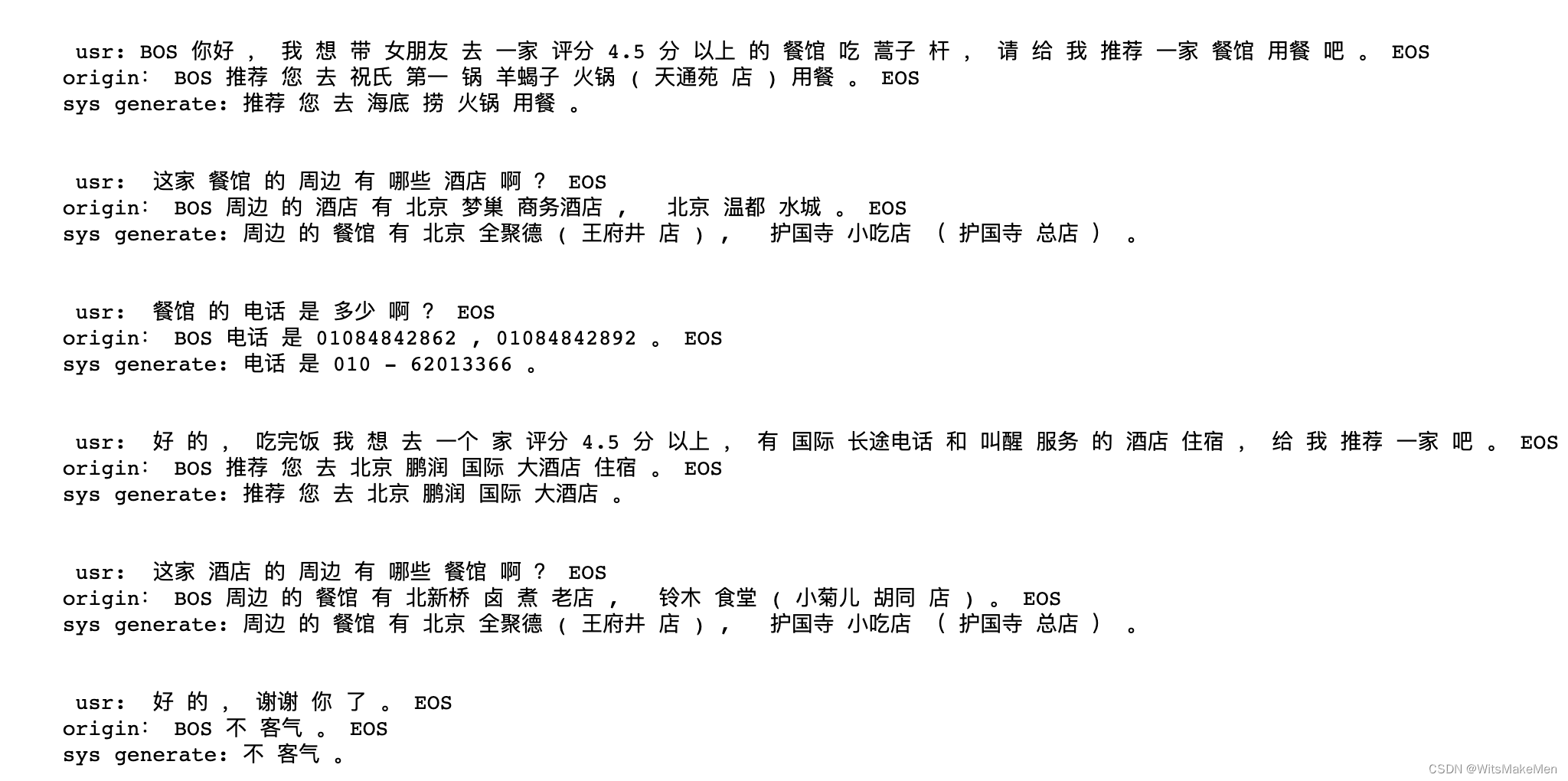

效果

问题

- 模型缺少知识图谱的支持,没有背景知识和常识,回答存在地理位置,常识价格等方面的错误。

- 对于之前的用户提问和系统回答,没有区分时间远近和重要度,存在答非所问的情况。

主要改动部分代码

数据生成

import numpy as np

import unicodedata

from torch.autograd import Variable

import torch

import re

from collections import Counter

UNK = 0 # 未登录词的标识符对应的词典id

PAD = 1 # padding占位符对应的词典id

import os

# os.environ["CUDA_VISIBLE_DEVICES"] = '2'

# DEVICE = torch.device("cuda" if torch.cuda.is_available() else "cpu")

import json

import jieba

# def seq_padding(X, padding=0):

# """

# 对一个batch批次(以单词id表示)的数据进行padding填充对齐长度

# """

# # 计算该批次数据各条数据句子长度

# L = [[len(x) for x in session] for session in X]

# # 获取该批次数据最大句子长度

# session_l = [len(session) for session in X]

# ML = max([max(l) for l in L])

# MSL = max(session_l)

# # 对X中各条数据x进行遍历,如果长度短于该批次数据最大长度ML,则以padding id填充缺失长度ML-len(x)

# x_padding = [[np.concatenate([sentence, [padding] * (ML - len(sentence))])

# if len(sentence) < ML else sentence for sentence in session] for session in X]

# # print('x')

# x_s_padding = [np.concatenate([session, [[padding] * ML for _ in range(MSL - len(session))]])

# if len(session) < MSL else session for session in x_padding]

# return np.array(x_s_padding)

def seq_padding(X, padding=0):

"""

对一个batch批次(以单词id表示)的数据进行padding填充对齐长度

"""

# 计算该批次数据各条数据句子长度

L = [len(x) for x in X]

# 获取该批次数据最大句子长度

ML = max(L)

# 对X中各条数据x进行遍历,如果长度短于该批次数据最大长度ML,则以padding id填充缺失长度ML-len(x)

x_padding = np.array([

np.concatenate([x, [padding] * (ML - len(x))]) if len(x) < ML else x for x in X

])

return x_padding

def subsequent_mask(size):

"Mask out subsequent positions."

# 设定subsequent_mask矩阵的shape

attn_shape = (1, size, size)

# TODO: 生成一个右上角(不含主对角线)为全1,左下角(含主对角线)为全0的subsequent_mask矩阵

subsequent_mask = np.triu(np.ones(attn_shape), k=1).astype('uint8')

# TODO: 返回一个右上角(不含主对角线)为全False,左下角(含主对角线)为全True的subsequent_mask矩阵

return torch.from_numpy(subsequent_mask) == 0

class Batch:

"Object for holding a batch of data with mask during training."

def __init__(self, src, trg, pad=0, DEVICE=None):

# 将输入与输出的单词id表示的数据规范成整数类型

# src = src.astype(int)

src = torch.from_numpy(src).to(DEVICE).long()

# print('src=', src.shape, src)

trg = torch.from_numpy(trg).to(DEVICE).long()

# trg = torch.from_numpy(trg).to(DEVICE).long()

self.src = src

# 对于当前输入的句子非空部分进行判断成bool序列

# 并在seq length前面增加一维,形成维度为 1×seq length 的矩阵

self.src_mask = (src != pad).unsqueeze(-2).to(DEVICE)

# 如果输出目标不为空,则需要对decoder要使用到的target句子进行mask

if trg is not None:

# decoder要用到的target输入部分

self.trg = trg[:, :-1]

# decoder训练时应预测输出的target结果

self.trg_y = trg[:, 1:]

# 将target输入部分进行attention mask

self.trg_mask = self.make_std_mask(self.trg, pad).to(DEVICE)

# 将应输出的target结果中实际的词数进行统计

self.ntokens = (self.trg_y != pad).data.sum().to(DEVICE)

# Mask掩码操作

@staticmethod

def make_std_mask(tgt, pad):

"Create a mask to hide padding and future words."

tgt_mask = (tgt != pad).unsqueeze(-2)

tgt_mask = tgt_mask & Variable(subsequent_mask(tgt.size(-1)).type_as(tgt_mask.data))

return tgt_mask

class PrepareData:

def __init__(self, train_file, eval_file, test_file, batch_size, DEVICE):

self.batch_size = batch_size

# 读取数据 并分词

self.train_src, self.train_tgt, _ = self.load_json_data(train_file)

self.eval_src, self.eval_tgt, _ = self.load_json_data(eval_file)

self.test_src, self.test_tgt, _ = self.load_json_data(test_file)

# 构建单词表, 对话问题,需要一个词汇表就够了

self.word_dict, self.total_words, self.index_dict = self.build_dict(self.train_src, self.train_tgt)

self.vocab_cap = len(self.word_dict)

#

# # id化

self.train_src, self.train_tgt = self.wordToID(self.train_src, self.train_tgt, self.word_dict)

self.eval_src, self.eval_tgt = self.wordToID(self.eval_src, self.eval_tgt, self.word_dict)

self.test_src, self.test_tgt = self.wordToID(self.test_src, self.test_tgt, self.word_dict)

# # 划分batch + padding + mask

self.train_data = self.splitBatch(self.train_src, self.train_tgt, batch_size, shuffle=True, DEVICE=DEVICE)

self.eval_data = self.splitBatch(self.eval_src, self.eval_tgt, batch_size, shuffle=False, DEVICE=DEVICE)

self.test_data = self.splitBatch(self.test_src, self.test_tgt, batch_size, shuffle=False, DEVICE=DEVICE)

# self.dev_data = self.splitBatch(self.dev_en, self.dev_cn, batch_size, DEVICE)

def load_json_data(self, path):

file_object = open(path, 'r') # 创建一个文件对象,也是一个可迭代对象

try:

# all_the_text = file_object.read() # 结果为str类型

json_obj = json.load(file_object)

train_src = []

train_tgt = []

meta_info = []

print('len data = ', len(json_obj.keys()))

for key in json_obj.keys():

content = json_obj.get(key)

messages = content.get('messages')

# src = []

# tgt = []

# start_src = True

# start_tgt = True

# end_src = None

# end_tgt = None

src_content = []

for m in messages:

content = m.get('content')

words = jieba.cut(content)

next_content = ['BOS']

src_content.append('BOS')

for w in words:

next_content.append(w)

src_content.append(w)

next_content.append('EOS')

src_content.append('EOS')

role = m.get('role')

if role == 'usr':

# if start_src:

# words_content.insert(0, 'BOT')

# start_src = False

# else:

# words_content.insert(0, 'BOS')

new_list = src_content[:]

train_src.append(new_list)

# end_src = words_content

else:

# if start_tgt:

# words_content.insert(0, 'BOT')

# start_tgt = False

# else:

# words_content.insert(0, 'BOS')

train_tgt.append(next_content)

# end_tgt = words_content

# end_src[-1] = 'EOT'

# end_tgt[-1] = 'EOT'

# train_src.append(src)

# train_tgt.append(tgt)

finally:

file_object.close()

return train_src, train_tgt, meta_info

def build_dict(self, src_dialogs, tgt_dialogs, max_words=60000):

"""

传入load_data构造的分词后的列表数据

构建词典(key为单词,value为id值)

"""

# 对数据中所有单词进行计数

# print('sentences=', sentences)

word_count = Counter()

for ss in src_dialogs:

for w in ss:

word_count[w] += 1

for ss1 in tgt_dialogs:

for w1 in ss1:

word_count[w1] += 1

ls = word_count.most_common(max_words)

# 统计词典的总词数

total_words = len(ls) + 2

word_dict = {w[0]: index + 2 for index, w in enumerate(ls)}

word_dict['UNK'] = UNK

word_dict['PAD'] = PAD

# 再构建一个反向的词典,供id转单词使用

index_dict = {v: k for k, v in word_dict.items()}

return word_dict, total_words, index_dict

def wordToID(self, train_src, train_tgt, word_dict, sort=True):

"""

将对话数据id话

"""

out_src_ids = [[word_dict.get(w, 0) for w in sent] for sent in train_src]

out_tgt_ids = [[word_dict.get(w, 0) for w in sent] for sent in train_tgt]

return out_src_ids, out_tgt_ids

def splitBatch(self, src, tgt, batch_size, shuffle=True, DEVICE=None):

"""

将以单词id列表表示的翻译前(英文)数据和翻译后(中文)数据

按照指定的batch_size进行划分

如果shuffle参数为True,则会对这些batch数据顺序进行随机打乱

"""

# 在按数据长度生成的各条数据下标列表[0, 1, ..., len(en)-1]中

# 每隔指定长度(batch_size)取一个下标作为后续生成batch的起始下标

idx_list = np.arange(0, len(src), batch_size)

# 如果shuffle参数为True,则将这些各batch起始下标打乱

if shuffle:

np.random.shuffle(idx_list)

# 存放各个batch批次的句子数据索引下标

batch_indexs = []

for idx in idx_list:

# 注意,起始下标最大的那个batch可能会超出数据大小

# 因此要限定其终止下标不能超过数据大小

batch_indexs.append(np.arange(idx, min(idx + batch_size, len(src))))

# 按各batch批次的句子数据索引下标,构建实际的单词id列表表示的各batch句子数据

batches = []

for batch_index in batch_indexs:

# 按当前batch的各句子下标(数组批量索引)提取对应的单词id列表句子表示数据

batch_src = [src[index] for index in batch_index]

batch_tgt = [tgt[index] for index in batch_index]

# 对当前batch的各个句子都进行padding对齐长度

# 维度为:batch数量×batch_size×每个batch最大句子长度

batch_src = seq_padding(batch_src)

batch_tgt = seq_padding(batch_tgt)

# 将当前batch的英文和中文数据添加到存放所有batch数据的列表中

batches.append(Batch(batch_src, batch_tgt, DEVICE=DEVICE))

return batches

if __name__ == '__main__':

train = '../data/dialogue/eval.json'

eval = '../data/dialogue/eval.json'

test = '../data/dialogue/eval.json'

prepareData = PrepareData(train, eval, test, 12, None)

# train_src, train_tgt, meta_info = prepareData.load_json_data(path)

# print('train_src=', train_src)

# print('\n\n========================\n\n')

# print('train_tgt=', train_tgt)

# words = jieba.cut('行,我都详细记录了,玩好了,接下来我想找一家豪华型的酒店住,你看有什么好的推荐没有啊?')

# for w in words:

# print('w=', w)

模型训练

import time

import torch

import torch.nn as nn

from torch.autograd import Variable

import os

import random

from dialogue_data_generator import *

import numpy as np

from dialogue_transformer_models import *

os.environ["CUDA_VISIBLE_DEVICES"] = '0'

DEVICE = torch.device("cuda" if torch.cuda.is_available() else "cpu")

class CustomLearnRateOptimizer:

"Optim wrapper that implements rate."

def __init__(self, model_size, factor, warmup, model):

self.optimizer = torch.optim.Adam(model.parameters(), lr=0, betas=(0.9, 0.98), eps=1e-9)

self._step = 0

self.warmup = warmup

self.factor = factor

self.model_size = model_size

self._rate = 0

def step(self):

"Update parameters and rate"

self._step += 1

rate = self.rate()

for p in self.optimizer.param_groups:

p['lr'] = rate

self._rate = rate

self.optimizer.step()

def rate(self, step=None):

"Implement `lrate` above"

if step is None:

step = self._step

return self.factor * (self.model_size ** (-0.5) * min(step ** (-0.5), step * self.warmup ** (-1.5)))

class LabelSmoothingLoss(nn.Module):

"""标签平滑处理"""

def __init__(self, size, padding_idx=0, smoothing=0.0):

super(LabelSmoothingLoss, self).__init__()

self.criterion = nn.KLDivLoss(reduction='sum')

self.padding_idx = padding_idx

self.confidence = 1.0 - smoothing

self.smoothing = smoothing

self.size = size

self.true_dist = None

def forward(self, x, target):

assert x.size(1) == self.size

x = x.to(DEVICE)

target = target.to(DEVICE)

true_dist = x.data.clone()

true_dist.fill_(self.smoothing / (self.size - 2))

true_dist.scatter_(1, target.data.unsqueeze(1), self.confidence)

true_dist[:, self.padding_idx] = 0

mask = torch.nonzero(target.data == self.padding_idx)

if mask.dim() > 0:

true_dist.index_fill_(0, mask.squeeze(), 0.0)

self.true_dist = true_dist

return self.criterion(x, Variable(true_dist, requires_grad=False))

class Trainer:

def __init__(self, epochs, tgt_vocab, D_MODEL, model, SAVE_FILE='model1.pt', MAX_LENGTH=100):

self.epochs = epochs

self.loss = LabelSmoothingLoss(tgt_vocab)

self.optimizer = CustomLearnRateOptimizer(D_MODEL, 1, 2000, model)

self.SAVE_FILE = SAVE_FILE

self.MAX_LENGTH = MAX_LENGTH

self.model = model

def compute_loss(self, x, y, norm):

# 计算loss,并且反向传播

loss = self.loss(x.contiguous().view(-1, x.size(-1)), y.contiguous().view(-1)) / norm

# print('loss=', loss.shape, loss)

loss.backward()

if self.optimizer is not None:

self.optimizer.step()

self.optimizer.optimizer.zero_grad()

return loss.data.item() * norm.float()

def train(self, data):

"""

训练并保存模型

"""

for p in self.model.parameters():

if p.dim() > 1:

# 这里初始化采用的是nn.init.xavier_uniform

nn.init.xavier_uniform_(p)

# 初始化模型在dev集上的最优Loss为一个较大值

best_dev_loss = 1e5

for epoch in range(self.epochs):

# 模型训练

self.model.train()

self.run_epoch(data.train_data, self.model, epoch)

self.model.eval()

# 在dev集上进行loss评估

print('>>>>> Evaluate')

dev_loss = self.run_epoch(data.eval_data, self.model, epoch, max_step=200)

print('<<<<< Evaluate loss: %f' % dev_loss)

# # TODO: 如果当前epoch的模型在dev集上的loss优于之前记录的最优loss则保存当前模型,并更新最优loss值

if dev_loss < best_dev_loss:

print('saving model...')

torch.save(self.model.state_dict(), self.SAVE_FILE)

best_dev_loss = dev_loss

print('>>>>>>>>>>>>>>> Evaluate case, epach=%d' % epoch)

self.evaluate(data)

print('>>>>>>>>>>>>>>> end evaluate case, epach=%d' % epoch)

def run_epoch(self, data, model, epoch, max_step=1000):

start = time.time()

total_tokens = 0.

total_loss = 0.

tokens = 0.

# print('data=', len(data), data)

for i, batch in enumerate(data):

if i>=max_step:

break

# print('batch.src=', batch.src, batch.src.shape)

# print('batch.trg=', batch.trg, batch.trg.shape)

out = model(batch.src, batch.trg, batch.src_mask, batch.trg_mask)

loss = self.compute_loss(out, batch.trg_y, batch.ntokens)

total_loss += loss

total_tokens += batch.ntokens

tokens += batch.ntokens

if i % 100 == 1:

elapsed = time.time() - start

print("Epoch %d Batch: %d Loss: %f Tokens per Sec: %fs" % (epoch, i - 1, loss / batch.ntokens, (tokens.float() / elapsed / 1000.)))

start = time.time()

tokens = 0

return total_loss / total_tokens

def evaluate(self, data):

"""

在data上用训练好的模型进行预测,打印模型翻译结果

"""

# 梯度清零

with torch.no_grad():

# 在data的英文数据长度上遍历下标

s = random.randint(0, len(data.test_src) - 15)

for j in range(10):

i = s + j

# print('i=', i)

# TODO: 打印待翻译的src句子

cn_sent = " ".join([data.index_dict[w] for w in data.test_src[i]])

items = cn_sent.split('EOS BOS')

print("\nusr: " + items[-1])

# TODO: 打印对应的tgt答案

en_sent = " ".join([data.index_dict[w] for w in data.test_tgt[i]])

print('origin:',"".join(en_sent))

# 将当前以单词id表示的英文句子数据转为tensor,并放如DEVICE中

src = torch.from_numpy(np.array(data.test_src[i])).long().to(DEVICE)

# 增加一维

src = src.unsqueeze(0)

# 设置attention mask

src_mask = (src != 0).unsqueeze(-2)

# 用训练好的模型进行decode预测

out = self.greedy_decode(src, src_mask, max_len=self.MAX_LENGTH, start_symbol=data.word_dict["BOS"])

# 初始化一个用于存放模型翻译结果句子单词的列表

translation = []

# 遍历翻译输出字符的下标(注意:开始符"BOS"的索引0不遍历)

for j in range(1, out.size(1)):

# 获取当前下标的输出字符

sym = data.index_dict[out[0, j].item()]

# 如果输出字符不为'EOS'终止符,则添加到当前句子的翻译结果列表

if sym != 'EOS':

translation.append(sym)

# 否则终止遍历

else:

break

# 打印模型翻译输出的中文句子结果

print("sys: %s\n" % " ".join(translation))

def greedy_decode(self, src, src_mask, max_len, start_symbol):

"""

传入一个训练好的模型,对指定数据进行预测

"""

# 先用encoder进行encode

memory = self.model.encode(src, src_mask)

# print('src=', src.shape, src)

# print('src_mask=', src_mask.shape, src_mask)

# print('memory=', memory.shape, memory)

# 初始化预测内容为1×1的tensor,填入开始符('BOS')的id,并将type设置为输入数据类型(LongTensor)

ys = torch.ones(1, 1).fill_(start_symbol).type_as(src.data)

# print('ys=', ys.shape, ys)

# 遍历输出的长度下标

for i in range(max_len - 1):

# decode得到隐层表示

tgt_msk = subsequent_mask(ys.size(1)).type_as(src.data)

# print('i=',i,'tgt_msk=', tgt_msk)

out = self.model.decode(memory,

src_mask,

Variable(ys),

Variable(tgt_msk))

# 将隐藏表示转为对词典各词的log_softmax概率分布表示

# print('i=',i,'out=', out.shape, out)

# out1 = out[:, -1]

# print('out1=', out1, out1.shape)

# prob = model.generator(out[:, -1])

# 获取当前位置最大概率的预测词id

_, next_word = torch.max(out, dim=2)

# print('i = ', i, ', out=', out.shape, out)

# print('i = ', i, ', next_word=', next_word.shape, next_word)

next_word = next_word.data[0, -1]

# 将当前位置预测的字符id与之前的预测内容拼接起来

ys = torch.cat([ys, torch.ones(1, 1).type_as(src.data).fill_(next_word)], dim=1)

# print('i = ', i, ' ,next_word=', next_word, ', ys=', ys)

return ys

if __name__=='__main__':

TRAIN_FILE = '../data/dialogue/eval.json'

DEV_FILE = '../data/dialogue/eval.json'

TEST_FILE = '../data/dialogue/test.json'

SAVE_FILE = 'model_dialog.pt'

h = 8

d_model = 512

d_ff = 2048

dropout = 0.1

N = 6

epochs = 1

# batch_size = 64

batch_size = 16

print('<<<<<<<<<<<<<<<start one step>>>>>>>>>>>>>>>>>>>>>\n1.start prepare data++++++++++++++++++++++\n')

data = PrepareData(TRAIN_FILE, DEV_FILE, TEST_FILE, batch_size, DEVICE)

print("vocab_cap %d" % data.vocab_cap)

print('self.train.len = ', len(data.train_src))

print('self.eval.len = ', len(data.eval_src))

print('self.test.len = ', len(data.test_src))

print('\n2.start to train++++++++++++++++++++++++\n')

transformer = Transformer(h, d_model, d_ff, dropout, N, data.vocab_cap, data.vocab_cap, DEVICE).to(DEVICE)

trainer = Trainer(epochs, data.vocab_cap, d_model, transformer, SAVE_FILE=SAVE_FILE, MAX_LENGTH=20)

trainer.train(data)

print('\n3.start to eval++++++++++++++++++++++++\n')

transformer.load_state_dict(torch.load(SAVE_FILE))

# trainer = Trainer(epochs, data.tgt_vocab, d_model, transformer, SAVE_FILE='model1.pt', MAX_LENGTH=5)

trainer.evaluate(data)

1061

1061

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?