类别不均衡学习的基本策略包括:1)对分类器的预测值缩放,改变阈值;2)对数据集里的反例进行欠采样;3)对正例进行过采样;

欠采样由于丢弃了大量反例,减少了训练集大小,训练时间开销相比过采样要大;

过采样法不能简单地对初始正例进行重复采样,这会导致过拟合;正确过采样的方法是对正例进行插值。

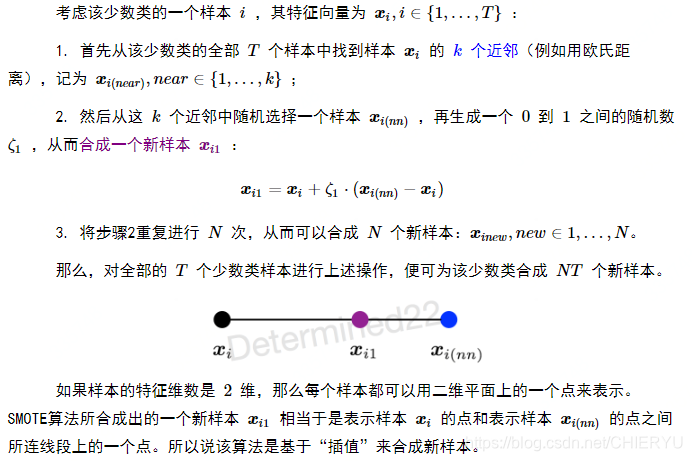

SMOTE的主要步骤根据 机器学习 —— 类不平衡问题与SMOTE过采样算法 的介绍如下所示:

下面分析github上一个代码:https://github.com/kaushalshetty/SMOTE/blob/master/smote.py

'''

Created on 27/03/2017

@author: Kaushal Shetty

This is an implementation of the SMOTE Algorithm.

See: "SMOTE: synthetic minority over-sampling technique" by

Chawla, N.V et al.

'''

import numpy as np

import random

from sklearn.neighbors import NearestNeighbors

import math

from random import randint

import matplotlib.pyplot as plt

from sklearn.decomposition import PCA

class Smote(object):

"""docstring for Smote"""

def __init__(self,distance):

super(Smote, self).__init__()

self.synthetic_arr= []

self.newindex = 0

self.distance_measure = distance

#输入第i个正例样本及其k个近邻样本indices,合成新正例样本,使得第i个样本的扩充数达到N,min_samples是所有正样本

#每个样本都扩充到N个,则总样本数就扩充到原来的1+N倍了

#假设原始正例数为52个,N=2,那么每个样本扩充数为2,最后总数达到52*(1+2)=156

def Populate(self,N,i,indices,min_samples,k):

"""

Populates the synthitic array

Returns:Synthetic Array to generate_syntheic_points

"""

while N!=0:

arr = []

nn = randint(0,k-2)#k-1个邻居中随机挑选一个与第i个样本插值,当然这个随机样本不能是第i个样本,所以此处个人觉得应该是nn= randint(1,k-1)才对啊,???#TODO

features = len(min_samples[0])

for attr in range(features):

diff = min_samples[indices[nn]][attr] - min_samples[i][attr]

gap = random.uniform(0,1)

arr.append(min_samples[i][attr] + gap*diff)

self.synthetic_arr.append(arr)

self.newindex = self.newindex + 1

N = N-1

def k_neighbors(self,euclid_distance,k):

nearest_idx_npy = np.empty([euclid_distance.shape[0],euclid_distance.shape[0]],dtype=np.int64)

for i in range(len(euclid_distance)):

idx = np.argsort(euclid_distance[i])

nearest_idx_npy[i] = idx

idx = 0

return nearest_idx_npy[:,1:k]

def find_k(self,X,k):

"""

Finds k nearest neighbors using euclidian distance

Returns: The k nearest neighbor

"""

euclid_distance = np.empty([X.shape[0],X.shape[0]],dtype = np.float32)

for i in range(len(X)):

dist_arr = []

for j in range(len(X)):

dist_arr.append(math.sqrt(sum((X[j]-X[i])**2)))

dist_arr = np.asarray(dist_arr,dtype = np.float32)

euclid_distance[i] = dist_arr

return self.k_neighbors(euclid_distance,k)

def generate_synthetic_points(self,min_samples,N,k):

"""

Returns (N/100) * n_minority_samples synthetic minority samples.

Parameters

----------

min_samples : Numpy_array-like, shape = [n_minority_samples, n_features]

Holds the minority samples

N : percetange of new synthetic samples:

n_synthetic_samples = N/100 * n_minority_samples. Can be < 100.

k : int. Number of nearest neighbours.

Returns

-------

S : Synthetic samples. array,

shape = [(N/100) * n_minority_samples, n_features].

"""

if N < 100:

raise ValueError("Value of N cannot be less than 100%")

if self.distance_measure not in ('euclidian','ball_tree'):

raise ValueError("Invalid Distance Measure.You can use only Euclidian or ball_tree")

if k>min_samples.shape[0]:

raise ValueError("Size of k cannot exceed the number of samples.")

N = int(N/100)

T = min_samples.shape[0]

if self.distance_measure == 'euclidian':

indices = self.find_k(min_samples,k)

elif self.distance_measure=='ball_tree':

nb = NearestNeighbors(n_neighbors = k,algorithm= 'ball_tree').fit(min_samples)

distance,indices = nb.kneighbors(min_samples)

indices = indices[:,1:]

for i in range(indices.shape[0]):

self.Populate(N,i,indices[i],min_samples,k)

return np.asarray(self.synthetic_arr)

def plot_synthetic_points(self,min_samples,N,k):

"""

Plot the over sampled synthtic samples in a scatterplot

"""

if N < 100:

raise ValueError("Value of N cannot be less than 100%")

if self.distance_measure not in ('euclidian','ball_tree'):

raise ValueError("Invalid Distance Measure.You can use only Euclidian or ball_tree")

if k>min_samples.shape[0]:

raise ValueError("Size of k cannot exceed the number of samples.")

synthetic_points = self.generate_synthetic_points(min_samples,N,k)

pca = PCA(n_components=2)

pca.fit(synthetic_points)

pca_synthetic_points = pca.transform(synthetic_points)

plt.scatter(pca_synthetic_points[:,0],pca_synthetic_points[:,1])

plt.show()

2817

2817

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?