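I. Project Overview

This project uses the large-model toolkit provided by PaddleNLP to fine-tune Baichuan2-7b/13b. It runs LoRA training on the dataset "Effective Case Records of TCM Treatment for COVID-19, Influenza, Mycoplasma and Related Infections" (《中医治疗新冠流感支原体感染等有效病历集》) so that the model can diagnose and treat upper respiratory tract infections such as COVID-19 and influenza using traditional Chinese medicine (TCM) protocols.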

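II. PaddleNLP

The PaddlePaddle large-model toolkit provided by PaddleNLP is designed around a one-stop experience, high performance, and ecosystem compatibility. It aims to provide a unified workflow for pre-training, fine-tuning (including SFT and PEFT), quantization, and inference of mainstream large models, helping developers customize large language models quickly, at low cost, and with a low barrier to entry. PaddleNLP supports fine-tuning strategies such as SFT, LoRA, and Prefix Tuning for several mainstream large models, and offers a unified, efficient fine-tuning solution: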

-

1. 统一训练入口。飞桨大模型套件精调方案可适配业界主流大模型,用户只需修改配置文件,即能在单卡或多卡(支持4D并行分布式策略)进行多种大模型精调。 -

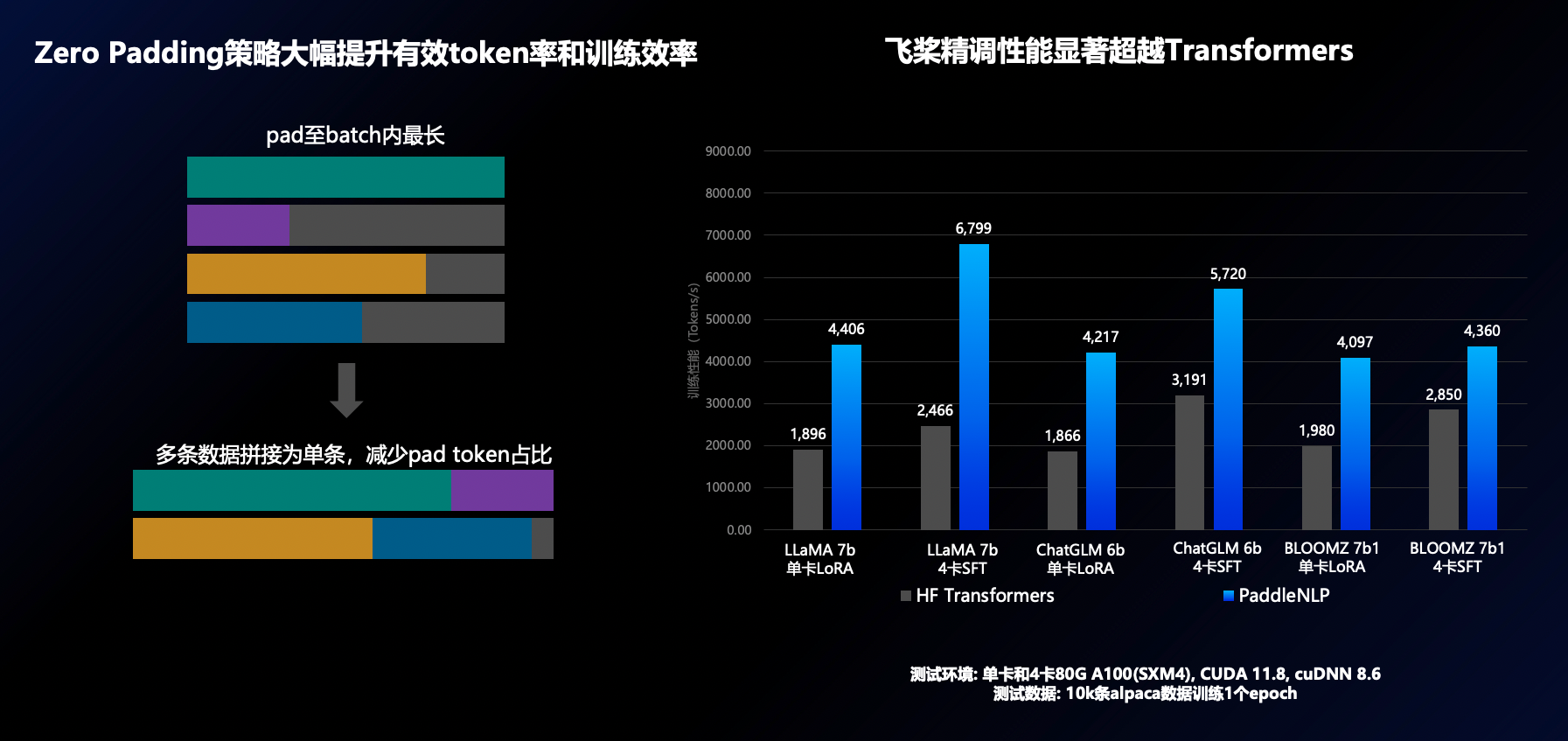

1. 高效数据和分布式策略。Zero Padding零填充优化策略有效减少了pad token的占比,提高模型训练效率高达100%。独创PEFT结合低比特和分布式并行策略,大幅降低大模型精调硬件门槛,支持单卡 (A100 80G)百亿模型微调、单机(A100 80G * 8)千亿模型微调。 -

1. 支持多轮对话。支持统一对话模板,支持多轮对话高效训练,详参多轮对话文档。
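
The idea behind reducing pad tokens can be illustrated with a small, library-independent sketch. It is not PaddleNLP's implementation: it only compares the fraction of pad tokens when every sample is padded to a fixed length with the fraction left after several short samples are packed into one slot of that length. The function names, the greedy first-fit packing rule, and the sample lengths are all assumptions made for illustration.

```python
from typing import List


def pad_ratio_padded(lengths: List[int], max_len: int) -> float:
    """Fraction of pad tokens when each sample is padded to max_len on its own."""
    total_slots = len(lengths) * max_len
    return 1 - sum(lengths) / total_slots


def pack_greedy(lengths: List[int], max_len: int) -> List[List[int]]:
    """Pack samples into as few max_len slots as a first-fit-decreasing pass finds.

    Illustrative packing rule only; assumes every sample fits in one slot.
    """
    if any(n > max_len for n in lengths):
        raise ValueError("every sample must be no longer than max_len")
    bins: List[List[int]] = []
    for n in sorted(lengths, reverse=True):
        for b in bins:
            if sum(b) + n <= max_len:
                b.append(n)  # the sample fits into an existing slot
                break
        else:
            bins.append([n])  # no slot had room, so open a new one
    return bins


def pad_ratio_packed(lengths: List[int], max_len: int) -> float:
    """Fraction of pad tokens that remains after packing."""
    bins = pack_greedy(lengths, max_len)
    return 1 - sum(lengths) / (len(bins) * max_len)


if __name__ == "__main__":
    sample_lengths = [300, 200, 500, 100, 400, 548]  # hypothetical token counts
    print(f"padded: {pad_ratio_padded(sample_lengths, 1024):.2%} pad tokens")
    print(f"packed: {pad_ratio_packed(sample_lengths, 1024):.2%} pad tokens")
```

With fewer pad tokens per batch, more of each training step is spent on real tokens, which is where the efficiency gain comes from.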

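III. Baichuan2-7b/13b-chat

The Baichuan2 series is the latest deep-learning work from Baichuan Intelligence (百川智能). After fine-tuning, the models perform well on a range of tasks. Open-sourcing them gives developers a powerful tool for building more efficient and more accurate AI applications across many scenarios.

The Baichuan 2 series is fully open source and follows through thoroughly on free commercial use. This goes a long way toward filling a gap in China's open-source ecosystem and gives Chinese developers an open-source large model that is better suited to Chinese-language scenarios.

The Baichuan2 models are also efficient: the quantized version of the 13-billion-parameter Baichuan2-13b can run fast inference even on a laptop with a consumer-grade GPU. For these reasons, we chose the Baichuan2 series as the base model for this project.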

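IV. Training Data

"Effective Case Records of TCM Treatment for COVID-19, Influenza, Mycoplasma and Related Infections" is a collection, compiled by Yun Zhongyi (云中医), of effective TCM diagnosis and treatment records for recently prevalent upper respiratory tract infections. It covers colds, coughs, and similar illnesses caused by COVID-19, influenza A, mycoplasma, adenovirus, respiratory syncytial virus, and other pathogens. During processing, the prescriptions and prescription drugs in the original records were de-emphasized, and treatment options based on OTC Chinese patent medicines and home dietary therapy were added. This avoids medical-licensing issues and possible disputes, and makes the data better suited to self-diagnosis and self-treatment of ordinary mild cases. Each record has two fields: case is the case record, and diagnosis is the diagnosis and prescription extracted from it. A sample record, in the original Chinese, looks like this: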

{"case":"患者,男性,45岁,因新冠感染前来就诊。患者近日出现恶寒、无汗、后背痛的症状,并有发热、身痛、头痛。背部疼痛严重,影响日常生活。患者还表现出清涕、鼻塞、神疲乏力、声哑、无食欲等症状。舌淡苔白,脉紧。根据患者的主症和症状关联,考虑为葛根汤证。葛根汤为中医经典方剂,主要用于治疗风寒感冒,尤其对于恶寒、无汗、后背痛等症状有显著疗效。综上所述,患者新冠感染后出现恶寒、无汗、后背痛、发热、身痛、头痛等症状,考虑为葛根汤证。建议采用葛根汤进行治疗。", "diagnosis":"诊断:太阳阳明伤寒 。建议处方:葛根汤。建议中成药:葛根汤颗粒或风寒感冒颗粒或感冒软胶囊 建议食疗:葱白姜汤"}

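PaddleNLP expects training data with one dictionary per line. Each dictionary contains the following fields:

src : str, List(str), the model's input instruction or prompt, i.e. the task the model should perform.

tgt : str, List(str), the model's output.

The training data therefore has to be converted into this format before training.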
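
The conversion described above could be sketched as follows. This is a minimal example, not the project's actual preprocessing script: the prompt text, the function names, and the file names in the usage note are hypothetical, and it assumes the raw data is stored with one JSON record per line, each with case and diagnosis fields.

```python
import json
from typing import Dict, Iterable, List

# Hypothetical instruction prefix; the project's real prompt is not given in the text.
PROMPT = "Diagnose the following case using TCM and suggest a treatment:\n"


def to_sft_record(record: Dict[str, str], prompt: str = PROMPT) -> Dict[str, str]:
    """Map one {"case", "diagnosis"} record to the {"src", "tgt"} layout."""
    return {
        "src": prompt + record["case"].strip(),
        "tgt": record["diagnosis"].strip(),
    }


def convert_lines(lines: Iterable[str]) -> List[str]:
    """Convert raw JSON lines into src/tgt JSON lines, skipping blank lines."""
    converted = []
    for line in lines:
        line = line.strip()
        if not line:
            continue
        record = to_sft_record(json.loads(line))
        # ensure_ascii=False keeps Chinese text readable in the output file.
        converted.append(json.dumps(record, ensure_ascii=False))
    return converted


def convert_file(src_path: str, dst_path: str) -> int:
    """Read raw records from src_path and write one src/tgt dict per line to dst_path."""
    with open(src_path, encoding="utf-8") as fin:
        converted = convert_lines(fin)
    with open(dst_path, "w", encoding="utf-8") as fout:
        for row in converted:
            fout.write(row + "\n")
    return len(converted)
```

A call such as convert_file("cases.jsonl", "train.json") would then produce the training file. Both file names are placeholders.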

V. Environment Setup

1. Get and install the latest PaddleNLP

In [1]

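# Clone the latest version directly from GitHub. If the network is a problem, you can clone from Gitee instead (the Gitee mirror may lag behind, so GitHub is preferred).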

#!git clone https://gitee.com/PaddlePaddle/PaddleNLP

!git clone https://github.com/PaddlePaddle/PaddleNLP.git

Cloning into 'PaddleNLP'...

remote: Enumerating objects: 60471, done.

remote: Counting objects: 100% (578/578), done.

remote: Compressing objects: 100% (423/423), done.

remote: Total 60471 (delta 271), reused 382 (delta 144), pack-reused 59893

Receiving objects: 100% (60471/60471), 97.72 MiB | 15.36 MiB/s, done.

Resolving deltas: 100% (41419/41419), done.

In [2]

# Install the locally cloned version.

!pip install -r PaddleNLP/requirements.txt

!pip install -e ./PaddleNLP

Looking in indexes: https://mirror.baidu.com/pypi/simple/, https://mirrors.aliyun.com/pypi/simple/, https://pypi.tuna.tsinghua.edu.cn/simple/

Ignoring protobuf: markers 'platform_system == "Windows"' don't match your environment

Requirement already satisfied: jieba in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from -r PaddleNLP/requirements.txt (line 1)) (0.42.1)

Requirement already satisfied: colorlog in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from -r PaddleNLP/requirements.txt (line 2)) (6.8.0)

Requirement already satisfied: colorama in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from -r PaddleNLP/requirements.txt (line 3)) (0.4.6)

Requirement already satisfied: seqeval in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from -r PaddleNLP/requirements.txt (line 4)) (1.2.2)

Requirement already satisfied: dill<0.3.5 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from -r PaddleNLP/requirements.txt (line 5)) (0.3.4)

Requirement already satisfied: multiprocess<=0.70.12.2 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from -r PaddleNLP/requirements.txt (line 6)) (0.70.12.2)

Requirement already satisfied: datasets>=2.0.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from -r PaddleNLP/requirements.txt (line 7)) (2.16.0)

Requirement already satisfied: tqdm in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from -r PaddleNLP/requirements.txt (line 8)) (4.66.1)

Requirement already satisfied: paddlefsl in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from -r PaddleNLP/requirements.txt (line 9)) (1.1.0)

Requirement already satisfied: sentencepiece in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from -r PaddleNLP/requirements.txt (line 10)) (0.1.99)

Requirement already satisfied: huggingface_hub>=0.11.1 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from -r PaddleNLP/requirements.txt (line 11)) (0.20.1)

Requirement already satisfied: onnx>=1.10.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from -r PaddleNLP/requirements.txt (line 12)) (1.15.0)

Requirement already satisfied: protobuf>=3.20.2 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from -r PaddleNLP/requirements.txt (line 13)) (3.20.3)

Requirement already satisfied: paddle2onnx in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from -r PaddleNLP/requirements.txt (line 15)) (1.1.0)

Requirement already satisfied: Flask-Babel in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from -r PaddleNLP/requirements.txt (line 16)) (4.0.0)

Requirement already satisfied: visualdl in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from -r PaddleNLP/requirements.txt (line 17)) (2.5.3)

Requirement already satisfied: fastapi in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from -r PaddleNLP/requirements.txt (line 18)) (0.105.0)

Requirement already satisfied: uvicorn in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from -r PaddleNLP/requirements.txt (line 19)) (0.25.0)

Requirement already satisfied: typer in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from -r PaddleNLP/requirements.txt (line 20)) (0.9.0)

Requirement already satisfied: rich in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from -r PaddleNLP/requirements.txt (line 21)) (13.7.0)

Requirement already satisfied: safetensors in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from -r PaddleNLP/requirements.txt (line 22)) (0.4.1)

Requirement already satisfied: tool_helpers in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from -r PaddleNLP/requirements.txt (line 23)) (0.1.1)

Requirement already satisfied: aistudio-sdk>=0.1.3 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from -r PaddleNLP/requirements.txt (line 24)) (0.1.5)

Requirement already satisfied: jinja2 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from -r PaddleNLP/requirements.txt (line 25)) (3.1.2)

Requirement already satisfied: numpy>=1.14.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from seqeval->-r PaddleNLP/requirements.txt (line 4)) (1.26.2)

Requirement already satisfied: scikit-learn>=0.21.3 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from seqeval->-r PaddleNLP/requirements.txt (line 4)) (1.3.2)

Requirement already satisfied: filelock in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from datasets>=2.0.0->-r PaddleNLP/requirements.txt (line 7)) (3.13.1)

Requirement already satisfied: pyarrow>=8.0.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from datasets>=2.0.0->-r PaddleNLP/requirements.txt (line 7)) (14.0.2)

Requirement already satisfied: pyarrow-hotfix in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from datasets>=2.0.0->-r PaddleNLP/requirements.txt (line 7)) (0.6)

Requirement already satisfied: pandas in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from datasets>=2.0.0->-r PaddleNLP/requirements.txt (line 7)) (2.1.4)

Requirement already satisfied: requests>=2.19.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from datasets>=2.0.0->-r PaddleNLP/requirements.txt (line 7)) (2.31.0)

Requirement already satisfied: xxhash in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from datasets>=2.0.0->-r PaddleNLP/requirements.txt (line 7)) (3.4.1)

Requirement already satisfied: fsspec<=2023.10.0,>=2023.1.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from fsspec[http]<=2023.10.0,>=2023.1.0->datasets>=2.0.0->-r PaddleNLP/requirements.txt (line 7)) (2023.10.0)

Requirement already satisfied: aiohttp in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from datasets>=2.0.0->-r PaddleNLP/requirements.txt (line 7)) (3.9.1)

Requirement already satisfied: packaging in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from datasets>=2.0.0->-r PaddleNLP/requirements.txt (line 7)) (23.2)

Requirement already satisfied: pyyaml>=5.1 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from datasets>=2.0.0->-r PaddleNLP/requirements.txt (line 7)) (6.0.1)

Requirement already satisfied: typing-extensions>=3.7.4.3 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from huggingface_hub>=0.11.1->-r PaddleNLP/requirements.txt (line 11)) (4.9.0)

Requirement already satisfied: Babel>=2.12 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from Flask-Babel->-r PaddleNLP/requirements.txt (line 16)) (2.14.0)

Requirement already satisfied: Flask>=2.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from Flask-Babel->-r PaddleNLP/requirements.txt (line 16)) (3.0.0)

Requirement already satisfied: pytz>=2022.7 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from Flask-Babel->-r PaddleNLP/requirements.txt (line 16)) (2023.3.post1)

Requirement already satisfied: bce-python-sdk in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from visualdl->-r PaddleNLP/requirements.txt (line 17)) (0.8.98)

Requirement already satisfied: Pillow>=7.0.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from visualdl->-r PaddleNLP/requirements.txt (line 17)) (10.1.0)

Requirement already satisfied: six>=1.14.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from visualdl->-r PaddleNLP/requirements.txt (line 17)) (1.16.0)

Requirement already satisfied: matplotlib in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from visualdl->-r PaddleNLP/requirements.txt (line 17)) (3.8.2)

Requirement already satisfied: rarfile in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from visualdl->-r PaddleNLP/requirements.txt (line 17)) (4.1)

Requirement already satisfied: psutil in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from visualdl->-r PaddleNLP/requirements.txt (line 17)) (5.9.7)

Requirement already satisfied: anyio<4.0.0,>=3.7.1 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from fastapi->-r PaddleNLP/requirements.txt (line 18)) (3.7.1)

Requirement already satisfied: pydantic!=1.8,!=1.8.1,!=2.0.0,!=2.0.1,!=2.1.0,<3.0.0,>=1.7.4 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from fastapi->-r PaddleNLP/requirements.txt (line 18)) (2.5.3)

Requirement already satisfied: starlette<0.28.0,>=0.27.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from fastapi->-r PaddleNLP/requirements.txt (line 18)) (0.27.0)

Requirement already satisfied: click>=7.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from uvicorn->-r PaddleNLP/requirements.txt (line 19)) (8.1.7)

Requirement already satisfied: h11>=0.8 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from uvicorn->-r PaddleNLP/requirements.txt (line 19)) (0.14.0)

Requirement already satisfied: markdown-it-py>=2.2.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from rich->-r PaddleNLP/requirements.txt (line 21)) (2.2.0)

Requirement already satisfied: pygments<3.0.0,>=2.13.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from rich->-r PaddleNLP/requirements.txt (line 21)) (2.17.2)

Requirement already satisfied: pybind11 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from tool_helpers->-r PaddleNLP/requirements.txt (line 23)) (2.11.1)

Requirement already satisfied: MarkupSafe>=2.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from jinja2->-r PaddleNLP/requirements.txt (line 25)) (2.1.3)

Requirement already satisfied: idna>=2.8 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from anyio<4.0.0,>=3.7.1->fastapi->-r PaddleNLP/requirements.txt (line 18)) (3.6)

Requirement already satisfied: sniffio>=1.1 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from anyio<4.0.0,>=3.7.1->fastapi->-r PaddleNLP/requirements.txt (line 18)) (1.3.0)

Requirement already satisfied: exceptiongroup in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from anyio<4.0.0,>=3.7.1->fastapi->-r PaddleNLP/requirements.txt (line 18)) (1.2.0)

Requirement already satisfied: Werkzeug>=3.0.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from Flask>=2.0->Flask-Babel->-r PaddleNLP/requirements.txt (line 16)) (3.0.1)

Requirement already satisfied: itsdangerous>=2.1.2 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from Flask>=2.0->Flask-Babel->-r PaddleNLP/requirements.txt (line 16)) (2.1.2)

Requirement already satisfied: blinker>=1.6.2 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from Flask>=2.0->Flask-Babel->-r PaddleNLP/requirements.txt (line 16)) (1.7.0)

Requirement already satisfied: attrs>=17.3.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from aiohttp->datasets>=2.0.0->-r PaddleNLP/requirements.txt (line 7)) (23.1.0)

Requirement already satisfied: multidict<7.0,>=4.5 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from aiohttp->datasets>=2.0.0->-r PaddleNLP/requirements.txt (line 7)) (6.0.4)

Requirement already satisfied: yarl<2.0,>=1.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from aiohttp->datasets>=2.0.0->-r PaddleNLP/requirements.txt (line 7)) (1.9.4)

Requirement already satisfied: frozenlist>=1.1.1 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from aiohttp->datasets>=2.0.0->-r PaddleNLP/requirements.txt (line 7)) (1.4.1)

Requirement already satisfied: aiosignal>=1.1.2 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from aiohttp->datasets>=2.0.0->-r PaddleNLP/requirements.txt (line 7)) (1.3.1)

Requirement already satisfied: async-timeout<5.0,>=4.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from aiohttp->datasets>=2.0.0->-r PaddleNLP/requirements.txt (line 7)) (4.0.3)

Requirement already satisfied: mdurl~=0.1 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from markdown-it-py>=2.2.0->rich->-r PaddleNLP/requirements.txt (line 21)) (0.1.1)

Requirement already satisfied: annotated-types>=0.4.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from pydantic!=1.8,!=1.8.1,!=2.0.0,!=2.0.1,!=2.1.0,<3.0.0,>=1.7.4->fastapi->-r PaddleNLP/requirements.txt (line 18)) (0.6.0)

Requirement already satisfied: pydantic-core==2.14.6 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from pydantic!=1.8,!=1.8.1,!=2.0.0,!=2.0.1,!=2.1.0,<3.0.0,>=1.7.4->fastapi->-r PaddleNLP/requirements.txt (line 18)) (2.14.6)

Requirement already satisfied: charset-normalizer<4,>=2 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from requests>=2.19.0->datasets>=2.0.0->-r PaddleNLP/requirements.txt (line 7)) (3.3.2)

Requirement already satisfied: urllib3<3,>=1.21.1 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from requests>=2.19.0->datasets>=2.0.0->-r PaddleNLP/requirements.txt (line 7)) (2.1.0)

Requirement already satisfied: certifi>=2017.4.17 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from requests>=2.19.0->datasets>=2.0.0->-r PaddleNLP/requirements.txt (line 7)) (2023.11.17)

Requirement already satisfied: scipy>=1.5.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from scikit-learn>=0.21.3->seqeval->-r PaddleNLP/requirements.txt (line 4)) (1.11.4)

Requirement already satisfied: joblib>=1.1.1 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from scikit-learn>=0.21.3->seqeval->-r PaddleNLP/requirements.txt (line 4)) (1.3.2)

Requirement already satisfied: threadpoolctl>=2.0.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from scikit-learn>=0.21.3->seqeval->-r PaddleNLP/requirements.txt (line 4)) (3.2.0)

Requirement already satisfied: pycryptodome>=3.8.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from bce-python-sdk->visualdl->-r PaddleNLP/requirements.txt (line 17)) (3.19.0)

Requirement already satisfied: future>=0.6.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from bce-python-sdk->visualdl->-r PaddleNLP/requirements.txt (line 17)) (0.18.3)

Requirement already satisfied: contourpy>=1.0.1 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from matplotlib->visualdl->-r PaddleNLP/requirements.txt (line 17)) (1.2.0)

Requirement already satisfied: cycler>=0.10 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from matplotlib->visualdl->-r PaddleNLP/requirements.txt (line 17)) (0.12.1)

Requirement already satisfied: fonttools>=4.22.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from matplotlib->visualdl->-r PaddleNLP/requirements.txt (line 17)) (4.47.0)

Requirement already satisfied: kiwisolver>=1.3.1 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from matplotlib->visualdl->-r PaddleNLP/requirements.txt (line 17)) (1.4.5)

Requirement already satisfied: pyparsing>=2.3.1 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from matplotlib->visualdl->-r PaddleNLP/requirements.txt (line 17)) (3.1.1)

Requirement already satisfied: python-dateutil>=2.7 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from matplotlib->visualdl->-r PaddleNLP/requirements.txt (line 17)) (2.8.2)

Requirement already satisfied: tzdata>=2022.1 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from pandas->datasets>=2.0.0->-r PaddleNLP/requirements.txt (line 7)) (2023.3)

Looking in indexes: https://mirror.baidu.com/pypi/simple/, https://mirrors.aliyun.com/pypi/simple/, https://pypi.tuna.tsinghua.edu.cn/simple/

Obtaining file:///home/aistudio/PaddleNLP

Installing build dependencies ... done

Checking if build backend supports build_editable ... done

Getting requirements to build editable ... done

Installing backend dependencies ... done

Preparing editable metadata (pyproject.toml) ... done

Requirement already satisfied: jieba in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from paddlenlp==2.6.1.post0) (0.42.1)

Requirement already satisfied: colorlog in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from paddlenlp==2.6.1.post0) (6.8.0)

Requirement already satisfied: colorama in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from paddlenlp==2.6.1.post0) (0.4.6)

Requirement already satisfied: seqeval in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from paddlenlp==2.6.1.post0) (1.2.2)

Requirement already satisfied: dill<0.3.5 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from paddlenlp==2.6.1.post0) (0.3.4)

Requirement already satisfied: multiprocess<=0.70.12.2 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from paddlenlp==2.6.1.post0) (0.70.12.2)

Requirement already satisfied: datasets>=2.0.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from paddlenlp==2.6.1.post0) (2.16.0)

Requirement already satisfied: tqdm in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from paddlenlp==2.6.1.post0) (4.66.1)

Requirement already satisfied: paddlefsl in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from paddlenlp==2.6.1.post0) (1.1.0)

Requirement already satisfied: sentencepiece in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from paddlenlp==2.6.1.post0) (0.1.99)

Requirement already satisfied: huggingface-hub>=0.11.1 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from paddlenlp==2.6.1.post0) (0.20.1)

Requirement already satisfied: onnx>=1.10.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from paddlenlp==2.6.1.post0) (1.15.0)

Requirement already satisfied: paddle2onnx in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from paddlenlp==2.6.1.post0) (1.1.0)

Requirement already satisfied: Flask-Babel in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from paddlenlp==2.6.1.post0) (4.0.0)

Requirement already satisfied: visualdl in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from paddlenlp==2.6.1.post0) (2.5.3)

Requirement already satisfied: fastapi in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from paddlenlp==2.6.1.post0) (0.105.0)

Requirement already satisfied: uvicorn in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from paddlenlp==2.6.1.post0) (0.25.0)

Requirement already satisfied: typer in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from paddlenlp==2.6.1.post0) (0.9.0)

Requirement already satisfied: rich in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from paddlenlp==2.6.1.post0) (13.7.0)

Requirement already satisfied: safetensors in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from paddlenlp==2.6.1.post0) (0.4.1)

Requirement already satisfied: tool-helpers in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from paddlenlp==2.6.1.post0) (0.1.1)

Requirement already satisfied: aistudio-sdk>=0.1.3 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from paddlenlp==2.6.1.post0) (0.1.5)

Requirement already satisfied: jinja2 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from paddlenlp==2.6.1.post0) (3.1.2)

Requirement already satisfied: protobuf>=3.20.2 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from paddlenlp==2.6.1.post0) (3.20.3)

Requirement already satisfied: requests in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from aistudio-sdk>=0.1.3->paddlenlp==2.6.1.post0) (2.31.0)

Requirement already satisfied: filelock in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from datasets>=2.0.0->paddlenlp==2.6.1.post0) (3.13.1)

Requirement already satisfied: numpy>=1.17 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from datasets>=2.0.0->paddlenlp==2.6.1.post0) (1.26.2)

Requirement already satisfied: pyarrow>=8.0.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from datasets>=2.0.0->paddlenlp==2.6.1.post0) (14.0.2)

Requirement already satisfied: pyarrow-hotfix in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from datasets>=2.0.0->paddlenlp==2.6.1.post0) (0.6)

Requirement already satisfied: pandas in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from datasets>=2.0.0->paddlenlp==2.6.1.post0) (2.1.4)

Requirement already satisfied: xxhash in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from datasets>=2.0.0->paddlenlp==2.6.1.post0) (3.4.1)

Requirement already satisfied: fsspec<=2023.10.0,>=2023.1.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from fsspec[http]<=2023.10.0,>=2023.1.0->datasets>=2.0.0->paddlenlp==2.6.1.post0) (2023.10.0)

Requirement already satisfied: aiohttp in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from datasets>=2.0.0->paddlenlp==2.6.1.post0) (3.9.1)

Requirement already satisfied: packaging in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from datasets>=2.0.0->paddlenlp==2.6.1.post0) (23.2)

Requirement already satisfied: pyyaml>=5.1 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from datasets>=2.0.0->paddlenlp==2.6.1.post0) (6.0.1)

Requirement already satisfied: typing-extensions>=3.7.4.3 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from huggingface-hub>=0.11.1->paddlenlp==2.6.1.post0) (4.9.0)

Requirement already satisfied: anyio<4.0.0,>=3.7.1 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from fastapi->paddlenlp==2.6.1.post0) (3.7.1)

Requirement already satisfied: pydantic!=1.8,!=1.8.1,!=2.0.0,!=2.0.1,!=2.1.0,<3.0.0,>=1.7.4 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from fastapi->paddlenlp==2.6.1.post0) (2.5.3)

Requirement already satisfied: starlette<0.28.0,>=0.27.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from fastapi->paddlenlp==2.6.1.post0) (0.27.0)

Requirement already satisfied: Babel>=2.12 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from Flask-Babel->paddlenlp==2.6.1.post0) (2.14.0)

Requirement already satisfied: Flask>=2.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from Flask-Babel->paddlenlp==2.6.1.post0) (3.0.0)

Requirement already satisfied: pytz>=2022.7 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from Flask-Babel->paddlenlp==2.6.1.post0) (2023.3.post1)

Requirement already satisfied: MarkupSafe>=2.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from jinja2->paddlenlp==2.6.1.post0) (2.1.3)

Requirement already satisfied: markdown-it-py>=2.2.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from rich->paddlenlp==2.6.1.post0) (2.2.0)

Requirement already satisfied: pygments<3.0.0,>=2.13.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from rich->paddlenlp==2.6.1.post0) (2.17.2)

Requirement already satisfied: scikit-learn>=0.21.3 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from seqeval->paddlenlp==2.6.1.post0) (1.3.2)

Requirement already satisfied: pybind11 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from tool-helpers->paddlenlp==2.6.1.post0) (2.11.1)

Requirement already satisfied: click<9.0.0,>=7.1.1 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from typer->paddlenlp==2.6.1.post0) (8.1.7)

Requirement already satisfied: h11>=0.8 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from uvicorn->paddlenlp==2.6.1.post0) (0.14.0)

Requirement already satisfied: bce-python-sdk in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from visualdl->paddlenlp==2.6.1.post0) (0.8.98)

Requirement already satisfied: Pillow>=7.0.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from visualdl->paddlenlp==2.6.1.post0) (10.1.0)

Requirement already satisfied: six>=1.14.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from visualdl->paddlenlp==2.6.1.post0) (1.16.0)

Requirement already satisfied: matplotlib in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from visualdl->paddlenlp==2.6.1.post0) (3.8.2)

Requirement already satisfied: rarfile in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from visualdl->paddlenlp==2.6.1.post0) (4.1)

Requirement already satisfied: psutil in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from visualdl->paddlenlp==2.6.1.post0) (5.9.7)

Requirement already satisfied: idna>=2.8 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from anyio<4.0.0,>=3.7.1->fastapi->paddlenlp==2.6.1.post0) (3.6)

Requirement already satisfied: sniffio>=1.1 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from anyio<4.0.0,>=3.7.1->fastapi->paddlenlp==2.6.1.post0) (1.3.0)

Requirement already satisfied: exceptiongroup in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from anyio<4.0.0,>=3.7.1->fastapi->paddlenlp==2.6.1.post0) (1.2.0)

Requirement already satisfied: Werkzeug>=3.0.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from Flask>=2.0->Flask-Babel->paddlenlp==2.6.1.post0) (3.0.1)

Requirement already satisfied: itsdangerous>=2.1.2 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from Flask>=2.0->Flask-Babel->paddlenlp==2.6.1.post0) (2.1.2)

Requirement already satisfied: blinker>=1.6.2 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from Flask>=2.0->Flask-Babel->paddlenlp==2.6.1.post0) (1.7.0)

Requirement already satisfied: attrs>=17.3.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from aiohttp->datasets>=2.0.0->paddlenlp==2.6.1.post0) (23.1.0)

Requirement already satisfied: multidict<7.0,>=4.5 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from aiohttp->datasets>=2.0.0->paddlenlp==2.6.1.post0) (6.0.4)

Requirement already satisfied: yarl<2.0,>=1.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from aiohttp->datasets>=2.0.0->paddlenlp==2.6.1.post0) (1.9.4)

Requirement already satisfied: frozenlist>=1.1.1 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from aiohttp->datasets>=2.0.0->paddlenlp==2.6.1.post0) (1.4.1)

Requirement already satisfied: aiosignal>=1.1.2 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from aiohttp->datasets>=2.0.0->paddlenlp==2.6.1.post0) (1.3.1)

Requirement already satisfied: async-timeout<5.0,>=4.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from aiohttp->datasets>=2.0.0->paddlenlp==2.6.1.post0) (4.0.3)

Requirement already satisfied: mdurl~=0.1 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from markdown-it-py>=2.2.0->rich->paddlenlp==2.6.1.post0) (0.1.1)

Requirement already satisfied: annotated-types>=0.4.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from pydantic!=1.8,!=1.8.1,!=2.0.0,!=2.0.1,!=2.1.0,<3.0.0,>=1.7.4->fastapi->paddlenlp==2.6.1.post0) (0.6.0)

Requirement already satisfied: pydantic-core==2.14.6 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from pydantic!=1.8,!=1.8.1,!=2.0.0,!=2.0.1,!=2.1.0,<3.0.0,>=1.7.4->fastapi->paddlenlp==2.6.1.post0) (2.14.6)

Requirement already satisfied: charset-normalizer<4,>=2 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from requests->aistudio-sdk>=0.1.3->paddlenlp==2.6.1.post0) (3.3.2)

Requirement already satisfied: urllib3<3,>=1.21.1 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from requests->aistudio-sdk>=0.1.3->paddlenlp==2.6.1.post0) (2.1.0)

Requirement already satisfied: certifi>=2017.4.17 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from requests->aistudio-sdk>=0.1.3->paddlenlp==2.6.1.post0) (2023.11.17)

Requirement already satisfied: scipy>=1.5.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from scikit-learn>=0.21.3->seqeval->paddlenlp==2.6.1.post0) (1.11.4)

Requirement already satisfied: joblib>=1.1.1 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from scikit-learn>=0.21.3->seqeval->paddlenlp==2.6.1.post0) (1.3.2)

Requirement already satisfied: threadpoolctl>=2.0.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from scikit-learn>=0.21.3->seqeval->paddlenlp==2.6.1.post0) (3.2.0)

Requirement already satisfied: pycryptodome>=3.8.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from bce-python-sdk->visualdl->paddlenlp==2.6.1.post0) (3.19.0)

Requirement already satisfied: future>=0.6.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from bce-python-sdk->visualdl->paddlenlp==2.6.1.post0) (0.18.3)

Requirement already satisfied: contourpy>=1.0.1 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from matplotlib->visualdl->paddlenlp==2.6.1.post0) (1.2.0)

Requirement already satisfied: cycler>=0.10 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from matplotlib->visualdl->paddlenlp==2.6.1.post0) (0.12.1)

Requirement already satisfied: fonttools>=4.22.0 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from matplotlib->visualdl->paddlenlp==2.6.1.post0) (4.47.0)

Requirement already satisfied: kiwisolver>=1.3.1 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from matplotlib->visualdl->paddlenlp==2.6.1.post0) (1.4.5)

Requirement already satisfied: pyparsing>=2.3.1 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from matplotlib->visualdl->paddlenlp==2.6.1.post0) (3.1.1)

Requirement already satisfied: python-dateutil>=2.7 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from matplotlib->visualdl->paddlenlp==2.6.1.post0) (2.8.2)

Requirement already satisfied: tzdata>=2022.1 in /opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages (from pandas->datasets>=2.0.0->paddlenlp==2.6.1.post0) (2023.3)

Building wheels for collected packages: paddlenlp

Building editable for paddlenlp (pyproject.toml) ... done

Created wheel for paddlenlp: filename=paddlenlp-2.6.1.post0-0.editable-py3-none-any.whl size=15186 sha256=d63900491865a4c53fb8126468b30096bf5f9f684b281a3a5413724d608a6f40

Stored in directory: /tmp/pip-ephem-wheel-cache-dxh_79d_/wheels/ef/67/51/d39210219524142315c8b4babdd3bb2610f53d4d50639f381e

Successfully built paddlenlp

Installing collected packages: paddlenlp

Attempting uninstall: paddlenlp

Found existing installation: paddlenlp 2.6.1.post0

Uninstalling paddlenlp-2.6.1.post0:

Successfully uninstalled paddlenlp-2.6.1.post0

Successfully installed paddlenlp-2.6.1.post0

In [3]

# Check that the installation succeeded. To make sure the package is usable, restart the kernel at this point.

!pip list | grep paddlenlp

paddlenlp 2.6.1.post0 /home/aistudio/PaddleNLP

2. Get the Baichuan2-7B/13B-Chat model

AI Studio already integrates the Baichuan2 model series, so a model can be loaded directly by passing from_aistudio=True, as in the following code:

from paddlenlp.transformers import AutoModelForCausalLM

model = AutoModelForCausalLM.from_pretrained(
    "aistudio/Baichuan2-7B-Chat", from_aistudio=True
)

For local deployment, however, we clone the model first. This walkthrough uses the 7B model; choose whichever version suits your needs.

In [9]

# Cloning directly from AI Studio is the fastest option:

!git clone http://git.aistudio.baidu.com/aistudio/Baichuan2-7B-Chat.git

Cloning into 'Baichuan2-7B-Chat'...
remote: Enumerating objects: 75, done.
remote: Counting objects: 100% (75/75), done.
remote: Compressing objects: 100% (74/74), done.
remote: Total 75 (delta 30), reused 0 (delta 0), pack-reused 0
Unpacking objects: 100% (75/75), 13.65 KiB | 873.00 KiB/s, done.
Filtering content: 100% (9/9), 3.96 GiB | 8.93 MiB/s, done.
Encountered 6 files that may not have been copied correctly on Windows:
model-00003-of-00004.safetensors
model_state-00003-of-00004.pdparams
model_state-00001-of-00004.pdparams
model_state-00002-of-00004.pdparams
model-00002-of-00004.safetensors
model-00001-of-00004.safetensors
See: `git lfs help smudge` for more details.

VI. Data Preparation

1. Convert the training data to the required format

In [5]

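import json
import os

from sklearn.model_selection import train_test_split

# Load the source JSON file
with open('data/data254538/RecentColdMedicalCase.json', 'r', encoding='utf-8') as f:
    data = json.load(f)

# Split the dataset into a training set and a held-out dev set
train, dev = train_test_split(data, test_size=0.1, random_state=42)

# Make sure the output directory exists before writing to it
os.makedirs('TrainData', exist_ok=True)

# Convert to the src/tgt format required for training, one record per line
with open('TrainData/train.json', 'w', encoding="utf-8") as f:
    for item in train:
        temp = dict()
        temp['src'] = item['case']
        temp['tgt'] = item['diagnosis']
        json.dump(temp, f, ensure_ascii=False)
        f.write('\n')

with open('TrainData/dev.json', 'w', encoding="utf-8") as f:
    for item in dev:
        temp = dict()
        temp['src'] = item['case']
        temp['tgt'] = item['diagnosis']
        json.dump(temp, f, ensure_ascii=False)
        f.write('\n')

2. Edit the fine-tuning parameters

/home/aistudio/PaddleNLP/llm/llama/lora_argument.json contains preset LoRA fine-tuning parameters, so none of them need to be passed on the command line. Edit the file directly: the main changes are the first two entries, the model path and the data path. The remaining parameters can be adjusted as needed, guided by the comments in the annotated listing that follows. The # comment lines are explanatory only; JSON has no comment syntax, so they should not appear in the actual file.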

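{
    # Built-in name of the pretrained model, or the directory containing it (default: facebook/llama-7b)
    "model_name_or_path": "/home/aistudio/Baichuan2-7B-Chat",
    # Directory containing the training data
    "dataset_name_or_path": "/home/aistudio/TrainData",
    # Directory where model checkpoints are saved
    "output_dir": "./checkpoints/llama_lora_ckpts",
    # Training batch size per device
    "per_device_train_batch_size": 4,
    # Number of gradient accumulation steps, which can be used to enlarge the batch size. Effective batch size = per_device_train_batch_size * gradient_accumulation_steps.
    "gradient_accumulation_steps": 4,
    # Evaluation batch size per device
    "per_device_eval_batch_size": 8,
    # Number of evaluation steps whose outputs are accumulated before being moved off the GPU
    "eval_accumulation_steps":16,
    # Total number of training epochs (if not an integer, the fractional part of the last epoch is run before training stops)
    "num_train_epochs": 3,
    # Learning rate for parameter updates
    "learning_rate": 3e-04,
    # Number of learning-rate warm-up steps
    "warmup_steps": 30,
    # Interval, in steps, between training log messages
    "logging_steps": 1,
    # When to evaluate: "no" (never), "steps" (every eval_steps steps) or "epoch" (at the end of each epoch)
    "evaluation_strategy": "epoch",
    # When to save checkpoints; takes the same values as evaluation_strategy
    "save_strategy": "epoch",
    # Maximum length of the input context (default: 128)
    "src_length": 1024,
    # Maximum total sequence length (input plus output tokens)
    "max_length": 2048,
    # Use float16 precision for model training and inference
    "fp16": true,
    # float16 training mode; O2 means pure float16 training
    "fp16_opt_level": "O2",
    # Whether to train the model
    "do_train": true,
    # Whether to evaluate the model
    "do_eval": true,
    # Whether to disable the tqdm progress bar
    "disable_tqdm": true,
    # Whether to load the best model at the end of training
    "load_best_model_at_end": true,
    # Whether to call model.generate during evaluation (default: False)
    "eval_with_do_generation": false,
    # Metric used to compare models, such as loss or accuracy
    "metric_for_best_model": "accuracy",
    # Whether to use recomputation (gradient checkpointing): activations are recomputed in the backward pass, trading extra compute for lower GPU memory use
    "recompute": true,
    # Maximum number of checkpoints to keep; older ones are deleted
    "save_total_limit": 1,
    # Tensor (model) parallel degree: the number of devices each layer's weights are split across
    "tensor_parallel_degree": 1,
    # Pipeline parallel degree: the number of pipeline stages the model's layers are split into
    "pipeline_parallel_degree": 1,
    # Whether to use LoRA
    "lora": true,
    # Whether to use the Zero Padding strategy
    "zero_padding": false,
    # Whether to use Flash Attention
    "use_flash_attention": false
}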
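The two path edits can also be applied from Python instead of by hand. The following is a minimal sketch, not part of PaddleNLP: the helper names update_lora_config and effective_batch_size are hypothetical, and the sketch assumes the file on disk is plain JSON without any # annotation lines.

```python
import json
from pathlib import Path


def update_lora_config(config_path, model_path, dataset_dir):
    """Set the model and dataset paths in a fine-tuning config and save it.

    Hypothetical helper: it only rewrites two keys and leaves the rest as-is.
    """
    config_path = Path(config_path)
    config = json.loads(config_path.read_text(encoding="utf-8"))
    config["model_name_or_path"] = str(model_path)
    config["dataset_name_or_path"] = str(dataset_dir)
    config_path.write_text(
        json.dumps(config, indent=4, ensure_ascii=False) + "\n", encoding="utf-8"
    )
    return config


def effective_batch_size(config, num_devices=1):
    """Samples consumed per optimizer update, following the formula in the listing."""
    return (
        config["per_device_train_batch_size"]
        * config["gradient_accumulation_steps"]
        * num_devices
    )
```

For this walkthrough the call would be update_lora_config("/home/aistudio/PaddleNLP/llm/llama/lora_argument.json", "/home/aistudio/Baichuan2-7B-Chat", "/home/aistudio/TrainData"). With a per-device batch size of 4 and 4 accumulation steps on one GPU, effective_batch_size returns 16.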

VII. Training

1. Test the base model before training

In [3]

import json

import paddle

# Helper module used here to run text generation
import get_result

from paddlenlp.transformers import AutoModelForCausalLM, LlamaTokenizer

# Load the model and its weights
model = AutoModelForCausalLM.from_pretrained(
    '/home/aistudio/Baichuan2-7B-Chat',
    dtype="float16",
    tensor_parallel_degree=0,
    tensor_parallel_rank=0,
)
model.eval()

tokenizer = LlamaTokenizer.from_pretrained('/home/aistudio/Baichuan2-7B-Chat')

# Prompt (in Chinese): "I have a cold with a slight cough, fever and headache; I am thirsty but have difficulty urinating"
result = get_result.generate(model, tokenizer, "我感冒了,有点咳嗽,发热,头疼,有口渴但是小便不利")
print(result)

/opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages/tqdm/auto.py:21: TqdmWarning: IProgress not found. Please update jupyter and ipywidgets. See https://ipywidgets.readthedocs.io/en/stable/user_install.html

from .autonotebook import tqdm as notebook_tqdm

/opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages/_distutils_hack/__init__.py:33: UserWarning: Setuptools is replacing distutils.

warnings.warn("Setuptools is replacing distutils.")

[2023-12-27 12:58:44,513] [ INFO] - We are using <class 'paddlenlp.transformers.llama.modeling.LlamaForCausalLM'> to load '/home/aistudio/Baichuan2-7B-Chat'.

[2023-12-27 12:58:44,514] [ INFO] - Loading configuration file /home/aistudio/Baichuan2-7B-Chat/config.json

[2023-12-27 12:58:44,518] [ INFO] - Loading weights file /home/aistudio/Baichuan2-7B-Chat/model.safetensors.index.json

W1227 12:58:44.522776 2705 gpu_resources.cc:119] Please NOTE: device: 0, GPU Compute Capability: 7.0, Driver API Version: 12.0, Runtime API Version: 11.8

W1227 12:58:44.524257 2705 gpu_resources.cc:149] device: 0, cuDNN Version: 8.9.

Loading checkpoint shards: 100%|██████████| 4/4 [03:48<00:00, 57.18s/it]

[2023-12-27 13:02:48,099] [ INFO] - All model checkpoint weights were used when initializing LlamaForCausalLM.

[2023-12-27 13:02:48,100] [ INFO] - All the weights of LlamaForCausalLM were initialized from the model checkpoint at /home/aistudio/Baichuan2-7B-Chat.

If your task is similar to the task the model of the checkpoint was trained on, you can already use LlamaForCausalLM for predictions without further training.

[2023-12-27 13:02:48,106] [ INFO] - Loading configuration file /home/aistudio/Baichuan2-7B-Chat/generation_config.json

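。” 根据您提供的症状, 可能是由于外感风寒引起的感冒现象. 这是一种常见的疾病,可以通过服用一些药物来缓解症状并促进康复。然而,在开始任何药物治疗之前,请务必咨询专业医生的意见和建议;因为每个人的病情和体质不同,可能需要不同的治疗方案或用药剂量。以下是一些建议供您参考: 1. 多休息、多饮水以帮助身体排毒;避免食用辛辣刺激性食物以及油腻食物以减少对呼吸道的刺激 ;保持室内空气流通,以免空气过于干燥引起咽喉不适等症状加重</s>

(English translation of the model output: "Based on the symptoms you describe, this may be a cold caused by external wind-cold. It is a common illness, and taking some medication can relieve the symptoms and aid recovery. However, before starting any medication, be sure to consult a professional doctor, because each person's condition and constitution differ and may call for a different treatment plan or dosage. Here are some suggestions for reference: 1. Rest more and drink plenty of water to help the body detoxify; avoid spicy, irritating and greasy food to reduce irritation of the respiratory tract; keep indoor air circulating so that overly dry air does not aggravate symptoms such as throat discomfort")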

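The base model's answer is fairly generic: it gives no precise diagnosis for the described symptoms and no particularly effective treatment plan. Next, we fine-tune the model on the training data.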
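The get_result module imported in the test cell is not listed in this walkthrough. As a rough illustration only, a helper with the same call shape might look like the sketch below. It is a hypothetical stand-in, not the author's implementation: it assumes a PaddleNLP-style tokenizer that accepts return_tensors="pd" and a model whose generate method returns a tuple of generated ids and scores, and the decoding settings (greedy search, a 256-token limit) are arbitrary choices.

```python
def generate(model, tokenizer, prompt, max_length=256):
    """Hypothetical stand-in for get_result.generate: greedy decoding of one prompt."""
    # Tokenize the prompt into Paddle tensors ("pd").
    inputs = tokenizer(prompt, return_tensors="pd")
    # Assumption: generate() returns a tuple (generated ids, scores).
    output_ids, _scores = model.generate(
        input_ids=inputs["input_ids"],
        max_length=max_length,  # passed through to model.generate unchanged
        decode_strategy="greedy_search",
    )
    # Decode the first (and only) sequence in the batch back to text.
    return tokenizer.decode(output_ids[0].tolist(), skip_special_tokens=True)
```

Because the model and tokenizer are passed in, the same function can be reused after fine-tuning to compare answers to an identical prompt.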

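2. Fine-tune the model

Before running the training cell, restart the kernel to free GPU memory; otherwise training will run out of memory.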
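Since a training run takes a while, it can be worth confirming first that the converted data files are well formed. The sketch below is one way to do that; check_sft_file is a hypothetical helper, and the accepted field types (a string or a list of strings for src and tgt) follow the data format described earlier in this document.

```python
import json


def check_sft_file(path):
    """Count the records in a src/tgt file, raising ValueError on the first bad line."""

    def is_text(value):
        # Each field may be a single string or a list of strings.
        return isinstance(value, str) or (
            isinstance(value, list) and all(isinstance(v, str) for v in value)
        )

    count = 0
    with open(path, "r", encoding="utf-8") as f:
        for line_no, line in enumerate(f, start=1):
            if not line.strip():
                continue  # tolerate blank lines
            record = json.loads(line)  # JSONDecodeError is a ValueError subclass
            for key in ("src", "tgt"):
                if key not in record or not is_text(record[key]):
                    raise ValueError(f"{path}:{line_no}: bad or missing '{key}' field")
            count += 1
    return count
```

For the split used in this walkthrough, the training log reports 1848 training examples and 206 dev examples, so those are the counts to expect from TrainData/train.json and TrainData/dev.json.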

In [1]

%cd ~/PaddleNLP/llm/

# Single-GPU training
!python finetune_generation.py ./llama/lora_argument.json

# Distributed training:
# first set tensor_parallel_degree to 2 in lora_argument.json
#python -u -m paddle.distributed.launch --gpus "0,1" finetune_generation.py ./llama/lora_argument.json

/home/aistudio/PaddleNLP/llm

/opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages/IPython/core/magics/osm.py:393: UserWarning: using bookmarks requires you to install the `pickleshare` library.

bkms = self.shell.db.get('bookmarks', {})

/opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages/IPython/core/magics/osm.py:417: UserWarning: using dhist requires you to install the `pickleshare` library.

self.shell.db['dhist'] = compress_dhist(dhist)[-100:]

/opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages/_distutils_hack/__init__.py:33: UserWarning: Setuptools is replacing distutils.

warnings.warn("Setuptools is replacing distutils.")

[2023-12-26 17:37:10,698] [ INFO] - The default value for the training argument `--report_to` will change in v5 (from all installed integrations to none). In v5, you will need to use `--report_to all` to get the same behavior as now. You should start updating your code and make this info disappear :-).

[2023-12-26 17:37:10,699] [ INFO] - ============================================================

[2023-12-26 17:37:10,699] [ INFO] - Model Configuration Arguments

[2023-12-26 17:37:10,699] [ INFO] - paddle commit id : 3a1b1659a405a044ce806fbe027cc146f1193e6d

[2023-12-26 17:37:10,699] [ INFO] - paddlenlp commit id : 942865f52b42cd6e0666a19af316f32e151694eb.dirty

[2023-12-26 17:37:10,699] [ INFO] - aistudio_repo_id : None

[2023-12-26 17:37:10,699] [ INFO] - aistudio_repo_license : Apache License 2.0

[2023-12-26 17:37:10,699] [ INFO] - aistudio_repo_private : True

[2023-12-26 17:37:10,699] [ INFO] - aistudio_token : None

[2023-12-26 17:37:10,699] [ INFO] - from_aistudio : False

[2023-12-26 17:37:10,699] [ INFO] - lora : True

[2023-12-26 17:37:10,699] [ INFO] - lora_path : None

[2023-12-26 17:37:10,699] [ INFO] - lora_rank : 8

[2023-12-26 17:37:10,700] [ INFO] - model_name_or_path : /home/aistudio/Baichuan2-7B-Chat

[2023-12-26 17:37:10,700] [ INFO] - neftune : False

[2023-12-26 17:37:10,700] [ INFO] - neftune_noise_alpha : 5.0

[2023-12-26 17:37:10,700] [ INFO] - num_prefix_tokens : 128

[2023-12-26 17:37:10,700] [ INFO] - prefix_tuning : False

[2023-12-26 17:37:10,700] [ INFO] - save_to_aistudio : False

[2023-12-26 17:37:10,700] [ INFO] - use_flash_attention : False

[2023-12-26 17:37:10,700] [ INFO] - weight_blocksize : 64

[2023-12-26 17:37:10,700] [ INFO] - weight_double_quant : False

[2023-12-26 17:37:10,700] [ INFO] - weight_double_quant_block_size: 256

[2023-12-26 17:37:10,700] [ INFO] - weight_quantize_algo : None

[2023-12-26 17:37:10,700] [ INFO] -

[2023-12-26 17:37:10,700] [ INFO] - ============================================================

[2023-12-26 17:37:10,700] [ INFO] - Data Configuration Arguments

[2023-12-26 17:37:10,700] [ INFO] - paddle commit id : 3a1b1659a405a044ce806fbe027cc146f1193e6d

[2023-12-26 17:37:10,700] [ INFO] - paddlenlp commit id : 942865f52b42cd6e0666a19af316f32e151694eb.dirty

[2023-12-26 17:37:10,700] [ INFO] - chat_template : None

[2023-12-26 17:37:10,700] [ INFO] - dataset_name_or_path : /home/aistudio/TrainData

[2023-12-26 17:37:10,701] [ INFO] - eval_with_do_generation : False

[2023-12-26 17:37:10,701] [ INFO] - intokens : None

[2023-12-26 17:37:10,701] [ INFO] - lazy : False

[2023-12-26 17:37:10,701] [ INFO] - max_length : 2048

[2023-12-26 17:37:10,701] [ INFO] - save_generation_output : False

[2023-12-26 17:37:10,701] [ INFO] - src_length : 1024

[2023-12-26 17:37:10,701] [ INFO] - task_name : None

[2023-12-26 17:37:10,701] [ INFO] - task_name_or_path : None

[2023-12-26 17:37:10,701] [ INFO] - zero_padding : False

[2023-12-26 17:37:10,701] [ INFO] -

[2023-12-26 17:37:10,701] [ INFO] - ============================================================

[2023-12-26 17:37:10,701] [ INFO] - Quant Configuration Arguments

[2023-12-26 17:37:10,701] [ INFO] - paddle commit id : 3a1b1659a405a044ce806fbe027cc146f1193e6d

[2023-12-26 17:37:10,701] [ INFO] - paddlenlp commit id : 942865f52b42cd6e0666a19af316f32e151694eb.dirty

[2023-12-26 17:37:10,701] [ INFO] - do_gptq : False

[2023-12-26 17:37:10,701] [ INFO] - do_ptq : False

[2023-12-26 17:37:10,701] [ INFO] - do_qat : False

[2023-12-26 17:37:10,702] [ INFO] - gptq_step : 8

[2023-12-26 17:37:10,702] [ INFO] - ptq_step : 32

[2023-12-26 17:37:10,702] [ INFO] - quant_type : a8w8

[2023-12-26 17:37:10,702] [ INFO] - shift : False

[2023-12-26 17:37:10,702] [ INFO] - shift_all_linears : False

[2023-12-26 17:37:10,702] [ INFO] - shift_sampler : ema

[2023-12-26 17:37:10,702] [ INFO] - shift_step : 32

[2023-12-26 17:37:10,702] [ INFO] - smooth : False

[2023-12-26 17:37:10,702] [ INFO] - smooth_all_linears : False

[2023-12-26 17:37:10,702] [ INFO] - smooth_k_piece : 3

[2023-12-26 17:37:10,702] [ INFO] - smooth_piecewise_search : False

[2023-12-26 17:37:10,703] [ INFO] - smooth_sampler : none

[2023-12-26 17:37:10,703] [ INFO] - smooth_search_piece : False

[2023-12-26 17:37:10,703] [ INFO] - smooth_step : 32

[2023-12-26 17:37:10,703] [ INFO] -

[2023-12-26 17:37:10,703] [ INFO] - ============================================================

[2023-12-26 17:37:10,703] [ INFO] - Generation Configuration Arguments

[2023-12-26 17:37:10,703] [ INFO] - paddle commit id : 3a1b1659a405a044ce806fbe027cc146f1193e6d

[2023-12-26 17:37:10,703] [ INFO] - paddlenlp commit id : 942865f52b42cd6e0666a19af316f32e151694eb.dirty

[2023-12-26 17:37:10,703] [ INFO] - top_k : 1

[2023-12-26 17:37:10,703] [ INFO] - top_p : 1.0

[2023-12-26 17:37:10,703] [ INFO] -

[2023-12-26 17:37:10,703] [ WARNING] - Process rank: -1, device: gpu, world_size: 1, distributed training: False, 16-bits training: True

[2023-12-26 17:37:10,704] [ INFO] - We are using <class 'paddlenlp.transformers.llama.configuration.LlamaConfig'> to load '/home/aistudio/Baichuan2-7B-Chat'.

[2023-12-26 17:37:10,704] [ INFO] - Loading configuration file /home/aistudio/Baichuan2-7B-Chat/config.json

[2023-12-26 17:37:10,705] [ INFO] - We are using <class 'paddlenlp.transformers.llama.modeling.LlamaForCausalLM'> to load '/home/aistudio/Baichuan2-7B-Chat'.

[2023-12-26 17:37:10,706] [ INFO] - Loading weights file /home/aistudio/Baichuan2-7B-Chat/model.safetensors.index.json

W1226 17:37:10.709461 26242 gpu_resources.cc:119] Please NOTE: device: 0, GPU Compute Capability: 7.0, Driver API Version: 12.0, Runtime API Version: 11.8

W1226 17:37:10.710600 26242 gpu_resources.cc:149] device: 0, cuDNN Version: 8.9.

Loading checkpoint shards: 100%|██████████████████| 4/4 [04:16<00:00, 64.11s/it]

[2023-12-26 17:41:56,850] [ INFO] - All model checkpoint weights were used when initializing LlamaForCausalLM.

[2023-12-26 17:41:56,850] [ INFO] - All the weights of LlamaForCausalLM were initialized from the model checkpoint at /home/aistudio/Baichuan2-7B-Chat.

If your task is similar to the task the model of the checkpoint was trained on, you can already use LlamaForCausalLM for predictions without further training.

[2023-12-26 17:41:56,853] [ INFO] - Loading configuration file /home/aistudio/Baichuan2-7B-Chat/generation_config.json

[2023-12-26 17:41:56,853] [ INFO] - We are using <class 'paddlenlp.transformers.llama.tokenizer.LlamaTokenizer'> to load '/home/aistudio/Baichuan2-7B-Chat'.

Downloading data files: 100%|███████████████████| 1/1 [00:00<00:00, 7436.71it/s]

Extracting data files: 100%|████████████████████| 1/1 [00:00<00:00, 1189.87it/s]

Generating train split: 1848 examples [00:00, 108999.65 examples/s]

Downloading data files: 100%|██████████████████| 1/1 [00:00<00:00, 11214.72it/s]

Extracting data files: 100%|████████████████████| 1/1 [00:00<00:00, 1536.38it/s]

Generating train split: 206 examples [00:00, 77987.78 examples/s]

[2023-12-26 17:42:21,202] [ INFO] - Frozen parameters: 7.51e+09 || Trainable parameters:2.00e+07 || Total parameters:7.53e+09|| Trainable:0.27%

[2023-12-26 17:42:21,202] [ INFO] - The global seed is set to 42, local seed is set to 43 and random seed is set to 42.

[2023-12-26 17:42:21,238] [ INFO] - Using half precision

[2023-12-26 17:42:21,268] [ INFO] - ============================================================

[2023-12-26 17:42:21,268] [ INFO] - Training Configuration Arguments

[2023-12-26 17:42:21,268] [ INFO] - paddle commit id : 3a1b1659a405a044ce806fbe027cc146f1193e6d

[2023-12-26 17:42:21,268] [ INFO] - paddlenlp commit id : 942865f52b42cd6e0666a19af316f32e151694eb.dirty

[2023-12-26 17:42:21,268] [ INFO] - _no_sync_in_gradient_accumulation: True

[2023-12-26 17:42:21,268] [ INFO] - adam_beta1 : 0.9

[2023-12-26 17:42:21,268] [ INFO] - adam_beta2 : 0.999

[2023-12-26 17:42:21,268] [ INFO] - adam_epsilon : 1e-08

[2023-12-26 17:42:21,268] [ INFO] - amp_custom_black_list : None

[2023-12-26 17:42:21,268] [ INFO] - amp_custom_white_list : None

[2023-12-26 17:42:21,268] [ INFO] - amp_master_grad : False

[2023-12-26 17:42:21,269] [ INFO] - autotuner_benchmark : False

[2023-12-26 17:42:21,269] [ INFO] - benchmark : False

[2023-12-26 17:42:21,269] [ INFO] - bf16 : False

[2023-12-26 17:42:21,269] [ INFO] - bf16_full_eval : False

[2023-12-26 17:42:21,269] [ INFO] - current_device : gpu:0

[2023-12-26 17:42:21,269] [ INFO] - data_parallel_rank : 0

[2023-12-26 17:42:21,269] [ INFO] - dataloader_drop_last : False

[2023-12-26 17:42:21,269] [ INFO] - dataloader_num_workers : 0

[2023-12-26 17:42:21,269] [ INFO] - dataset_rank : 0

[2023-12-26 17:42:21,269] [ INFO] - dataset_world_size : 1

[2023-12-26 17:42:21,269] [ INFO] - device : gpu

[2023-12-26 17:42:21,269] [ INFO] - disable_tqdm : True

[2023-12-26 17:42:21,269] [ INFO] - distributed_dataloader : False

[2023-12-26 17:42:21,269] [ INFO] - do_eval : True

[2023-12-26 17:42:21,269] [ INFO] - do_export : False

[2023-12-26 17:42:21,269] [ INFO] - do_predict : False

[2023-12-26 17:42:21,269] [ INFO] - do_train : True

[2023-12-26 17:42:21,269] [ INFO] - eval_accumulation_steps : 16

[2023-12-26 17:42:21,270] [ INFO] - eval_batch_size : 8

[2023-12-26 17:42:21,270] [ INFO] - eval_steps : None

[2023-12-26 17:42:21,270] [ INFO] - evaluation_strategy : IntervalStrategy.EPOCH

[2023-12-26 17:42:21,270] [ INFO] - flatten_param_grads : False

[2023-12-26 17:42:21,270] [ INFO] - force_reshard_pp : False

[2023-12-26 17:42:21,270] [ INFO] - fp16 : True

[2023-12-26 17:42:21,270] [ INFO] - fp16_full_eval : False

[2023-12-26 17:42:21,270] [ INFO] - fp16_opt_level : O2

[2023-12-26 17:42:21,270] [ INFO] - gradient_accumulation_steps : 4

[2023-12-26 17:42:21,270] [ INFO] - greater_is_better : True

[2023-12-26 17:42:21,270] [ INFO] - hybrid_parallel_topo_order : None

[2023-12-26 17:42:21,270] [ INFO] - ignore_data_skip : False

[2023-12-26 17:42:21,270] [ INFO] - ignore_load_lr_and_optim : False

[2023-12-26 17:42:21,270] [ INFO] - label_names : None

[2023-12-26 17:42:21,270] [ INFO] - lazy_data_processing : True

[2023-12-26 17:42:21,270] [ INFO] - learning_rate : 0.0003

[2023-12-26 17:42:21,270] [ INFO] - load_best_model_at_end : True

[2023-12-26 17:42:21,270] [ INFO] - load_sharded_model : False

[2023-12-26 17:42:21,270] [ INFO] - local_process_index : 0

[2023-12-26 17:42:21,271] [ INFO] - local_rank : -1

[2023-12-26 17:42:21,271] [ INFO] - log_level : -1

[2023-12-26 17:42:21,271] [ INFO] - log_level_replica : -1

[2023-12-26 17:42:21,271] [ INFO] - log_on_each_node : True

[2023-12-26 17:42:21,271] [ INFO] - logging_dir : ./checkpoints/llama_lora_ckpts/runs/Dec26_17-37-10_jupyter-3484865-7331292

[2023-12-26 17:42:21,271] [ INFO] - logging_first_step : False

[2023-12-26 17:42:21,271] [ INFO] - logging_steps : 1

[2023-12-26 17:42:21,271] [ INFO] - logging_strategy : IntervalStrategy.STEPS

[2023-12-26 17:42:21,271] [ INFO] - logical_process_index : 0

[2023-12-26 17:42:21,271] [ INFO] - lr_end : 1e-07

[2023-12-26 17:42:21,271] [ INFO] - lr_scheduler_type : SchedulerType.LINEAR

[2023-12-26 17:42:21,271] [ INFO] - max_evaluate_steps : -1

[2023-12-26 17:42:21,271] [ INFO] - max_grad_norm : 1.0

[2023-12-26 17:42:21,271] [ INFO] - max_steps : -1

[2023-12-26 17:42:21,271] [ INFO] - metric_for_best_model : accuracy

[2023-12-26 17:42:21,271] [ INFO] - minimum_eval_times : None

[2023-12-26 17:42:21,271] [ INFO] - no_cuda : False

[2023-12-26 17:42:21,271] [ INFO] - num_cycles : 0.5

[2023-12-26 17:42:21,272] [ INFO] - num_train_epochs : 3

[2023-12-26 17:42:21,272] [ INFO] - optim : OptimizerNames.ADAMW

[2023-12-26 17:42:21,272] [ INFO] - optimizer_name_suffix : None

[2023-12-26 17:42:21,272] [ INFO] - output_dir : ./checkpoints/llama_lora_ckpts

[2023-12-26 17:42:21,272] [ INFO] - overwrite_output_dir : False

[2023-12-26 17:42:21,272] [ INFO] - past_index : -1

[2023-12-26 17:42:21,272] [ INFO] - per_device_eval_batch_size : 8

[2023-12-26 17:42:21,272] [ INFO] - per_device_train_batch_size : 4

[2023-12-26 17:42:21,272] [ INFO] - pipeline_parallel_config :

[2023-12-26 17:42:21,272] [ INFO] - pipeline_parallel_degree : -1

[2023-12-26 17:42:21,272] [ INFO] - pipeline_parallel_rank : 0

[2023-12-26 17:42:21,272] [ INFO] - power : 1.0

[2023-12-26 17:42:21,272] [ INFO] - prediction_loss_only : False

[2023-12-26 17:42:21,272] [ INFO] - process_index : 0

[2023-12-26 17:42:21,272] [ INFO] - recompute : True

[2023-12-26 17:42:21,272] [ INFO] - remove_unused_columns : True

[2023-12-26 17:42:21,272] [ INFO] - report_to : ['visualdl']

[2023-12-26 17:42:21,272] [ INFO] - resume_from_checkpoint : None

[2023-12-26 17:42:21,273] [ INFO] - run_name : ./checkpoints/llama_lora_ckpts

[2023-12-26 17:42:21,273] [ INFO] - save_on_each_node : False

[2023-12-26 17:42:21,273] [ INFO] - save_sharded_model : False

[2023-12-26 17:42:21,273] [ INFO] - save_steps : 500

[2023-12-26 17:42:21,273] [ INFO] - save_strategy : IntervalStrategy.EPOCH

[2023-12-26 17:42:21,273] [ INFO] - save_total_limit : 1

[2023-12-26 17:42:21,273] [ INFO] - scale_loss : 32768

[2023-12-26 17:42:21,273] [ INFO] - seed : 42

[2023-12-26 17:42:21,273] [ INFO] - sep_parallel_degree : -1

[2023-12-26 17:42:21,273] [ INFO] - sharding : []

[2023-12-26 17:42:21,273] [ INFO] - sharding_degree : -1

[2023-12-26 17:42:21,273] [ INFO] - sharding_parallel_config :

[2023-12-26 17:42:21,273] [ INFO] - sharding_parallel_degree : -1

[2023-12-26 17:42:21,273] [ INFO] - sharding_parallel_rank : 0

[2023-12-26 17:42:21,273] [ INFO] - should_load_dataset : True

[2023-12-26 17:42:21,273] [ INFO] - should_load_sharding_stage1_model: False

[2023-12-26 17:42:21,273] [ INFO] - should_log : True

[2023-12-26 17:42:21,273] [ INFO] - should_save : True

[2023-12-26 17:42:21,273] [ INFO] - should_save_model_state : True

[2023-12-26 17:42:21,274] [ INFO] - should_save_sharding_stage1_model: False

[2023-12-26 17:42:21,274] [ INFO] - skip_memory_metrics : True

[2023-12-26 17:42:21,274] [ INFO] - skip_profile_timer : True

[2023-12-26 17:42:21,274] [ INFO] - tensor_parallel_config :

[2023-12-26 17:42:21,274] [ INFO] - tensor_parallel_degree : -1

[2023-12-26 17:42:21,274] [ INFO] - tensor_parallel_rank : 0

[2023-12-26 17:42:21,274] [ INFO] - to_static : False

[2023-12-26 17:42:21,274] [ INFO] - train_batch_size : 4

[2023-12-26 17:42:21,274] [ INFO] - unified_checkpoint : False

[2023-12-26 17:42:21,274] [ INFO] - use_auto_parallel : False

[2023-12-26 17:42:21,274] [ INFO] - use_hybrid_parallel : False

[2023-12-26 17:42:21,274] [ INFO] - warmup_ratio : 0.0

[2023-12-26 17:42:21,274] [ INFO] - warmup_steps : 30

[2023-12-26 17:42:21,274] [ INFO] - weight_decay : 0.0

[2023-12-26 17:42:21,274] [ INFO] - weight_name_suffix : None

[2023-12-26 17:42:21,274] [ INFO] - world_size : 1

[2023-12-26 17:42:21,274] [ INFO] -

[2023-12-26 17:42:21,274] [ INFO] - Starting training from resume_from_checkpoint : None

/opt/conda/envs/python35-paddle120-env/lib/python3.10/site-packages/paddle/distributed/parallel.py:411: UserWarning: The program will return to single-card operation. Please check 1, whether you use spawn or fleetrun to start the program. 2, Whether it is a multi-card program. 3, Is the current environment multi-card.

warnings.warn(

[2023-12-26 17:42:21,280] [ INFO] - ***** Running training *****

[2023-12-26 17:42:21,280] [ INFO] - Num examples = 1,848

[2023-12-26 17:42:21,280] [ INFO] - Num Epochs = 3

[2023-12-26 17:42:21,281] [ INFO] - Instantaneous batch size per device = 4

[2023-12-26 17:42:21,281] [ INFO] - Total train batch size (w. parallel, distributed & accumulation) = 16

[2023-12-26 17:42:21,281] [ INFO] - Gradient Accumulation steps = 4

[2023-12-26 17:42:21,281] [ INFO] - Total optimization steps = 345

[2023-12-26 17:42:21,281] [ INFO] - Total num train samples = 5,544

[2023-12-26 17:42:21,285] [ INFO] - Number of trainable parameters = 19,988,480 (per device)
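The figures in this header, and in the per-step lines that follow it, can be reproduced with a little arithmetic. The sketch below is an illustration under stated assumptions rather than the trainer's actual code: the function names are hypothetical, it assumes the trainer floors the number of batches per epoch by the accumulation steps, and it models the schedule as linear warm-up followed by linear decay to zero, which is consistent with the logged learning rates.

```python
import math


def steps_per_epoch(num_examples, per_device_batch_size, grad_accum_steps, num_devices=1):
    """Optimizer updates per epoch, assuming the last partial batch is kept."""
    batches = math.ceil(num_examples / (per_device_batch_size * num_devices))
    return batches // grad_accum_steps


def linear_warmup_decay_lr(step, peak_lr, warmup_steps, total_steps):
    """Linear warm-up to peak_lr, then linear decay to zero at total_steps."""
    if step <= warmup_steps:
        return peak_lr * step / warmup_steps
    return peak_lr * (total_steps - step) / (total_steps - warmup_steps)


per_epoch = steps_per_epoch(1848, 4, 4)  # 462 batches // 4 = 115 updates
total_steps = per_epoch * 3              # 345, matching "Total optimization steps"

# Logged learning rates: 1e-05 at step 1, 0.0003 at step 30, 0.000299 at step 31.
lr_step_1 = linear_warmup_decay_lr(1, 3e-4, 30, total_steps)
lr_step_31 = linear_warmup_decay_lr(31, 3e-4, 30, total_steps)

# The logged ppl is exp(loss): the first step's loss of 4.04572821 gives about 57.15.
ppl_step_1 = math.exp(4.04572821)
```

The same relation explains the epoch column: one optimizer step is 1/115 of an epoch, about 0.0087, which is the value logged at global_step 1.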

[2023-12-26 17:42:24,950] [ INFO] - loss: 4.04572821, learning_rate: 1e-05, global_step: 1, interval_runtime: 3.6647, interval_samples_per_second: 4.365975805930098, interval_steps_per_second: 0.27287348787063115, ppl: 57.15279011734303, epoch: 0.0087

[2023-12-26 17:42:28,307] [ INFO] - loss: 4.65756416, learning_rate: 2e-05, global_step: 2, interval_runtime: 3.3567, interval_samples_per_second: 4.766609671416571, interval_steps_per_second: 0.2979131044635357, ppl: 105.37908269415595, epoch: 0.0173

[2023-12-26 17:42:31,806] [ INFO] - loss: 4.39336109, learning_rate: 3e-05, global_step: 3, interval_runtime: 3.4991, interval_samples_per_second: 4.572590091463571, interval_steps_per_second: 0.2857868807164732, ppl: 80.91191469147887, epoch: 0.026

[2023-12-26 17:42:35,023] [ INFO] - loss: 4.2095747, learning_rate: 4e-05, global_step: 4, interval_runtime: 3.2167, interval_samples_per_second: 4.974087316240644, interval_steps_per_second: 0.31088045726504027, ppl: 67.32789916460032, epoch: 0.0346

[2023-12-26 17:42:38,280] [ INFO] - loss: 4.45522022, learning_rate: 5e-05, global_step: 5, interval_runtime: 3.2576, interval_samples_per_second: 4.911592329911189, interval_steps_per_second: 0.3069745206194493, ppl: 86.07510421755674, epoch: 0.0433

[2023-12-26 17:42:42,170] [ INFO] - loss: 4.172194, learning_rate: 6e-05, global_step: 6, interval_runtime: 3.89, interval_samples_per_second: 4.113123033615344, interval_steps_per_second: 0.257070189600959, ppl: 64.85759368161546, epoch: 0.0519

[2023-12-26 17:42:45,784] [ INFO] - loss: 3.75121832, learning_rate: 7e-05, global_step: 7, interval_runtime: 3.6141, interval_samples_per_second: 4.427085699961078, interval_steps_per_second: 0.2766928562475674, ppl: 42.57291785460257, epoch: 0.0606

[2023-12-26 17:42:49,598] [ INFO] - loss: 3.57292008, learning_rate: 8e-05, global_step: 8, interval_runtime: 3.8137, interval_samples_per_second: 4.195439390785131, interval_steps_per_second: 0.2622149619240707, ppl: 35.62045601509179, epoch: 0.0693

[2023-12-26 17:42:52,626] [ INFO] - loss: 3.01917839, learning_rate: 9e-05, global_step: 9, interval_runtime: 3.028, interval_samples_per_second: 5.2839536578947826, interval_steps_per_second: 0.3302471036184239, ppl: 20.47446274838995, epoch: 0.0779

[2023-12-26 17:42:55,893] [ INFO] - loss: 2.75773215, learning_rate: 0.0001, global_step: 10, interval_runtime: 3.2669, interval_samples_per_second: 4.897542422550416, interval_steps_per_second: 0.306096401409401, ppl: 15.764051874163764, epoch: 0.0866

[2023-12-26 17:42:59,152] [ INFO] - loss: 2.5989778, learning_rate: 0.00011, global_step: 11, interval_runtime: 3.2584, interval_samples_per_second: 4.9104358222759465, interval_steps_per_second: 0.30690223889224666, ppl: 13.449982433667916, epoch: 0.0952

[2023-12-26 17:43:02,654] [ INFO] - loss: 2.21501446, learning_rate: 0.00012, global_step: 12, interval_runtime: 3.5021, interval_samples_per_second: 4.5686416499291065, interval_steps_per_second: 0.28554010312056916, ppl: 9.161541586542349, epoch: 0.1039

[2023-12-26 17:43:05,641] [ INFO] - loss: 2.03604507, learning_rate: 0.00013, global_step: 13, interval_runtime: 2.9872, interval_samples_per_second: 5.356214143645703, interval_steps_per_second: 0.33476338397785643, ppl: 7.660253444848598, epoch: 0.1126

[2023-12-26 17:43:09,544] [ INFO] - loss: 1.90918612, learning_rate: 0.00014, global_step: 14, interval_runtime: 3.9035, interval_samples_per_second: 4.09884021807836, interval_steps_per_second: 0.2561775136298975, ppl: 6.7475948306385, epoch: 0.1212

[2023-12-26 17:43:13,583] [ INFO] - loss: 1.76850057, learning_rate: 0.00015, global_step: 15, interval_runtime: 4.0385, interval_samples_per_second: 3.9618929230574502, interval_steps_per_second: 0.24761830769109064, ppl: 5.862057024120994, epoch: 0.1299

[2023-12-26 17:43:17,162] [ INFO] - loss: 1.59435368, learning_rate: 0.00016, global_step: 16, interval_runtime: 3.5791, interval_samples_per_second: 4.470455789035147, interval_steps_per_second: 0.2794034868146967, ppl: 4.925144823605828, epoch: 0.1385

[2023-12-26 17:43:20,310] [ INFO] - loss: 1.55910134, learning_rate: 0.00017, global_step: 17, interval_runtime: 3.1482, interval_samples_per_second: 5.082190660424854, interval_steps_per_second: 0.3176369162765534, ppl: 4.754546603849948, epoch: 0.1472

[2023-12-26 17:43:24,120] [ INFO] - loss: 1.37142038, learning_rate: 0.00018, global_step: 18, interval_runtime: 3.8102, interval_samples_per_second: 4.199265432108036, interval_steps_per_second: 0.26245408950675225, ppl: 3.940944360515859, epoch: 0.1558

[2023-12-26 17:43:27,430] [ INFO] - loss: 1.26009345, learning_rate: 0.00019, global_step: 19, interval_runtime: 3.3092, interval_samples_per_second: 4.835006761991377, interval_steps_per_second: 0.30218792262446104, ppl: 3.5257509533974374, epoch: 0.1645

[2023-12-26 17:43:30,616] [ INFO] - loss: 1.38265204, learning_rate: 0.0002, global_step: 20, interval_runtime: 3.1861, interval_samples_per_second: 5.021890906010552, interval_steps_per_second: 0.3138681816256595, ppl: 3.985457216342121, epoch: 0.1732

[2023-12-26 17:43:34,073] [ INFO] - loss: 1.34700322, learning_rate: 0.00021, global_step: 21, interval_runtime: 3.4576, interval_samples_per_second: 4.627547107516889, interval_steps_per_second: 0.28922169421980554, ppl: 3.845882978897622, epoch: 0.1818

[2023-12-26 17:43:37,613] [ INFO] - loss: 0.96020913, learning_rate: 0.00022, global_step: 22, interval_runtime: 3.5402, interval_samples_per_second: 4.519494023607096, interval_steps_per_second: 0.2824683764754435, ppl: 2.6122427146223246, epoch: 0.1905

[2023-12-26 17:43:41,826] [ INFO] - loss: 0.81633461, learning_rate: 0.00023, global_step: 23, interval_runtime: 4.2123, interval_samples_per_second: 3.798402662854955, interval_steps_per_second: 0.2374001664284347, ppl: 2.262192803692768, epoch: 0.1991

[2023-12-26 17:43:46,109] [ INFO] - loss: 0.92583209, learning_rate: 0.00024, global_step: 24, interval_runtime: 4.2829, interval_samples_per_second: 3.735805341596222, interval_steps_per_second: 0.23348783384976388, ppl: 2.523967554998823, epoch: 0.2078

[2023-12-26 17:43:49,823] [ INFO] - loss: 0.97212011, learning_rate: 0.00025, global_step: 25, interval_runtime: 3.7141, interval_samples_per_second: 4.307892351580294, interval_steps_per_second: 0.26924327197376835, ppl: 2.643543124577833, epoch: 0.2165

[2023-12-26 17:43:53,004] [ INFO] - loss: 0.68968725, learning_rate: 0.00026, global_step: 26, interval_runtime: 3.1813, interval_samples_per_second: 5.029367627441282, interval_steps_per_second: 0.3143354767150801, ppl: 1.9930920962051093, epoch: 0.2251

[2023-12-26 17:43:56,862] [ INFO] - loss: 0.75413704, learning_rate: 0.00027, global_step: 27, interval_runtime: 3.8575, interval_samples_per_second: 4.1477499141974175, interval_steps_per_second: 0.2592343696373386, ppl: 2.1257762717033786, epoch: 0.2338

[2023-12-26 17:43:59,763] [ INFO] - loss: 0.63414562, learning_rate: 0.00028, global_step: 28, interval_runtime: 2.9012, interval_samples_per_second: 5.514889879483934, interval_steps_per_second: 0.34468061746774586, ppl: 1.885410596015647, epoch: 0.2424

[2023-12-26 17:44:03,739] [ INFO] - loss: 0.6446268, learning_rate: 0.00029, global_step: 29, interval_runtime: 3.9758, interval_samples_per_second: 4.024363191163546, interval_steps_per_second: 0.2515226994477216, ppl: 1.9052758476273477, epoch: 0.2511

[2023-12-26 17:44:07,650] [ INFO] - loss: 0.63658696, learning_rate: 0.0003, global_step: 30, interval_runtime: 3.9109, interval_samples_per_second: 4.091122564876725, interval_steps_per_second: 0.25569516030479533, ppl: 1.8900191475517596, epoch: 0.2597

[2023-12-26 17:44:11,223] [ INFO] - loss: 0.56769204, learning_rate: 0.000299, global_step: 31, interval_runtime: 3.5735, interval_samples_per_second: 4.477359595438927, interval_steps_per_second: 0.27983497471493296, ppl: 1.7641906676844925, epoch: 0.2684

[2023-12-26 17:44:14,480] [ INFO] - loss: 0.51316339, learning_rate: 0.0002981, global_step: 32, interval_runtime: 3.2572, interval_samples_per_second: 4.912153169838064, interval_steps_per_second: 0.307009573114879, ppl: 1.6705675015668517, epoch: 0.2771

[2023-12-26 17:44:17,453] [ INFO] - loss: 0.54714298, learning_rate: 0.0002971, global_step: 33, interval_runtime: 2.9726, interval_samples_per_second: 5.382444256551639, interval_steps_per_second: 0.33640276603447744, ppl: 1.7283081464920642, epoch: 0.2857

[2023-12-26 17:44:20,106] [ INFO] - loss: 0.5057705, learning_rate: 0.0002962, global_step: 34, interval_runtime: 2.6522, interval_samples_per_second: 6.03280133930277, interval_steps_per_second: 0.3770500837064231, ppl: 1.6582627197822182, epoch: 0.2944

[2023-12-26 17:44:22,814] [ INFO] - loss: 0.44090223, learning_rate: 0.0002952, global_step: 35, interval_runtime: 2.7086, interval_samples_per_second: 5.907133986294971, interval_steps_per_second: 0.3691958741434357, ppl: 1.5541087497017636, epoch: 0.303

[2023-12-26 17:44:26,301] [ INFO] - loss: 0.41087997, learning_rate: 0.0002943, global_step: 36, interval_runtime: 3.4869, interval_samples_per_second: 4.588559640353671, interval_steps_per_second: 0.2867849775221044, ppl: 1.508144323130449, epoch: 0.3117

[2023-12-26 17:44:29,741] [ INFO] - loss: 0.36454743, learning_rate: 0.0002933, global_step: 37, interval_runtime: 3.4403, interval_samples_per_second: 4.65081652284074, interval_steps_per_second: 0.29067603267754627, ppl: 1.439862222225052, epoch: 0.3203

[2023-12-26 17:44:33,123] [ INFO] - loss: 0.34224176, learning_rate: 0.0002924, global_step: 38, interval_runtime: 3.3821, interval_samples_per_second: 4.730738726062611, interval_steps_per_second: 0.29567117037891316, ppl: 1.40810067878726, epoch: 0.329

[2023-12-26 17:44:36,322] [ INFO] - loss: 0.40164879, learning_rate: 0.0002914, global_step: 39, interval_runtime: 3.1989, interval_samples_per_second: 5.001789822888476, interval_steps_per_second: 0.31261186393052975, ppl: 1.4942864321684426, epoch: 0.3377

[2023-12-26 17:44:39,941] [ INFO] - loss: 0.34734392, learning_rate: 0.0002905, global_step: 40, interval_runtime: 3.6195, interval_samples_per_second: 4.420510938298477, interval_steps_per_second: 0.27628193364365483, ppl: 1.415303392821156, epoch: 0.3463

[2023-12-26 17:44:43,331] [ INFO] - loss: 0.34797683, learning_rate: 0.0002895, global_step: 41, interval_runtime: 3.3899, interval_samples_per_second: 4.719858802727873, interval_steps_per_second: 0.29499117517049206, ppl: 1.4161994360189456, epoch: 0.355

[2023-12-26 17:44:46,994] [ INFO] - loss: 0.3465372, learning_rate: 0.0002886, global_step: 42, interval_runtime: 3.6628, interval_samples_per_second: 4.368268092655978, interval_steps_per_second: 0.2730167557909986, ppl: 1.4141620996819957, epoch: 0.3636

[2023-12-26 17:44:50,066] [ INFO] - loss: 0.35139573, learning_rate: 0.0002876, global_step: 43, interval_runtime: 3.0719, interval_samples_per_second: 5.20858310791659, interval_steps_per_second: 0.3255364442447869, ppl: 1.4210495666020952, epoch: 0.3723

[2023-12-26 17:44:53,690] [ INFO] - loss: 0.3359192, learning_rate: 0.0002867, global_step: 44, interval_runtime: 3.6242, interval_samples_per_second: 4.414716444232063, interval_steps_per_second: 0.27591977776450394, ppl: 1.3992259627854928, epoch: 0.381

[2023-12-26 17:44:57,252] [ INFO] - loss: 0.332609, learning_rate: 0.0002857, global_step: 45, interval_runtime: 3.5619, interval_samples_per_second: 4.492029541467871, interval_steps_per_second: 0.28075184634174194, ppl: 1.3946019025079608, epoch: 0.3896

[2023-12-26 17:45:00,691] [ INFO] - loss: 0.30754462, learning_rate: 0.0002848, global_step: 46, interval_runtime: 3.4393, interval_samples_per_second: 4.6521041994702985, interval_steps_per_second: 0.29075651246689366, ppl: 1.360081493984319, epoch: 0.3983

[2023-12-26 17:45:04,107] [ INFO] - loss: 0.2745533, learning_rate: 0.0002838, global_step: 47, interval_runtime: 3.4151, interval_samples_per_second: 4.685137446638551, interval_steps_per_second: 0.29282109041490945, ppl: 1.3159427119464535, epoch: 0.4069

[2023-12-26 17:45:07,275] [ INFO] - loss: 0.33314824, learning_rate: 0.0002829, global_step: 48, interval_runtime: 3.168, interval_samples_per_second: 5.050457186622707, interval_steps_per_second: 0.3156535741639192, ppl: 1.3953541304353352, epoch: 0.4156

[2023-12-26 17:45:11,373] [ INFO] - loss: 0.30882508, learning_rate: 0.0002819, global_step: 49, interval_runtime: 4.0984, interval_samples_per_second: 3.903920851275084, interval_steps_per_second: 0.24399505320469275, ppl: 1.361824139389874, epoch: 0.4242

[2023-12-26 17:45:14,388] [ INFO] - loss: 0.30945367, learning_rate: 0.000281, global_step: 50, interval_runtime: 3.0149, interval_samples_per_second: 5.306904905470886, interval_steps_per_second: 0.3316815565919304, ppl: 1.3626804375276809, epoch: 0.4329

[2023-12-26 17:45:17,930] [ INFO] - loss: 0.29789728, learning_rate: 0.00028, global_step: 51, interval_runtime: 3.5425, interval_samples_per_second: 4.5166080190902775, interval_steps_per_second: 0.28228800119314235, ppl: 1.34702341452768, epoch: 0.4416

[2023-12-26 17:45:21,610] [ INFO] - loss: 0.28248543, learning_rate: 0.000279, global_step: 52, interval_runtime: 3.6793, interval_samples_per_second: 4.348656011857886, interval_steps_per_second: 0.2717910007411179, ppl: 1.3264224489808771, epoch: 0.4502

[2023-12-26 17:45:25,480] [ INFO] - loss: 0.28851509, learning_rate: 0.0002781, global_step: 53, interval_runtime: 3.8697, interval_samples_per_second: 4.134670933214908, interval_steps_per_second: 0.25841693332593174, ppl: 1.3344444861382638, epoch: 0.4589

[2023-12-26 17:45:28,597] [ INFO] - loss: 0.26777136, learning_rate: 0.0002771, global_step: 54, interval_runtime: 3.1172, interval_samples_per_second: 5.132893716860017, interval_steps_per_second: 0.32080585730375105, ppl: 1.3070482623338415, epoch: 0.4675

[2023-12-26 17:45:31,972] [ INFO] - loss: 0.30797887, learning_rate: 0.0002762, global_step: 55, interval_runtime: 3.3754, interval_samples_per_second: 4.740216348520788, interval_steps_per_second: 0.2962635217825493, ppl: 1.3606722376290126, epoch: 0.4762

[2023-12-26 17:45:35,789] [ INFO] - loss: 0.25563985, learning_rate: 0.0002752, global_step: 56, interval_runtime: 3.8169, interval_samples_per_second: 4.191847577319691, interval_steps_per_second: 0.2619904735824807, ppl: 1.2912875869600262, epoch: 0.4848

[2023-12-26 17:45:39,008] [ INFO] - loss: 0.27637056, learning_rate: 0.0002743, global_step: 57, interval_runtime: 3.2185, interval_samples_per_second: 4.971223970727729, interval_steps_per_second: 0.31070149817048304, ppl: 1.3183362962229255, epoch: 0.4935

[2023-12-26 17:45:42,650] [ INFO] - loss: 0.29341272, learning_rate: 0.0002733, global_step: 58, interval_runtime: 3.63, interval_samples_per_second: 4.407697726782218, interval_steps_per_second: 0.27548110792388864, ppl: 1.3409961321598929, epoch: 0.5022

[2023-12-26 17:45:46,185] [ INFO] - loss: 0.2738843, learning_rate: 0.0002724, global_step: 59, interval_runtime: 3.5479, interval_samples_per_second: 4.50970456331901, interval_steps_per_second: 0.28185653520743814, ppl: 1.3150626406888208, epoch: 0.5108

[2023-12-26 17:45:49,729] [ INFO] - loss: 0.29272103, learning_rate: 0.0002714, global_step: 60, interval_runtime: 3.5435, interval_samples_per_second: 4.51530705583338, interval_steps_per_second: 0.28220669098958623, ppl: 1.3400688992610694, epoch: 0.5195

[2023-12-26 17:45:53,090] [ INFO] - loss: 0.24711998, learning_rate: 0.0002705, global_step: 61, interval_runtime: 3.3613, interval_samples_per_second: 4.76002560569709, interval_steps_per_second: 0.29750160035606815, ppl: 1.280332717882807, epoch: 0.5281

[2023-12-26 17:45:56,060] [ INFO] - loss: 0.27978322, learning_rate: 0.0002695, global_step: 62, interval_runtime: 2.9697, interval_samples_per_second: 5.387787058462534, interval_steps_per_second: 0.33673669115390836, ppl: 1.3228430153437678, epoch: 0.5368

[2023-12-26 17:46:00,180] [ INFO] - loss: 0.26979572, learning_rate: 0.0002686, global_step: 63, interval_runtime: 4.1194, interval_samples_per_second: 3.8840582897572737, interval_steps_per_second: 0.2427536431098296, ppl: 1.3096968785260072, epoch: 0.5455

[2023-12-26 17:46:03,959] [ INFO] - loss: 0.2797547, learning_rate: 0.0002676, global_step: 64, interval_runtime: 3.7798, interval_samples_per_second: 4.233009314355052, interval_steps_per_second: 0.26456308214719076, ppl: 1.322805288398959, epoch: 0.5541

[2023-12-26 17:46:07,112] [ INFO] - loss: 0.26245928, learning_rate: 0.0002667, global_step: 65, interval_runtime: 3.1532, interval_samples_per_second: 5.0742238975114935, interval_steps_per_second: 0.31713899359446834, ppl: 1.3001235260606159, epoch: 0.5628

[2023-12-26 17:46:10,654] [ INFO] - loss: 0.27325338, learning_rate: 0.0002657, global_step: 66, interval_runtime: 3.5412, interval_samples_per_second: 4.518302126492652, interval_steps_per_second: 0.28239388290579076, ppl: 1.314233203049469, epoch: 0.5714

[2023-12-26 17:46:13,738] [ INFO] - loss: 0.29552907, learning_rate: 0.0002648, global_step: 67, interval_runtime: 3.0848, interval_samples_per_second: 5.186665460984193, interval_steps_per_second: 0.32416659131151204, ppl: 1.343837154562674, epoch: 0.5801

[2023-12-26 17:46:16,404] [ INFO] - loss: 0.27431649, learning_rate: 0.0002638, global_step: 68, interval_runtime: 2.665, interval_samples_per_second: 6.003694960613001, interval_steps_per_second: 0.37523093503831256, ppl: 1.3156311204482851, epoch: 0.5887

[2023-12-26 17:46:19,818] [ INFO] - loss: 0.30101836, learning_rate: 0.0002629, global_step: 69, interval_runtime: 3.4145, interval_samples_per_second: 4.685920299323026, interval_steps_per_second: 0.29287001870768914, ppl: 1.351234149969267, epoch: 0.5974

[2023-12-26 17:46:23,446] [ INFO] - loss: 0.26293159, learning_rate: 0.0002619, global_step: 70, interval_runtime: 3.6285, interval_samples_per_second: 4.409583156907597, interval_steps_per_second: 0.2755989473067248, ppl: 1.3007377324396991, epoch: 0.6061

[2023-12-26 17:46:26,805] [ INFO] - loss: 0.25193915, learning_rate: 0.000261, global_step: 71, interval_runtime: 3.3583, interval_samples_per_second: 4.764344747034641, interval_steps_per_second: 0.29777154668966505, ppl: 1.2865177502978775, epoch: 0.6147

[2023-12-26 17:46:29,961] [ INFO] - loss: 0.26743451, learning_rate: 0.00026, global_step: 72, interval_runtime: 3.1567, interval_samples_per_second: 5.068537361968574, interval_steps_per_second: 0.3167835851230359, ppl: 1.3066080572723744, epoch: 0.6234

[2023-12-26 17:46:33,563] [ INFO] - loss: 0.26232645, learning_rate: 0.000259, global_step: 73, interval_runtime: 3.6013, interval_samples_per_second: 4.4428989111833515, interval_steps_per_second: 0.27768118194895947, ppl: 1.299950842121707, epoch: 0.632

[2023-12-26 17:46:36,887] [ INFO] - loss: 0.28119218, learning_rate: 0.0002581, global_step: 74, interval_runtime: 3.3247, interval_samples_per_second: 4.812406825716142, interval_steps_per_second: 0.30077542660725887, ppl: 1.324708161888552, epoch: 0.6407

[2023-12-26 17:46:40,115] [ INFO] - loss: 0.27209201, learning_rate: 0.0002571, global_step: 75, interval_runtime: 3.2275, interval_samples_per_second: 4.957351649423985, interval_steps_per_second: 0.30983447808899905, ppl: 1.312707777997345, epoch: 0.6494