Connecting to Kafka from IDEA

Create a new Maven project

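Add the dependencies to pom.xml. The producer and consumer below only need kafka-clients; kafka_2.12 is the Kafka server artifact built for Scala 2.12.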

```xml
<dependency>
    <groupId>org.apache.kafka</groupId>
    <artifactId>kafka-clients</artifactId>
    <version>2.8.0</version>
</dependency>
<dependency>
    <groupId>org.apache.kafka</groupId>
    <artifactId>kafka_2.12</artifactId>
    <version>2.8.0</version>
</dependency>
```

Create a Java class MyProducer

import org.apache.kafka.clients.producer.KafkaProducer;

import org.apache.kafka.clients.producer.ProducerConfig;

import org.apache.kafka.clients.producer.ProducerRecord;

import org.apache.kafka.common.serialization.StringSerializer;

import java.util.Properties;

import java.util.Scanner;

public class MyProducer {

public static void main(String[] args) {

Properties properties = new Properties();

properties.put(ProducerConfig.BOOTSTRAP_SERVERS_CONFIG,"192.168.153.145:9092");

properties.put(ProducerConfig.KEY_SERIALIZER_CLASS_CONFIG, StringSerializer.class);

properties.put(ProducerConfig.VALUE_SERIALIZER_CLASS_CONFIG,StringSerializer.class);

/**

* 0 不需要等待broker任何响应(无需应答)

* 1 只需要等待分区副本中的leader应答 可能导致数据丢失

* -1 所有机器全部存放完成leader应答,响应时间最长,数据最安全,不会丢失数据,可能会重复

*/

properties.put(ProducerConfig.ACKS_CONFIG,"0");

KafkaProducer<String, String> producer = new KafkaProducer<String, String>(properties);

Scanner scanner = new Scanner(System.in);

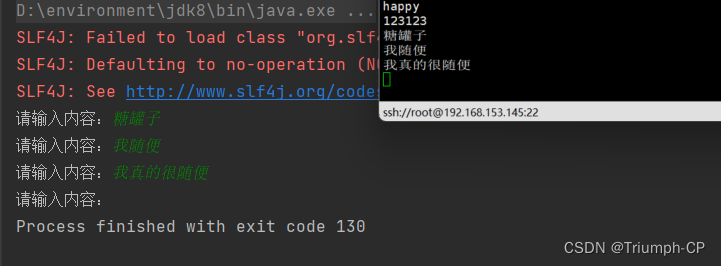

while (true){

System.out.print("请输入内容:");

String msg = scanner.next();

ProducerRecord<String, String> record = new ProducerRecord<>("cp", msg);

producer.send(record);

}

}

}

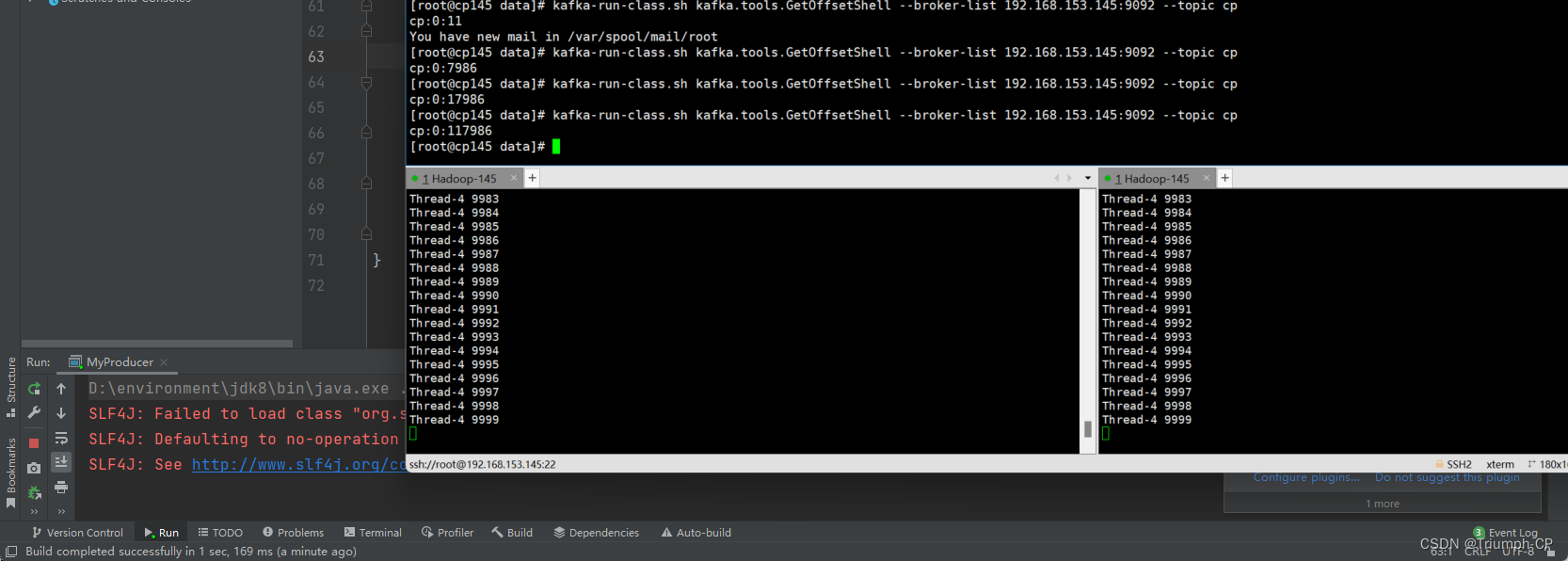
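The three `acks` values in the comment above differ in one respect: how many replicas are guaranteed to hold a record when the producer treats the send as successful. The hypothetical `AcksModel` class below encodes that rule for a partition whose in-sync replica set (ISR) has `isr` members. It is plain JDK code and not a Kafka API. From that count it derives whether an acknowledged record survives the loss of the leader. It is a sketch only: it ignores retries and the broker setting `min.insync.replicas`.

```java
/** Hypothetical JDK-only model of the acks setting; not part of kafka-clients. */
final class AcksModel {

    /** Replicas guaranteed to hold a record once the send counts as successful. */
    static int replicasGuaranteed(String acks, int isr) {
        if (isr < 1) {
            throw new IllegalArgumentException("model assumes the ISR contains at least the leader");
        }
        switch (acks) {
            case "0":
                return 0;   // the producer does not wait, so nothing is confirmed
            case "1":
                return 1;   // only the leader has written the record
            case "-1":
            case "all":
                return isr; // the leader waited for every in-sync replica
            default:
                throw new IllegalArgumentException("unknown acks value: " + acks);
        }
    }

    /** True if an acknowledged record still has a copy after the leader is lost. */
    static boolean survivesLeaderLoss(String acks, int isr) {
        return replicasGuaranteed(acks, isr) >= 2;
    }

    public static void main(String[] args) {
        for (String acks : new String[] {"0", "1", "-1"}) {
            System.out.println("acks=" + acks
                    + " guaranteed=" + replicasGuaranteed(acks, 3)
                    + " survivesLeaderLoss=" + survivesLeaderLoss(acks, 3));
        }
    }
}
```

With `isr = 1` (the followers have fallen out of sync), `acks=-1` guarantees no more than `acks=1`. This is the case that `min.insync.replicas` is meant to reject.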

Sending messages in bulk from multiple threads

```java
// In main(): start 10 threads, each with its own producer, each sending 1000 records
for (int i = 0; i < 10; i++) {
    new Thread(new Runnable() {
        @Override
        public void run() {
            Properties properties = new Properties();
            properties.put(ProducerConfig.BOOTSTRAP_SERVERS_CONFIG, "192.168.153.145:9092");
            properties.put(ProducerConfig.KEY_SERIALIZER_CLASS_CONFIG, StringSerializer.class);
            properties.put(ProducerConfig.VALUE_SERIALIZER_CLASS_CONFIG, StringSerializer.class);
            properties.put(ProducerConfig.ACKS_CONFIG, "-1");
            KafkaProducer<String, String> producer = new KafkaProducer<String, String>(properties);
            for (int j = 0; j < 1000; j++) {
                ProducerRecord<String, String> record = new ProducerRecord<>("cp",
                        Thread.currentThread().getName() + " " + j);
                producer.send(record);
            }
        }
    }).start();
}
```

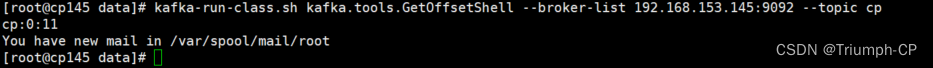

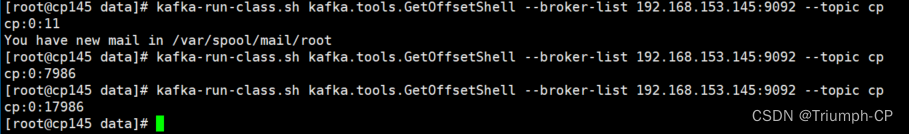

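Something seems wrong: only a little over 7,000 records arrived, although 10 threads × 1,000 records should give 10,000.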

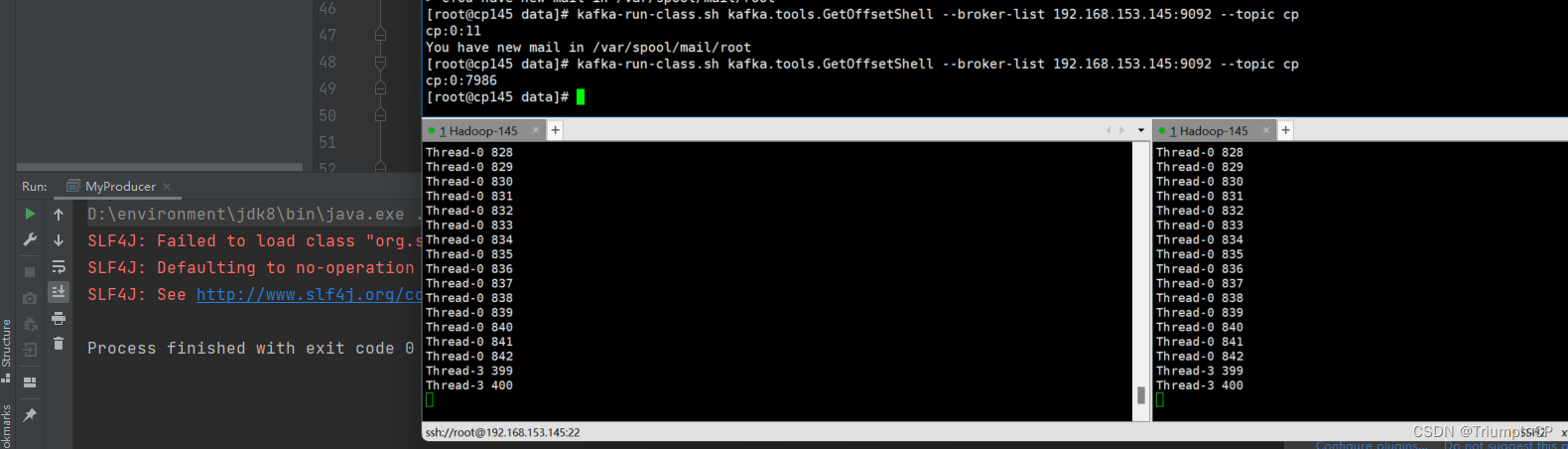

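The problem is that the threads finish too quickly. `send()` is asynchronous: it only puts the record into the producer's in-memory buffer. The records are actually sent by the producer's background I/O thread, which is a daemon thread. The main thread finishes almost immediately, and each worker thread finishes as soon as it has queued its records. Once no non-daemon thread is left, the JVM exits and anything still in the buffer is lost. Letting the threads sleep for a while gives the background thread time to send everything: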

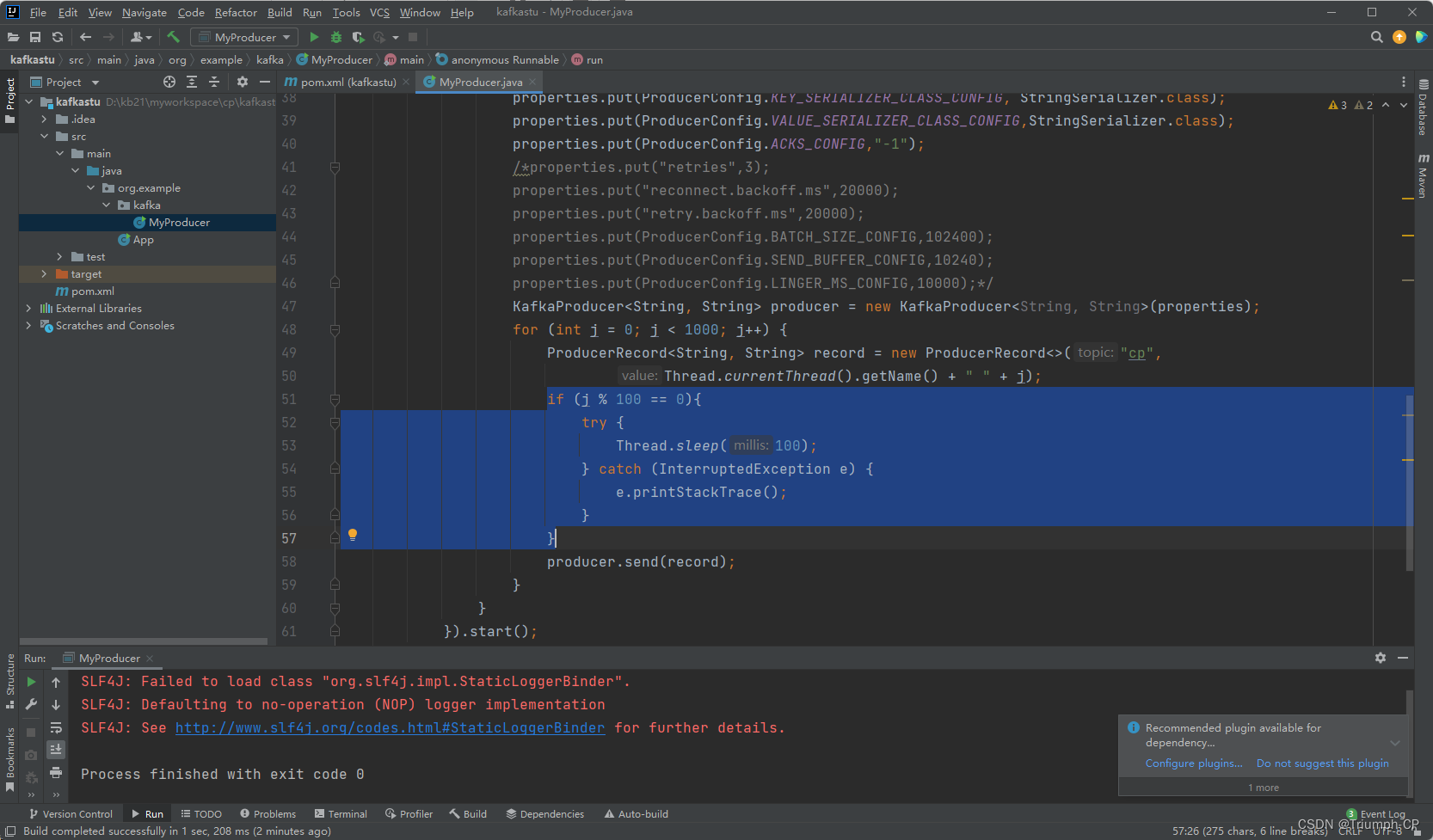

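```java
// Inside the inner loop, after producer.send(record): pause 100 ms after every 100 records
if (j % 100 == 0) {
    try {
        Thread.sleep(100);
    } catch (InterruptedException e) {
        e.printStackTrace();
    }
}
```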

With that change, the count is correct.

Alternatively, put the main thread to sleep instead, placing the call after the loop:

```java
// keep the main thread alive for 120 s
try {
    Thread.sleep(120000);
} catch (InterruptedException e) {
    e.printStackTrace();
}
```

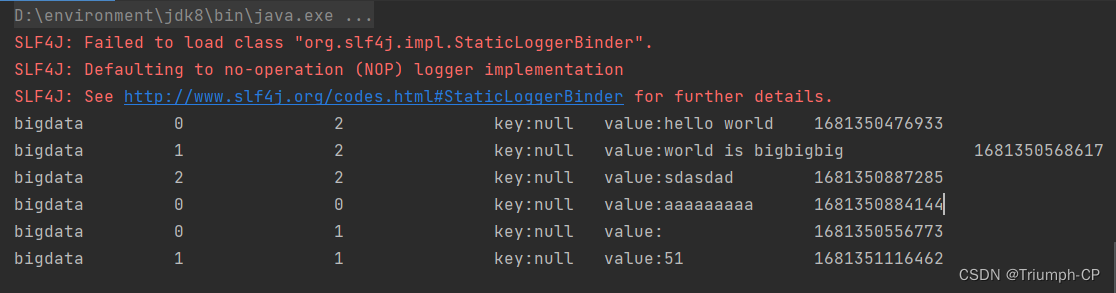
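A sleep works, but only if the duration happens to be long enough. The sketch below is a JDK-only model of the situation, not the Kafka client. The hypothetical `ModelProducer` stands in for `KafkaProducer`: its `send()` only enqueues, and a daemon thread delivers the records in the background. Each worker calls `close()` when it is done, which waits until everything it queued has been delivered. The real `KafkaProducer.close()` similarly blocks until previously sent requests complete. `main` then `join()`s the workers, so the result no longer depends on timing.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.LinkedBlockingQueue;
import java.util.concurrent.atomic.AtomicInteger;

/** Hypothetical model of an async producer: send() enqueues, a daemon thread delivers. */
final class ModelProducer implements AutoCloseable {
    private static final Object CLOSE = new Object(); // unique end-of-stream marker
    private final BlockingQueue<Object> buffer = new LinkedBlockingQueue<>();
    private final AtomicInteger delivered;
    private final Thread sender;

    ModelProducer(AtomicInteger delivered) {
        this.delivered = delivered;
        this.sender = new Thread(this::drain, "model-sender");
        this.sender.setDaemon(true); // like Kafka's I/O thread: does not keep the JVM alive
        this.sender.start();
    }

    private void drain() {
        try {
            while (true) {
                Object item = buffer.take();
                if (item == CLOSE) {
                    return;
                }
                delivered.incrementAndGet(); // stands in for the network write
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
    }

    /** Returns immediately, like KafkaProducer.send(). */
    void send(String value) {
        buffer.add(value);
    }

    /** Blocks until every record queued before this call has been delivered. */
    @Override
    public void close() throws InterruptedException {
        buffer.add(CLOSE);
        sender.join();
    }
}

final class MultiThreadSendDemo {
    /** Starts the workers, waits for them, and returns how many records were delivered. */
    static int run(int threads, int perThread) throws InterruptedException {
        AtomicInteger delivered = new AtomicInteger();
        List<Thread> workers = new ArrayList<>();
        for (int i = 0; i < threads; i++) {
            Thread t = new Thread(() -> {
                // try-with-resources calls close(), so the buffer is drained before run() ends
                try (ModelProducer producer = new ModelProducer(delivered)) {
                    for (int j = 0; j < perThread; j++) {
                        producer.send(Thread.currentThread().getName() + " " + j);
                    }
                } catch (InterruptedException e) {
                    Thread.currentThread().interrupt();
                }
            });
            workers.add(t);
            t.start();
        }
        for (Thread t : workers) {
            t.join(); // wait for the workers instead of sleeping for a guessed duration
        }
        return delivered.get();
    }

    public static void main(String[] args) throws InterruptedException {
        System.out.println(run(10, 1000)); // 10000
    }
}
```

In the real program, the same change means calling `producer.close()` at the end of `run()`. It also means keeping the `Thread` objects so that `main` can `join()` them.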

Create a Java class MyConsumer

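```java
import org.apache.kafka.clients.consumer.ConsumerConfig;
import org.apache.kafka.clients.consumer.ConsumerRecord;
import org.apache.kafka.clients.consumer.ConsumerRecords;
import org.apache.kafka.clients.consumer.KafkaConsumer;
import org.apache.kafka.common.serialization.StringDeserializer;

import java.time.Duration;
import java.util.Collections;
import java.util.Properties;

/**
 * Kafka consumer
 */
public class MyConsumer {
    public static void main(String[] args) {
        Properties prop = new Properties();
        prop.put(ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG, "192.168.153.145:9092");
        prop.put(ConsumerConfig.KEY_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class);
        prop.put(ConsumerConfig.VALUE_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class);
        // Whether offsets are committed automatically: true = auto commit, false = manual commit
        prop.put(ConsumerConfig.ENABLE_AUTO_COMMIT_CONFIG, "false");
        /*
         * auto.offset.reset: where to start when the group has no committed offset
         * earliest  start from the earliest available record
         * latest    start from the end, i.e. only records produced from now on
         * none      throw an exception
         */
        prop.put(ConsumerConfig.AUTO_OFFSET_RESET_CONFIG, "earliest");
        prop.put(ConsumerConfig.GROUP_ID_CONFIG, "GROUP1");

        KafkaConsumer<String, String> consumer = new KafkaConsumer<>(prop);
        // After creating the consumer, subscribe by giving the topic (message queue) name
        consumer.subscribe(Collections.singleton("bigdata"));

        while (true) {
            ConsumerRecords<String, String> records = consumer.poll(Duration.ofMillis(100));
            for (ConsumerRecord<String, String> record : records) {
                System.out.println(record.topic() + "\t" +
                        record.offset() + "\t" +
                        record.partition() + "\t" +
                        "key:" + record.key() + " value:" + record.value() + "\t" +
                        record.timestamp());
            }
            // Auto commit is disabled, so commit this batch's offsets manually;
            // without this, every restart re-reads the topic from the earliest offset
            if (!records.isEmpty()) {
                consumer.commitSync();
            }
        }
    }
}
```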
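MyConsumer sets `enable.auto.commit=false` and `auto.offset.reset=earliest`. Together, these decide where each run starts. The hypothetical `OffsetModel` below is a JDK-only sketch, not the Kafka client. It models one partition and the committed offset of each consumer group. A group with a committed offset resumes from it; otherwise the reset policy applies. As a simplification, "earliest" is offset 0 here because the model never deletes old records. Real Kafka uses the log start offset, which moves forward with retention.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical model: one partition plus the committed offset of each group. */
final class OffsetModel {
    private final List<String> log = new ArrayList<>();              // a record's offset = its index
    private final Map<String, Integer> committed = new HashMap<>();   // group id -> next offset to read

    void append(String value) {
        log.add(value);
    }

    /** The committed offset if there is one, otherwise the auto.offset.reset rule. */
    int startOffset(String groupId, String autoOffsetReset) {
        Integer c = committed.get(groupId);
        if (c != null) {
            return c;
        }
        switch (autoOffsetReset) {
            case "earliest":
                return 0;            // this model never deletes old records
            case "latest":
                return log.size();   // only records appended from now on
            case "none":
                throw new IllegalStateException("no committed offset for group " + groupId);
            default:
                throw new IllegalArgumentException("unknown policy: " + autoOffsetReset);
        }
    }

    /** One consumer run: reads to the end of the log and commits only if asked to. */
    List<String> run(String groupId, String autoOffsetReset, boolean commit) {
        int start = startOffset(groupId, autoOffsetReset);
        List<String> read = new ArrayList<>(log.subList(start, log.size()));
        if (commit) {
            committed.put(groupId, log.size());
        }
        return read;
    }

    public static void main(String[] args) {
        OffsetModel m = new OffsetModel();
        m.append("a");
        m.append("b");
        System.out.println(m.run("GROUP1", "earliest", false)); // [a, b]
        System.out.println(m.run("GROUP1", "earliest", false)); // [a, b] again: nothing was committed
        System.out.println(m.run("GROUP1", "earliest", true));  // [a, b], now committed
        m.append("c");
        System.out.println(m.run("GROUP1", "earliest", true));  // [c]
        System.out.println(m.run("GROUP2", "latest", false));   // []
    }
}
```

The second run shows what happens when manual commit is enabled but nothing is ever committed: each restart sees the same records again.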
