Preface

This article implements the Mask R-CNN instance segmentation algorithm with Chainer.

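I. What Is Instance Segmentation

Instance segmentation combines object detection and semantic segmentation: each object in the image is first located (object detection), and then every pixel is given a label (semantic segmentation), so that separate objects of the same class end up with separate masks.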
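To make the distinction concrete, here is a minimal sketch on a made-up 4x6 grid. The grid, the class id 1, and the helper name `label_instances` are illustrative assumptions; connected-component labeling is only a stand-in for what a network like Mask R-CNN learns, and is not how the model itself works. The semantic mask gives both objects the same class label, while the instance mask gives each object its own id.

```python
from collections import deque


def label_instances(semantic):
    """Give every 4-connected blob of non-zero pixels its own instance id."""
    h, w = len(semantic), len(semantic[0])
    instance = [[0] * w for _ in range(h)]
    next_id = 0
    for y in range(h):
        for x in range(w):
            if semantic[y][x] == 0 or instance[y][x] != 0:
                continue
            # Found an unlabeled foreground pixel: flood-fill its blob.
            next_id += 1
            instance[y][x] = next_id
            queue = deque([(y, x)])
            while queue:
                cy, cx = queue.popleft()
                for ny, nx in ((cy - 1, cx), (cy + 1, cx), (cy, cx - 1), (cy, cx + 1)):
                    if (0 <= ny < h and 0 <= nx < w
                            and semantic[ny][nx] != 0 and instance[ny][nx] == 0):
                        instance[ny][nx] = next_id
                        queue.append((ny, nx))
    return instance, next_id


# Semantic mask: two separate objects, both labeled with class 1.
semantic_mask = [
    [1, 1, 0, 0, 0, 0],
    [1, 1, 0, 0, 1, 1],
    [0, 0, 0, 0, 1, 1],
    [0, 0, 0, 0, 0, 0],
]
instance_mask, num_instances = label_instances(semantic_mask)
for row in instance_mask:
    print(row)
```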

II. Preparing the Dataset

1. Annotating the dataset

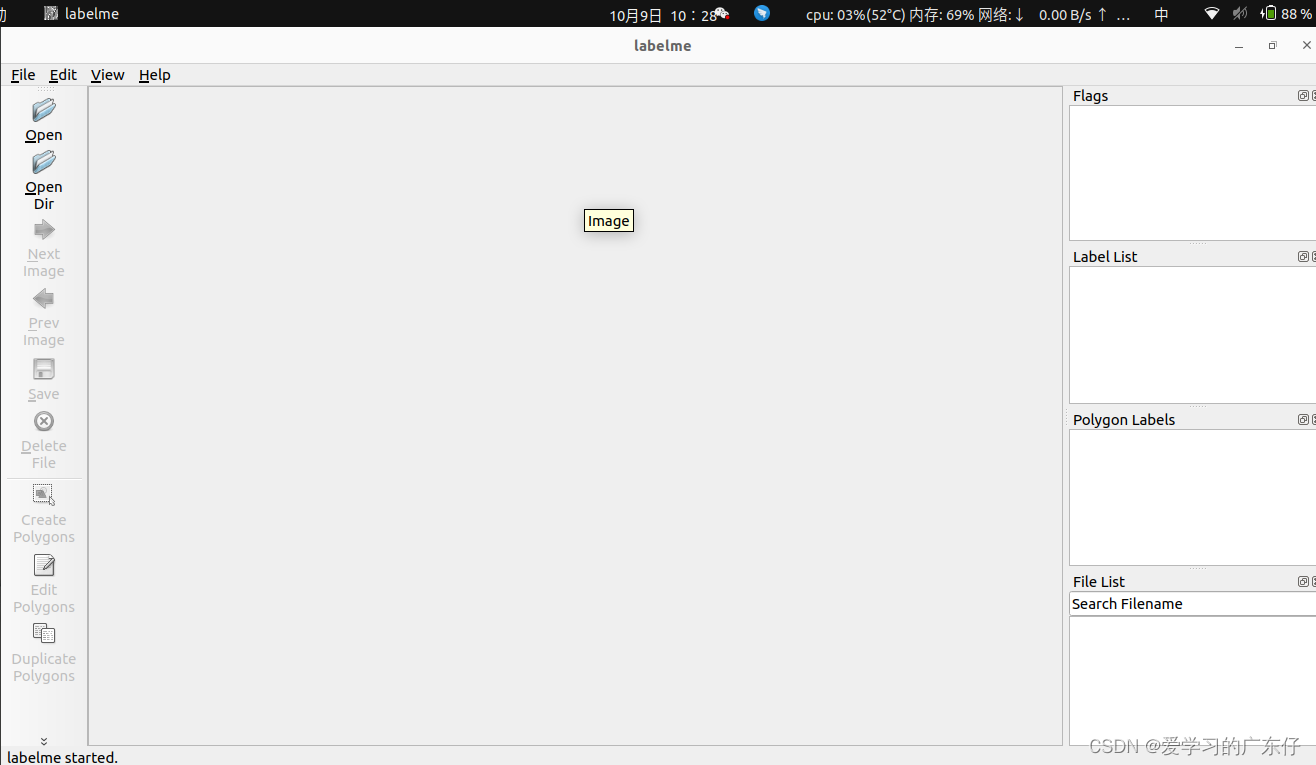

Annotation is done mainly with the labelme tool. To install it with Python, run pip install labelme, then type labelme at the command prompt to start it, as shown:

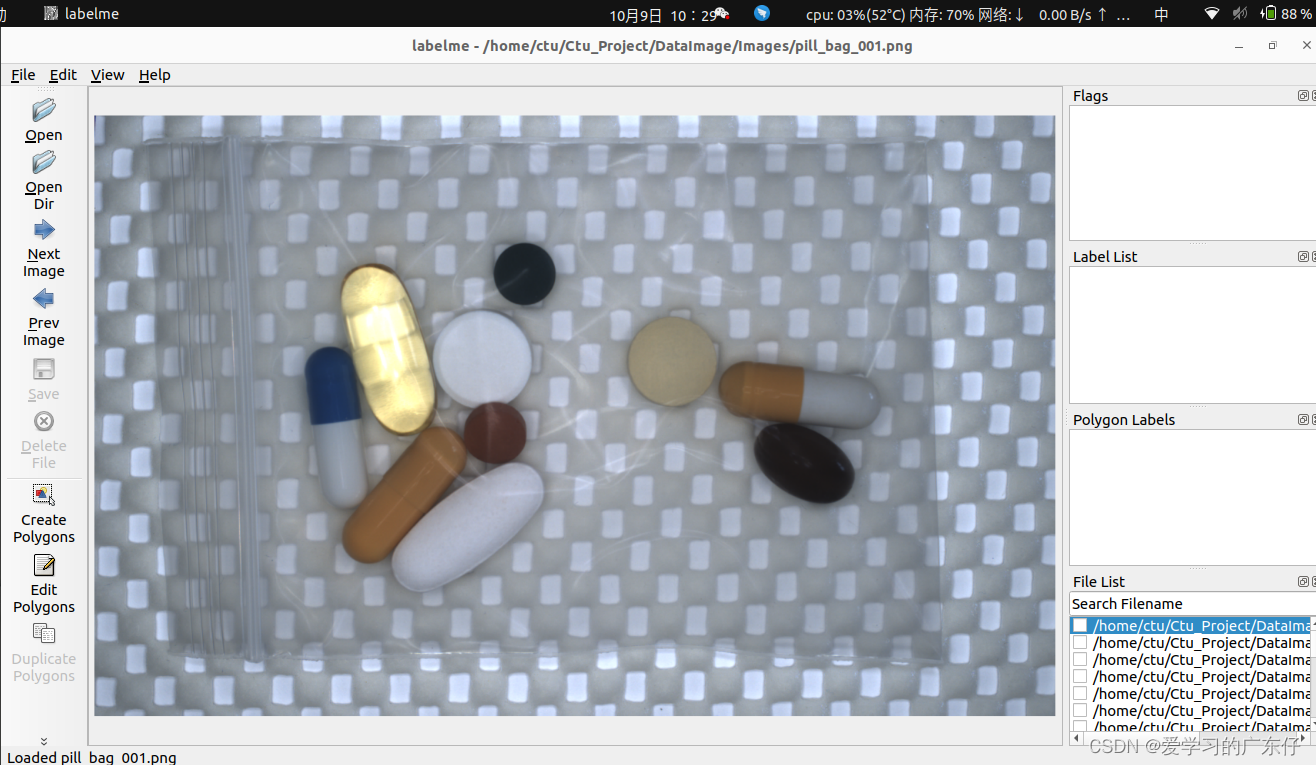

Here you only need to set "OpenDir", which is the folder that holds the images to be annotated.

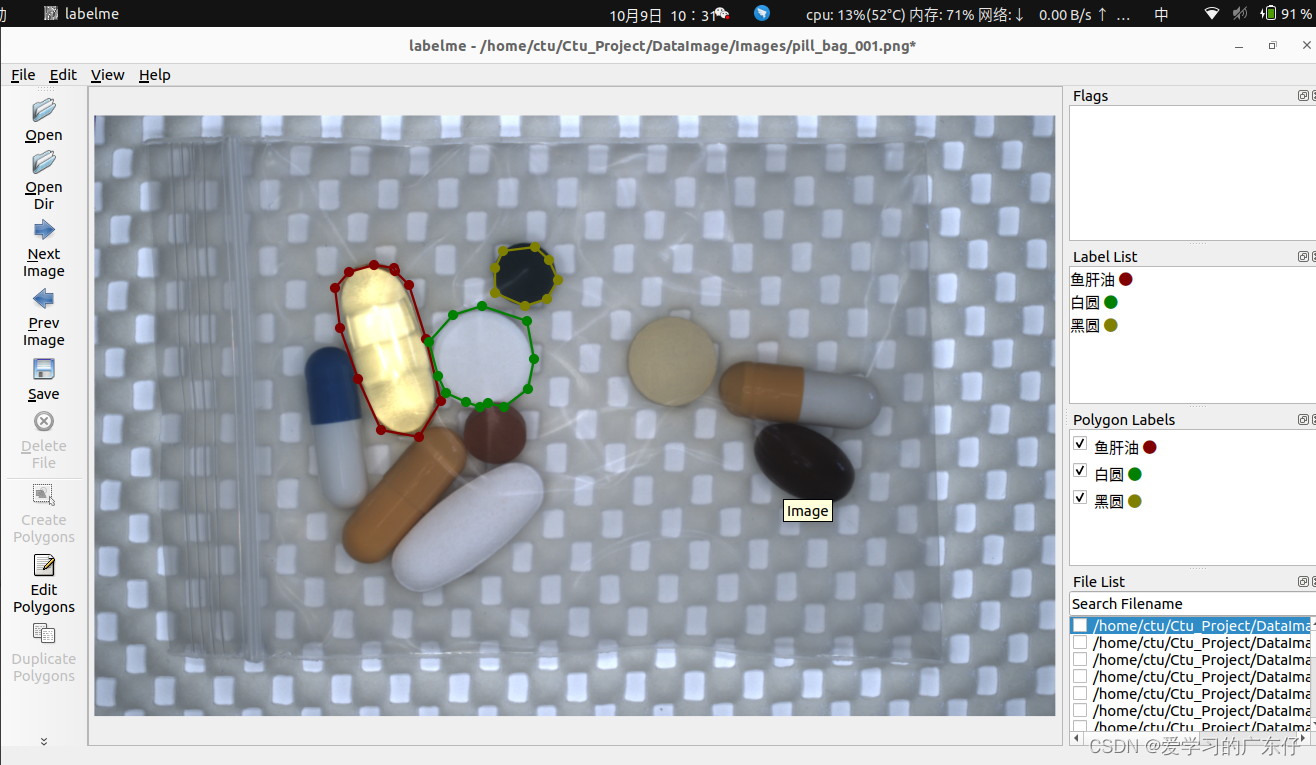

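Once the folder is selected, you can start drawing.

When annotating, I usually only use these shortcuts:

Create Polygons (Ctrl+N): start drawing a polygon

A: previous image

D: next image

The drawing process is shown below:

Simply outline each object in a single pass.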
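Each annotated image is saved by labelme as a JSON file. As a rough sketch of what such a file contains, the snippet below parses a hand-written stand-in (the label name, coordinates, and file name are made up) and derives a bounding box from each polygon. The helper names `polygon_to_bbox` and `summarize` are illustrative, not part of labelme.

```python
import json

# Hand-written stand-in for one labelme annotation file (values are made up).
LABELME_JSON = """
{
  "shapes": [
    {
      "label": "part",
      "points": [[10.0, 20.0], [60.0, 25.0], [55.0, 80.0], [12.0, 70.0]],
      "shape_type": "polygon"
    }
  ],
  "imagePath": "0001.bmp",
  "imageHeight": 100,
  "imageWidth": 120
}
"""


def polygon_to_bbox(points):
    """Return (xmin, ymin, xmax, ymax) enclosing a list of [x, y] points."""
    xs = [p[0] for p in points]
    ys = [p[1] for p in points]
    return min(xs), min(ys), max(xs), max(ys)


def summarize(annotation):
    """List (label, bbox) for every polygon shape in one annotation."""
    return [
        (shape["label"], polygon_to_bbox(shape["points"]))
        for shape in annotation["shapes"]
        if shape.get("shape_type", "polygon") == "polygon"
    ]


annotation = json.loads(LABELME_JSON)
summary = summarize(annotation)
print(annotation["imagePath"], summary)
```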

2. Converting the labelme annotations to a COCO dataset

import os
import shutil

# CreateDataList_Seg and labelme2coco are project helper functions (not shown in this article).
def JSON2COCO(DataDir, SaveDir, split_train):
    # Start from a clean output directory.
    if os.path.exists(SaveDir):
        shutil.rmtree(SaveDir)
    os.makedirs(os.path.join(SaveDir, 'annotations'))
    os.makedirs(os.path.join(SaveDir, 'images', 'train_data'))
    os.makedirs(os.path.join(SaveDir, 'images', 'val_data'))

    # Split the annotation files into a training list and a validation list.
    train_data_list, val_data_list, classes_names = CreateDataList_Seg(DataDir, split_train)

    # Write the image paths of each split (images are assumed to be .bmp files).
    # os.path.splitext is used so that dots elsewhere in the path do not break the name.
    with open(os.path.join(SaveDir, 'train.txt'), 'w', encoding='utf-8') as f:
        for file_name in train_data_list:
            fn = os.path.splitext(os.path.basename(file_name))[0] + '.bmp'
            f.write(os.path.join(SaveDir, 'images', 'train_data', fn) + '\n')
    with open(os.path.join(SaveDir, 'val.txt'), 'w', encoding='utf-8') as f:
        for file_name in val_data_list:
            fn = os.path.splitext(os.path.basename(file_name))[0] + '.bmp'
            f.write(os.path.join(SaveDir, 'images', 'val_data', fn) + '\n')

    # Write the class names, one per line.
    with open(os.path.join(SaveDir, 'labels.txt'), 'w', encoding='utf-8') as f:
        for cls in classes_names:
            f.write(cls + '\n')

    # Convert each split to a COCO annotation file.
    labelme2coco(train_data_list, os.path.join(SaveDir, 'images', 'train_data'), os.path.join(SaveDir, 'annotations', 'instances_train_data.json'))
    labelme2coco(val_data_list, os.path.join(SaveDir, 'images', 'val_data'), os.path.join(SaveDir, 'annotations', 'instances_val_data.json'))

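Parameters:

DataDir: the folder that contains the annotation JSON files

SaveDir: the directory where the generated COCO dataset is saved

split_train: the ratio used to split the data into training and validation sets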
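How `CreateDataList_Seg` performs the split is not shown in this article. The sketch below is one common approach, under the assumption that `split_train` is the fraction of files assigned to the training set; the function name `split_file_list`, the fixed seed, and the file names are all hypothetical.

```python
import random


def split_file_list(files, split_train, seed=0):
    """Shuffle the files reproducibly and split them into (train, val)."""
    files = sorted(files)                  # fixed starting order
    random.Random(seed).shuffle(files)     # reproducible shuffle
    n_train = int(round(len(files) * split_train))
    return files[:n_train], files[n_train:]


# Ten made-up annotation files, 90% for training.
files = ['img_{:02d}.json'.format(i) for i in range(10)]
train_files, val_files = split_file_list(files, 0.9)
print(len(train_files), len(val_files))
```

Fixing the seed keeps the split stable between runs, which makes validation results comparable.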

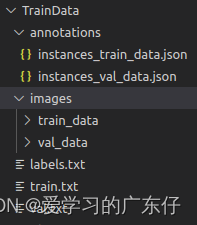

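The generated directory layout is as follows:

instances_train_data.json: annotation information for the training set

instances_val_data.json: annotation information for the validation set

train_data: the original images of the training set

val_data: the original images of the validation set

labels.txt: the class names used in this training run, one per line

train.txt: the image paths of the training set

val.txt: the image paths of the validation set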
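The article does not show what `labelme2coco` writes, so the following is only a sketch of the standard COCO instance annotation layout (`images`, `categories`, `annotations`) for a single polygon. The helper names `polygon_area` and `make_coco`, the ids, and the sample values are assumptions made for illustration.

```python
import json


def polygon_area(points):
    """Area of a simple polygon via the shoelace formula."""
    total = 0.0
    n = len(points)
    for i in range(n):
        x1, y1 = points[i]
        x2, y2 = points[(i + 1) % n]
        total += x1 * y2 - x2 * y1
    return abs(total) / 2.0


def make_coco(image_name, height, width, label, points):
    """Build a one-image, one-object COCO-style dictionary."""
    xs = [p[0] for p in points]
    ys = [p[1] for p in points]
    # COCO boxes are [x, y, width, height] with (x, y) the top-left corner.
    bbox = [min(xs), min(ys), max(xs) - min(xs), max(ys) - min(ys)]
    return {
        "images": [{"id": 1, "file_name": image_name, "height": height, "width": width}],
        "categories": [{"id": 1, "name": label, "supercategory": "none"}],
        "annotations": [{
            "id": 1,
            "image_id": 1,
            "category_id": 1,
            # Polygons are stored flattened: [x1, y1, x2, y2, ...].
            "segmentation": [[c for p in points for c in p]],
            "bbox": bbox,
            "area": polygon_area(points),
            "iscrowd": 0,
        }],
    }


coco = make_coco("0001.bmp", 100, 120, "part", [[10, 20], [60, 20], [60, 80], [10, 80]])
print(json.dumps(coco["annotations"][0], indent=2))
```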

III. Building Mask R-CNN with Chainer

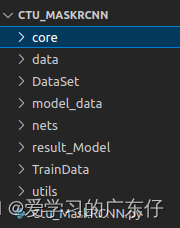

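Project directory structure:

core: source folder with the core model evaluation code

data: source folder with the data loaders and iterators

DataSet: the dataset

model_data: the font files

nets: the Mask R-CNN implementation

result_Model: where trained models are saved

TrainData: where the generated COCO dataset is written

utils: general utility code

Ctu_MaskRCNN.py: the MaskRCNN class and how to call it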

1. Imports

import chainer,sys,os,json,time,cv2,random
sys.path.append('.')
from PIL import Image,ImageFont,ImageDraw
from chainer.training import extensions
from chainer.datasets import TransformDataset
import numpy as np

2. CPU/GPU configuration

# USEGPU is a string: '-1' selects the CPU, otherwise it is the GPU device index.
if USEGPU=='-1':
    self.gpu_devices = -1
else:
    self.gpu_devices = int(USEGPU)

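3. Loading the training dataset

1. COCO dataset

# Note: both splits point at val2017 here; for real training, train_data should use the training annotations.
train_data = COCODataset(DataDir, json_file='instances_val2017.json', name='val2017')
test_data = COCODataset(DataDir, json_file='instances_val2017.json', name='val2017')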

2. Custom dataset

self.OutPutData='./TrainData'
JSON2COCO(DataDir,self.OutPutData,train_split)
train_data = CtuDataset(self.OutPutData, model='train')
test_data = CtuDataset(self.OutPutData, model='val')

4. Getting the class labels

self.class_names = train_data.coco.dataset['categories']
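In the COCO format, `categories` is a list of dictionaries, so `len(self.class_names)` gives the number of foreground classes. The sketch below uses a made-up category list to show how names and ids can be pulled out, and how category ids can be mapped to contiguous indices with `list.index`, the same idea the `Transform` class in this article uses on its `labelids`. The category names and the helper `to_contiguous` are hypothetical.

```python
# A made-up 'categories' list in COCO layout; real ids need not be contiguous.
categories = [
    {"id": 1, "name": "scratch", "supercategory": "defect"},
    {"id": 3, "name": "dent", "supercategory": "defect"},
]

class_ids = [c["id"] for c in categories]
class_names = [c["name"] for c in categories]


def to_contiguous(labels, class_ids):
    """Map COCO category ids to indices 0..n-1 in the order of class_ids."""
    return [class_ids.index(label) for label in labels]


contiguous = to_contiguous([3, 1, 3], class_ids)
print(len(categories), class_names, contiguous)
```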

5. Building the model

mask_rcnn = MaskRCNNResNet(
    n_fg_class=len(self.class_names),
    min_size=self.min_size,
    max_size=self.max_size,
    alpha=self.alpha,
    roi_size=14,
    n_layers=self.n_layers,
    roi_align=True,
    class_ids=self.train_class_ids)
mask_rcnn.use_preset('evaluate')
# Wrap the network in a training chain that computes the losses.
self.model = MaskRCNNTrainChain(mask_rcnn, gamma=1, roi_size=14)
if self.gpu_devices >= 0:
    self.model.to_gpu(self.gpu_devices)

6. Data augmentation

# resize_bbox, flip_bbox, random_flip, flip and resize are assumed to be the
# functions of the same names in chainercv.transforms.
class Transform(object):
    def __init__(self, net, labelids):
        self.net = net
        self.labelids = labelids

    def __call__(self, in_data):
        if len(in_data)==5:
            img, label, bbox, mask, i = in_data
        elif len(in_data)==4:
            img, bbox, label, i= in_data
        # Map category ids to contiguous class indices.
        label = [self.labelids.index(l) for l in label]
        _, H, W = img.shape
        if chainer.config.train:
            img = self.net.prepare(img)
        _, o_H, o_W = img.shape
        scale = o_H / H
        if len(bbox)==0:
            return img, [],[],1
        # Resize boxes and masks to match the prepared image.
        bbox = resize_bbox(bbox, (H, W), (o_H, o_W))
        mask = resize(mask,(o_H, o_W))
        if chainer.config.train:
            # Random horizontal flip, applied consistently to image, boxes and masks.
            img, params = random_flip(img, x_random=True, return_param=True)
            bbox = flip_bbox(bbox, (o_H, o_W), x_flip=params['x_flip'])
            mask = flip(mask, x_flip=params['x_flip'])
        return img, bbox, label, scale, mask, i
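The box arithmetic behind the resize and horizontal flip steps can be sketched in plain Python. This is not the library code; it assumes boxes in (ymin, xmin, ymax, xmax) order and image sizes given as (height, width), and the function names `resize_box` and `flip_box_x` are illustrative.

```python
def resize_box(box, in_size, out_size):
    """Scale a (ymin, xmin, ymax, xmax) box from in_size to out_size (H, W)."""
    y_scale = out_size[0] / in_size[0]
    x_scale = out_size[1] / in_size[1]
    ymin, xmin, ymax, xmax = box
    return (ymin * y_scale, xmin * x_scale, ymax * y_scale, xmax * x_scale)


def flip_box_x(box, size):
    """Mirror a box horizontally inside an image of size (H, W)."""
    _, width = size
    ymin, xmin, ymax, xmax = box
    # The old right edge becomes the new left edge, and vice versa.
    return (ymin, width - xmax, ymax, width - xmin)


box = (10, 20, 50, 80)                               # in a 100x200 image
resized = resize_box(box, (100, 200), (200, 400))    # image doubled in size
flipped = flip_box_x(resized, (200, 400))
print(resized, flipped)
```

Flipping twice returns the original box, which is a quick sanity check when writing augmentation code.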

7. Data iterators

self.train_iter = chainer.iterators.SerialIterator(train_data, batch_size=self.batch_size)

self.test_iter = chainer.iterators.SerialIterator(test_data, batch_size=self.batch_size, repeat=False, shuffle=False)

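8. Training the model

First, let's look at how training is structured in Chainer, as shown in the figure:

As the figure shows, we first set up a Trainer, which can be thought of as the overall training framework. Next comes an Updater, which ties the training data iterator and the optimizer together and runs the forward and backward passes that update the model parameters. Finally there are Extensions, which act as callbacks run at chosen points during training, for example to evaluate the model, adjust the learning rate, or visualize results on the validation set.

So as long as we build the training steps following this figure, there should be no major problems. Let's set things up step by step.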
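The division of labor between the three parts can be illustrated with a toy loop in plain Python. This is a conceptual sketch only, not Chainer's implementation: the classes `ToyUpdater` and `ToyTrainer`, the one-weight "model", and the data are all made up for illustration.

```python
class ToyUpdater:
    """Takes the next batch and applies one optimization step."""

    def __init__(self, batches, step_fn):
        self.batches = batches
        self.step_fn = step_fn
        self.iteration = 0

    def update(self):
        batch = self.batches[self.iteration % len(self.batches)]
        self.step_fn(batch)
        self.iteration += 1


class ToyTrainer:
    """Runs the updater in a loop and calls extensions at their interval."""

    def __init__(self, updater, stop_iteration):
        self.updater = updater
        self.stop_iteration = stop_iteration
        self.extensions = []

    def extend(self, fn, every):
        self.extensions.append((fn, every))

    def run(self):
        while self.updater.iteration < self.stop_iteration:
            self.updater.update()
            for fn, every in self.extensions:
                if self.updater.iteration % every == 0:
                    fn(self)


# "Model": a single weight w fitted to y = 2x by gradient descent.
state = {"w": 0.0}


def sgd_step(batch, lr=0.05):
    # Gradient of the mean squared error (w*x - y)^2 with respect to w.
    grad = sum(2 * (state["w"] * x - y) * x for x, y in batch) / len(batch)
    state["w"] -= lr * grad


log = []
batches = [[(1.0, 2.0), (2.0, 4.0)], [(3.0, 6.0), (0.5, 1.0)]]
trainer = ToyTrainer(ToyUpdater(batches, sgd_step), stop_iteration=200)
# An "extension" that records the weight every 50 iterations.
trainer.extend(lambda t: log.append((t.updater.iteration, state["w"])), every=50)
trainer.run()
print(log[-1])
```

In the real library the trigger can be expressed in epochs or iterations, and the extensions do things like evaluation, logging and snapshotting, as the following steps show.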

1. Optimizer setup

optimizer = chainer.optimizers.Adam()
optimizer.setup(self.model)
optimizer.add_hook(chainer.optimizer.WeightDecay(rate=0.0001))

2. Setting up the updater and trainer

updater = chainer.training.StandardUpdater(self.train_iter, optimizer, device=self.gpu_devices)
trainer = chainer.training.Trainer(updater, (TrainNum, 'epoch'), out=ModelPath)

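3. Setting up extensions

1. Saving the model

# Save a snapshot at the end of every epoch; TrainNum + 1 makes sure the last epoch is included.
trainer.extend(
    chainer.training.extensions.snapshot_object(self.model.mask_rcnn, 'Ctu_final_Model.npz'),
    trigger=chainer.training.triggers.ManualScheduleTrigger([each for each in range(1, TrainNum + 1)], 'epoch')
)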

2. Model validation

# Evaluate on the validation set at the end of every epoch, including the last one.
trainer.extend(
    COCOAPIEvaluator(self.test_iter, self.model.mask_rcnn, self.test_ids, self.cocoanns),
    trigger=chainer.training.triggers.ManualScheduleTrigger([each for each in range(1, TrainNum + 1)], 'epoch')
)

3. Training log and file output

# Log every 0.1 epoch.
log_interval = 0.1, 'epoch'
trainer.extend(chainer.training.extensions.LogReport(filename='ctu_log.json',trigger=log_interval))
trainer.extend(chainer.training.extensions.observe_lr(), trigger=log_interval)
trainer.extend(extensions.dump_graph("main/loss", filename='ctu_net.net'))

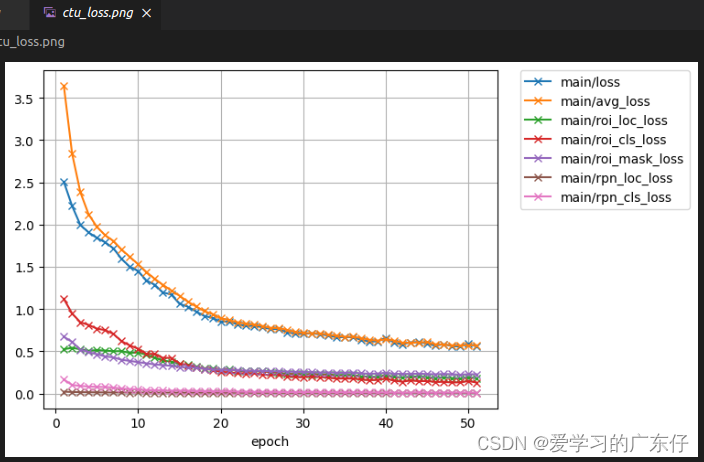

print_loss = [ 'main/loss', 'main/avg_loss', 'main/roi_loc_loss', 'main/roi_cls_loss', 'main/roi_mask_loss', 'main/rpn_loc_loss', 'main/rpn_cls_loss', 'validation/main/loss']
print_acc = ['main/accuracy', 'validation/main/accuracy']
trainer.extend(extensions.PrintReport(['epoch','lr']+print_loss+print_acc), trigger=log_interval)
trainer.extend(extensions.ProgressBar(update_interval=1))
if chainer.training.extensions.PlotReport.available():
    trainer.extend(extensions.PlotReport(print_loss, x_key='epoch', file_name='ctu_loss.png'))
    trainer.extend(extensions.PlotReport(print_acc, x_key='epoch', file_name='ctu_accuracy.png'))

4. Running the training

trainer.run()

9. Model prediction

def predict(self, img_cv):
    start_time = time.time()

    # Convert each OpenCV image (H, W, C in BGR) to a (C, H, W) RGB float32 array.
    pre_img = []
    for each_img in img_cv:
        img = each_img[:, :, ::-1]
        img = img.transpose((2, 0, 1))
        img = img.astype(np.float32)
        pre_img.append(img)
    pre_img = np.array(pre_img)

    bboxes, labels, scores, masks = self.model.predict(pre_img)

    result_value = {"image_result": [], "time": 0, 'num_image': len(img_cv)}
    result_img = []
    for each_img_index in range(len(img_cv)):
        bbox = bboxes[each_img_index]
        label = np.asarray(labels[each_img_index], dtype=np.int32)
        score = scores[each_img_index]
        mask = masks[each_img_index]

        origin_img_pillow = self.cv2_pillow(img_cv[each_img_index])
        font = ImageFont.truetype(font='./model_data/simhei.ttf', size=int(np.floor(3e-2 * np.shape(origin_img_pillow)[1] + 0.5)))
        thickness = max((np.shape(origin_img_pillow)[0] + np.shape(origin_img_pillow)[1]) // self.min_size, 1)
        seg_img = np.zeros((np.shape(img_cv[each_img_index])[0], np.shape(img_cv[each_img_index])[1], 3))
        draw = ImageDraw.Draw(origin_img_pillow)

        for each_target in range(len(bbox)):
            # Draw the bounding box and its "class-score" label.
            top, left, bottom, right = [int(x) for x in bbox[each_target]]
            color = self.colors[int(label[each_target])]
            text = '{}-{:.3f}'.format(self.classes_names[int(label[each_target])], float(score[each_target]))
            # Note: textsize was removed in Pillow 10; newer versions provide textbbox instead.
            label_size = draw.textsize(text, font)
            if top - label_size[1] >= 0:
                text_origin = np.array([left, top - label_size[1]])
            else:
                text_origin = np.array([left, top + 1])
            for i in range(thickness):
                draw.rectangle([left + i, top + i, right - i, bottom - i], outline=color)
            draw.rectangle([tuple(text_origin), tuple(text_origin + label_size)], fill=color)
            draw.text(tuple(text_origin), text, fill=(0, 0, 0), font=font)

            # Paint this instance's mask in a random dark color.
            seg_color = (random.randint(0, 100), random.randint(0, 100), random.randint(0, 100))
            for c in range(3):
                seg_img[:, :, c] += ((mask[each_target] == 1) * seg_color[c]).astype('uint8')
        del draw

        # Overlay the colored masks on the image with the boxes drawn.
        res_img = self.pillow_cv2(origin_img_pillow).astype("uint8")
        seg_img = seg_img.astype("uint8")
        img_add = cv2.addWeighted(res_img, 1.0, seg_img, 0.7, 0)
        result_img.append(img_add)

    result_value['image_result'] = result_img
    result_value['time'] = (time.time() - start_time) * 1000  # milliseconds
    result_value['num_image'] = len(img_cv)
    return result_value
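The final overlay step uses cv2.addWeighted, which computes src1*alpha + src2*beta + gamma per channel and saturates the result to the 0..255 range. The plain-Python sketch below reproduces that arithmetic on a tiny made-up image so the effect of the 1.0 and 0.7 weights is easy to see; the helpers `blend_pixel` and `overlay_mask` and the pixel values are illustrative only.

```python
def blend_pixel(base, overlay, alpha=1.0, beta=0.7, gamma=0.0):
    """Weighted sum of two pixels, rounded and clipped to 0..255 per channel."""
    return tuple(
        min(255, max(0, int(round(b * alpha + o * beta + gamma))))
        for b, o in zip(base, overlay)
    )


def overlay_mask(image, mask, color):
    """Add `color` (weighted) to every pixel where mask == 1."""
    black = (0, 0, 0)
    return [
        [blend_pixel(px, color if m == 1 else black) for px, m in zip(img_row, mask_row)]
        for img_row, mask_row in zip(image, mask)
    ]


# A 2x2 image; the mask covers the top row only.
image = [[(200, 200, 200), (250, 10, 0)],
         [(0, 0, 0), (100, 100, 100)]]
mask = [[1, 1],
        [0, 0]]
result = overlay_mask(image, mask, color=(100, 50, 0))
print(result)
```

Pixels outside the mask are unchanged, while bright pixels inside it saturate at 255, which is why the code above picks mask colors in the darker 0 to 100 range.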

IV. Main Entry Point for Training and Prediction

if __name__ == '__main__':
    # Training (uncomment to train):
    # ctu = Ctu_MaskRCNN(USEGPU='0',min_size=416,max_size=416)
    # ctu.InitModel(r'./DataSet/DataImage',train_split=0.9,batch_size=1,alpha=4,Pre_Model=None)
    # ctu.train(TrainNum=150,learning_rate=0.0001,ModelPath='result_Model')

    # Prediction:
    ctu = Ctu_MaskRCNN(USEGPU='0',min_size=416,max_size=416)
    ctu.LoadModel('./result_Model')
    predictNum=1  # number of images per prediction batch
    predict_cvs = []
    cv2.namedWindow("result", 0)
    cv2.resizeWindow("result", 640, 480)
    for root, dirs, files in os.walk(r'./TrainData/images/val_data'):
        for f in files:
            # Start a new batch once the previous one has been processed.
            if len(predict_cvs) >=predictNum:
                predict_cvs.clear()
            img_cv = cv2.imread(os.path.join(root, f),cv2.IMREAD_COLOR)
            # img_cv = ctu.read_image(os.path.join(root, f))
            if img_cv is None:
                continue
            predict_cvs.append(img_cv)
            if len(predict_cvs) == predictNum:
                result = ctu.predict(predict_cvs)
                print(result['time'])
                for each_id in range(result['num_image']):
                    cv2.imshow("result", result['image_result'][each_id])
                    cv2.waitKey()
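The loop above collects images until it has predictNum of them and then runs one prediction. The same batching pattern can be written as a small generator, sketched here in plain Python with made-up file names; the helper name `batched` is hypothetical. Unlike the loop above, which never predicts on an incomplete final batch, this sketch also yields the leftover items.

```python
def batched(items, batch_size):
    """Yield consecutive lists of up to batch_size items."""
    batch = []
    for item in items:
        batch.append(item)
        if len(batch) == batch_size:
            yield batch
            batch = []
    if batch:
        # Leftover items that did not fill a whole batch.
        yield batch


file_names = ['a.bmp', 'b.bmp', 'c.bmp', 'd.bmp', 'e.bmp']
groups = list(batched(file_names, 2))
print(groups)
```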

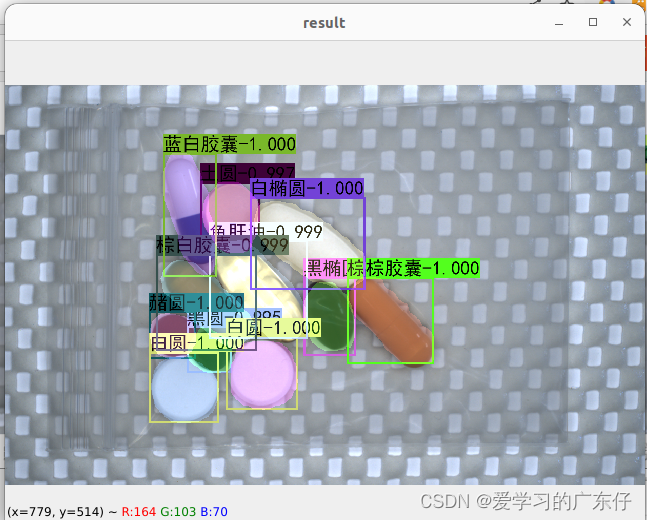

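V. Results

1. Training visualization

2. Prediction results

Summary

This article implemented Mask R-CNN instance segmentation with Chainer.

If you have questions or would like the source code, feel free to message me privately.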
