import matplotlib.pyplot as plt
import torch
from torch.optim.lr_scheduler import LambdaLR
from torchvision.models import AlexNet

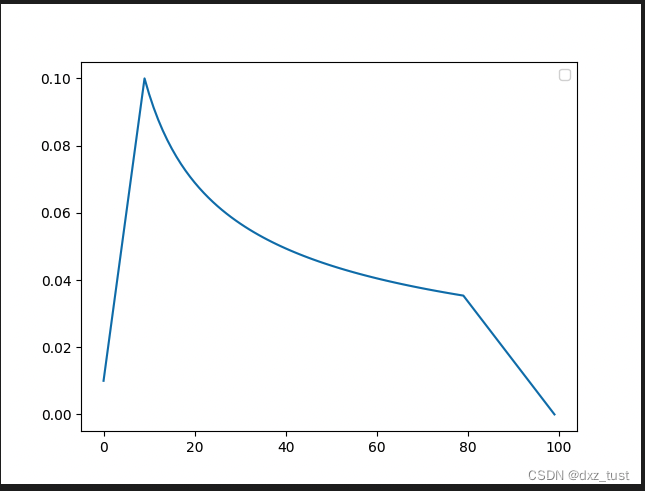

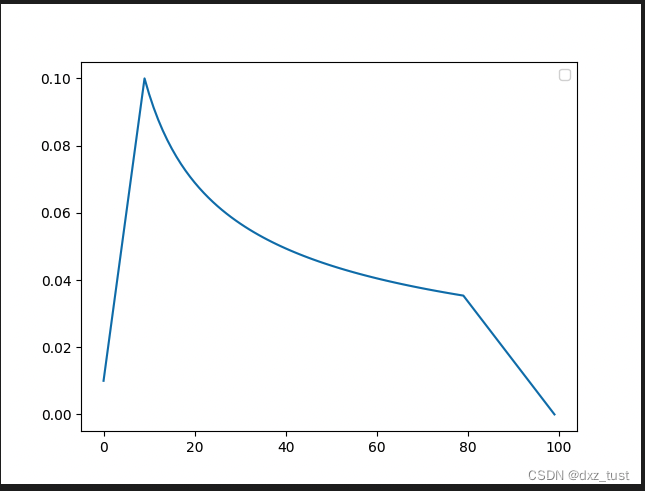

def get_inverse_sqrt_scheduler(optimizer, num_warmup_steps, num_cooldown_steps, num_training_steps):
    """Linear warmup, inverse square root decay, then linear cooldown to 0."""
    # Linearly warm up over the first num_warmup_steps steps.
    lr_step = 1 / num_warmup_steps
    # Then decay proportionally to the inverse square root of the step number.
    decay_factor = num_warmup_steps**0.5
    # Multiplier reached at the point where the cooldown phase starts.
    decayed_lr = decay_factor * (num_training_steps - num_cooldown_steps) ** -0.5

    def lr_lambda(current_step: int):
        if current_step < num_warmup_steps:
            return float(current_step * lr_step)
        elif current_step > (num_training_steps - num_cooldown_steps):
            # Linear cooldown from decayed_lr to 0 over the last num_cooldown_steps steps.
            return max(0.0, float(decayed_lr * (num_training_steps - current_step) / num_cooldown_steps))
        else:
            return float(decay_factor * current_step**-0.5)

    return LambdaLR(optimizer, lr_lambda, last_epoch=-1)

# Define a 2-class classification network.
model = AlexNet(num_classes=2)
lr = 0.1
optimizer = torch.optim.SGD(model.parameters(), lr=lr, momentum=0.9)

# Warm up over the first 10 steps, decay normally over the middle 70 steps, then decay to 0 over the last 20 steps.
scheduler = get_inverse_sqrt_scheduler(optimizer, num_warmup_steps=10, num_cooldown_steps=20, num_training_steps=100)

steps = []
lrs = []

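for epoch in range(10):
    for batch in range(10):
        # No real training happens here; optimizer.step() is called only so that it precedes scheduler.step().
        optimizer.step()
        scheduler.step()
        # get_last_lr() returns the learning rate currently in effect.
        lrs.append(scheduler.get_last_lr()[0])
        # scheduler.step() has already advanced the counter, so this value belongs to step epoch*10+batch+1.
        steps.append(epoch * 10 + batch + 1)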

plt.figure()
plt.plot(steps, lrs, label='inverse_sqrt')
# legend() must come after plot() so that it can find the labeled line.
plt.legend()
plt.savefig("dd.png")
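
To sanity-check the shape of the curve without running PyTorch, the three phases of `lr_lambda` can be reproduced in plain Python. This is only a sketch: `inverse_sqrt_multiplier` is a hypothetical helper introduced here for illustration, and its defaults are copied from the call above (10 warmup steps, 20 cooldown steps, 100 training steps).

```python
def inverse_sqrt_multiplier(step, num_warmup_steps=10, num_cooldown_steps=20, num_training_steps=100):
    """Factor applied to the base learning rate at a given step.

    Hypothetical helper that mirrors lr_lambda without needing an optimizer.
    """
    cooldown_start = num_training_steps - num_cooldown_steps
    decay_factor = num_warmup_steps ** 0.5
    if step < num_warmup_steps:
        # Phase 1: linear warmup from 0 towards 1.
        return step / num_warmup_steps
    if step > cooldown_start:
        # Phase 3: linear cooldown from the decayed value down to 0.
        decayed = decay_factor * cooldown_start ** -0.5
        return max(0.0, decayed * (num_training_steps - step) / num_cooldown_steps)
    # Phase 2: inverse square root decay.
    return decay_factor * step ** -0.5


base_lr = 0.1
for step in (0, 5, 10, 40, 80, 90, 100):
    # Print the effective learning rate at a few representative steps.
    print(step, round(base_lr * inverse_sqrt_multiplier(step), 5))
```

With these settings the factor rises linearly to 1 at step 10, falls to about 0.5 at step 40 (since sqrt(10/40) = 0.5), and reaches 0 at step 100, which should match the saved plot.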
