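This section corresponds to "MXNET Deep Learning Framework 05: Multiclass Logistic Regression from Scratch".

1. Downloading and reading the dataset

This article uses the Fashion-MNIST dataset, which can be downloaded and loaded through TensorFlow: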

    import tensorflow as tf
    from tensorflow import data as tfdata
    from tensorflow.keras.datasets import fashion_mnist

    (x_train, y_train), (x_test, y_test) = fashion_mnist.load_data()
    print(x_train.shape, y_train.shape)

    # Combine the features and labels into datasets, then split them into batches
    batch_size = 256
    train_dataset = tfdata.Dataset.from_tensor_slices((x_train, y_train)).shuffle(x_train.shape[0])
    test_dataset = tfdata.Dataset.from_tensor_slices((x_test, y_test))
    train_iter = train_dataset.batch(batch_size)
    test_iter = test_dataset.batch(batch_size)

Result:

The output shows that the dataset consists of 28×28 single-channel images, with 60,000 training images in total.

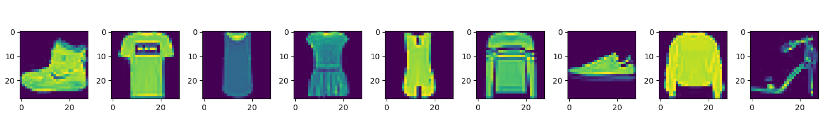
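The shapes printed above, together with `batch_size=256`, determine how many mini-batches one epoch contains. The following is a small sketch of that arithmetic; the variable names `num_train`, `num_batches`, and `last_batch` are illustrative and do not appear in the original code.

```python
import math

num_train = 60000   # number of training images reported by x_train.shape
batch_size = 256    # same value as in the loading code

# Dataset.batch keeps a smaller final batch by default (drop_remainder=False)
num_batches = math.ceil(num_train / batch_size)
last_batch = num_train - (num_batches - 1) * batch_size

print(num_batches, last_batch)
```

Under these assumptions an epoch has 235 batches, and the last one holds only 96 images.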

2. Displaying some images and labels from the dataset

    import matplotlib.pyplot as plt

    def show_image(image):  # display a row of images
        n = image.shape[0]
        _, figs = plt.subplots(1, n, figsize=(15, 15))
        for i in range(n):
            figs[i].imshow(image[i].reshape((28, 28)))
        plt.show()

    def get_fashion_mnist_labels(labels):  # map numeric labels to clothing names
        text_labels = ['t-shirt', 'trouser', 'pullover', 'dress', 'coat',
                       'sandal', 'shirt', 'sneaker', 'bag', 'ankle boot']
        return [text_labels[int(i)] for i in labels]

    show_image(x_train[:9])
    print(get_fashion_mnist_labels(y_train[:9]))

Result:

3. Normalizing the data

    def normlize(data):
        # Scale pixel values from [0, 255] to [0, 1]
        return tf.cast(data, tf.float32) / 255.0

4. Initializing the parameters

    num_inputs = 28 * 28
    num_output = 10

    w = tf.Variable(tf.random.truncated_normal(shape=[num_inputs, num_output], stddev=0.01, dtype=tf.float32))
    b = tf.Variable(tf.random.truncated_normal(shape=[num_output], dtype=tf.float32))

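5. The softmax classifier

In the function defined below, the matrix `logits` has one row per sample and one column per output. To express the predicted probability of each output for a sample, softmax first applies `exp` to every element, then sums the entries in each row of the exponentiated matrix, and finally divides each entry by its row sum. The resulting matrix is non-negative and every row sums to 1, so each row is a valid probability distribution. Any row of the softmax output gives one sample's predicted probabilities over the output classes.

    def softmax(logits, axis=-1):
        return tf.exp(logits) / tf.reduce_sum(tf.exp(logits), axis, keepdims=True)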
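The softmax above exponentiates the raw logits directly, which can overflow when the logits are large. A common variant, not used in the original code, subtracts the row maximum first. Below is a minimal NumPy sketch of that variant; the name `stable_softmax` is hypothetical.

```python
import numpy as np

def stable_softmax(logits, axis=-1):
    # Subtracting the row maximum does not change the result mathematically,
    # but it keeps exp() from overflowing on large logits.
    shifted = logits - np.max(logits, axis=axis, keepdims=True)
    exp = np.exp(shifted)
    return exp / np.sum(exp, axis=axis, keepdims=True)

logits = np.array([[1.0, 2.0, 3.0],
                   [1000.0, 1001.0, 1002.0]])
probs = stable_softmax(logits)
print(probs)
print(probs.sum(axis=1))
```

Both rows give the same distribution, because softmax depends only on the differences between the logits within a row.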

6. Defining the model

    def net(x):
        x = tf.reshape(x, [-1, 28 * 28])  # flatten each image into a vector
        logits = tf.matmul(x, w) + b      # following the formula f(x) = Xw + b
        return softmax(logits)

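7. Defining the cross-entropy loss function

    def cross_entropy(y_hat, y):
        y = tf.cast(tf.reshape(y, shape=[-1, 1]), dtype=tf.int32)
        # One-hot encode the labels so they can act as a mask over y_hat
        y = tf.one_hot(y, depth=y_hat.shape[-1])
        y = tf.cast(tf.reshape(y, shape=[-1, y_hat.shape[-1]]), dtype=tf.int32)
        # Pick the predicted probability of the true class; 1e-8 avoids log(0)
        return -tf.math.log(tf.boolean_mask(y_hat, y) + 1e-8)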
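To make the masking step concrete, here is a small NumPy sketch with made-up probabilities. It shows that selecting entries with a one-hot mask is the same as indexing each row by its true class. The arrays `y_hat` and `y` below are illustrative values, not outputs of the model.

```python
import numpy as np

y_hat = np.array([[0.1, 0.3, 0.6],
                  [0.3, 0.2, 0.5]])  # predicted probabilities, one row per sample
y = np.array([0, 2])                 # true class indices

# Direct indexing: the probability assigned to each sample's true class
picked = y_hat[np.arange(len(y)), y]

# Equivalent one-hot formulation, mirroring the mask-based version above
one_hot = np.eye(y_hat.shape[1])[y]
picked_via_mask = (y_hat * one_hot).sum(axis=1)

loss = -np.log(picked + 1e-8)
print(picked, loss)
```

The first sample gives its true class a probability of only 0.1, so its loss is larger than that of the second sample, which assigns 0.5.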

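8. Computing accuracy

    import numpy as np

    def accuracy(y_hat, y):
        # Fraction of samples whose highest-probability class matches the label
        return np.mean((tf.cast(tf.argmax(y_hat, axis=1), tf.int32) == tf.cast(y, tf.int32)))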

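9. Computing accuracy on the test set

    def evaluate_accuracy(test_iter):
        acc_sum, n = 0.0, 0
        for X, y in test_iter:
            n += 1  # n counts batches, not samples
            y = tf.cast(y, dtype=tf.int32)
            output = net(normlize(X))
            acc_sum += accuracy(output, y)
        return acc_sum / n  # mean of the per-batch accuracies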
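Because `evaluate_accuracy` averages per-batch accuracies, the smaller final batch (10000 mod 256 = 16 test images) carries the same weight as a full batch. The effect is small here, but an alternative is to count correct predictions and samples directly. The sketch below uses toy label arrays and a hypothetical helper `weighted_accuracy` to show the difference.

```python
import numpy as np

def weighted_accuracy(batches):
    """batches: iterable of (predicted_labels, true_labels) array pairs."""
    correct, total = 0, 0
    for pred, true in batches:
        correct += int(np.sum(pred == true))
        total += len(true)
    return correct / total

batches = [
    (np.array([0, 1, 2, 3]), np.array([0, 1, 2, 0])),  # 3 of 4 correct
    (np.array([1]), np.array([0])),                    # 0 of 1 correct
]
per_batch_mean = np.mean([np.mean(p == t) for p, t in batches])
print(weighted_accuracy(batches), per_batch_mean)
```

In this deliberately unbalanced toy case the two numbers differ (0.6 versus 0.375). With 39 full batches and one batch of 16, the gap on the real test set would be far smaller.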

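10. The SGD optimizer

    def sgd(params, lr, batch_size, grads):
        # Note: train() already averages the loss with tf.reduce_mean, so dividing
        # by batch_size again makes the effective learning rate lr / batch_size.
        for i, param in enumerate(params):
            param.assign_sub(lr * grads[i] / batch_size)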
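The division by `batch_size` in `sgd` is meant for gradients of a summed loss. The training loop in this article passes the gradient of a mean loss instead, so the step is scaled down a second time. The toy sketch below illustrates this with a scalar quadratic loss; `sgd_step` and the data are illustrative and not part of the original code.

```python
import numpy as np

def sgd_step(param, grad, lr, batch_size):
    # Same update rule as sgd(): step = lr * grad / batch_size
    return param - lr * grad / batch_size

x = np.array([1.0, 2.0, 3.0, 4.0])  # toy batch
w = 0.0
lr, batch_size = 0.3, len(x)

grad_of_sum = np.sum(w - x)    # gradient of sum_i 0.5 * (w - x_i)^2
grad_of_mean = np.mean(w - x)  # gradient of the mean of the same terms

w_sum = sgd_step(w, grad_of_sum, lr, batch_size)
w_mean = sgd_step(w, grad_of_mean, lr, batch_size)
print(w_sum, w_mean)
```

With the mean loss the step is `batch_size` times smaller (0.1875 instead of 0.75 here). This may be one reason a seemingly large learning rate such as 0.3 still trains stably in this article.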

11. Training

    def train(num_epochs, lr):
        for epoch in range(num_epochs):
            train_l_sum, train_acc_sum, n = 0.0, 0.0, 0
            for X, y in train_iter:
                X = normlize(X)
                n += 1  # number of batches drawn in one epoch
                with tf.GradientTape() as t:
                    t.watch([w, b])
                    l = tf.reduce_mean(cross_entropy(net(X), y))
                # Compute the mini-batch gradients with t.gradient, then update
                # the model parameters with the sgd optimizer
                grads = t.gradient(l, [w, b])
                sgd([w, b], lr, batch_size, grads)
                train_acc_sum += accuracy(net(X), y)
                train_l_sum += l.numpy()
            test_acc = evaluate_accuracy(test_iter)
            print(str(epoch), "train loss:%.6f, train acc:%.5f, test acc:%.5f" % (train_l_sum / n, train_acc_sum / n, test_acc))

Final training results:

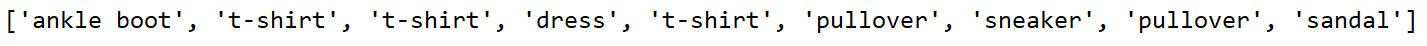

12. Prediction

    def predict():
        image_10, label_10 = x_test[:10], y_test[:10]  # take the first 10 test samples
        show_image(image_10)
        print("True sample labels:", label_10)
        print("Clothing names for the true labels:", get_fashion_mnist_labels(label_10))
        image_10 = normlize(image_10)
        predict_label = tf.argmax(net(image_10), 1).numpy()
        print("Predicted sample labels:", predict_label.astype("int8"))
        print("Clothing names for the predicted labels:", get_fashion_mnist_labels(predict_label))

Prediction results:

As you can see, the prediction accuracy is 90% (there is an element of chance, of course, since only 10 test samples are used).

The complete source code:

    import tensorflow as tf
    from tensorflow.keras.datasets import fashion_mnist
    import matplotlib.pyplot as plt
    import numpy as np
    from tensorflow import data as tfdata

    # 1. Load and read the data
    (x_train, y_train), (x_test, y_test) = fashion_mnist.load_data()
    print(x_train.shape, y_train.shape)

    # Combine the features and labels into datasets, then split them into batches
    batch_size = 256
    train_dataset = tfdata.Dataset.from_tensor_slices((x_train, y_train)).shuffle(x_train.shape[0])
    test_dataset = tfdata.Dataset.from_tensor_slices((x_test, y_test))
    train_iter = train_dataset.batch(batch_size)
    test_iter = test_dataset.batch(batch_size)

    # 2. Display some images
    def show_image(image):  # display a row of images
        n = image.shape[0]
        _, figs = plt.subplots(1, n, figsize=(15, 15))
        for i in range(n):
            figs[i].imshow(image[i].reshape((28, 28)))
        plt.show()

    def get_fashion_mnist_labels(labels):  # map numeric labels to clothing names
        text_labels = ['t-shirt', 'trouser', 'pullover', 'dress', 'coat',
                       'sandal', 'shirt', 'sneaker', 'bag', 'ankle boot']
        return [text_labels[int(i)] for i in labels]

    # show_image(x_train[:9])  # first 9 images
    # print(get_fashion_mnist_labels(y_train[:9]))

    # 3. Normalize the data
    def normlize(data):
        return tf.cast(data, tf.float32) / 255.0

    # 4. Initialize the parameters
    num_inputs = 28 * 28
    num_output = 10
    w = tf.Variable(tf.random.truncated_normal(shape=[num_inputs, num_output], stddev=0.01, dtype=tf.float32))
    b = tf.Variable(tf.random.truncated_normal(shape=[num_output], dtype=tf.float32))

    # 5. Define the softmax classifier
    def softmax(logits, axis=-1):
        return tf.exp(logits) / tf.reduce_sum(tf.exp(logits), axis, keepdims=True)

    # 6. Define the model
    def net(x):
        x = tf.reshape(x, [-1, 28 * 28])
        logits = tf.matmul(x, w) + b  # following the formula f(x) = Xw + b
        return softmax(logits)

    # 7. Define the cross-entropy loss function
    def cross_entropy(y_hat, y):
        y = tf.cast(tf.reshape(y, shape=[-1, 1]), dtype=tf.int32)
        y = tf.one_hot(y, depth=y_hat.shape[-1])
        y = tf.cast(tf.reshape(y, shape=[-1, y_hat.shape[-1]]), dtype=tf.int32)
        return -tf.math.log(tf.boolean_mask(y_hat, y) + 1e-8)

    # 8. Compute accuracy
    def accuracy(y_hat, y):
        return np.mean((tf.cast(tf.argmax(y_hat, axis=1), tf.int32) == tf.cast(y, tf.int32)))

    # 9. Compute accuracy on the test set
    def evaluate_accuracy(test_iter):
        acc_sum, n = 0.0, 0
        for X, y in test_iter:
            n += 1  # n counts batches, not samples
            y = tf.cast(y, dtype=tf.int32)
            output = net(normlize(X))
            acc_sum += accuracy(output, y)
        return acc_sum / n  # mean of the per-batch accuracies

    # 10. SGD optimizer
    def sgd(params, lr, batch_size, grads):
        # Note: train() already averages the loss with tf.reduce_mean, so dividing
        # by batch_size again makes the effective learning rate lr / batch_size.
        for i, param in enumerate(params):
            param.assign_sub(lr * grads[i] / batch_size)

    # 11. Training
    def train(num_epochs, lr):
        for epoch in range(num_epochs):
            train_l_sum, train_acc_sum, n = 0.0, 0.0, 0
            for X, y in train_iter:
                X = normlize(X)
                n += 1  # number of batches drawn in one epoch
                with tf.GradientTape() as t:
                    t.watch([w, b])
                    l = tf.reduce_mean(cross_entropy(net(X), y))
                # Compute the mini-batch gradients with t.gradient, then update
                # the model parameters with the sgd optimizer
                grads = t.gradient(l, [w, b])
                sgd([w, b], lr, batch_size, grads)
                train_acc_sum += accuracy(net(X), y)
                train_l_sum += l.numpy()
            test_acc = evaluate_accuracy(test_iter)
            print("epoch %d, train loss:%.6f, train acc:%.5f, test acc:%.5f" % (epoch, train_l_sum / n, train_acc_sum / n, test_acc))

    # 12. Prediction
    def predict():
        image_10, label_10 = x_test[:10], y_test[:10]  # take the first 10 test samples
        show_image(image_10)
        print("True sample labels:", label_10)
        print("Clothing names for the true labels:", get_fashion_mnist_labels(label_10))
        image_10 = normlize(image_10)
        predict_label = tf.argmax(net(image_10), 1).numpy()
        print("Predicted sample labels:", predict_label.astype("int8"))
        print("Clothing names for the predicted labels:", get_fashion_mnist_labels(predict_label))

    if __name__ == "__main__":
        train(50, 0.3)
        predict()  # run prediction
