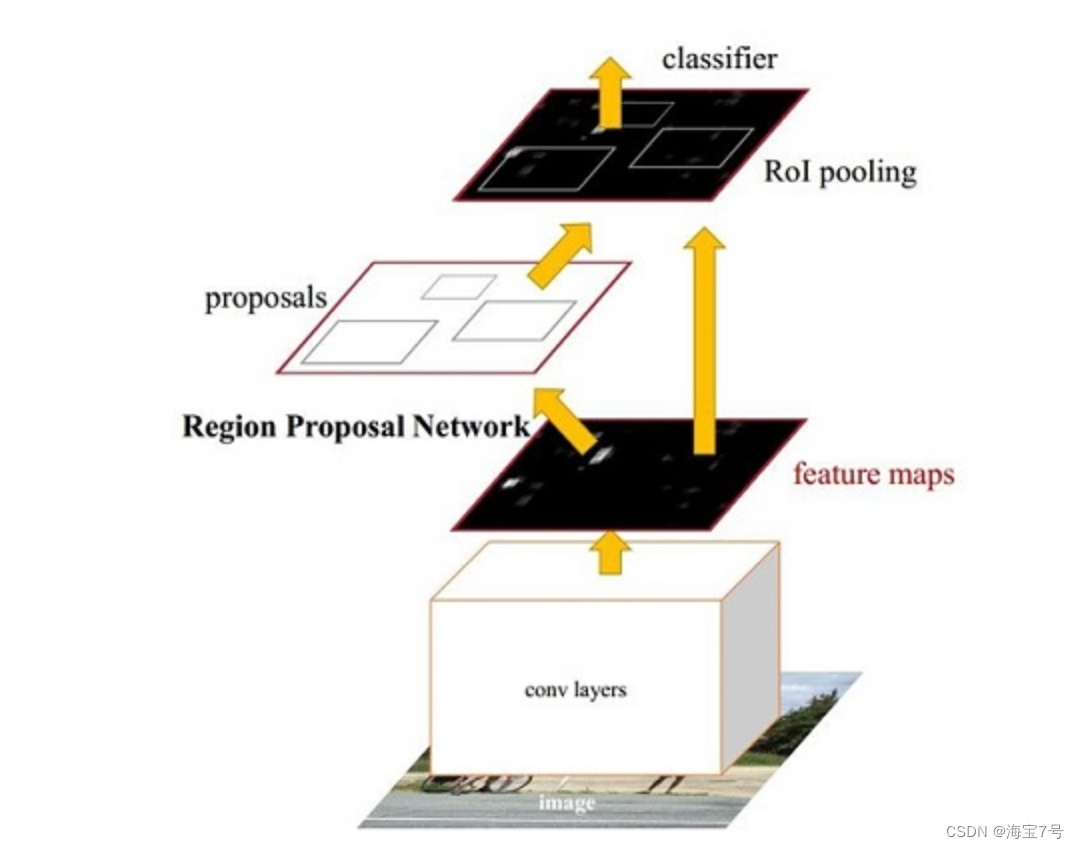

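Faster R-CNN was proposed by Shaoqing Ren, Kaiming He, Ross B. Girshick, and Jian Sun in 2015. Structurally, it integrates feature extraction, proposal generation, bounding-box regression (box refinement), and classification into a single network, which improves overall performance and, most noticeably, detection speed.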

Faster R-CNN

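Faster R-CNN is widely regarded as the high point of the R-CNN family and the classic two-stage object detection algorithm.

Processing happens in two stages:

The first stage finds candidate boxes for objects in the image by classifying each anchor box as either background or object.

The second stage classifies the object inside each candidate box and refines the box.

The model correspondingly has two modules: a deep fully convolutional region proposal network (RPN) that generates candidate regions, and a Fast R-CNN detector that uses the RPN's candidate regions for classification and bounding-box regression.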
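To make the idea of anchors concrete, here is a minimal sketch in base MATLAB that builds the anchor boxes centered on one sliding-window position. The three scales and three aspect ratios follow the defaults described in the Faster R-CNN paper; the center point and all variable names are assumptions made up for illustration and are not part of the example later in this post.

```matlab
% Anchor boxes for a single sliding-window position (illustrative values).
scales = [128 256 512];      % side length of the square anchor, in pixels
ratios = [0.5 1 2];          % aspect ratio, height / width
center = [300 200];          % hypothetical [x y] center in image coordinates

anchors = zeros(numel(scales) * numel(ratios), 4);   % rows are [x y w h]
row = 0;
for s = scales
    for r = ratios
        w = s / sqrt(r);     % keep the area at s^2 while changing the shape
        h = s * sqrt(r);
        row = row + 1;
        anchors(row, :) = [center(1) - w/2, center(2) - h/2, w, h];
    end
end

disp(size(anchors, 1))       % number of anchors at this position
```

The RPN scores every such anchor as object or background and regresses an offset for it, which is the first-stage binary classification described above.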

References:

https://zhuanlan.zhihu.com/p/31426458

https://zhuanlan.zhihu.com/p/82185598

https://blog.csdn.net/fengbingchun/article/details/87195597

https://blog.csdn.net/weixin_43198141/article/details/90178512

Original paper: https://arxiv.org/abs/1506.01497

Example (someone else's work):

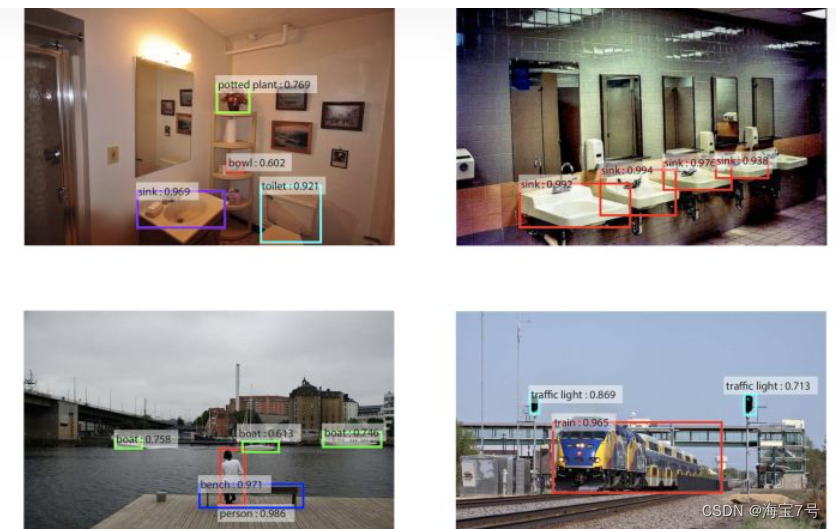

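Set the details aside for now. This is a deep learning detection application, so look at the results first.

Begin with the end in mind.

Quoted description: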

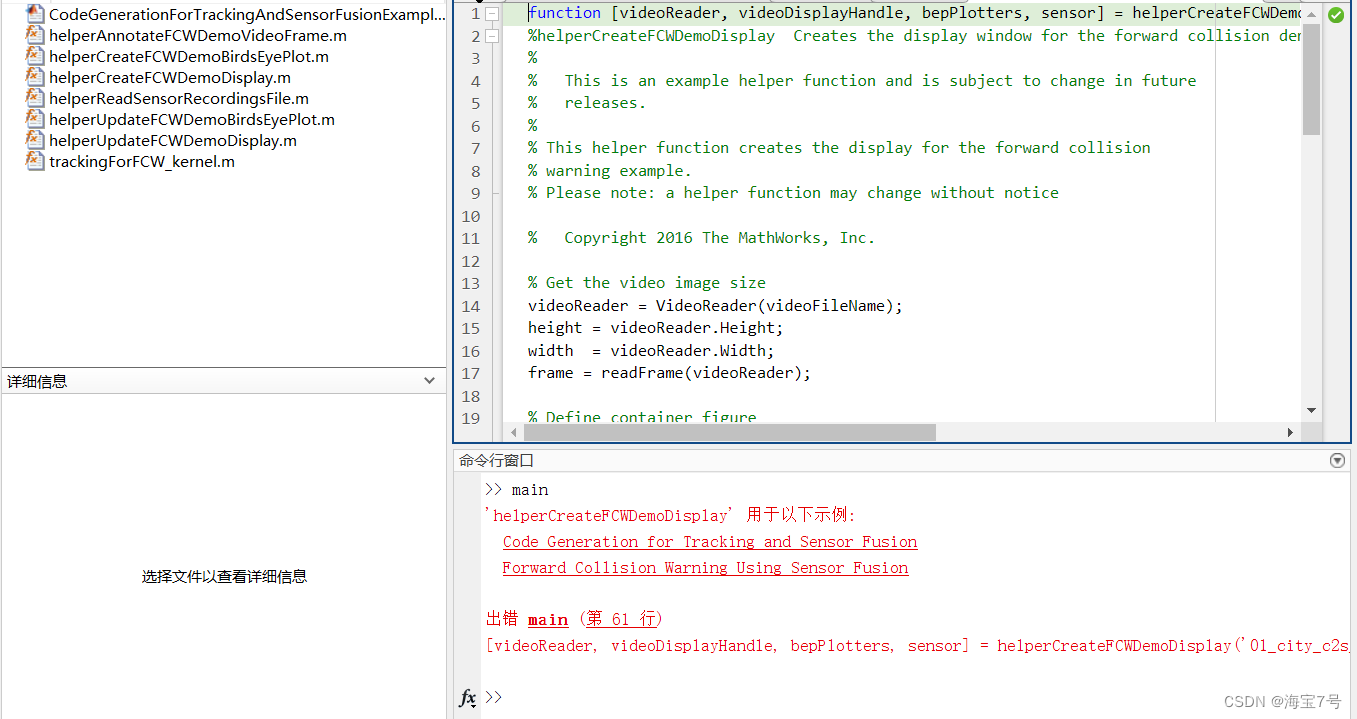

%% Forward Collision Warning Using Sensor Fusion

% This example shows how to perform forward collision warning by fusing

% data from vision and radar sensors to track objects in front of the

% vehicle.

%

%% Overview

% Forward collision warning (FCW) is an important feature in driver

% assistance and automated driving systems, where the goal is to provide

% correct, timely, and reliable warnings to the driver before an impending

% collision with the vehicle in front. To achieve the goal, vehicles are

% equipped with forward-facing vision and radar sensors. Sensor fusion is

% required to increase the probability of accurate warnings and minimize

% the probability of false warnings.

%

% For the purposes of this example, a test car (the ego vehicle) was

% equipped with various sensors and their outputs were recorded. The

% sensors used for this example were:

%

% # Vision sensor, which provided lists of observed objects with their

% classification and information about lane boundaries. The object lists

% were reported 10 times per second. Lane boundaries were reported 20

% times per second.

% # Radar sensor with medium and long range modes, which provided lists of

% unclassified observed objects. The object lists were reported 20 times

% per second.

% # IMU, which reported the speed and turn rate of the ego vehicle 20 times

% per second.

% # Video camera, which recorded a video clip of the scene in front of the

% car. Note: This video is not used by the tracker and only serves to

% display the tracking results on video for verification.

%

% The process of providing a forward collision warning comprises the

% following steps:

%

% # Obtain the data from the sensors.

% # Fuse the sensor data to get a list of tracks, i.e., estimated

% positions and velocities of the objects in front of the car.

% # Issue warnings based on the tracks and FCW criteria. The FCW criteria

% are based on the Euro NCAP AEB test procedure and take into account the

% relative distance and relative speed to the object in front of the car.

%

% For more information about tracking multiple objects, see

% <matlab:helpview(fullfile(docroot,'toolbox','vision','vision.map'),'multipleObjectTracking') Multiple Object Tracking>.

%

% The visualization in this example is done using

% <matlab:doc('monoCamera'), monoCamera> and <matlab:doc('birdsEyePlot')

% birdsEyePlot>. For brevity, the functions that create and update the

% display were moved to helper functions outside of this example. For more

% information on how to use these displays, see

% <UsingMonoCameraToDisplayObjectOnVideoExample.html Annotate Video Using

% Detections in Vehicle Coordinates> and <BirdsEyePlotExample.html

% Visualize Sensor Coverage, Detections, and Tracks>.

%

% This example is a script, with the main body shown here and helper

% routines in the form of

% <matlab:helpview(fullfile(docroot,'toolbox','matlab','matlab_prog','matlab_prog.map'),'matlab_prog_localfunctions_livescripts') local functions>

% in the sections that follow.


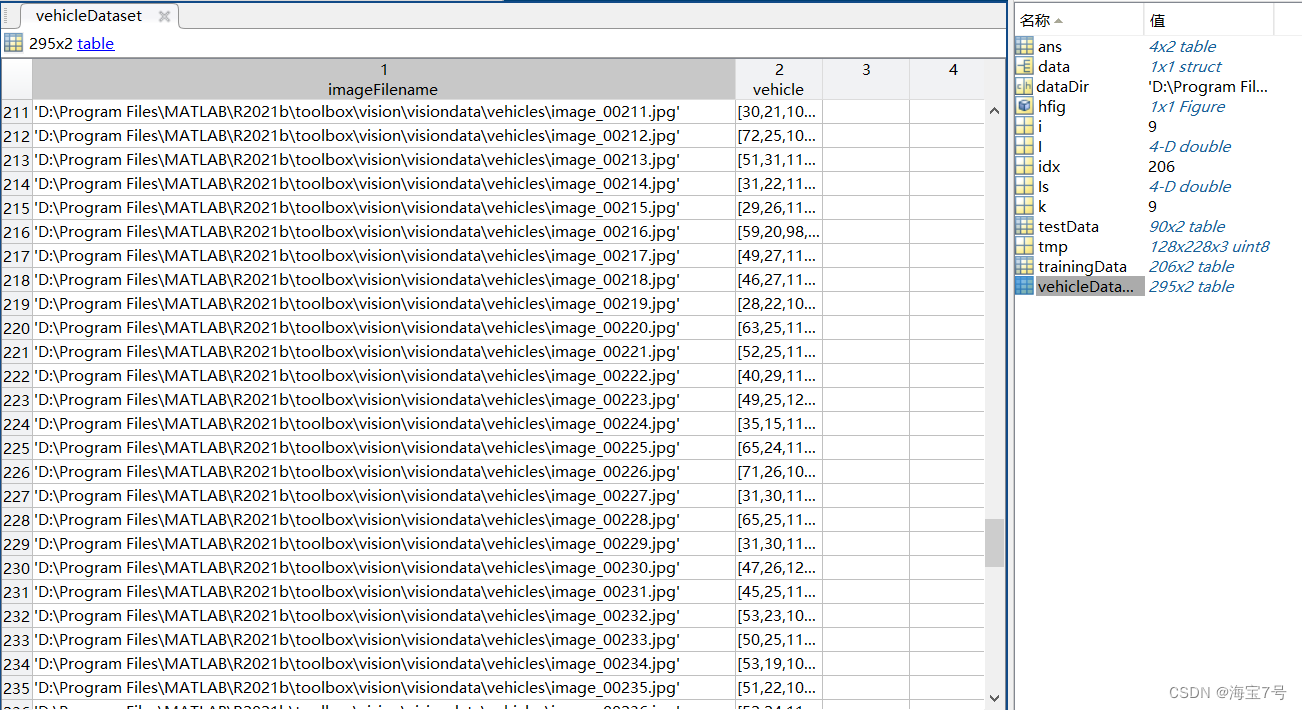

Output table (4×2):

| imageFilename | vehicle ([x y width height]) |
| --- | --- |
| 'vehicles/image_00001.jpg' | [126 78 20 16] |
| 'vehicles/image_00002.jpg' | [100 72 35 26] |
| 'vehicles/image_00003.jpg' | [62 69 26 19] |
| 'vehicles/image_00004.jpg' | [71 64 22 21] |

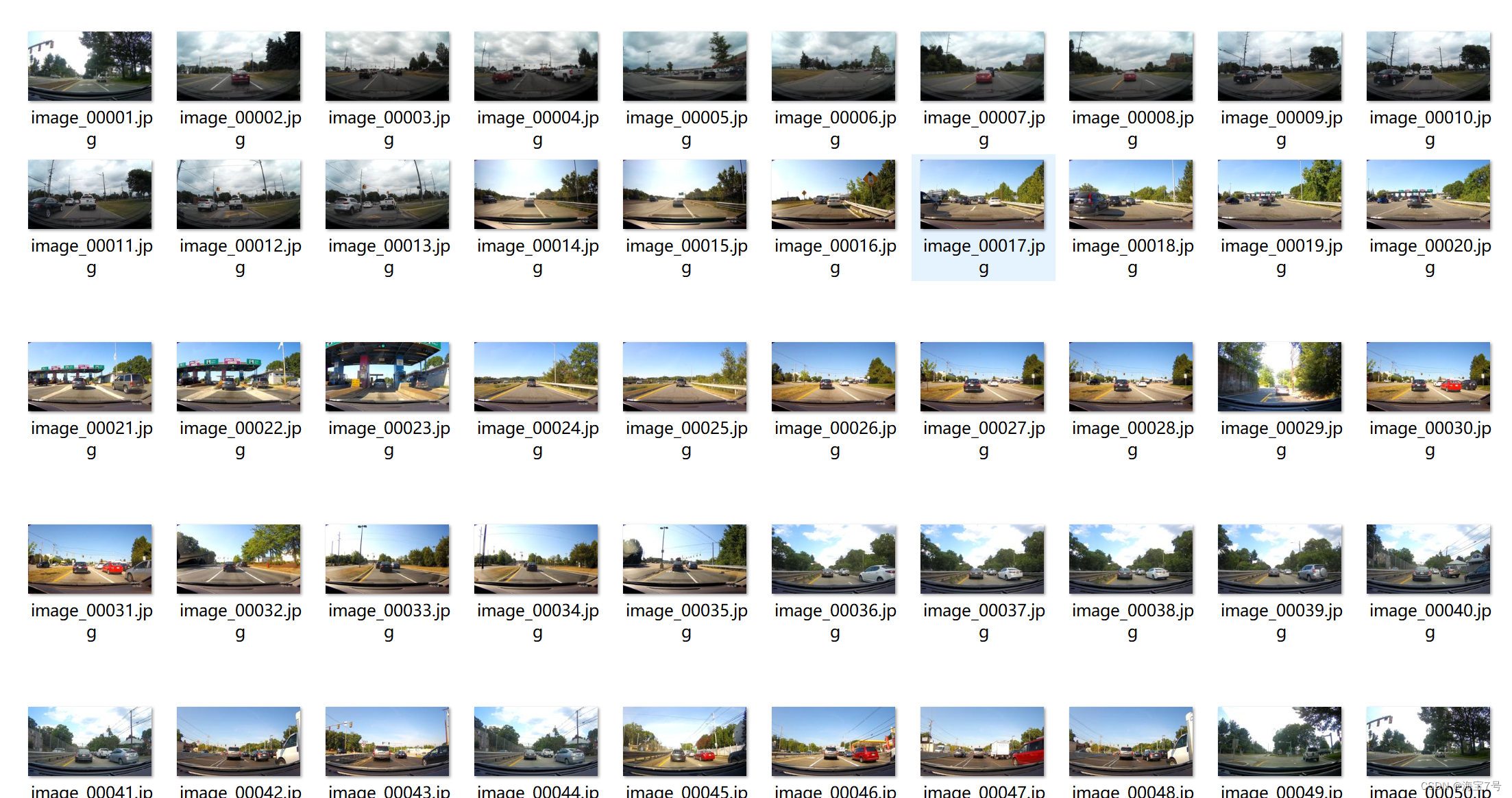

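Vehicle image files:

Dataset:

Load the data

% vehicleDataset is a table: the first column holds the relative image paths and the second holds the vehicle bounding boxes in each image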

data = load('fasterRCNNVehicleTrainingData.mat');

vehicleDataset = data.vehicleTrainingData;

% Display the first four rows

vehicleDataset(1:4,:)

% Prepend the dataset directory to the image file names

dataDir = fullfile(toolboxdir('vision'),'visiondata');

vehicleDataset.imageFilename = fullfile(dataDir, vehicleDataset.imageFilename);

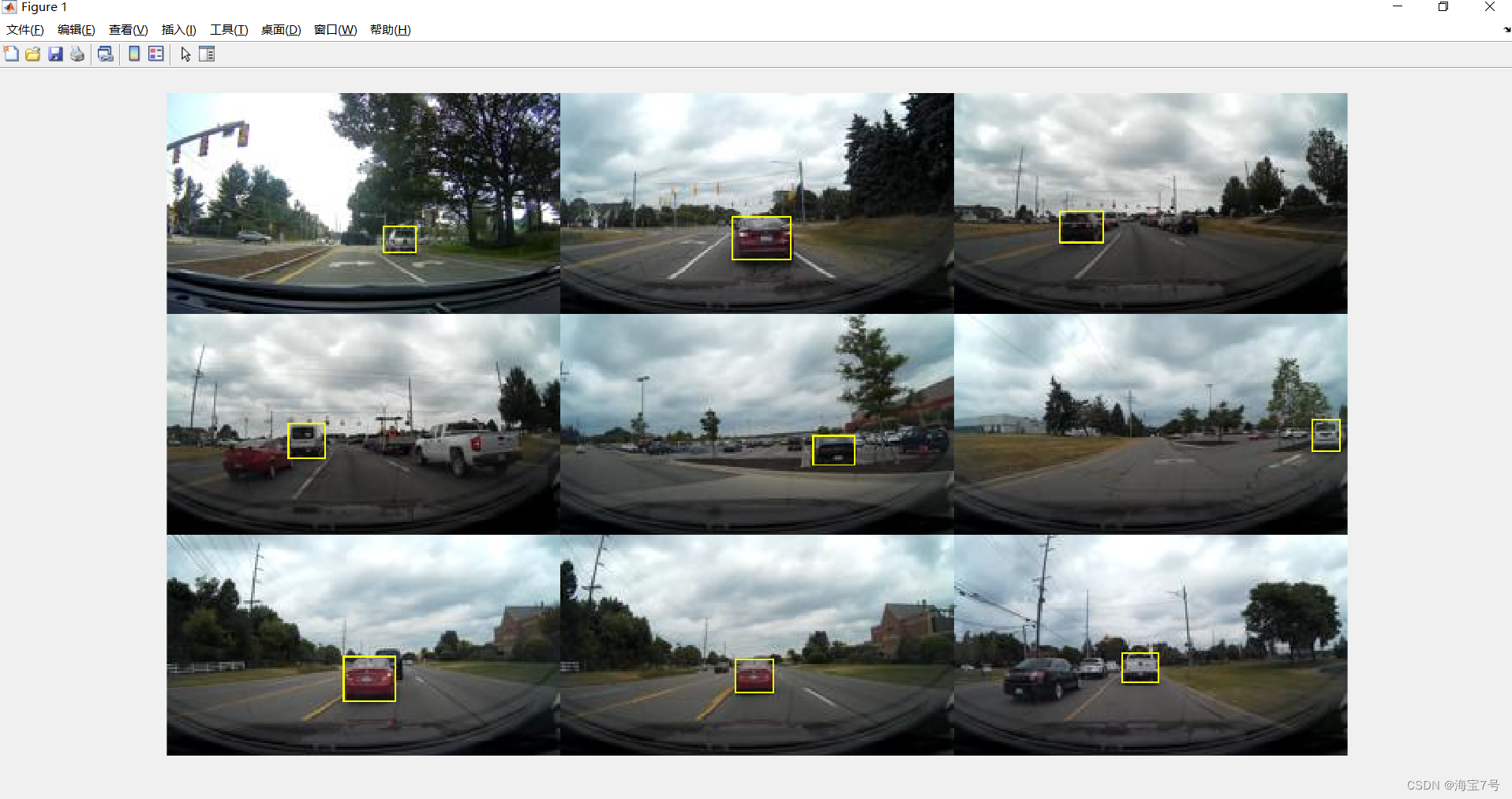

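% Show the first 9 images

k = 9;

% Preallocate; this assumes 128-by-228 RGB images

I = zeros(128,228,3,k);

for i = 1 : k

% Read the image

tmp = imread(vehicleDataset.imageFilename{i});

% Draw the ground-truth box

tmp = insertShape(tmp, 'Rectangle', vehicleDataset.vehicle{i});

% Convert to doubles scaled to [0,1] for montage

I(:,:,:,i) = mat2gray(tmp);

end

% Display as a montage

hfig = figure; montage(I);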

set(hfig, 'Units', 'Normalized', 'Position', [0, 0, 1, 1]);

pause(1);

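% Split the data into two parts

% The first 70% of the rows are used for training and the remaining 30% for testing

idx = floor(0.7 * height(vehicleDataset));

trainingData = vehicleDataset(1:idx,:);

% Start at idx+1 so that row idx does not appear in both sets

testData = vehicleDataset(idx+1:end,:);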
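The split here takes rows in file order. If neighboring images come from the same video sequence, a shuffled split is often the safer choice. A minimal sketch, using a small stand-in table (10 hypothetical rows, variable names invented) so it runs without the dataset:

```matlab
% Stand-in for vehicleDataset: 10 hypothetical rows.
T = table((1:10)', 'VariableNames', {'id'});

rng(0);                                   % reproducible shuffle
shuffled = randperm(height(T));           % random permutation of row indices
idx = floor(0.7 * height(T));             % 7 rows go to training

trainRows = T(shuffled(1:idx), :);
testRows  = T(shuffled(idx+1:end), :);    % remaining 3 rows, no overlap
```

The same two indexing lines should carry over to vehicleDataset unchanged, since it is also a table.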

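Design the CNN architecture

% Input layer; the smallest object to detect is about 32-by-32 pixels

inputLayer = imageInputLayer([32 32 3]);

% Middle layers

% Convolutional layer parameters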

filterSize = [3 3];

numFilters = 32;

middleLayers = [

% Convolution + ReLU

convolution2dLayer(filterSize, numFilters, 'Padding', 1)

reluLayer()

% Convolution + ReLU + max pooling

convolution2dLayer(filterSize, numFilters, 'Padding', 1)

reluLayer()

maxPooling2dLayer(3, 'Stride',2)

];

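% Final layers

finalLayers = [

% Fully connected layer with 64 outputs

fullyConnectedLayer(64)

% ReLU nonlinearity

reluLayer

% Final fully connected layer: one output per object class plus one for background.

% width(vehicleDataset) is 2 here (a file name column and one class column), which matches vehicle + background

fullyConnectedLayer(width(vehicleDataset))

% Softmax and classification layers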

softmaxLayer

classificationLayer

];

% Combine all layers

layers = [

inputLayer

middleLayers

finalLayers

]
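As a quick check on these layer definitions, the spatial size after a convolution or pooling layer follows out = floor((in + 2*pad - kernel)/stride) + 1. The sketch below applies that formula to the 32-by-32 input, assuming the default stride of 1 for the convolutions and no padding for the pooling layer. The helper name outSize is made up for illustration.

```matlab
% Spatial output size of a conv/pool layer (square inputs and kernels).
outSize = @(in, kernel, pad, stride) floor((in + 2*pad - kernel) / stride) + 1;

n = 32;                      % input height/width
n = outSize(n, 3, 1, 1);     % first 3x3 convolution, padding 1, stride 1
n = outSize(n, 3, 1, 1);     % second 3x3 convolution, same settings
n = outSize(n, 3, 0, 2);     % 3x3 max pooling, stride 2, no padding

% The channel count equals numFilters (32) from the listing.
fprintf('Feature map: %d x %d x %d\n', n, n, 32);
```

Padding of 1 with a 3-by-3 kernel keeps the convolutions size-preserving, so only the pooling layer shrinks the feature map.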

Configure the training options

options = [

% Step 1: train a region proposal network (RPN)

trainingOptions('sgdm', 'MaxEpochs', 10,'InitialLearnRate', 1e-5,'CheckpointPath', tempdir)

% Step 2: train a Fast R-CNN network using the RPN from step 1

trainingOptions('sgdm', 'MaxEpochs', 10,'InitialLearnRate', 1e-5,'CheckpointPath', tempdir)

% Step 3: retrain the RPN using weights shared with Fast R-CNN

trainingOptions('sgdm', 'MaxEpochs', 10,'InitialLearnRate', 1e-6,'CheckpointPath', tempdir)

% Step 4: retrain Fast R-CNN using the updated RPN

trainingOptions('sgdm', 'MaxEpochs', 10,'InitialLearnRate', 1e-6,'CheckpointPath', tempdir)

];

Train the network locally

doTrainingAndEval = true;

if doTrainingAndEval

% Fix the random seed for reproducibility, then train the detector

rng(0);

detector = trainFasterRCNNObjectDetector(trainingData, layers, options, ...

'NegativeOverlapRange', [0 0.3], ...

'PositiveOverlapRange', [0.6 1], ...

'BoxPyramidScale', 1.2);

else

% Otherwise load the pretrained detector from the data file

detector = data.detector;

end
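The two overlap ranges passed to the trainer refer to intersection over union (IoU) between a candidate box and a ground-truth box. With the settings above, candidates whose IoU falls in [0.6 1] are used as positive samples and those in [0 0.3] as negatives. Below is a minimal IoU sketch for [x y width height] boxes in base MATLAB. The Computer Vision Toolbox function bboxOverlapRatio computes this kind of ratio for you; the candidate box here is invented for illustration.

```matlab
gt   = [100 72 35 26];       % a ground-truth box from the table shown earlier
cand = [105 75 35 26];       % hypothetical candidate box

% Width and height of the intersection rectangle (zero if disjoint).
iw = max(0, min(gt(1)+gt(3), cand(1)+cand(3)) - max(gt(1), cand(1)));
ih = max(0, min(gt(2)+gt(4), cand(2)+cand(4)) - max(gt(2), cand(2)));

inter = iw * ih;                                  % intersection area
uni   = gt(3)*gt(4) + cand(3)*cand(4) - inter;    % union area
iou   = inter / uni;

isPositive = iou >= 0.6;     % inside PositiveOverlapRange [0.6 1]
isNegative = iou <= 0.3;     % inside NegativeOverlapRange [0 0.3]
fprintf('IoU = %.2f\n', iou);
```

Candidates that fall between the two ranges are neither positive nor negative and are left out of training.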

Test results:

I = imread('highway.png');

% Run the detector; it returns the box locations and their scores

[bboxes, scores] = detect(detector, I);

% Mark the detected vehicles on the image

I = insertObjectAnnotation(I, 'rectangle', bboxes, scores);

figure

imshow(I)
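The detector can return low-confidence boxes, so it is common to keep only detections above a score threshold before drawing them. A minimal sketch with made-up boxes and scores standing in for the detector output; the 0.5 threshold is an arbitrary assumption, not a value from this experiment:

```matlab
% Stand-ins for the outputs of detect (values invented for illustration).
bboxes = [126 78 20 16; 100 72 35 26; 62 69 26 19];
scores = [0.95; 0.40; 0.72];

minScore = 0.5;                       % assumed threshold, tune per application
keep = scores >= minScore;            % logical mask over detections

bboxes = bboxes(keep, :);             % drop the rows below the threshold
scores = scores(keep);
```

The filtered bboxes and scores can then be passed to insertObjectAnnotation exactly as in the listing.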

Evaluation and analysis:


%% Evaluate the trained detector

if doTrainingAndEval

resultsStruct = struct([]);

for i = 1:height(testData)

% Read the test image

I = imread(testData.imageFilename{i});

% Run the Faster R-CNN detector

[bboxes, scores, labels] = detect(detector, I);

% Store the results in a struct array

resultsStruct(i).Boxes = bboxes;

resultsStruct(i).Scores = scores;

resultsStruct(i).Labels = labels;

end

% Convert the struct array to a table

results = struct2table(resultsStruct);

else

% Load previously computed evaluation results

results = data.results;

end

% Extract the expected vehicle locations from the test data

expectedResults = testData(:, 2:end);
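The listing stops once the ground truth is extracted. The usual next step is to compare detections with ground truth and summarize the result as precision, recall, and average precision. On real detections the Computer Vision Toolbox function evaluateDetectionPrecision(results, expectedResults) should do this. The sketch below shows the underlying arithmetic on toy values in base MATLAB, using one common (non-interpolated) definition of average precision. All numbers are invented, and the toolbox function may differ in detail.

```matlab
% Toy detections: confidence scores and whether each one matched a
% ground-truth box (values invented for illustration).
scores  = [0.9 0.8 0.7 0.6 0.5];
isMatch = [1   1   0   1   0];
numGroundTruth = 4;                       % total ground-truth boxes

[~, order] = sort(scores, 'descend');     % rank detections by confidence
hits = isMatch(order);
tp = cumsum(hits);                        % true positives so far
fp = cumsum(1 - hits);                    % false positives so far

precision = tp ./ (tp + fp);
recall    = tp ./ numGroundTruth;

% Average precision: sum the precision at each rank where recall
% increases, divided by the number of ground-truth boxes.
ap = sum(precision(hits == 1)) / numGroundTruth;
fprintf('AP = %.4f\n', ap);
```

Plotting recall against precision gives the precision-recall curve that is usually reported alongside the average precision.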

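This experiment used 206 training images and roughly 90 test images, so the approach suits research and applications with small sample sets. I tested it myself and it works, but there is plenty of room for improvement: hyperparameter tuning, upgrading the software environment, and trying other CNN backbones.

Preliminary detection results:

Awkward.

Applied to a real scene, processing a video clip may still hit small bugs.

I did not have time to test the second program folder.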

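Companion source files for this article

Vehicle detection with deep learning based on Faster R-CNN

Focuses on dataset analysis and on training and test analysis. Tested and working.

Download: link

Hands-on autonomous driving application project based on computer vision.

Mainly an application to a real scene. Processing a video clip may have small bugs. Not yet tested, pending revision.

Download 2: link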
