Scheduling data in a Redshift database with Airflow

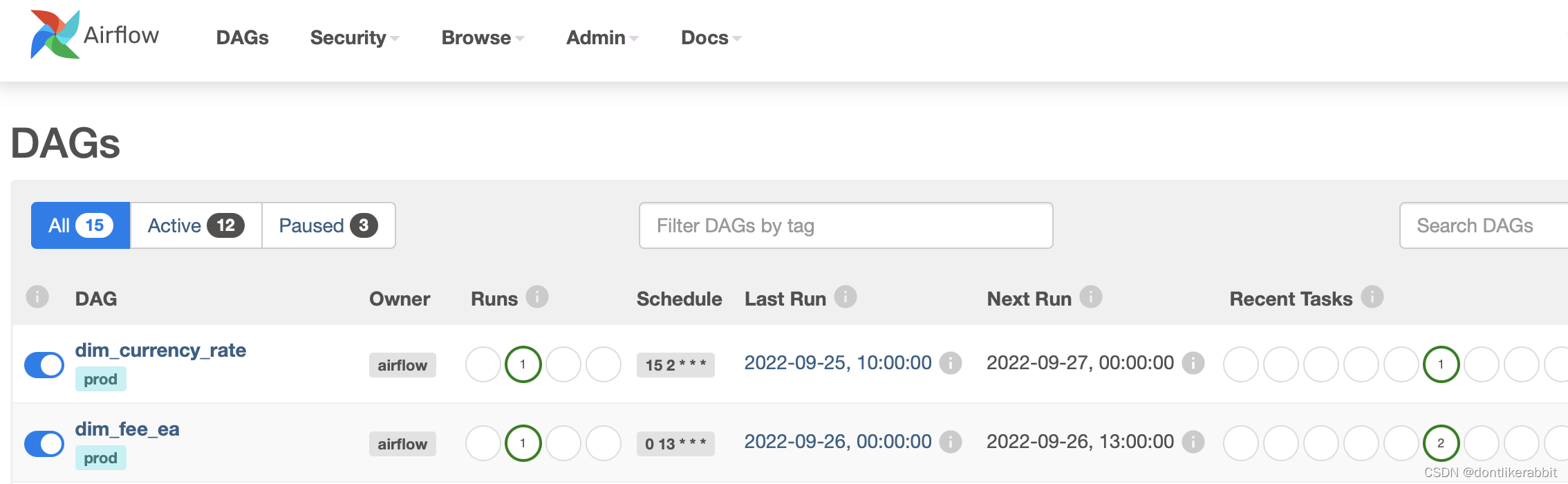

Airflow DAG scheduling

Python file:

from datetime import datetime, timedelta

import pendulum
from airflow import DAG
# Note: this import path depends on the installed Amazon provider version;
# in newer releases the operator lives in
# airflow.providers.amazon.aws.operators.redshift_sql, so check your version.
from airflow.providers.amazon.aws.operators.redshift import RedshiftSQLOperator

# Create a Pendulum timezone object for your local timezone.
local_tz = pendulum.timezone("Asia/Shanghai")

default_args = {
    "owner": "airflow",
    "depends_on_past": False,
    # Set the DAG's timezone by passing a timezone-aware start date.
    "start_date": datetime(2022, 9, 26, tzinfo=local_tz),
    "email": ["airflow@airflow.com"],
    "email_on_failure": False,
    "email_on_retry": False,
    "retries": 1,
    "retry_delay": timedelta(minutes=5),
}

with DAG(
    dag_id="dim_fee_ea",  # change to suit your own setup
    default_args=default_args,
    schedule_interval='0 13 * * *',  # cron expression; change to suit your own setup
    catchup=False,
    tags=['prod'],
) as dag:

    fee_ea_dim_init = RedshiftSQLOperator(
        task_id='fee_ea_dim_init',  # change the values below to suit your own setup
        redshift_conn_id='redshift_conn_id',
        # Path to the SQL file, relative to the DAG file; change to suit your own setup.
        sql='sql/init_dim_fee_ea.sql',
    )

    fee_ea_dev_mysql2dim = RedshiftSQLOperator(
        task_id='fee_ea_dev_mysql2dim',
        redshift_conn_id='redshift_conn_id',
        sql='sql/dev_dim_fee_ea.sql',
    )

    # Task dependency: run fee_ea_dim_init first, then fee_ea_dev_mysql2dim.
    fee_ea_dim_init >> fee_ea_dev_mysql2dim
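
To make the schedule concrete: the DAG uses the cron expression `0 13 * * *` together with a start date in Asia/Shanghai. Assuming Airflow evaluates the cron expression in the DAG's timezone (taken from the timezone-aware `start_date`), the run fires at 13:00 Shanghai time, which is 05:00 UTC, the timezone the Airflow UI shows by default. The sketch below uses only the Python standard library and is not Airflow's scheduler; `next_daily_run` is a hypothetical helper, and a fixed UTC+8 offset stands in for Asia/Shanghai.

```python
from datetime import datetime, timedelta, timezone

# Assumption: a fixed UTC+8 offset stands in for Asia/Shanghai,
# which currently observes no daylight saving time.
SHANGHAI = timezone(timedelta(hours=8), name="Asia/Shanghai")


def next_daily_run(now: datetime, hour: int = 13, minute: int = 0) -> datetime:
    """Return the next Shanghai wall-clock time matching 'minute hour * * *'.

    Hypothetical helper for illustration only: it handles just the daily
    pattern used in this DAG, not general cron expressions.
    """
    local_now = now.astimezone(SHANGHAI)
    candidate = local_now.replace(hour=hour, minute=minute, second=0, microsecond=0)
    if candidate <= local_now:
        # Today's slot has already passed, so move to tomorrow.
        candidate += timedelta(days=1)
    return candidate


if __name__ == "__main__":
    now = datetime(2022, 9, 26, 9, 30, tzinfo=SHANGHAI)
    run_local = next_daily_run(now)
    print(run_local.isoformat())                            # 2022-09-26T13:00:00+08:00
    print(run_local.astimezone(timezone.utc).isoformat())   # 2022-09-26T05:00:00+00:00
```

If the run times in the UI look eight hours off, this UTC conversion is the likely explanation rather than a wrong cron expression.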

SQL files

-- Contents of sql/init_dim_fee_ea.sql (run by task fee_ea_dim_init)
truncate dev.dim.dim_fee_ea;

-- Contents of sql/dev_dim_fee_ea.sql (run by task fee_ea_dev_mysql2dim)
insert into dev.dim.dim_fee_ea
select * from dev.mysql_ustp_data_ow_finance.bi_tp_efs_fee_ea
where fetch_date = CURRENT_DATE;
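
Taken together, the two SQL files implement a truncate-and-reload (full refresh) pattern: empty the target table, then copy in today's rows. One useful property is that re-running both steps yields the same table contents instead of duplicates. The following is a minimal sketch of that behavior using Python's built-in `sqlite3` as a stand-in for Redshift. The table names `src` and `dim` and the helpers `make_demo_db` and `run_pipeline` are hypothetical, and `DELETE FROM` replaces `TRUNCATE`, which SQLite does not support.

```python
import sqlite3

# Stand-ins for the two SQL files (assumption: simplified table names).
INIT_SQL = "DELETE FROM dim"  # plays the role of: truncate dev.dim.dim_fee_ea
LOAD_SQL = "INSERT INTO dim SELECT * FROM src WHERE fetch_date = CURRENT_DATE"


def make_demo_db() -> sqlite3.Connection:
    """Create an in-memory database with two rows for today and one old row."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE src (id INTEGER, fee REAL, fetch_date TEXT)")
    conn.execute("CREATE TABLE dim (id INTEGER, fee REAL, fetch_date TEXT)")
    conn.execute("INSERT INTO src VALUES (1, 10.0, CURRENT_DATE)")
    conn.execute("INSERT INTO src VALUES (2, 20.0, CURRENT_DATE)")
    conn.execute("INSERT INTO src VALUES (3, 30.0, '2000-01-01')")
    conn.commit()
    return conn


def run_pipeline(conn: sqlite3.Connection) -> int:
    """Run the init step, then the load step; return the row count in dim."""
    conn.execute(INIT_SQL)
    conn.execute(LOAD_SQL)
    conn.commit()
    return conn.execute("SELECT COUNT(*) FROM dim").fetchone()[0]


if __name__ == "__main__":
    conn = make_demo_db()
    print(run_pipeline(conn))  # 2: only today's rows are loaded
    print(run_pipeline(conn))  # 2: a re-run does not duplicate rows
```

One difference worth keeping in mind: in Redshift, `TRUNCATE` commits the current transaction implicitly, so the target table is visibly empty between the two tasks and stays empty if the load task fails. If that window matters for downstream readers, running a `DELETE` and the `INSERT` inside a single transaction may be worth considering.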
