These are my notes from Andrew Ng's Coursera course "Neural Networks & Deep Learning", section 3.2, "Neural Network Representation". I'm sharing them in the hope that they help!

------------------

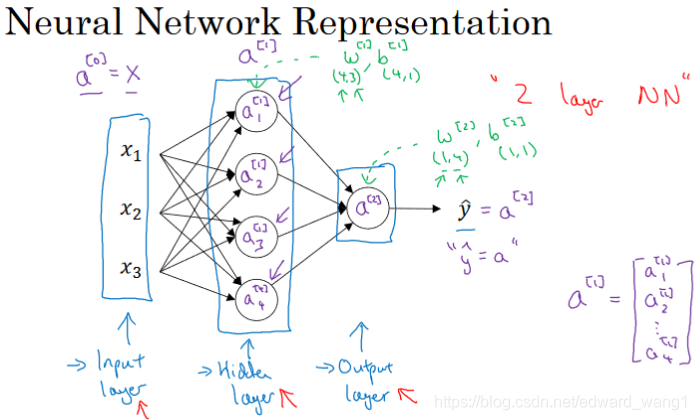

Figure-1 shows the names of the different parts of a neural network.

- It has an input layer, a hidden layer, and an output layer.

- The term "hidden layer" refers to the fact that, in a training set, the true values of these middle nodes are not observed.

- An alternative notation for the input $x$ is $a^{[0]}$, where $a$ stands for activation.

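- The hidden layer generates a set of activations called $a^{[1]}$. The first unit generates the value $a^{[1]}_1$, the second unit generates the value $a^{[1]}_2$, and so on. So here $a^{[1]}$ is a column vector with one entry per hidden unit: $a^{[1]} = [a^{[1]}_1, a^{[1]}_2, \dots]^T$.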

- The output layer generates the value $a^{[2]}$, which is just a real number. And $\hat{y} = a^{[2]}$.

- One funny thing is that this neural network is called a 2-layer NN by convention. The reason is that we don't count the input layer.

- The hidden layer has parameters associated with it: $W^{[1]}$ and $b^{[1]}$.

- The output layer has parameters associated with it: $W^{[2]}$ and $b^{[2]}$.
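To make the notation concrete, here is a minimal NumPy sketch of the shapes involved. It is only an illustration under assumptions that are not stated in these notes: the layer sizes (3 inputs, 4 hidden units, 1 output), the helper names `init_params` and `forward`, and the choice of tanh and sigmoid as activation functions are all picked for the example.

```python
import numpy as np

def init_params(n_x, n_h, n_y, seed=0):
    """Create parameters for a 2-layer network (hypothetical helper).

    n_x, n_h, n_y are the input, hidden, and output layer sizes.
    """
    rng = np.random.default_rng(seed)
    return {
        "W1": rng.standard_normal((n_h, n_x)) * 0.01,  # hidden layer weights
        "b1": np.zeros((n_h, 1)),                      # hidden layer bias
        "W2": rng.standard_normal((n_y, n_h)) * 0.01,  # output layer weights
        "b2": np.zeros((n_y, 1)),                      # output layer bias
    }

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def forward(x, params):
    """Return the activations a0, a1, a2 for one input column vector x."""
    a0 = x                                               # a^[0] is the input itself
    a1 = np.tanh(params["W1"] @ a0 + params["b1"])       # assumed activation: tanh
    a2 = sigmoid(params["W2"] @ a1 + params["b2"])       # assumed activation: sigmoid
    return a0, a1, a2

# Assumed sizes for illustration: 3 input features, 4 hidden units, 1 output.
params = init_params(n_x=3, n_h=4, n_y=1)
x = np.ones((3, 1))
a0, a1, a2 = forward(x, params)
print(a0.shape, a1.shape, a2.shape)  # (3, 1) (4, 1) (1, 1)
```

Only two layers carry parameters here, the hidden layer and the output layer, which matches the convention of calling this a 2-layer network.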

<end>
