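The test runs 10 goroutines. Each goroutine loops 1,000,000 times, and every iteration performs one store and one lookup with random keys. The key and the value are identical, and both are strings.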

The sync.Map code is as follows:

package main

import (

"fmt"

"math/rand"

"strconv"

"sync"

"time"

)

func main() {

SynTest()

}

func SynTest() {

var m sync.Map

m.Store("count", 0)

var wg sync.WaitGroup

start := time.Now()

ranRange := 10 * 1000000

rand.Seed(time.Now().UnixNano())

for numOfThread := 0; numOfThread < 10; numOfThread++ {

wg.Add(1)

go func() {

defer wg.Done()

for i := 0; i < 1000000; i++ {

randomNum := rand.Intn(ranRange)

val := strconv.Itoa(randomNum)

m.Store(val, val)

randomNum = rand.Intn(ranRange)

val = strconv.Itoa(randomNum)

m.Load(val)

}

}()

}

wg.Wait()

t := time.Now()

elapsed := t.Sub(start)

fmt.Println("sync map elapsed :", elapsed)

}

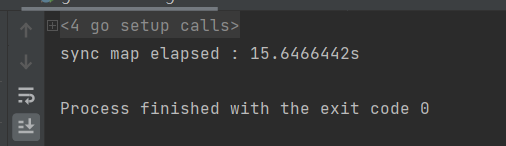

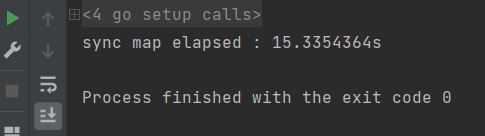

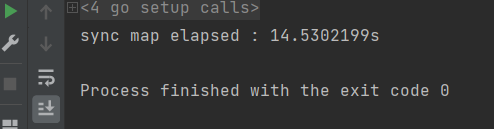

sync.Map test result:

Total time: 45.511s

The freecache code is as follows:

package main

import (

"fmt"

"github.com/coocood/freecache"

"math/rand"

"runtime/debug"

"strconv"

"sync"

"time"

)

func main() {

// In bytes, where 1024 * 1024 represents a single Megabyte, and 100 * 1024*1024 represents 100 Megabytes.

cacheSize := 100 * 1024 * 1024

cache := freecache.NewCache(cacheSize)

debug.SetGCPercent(20)

var wg sync.WaitGroup

key := []byte("abc")

val := []byte("0")

expire := 0 // 0 means the entry never expires

cache.Set(key, val, expire)

start := time.Now()

ranRange := 10 * 1000000

rand.Seed(time.Now().UnixNano())

for numOfThread := 0; numOfThread < 10; numOfThread++ {

wg.Add(1)

go func() {

defer wg.Done()

for i := 0; i < 1000000; i++ {

randomNum := rand.Intn(ranRange)

val := strconv.Itoa(randomNum)

cache.Set([]byte(val), []byte(val), 0)

randomNum = rand.Intn(ranRange)

val = strconv.Itoa(randomNum)

cache.Get([]byte(val))

}

}()

}

wg.Wait()

t := time.Now()

elapsed := t.Sub(start)

fmt.Println("free cache elapsed :", elapsed)

}

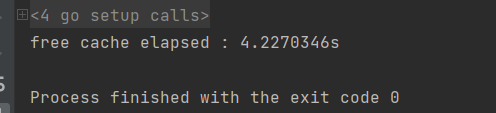

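freecache test result:

Total time: 12.769s

By comparison, freecache took about 28% of the time sync.Map took (12.769s versus 45.511s), which makes it roughly 3.6 times as fast.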

The sync.Map structure:

type Map struct {

mu Mutex

// read contains the portion of the map's contents that are safe for

// concurrent access (with or without mu held).

//

// The read field itself is always safe to load, but must only be stored with

// mu held.

//

// Entries stored in read may be updated concurrently without mu, but updating

// a previously-expunged entry requires that the entry be copied to the dirty

// map and unexpunged with mu held.

read atomic.Value // readOnly

// dirty contains the portion of the map's contents that require mu to be

// held. To ensure that the dirty map can be promoted to the read map quickly,

// it also includes all of the non-expunged entries in the read map.

//

// Expunged entries are not stored in the dirty map. An expunged entry in the

// clean map must be unexpunged and added to the dirty map before a new value

// can be stored to it.

//

// If the dirty map is nil, the next write to the map will initialize it by

// making a shallow copy of the clean map, omitting stale entries.

dirty map[any]*entry

// misses counts the number of loads since the read map was last updated that

// needed to lock mu to determine whether the key was present.

//

// Once enough misses have occurred to cover the cost of copying the dirty

// map, the dirty map will be promoted to the read map (in the unamended

// state) and the next store to the map will make a new dirty copy.

misses int

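}

According to the comments, a read that finds its key in the read map does not need to hold the lock; only a miss falls back to the dirty map with mu held. The whole map has a single mutex, so the lock granularity is coarse. How this is actually implemented still needs a closer look.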
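To make the read/dirty idea from those comments more concrete, here is a heavily simplified sketch. It is not the real sync.Map: the names `sketchMap`, `entry` and `newEntry` are invented for illustration, keys and values are plain strings, and Delete and the expunged state are left out entirely. It only shows that a hit in the read snapshot takes no lock, while a miss falls back to the mutex-protected dirty map.

```go
package main

import (
	"fmt"
	"sync"
	"sync/atomic"
)

// entry is shared by the read and dirty maps, so an update made through
// one map is visible through the other.
type entry struct {
	v atomic.Value // always holds a string
}

func newEntry(value string) *entry {
	e := &entry{}
	e.v.Store(value)
	return e
}

// sketchMap is a hypothetical, simplified model of the read/dirty split.
type sketchMap struct {
	mu     sync.Mutex
	read   atomic.Value      // holds map[string]*entry; a stored map is never mutated
	dirty  map[string]*entry // guarded by mu; superset of read when non-nil
	misses int               // guarded by mu
}

func newSketchMap() *sketchMap {
	m := &sketchMap{}
	m.read.Store(map[string]*entry{})
	return m
}

// Load tries the lock-free snapshot first and locks only on a miss.
func (m *sketchMap) Load(key string) (string, bool) {
	read := m.read.Load().(map[string]*entry)
	if e, ok := read[key]; ok {
		return e.v.Load().(string), true // fast path, no lock
	}
	m.mu.Lock()
	defer m.mu.Unlock()
	// Re-check: the snapshot may have been replaced while we waited.
	read = m.read.Load().(map[string]*entry)
	if e, ok := read[key]; ok {
		return e.v.Load().(string), true
	}
	if m.dirty == nil {
		return "", false
	}
	e, ok := m.dirty[key]
	m.misses++
	if m.misses >= len(m.dirty) {
		// Enough misses: promote dirty to become the new read snapshot.
		m.read.Store(m.dirty)
		m.dirty = nil
		m.misses = 0
	}
	if !ok {
		return "", false
	}
	return e.v.Load().(string), true
}

// Store always locks in this sketch, which is simpler than the real thing.
func (m *sketchMap) Store(key, value string) {
	m.mu.Lock()
	defer m.mu.Unlock()
	read := m.read.Load().(map[string]*entry)
	if e, ok := read[key]; ok {
		e.v.Store(value) // existing key: update the shared entry
		return
	}
	if e, ok := m.dirty[key]; ok {
		e.v.Store(value)
		return
	}
	if m.dirty == nil {
		// First new key since the last promotion: shallow-copy read.
		m.dirty = make(map[string]*entry, len(read)+1)
		for k, e := range read {
			m.dirty[k] = e
		}
	}
	m.dirty[key] = newEntry(value)
}

func main() {
	m := newSketchMap()
	m.Store("a", "1")
	v, ok := m.Load("a")
	fmt.Println(v, ok)
	_, ok = m.Load("missing")
	fmt.Println(ok)
}
```

In a workload like the benchmark above, where almost every store introduces a new random key, most operations would end up on the locked path, which is consistent with the single coarse mutex being a bottleneck.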

freecache:

// Cache is a freecache instance.

type Cache struct {

locks [segmentCount]sync.Mutex

segments [segmentCount]segment

}

// a segment contains 256 slots, a slot is an array of entry pointers ordered by hash16 value

// the entry can be looked up by hash value of the key.

type segment struct {

rb RingBuf // ring buffer that stores data

segId int

_ uint32

missCount int64

hitCount int64

entryCount int64

totalCount int64 // number of entries in ring buffer, including deleted entries.

totalTime int64 // used to calculate least recent used entry.

timer Timer // Timer giving current time

totalEvacuate int64 // used for debug

totalExpired int64 // used for debug

overwrites int64 // used for debug

touched int64 // used for debug

vacuumLen int64 // up to vacuumLen, new data can be written without overwriting old data.

slotLens [256]int32 // The actual length for every slot.

slotCap int32 // max number of entry pointers a slot can hold.

slotsData []entryPtr // shared by all 256 slots

}

// Ring buffer has a fixed size, when data exceeds the

// size, old data will be overwritten by new data.

// It only contains the data in the stream from begin to end

type RingBuf struct {

begin int64 // beginning offset of the data stream.

end int64 // ending offset of the data stream.

data []byte

index int //range from '0' to 'len(rb.data)-1'

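}

This lock granularity is comparable to row-level locking. Each lock guards one segment with its own ring buffer, which stores the data. The key is hashed first to decide which ring buffer the key/value pair belongs to, and only that ring buffer is locked for the operation. There are 256 ring buffers in total.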
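The per-segment locking described here can be sketched as follows. This is an illustration under assumptions, not freecache's code: `shardedCache` and `segmentIndex` are invented names, plain Go maps stand in for the ring buffers, and FNV-1a from the standard library stands in for freecache's own hash function.

```go
package main

import (
	"fmt"
	"hash/fnv"
	"sync"
)

const segmentCount = 256 // one lock per segment, as in the Cache struct above

// shardedCache is a hypothetical stand-in for the Cache type.
type shardedCache struct {
	locks    [segmentCount]sync.Mutex
	segments [segmentCount]map[string][]byte // maps replace the ring buffers here
}

func newShardedCache() *shardedCache {
	c := &shardedCache{}
	for i := range c.segments {
		c.segments[i] = make(map[string][]byte)
	}
	return c
}

// segmentIndex hashes the key and maps it onto one of the 256 segments.
// FNV-1a is an assumption made for this sketch.
func segmentIndex(key []byte) int {
	h := fnv.New64a()
	h.Write(key)
	return int(h.Sum64() % segmentCount)
}

// Set locks only the segment that owns the key.
func (c *shardedCache) Set(key, value []byte) {
	i := segmentIndex(key)
	c.locks[i].Lock()
	defer c.locks[i].Unlock()
	c.segments[i][string(key)] = append([]byte(nil), value...) // store a copy
}

// Get also locks only the owning segment.
func (c *shardedCache) Get(key []byte) ([]byte, bool) {
	i := segmentIndex(key)
	c.locks[i].Lock()
	defer c.locks[i].Unlock()
	v, ok := c.segments[i][string(key)]
	return v, ok
}

func main() {
	c := newShardedCache()
	var wg sync.WaitGroup
	for g := 0; g < 10; g++ {
		wg.Add(1)
		go func(g int) {
			defer wg.Done()
			key := []byte(fmt.Sprintf("key-%d", g))
			c.Set(key, key) // goroutines mostly hit different segments
		}(g)
	}
	wg.Wait()
	v, ok := c.Get([]byte("key-3"))
	fmt.Println(string(v), ok)
}
```

With 256 independent locks, two goroutines block each other only when their keys hash to the same segment, which is why contention is much lower than with one mutex for the whole map.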
